Capturing linguistic interaction in a grammar

Capturing linguistic interaction in a grammar: a method for empirically evaluating the grammar of a parsed corpus. Sean Wallis, Survey of English Usage, University College London, s.wallis@ucl.ac.uk.

Presentation Transcript

Capturing linguistic interaction in a grammar: a method for empirically evaluating the grammar of a parsed corpus • Sean Wallis • Survey of English Usage, University College London • s.wallis@ucl.ac.uk

Capturing linguistic interaction... • Parsed corpus linguistics • Empirical evaluation of grammar • Experiments • Attributive AJPs • Preverbal AVPs • Embedded postmodifying clauses • Conclusions • Comparing grammars or corpora • Potential applications

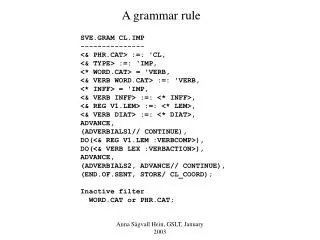

Parsed corpus linguistics • Several million-word parsed corpora exist • Each sentence analysed in the form of a tree • different languages have been analysed • limited amount of spontaneous speech data • Commitment to a particular grammar required • different schemes have been applied • problems: computational completeness + manual consistency • Tools support linguistic research in corpora

Parsed corpus linguistics • An example tree from ICE-GB (spoken) S1A-006 #23

Parsed corpus linguistics • Three kinds of evidence may be obtained from a parsed corpus • Frequency evidence of a particular known rule, structure or linguistic event • Coverage evidence of new rules, etc. • Interaction evidence of the relationship between rules, structures and events • This evidence is necessarily framed within a particular grammatical scheme • So… how might we evaluate this grammar?

Empirical evaluation of grammar • Many theories, frameworks and grammars • no agreed evaluation method exists • linguistics is divided into competing camps • status of parsed corpora ‘suspect’ • Possible method: retrievability of events • circularity: you get out what you put in • redundancy: ‘improvement’ by mere addition • atomic: based on single events, not pattern • specificity: based on particular phenomena • New method: retrievability of event sequences

Experiment 1: attributive AJPs • Adjectives before a noun in English • Simple idea: plot the frequency of NPs with at least n = 0, 1, 2, 3… attributive AJPs • [Figure: raw frequency and log frequency against n. NB: the log-frequency plot is not a straight line]

Experiment 1: analysis of results • If the log-frequency line is straight • exponential fall in frequency (constant probability) • no interaction between decisions (cf. coin tossing) • Sequential probability analysis • calculate probability of adding each AJP • error bars (binomial) • probability falls • second < first • third < second • fourth < second • decisions interact
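The sequential probability analysis can be sketched as follows. This is a minimal illustration, not the author's spreadsheet: the counts are hypothetical (they are not the ICE-GB figures), the Wilson score interval is assumed as one reasonable choice of binomial error bar, and the non-overlap check is a conservative stand-in for a proper significance test. The function names are invented for this example.

```python
import math

def wilson_interval(p, n, z=1.959964):
    """Wilson score interval for an observed proportion p out of n cases."""
    if n == 0:
        return (0.0, 1.0)
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    spread = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return (centre - spread, centre + spread)

def sequential_probabilities(at_least):
    """at_least[n] = number of NPs with at least n attributive AJPs.

    Returns (n, p, lower, upper) for n = 1, 2, ..., where p is the
    probability of adding the n-th AJP given that n - 1 are present.
    """
    rows = []
    for n in range(1, len(at_least)):
        base = at_least[n - 1]
        if base == 0:
            break  # nothing left to extend
        p = at_least[n] / base
        lower, upper = wilson_interval(p, base)
        rows.append((n, p, lower, upper))
    return rows

def significant_falls(rows):
    """True where an interval lies wholly below the previous interval."""
    return [rows[i][3] < rows[i - 1][2] for i in range(1, len(rows))]

# Hypothetical cumulative counts for n = 0, 1, 2, 3 (illustration only).
counts = [10000, 2000, 200, 10]
rows = sequential_probabilities(counts)
falls = significant_falls(rows)
for n, p, lower, upper in rows:
    print(f"AJP {n}: p = {p:.4f}, interval = ({lower:.4f}, {upper:.4f})")
print("falls relative to previous step:", falls)
```

If the decisions did not interact, each p would stay roughly constant within its interval, as in repeated coin tossing; a run of significant falls is what the slide reads as interaction.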

Experiment 1: analysis of results • If the log-frequency line is straight • exponential fall in frequency (constant probability) • no interaction between decisions (cf. coin tossing) • Sequential probability analysis • calculate probability of adding each AJP • error bars (binomial) • probability falls • decisions interact • fit to a power law • $y = m x^k$ • find $m$ and $k$ • [Figure: probability against number of AJPs, with fitted curve $y = 0.1931x^{-1.2793}$]
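One common way to fit a power law is ordinary least squares on the logarithms, since log y = log m + k log x is a straight line. The sketch below assumes that approach; the slides do not say which fitting procedure was used. To avoid inventing data, the sample points are generated from the curve reported on the slide, so the fit simply recovers those parameters. The function name `fit_power_law` is made up for this example.

```python
import math

def fit_power_law(xs, ys):
    """Fit y = m * x**k by least squares on (log x, log y).

    Returns (m, k, r2), where r2 is the squared correlation
    between log x and log y.
    """
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((a - mean_x) ** 2 for a in lx)
    syy = sum((b - mean_y) ** 2 for b in ly)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(lx, ly))
    k = sxy / sxx                      # slope in log-log space
    m = math.exp(mean_y - k * mean_x)  # intercept, back-transformed
    r2 = (sxy * sxy) / (sxx * syy)
    return m, k, r2

# Synthetic points drawn from the reported curve (not observed data).
xs = [1, 2, 3, 4]
ys = [0.1931 * x ** -1.2793 for x in xs]
m, k, r2 = fit_power_law(xs, ys)
print(f"m = {m:.4f}, k = {k:.4f}, R^2 = {r2:.4f}")
```

With real observations the points would scatter around the line, and R² would indicate how well a power law describes the fall in probability.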

Experiment 1: explanations? • Feedback loop: for each successive AJP, it is more difficult to add a further AJP • Explanation 1: semantic constraints • tend to say tall green ship • do not tend to say tall short ship or green tall ship • Explanation 2: communicative economy • once a speaker has said tall green ship, they tend to say only ship • Further investigation required • General principle: • significant change (usually, fall) in probability is evidence of an interaction along a grammatical axis

Experiments 2, 3: variations • Restrict head: common and proper nouns • Common nouns: similar results • Proper nouns and adjectives are often treated as compounds (Northern England vs. lower Loire) • Ignore grammar: adjective + noun strings • Some misclassifications / miscounting (‘noise’) • she was [beautiful, people] said; tall very [green ship] • Similar results • slightly weaker (third < second not significant at p = 0.01) • Insufficient evidence for grammar • null hypothesis: simple lexical adjacency

Experiment 4: preverbal AVPs • Consider adverb phrases before a verb • Results very different • Probability does not fall significantly between first and second AVP • Probability does fall between second and third AVP • Possible constraints • (weak) communicative • not (strong) semantic • Further investigation needed • Not a power law: $R^2 < 0.24$ • [Figure: probability plot]

Experiment 5: embedded clauses • Another way to specify nouns in English • add clause after noun to explicate it • the ship [that was tall and green] • the ship [in the port] • may be embedded • the ship [in the port [with the ancient lighthouse]] • or successively postmodified • the ship [in the port][with a very old mast] • Compare successive embedding and sequential postmodifying clauses • Axis = embedding depth / sequence length
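The two axes can be illustrated on the bracket notation used in the examples above. This is only a toy sketch: the actual experiment works on parse trees in the corpus, not on bracketed strings, and the function name `postmodifier_profile` is invented here. It returns the maximum embedding depth and the number of top-level postmodifiers in sequence.

```python
def postmodifier_profile(text):
    """Return (max_depth, sequence_length) for square-bracketed postmodifiers.

    max_depth: deepest level of nesting of [...] spans.
    sequence_length: number of [...] spans opened at the top level.
    """
    depth = 0
    max_depth = 0
    sequence_length = 0
    for ch in text:
        if ch == "[":
            depth += 1
            if depth == 1:
                sequence_length += 1
            max_depth = max(max_depth, depth)
        elif ch == "]":
            if depth == 0:
                raise ValueError("unbalanced brackets")
            depth -= 1
    if depth != 0:
        raise ValueError("unbalanced brackets")
    return max_depth, sequence_length

examples = [
    "the ship [in the port]",
    "the ship [in the port [with the ancient lighthouse]]",
    "the ship [in the port][with a very old mast]",
]
for example in examples:
    print(postmodifier_profile(example), example)
```

The embedded example scores depth 2 with a single top-level postmodifier, while the successively postmodified example scores depth 1 with a sequence of two.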

Experiment 5: method • Extract examples with FTFs (Fuzzy Tree Fragments) • at least n levels of embedded postmodification: n = 0, 1, 2 (etc.) • problems: • multiple matching cases (use ICECUP IV to classify) • overlapping cases (subtract extra case) • co-ordination of clauses or NPs (use alternative patterns)

Experiment 5: analysis of results • Probability of adding a further embedded clause falls with each level • second < first • sequential < embedding • Embedding only: • third < first • insufficient data for third < second • Conclusion: • Interaction along embedding and sequential axes

Experiment 5: analysis of results • Probability of adding a further embedded clause falls with each level • second < first • sequential < embedding • Fitting to $f = m x^k$ • $k < 0$ means a fall ($f = m / x^{|k|}$) • high $|k|$ means a steep fall • Conclusion: • Both match a power law: $R^2 > 0.99$ • embedded: $y = 0.0539x^{-1.2206}$ • sequential: $y = 0.0523x^{-1.6516}$
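To see what the two exponents imply, the reported curves can be evaluated directly. The sketch below uses only the coefficients quoted on the slide; the helper name `power_law` and the choice of levels 1 to 3 are assumptions made for illustration. For a power law, moving from level 1 to level 2 multiplies the probability by 2^k, so a larger |k| gives a steeper fall.

```python
def power_law(m, k, x):
    """Evaluate f = m * x**k."""
    return m * x ** k

# Coefficients as reported on the slide.
curves = {
    "embedded": (0.0539, -1.2206),
    "sequential": (0.0523, -1.6516),
}

levels = [1, 2, 3]
values = {
    name: [power_law(m, k, x) for x in levels]
    for name, (m, k) in curves.items()
}
# Ratio of the level-2 to the level-1 probability, equal to 2**k.
ratios = {name: vals[1] / vals[0] for name, vals in values.items()}

for name in curves:
    formatted = ", ".join(f"{v:.4f}" for v in values[name])
    print(f"{name}: f(1..3) = {formatted}; f(2)/f(1) = {ratios[name]:.4f}")
```

On these coefficients the sequential curve starts slightly lower and falls faster than the embedded one, which matches the slide's "sequential < embedding".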

Experiment 5: explanations? • Lexical adjacency? • No: 87% of 2-level cases have at least one VP, NP or clause between upper and lower heads • Misclassified cases of embedding? • No: very few (5%) semantically ambiguous cases • Language production constraints? • Possibly, could also be communicative economy • contrast spontaneous speech with other modes • Positive ‘proof’ of recursive tree grammar • Established from parsed corpus • cf. negative ‘proof’ (NLP parsing problems)

Conclusions • A new method for evaluating interactions along grammatical axes • General purpose, robust, structural • More abstract than ‘linguistic choice’ experiments • Depends on a concept of grammatical distance along an axis, based on the chosen grammar • Method has philosophical implications • Grammar viewed as structure of linguistic choices • Linguistics as an evaluable observational science • Signature (trace) of language production decisions • A unification of theoretical and corpus linguistics?

Comparing grammars or corpora • Can we reliably retrieve known interaction patterns with different grammars? • Do these patterns differ across corpora? • Benefits over individual event retrieval • non-circular: generalisation across local syntax • not subject to redundancy: arbitrary terms make trends more difficult to retrieve • not atomic: based on patterns of interaction • general: patterns may have multiple explanations • Supplements retrieval of events

Potential applications • Corpus linguistics • Optimising existing grammar • e.g. co-ordination, compound nouns • Theoretical linguistics • Comparing different grammars, same language • Comparing different languages or periods • Psycholinguistics • Search for evidence of language production constraints in spontaneous speech corpora • speech and language therapy • language acquisition and development

Links and further reading • Survey of English Usage • www.ucl.ac.uk/english-usage • Corpora and grammar • .../projects/ice-gb • Full paper • .../staff/sean/resources/analysing-grammatical-interaction.pdf • Sequential analysis spreadsheet (Excel) • .../staff/sean/resources/interaction-trends.xls