“Operational Requirements for Core Services”

“Operational Requirements for Core Services”. James Casey, IT-GD, CERN. CERN, 21st June 2005. SC4 Workshop. Summary. Issues as expressed by sites: ASGC, CNAF, FNAL, GRIDKA, PIC, RAL, TRIUMF. My synopsis of the most important issues. Where we are on them…

“Operational Requirements for Core Services” James Casey, IT-GD, CERN CERN, 21st June 2005 SC4 Workshop

Summary • Issues as expressed by sites • ASGC, CNAF, FNAL, GRIDKA, PIC, RAL, TRIUMF • My synopsis of the most important issues • Where we are on them… • What are possible solutions in longer term CERN IT-GD

ASGC - Features missing in core services • Local/remote diagnostic tests to verify the functionality and configuration. • This will be helpful for • Verifying your configuration • Generating test results that can be used as the basis for local monitoring • Detailed step-by-step troubleshooting guides • Example configurations for complex services • e.g. VOMS, FTS • Some error messages could be improved to provide more information to facilitate troubleshooting

CNAF - Outstanding issues (1/2) • Accounting (monthly reports): • CPU usage in KSI2K-days DGAS • Wall-clock time in KSI2K-days DGAS • Disk space used in TB • Disk space allocated in TB • Tape space used in TB • Validation of raw data gathered, by comparison via different tools • Monitoring of data transfer: GridView and SAM? • More FTS monitoring tools necessary • (traffic load per channel, per VO) • Routing in LHC Optical Private Network? • Backup connection to FZK becoming urgent, and a lot of traffic using the production network infrastructure, between non-associated T1-T1 and T1-T2 sites
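The CPU and wall-clock figures requested above are normalized by processor power. A minimal sketch of that arithmetic, assuming each job record carries its CPU seconds, wall-clock seconds, and the KSI2K rating of the slot it ran on. The `JobRecord` type and its field names are hypothetical and are not the DGAS schema:

```python
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class JobRecord:
    # Hypothetical record layout, for illustration only.
    cpu_seconds: float
    wall_seconds: float
    slot_ksi2k: float  # power rating of the slot that ran the job


def ksi2k_days(seconds, rating_ksi2k):
    """Convert raw seconds on a slot of the given rating into KSI2K-days."""
    return seconds / SECONDS_PER_DAY * rating_ksi2k


def monthly_totals(jobs):
    """Sum normalized CPU and wall-clock usage over a list of job records."""
    cpu = sum(ksi2k_days(j.cpu_seconds, j.slot_ksi2k) for j in jobs)
    wall = sum(ksi2k_days(j.wall_seconds, j.slot_ksi2k) for j in jobs)
    return {"cpu_ksi2k_days": cpu, "wall_ksi2k_days": wall}
```

Computing the same totals from two independent sources of raw records and comparing them is one way to approach the validation step mentioned above.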

CNAF – Outstanding Issues (2/2) • Implementation of an LHC OPN monitoring infrastructure still in its infancy • SE Reliability when in unattended mode: greatly improved with latest Castor2 upgrade • Castor2 performance during concurrent import and export activities

FNAL – Middleware additions • It would be useful to have better hooks in the grid services to enable monitoring for 24/7 systems • We are implementing our own tests to connect to the paging system • If the services had reasonable health monitors we could connect to, it might spare us re-implementing them or missing an important element to monitor
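The kind of hook asked for here can be sketched as a set of named probes whose failures are handed to a site-supplied paging callback. This is only an illustration: `tcp_probe`, `run_health_checks`, and the `page` callback are hypothetical names, and a plain TCP connect is assumed to be a good enough liveness signal:

```python
import socket


def tcp_probe(host, port, timeout=5.0):
    """Build a probe that raises OSError if host:port refuses a TCP connection."""
    def probe():
        with socket.create_connection((host, port), timeout=timeout):
            pass
    return probe


def run_health_checks(probes, page):
    """Run every probe; call page(failures) once if any probe raised.

    probes maps a service name to a zero-argument callable.
    page is the site's own function for raising an alarm.
    """
    failures = {}
    for name, probe in probes.items():
        try:
            probe()
        except Exception as exc:
            failures[name] = f"{type(exc).__name__}: {exc}"
    if failures:
        page(failures)
    return failures
```

A site would register one probe per service and pass its own paging function, so the monitoring logic does not need to be rewritten for each service.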

GRIDKA – Feature Requests • Improved (internal) monitoring • Developers do not always seem to be aware that hosts can have more than one network interface. • Hosts should be reachable via their long-lived alias; the actual name should be unimportant (for reachability, not for security). • Error messages should make sense and be human readable! • Simple example: • $ glite-gridftp-ls gsiftp://f01-015-105-r.gridka.de/pnfs/gridka.de/ • (typo in the hostname ^^^) • t3076401696:p17226: Fatal error: [Thread System] GLOBUSTHREAD: pthread_mutex_destroy() failed • [Thread System] mutex is locked (EBUSY) Aborted
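As an illustration of the readable error this slide asks for, a client could resolve the host before doing anything else and report a failure in plain words. The sketch below is not how glite-gridftp-ls works; `check_endpoint_host` is a hypothetical helper, and the `resolve` parameter exists only so the lookup can be swapped out:

```python
import socket
from urllib.parse import urlsplit


def check_endpoint_host(url, resolve=socket.getaddrinfo):
    """Return None if the URL's host resolves, else a human-readable error."""
    host = urlsplit(url).hostname
    if not host:
        return f"No hostname found in URL '{url}'"
    try:
        # getaddrinfo returns every address of the host, so a host with
        # several network interfaces or an alias is handled the same way.
        resolve(host, None)
    except socket.gaierror as exc:
        return f"Cannot resolve host '{host}' (check for typos): {exc}"
    return None
```

Running such a check first would turn the mutex abort shown above into a one-line message naming the host that could not be found.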

PIC – Some missing Features • All in general: • Clearer error messages • Difficult to operate (e.g., it should be possible to reboot a host without affecting the service) • SEs: • Missing a procedure for “draining” an SE or gently “taking it out of production” • Difficult to control access: testing some features requires the SE to be published in the BDII, but once it is there, there is no way to control who can access it • Glite-CE: • A simple way to gather the DN of the submitter, given the Local Batch jobID (GGUS-9323) • FTS: • Unable to delete a channel which has “cancelled” transfers • Difficult to see a) that the service is having problems, and b) then to debug them

RAL – Missing Features in File Transfer Service • Could collect more information (endpoints) dynamically • This is happening now in 1.5 • Logs • Comparing a successful and failed transfer is quite tricky • I can show you two 25 line logs, one for a failed and one for a successful srmcopy. The logs are completely identical. • Having log files that are easy to parse for alerts or errors is of course very useful. • Offsite monitoring • How do we know a service at CERN is dead? • And what is provided to interface it to local T1 monitoring?
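One way to make logs easy to parse and to compare is to write each transfer as a single line of `key=value` pairs. The sketch below assumes such a format purely for illustration; it is not the actual FTS or srmcopy log layout, and the field names and the `FAILED` state are hypothetical:

```python
import shlex


def parse_line(line):
    """Parse 'key=value key2="quoted value"' into a dict of strings."""
    return dict(tok.split("=", 1) for tok in shlex.split(line) if "=" in tok)


def differing_fields(ok, failed):
    """Return {field: (ok_value, failed_value)} for every field that differs."""
    keys = sorted(set(ok) | set(failed))
    return {k: (ok.get(k), failed.get(k)) for k in keys if ok.get(k) != failed.get(k)}


def needs_alert(record):
    """Flag a record for alerting when its state is the (assumed) FAILED value."""
    return record.get("state") == "FAILED"
```

With records in this shape, the difference between a successful and a failed transfer is a short dictionary rather than two 25-line logs to compare by eye.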

TRIUMF – Core Services (1/2) • 'yaim', like any tool that wants to be general and complete, ends up being complicated to implement, to debug and to maintain. • Trying to do a lot from two scripts (install_node and configure_node) and one environment file (node-info.def) bypasses some basic principles of unix system management: • use small, independent tools, and combine them to achieve your goal. • Often a 'configure_node' process needs to be run multiple times to get it right. • It would help a lot if it did not repeat useless, already completed, time-consuming 'config_crl'.
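Skipping a step that has already completed is commonly done with a stamp file. The sketch below shows the idea in Python rather than in yaim's own shell scripts; `run_if_stale` and the stamp path are hypothetical, and the assumption is that a step such as 'config_crl' only needs repeating after some maximum age:

```python
import time
from pathlib import Path


def run_if_stale(stamp, max_age_s, step, now=time.time):
    """Run step() unless the stamp file is younger than max_age_s seconds.

    Returns True if the step ran, False if it was skipped.
    """
    stamp = Path(stamp)
    if stamp.exists() and now() - stamp.stat().st_mtime < max_age_s:
        return False  # completed recently enough, skip the slow step
    step()
    stamp.touch()  # record the successful completion time
    return True
```

Because the stamp is only touched after `step()` returns, a step that raises is retried on the next run.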

TRIUMF – Core Services (2/2) • An enhancement for the yaim configure process: • it would also be useful if the configure_node process would contain a hook to run a user-defined post-configuration step. • There is frequently some local issue that needs to be addressed, and we would like to have a line in the script that calls a local, generic script that we could manage, and would not be over-written during 'yaim' updates. • The really big hurdle will always be Tier 2's (large number of sites out there). • The whole process is just difficult for the Tier 2's. • It doesn't really matter all that much what the Tier 1's say - they will and must cope. • One should be aggressively soliciting feedback from the Tier 2's.
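The requested post-configuration hook amounts to "run a site-owned script if one is installed". A minimal sketch, again in Python rather than yaim's shell; `run_local_hook` is a hypothetical name and the hook path would be whatever location the site manages outside the packaged scripts:

```python
import os
import subprocess


def run_local_hook(path, run=subprocess.run):
    """Run the site's post-configuration script if it exists and is executable.

    Returns None when no hook is installed, otherwise the result of run().
    """
    if not (os.path.isfile(path) and os.access(path, os.X_OK)):
        return None  # no hook installed, nothing to do
    # check=True makes a failing hook raise instead of passing silently.
    return run([path], check=True)
```

Since the hook lives in a file the site owns, an update of the configuration tool would not overwrite it.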

Top 5…. • Better logging • Missing Information (e.g. DN in transfer log) • Hard to understand logs • Better diagnostic tools • How do I verify my configuration is correct? • … and functional for all VOs? • Troubleshooting guides • Better error messages from tools • Monitoring • … and interfaces to allow central/remote components to be interfaced to local monitoring system

Logging • FTS Logs have several problems: • Only access to logs via interactive login on transfer node • Plans to have full info in DB • Will come after schema upgrade in next FTS release • CLI tools/web interface to retrieve them • Intermediate stage is to have final reason in DB • Outstanding bug sets this to AGENT_ERROR for 90% of messages • Should be fixed soon (I hope!) • Logs not understandable • When SRM v2.2 rewrite is done, a lot of cleanup will (need to) happen

Diagnostic tools/ Troubleshooting guides • SAM (Site Availability Monitoring) is the solution for diagnostics • Can run validation tests as any VO, and see the results • System is in infancy • Tests need expanding • But the system is very easy to write tests for • … and the web interface is quite nice to use • Troubleshooting guides • These are acknowledged as needed for all services • T-2 tutorials helped in gathering some of these materials • Look at tutorials from last week in Indico for more info

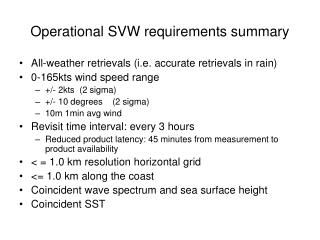

SAM 2 • Tests run as operations VO: ops • sensor test submission available for all VOs • critical test set for VOs (defined using FCR) • Availability Monitoring • aggregation of results over a certain time • site services: CE, SE, sBDII, SRM • central services: FTS, LFC, RB • status calculated every hour → availability • current (last 24 hours), daily, weekly, monthly
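The aggregation described above can be sketched as follows. The slide does not give the exact rule, so this assumes a service counts as up for an hour only when every critical test passed, and that availability is the fraction of up hours in the chosen window; the function names are hypothetical:

```python
def hourly_status(critical_results):
    """True if every critical test (name -> passed?) succeeded this hour."""
    return all(critical_results.values())


def availability(statuses, hours):
    """Fraction of the most recent `hours` hourly statuses that were up.

    statuses is ordered oldest to newest; returns None if there is no data.
    """
    if hours <= 0:
        raise ValueError("hours must be positive")
    window = statuses[-hours:]
    if not window:
        return None
    return sum(window) / len(window)
```

Calling `availability` with 24, 24 × 7, or a month of hours gives the current, weekly, and monthly views from the same list of hourly statuses.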

SAM Portal -- main

SAM -- sensor page

Monitoring • It’s acknowledged that GRIDVIEW is not enough • It’s good for “static” displays, but not good for interactive debugging • We’re looking at other tools to parse the data • SLAC have interesting tools for monitoring netflow data • This is very similar in format to the info we have in globus XFERLOGs • And they are even thinking of alarm systems • I’m interested to know what types of features such a debugging/monitoring system should have • We’d keep it all integrated in a GRIDVIEW-like system

Netflow et al. • Peaks at known capacities and RTTs • RTTs might suggest windows not optimized
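The link between RTT and window size is the bandwidth-delay product: a single TCP stream can have at most one window of data in flight per round trip. A small sketch of that arithmetic, with hypothetical function names; any numbers fed in are illustrative and do not come from the plots:

```python
def bdp_bytes(bandwidth_bps, rtt_s):
    """Window size in bytes needed to fill a path of this bandwidth and RTT."""
    return bandwidth_bps * rtt_s / 8


def max_throughput_bps(window_bytes, rtt_s):
    """Upper bound on one stream's throughput for a given window and RTT."""
    return window_bytes * 8 / rtt_s
```

For example, a 64 KiB window over a 100 ms path caps a single stream at roughly 5 Mbit/s regardless of link capacity, which is the kind of ceiling that would show up as a peak tied to RTT.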

Mining data for sites

Diurnal behavior

One month for one site

Effect of multiple streams • Dilemma: what do you recommend? • Maximize throughput, which is unfair and pushes other flows aside • Use another TCP stack, e.g. BIC-TCP, H-TCP etc.
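The unfairness can be illustrated with an idealized model in which every TCP flow on a shared bottleneck, with the same RTT, ends up with an equal share. This is a simplification for illustration only, not a measurement from the slides, and `stream_share` is a hypothetical name:

```python
def stream_share(own_streams, competing_flows):
    """Fraction of a bottleneck taken by a transfer using own_streams
    parallel TCP streams against competing_flows single-stream flows,
    assuming every flow gets an equal share."""
    total = own_streams + competing_flows
    if total <= 0:
        raise ValueError("need at least one flow")
    return own_streams / total
```

Under this model a transfer with four streams facing one competing flow takes four fifths of the link, which is the sense in which parallel streams push other flows aside.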

Thank you …