ASIQ Multiplex: Understanding the Differences from Simplex Systems

Explore the transition to Multiplex in ASIQ 12.5, key terms like Writer Nodes and Reader Nodes, benefits of Multiplex, and necessary steps for the implementation. Learn the distinctions between Simplex and Multiplex configurations, including query handling and administration in a multiplex setup. Discover why choosing Simplex vs. Multiplex is a crucial decision and how DDL operations function in a Multiplex environment.

ASIQ Multiplex: Understanding the Differences from Simplex Systems

E N D

Presentation Transcript

11 Simplex or Multiplex?

AS IQ Multiplex • All of the released software for ASIQ is now Multiplex enabled • All platforms are now Multiplex enabled • You can still run a “simple” IQ system, however this is called a Simplex system • In 12.5 there is no such thing as Simplex

AS IQ 12.5 Multiplex • The initial database/server creation will create a Simplex database • The creation of the first reader node will trigger the creation of the Multiplex Writer

Glossary - 1 • Simplex An IQ installation with only one Node or instance of IQ Running • Multiplex An IQ installation with multiple instances of IQ executing against a single IQ database • Writer Node The only IQ instance in a multiplex that can execute DML commands

Glossary – 2 • Reader Node All the other instances of IQ running in the multiplex that are excluded from running DML commands • Synchronize The command to implement DDL changes across the Multiplex

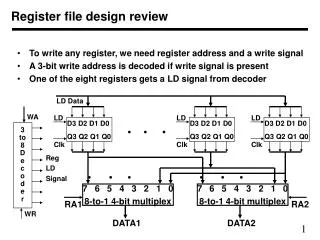

What is a Multiplex? • An IQ Multiplex consists of: • A number of nodes, each running IQ 12 • A shared disk subsystem connected to all nodes • One node designated as the writer node, others readers • Why do IQ Multiplex? • Scale query performance past limits of one node • Match I/O to CPU capabilities • This is important IQ-M will NOT increase individual query speed

IQ VLM IQ VLM IQ VLM IQ VLM IQ Multiplex functions Unix Unix/NT Unix/NT Unix/NT VLM Alpha Server Compaq Server Compaq Server Compaq Server IQ IQ A Multiplex –Marketing View IQ 12.0 provides Table Level Versioning Version maintained while queries are running. Users see no change in database until update is complete Can add as many CPUs as needed to speed up load process, 100% paralleled.

A Multiplex – Real View IQ Temp. Store IQ Temp. Store IQ Temp. Store IQ Temp. Store AS/IQ 12.0 AS/IQ 12.0 AS/IQ 12.0 AS/IQ 12.0 Catalog Store Catalog Store Catalog Store Catalog Store IQ Message Log IQ Message Log IQ Message Log IQ Message Log IQ Main Store

How Does it work? • We allow for the creation of two sorts of IQ-M engines • A Writer Node • Reader Nodes • In a reader we restrict the ability to write to the IQ Main Store, without impacting IO to the catalog and the IQ Temp Store • In the writer node we restrict the ability to perform DDL changes unless the writer is brought up in Simplex Node (the only IQ-M instance executing in the Multiplex

Simplex vs. Multiplex • Each “version” of IQ has differing capabilities and the selection of Simplex vs. multiplex is an important decision to make • Simplex limits users and specialised query performance • Multiplex is more complex and has some subtle limitations

DDL in Multiplex • In 12.4.3 when a multiplex is running there could be no DDL executed on permanent structures • (Temp tables are excluded from this) • To run DDL the Writer must be in Simplex mode • In 12.5 the Writer can be in Multiplex, but there other restrictions • Writer stays up on Sync. – but readers must be brought down • Sync is now a 2 stage process

Query Handling • In a Simplex system there is no way to “partition” queries and loads • In a Multiplex you can have dedicated servers to service “types” of queries or users • In a Multiplex Loads are (virtually) transparent to the user base

Administration • In a Multiplex each node can be tuned as a separate server • In a Simplex system there is only one server, so administration is hugely simpler • Even with the 12.5 DDL changes there are still “inconveniences” while using Multiplex • Remember you can always run a Simplex Server operationally on a Multiplex database

Steps to Generate - 1 • In a very simple form the steps to build Multiplex are as follows • Create a shared area of disk for the IQ Main store • This is usually a fiber channel disk array (but could be an NFS disk area) • Create an area of disk that can be seen (mounted) by all of the nodes (computers) in the Multiplex • This is (practically always) an NFS region • 12.5 Change – There is no requirement for the shared disk area in 12.5

Steps to Generate – 2 • Build an IQ-M database using what will become the Writer Node • Create the reader nodes • Test the system • Bring down the Multiplex and bring up the writer in simplex mode • Build all the tables and indexes using the Simple Writer

Steps to Generate – 3 • Synchronize the Multiplex • Bring up the Writer and all the readers nodes • Tune the Writer and Reader Nodes • Apply the SQL changes required by each node • Users/permissions etc.

Sybase Central • All of the above routines will be performed by Sybase Central • The work required to generate the Multiplex is quite high and is infinitely easier to accomplish using Sybase Central • The only area that I would still script would be the DDL (table and Index creation) • Maybe I would also, possibly, run the synchronize as a script – although this is not an every day operation

Tuning • There are three areas of immense importance when considering the tuning of a Multiplex system • 1. Get the disk layout correct! • 2. Tune the Writer Node for load speed • 3. Tune the Readers nodes for query performance • Maybe even tune individual nodes differently for differing query or user types

Disk Layout • This is so vital I cannot begin to emphasize this enough • The entire performance of the Multiplex will come down to how well the disk has been laid out • There are really only 2 disk subsystems we should consider for IQ-M • EMC disk arrays • 2-d striping on a disk farm (SOS)

Synchronization - 1 • Before we talk about tuning the nodes it is time to discuss Multiplex Synchronization • When DML changes are applied by the writer to the multiplex the versioning information is passed via ASA replication • This is automatic and completely user invisible • When a writer (in Simplex Mode) applies a DDL change to the database the readers need to be synchronized to the writer

Synchronization – 2 • The synchronization process is simply the copying of the catalog store from the writer to each of the reader nodes • So effectively the readers are then clones of the writer node • In order to tune the readers (maybe because of differing hardware or query constraints) we need to have the ability to modify them

Synchronization – 12.5 (1) • Writer Node issues a checkpoint, and during the checkpoint the Catalog Store (.db) and the Log (.log) are copied to a local area of disk. • The Writer node then continues processing, as you can see this will be a minute stop in the Writer – certainly under 1 second • Now the readers are brought down (singly if required) and the new load and Catalog files are copied over the existing files.

Synchronization – 12.5 (2) • The Query nodes are brought back up, followed by the dbremote tasks. • There is a new recovery process run on the reader nodes as they are brought back up – this is because the log file is not logically empty – so there is a transaction recovery phase – this should have little or no impact on the start of the query servers.

IQ Multiplex Changes • Significant Changes for IQ Multiplex Servers • Query Servers may have their own 'local store' for users to create permanent tables • Synchronization no longer required after performing DDL • IQ Multiplex servers may run different versions of Sybase IQ • Intended to permit testing of new versions of Sybase IQon Query Servers before upgrading • Intermediate versioning changes

Query Server Resynchronization • Resynchronization is no longer required after performing DDL on the Write Server • New objects will be immediately visible on query servers • Few circumstances will require Synchronization • Adding a new Query Server to the Multiplex • Restoring a Query Server • Restarting a Query Server that has been Excluded • After running the Write Server in Single Node Mode

IQ Multiplex – Local Store for Query Servers • Query Servers may have a 'Local IQ Store' allowing users to create permanent objects in the database • This will benefit users such as analysts and developers who need to create their own tables or procedures • Objects created in the Local Store persist after a server recycle or synchronization of the Query Server • These objects will only be visible to users connected to the query server that owns the Local IQ Store • Object access is subject to standard permissions

DBAs Analysts Developers ETL Processes IQ Multiplex 12.5 (and earlier) Sybase IQ Write Server Sybase IQ Query Server Base Tables Developer Tables Analyst Tables WEB Access Query Only Users IQ Main Store

Sybase IQQuery Only Server WEB Access Query Only Users Query Serverwith aLocal Store Analysts Developers DBAs ETL Processes Analysis Area Developer Tables Local Store IQ Multiplex 12.6 w/ Local Store Sybase IQ Write Server Base Tables IQ Main Store

Local Store for Query Servers • Data in a Local Store appears as if it were in the Main Store • Only visible to users connected to Query Server with the Local Store • Local Stores may be built on file systems • Backup of the IQ Store does NOT backup data in Local Store • Local Store backup is permitted but must be performed separately • The same applies to consistency checking of the data on local stores

Reader Configuration • For the writer node and each reader node there is a directory structure under which the execute (shared disk) • Each instance has its own config file that none of the Multiplex operations touch • It is in this config file that we place the startup configurations for the reader (and writer) nodes • Here is where we can specify the cache sizes etc.

postsync.sql – 1 • After a synchronization all the writers have exactly the same catalog store (apart from some subtle changes due to file positioning for the temp stores etc.) • In order to allow for differing user populations or configurations or permissions between the reader nodes there is a file (for each reader) that is executed the first time a reader is brought up after a synchronization

postsync.sql – 2 • Any sql can be placed in this file for execution after a synchronization • The only restrictions are • No DDL (not allowed on a multiple) • No DML (only allowed for the writer) • No transaction statements • Generally this would be used for the addition (or removal) users, permissions etc.

postsync.sql – 3 • We can add a series of stored procedures on to the Writer Node, then after synchronization these are all on all the reader nodes • These procedures may perform all of the complex tasks to set up the individual configurations for each reader. • In this case the postsync.sql file will merely contain the execution instructions for the particular procedure that applies to this specific reader node

Configuring Nodes • As we have just seen we have these two methods to configure the nodes for specific tasks • The Writer should (in an ideal system) only handle loads, inserts, updates and deletes • This will allow for the Writer to be a load engine • The readers, relieved of the DML processes can then be configured as query engines • We will discuss the tuning of the nodes later in the course

Reader Configuration • There are three areas to consider here • What is the hardware configuration of the writer nodes • Are they the same or different • What is each reader going to run • How are you partitioning the readers • By User ID • By Query Type • Do you have Network Spoofing or Open Switch above the reader nodes?

Reader Hardware • If the readers are all different specification machines then the config files need to be altered for each machine • Even if they are all the same then you may want differing memory cache sizes to allow for the faster operation of specific query types

Query/User Partitioning • If you are going to partition differing queries and or users then you will need to modify the following areas • Memory (probably) • User and Permission system tables • Query Options • Pre-Fetch • Sweeper threads and wash area (small) • Etc.

Network Spoofing • If you are going to have users sign on to any machine, maybe even the same application talking to multiple IQ instances you should consider setting strict mode on