General framework for features-based verification

Explore a general framework for verifying meteorological data using object-oriented methods, joint distributions of forecasts and observations, feature characterization, and object matching. Learn key terminology, methods for identifying, classifying, and comparing features, and measuring accuracy and reliability.


Presentation Transcript

General framework for features-based verification Mike Baldwin Purdue University

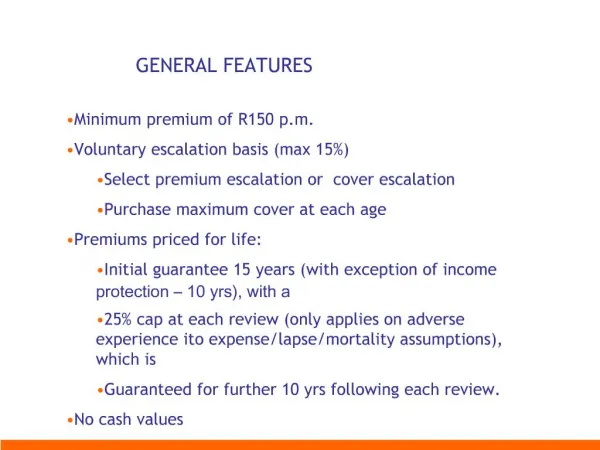

General framework • Any verification method should be built upon the general framework for verification outlined by Murphy and Winkler (1987) • Object-oriented or features-based methods can be considered an extension or generalization of the original framework • Joint distribution of forecasts and observations: p(f,o)

general joint distribution • p(f,o) : where f and o are vectors containing all variables, 3-D, same time • o could come from data assimilation o=first guess + weight*forward_model(obs) • p(fm,om) : fm and om are values of a single variable across the entire domain • traditional joint distribution
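The traditional single-variable joint distribution p(fm,om) can be estimated from paired samples by binning. The sketch below is one minimal way to do that; the function name `joint_distribution`, the bin width, and the rainfall values are illustrative assumptions, not part of the original slides.

```python
from collections import Counter


def joint_distribution(forecasts, observations, bin_width):
    """Estimate p(f, o) as relative frequencies of binned (forecast, observation) pairs."""
    if len(forecasts) != len(observations):
        raise ValueError("forecast and observation samples must pair up")
    # Each pair falls into a 2-D bin indexed by (forecast bin, observation bin).
    counts = Counter(
        (int(f // bin_width), int(o // bin_width))
        for f, o in zip(forecasts, observations)
    )
    total = sum(counts.values())
    return {bins: n / total for bins, n in counts.items()}


# Hypothetical rainfall amounts (mm) at four grid points.
fcst = [0.5, 3.0, 7.5, 8.0]
obs = [0.0, 6.0, 7.0, 9.5]
p = joint_distribution(fcst, obs, bin_width=5.0)
```

Every verification measure discussed later is some summary of a table like `p`, which is why the joint distribution serves as the common starting point.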

general joint distribution • p(G(f),G(o)) : where G is some mapping/transformation/operator that is applied to the variable values • morphing • filter • convolution • deformation • fuzzy
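As an illustration of the operator G, the sketch below applies a simple neighbourhood-mean (box) filter to both the forecast and the observed field before they are compared. The box filter is only one possible choice of G, picked here for brevity; the function name `box_filter` and the toy 3x3 fields are assumptions.

```python
def box_filter(field, radius):
    """Replace each grid value by the mean over a (2*radius+1)^2 window, truncated at the edges."""
    rows, cols = len(field), len(field[0])
    out = [[0.0] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            window = [
                field[a][b]
                for a in range(max(0, i - radius), min(rows, i + radius + 1))
                for b in range(max(0, j - radius), min(cols, j + radius + 1))
            ]
            out[i][j] = sum(window) / len(window)
    return out


# Hypothetical fields: the forecast places a maximum one grid point away from the observation.
fcst = [[0, 0, 0], [0, 9, 0], [0, 0, 0]]
obs = [[0, 0, 0], [0, 0, 0], [0, 0, 9]]
g_fcst = box_filter(fcst, 1)  # G(f)
g_obs = box_filter(obs, 1)    # G(o)
```

After filtering, the two fields agree at the centre point even though the raw fields do not, which shows how the choice of G changes what counts as a good forecast.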

general joint distribution • p(Gm(f),Gm(o)) : where Gm is a specific aspect/attribute/characteristic that results from the mapping operator • distributions-oriented • measures-oriented • compute some error measure or score that is a function of Gm(f),Gm(o) • MSE
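A measures-oriented score such as MSE is simply a function of the paired attribute values Gm(f) and Gm(o). The sketch below assumes the attribute is feature area and that the features have already been paired; the sample values are hypothetical.

```python
def mean_squared_error(fcst_values, obs_values):
    """MSE between paired forecast and observed attribute values."""
    if len(fcst_values) != len(obs_values):
        raise ValueError("attribute samples must pair up")
    n = len(fcst_values)
    return sum((f - o) ** 2 for f, o in zip(fcst_values, obs_values)) / n


# Hypothetical areas (grid cells) of three matched forecast/observed features.
fcst_area = [10, 20, 30]
obs_area = [12, 20, 26]
mse = mean_squared_error(fcst_area, obs_area)
```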

Terminology that we might standardize • “feature” – a distinct or important physical object that can be identified within meteorological data • “attribute” – a characteristic or quality of a feature, an aspect that can be measured • “similarity” – the degree of resemblance between features • “distance” – the degree of difference between features • (others?)

framework • follow Murphy (1993) and Murphy and Winkler (1987) terminology • joint distribution of forecast and observed features • goodness: consistency, quality, value

aspects of quality • accuracy: correspondence between forecast and observed feature attributes • single and/or multiple? • bias: correspondence between mean forecast and mean observed attributes • resolution • reliability • discrimination • stratification
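For a single attribute, bias and one simple accuracy measure might be computed as follows. This is a sketch under the assumption that features are already matched; mean absolute error stands in for "accuracy" here, and the sample values are hypothetical.

```python
from statistics import mean


def attribute_bias(fcst_values, obs_values):
    """Bias: mean forecast attribute minus mean observed attribute."""
    return mean(fcst_values) - mean(obs_values)


def attribute_mae(fcst_values, obs_values):
    """Accuracy expressed as mean absolute error over matched feature pairs."""
    return mean(abs(f - o) for f, o in zip(fcst_values, obs_values))


# Hypothetical areas (grid cells) of three matched forecast/observed features.
fcst_area = [10, 20, 30]
obs_area = [12, 20, 26]
bias = attribute_bias(fcst_area, obs_area)
mae = attribute_mae(fcst_area, obs_area)
```

Bias depends only on the two marginal means, whereas the accuracy measure depends on the pairing, so a forecast can be nearly unbiased and still inaccurate.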

Features-based process • Identify features FCST OBS

Features-based process • Characterize features FCST OBS

Features-based process • Compare features FCST OBS • How to measure differences between objects? • How to determine false alarms/missed events?

Features-based process • Classify features FCST OBS

feature identification • procedures for locating a feature within the meteorological data • will depend on the problem/phenomena/user of interest • a set of instructions that can (easily) be followed/programmed in order for features to be objectively identified in an automated fashion
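One common way to make identification objective and automatable is to threshold the field and label connected regions. The sketch below does this with a breadth-first search over 4-connected grid cells; the threshold value, the connectivity rule, and the name `identify_features` are illustrative assumptions, since the slides do not prescribe a specific procedure.

```python
from collections import deque


def identify_features(field, threshold):
    """Return a list of features, each a list of (row, col) cells with field >= threshold."""
    rows, cols = len(field), len(field[0])
    seen = [[False] * cols for _ in range(rows)]
    features = []
    for i in range(rows):
        for j in range(cols):
            if seen[i][j] or field[i][j] < threshold:
                continue
            # Start a new feature and grow it over 4-connected neighbours.
            queue = deque([(i, j)])
            seen[i][j] = True
            cells = []
            while queue:
                a, b = queue.popleft()
                cells.append((a, b))
                for da, db in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    na, nb = a + da, b + db
                    if (0 <= na < rows and 0 <= nb < cols
                            and not seen[na][nb] and field[na][nb] >= threshold):
                        seen[na][nb] = True
                        queue.append((na, nb))
            features.append(cells)
    return features


# Hypothetical field with two separate regions at or above the threshold.
field = [
    [0, 6, 7, 0, 0],
    [0, 8, 0, 0, 0],
    [0, 0, 0, 0, 9],
    [0, 0, 0, 5, 9],
]
features = identify_features(field, threshold=5)
```

The same function would be run on the forecast and the observed field so that both sets of features come from identical rules.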

feature characterization • a set of attributes that describe important aspects of each feature • numerical values will be the most useful
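Once a feature is a set of grid cells, numerical attributes follow directly. The sketch below computes a few plausible ones; the attribute set (area, centroid, mean and maximum intensity) and the name `characterize` are assumptions chosen for illustration.

```python
def characterize(cells, field):
    """Summarize one feature, given its (row, col) cells, as a dict of numerical attributes."""
    values = [field[r][c] for r, c in cells]
    n = len(cells)
    return {
        "area": n,  # number of grid cells
        "centroid": (sum(r for r, _ in cells) / n, sum(c for _, c in cells) / n),
        "mean_intensity": sum(values) / n,
        "max_intensity": max(values),
    }


# Hypothetical feature occupying three cells of a small field.
field = [[0, 6, 7], [0, 8, 0]]
cells = [(0, 1), (0, 2), (1, 1)]
attrs = characterize(cells, field)
```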

feature comparison • similarity or distance measures • systematic method of matching or pairing observed and forecast features • determination of false alarms? • determination of missed events?

classification • a procedure to place similar features into groups or classes • reduces the dimensionality of the verification problem • similar to going from a scatter plot to a contingency table • not necessary/may not always be used
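The scatter-plot-to-contingency-table analogy can be made concrete: classify each matched feature by an attribute, then count the class pairs. The sketch below assumes a two-class split on area; the cutoff, class names, and sample pairs are hypothetical.

```python
from collections import Counter


def classify(area, cutoff):
    """Place a feature into one of two classes based on its area."""
    return "large" if area >= cutoff else "small"


def contingency_table(pairs, cutoff):
    """Count (forecast class, observed class) combinations over matched feature pairs."""
    return Counter((classify(f, cutoff), classify(o, cutoff)) for f, o in pairs)


# Hypothetical (forecast area, observed area) pairs for four matched features.
pairs = [(12, 15), (40, 35), (8, 30), (50, 55)]
table = contingency_table(pairs, cutoff=25)
```

Four continuous pairs reduce to three table entries, which is the dimensionality reduction the slide refers to.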

How to match observed and forecast objects? • dij = ‘distance’ between Fi and Oj • …for each forecast object, choose closest observed object • If di* > dT then false alarm • …for each observed object, choose closest forecast object • If d*j > dT : missed event • Objects might “match” more than once… • (figure: forecast objects F1, F2 and observed objects O1, O2, O3; F2 = false alarm, one observed object = missed event)
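The matching rules on this slide can be sketched as a two-way nearest-neighbour search with a distance threshold. The function name `match_objects` and the distance values below are hypothetical; only the decision rules (closest object, compare against dT) come from the slide.

```python
def match_objects(dist, d_threshold):
    """dist[i][j] is the distance between forecast object i and observed object j."""
    n_fcst, n_obs = len(dist), len(dist[0])

    # For each forecast object, choose the closest observed object.
    fcst_match, false_alarms = {}, []
    for i in range(n_fcst):
        j_best = min(range(n_obs), key=lambda j: dist[i][j])
        if dist[i][j_best] > d_threshold:
            false_alarms.append(i)  # d_i* > dT
        else:
            fcst_match[i] = j_best

    # For each observed object, choose the closest forecast object.
    obs_match, missed = {}, []
    for j in range(n_obs):
        i_best = min(range(n_fcst), key=lambda i: dist[i][j])
        if dist[i_best][j] > d_threshold:
            missed.append(j)  # d_*j > dT
        else:
            obs_match[j] = i_best

    return fcst_match, obs_match, false_alarms, missed


# Hypothetical distances for two forecast objects (rows) and three observed objects (columns).
dist = [
    [1.0, 3.0, 9.0],  # F1 to O1, O2, O3
    [8.0, 7.0, 6.0],  # F2 to O1, O2, O3
]
fcst_match, obs_match, false_alarms, missed = match_objects(dist, d_threshold=4)
```

In this made-up case F1 is the closest forecast object for both O1 and O2, so it matches more than once, while F2 is a false alarm and O3 is a missed event.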

Example of object verification • ARW 2km (CAPS) forecast and radar mosaic observations • Figure labels: Fcst_1, Fcst_2, Obs_1, Obs_2 • Object identification procedure identifies 4 forecast objects and 5 observed objects

ARW 2km (CAPS) Radar mosaic Distances between objects • Use dT = 4 as threshold • Match objects, find false alarms, missed events

ARW4 ARW2 Df = .04 Dl = -.07 Df = .07 Dl = .08 median position errors matching obs object given a forecast object NMM4 Df = .04 Dl = .22