A Support Vector Method for Multivariate Performance Measures

This presentation covers Thorsten Joachims' approach to directly optimizing multivariate performance measures, in particular the F-measure, in classification tasks. Conventional classifiers minimize error rate and do not account for measures such as precision and recall. The method reformulates the loss over a whole sample rather than as a sum over individual examples, so it also covers measures that do not decompose linearly. The resulting multivariate support vector machine has an exponential number of constraints; the talk shows how contingency tables and a constraint-selection algorithm keep training tractable.

Presentation Transcript

A Support Vector Method for Multivariate Performance Measures • Author: Thorsten Joachims (ICML’05) • Presenter: Lei Tang

Motivation • Current classifiers focus on error rate. How can we optimize directly for other performance measures? • Precision, recall, F-measure, etc.

Existing Approaches • Accurately estimate the class-membership probabilities of each example. (Difficult) • Optimize different but tractable variants of the measure. But for non-linear measures (F-measure), extensive cross-validation is required. • Directly optimize the measure, as has been done for ROCArea. But no such method exists for F-measure.

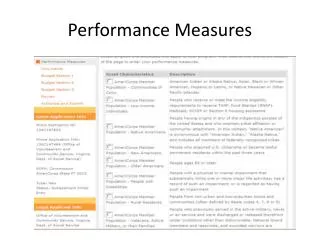

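Reformulation: Sample-based Loss • Given a training sample S and a test sample S’, our goal is to minimize the expected loss on S’. • Empirical loss: the same loss evaluated on the training sample S. • Example-based loss: the special case in which the loss function decomposes linearly into a sum over individual examples.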
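The formulas on this slide did not survive extraction. As a hedged reconstruction, assuming the slide follows the notation of Joachims' ICML'05 paper (the symbols h, Δ, δ and n below are taken from the paper, not from the transcript), the three quantities can be written as:

```latex
% Expected sample-based loss over a test sample S' of size n'
R^{\Delta}(\bar{h}) = \int \Delta\big((h(x'_1),\dots,h(x'_{n'})),\,(y'_1,\dots,y'_{n'})\big)\, d\Pr(S')

% Empirical loss on the training sample S of size n
\hat{R}^{\Delta}_{S}(\bar{h}) = \Delta\big((h(x_1),\dots,h(x_n)),\,(y_1,\dots,y_n)\big)

% Example-based loss: Delta decomposes linearly over examples
\Delta\big((h(x_1),\dots,h(x_n)),\,(y_1,\dots,y_n)\big) = \frac{1}{n}\sum_{i=1}^{n} \delta\big(h(x_i),\, y_i\big)
```

Error rate satisfies the last identity, while the F-measure does not, which is why the sample-based view is needed.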

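SVM • Original SVM: one margin constraint and one slack variable per training example. • Multivariate SVM: a single slack variable, with one constraint per alternative labeling of the whole sample. Here, the joint feature map is a function that returns a feature vector for the pair (x, y). • Prediction: the labeling of the sample that maximizes the score under the learned weight vector.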
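The optimization problems themselves are missing from the transcript. The following is a sketch of how they appear in Joachims' paper, under the assumption that the slide reproduces them unchanged; Ψ, ξ, C and the bar notation for whole-sample tuples are the paper's symbols and do not occur in the extracted text.

```latex
% Original SVM: one slack variable per example
\min_{w,\,\xi \ge 0} \ \frac{1}{2}\|w\|^2 + C \sum_{i=1}^{n} \xi_i
\quad \text{s.t.} \quad \forall i:\ y_i\,(w \cdot x_i) \ge 1 - \xi_i

% Multivariate SVM: one slack variable, one constraint per alternative labeling
\min_{w,\,\xi \ge 0} \ \frac{1}{2}\|w\|^2 + C\,\xi
\quad \text{s.t.} \quad \forall \bar{y}' \in \bar{\mathcal{Y}} \setminus \{\bar{y}\}:\
w^{\top}\big[\Psi(\bar{x},\bar{y}) - \Psi(\bar{x},\bar{y}')\big] \ge \Delta(\bar{y}',\bar{y}) - \xi

% Joint feature map and prediction rule
\Psi(\bar{x},\bar{y}') = \sum_{i=1}^{n} y'_i\, x_i, \qquad
\bar{h}(\bar{x}) = \arg\max_{\bar{y}' \in \bar{\mathcal{Y}}} \ w^{\top}\Psi(\bar{x},\bar{y}')
```

With this choice of Ψ the score of a labeling is the sum of y'_i (w · x_i), so the prediction rule reduces to taking the sign of w · x_i for each example.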

Problems • Too many constraints! With N samples and k class labels, |Y| = k^N. • Do we really need to include all the constraints?

Algorithm Constraint Selection

Contingency Table • Still impractical! We still have to calculate the argmax over all possible labelings. • Contingency table: with N samples, how many different tables are there?
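To make the question concrete, here is a small sketch that compares the number of labelings with the number of contingency tables in the binary case. It assumes the usual convention a = true positives, b = false positives, c = false negatives, d = true negatives; since a + c and b + d are fixed by the true labels, a table is determined by the pair (a, d). The function names are illustrative only.

```python
def count_labelings(n, k=2):
    """Number of ways to label n examples with k classes: k**n."""
    return k ** n


def count_contingency_tables(y):
    """Number of distinct 2x2 contingency tables for binary labels y in {+1, -1}.

    a ranges over 0..#pos and d over 0..#neg, and (a, d) fixes the table,
    so there are (#pos + 1) * (#neg + 1) tables, which is O(n^2).
    """
    n_pos = sum(1 for t in y if t == 1)
    n_neg = len(y) - n_pos
    return (n_pos + 1) * (n_neg + 1)


if __name__ == "__main__":
    y = [1] * 8 + [-1] * 12
    print("labelings:", count_labelings(len(y)))
    print("tables:   ", count_contingency_tables(y))
```

For 20 examples with 8 positives there are 1,048,576 labelings but only 117 tables, which is what makes a search over tables feasible.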

Algorithm for argmax • Exhaustively search all possible contingency tables and take the maximum. • Given a table, what should the assignment be?
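One way to answer the assignment question, sketched here under assumptions: for a fixed table, the score term is largest when the a positives and the b negatives with the highest values of w · x are the ones labeled +1. The code below assumes binary labels in {+1, -1}, a joint score equal to the sum of y'_i (w · x_i), and a loss that depends only on the table (a, b, c, d) = (TP, FP, FN, TN). The names `most_violated_labeling` and `error_rate_loss` are hypothetical, and error rate is used only as a stand-in for any table-based loss.

```python
import numpy as np


def error_rate_loss(a, b, c, d):
    """Stand-in table-based loss: percentage of misclassified examples."""
    return 100.0 * (b + c) / (a + b + c + d)


def most_violated_labeling(scores, y, loss):
    """Search all contingency tables for the labeling maximizing loss + score.

    scores[i] is w . x_i, y[i] is the true label in {+1, -1}, and
    loss(a, b, c, d) is any loss computed from the contingency table.
    """
    scores = np.asarray(scores, dtype=float)
    y = np.asarray(y)
    pos = np.flatnonzero(y == 1)
    neg = np.flatnonzero(y == -1)
    # Sort each group by decreasing score.
    pos = pos[np.argsort(-scores[pos], kind="stable")]
    neg = neg[np.argsort(-scores[neg], kind="stable")]

    best_value, best_labels = -np.inf, None
    for a in range(len(pos) + 1):          # true positives
        c = len(pos) - a                   # false negatives
        for b in range(len(neg) + 1):      # false positives
            d = len(neg) - b               # true negatives
            labels = -np.ones(len(y), dtype=int)
            labels[pos[:a]] = 1            # top-a positives predicted +1
            labels[neg[:b]] = 1            # top-b negatives predicted +1
            value = loss(a, b, c, d) + float(np.dot(labels, scores))
            if value > best_value:
                best_value, best_labels = value, labels
    return best_labels, best_value


if __name__ == "__main__":
    labels, value = most_violated_labeling(
        [0.9, -0.3, 0.2, -1.1, 0.5], [1, 1, -1, -1, 1], error_rate_loss
    )
    print(labels, value)
```

The double loop visits (#pos + 1)(#neg + 1) tables, so the search is quadratic in the number of examples rather than exponential. This sketch rebuilds the label vector for every table for readability; an incremental update of the score would be faster.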

Various Loss Functions • F-measure • Precision/Recall at k (just look at the top k data points) • Precision/Recall Break-Even Point: the search space is reduced because a+b = a+c
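The losses above can all be computed from a contingency table. The sketch below assumes a = true positives, b = false positives, c = false negatives, d = true negatives, and scales each loss as 100 · (1 − measure); both the scaling and the function names are assumptions rather than something stated on the slide. The generator illustrates the break-even restriction a+b = a+c, which is the same as b = c.

```python
def f_beta_loss(a, b, c, d, beta=1.0):
    """100 * (1 - F_beta); F_beta is taken as 0 when it is undefined."""
    num = (1.0 + beta ** 2) * a
    denom = num + b + (beta ** 2) * c
    f = num / denom if denom > 0 else 0.0
    return 100.0 * (1.0 - f)


def prbep_loss(a, b, c, d):
    """100 * (1 - precision) on a table where precision equals recall (b == c)."""
    if b != c:
        raise ValueError("break-even point requires a + b == a + c")
    precision = a / (a + b) if (a + b) > 0 else 0.0
    return 100.0 * (1.0 - precision)


def prbep_tables(n_pos, n_neg):
    """Yield only the tables (a, b, c, d) with a + b == a + c, i.e. b == c."""
    for a in range(n_pos + 1):
        c = n_pos - a
        b = c                      # as many false positives as false negatives
        if b <= n_neg:
            yield a, b, c, n_neg - b


if __name__ == "__main__":
    for table in prbep_tables(3, 5):
        print(table, prbep_loss(*table), f_beta_loss(*table))
```

Under the break-even restriction only one value of b is allowed for each a, so the number of candidate tables drops from quadratic to linear in the number of examples.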