Surface normals and principal component analysis (PCA)

Surface normals and principal component analysis (PCA). 3DM slides by Marc van Kreveld

Normal of a surface • Defined at points on the surface: normal of the tangent plane to the surface at that point • Well-defined and unique inside the facets of any polyhedron • At edges and vertices, the tangent plane is not unique or not defined (convex/reflex edge), so the normal is undefined

Normal of a surface • On a smooth surface without a boundary, the normal is unique and well-defined everywhere (smooth simply means that the derivatives of the surface exist everywhere) • On a smooth surface (manifold) with boundary, the normal is not defined on the boundary

Normal of a surface • The normal at edges or vertices is often defined in some convenient way: some average of the normals of the incident triangles

Normal of a surface • No matter what choice we make at a vertex, a piecewise linear surface will not have a continuously changing normal, and this is visible after computing illumination • (Figure note: the arrows drawn are not normals; on a flat facet the normals would all be parallel)

Curvature • The rate of change of the normal is the curvature • (Figure: example curves labeled infinite curvature, higher curvature, lower curvature, and zero curvature)

Curvature • A circle is a shape that has constant curvature everywhere • The same is true for a line, whose curvature is zero everywhere

Curvature • Curvature can be positive or negative • Intuitively, the magnitude of the curvature is the curvature of the circle that looks most like the curve close to the point of interest • (Figure labels: negative curvature, positive curvature)

Curvature • The curvature at any point on a circle is the inverse of its radius: a circle of radius r has curvature 1/r • The (absolute) curvature at any point on a curve is the curvature of the circle through that point that has the same first and second derivatives at that point (so it is defined only for $C^2$ curves)
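The osculating-circle definition can be checked numerically. This is a minimal sketch, not taken from the slides: it uses the standard formula for the signed curvature of a regular planar curve (x(t), y(t)), $\kappa = (x'y'' - y'x'') / (x'^2 + y'^2)^{3/2}$, and the function names are illustrative. For a circle of radius r it returns 1/r; the sign depends on the direction in which the curve is traversed.

```python
import math

def signed_curvature(dx, dy, ddx, ddy):
    """Signed curvature of a planar curve (x(t), y(t)) at one parameter value,
    given the first derivatives (dx, dy) and second derivatives (ddx, ddy)."""
    speed_sq = dx * dx + dy * dy
    if speed_sq == 0:
        raise ValueError("curve is not regular here (zero first derivative)")
    return (dx * ddy - dy * ddx) / speed_sq ** 1.5

def circle_curvature(r, t):
    """Curvature of the circle (r cos t, r sin t), traversed counterclockwise."""
    dx, dy = -r * math.sin(t), r * math.cos(t)
    ddx, ddy = -r * math.cos(t), -r * math.sin(t)
    return signed_curvature(dx, dy, ddx, ddy)

# A circle of radius 2 has curvature 1/2 at every point (up to rounding)
print(circle_curvature(2.0, 0.7))
# A straight line (zero second derivative) has curvature 0
print(signed_curvature(1.0, 0.0, 0.0, 0.0))   # 0.0
```

Traversing the same circle clockwise gives -1/r, which is one way to see why curvature carries a sign.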

Curvature • For a 3D surface, there are curvatures in all directions in the tangent plane

Curvature • (Figure labels: negative, positive, inside)

Properties at a point • A point on a smooth surface has various properties: • location • normal (first derivative) / tangent plane • two/many curvatures (second derivative)

Normal of a point in a point set? • Can we estimate the normal for each point in a scanned point cloud? This would help reconstruction (e.g. for RANSAC)

Normal of a point in a point set • The main idea of various methods to estimate the normal of a point q in a point cloud: • collect some nearest neighbors of q, for instance 12 • fit a plane to q and its 12 neighbors • use the normal of this plane as the estimated normal for q
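Collecting the neighbors is the first step. Below is a minimal brute-force sketch. It assumes the point cloud is a list of (x, y, z) tuples. `k_nearest_neighbors` is a hypothetical helper name. For large scans, a spatial index such as a k-d tree would normally replace the linear scan.

```python
import math

def k_nearest_neighbors(points, q_index, k=12):
    """Indices of the k points closest to points[q_index] (Euclidean distance),
    excluding q itself. Brute force: sorts all other points by distance."""
    q = points[q_index]
    others = [i for i in range(len(points)) if i != q_index]
    others.sort(key=lambda i: math.dist(points[i], q))
    return others[:k]

# Usage: points on a 5 x 5 grid in the plane z = 0
cloud = [(float(x), float(y), 0.0) for x in range(5) for y in range(5)]
neighbors = k_nearest_neighbors(cloud, q_index=12)   # q is the point (2, 2, 0)
print(len(neighbors))   # 12
```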

Normal estimation at a point • Risk: the 12 nearest neighbors of q are not nicely spread in all directions on the plane; then the computed normal could even be perpendicular to the real normal!

Normal estimation at a point • Also: the quality of normals of points near edges of the scanned shape is often not so good • We want a way of knowing how good the estimated normal seems to be

Principal component analysis • General technique for data analysis • Uses the statistical concept of correlation • Uses the linear algebra concept of eigenvectors • Can be used for normal estimation and tells something about the quality (clearness, obviousness) of the normal

Correlation • Degree of correspondence/changing together of two variables measured from objects • in a population of people, length and weight are correlated • in decathlon, performance on 100 meters and long jump are correlated (so are shot put and discus throw) • (Figure: Pearson’s correlation coefficient)

Covariance, correlation • For two variables x and y, their covariance is defined as $\sigma(x,y) = E[(x - E[x])(y - E[y])] = E[xy] - E[x]\,E[y]$ • $E[x]$ is the expected value of x, equal to the mean $\bar{x}$ • Note that the variance $\sigma^2(x) = \sigma(x,x)$, the covariance of x with itself, where $\sigma(x)$ is the standard deviation • Correlation $\rho_{x,y} = \sigma(x,y) / (\sigma(x)\,\sigma(y))$

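Covariance • For a data set of pairs $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$, the covariance can be computed as $\sigma(x,y) = \frac{1}{n}\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})$, where $\bar{x}$ and $\bar{y}$ are the mean values of the $x_i$ and $y_i$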
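Both forms of the covariance, and the correlation coefficient, can be computed directly. Below is a minimal sketch. It assumes the population (1/n) convention, which matches the later remark that an eigenvalue equals "variance times 5" for five points. The sample data are the five pairs from the PCA example later in these slides. The function names are illustrative.

```python
import math

def mean(values):
    return sum(values) / len(values)

def covariance(xs, ys):
    """(1/n) * sum of (x_i - mean(x)) * (y_i - mean(y))."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)

def covariance_from_expectations(xs, ys):
    """The same quantity written as E[xy] - E[x] E[y]."""
    return mean([x * y for x, y in zip(xs, ys)]) - mean(xs) * mean(ys)

def correlation(xs, ys):
    """Pearson correlation sigma(x, y) / (sigma(x) sigma(y)),
    using sigma(x)^2 = sigma(x, x)."""
    return covariance(xs, ys) / math.sqrt(covariance(xs, xs) * covariance(ys, ys))

xs = [1, 1, 3, 4, 6]
ys = [1, 2, 2, 2, 3]
print(covariance(xs, ys))                     # 1.0
print(covariance_from_expectations(xs, ys))   # 1.0
print(correlation(xs, ys))                    # about 0.833 (= 5/6)
```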

Data matrix • Suppose we have weight w, length l, and blood pressure b of seven people • Let the means of w, l, and b be $\bar{w}$, $\bar{l}$, and $\bar{b}$ • Assume the measurements have been adjusted by subtracting the appropriate mean • Then the data matrix X is the 3 × 7 matrix with one row per measurement (w, l, b) and one column per person • Note: each row has zero mean; the data is mean-centered

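Covariance matrix • The covariance matrix is $XX^T$ (strictly, $\frac{1}{n}XX^T$ for n objects; this factor does not change the eigenvectors) • In the example, this is a 3 × 3 matrix • The covariance matrix is square and symmetric • The main diagonal contains the variances • The off-diagonal entries are the covariances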
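The data matrix and $XX^T$ can be built in a few lines. Below is a minimal sketch. It assumes the data matrix is stored as a list of rows, with one row per measured variable and one column per object. The seven-person measurements are not given here, so the usage reuses the five data pairs from the PCA example later in these slides. Dividing the result by the number of objects gives the covariances themselves.

```python
def mean_center(rows):
    """Subtract each row's mean so that every row of the data matrix has zero mean."""
    centered = []
    for row in rows:
        m = sum(row) / len(row)
        centered.append([v - m for v in row])
    return centered

def times_transpose(X):
    """Return X X^T: entry (i, j) is the dot product of rows i and j of X."""
    return [[sum(a * b for a, b in zip(ri, rj)) for rj in X] for ri in X]

# Rows: x values and y values of five objects
X = mean_center([[1, 1, 3, 4, 6],
                 [1, 2, 2, 2, 3]])
print(times_transpose(X))   # [[18.0, 5.0], [5.0, 2.0]]
```

The result is symmetric because entry (i, j) and entry (j, i) are the same dot product.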

Principal component analysis • PCA is a linear transformation (3 x 3 in our example) that makes new base vectors such that • the first base vector has a direction that realizes the largest possible variance (when projected onto a line) • the second base vector is orthogonal to the first and realizes the largest possible variance among those vectors • the third base vector is orthogonal to the first and second base vector and … • … and so on … • Hence, PCA is an orthogonal linear transformation

Principal component analysis • In 2D, after finding the first base vector, the second one is immediately determined because of the requirement of orthogonality

Principal component analysis • In 3D, after the first base vector is found, the data is projected onto a plane with this base vector as its normal, and we find the second base vector in this plane as the direction with the largest variance in that plane (this “removes” the variance explained by the first base vector)

Principal component analysis • After the first two base vectors are found, the data is projected onto a line orthogonal to the first two base vectors, and the third base vector is found on this line; in 3D it is simply given by the cross product of the first two base vectors
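In 3D the last step needs no search. Below is a minimal sketch of the cross product for 3D vectors given as tuples. The names `cross` and `dot` are illustrative. The example base vectors are hypothetical orthogonal unit vectors.

```python
import math

def cross(u, v):
    """Cross product of two 3D vectors; the result is orthogonal to both."""
    return (u[1] * v[2] - u[2] * v[1],
            u[2] * v[0] - u[0] * v[2],
            u[0] * v[1] - u[1] * v[0])

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

# Two orthogonal unit base vectors (hypothetical example values)
s = 1 / math.sqrt(2)
v1 = (s, s, 0.0)
v2 = (-s, s, 0.0)
v3 = cross(v1, v2)
print(v3)   # approximately (0.0, 0.0, 1.0)
```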

Principal component analysis • The subsequent variances we find are decreasing in value and give an “importance” to the base vectors • This process explains why principal component analysis can be used for dimension reduction: maybe all the variance in, say, 10 measurement types can be explained using 4 or 3 (new) dimensions

Principal component analysis • In actual computation, all base vectors are found at once using linear algebra techniques

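Eigenvectors of a matrix • A non-zero vector v is an eigenvector of a matrix X if $Xv = \lambda v$ for some scalar $\lambda$, and $\lambda$ is called an eigenvalue corresponding to v • Example 1: (1,1) is an eigenvector of $\begin{pmatrix}2 & 1\\ 1 & 2\end{pmatrix}$ because $\begin{pmatrix}2 & 1\\ 1 & 2\end{pmatrix}\begin{pmatrix}1\\ 1\end{pmatrix} = \begin{pmatrix}3\\ 3\end{pmatrix} = 3\begin{pmatrix}1\\ 1\end{pmatrix}$ • In words: the matrix leaves the direction of an eigenvector the same, but its length is scaled by the eigenvalue 3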

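Eigenvectors of a matrix • A non-zero vector v is an eigenvector of a matrix X if $Xv = \lambda v$ for some scalar $\lambda$, and $\lambda$ is called an eigenvalue corresponding to v • Example 1: (1, –1) is also an eigenvector of $\begin{pmatrix}2 & 1\\ 1 & 2\end{pmatrix}$ because $\begin{pmatrix}2 & 1\\ 1 & 2\end{pmatrix}\begin{pmatrix}1\\ -1\end{pmatrix} = \begin{pmatrix}1\\ -1\end{pmatrix} = 1\begin{pmatrix}1\\ -1\end{pmatrix}$ • In words: the matrix leaves the direction and length of (1, –1) the same because its eigenvalue is 1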

Eigenvectors of a matrix • Consider the transformation (animated on the slide): blue vectors: (1, 1); pink vectors: (1, –1) and (–1, 1); red vectors are not eigenvectors (they change direction)

Eigenvectors of a matrix • If v is an eigenvector, then any non-zero vector parallel to v (any non-zero multiple cv) is also an eigenvector (with the same eigenvalue!) • If the eigenvalue is –1 (negative in general), then the eigenvector will be reversed in direction by the matrix • Only square matrices have eigenvectors and eigenvalues
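The eigenvector definition and the properties above are easy to test numerically. This is a minimal sketch with illustrative helper names. The matrix $\begin{pmatrix}2&1\\1&2\end{pmatrix}$ is an assumption here. It is the unique 2 × 2 matrix with eigenvector (1, 1) for eigenvalue 3 and eigenvector (1, –1) for eigenvalue 1, as in Example 1.

```python
def mat_vec(A, v):
    """Multiply a square matrix (a list of rows) by a vector."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def is_eigenvector(A, v, lam, tol=1e-12):
    """True if v is non-zero and A v equals lam * v (up to tol per coordinate)."""
    if all(x == 0 for x in v):
        return False
    return all(abs(y - lam * x) <= tol for y, x in zip(mat_vec(A, v), v))

A = [[2, 1], [1, 2]]
print(mat_vec(A, [1, 1]))              # [3, 3]: direction kept, length times 3
print(mat_vec(A, [1, -1]))             # [1, -1]: eigenvalue 1
print(is_eigenvector(A, [-4, -4], 3))  # True: any non-zero multiple works
print(is_eigenvector(A, [1, 0], 2))    # False: [1, 0] changes direction
```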

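Eigenvectors, a 2D example • Find the eigenvectors and eigenvalues of a 2 × 2 matrix A (its diagonal entries are –2 and 2, and its off-diagonal entries have product –3) • We need $Av = \lambda v$ by definition, or $(A - \lambda I)v = 0$ (in words: our matrix minus $\lambda$ times the identity matrix, applied to v, gives the zero vector) • Since v must be non-zero, this is the case exactly when $\det(A - \lambda I) = 0$ • $\det(A - \lambda I) = (-2 - \lambda)(2 - \lambda) - (-3) = \lambda^2 - 1 = 0$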

Eigenvectors, a 2D example • $\lambda^2 - 1 = 0$ gives $\lambda = 1$ or $\lambda = -1$ • The corresponding eigenvectors can be obtained by filling in each $\lambda$ and solving a set of equations • The polynomial in $\lambda$ given by $\det(A - \lambda I)$ is called the characteristic polynomial
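The same steps can be written out for any 2 × 2 matrix with real eigenvalues. This is a minimal sketch:

- the characteristic polynomial of $\begin{pmatrix}a&b\\c&d\end{pmatrix}$ is $\lambda^2 - (a+d)\lambda + (ad - bc)$;
- its roots come from the quadratic formula;
- an eigenvector is read off one row of $(A - \lambda I)v = 0$.

The example matrix $\begin{pmatrix}-2&-3\\1&2\end{pmatrix}$ is hypothetical. It was chosen to match the diagonal entries –2 and 2 and the off-diagonal product –3 in the determinant computation above, so it has the same characteristic polynomial $\lambda^2 - 1$.

```python
import math

def eigen_2x2(A):
    """Real eigenvalues and eigenvectors of a 2x2 matrix [[a, b], [c, d]],
    from the characteristic polynomial lambda^2 - (a + d) lambda + (ad - bc).
    Returns [(lambda1, v1), (lambda2, v2)] with lambda1 >= lambda2."""
    (a, b), (c, d) = A
    if b == 0 and c == 0:
        # Diagonal matrix: the coordinate axes are eigenvectors
        return sorted([(a, (1, 0)), (d, (0, 1))], key=lambda p: p[0], reverse=True)
    trace, det = a + d, a * d - b * c
    disc = trace * trace - 4 * det
    if disc < 0:
        raise ValueError("complex eigenvalues are not handled in this sketch")
    root = math.sqrt(disc)
    pairs = []
    for lam in ((trace + root) / 2, (trace - root) / 2):
        # A non-zero solution of (A - lam I) v = 0, taken from one row
        v = (b, lam - a) if b != 0 else (lam - d, c)
        pairs.append((lam, v))
    return pairs

for lam, v in eigen_2x2([[-2, -3], [1, 2]]):
    print(lam, v)
# 1.0 (-3, 3.0)
# -1.0 (-3, 1.0)
```

The vector (–3, 3) is parallel to (–1, 1), so any rescaling of it is an equally valid answer.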

Questions • Determine the eigenvectors and eigenvalues of What does the matrix do? Does that explain the eigenvectors and values? • Determine the eigenvectors and eigenvalues of What does the matrix do? Does that explain the eigenvectors and values? • Determine the eigenvectors and eigenvalues of

Principal component analysis • Recall: PCA is an orthogonal linear transformation • The new base vectors are the eigenvectors of the covariance matrix! • The eigenvalues are the variances of the data points when projected onto a line with the direction of the eigenvector (multiplied by the number of data points n if the covariance matrix is taken as $XX^T$ without the factor 1/n) • Geometrically, PCA is a rotation around the multi-dimensional mean (point) so that the base vectors align with the principal components (which is why the data matrix must be mean-centered)

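PCA example • Assume the data pairs (1,1), (1,2), (3,2), (4,2), and (6,3) • $\bar{x} = 15/5 = 3$ and $\bar{y} = 10/5 = 2$ • The mean-centered data becomes (-2,-1), (-2,0), (0,0), (1,0), and (3,1) • The data matrix is $X = \begin{pmatrix}-2 & -2 & 0 & 1 & 3\\ -1 & 0 & 0 & 0 & 1\end{pmatrix}$ • The covariance matrix is $XX^T = \begin{pmatrix}18 & 5\\ 5 & 2\end{pmatrix}$ • The characteristic polynomial is $\det\begin{pmatrix}18-\lambda & 5\\ 5 & 2-\lambda\end{pmatrix}$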

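PCA example • The characteristic polynomial is $\det\begin{pmatrix}18-\lambda & 5\\ 5 & 2-\lambda\end{pmatrix} = (18-\lambda)(2-\lambda) - 25 = \lambda^2 - 20\lambda + 11$ • Setting it to zero gives $\lambda = 10 \pm \sqrt{89}$, so $\lambda_1 \approx 19.43$ and $\lambda_2 \approx 0.57$ are the eigenvalues of the covariance matrix • We always choose the eigenvalues to be in decreasing order: $\lambda_1 \geq \lambda_2 \geq \ldots$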

PCA example • The first eigenvalue $\lambda_1 \approx 19.43$ corresponds to an eigenvector (1, 0.29) or anything parallel to it • The second eigenvalue $\lambda_2 \approx 0.57$ corresponds to an eigenvector (–0.29, 1) or anything parallel to it
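The numbers of this example can be reproduced step by step. This is a minimal sketch that follows the slides:

- mean-center the five pairs;
- form $XX^T$ without a 1/n factor;
- solve the characteristic polynomial with the quadratic formula;
- read an eigenvector off the first row of $(XX^T - \lambda I)v = 0$, scaled so that one coordinate is 1 as on the slides.

```python
import math

points = [(1, 1), (1, 2), (3, 2), (4, 2), (6, 3)]
n = len(points)
mx = sum(x for x, _ in points) / n      # 3.0
my = sum(y for _, y in points) / n      # 2.0
centered = [(x - mx, y - my) for x, y in points]

# Entries of X X^T for the 2 x 5 mean-centered data matrix X
sxx = sum(x * x for x, _ in centered)   # 18.0
sxy = sum(x * y for x, y in centered)   # 5.0
syy = sum(y * y for _, y in centered)   # 2.0

# Characteristic polynomial lambda^2 - (sxx + syy) lambda + (sxx syy - sxy^2)
trace = sxx + syy                       # 20.0
det = sxx * syy - sxy * sxy             # 11.0
root = math.sqrt(trace * trace - 4 * det)
lam1, lam2 = (trace + root) / 2, (trace - root) / 2   # 10 + sqrt(89), 10 - sqrt(89)

# First row of (X X^T - lambda I) v = 0: (sxx - lambda) v_x + sxy v_y = 0
v1 = (1.0, (lam1 - sxx) / sxy)          # x-coordinate fixed to 1
v2 = (sxy / (lam2 - sxx), 1.0)          # y-coordinate fixed to 1

print(f"{lam1:.2f} {lam2:.2f}")          # 19.43 0.57
print(f"({v1[0]:.2f}, {v1[1]:.2f})")     # (1.00, 0.29)
print(f"({v2[0]:.2f}, {v2[1]:.2f})")     # (-0.29, 1.00)
```

Because $XX^T$ is symmetric, the two eigenvectors come out orthogonal, as PCA requires.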

PCA example • The data points and the mean-centered data points

PCA example • The first principal component (purple): (1, 0.29) • Orthogonal projection onto the orange line (direction of the first eigenvector) yields the largest possible variance • The first eigenvalue $\lambda_1 \approx 19.43$ is the sum of the squared distances to the mean (variance times 5) for this projection

PCA example • Enlarged, and the non-squared distances shown

PCA example • The second principal component (green): (–0.29, 1) • Orthogonal projection onto the dark blue line (direction of the second eigenvector) yields the remaining variance • The second eigenvalue $\lambda_2 \approx 0.57$ is the sum of the squared distances to the mean (variance times 5) for this projection
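We can check directly that each eigenvalue equals the sum of squared distances to the mean after projection. This is a minimal sketch using the mean-centered points of this example. It uses the eigenvalues $10 \pm \sqrt{89}$ of $XX^T = \begin{pmatrix}18&5\\5&2\end{pmatrix}$, which are the roots of $\lambda^2 - 20\lambda + 11$. `sum_sq_projection` is an illustrative name. Projecting onto any other direction, such as the x-axis, gives a value between the two eigenvalues.

```python
import math

# Mean-centered data points of the example
centered = [(-2, -1), (-2, 0), (0, 0), (1, 0), (3, 1)]

def sum_sq_projection(points, direction):
    """Sum of squared distances to the mean (the origin, after centering)
    of the points projected orthogonally onto a line with this direction."""
    norm = math.hypot(direction[0], direction[1])
    ux, uy = direction[0] / norm, direction[1] / norm
    return sum((x * ux + y * uy) ** 2 for x, y in points)

lam1, lam2 = 10 + math.sqrt(89), 10 - math.sqrt(89)
v1 = (1.0, (lam1 - 18) / 5)      # about (1, 0.29)
v2 = (-(lam1 - 18) / 5, 1.0)     # about (-0.29, 1), orthogonal to v1

print(sum_sq_projection(centered, v1))      # about 19.43 = lam1 (variance times 5)
print(sum_sq_projection(centered, v2))      # about 0.57 = lam2
print(sum_sq_projection(centered, (1, 0)))  # 18.0, between lam2 and lam1
```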

PCA example • The fact that the first eigenvalue is much larger than the second means that there is a direction that explains most of the variance of the data; in other words, a line exists that fits the data well • When both eigenvalues are about equally large, the data is spread equally in all directions
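A common way to quantify "much larger" is the fraction of the total variance explained by each eigenvalue. This is a minimal sketch with an illustrative function name. What counts as "most of the variance" is a judgment call that the slides do not fix.

```python
def explained_variance_ratios(eigenvalues):
    """Each eigenvalue divided by the sum of all eigenvalues, largest first."""
    values = sorted(eigenvalues, reverse=True)
    total = sum(values)
    return [v / total for v in values]

# Eigenvalues of the example: the first direction explains about 97% of the variance
print(explained_variance_ratios([19.43, 0.57]))
# Equal eigenvalues: no preferred direction
print(explained_variance_ratios([1.0, 1.0]))    # [0.5, 0.5]
```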

PCA, eigenvectors and eigenvalues • In the pictures, identify the eigenvectors and state how different the eigenvalues appear to be

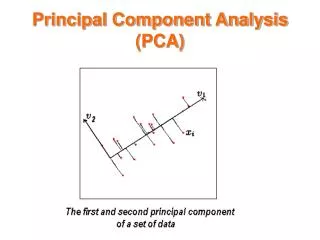

PCA observations in 3D • If the first eigenvalue is large and the other two are small, then the data points lie approximately on a line • through the 3D mean • with orientation parallel to the first eigenvector • If the first two eigenvalues are large and the third eigenvalue is small, then the points lie approximately on a plane • through the 3D mean • with orientation spanned by the first two eigenvectors, or equivalently with normal parallel to the third eigenvector
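These rules of thumb can be turned into a simple classifier. This is a minimal sketch. The threshold `ratio` is a hypothetical parameter: an eigenvalue counts as small when it is below `ratio` times the largest one. A suitable value depends on the scan.

```python
def classify_spread(eigenvalues, ratio=0.1):
    """Classify the shape of a 3D point set from its three PCA eigenvalues.
    'ratio' is a hypothetical threshold: an eigenvalue is called small when
    it is below ratio * (largest eigenvalue)."""
    l1, l2, l3 = sorted(eigenvalues, reverse=True)
    if l1 <= 0:
        return "single point"      # no variance at all

    def small(value):
        return value < ratio * l1

    if small(l2) and small(l3):
        return "line"              # along the first eigenvector
    if small(l3):
        return "plane"             # spanned by the first two eigenvectors
    return "spread out"            # no dominant line or plane

print(classify_spread([10.0, 0.2, 0.1]))   # line
print(classify_spread([10.0, 7.0, 0.1]))   # plane
print(classify_spread([10.0, 9.0, 8.0]))   # spread out
```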

PCA and local normal estimation • Recall that we wanted to estimate the normal at every point in a point cloud • Recall that we decided to use the 12 nearest neighbors for any point q, and find a fitting plane for q and its 12 nearest neighbors • Assume we have the 3D coordinates of these points, measured in meters

PCA and local normal estimation • Treat the 13 points and their three coordinates as data with three measurements, x, y, and z: we have a 3 × 13 data matrix • Apply PCA to get three eigenvalues $\lambda_1, \lambda_2, \lambda_3$ (in decreasing order) and eigenvectors $v_1, v_2, v_3$ • If the 13 points lie roughly in a plane, then $\lambda_3$ is small and the plane contains directions parallel to $v_1$ and $v_2$ • The estimated normal is perpendicular to $v_1$ and $v_2$, so it is the third eigenvector $v_3$
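Putting the pieces together, the normal estimate for one point can be sketched as follows. This is a minimal sketch, not the slides' implementation. It assumes q and its 12 neighbors are already collected as (x, y, z) tuples, and it:

- builds the 3 × 3 matrix $XX^T$ of the mean-centered 3 × 13 data matrix;
- diagonalizes that matrix with cyclic Jacobi rotations.

Jacobi rotation is a standard eigenvalue method for symmetric matrices. It is used here only so the example needs no libraries; in practice one would call a linear algebra library's symmetric eigensolver. The function names are hypothetical, and the sign of the returned normal is arbitrary.

```python
import math

def scatter_3x3(points):
    """X X^T for the mean-centered 3 x k data matrix of k points (x, y, z)."""
    k = len(points)
    mean = [sum(p[i] for p in points) / k for i in range(3)]
    centered = [[p[i] - mean[i] for i in range(3)] for p in points]
    return [[sum(c[i] * c[j] for c in centered) for j in range(3)] for i in range(3)]

def jacobi_eigen(S, max_sweeps=50, tol=1e-20):
    """Eigenvalues and unit eigenvectors of a symmetric 3x3 matrix using cyclic
    Jacobi rotations. Returns (values, vectors), largest eigenvalue first."""
    a = [row[:] for row in S]
    V = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(3) for j in range(3) if i != j)
        total = sum(a[i][j] ** 2 for i in range(3) for j in range(3))
        if off <= tol * total:
            break
        for p in range(2):
            for q in range(p + 1, 3):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that the (p, q) entry becomes zero
                theta = (a[q][q] - a[p][p]) / (2 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1))
                c = 1 / math.sqrt(t * t + 1)
                s = t * c
                for k in range(3):   # a <- a J (columns p and q)
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(3):   # a <- J^T a (rows p and q)
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(3):   # accumulate eigenvectors: V <- V J
                    vkp, vkq = V[k][p], V[k][q]
                    V[k][p], V[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    order = sorted(range(3), key=lambda i: a[i][i], reverse=True)
    values = [a[i][i] for i in order]
    vectors = [tuple(V[k][i] for k in range(3)) for i in order]
    return values, vectors

def estimate_normal(points):
    """PCA normal estimate: the eigenvector v3 of the smallest eigenvalue.
    Also returns the eigenvalues (lambda1 >= lambda2 >= lambda3)."""
    values, vectors = jacobi_eigen(scatter_3x3(points))
    return vectors[2], values

# 13 points on the plane x + y + z = 1 (true normal (1, 1, 1) / sqrt(3))
u, w = (1.0, -1.0, 0.0), (1.0, 1.0, -2.0)
params = [(s, t) for s in (-1, 0, 1) for t in (-1, 0, 1)] + [(2, 0), (-2, 0), (0, 2), (0, -2)]
pts = [tuple(1 / 3 + s * u[i] + t * w[i] for i in range(3)) for s, t in params]
normal, eigenvalues = estimate_normal(pts)
print(normal)        # close to +/-(0.577, 0.577, 0.577)
print(eigenvalues)   # the third value is close to 0
```

The size of $\lambda_3$ relative to $\lambda_1$ and $\lambda_2$ is what indicates how clear the estimated normal is.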

PCA and local normal estimation • How large should the eigenvalues $\lambda_1, \lambda_2, \lambda_3$ be for this to be true? • This depends on • scanning density • point distribution • scanning accuracy • curvature of the surface