Maximum likelihood (ML)

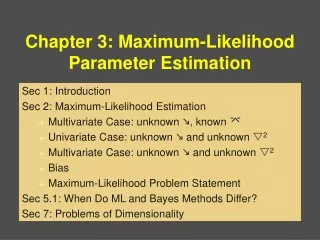

Maximum likelihood (ML) • Conditional distribution and likelihood • Maximum likelihood estimator • Information in the data and likelihood • Observed and Fisher’s information • Homework

Introduction It is often the case that we are interested in finding the values of some parameters of a system. We design an experiment and obtain observations $(x_1, \dots, x_n)$, which we then use to estimate the parameters. Once we know how the parameters and the observations are related (establishing this can itself be a challenging mathematical problem), we can use that relationship to estimate the parameters. Maximum likelihood is one of the techniques for estimating parameters from observations or experimental data. Other estimation techniques include Bayesian estimation, least squares, the method of moments and M-estimators. The result of the estimation is a function of the observations, $t(x_1, \dots, x_n)$. A function of the observations is called a statistic. It is a random variable, and in many cases we also want to find its distribution. In general, finding the distribution of a statistic is a challenging problem, but there are numerical techniques (e.g. the bootstrap) that approximate this distribution.
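
The bootstrap idea mentioned above can be sketched in a few lines. This is a minimal illustration, not part of the original lecture: the function name `bootstrap_distribution`, the simulated data and the choice of the sample mean as the statistic are all assumptions made for the example.

```python
import random
import statistics

def bootstrap_distribution(sample, statistic, n_resamples=2000, seed=0):
    """Approximate the distribution of `statistic` by resampling with replacement."""
    rng = random.Random(seed)
    n = len(sample)
    return [statistic(rng.choices(sample, k=n)) for _ in range(n_resamples)]

# Hypothetical data: 50 draws from N(0, 1), standing in for real observations.
data_rng = random.Random(1)
observations = [data_rng.gauss(0.0, 1.0) for _ in range(50)]

# Bootstrap replicates of the sample mean and their spread.
boot_means = bootstrap_distribution(observations, statistics.fmean)
boot_se = statistics.stdev(boot_means)

# For the mean, the textbook standard error s / sqrt(n) is available for comparison.
theory_se = statistics.stdev(observations) / len(observations) ** 0.5
print(f"bootstrap s.e. = {boot_se:.3f}, s/sqrt(n) = {theory_se:.3f}")
```

For the sample mean the two numbers should be close. The bootstrap becomes useful for statistics whose distribution has no simple closed form.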

Desirable properties of an estimator
• Unbiasedness. Bias is defined as the difference between the expected value of the estimator $t$ and the true parameter $\theta$: $B(\theta) = E(t) - \theta$. The expectation is taken using the probability distribution of the observations. An estimator is unbiased if its bias is zero.
• Efficiency. An efficient estimator is one with minimum variance, $\mathrm{var}(t) = E(t - E(t))^2$. The efficiency of an estimator is measured by its variance.
• Consistency. If an estimator converges to the true value as the number of observations goes to infinity, it is called a consistent estimator.
• Minimum mean square error (minimum m.s.e.). M.s.e. is defined as the expected value of the square of the difference (error) between the estimator and the true value, $M(t) = E(t - \theta)^2$. Since $M(t) = \mathrm{var}(t) + B(\theta)^2$, an estimator that is both unbiased and efficient has the smallest m.s.e. among unbiased estimators.
It is very difficult to achieve all these properties. Under some conditions the ML estimator obeys them asymptotically. Moreover, the distribution of the ML estimator is asymptotically normal, which simplifies the interpretation of the results.
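
Bias and m.s.e. can be estimated by simulation when they are hard to derive. The sketch below is an illustration under assumed settings (normal data, sample size 10, true variance 1). It compares two estimators of a variance, one dividing the sum of squares by $n$ and one dividing by $n-1$. The helper names are hypothetical.

```python
import random

def variance_estimate(sample, divisor):
    """Sum of squared deviations from the sample mean, divided by `divisor`."""
    mean = sum(sample) / len(sample)
    return sum((x - mean) ** 2 for x in sample) / divisor

def simulate(n=10, true_var=1.0, n_repeats=20000, seed=0):
    """Monte Carlo estimates of bias and m.s.e. for the two divisors."""
    rng = random.Random(seed)
    sd = true_var ** 0.5
    est_n, est_n1 = [], []
    for _ in range(n_repeats):
        sample = [rng.gauss(0.0, sd) for _ in range(n)]
        est_n.append(variance_estimate(sample, n))       # divisor n
        est_n1.append(variance_estimate(sample, n - 1))  # divisor n - 1

    def bias(est):
        return sum(est) / len(est) - true_var

    def mse(est):
        return sum((e - true_var) ** 2 for e in est) / len(est)

    return {"bias_n": bias(est_n), "mse_n": mse(est_n),
            "bias_n1": bias(est_n1), "mse_n1": mse(est_n1)}

result = simulate()
for name, value in result.items():
    print(f"{name}: {value:+.4f}")
```

In this setting the divisor-$n$ estimator is biased downwards but has the smaller m.s.e., which shows that unbiasedness and minimum m.s.e. are distinct requirements.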

Conditional probability distribution and likelihood Let us assume that we know that our random sample points came from a population whose distribution has parameter(s) $\theta$. We do not know $\theta$. If we knew it, we could write the probability distribution of a single observation, $f(x|\theta)$. Here $f(x|\theta)$ is the conditional distribution of the observed random variable given the parameter(s). If we observe $n$ independent sample points from the same population, then the joint conditional probability distribution of all observations can be written as $f(x_1, x_2, \dots, x_n|\theta) = \prod_{i=1}^{n} f(x_i|\theta)$. We can write the product of the individual probability distributions because the observations are independent (conditionally independent given the parameters). $f(x|\theta)$ is the probability mass function of an observation in the discrete case and the probability density in the continuous case. We can interpret $f(x_1, x_2, \dots, x_n|\theta)$ as the probability of observing the given sample points if the parameter $\theta$ were known. If we vary the parameter(s), we get different values of $f$. When $f$ is regarded as a probability distribution, the parameters are fixed and the observations vary. For a given set of observations we define the likelihood to be proportional to the conditional probability distribution: $L(\theta) \propto f(x_1, x_2, \dots, x_n|\theta)$.

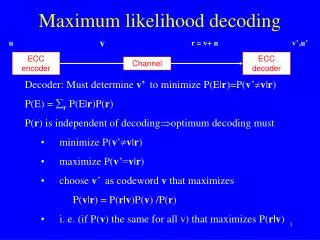

Conditional probability distribution and likelihood: Cont. When we talk about the conditional probability distribution of the observations given the parameter(s), we assume that the parameters are fixed and the observations vary. When we talk about likelihood, the observations are fixed and the parameters vary. That is the major difference between likelihood and conditional probability distribution. Sometimes, to emphasize that the parameters vary and the observations are fixed, the likelihood is written as $L(\theta|x_1, x_2, \dots, x_n)$. In this and the following lectures we will use one notation for probability and likelihood. When we talk about probability we assume that the observations vary, and when we talk about likelihood we assume that the parameters vary. The principle of maximum likelihood states that the best parameters are those that maximise the probability of observing the current values of the observations. Maximum likelihood chooses the parameters that satisfy $\hat{\theta} = \arg\max_{\theta} f(x_1, x_2, \dots, x_n|\theta)$.

Maximum likelihood The purpose of maximum likelihood is to maximize the likelihood function and so estimate the parameters. If the derivatives of the likelihood function exist, this can be done by solving $\frac{\partial L(\theta)}{\partial \theta} = 0$. The solutions of this equation give the possible values of the maximum likelihood estimator. If the solution is unique then it is the only estimator. In real applications there might be many solutions. Usually the logarithm of the likelihood is maximized instead of the likelihood itself. Since log is a strictly monotonically increasing function, the derivative of the likelihood and the derivative of the log of the likelihood have exactly the same roots. Because the observations are independent, the joint probability distribution of all observations is equal to the product of the individual probabilities, and we can write the log of the likelihood (denoted $l$) as $l(\theta) = \ln L(\theta) = \sum_{i=1}^{n} \ln f(x_i|\theta)$. Usually working with sums is easier than working with products.

Likelihood: Normal distribution example Let us assume that our observations come from a population with distribution N(0,1). We have five observations. For each observation we can write a log-likelihood function (red lines). The log-likelihood function for all observations is the sum of the individual log-likelihood functions (black line). As can be seen, the likelihood function for the five observations combined has a much more pronounced maximum than that for the individual observations. Usually, the more observations we have from the same population, the better the estimate of the parameter.
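
The summation of individual log-likelihoods described on this slide can be reproduced numerically. The sketch below assumes that the unknown parameter is the mean, that the variance is known and equal to 1, and that five simulated values stand in for the observations. The function names are illustrative.

```python
import math
import random

def normal_loglik(mu, x, sigma=1.0):
    """Log-likelihood of a single observation x under N(mu, sigma^2)."""
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)

def total_loglik(mu, sample):
    """Log-likelihood of all observations: the sum of the individual terms."""
    return sum(normal_loglik(mu, x) for x in sample)

# Hypothetical sample: five draws from N(0, 1).
rng = random.Random(0)
sample = [rng.gauss(0.0, 1.0) for _ in range(5)]

# Evaluate the combined log-likelihood on a grid of candidate means.
grid = [i / 100 for i in range(-300, 301)]
mu_hat = max(grid, key=lambda mu: total_loglik(mu, sample))
sample_mean = sum(sample) / len(sample)

print(f"grid maximiser = {mu_hat:.2f}, sample mean = {sample_mean:.4f}")
```

Each single-observation curve has curvature 1 in this setting, while the sum has curvature 5, which is why the combined maximum is sharper.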

Likelihood: Binomial distribution example Now let us take 10 observations from a binomial distribution with size = 1 (i.e. each observation is a single trial). Let us assume that the probability of success is equal to 0.5. Since each observation is either 0 or 1, the log-likelihood function for an individual observation is one of two functions (red lines on the left figure). The sum of the individual log-likelihood functions has a well-defined maximum. Although the log-likelihood function has a flat maximum, the likelihood function (right figure) has a very pronounced maximum.
Figure captions: "Likelihood function for five observations, normalised to make the integral equal to one"; "Loglikelihood function".

Maximum likelihood: Example – success and failure Let us consider two examples of estimation using maximum likelihood. The first example corresponds to a discrete probability distribution. Let us assume that we carry out trials whose possible outcomes are success or failure. The probability of success is $\pi$ and the probability of failure is $1-\pi$. We do not know the value of $\pi$. Let us assume we have $n$ trials, of which $k$ are successes and $n-k$ are failures. The values of the random variables in our trials can be either 0 (failure) or 1 (success). Let us denote the observations as $y = (y_1, y_2, \dots, y_n)$. The probability of the observation $y_i$ at the $i$th trial is $P(y_i|\pi) = \pi^{y_i}(1-\pi)^{1-y_i}$. Since the individual trials are independent, we can write for $n$ trials $L(\pi) = \prod_{i=1}^{n} \pi^{y_i}(1-\pi)^{1-y_i} = \pi^{k}(1-\pi)^{n-k}$. The log of this function is $l(\pi) = k\ln\pi + (n-k)\ln(1-\pi)$. Equating the first derivative of the log-likelihood with respect to the unknown parameter to zero we get $\frac{dl}{d\pi} = \frac{k}{\pi} - \frac{n-k}{1-\pi} = 0$, so that $\hat{\pi} = k/n$. The ML estimator for the parameter is equal to the fraction of successes.
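
The closed-form result can be checked against a brute-force maximisation of the log-likelihood. The sketch below uses a made-up sequence of ten trials with six successes. The data and the grid resolution are assumptions for illustration only.

```python
import math

def bernoulli_loglik(p, y):
    """Log-likelihood k*ln(p) + (n - k)*ln(1 - p) for 0/1 observations y."""
    k, n = sum(y), len(y)
    return k * math.log(p) + (n - k) * math.log(1 - p)

# Hypothetical outcomes of 10 trials (1 = success, 0 = failure): k = 6, n = 10.
y = [1, 0, 0, 1, 1, 0, 1, 1, 0, 1]

# Brute-force search over candidate probabilities 0.001, 0.002, ..., 0.999.
grid = [i / 1000 for i in range(1, 1000)]
p_hat_grid = max(grid, key=lambda p: bernoulli_loglik(p, y))

# Closed-form ML estimator: the fraction of successes.
p_hat_closed = sum(y) / len(y)

print(p_hat_grid, p_hat_closed)
```

Both approaches pick out the fraction of successes, as the derivation states.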

Maximum likelihood: Example – success and failure In the example of successes and failures the result was not unexpected, and we could have guessed it intuitively. More interesting problems arise when the parameter itself becomes a function of some other parameters. Let us say $\pi = \pi(x, \beta)$. The most popular form of this function is the logistic curve: $\pi(x, \beta) = \frac{\exp(\beta_0 + \beta_1 x)}{1 + \exp(\beta_0 + \beta_1 x)}$. If $x$ takes a different value for each trial, then the log-likelihood function looks like $l(\beta) = \sum_{i=1}^{n}\left[y_i \ln \pi(x_i, \beta) + (1 - y_i)\ln(1 - \pi(x_i, \beta))\right]$. Finding the maximum of this function is more complicated. It can be considered as a non-linear optimization problem. Problems of this kind are usually solved iteratively, i.e. a solution is guessed and then improved step by step. We will come back to this problem in the lecture on generalised linear models. (Figure: logistic curve.)
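
One common iterative scheme for this problem is Newton-Raphson, sketched below for a logistic curve with an intercept and one slope. The slides do not say which algorithm is intended, so the method, the function name `fit_logistic`, the zero starting values and the data are all assumptions for illustration.

```python
import math

def fit_logistic(x, y, n_iter=25):
    """Newton-Raphson for pi_i = 1 / (1 + exp(-(b0 + b1 * x_i)))."""
    b0, b1 = 0.0, 0.0  # initial guess
    for _ in range(n_iter):
        g0 = g1 = h00 = h01 = h11 = 0.0
        for xi, yi in zip(x, y):
            p = 1.0 / (1.0 + math.exp(-(b0 + b1 * xi)))
            w = p * (1.0 - p)
            # Gradient of the log-likelihood.
            g0 += yi - p
            g1 += (yi - p) * xi
            # Minus the Hessian (a 2x2 symmetric matrix).
            h00 += w
            h01 += w * xi
            h11 += w * xi * xi
        det = h00 * h11 - h01 * h01
        # Newton step: beta <- beta + (minus Hessian)^{-1} * gradient.
        b0 += (h11 * g0 - h01 * g1) / det
        b1 += (-h01 * g0 + h00 * g1) / det
    return b0, b1

# Hypothetical data: successes become more frequent as x grows.
x = [-2.0, -1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5, 2.0, 2.5]
y = [0, 0, 1, 0, 0, 1, 0, 1, 1, 1]

b0, b1 = fit_logistic(x, y)
print(f"b0 = {b0:.3f}, b1 = {b1:.3f}")
```

The matrix accumulated in `h00`, `h01`, `h11` is the observed information, so its inverse at convergence also gives approximate variances for the estimates. If the successes and failures were perfectly separated in $x$, the maximum would not be finite and this sketch would not converge.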

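Maximum likelihood: Example – normal distribution Now let us assume that the sample points came from a population with a normal distribution with unknown mean and variance. Let us assume that we have $n$ observations, $y = (y_1, y_2, \dots, y_n)$. We want to estimate the population mean $\mu$ and variance $\sigma^2$. The log-likelihood function then has the form $l(\mu, \sigma^2) = -\frac{n}{2}\ln(2\pi\sigma^2) - \frac{1}{2\sigma^2}\sum_{i=1}^{n}(y_i - \mu)^2$ (here $\pi$ is the mathematical constant). Taking the derivatives of this function with respect to the mean and the variance and equating them to zero, we can write $\frac{\partial l}{\partial \mu} = \frac{1}{\sigma^2}\sum_{i=1}^{n}(y_i - \mu) = 0$ and $\frac{\partial l}{\partial \sigma^2} = -\frac{n}{2\sigma^2} + \frac{1}{2\sigma^4}\sum_{i=1}^{n}(y_i - \mu)^2 = 0$. Fortunately the first of these equations can be solved without knowledge of the second, giving the sample mean $\hat{\mu} = \frac{1}{n}\sum_{i=1}^{n} y_i$. If we then use this result in the second equation (substituting $\mu$ by its estimate), we can solve the second equation as well. The result is the sample variance with divisor $n$: $\hat{\sigma}^2 = \frac{1}{n}\sum_{i=1}^{n}(y_i - \hat{\mu})^2$.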
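
As a small numerical illustration of these two estimators, the sketch below computes them for a made-up data set and compares the variance estimate with the two variance functions in Python's `statistics` module. The numbers are hypothetical.

```python
import statistics

# Hypothetical observations.
y = [4.9, 5.6, 4.4, 5.1, 6.0, 4.7]
n = len(y)

# ML estimates for a normal sample: mean, then variance with divisor n.
mu_hat = sum(y) / n
sigma2_hat = sum((yi - mu_hat) ** 2 for yi in y) / n

# statistics.pvariance divides by n; statistics.variance divides by n - 1.
print(f"mu_hat     = {mu_hat:.4f}")
print(f"sigma2_hat = {sigma2_hat:.4f}")
print(f"pvariance  = {statistics.pvariance(y):.4f}")
print(f"variance   = {statistics.variance(y):.4f}")
```

The ML variance estimate coincides with the divisor-$n$ version and is always slightly smaller than the divisor-$(n-1)$ version. The difference vanishes as $n$ grows.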

Maximum likelihood: Example – normal distribution The maximum likelihood estimator in this case gave the sample mean and the sample variance. Many statistical techniques are based on maximum likelihood estimation of the parameters when the observations are distributed normally. All parameters of interest are usually inside the mean value. In other words, $\mu$ is a function of the parameters of interest, $\mu = \mu(x, \beta)$, where the parameters are the $\beta$-s. The problem is then to estimate these parameters using the maximum likelihood estimator. Usually the $x$-s are fixed values (fixed effects model). When the $x$-s are random (random or mixed effect models), the treatment becomes more complicated. We will have one lecture on mixed effect models. If the function $\mu(x, \beta)$ is linear in the parameters then we have linear regression. If the variances are known, then the maximum likelihood estimator for normally distributed observations becomes the least-squares estimator.

Maximum likelihood: Example – normal distribution If all $\sigma$-s are equal to each other and our interest is only in the estimation of the mean value ($\mu$), then the minus log-likelihood function, after multiplying by $\sigma^2$ and ignoring all constants that do not depend on the mean value, can be written as $\frac{1}{2}\sum_{i=1}^{n}(y_i - \mu_i)^2$, where $\mu_i$ is the mean value of the $i$-th observation. This is the most popular estimator, the least-squares function. By the central limit theorem, in many cases the distribution of the errors in the observations can be approximated by a normal distribution, which explains why this function is so popular. It is a special case of the maximum likelihood estimator. We will come back to this function in the linear model lecture.

Information matrix: Observed and Fisher’s One of the important aspects of a likelihood function is its behavior near the maximum. If the likelihood function is flat, then the observations have little to say about the parameters, because changes in the parameters do not cause large changes in the probability. That is to say, the same observations can be observed with similar probabilities for various values of the parameters. On the other hand, if the likelihood has a pronounced peak, then small changes in the parameters cause large changes in the probability. In this case we say that the observations carry more information about the parameters. This is usually expressed through the second derivative (or curvature) of the minus log-likelihood function. Observed information is equal to the second derivative of the minus log-likelihood function: $I_o(\theta) = -\frac{\partial^2 l(\theta)}{\partial \theta^2}$. When there is more than one parameter it is called the information matrix, with elements $-\frac{\partial^2 l(\theta)}{\partial \theta_i \partial \theta_j}$. Usually it is calculated at the maximum of the likelihood. Example: in the case of $k$ successes in $n$ trials with probability of success $\pi$ we can write $I_o(\pi) = \frac{k}{\pi^2} + \frac{n-k}{(1-\pi)^2}$. N.B. This is only one of several definitions of information.
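
The observed information for the success and failure example can be checked numerically by differentiating the log-likelihood twice with finite differences. The sketch below assumes 23 successes in 50 trials and a step size of $10^{-4}$. Both are illustrative choices, as are the function names.

```python
import math

def loglik(p, k, n):
    """Log-likelihood of k successes in n trials with success probability p."""
    return k * math.log(p) + (n - k) * math.log(1 - p)

def observed_information(p, k, n):
    """Analytic second derivative of the minus log-likelihood."""
    return k / p ** 2 + (n - k) / (1 - p) ** 2

def numerical_information(p, k, n, h=1e-4):
    """Central finite-difference approximation of the same quantity."""
    return -(loglik(p + h, k, n) - 2 * loglik(p, k, n) + loglik(p - h, k, n)) / h ** 2

# Hypothetical data: 23 successes in 50 trials.
k, n = 23, 50
p_hat = k / n

analytic = observed_information(p_hat, k, n)
numeric = numerical_information(p_hat, k, n)
print(f"analytic = {analytic:.3f}, numeric = {numeric:.3f}")
```

At the maximum the analytic expression simplifies to $n / (\hat{p}(1 - \hat{p}))$, so the curvature, and with it the information, grows linearly with the number of trials.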

Information matrix: Observed and Fisher’s The expected value of the observed information matrix is called the expected information matrix or Fisher’s information. The expectation is taken over the observations: $I(\theta) = -E\left(\frac{\partial^2 l(\theta)}{\partial \theta^2}\right) = -\int \frac{\partial^2 l(\theta)}{\partial \theta^2} f(x|\theta)\,dx$. It can be calculated at any value of the parameter. An interesting fact about Fisher’s information matrix is that it is also equal to the expected value of the product of the gradients of the log-likelihood function: $I(\theta) = E\left(\frac{\partial l(\theta)}{\partial \theta}\frac{\partial l(\theta)}{\partial \theta^T}\right)$. Note that observed information depends on the particular values of the observations, whereas expected information depends only on the probability distribution of the observations (it is the result of integration, and when we integrate over some variables we lose the dependence on their particular values). When the sample size becomes large, the maximum likelihood estimator becomes approximately normally distributed, with variance close to the inverse of the information: $\hat{\theta} \sim N(\theta, I^{-1}(\theta))$. Fisher points out that inverting the observed information matrix gives a slightly better estimate of the variance than inverting the expected information matrix.
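
The equality between the two forms of Fisher's information can be verified exactly for a single success or failure trial, because the expectation is a sum over only two outcomes. The sketch below is an illustration with an arbitrarily chosen success probability of 0.3. The function names are hypothetical.

```python
def score(y, p):
    """First derivative of the log-likelihood of one 0/1 observation."""
    return y / p - (1 - y) / (1 - p)

def minus_second_derivative(y, p):
    """Minus the second derivative of the same log-likelihood."""
    return y / p ** 2 + (1 - y) / (1 - p) ** 2

def expectation(g, p):
    """Exact expectation of g(y, p) over y in {0, 1} with P(y = 1) = p."""
    return (1 - p) * g(0, p) + p * g(1, p)

p = 0.3  # arbitrary value of the parameter
info_from_hessian = expectation(minus_second_derivative, p)
info_from_score = expectation(lambda y, q: score(y, q) ** 2, p)
mean_score = expectation(score, p)

print(info_from_hessian, info_from_score, 1 / (p * (1 - p)), mean_score)
```

Both expectations equal $1 / (p(1-p))$, and the expected score is zero. The identity relies on the expectation being taken at the same parameter value that generated the data.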

Information matrix: Observed and Fisher’s A more precise relation between expected information and variance is given by the Cramér-Rao inequality. According to this inequality, the variance of an unbiased estimator can never be less than the inverse of the expected information: $\mathrm{var}(\hat{\theta}) \ge I^{-1}(\theta)$. The maximum likelihood estimator attains this bound asymptotically.

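Information matrix: Observed and Fisher’s Now let us consider the example of successes and failures, with $k$ successes in $n$ trials and probability of success $\pi$. Taking the expectation of the second derivative of the minus log-likelihood function, and using $E(k) = n\pi$, we get $I(\pi) = E\left(\frac{k}{\pi^2} + \frac{n-k}{(1-\pi)^2}\right) = \frac{n}{\pi} + \frac{n}{1-\pi} = \frac{n}{\pi(1-\pi)}$. If we take this at the point of maximum likelihood, $\hat{\pi} = k/n$, then the variance of the maximum likelihood estimator can be approximated by $\mathrm{var}(\hat{\pi}) \approx \frac{\hat{\pi}(1-\hat{\pi})}{n}$. This statement is true for large sample sizes.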

Information matrix and distribution of parameters: Example The distribution of the parameter of interest can be derived using Bayes’s theorem, $f(\beta|x) = \frac{f(x|\beta) f(\beta)}{\int f(x|\beta) f(\beta)\,d\beta}$, assuming that we have no information about the parameter before the observations are made. If we assume that $f(\beta)$ is constant, then the distribution of the parameter is obtained by renormalising the conditional probability distribution of the observations given the parameter(s). Let us compare the normal approximation with the distribution itself for binomial observations (number of trials 1, number of observations 50, probability of success 0.5). The mean value is 0.46, and the standard deviation of the normal approximation derived using the information matrix is 0.0705. In the figure, the black line is the actual distribution and the red line is the normal approximation. In this case the asymptotic distribution almost exactly coincides with the actual distribution.
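
The numbers on this slide can be reproduced approximately. The sketch below assumes that a mean of 0.46 with 50 observations corresponds to 23 successes, uses a flat prior, and normalises the likelihood on a grid by a simple midpoint rule. The grid size and variable names are illustrative choices.

```python
import math

# Assumption: mean 0.46 from 50 single-trial observations means k = 23 successes.
n, k = 50, 23
p_hat = k / n

# Standard deviation from the inverse information, sqrt(p_hat * (1 - p_hat) / n).
sd = math.sqrt(p_hat * (1 - p_hat) / n)
print(round(sd, 4))  # 0.0705

# Likelihood on a midpoint grid over (0, 1), normalised to integrate to one.
m = 1000
grid = [(i + 0.5) / m for i in range(m)]
lik = [p ** k * (1 - p) ** (n - k) for p in grid]
norm_const = sum(lik) / m
actual = [v / norm_const for v in lik]

# Normal approximation centred at p_hat with the standard deviation above.
normal = [math.exp(-0.5 * ((p - p_hat) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))
          for p in grid]

max_gap = max(abs(a - b) for a, b in zip(actual, normal))
print(f"peak of normal density = {max(normal):.2f}, largest gap = {max_gap:.2f}")
```

The largest pointwise gap between the two curves is small relative to the peak height, consistent with the close agreement described on the slide.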

Exercise 1 a) Assume that we have a sample of size $n$, $(x_1, x_2, \dots, x_n)$, independently drawn from a population with probability density $f(x|\theta) = \frac{1}{\theta} e^{-x/\theta}$ (this is the exponential distribution where $\lambda$ has been replaced by $1/\theta$). Find the maximum likelihood estimator for $\theta$. Show that the maximum likelihood estimator is unbiased. Find the observed and expected information. b) The negative binomial distribution has the form $f(k|p) = p^{k}(1-p)$. This is the probability mass function for the number of successful trials before a failure occurs. The probability of success is $p$ and that of failure is $1-p$. Suppose we have a sample of $n$ independent points $(k_1, k_2, \dots, k_n)$ drawn from a population with this negative binomial distribution (on the $i$-th occasion we had $k_i$ successes and the $(k_i+1)$-st trial was a failure). Find the maximum likelihood estimator for $p$. What are the observed and expected information?