4. Using panel data

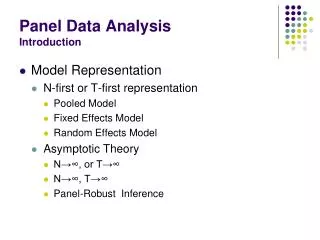

4. Using panel data • 4.1 The basic idea • 4.2 Linear regression • 4.3 Logit and probit models • 4.4 Other models

4.1 The basic idea • Panel data = data that are pooled for the same companies across time. • In panel data, there are likely to be unobserved company-specific characteristics that are relatively constant over time. • I have already explained that it is necessary to control for this time-series dependence in order to obtain unbiased standard errors. • In STATA we can do this using the robust cluster () option

4.1 The basic idea • The first advantage of panel data is that we are using a larger sample compared to the case where we have only one observation per company. • The larger sample permits greater estimation power, so the coefficients can be estimated more precisely. • Since the standard errors are lower (even when they are adjusted for time-series dependence), we are more likely to find statistically significant coefficients. • use "J:\phd\Fees.dta", clear • gen fye=date(yearend, "MDY") • format fye %d • gen year=year(fye) • sort year • gen lnaf=ln(auditfees) • gen lnta=ln(totalassets) • by year: reg lnaf lnta, robust cluster(companyid) • reg lnaf lnta, robust cluster(companyid)

4.1 The basic idea • The second advantage of panel data is that we can estimate “dynamic” models. • For example, suppose we believe that audit fees depend not only on the company’s size but also on its rate of growth. • sort companyid fye • gen growth= lnta- lnta[_n-1] if companyid== companyid[_n-1] • reg lnaf lnta growth, robust cluster(companyid) • We find that audit firms offer lower fees to companies that are growing more quickly. • If we had had only one year of data, we would not have been able to estimate this model.

4.1 The basic idea • The third – and most important – advantage of panel data is that we are able to control for unobservable company-specific effects that are correlated with the observed explanatory variables • Let’s start with a simple regression model: • Let’s assume that the error term has an unobserved company-specific component that does not vary over time and an idiosyncratic component that is unique to each company-year observation:

4.1 The basic idea • Putting the two together: • Recall that the standard error of the coefficient estimate will be biased if we do not adjust for time-series dependence • this adjustment is easy using the robust cluster() option • The OLS estimate of the coefficient will be unbiased as long as the unobservable company-specific component (ui) is uncorrelated with Xit
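In symbols, the setup described on these slides can be sketched as follows. The coefficient names $\alpha$ and $\beta$ and the composite-error symbol $\varepsilon_{it}$ are assumptions chosen for illustration; $u_i$, $e_{it}$ and $X_{it}$ follow the notation used in the text.

```latex
\begin{align*}
Y_{it} &= \alpha + \beta X_{it} + \varepsilon_{it}
  && \text{(simple regression model)} \\
\varepsilon_{it} &= u_i + e_{it}
  && \text{(company-specific plus idiosyncratic component)} \\
Y_{it} &= \alpha + \beta X_{it} + u_i + e_{it}
  && \text{(putting the two together)} \tag{1}
\end{align*}
```

Under this sketch, OLS on the pooled data is unbiased for $\beta$ only when $u_i$ is uncorrelated with $X_{it}$, because $u_i$ is part of the error term.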

4.1 The basic idea • Unfortunately, the assumption that ui is uncorrelated with Xit is unlikely to hold in practice. • If ui is correlated with Xit then the composite error term (ui + eit) is also correlated with Xit • The OLS estimate of the coefficient will be biased if the error term is correlated with Xit (recall our previous discussion and notes on omitted variable bias)

4.1 The basic idea • An example can illustrate this bias. • Go to MySite • use "J:\phd\beatles.dta", clear • list • This dataset is a panel of four individuals observed over three years (1968-70) • In each year they were asked how satisfied they are with their lives • this is the lsat variable, which takes larger values for increasing satisfaction • You want to test how age affects life satisfaction • reg lsat age • It appears that they became slightly more satisfied as they got older.

4.1 The basic idea • Suppose you now include dummy variables for each individual • tab persnr, gen(dum_) • Recall that you must omit either one dummy variable or the intercept in order to avoid perfect collinearity (see the previous notes about multicollinearity) • reg lsat age dum_1 dum_2 dum_4 • reg lsat age dum_1 dum_2 dum_3 dum_4, nocons • There now appears to be a highly significant negative impact of age on life satisfaction • What’s going on here?

4.1 The basic idea • Recall that fitting a simple OLS model (lsat on age) is equivalent to plotting a line of best fit through the data • twoway (lfit lsat age) (scatter lsat age)

4.1 The basic idea • I am now going to introduce a new command, separate , by() • separate lsat, by(persnr) • This creates four separate life satisfaction variables, one for each of the four individuals • Now graph the relationship between life satisfaction and age for each of the four people • twoway (lfit lsat1 age) (scatter lsat1 age) • twoway (lfit lsat2 age) (scatter lsat2 age) • twoway (lfit lsat3 age) (scatter lsat3 age) • twoway (lfit lsat4 age) (scatter lsat4 age)

It is clear that each of the four individuals became less satisfied as they got older. • The simple OLS regression was biased because John and Ringo (who happened to be older) were generally more satisfied than Paul and George (who happened to be younger) • The multiple OLS regression controlled for these idiosyncratic differences by including dummy variables for each person • We can see this by plotting the simple OLS results and the multiple OLS results • reg lsat age dum_1 dum_2 dum_3 dum_4, nocons • predict lsat_hat • separate lsat_hat, by(persnr) • twoway (line lsat_hat1-lsat_hat4 age) (lfit lsat age) (scatter lsat1-lsat4 age)
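The sign reversal can be reproduced on a small made-up panel. The following Python sketch (using NumPy rather than STATA) is only an illustration: the ages and satisfaction scores are hypothetical values, not the contents of beatles.dta, and are constructed so that older persons have a higher baseline satisfaction while each person's satisfaction falls by 0.5 per year of age.

```python
import numpy as np

# Hypothetical balanced panel (NOT the values in beatles.dta): 4 persons, 3 years.
start_age = np.array([25.0, 26.0, 28.0, 29.0])    # age in the first year
person = np.repeat(np.arange(4), 3)               # 0,0,0,1,1,1,...
age = start_age[person] + np.tile(np.arange(3.0), 4)

# Person effect u_i rises with starting age; within a person,
# satisfaction falls by 0.5 for each extra year of age (no noise added).
u = start_age - 5.0
lsat = u[person] - 0.5 * age


def ols(y, X):
    """Least-squares coefficients of y on the columns of X."""
    coef, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coef


# Simple OLS of lsat on age with a constant (like: reg lsat age).
pooled_slope = float(ols(lsat, np.column_stack([np.ones_like(age), age]))[1])

# OLS with one dummy per person and no constant
# (like: reg lsat age dum_1 dum_2 dum_3 dum_4, nocons).
dummies = (person[:, None] == np.arange(4)[None, :]).astype(float)
dummy_slope = float(ols(lsat, np.column_stack([age, dummies]))[0])

print(f"simple OLS age coefficient:   {pooled_slope:.3f}")
print(f"with person dummies:          {dummy_slope:.3f}")
```

In this constructed example the simple regression reports a positive age coefficient purely because the older persons start from a higher level, while the regression with person dummies recovers the negative within-person slope.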

4.1 The basic idea • What does all this have to do with panel data being advantageous? • Without panel data we would not have been able to control for the idiosyncrasies of the four individuals. • If we had data for only one year, we would not have known that the age coefficient was biased in the simple regression. • We can demonstrate this by running a regression of lsat on age for each year in the sample • sort time • by time: reg lsat age • Without panel data, we would have incorrectly concluded that people get happier as they get older

4.1 The basic idea • In the multiple regression, we include dummy variables (dum_1 dum_2 dum_3 dum_4) which control for the individual-specific effects (ui) • Without including the person dummies, our estimate of the age coefficient would be biased because the dummies are correlated with age. • The person dummies “explain” all the cross-sectional variation in life satisfaction across the four individuals. • The only variation that is left is the change in satisfaction within each person as he gets older. • Therefore, the model with dummies is sometimes called the “within” estimator or the “fixed-effects” model.

4.1 The basic idea • In small datasets like this, it is easy to create dummy variables for each person (or each company). • In large datasets, we may have thousands of individuals or companies. • The number of variables in STATA is restricted due to memory limits. • Also, it is very inconvenient to have results for thousands of dummy variables (just imagine how long your log file would be!).

4.1 The basic idea • Instead of including dummy variables, we can control for idiosyncratic effects by transforming the Y and X variables. • Taking averages of eq. (1) over time gives: • Subtracting eq. (2) from eq. (1) gives: • The key thing to note here is that the individual-specific effects (ui) have been “differenced out” so they will not bias our estimate of the coefficient.
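The mean-subtraction steps can be written out as below. This is a sketch under assumed notation: $\alpha$ and $\beta$ are illustrative coefficient names, bars denote averages over the $T$ periods observed for unit $i$, and eq. (1) is taken to be the model with the company-specific component included.

```latex
\begin{align*}
Y_{it} &= \alpha + \beta X_{it} + u_i + e_{it} \tag{1} \\
\bar{Y}_i &= \alpha + \beta \bar{X}_i + u_i + \bar{e}_i,
  \qquad \bar{Y}_i = \frac{1}{T}\sum_{t=1}^{T} Y_{it} \tag{2} \\
Y_{it} - \bar{Y}_i &= \beta\,(X_{it} - \bar{X}_i) + (e_{it} - \bar{e}_i) \tag{3}
\end{align*}
```

Because $u_i$ does not vary over time, its average is $u_i$ itself, so it cancels (together with $\alpha$) when eq. (2) is subtracted from eq. (1).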

4.1 The basic idea • Another transformation that will do the same trick is to take differences rather than subtract means • Lagging by one period • Subtracting eq. (2) from eq. (1) gives: • Again the individual-specific effects (ui) have been “differenced out” so they will not bias our estimate of the coefficient.
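Both transformations can be tried on a toy panel. The Python sketch below uses NumPy instead of STATA; the data (ages, satisfaction scores, and the small noise terms) are hypothetical and built so that the true within-person slope is -0.5. The helper names `person_means` and `slope_no_const` are illustrative, not part of any library.

```python
import numpy as np

# Hypothetical panel (not beatles.dta): 4 persons observed for 3 years each.
person = np.repeat(np.arange(4), 3)
start_age = np.array([25.0, 26.0, 28.0, 29.0])
age = start_age[person] + np.tile(np.arange(3.0), 4)
noise = np.array([0.1, -0.2, 0.1, -0.1, 0.1, 0.0,
                  0.2, -0.1, -0.1, 0.0, 0.1, -0.1])
# Person effect (start_age - 5) is correlated with age; true slope is -0.5.
lsat = (start_age[person] - 5.0) - 0.5 * age + noise


def person_means(x):
    """Each observation's person-level average, aligned with x."""
    means = np.array([x[person == i].mean() for i in range(4)])
    return means[person]


def slope_no_const(y, x):
    """OLS slope of y on x without a constant."""
    return float(x @ y / (x @ x))


# Within transformation: subtract each person's mean from Y and X.
within_slope = slope_no_const(lsat - person_means(lsat),
                              age - person_means(age))

# First differences: subtract the previous year's value for the same person.
same_person = person[1:] == person[:-1]
d_lsat = (lsat[1:] - lsat[:-1])[same_person]
d_age = (age[1:] - age[:-1])[same_person]
fd_slope = slope_no_const(d_lsat, d_age)

print(f"within (demeaned) slope:  {within_slope:.4f}")
print(f"first-difference slope:   {fd_slope:.4f}")
```

Neither regression includes the person effects, yet both slopes are close to -0.5 because the effects cancel in the transformation. The two estimates coincide exactly in this toy example only because age rises by exactly one unit each year; in general they differ when there are more than two periods.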

Class exercise 4a • Estimate the following models, where Y = life satisfaction and X = age. • Compare the age coefficients in these models to the age coefficient in the untransformed model with person dummies (ignore the standard errors of the age coefficients because they are biased)

4.2 Linear regression using panel data (xtreg, fe i()) • Fortunately, STATA has a command that: • allows us to avoid creating dummy variables for each person • corrects the standard errors • xt is a prefix that tells STATA we want to estimate a panel data model • The fe option tells STATA we want to estimate a fixed effects model • in OLS this is equivalent to including dummy variables to control for person-specific effects • The i() term tells STATA the variable that identifies each unique person • xtreg lsat age, fe i(persnr)

Note that the age coefficient and t-statistic are exactly the same as in the OLS model that includes person dummies • reg lsat age dum_1 dum_2 dum_3 dum_4, nocons • There are 12 person-years, 4 persons, and the minimum, average and maximum number of observations per person is 3.

Since we are estimating a within-effects model, it is the within R² that is directly relevant (93.2%). • If we used the same independent variables to estimate a “between-effects” model, we would have an R² of 88.4% (I will explain later what we mean by the “between-effects” model). • If we used the same independent variables to estimate a simple OLS model, we would get an R² of 16.5% (reg lsat age). • The F-statistic is a test that the coefficient(s) on the X variable(s) (i.e., age) are all zero.

sigma_u is the standard deviation of the estimates of the fixed effects, ui (σu) • sigma_e is the standard deviation of the estimates of the residuals, eit (σe) • rho = σu² / (σu² + σe²) = 4.93² / (4.93² + 0.47²) = 0.99
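The rho arithmetic can be checked with a few lines of Python. This is only a sketch: the function name `rho` is illustrative, and the inputs read the quoted figures as sigma_u = 4.93 and sigma_e = 0.47, which is the reading that reproduces rho = 0.99.

```python
def rho(sigma_u, sigma_e):
    """Share of the total error variance that is due to the person effects."""
    return sigma_u ** 2 / (sigma_u ** 2 + sigma_e ** 2)


# Standard deviations as read from the fixed-effects output quoted in the text.
fe_rho = rho(4.93, 0.47)
print(round(fe_rho, 2))
```

A value this close to one says that almost all of the unexplained variation in life satisfaction is between persons rather than within a person over time.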

The correlation between ui and Xit is -0.83. • This correlation appears to be high, confirming our prior finding that the fixed effects are correlated with age. • The F-test allows us to reject the hypothesis that there are no fixed effects. • If we had not rejected this hypothesis, we could estimate a simple OLS instead of the fixed-effects model.

4.2 Linear regression (predict) • After running the fixed-effects model, we can obtain various predicted statistics using the predict command • predict , xb • predict , u • predict , e • predict , ue

4.2 Linear regression (predict) • For example: • xtreg lsat age, fe i(persnr) • drop lsat_hat • predict lsat_hat, xb • predict lsat_u, u • predict lsat_e, e • predict lsat_ue, ue • Checking that lsat_ue = lsat_u + lsat_e • list lsat_u lsat_e lsat_ue • Checking that the correlation between ui and Xit is -0.83 • corr lsat_hat lsat_u

4.2 Linear regression • I have explained that there are three main advantages of panel data: • The larger sample increases power, so the coefficients are estimated more precisely • We can estimate models that incorporate dynamic variables (e.g., the effect of growth on audit fees) • We can control for unobservable fixed effects (e.g., company-specific or person-specific characteristics) by estimating fixed-effects models.

4.2 Linear regression • Are there any disadvantages? • Yes, unfortunately we cannot investigate the effect of explanatory variables that are held constant over time. • From a technical point of view, this is because the time-invariant variable would be perfectly collinear with the person dummies. • From an economic point of view, this is because fixed-effect models are designed to study what causes the dependent variable to change within a given person. A time-invariant characteristic cannot cause such a change.

4.2 Linear regression • For example, suppose that the height of the four persons is constant over the three years. • Let’s create a height variable and test the effect of height on life satisfaction • gen height=185 if dum_1==1 • replace height=180 if dum_2==1 • replace height=175 if dum_3==1 • replace height=170 if dum_4==1 • list persnr height • Note that the height variable is a constant for each person. • We can estimate the effect of height as long as we do not control for unobservable person-specific effects • reg lsat age height

4.2 Linear regression • If we try to control for person-specific effects by including dummy variables: • reg lsat age height dum_1 dum_2 dum_3 dum_4, nocons • Note that STATA has to throw away either a dummy variable or the height variable. • Why is that? • The only way we can include dummies for each person is if we do not include the height variable. • reg lsat age dum_1 dum_2 dum_3 dum_4, nocons • If we try to estimate the effect of height using the xtreg, fe i() command, STATA will inform us that there is a problem of perfect collinearity • xtreg lsat age height, fe i(persnr)

4.2 Linear regression • Note that the height coefficient can be estimated if there is some variation over time for one or more persons. • The fixed-effects estimator can exploit this time variation to estimate the effect of height on life satisfaction. • For example, suppose that each person became 1cm taller in 1970. • replace height= height+1 if time==1970 • xtreg lsat age height, fe i(persnr)
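The collinearity argument can be seen numerically. The Python sketch below (NumPy, not STATA) uses the four heights from the example and a hypothetical year index 0, 1, 2 standing in for 1968-70; it checks what is left of height after subtracting person means, and whether height adds any independent column to the set of person dummies.

```python
import numpy as np

# 4 persons x 3 years; year index 2 stands in for 1970 (an assumption).
person = np.repeat(np.arange(4), 3)
year = np.tile(np.arange(3), 4)
height = np.array([185.0, 180.0, 175.0, 170.0])[person]   # constant per person
dummies = (person[:, None] == np.arange(4)[None, :]).astype(float)


def demean_by_person(x):
    """Subtract each person's own average from x."""
    means = np.array([x[person == i].mean() for i in range(4)])
    return x - means[person]


# Time-invariant height: nothing is left after the within transformation,
# and height is an exact linear combination of the four dummies.
height_within = demean_by_person(height)
rank_constant = np.linalg.matrix_rank(np.column_stack([height, dummies]))

# Height that grows by 1 cm in the last year: some within variation remains.
height_varying = height + (year == 2)
varying_within = demean_by_person(height_varying)
rank_varying = np.linalg.matrix_rank(np.column_stack([height_varying, dummies]))

print(height_within)                 # all zeros
print(rank_constant, rank_varying)   # 4 columns' worth vs. 5 columns' worth
```

With constant height the five regressors span only four dimensions, which is why one of them has to be dropped; once height changes for at least one person, the fifth dimension appears and the coefficient becomes estimable.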

The xtreg, fe i() command estimates the following fixed-effects model: • Recall that we derived this model by taking averages: • The averages model is sometimes called the “between” estimator because the comparison is cross-sectional between persons rather than over time. • Like OLS, the between estimator provides unbiased estimates of the coefficient only if the unobservable company-specific component (ui) is uncorrelated with Xit • If we wanted to estimate the “between effects” model, the command in STATA is xtreg , be i() • xtreg lsat age, be i(persnr)

Note that the age coefficient is positive • the reason is that we are not controlling for person-specific effects, which are correlated with age. • therefore, the between-effects estimate of the age coefficient is biased. • Since we are estimating a between-effects model, it is the between R² that is relevant (88.4%). • Note that this is also the between-effects R² that was previously reported using the fixed-effects model. • Note that the R² for the between-effects model is high even though the age coefficient is severely biased. Again, this reinforces the fact that a high R² does not imply that the model is well specified.

The between estimator is also less efficient than simple OLS because it throws away all the variation over time in the dependent and independent variables. • In fact the between estimator is equivalent to estimating an OLS model on the averages for just one year • Recall that we have already created averages for the lsat and age variables (avlsat avage) • reg avlsat avage if time==1968 • reg avlsat avage if time==1969 • reg avlsat avage if time==1970 • xtreg lsat age height, be i(persnr) • Since we actually have three years of data, it seems silly (and it is silly) to throw data away
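The equivalence between the between estimator and OLS on person averages can be sketched in Python (NumPy rather than STATA). The panel below is hypothetical, not beatles.dta: it is constructed without noise so that the true within-person slope is -0.5 while older persons have a higher baseline.

```python
import numpy as np

# Hypothetical panel (not beatles.dta): 4 persons observed for 3 years.
start_age = np.array([25.0, 26.0, 28.0, 29.0])
person = np.repeat(np.arange(4), 3)
age = start_age[person] + np.tile(np.arange(3.0), 4)
lsat = (start_age[person] - 5.0) - 0.5 * age   # true within-person slope: -0.5

# Collapse to one observation per person: the time averages of lsat and age.
av_age = np.array([age[person == i].mean() for i in range(4)])
av_lsat = np.array([lsat[person == i].mean() for i in range(4)])

# OLS of average lsat on average age (with a constant) uses only the
# cross-sectional differences between persons.
X = np.column_stack([np.ones(4), av_age])
coef, *_ = np.linalg.lstsq(X, av_lsat, rcond=None)
between_slope = float(coef[1])

print(f"between slope: {between_slope:.3f}")
```

Only four data points enter this regression, and its slope is positive even though every person's satisfaction declines with age, which illustrates both the inefficiency and the bias discussed in the text.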

4.2 Linear regression (xtreg) • Normally, then, we would never be interested in estimating a between-effects model: • The estimates are biased if the person-specific effects are correlated with the X variables • The estimates are inefficient because we are ignoring any time-series variation in the data • The fixed effects estimator is attractive because it controls for any correlation between ui and Xit • An unattractive feature is that it is forced to estimate a fixed parameter for each person or company in the data • you can think of these parameters as being the coefficients on the person dummy variables

4.2 Linear regression (xtreg) • An alternative is the “random effects” model in which the ui are assumed to be randomly distributed with a mean of zero and a constant variance (ui ~ IID(0, σu²)) rather than fixed. • Intuitively, the random effects model is like having an OLS model where the intercept varies randomly across individuals i. • Like simple OLS, the random effects model assumes that there is zero correlation between ui and Xit • If ui and Xit are correlated, the random-effects estimates are biased.

4.2 Linear regression (xtreg) • If we want to estimate a random effects model, the command is xtreg , re i() • For example: • xtreg lsat age, re i(persnr) • Note that because we have controlled for (random) unobserved person effects, the age coefficient is estimated with the correct negative sign.

The rest of the output is similar to the fixed-effects model except: • We use a Wald statistic instead of an F statistic to test the significance of the independent variables. Here we can reject the hypothesis that age is insignificant. • The Wald statistic is used because only the asymptotic properties of the random-effects estimator are known. • The output explicitly tells us that we have imposed the assumption that ui and Xit are uncorrelated. • This is the key difference between the random-effects and fixed-effects models.

We can test whether ui and Xit are correlated. • If they are correlated, we should use the fixed-effects model rather than OLS or the random-effects model (otherwise the coefficients are biased). • If they are not correlated, it is better to use the random-effects model (because it is more efficient). • The test was devised by Hausman • if ui and Xit are correlated, the random-effects estimates are biased (inconsistent) while the fixed-effects coefficients are unbiased (consistent) • In this case, there will be a large difference between the random-effects and fixed-effects coefficient estimates • if ui and Xit are uncorrelated, the random-effects and fixed-effects coefficients are both unbiased (consistent); the fixed-effects coefficients are inefficient while the random-effects coefficients are efficient. • In this case, there will not be a large difference between the random-effects and fixed-effects coefficient estimates • The Hausman test indicates whether the two sets of coefficient estimates are significantly different

Null hypothesis (H0): ui and Xit are uncorrelated • The Hausman statistic is distributed as chi2 and is computed as • If the chi2 statistic is positive and statistically significant, we can reject the null hypothesis. This would mean that the fixed-effects model is preferable because the coefficients are consistent. • If the chi2 statistic is not positive and statistically significant, we cannot reject the null hypothesis. This would mean that the random-effects model is preferable because the coefficients are consistent and efficient. • NB: The (Vc-Ve)^-1 matrix is guaranteed to be positive only asymptotically. In small samples, this asymptotic result may not hold, in which case the computed chi2 statistic will be negative.
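For reference, the Hausman statistic can be written as below. The notation is a sketch that follows the $V_c$ and $V_e$ symbols used in the text: $b_c$ and $V_c$ are taken to be the coefficient vector and covariance matrix of the estimator that is always consistent (fixed effects), $b_e$ and $V_e$ those of the estimator that is efficient under the null (random effects), and $k$ is assumed to be the number of coefficients being compared.

```latex
\begin{align*}
H &= (b_c - b_e)'\,(V_c - V_e)^{-1}\,(b_c - b_e) \;\sim\; \chi^2(k)
  \quad \text{under } H_0 \\[4pt]
H &= \frac{(b_c - b_e)^2}{V_c - V_e}
  \quad \text{when a single coefficient is compared}
\end{align*}
```

The single-coefficient form makes the small-sample problem visible: if the estimated variance of the fixed-effects coefficient happens to be smaller than that of the random-effects coefficient, the denominator is negative and so is the computed statistic.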

4.2 Linear regression (estimates store, hausman) • The procedure for executing a Hausman test is as follows: • Save the coefficients that are consistent even if the null is not true: • xtreg lsat age, fe i(persnr) • estimates store fixed_effects • Save the coefficients that are inconsistent if the null is not true: • xtreg lsat age, re i(persnr) • estimates store random_effects • The command for the Hausman test is: • hausman name_consistent name_efficient • hausman fixed_effects random_effects

b is the fixed-effects coefficient while B is the random-effects coefficient. • The (Vc-Ve)^-1 matrix has a negative value on the leading diagonal and, as a result, the square root of the leading diagonal is undefined. This is why the Chi2 statistic is negative. • Since the Chi2 statistic is not significantly positive, we might decide that we cannot reject the null hypothesis (see p. 57 of the STATA reference manual for the Hausman test). • On the other hand, this result is not very reliable because the asymptotic assumption fails to hold in this small sample.

If we reject the null hypothesis that ui and Xit are uncorrelated, the fixed-effects model is preferable to the OLS and random-effects models. • If we cannot reject the null hypothesis that ui and Xit are uncorrelated, we need to determine whether the ui are distributed randomly across individuals. • Recall that the random-effects model is like having an OLS model where the constant term varies randomly across individuals i. • Therefore, we need to test whether there is significant variation in ui across individuals.

rho = σu² / (σu² + σe²) = 1.03² / (1.03² + 0.47²) = 0.83 • σu² captures the variation in ui across individuals. • If σu² is significantly positive, the random-effects model is preferable to the OLS model. • The Breusch and Pagan (1980) Lagrange multiplier test is used to investigate whether σu² is significantly positive.

We perform the Breusch-Pagan test by typing xttest0 after xtreg, re • Our estimate of σu² is 1.067 (note that σu is therefore estimated to be about 1.03, which is the same as sigma_u on the previous slide). • We are unable to reject the hypothesis that σu² = 0. Therefore, we cannot conclude that the random-effects model is preferable to the OLS model. • NB: Our Hausman and LM tests lack power because the sample consists of only 12 observations. In larger samples, we are more likely to reject the hypothesis that σu² = 0 and we are more likely to reject the hypothesis that ui and Xit are uncorrelated.

Class exercise 4b • Estimate models in which the dependent variable is the log of audit fees. • Estimate the models using: • OLS without controlling for ui • Fixed-effects models • Random-effects models • How do the coefficient estimates vary across the different models? • Which of these models is preferable?

4.2 Linear regression • Compared to economics and finance, there are not many accounting studies that exploit panel data in order to control for unobserved company-specific effects (ui). • Most studies simply report OLS estimates on the pooled data. • Some studies even fail to adjust the OLS standard errors for time-series dependence • this can be a very serious mistake especially when the panels are long (e.g., the sample period covers many years).