Teaching Langmuir Adsorption: Principles, Optimization, and Error Analysis in Regression

This poster explores the fundamentals of the Langmuir adsorption equation, its optimization, and challenges in teaching these concepts. It outlines common issues students face, such as interpreting regression results and recognizing biases in regression analyses. The work delves into Langmuir's assumptions, various forms of the equation, and illustrates the importance of understanding potential errors in data. By integrating perfect scenarios and accounting for real-world data errors, this educational resource aims to enhance student comprehension and skills in experimental adsorption data analysis.

Teaching Langmuir Adsorption: Principles, Optimization, and Error Analysis in Regression

E N D

Presentation Transcript

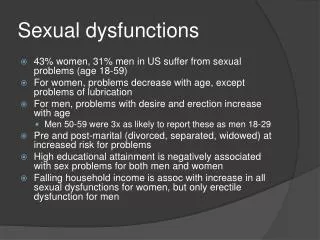

n-NLLS v-NLLS linear-L S E-H L-B n-NLLS v-NLLS L-B v-NLLS & linear-L E-H S n-NLLS E-H L-B v-NLLS linear-L v-NLLS S Scatchard S E-H E-H L-B Eadie-Hofstee & linear-Langmuir n-NLLS S Lineweaver- Burk n-NLLS linear-L v-NLLS E-H S linear-L & L-B Langmuir Adsorption Optimization: Problems & Solutions For Teaching Cristian P. Schulthess University of Connecticut, Storrs, CT, USA The University of Connecticut is an equal opportunity employer and program provider. • Problems: • Teaching students the fundamentals of the Langmuir equation and how to optimize it is relatively easy to do. Students, however, are often easily confused when real data are presented. Ideally, you want them to know how to do the following items, but teaching them these things can be difficult to illustrate in the classroom: • ► How to interpret the regression results for their data. • ► How to recognize regression bias. • ► How to recognize potential data outliers. Are more data needed? • ► How to recognize any potential error in the theory. • This poster walks you through the various steps that will achieve these goals in your lesson plan. • What is the Langmuir equation? • The Langmuir equation was introduced by Irwin Langmuir in 1916 to describe adsorption isotherms. He later presented data and a linear regression method for working with this equation in 1918. It was originally formulated for predicting the sorption of gases onto a solid phase. It is now also used to predict the sorption of aqueous compounds onto a solid phase. This is a mechanistic model that assumes that one reaction is involved in the sorption process, and that the distribution of the compounds between the two phases (gas-solid or liquid-solid) is controlled by an equilibrium constant. Langmuir received the Nobel Prize in Chemistry in 1932 for his work. • The Langmuir equation assumes that the adsorption reaction involves a single reaction with a constant energy of adsorption. Using mass action laws for its derivation, these are the only assumptions made. Because of its simplicity, it is often the first theory tested on any adsorption data set. The mechanistic reaction assumed by the Langmuir equation is: • S + A = SA [1], • where S is the solid surface site (adsorbent), A is the adsorbing species (adsorbate), and SA is the adsorbed product formed. Using mass action laws, Equation [1] yields the Langmuir equation: • G = GmaxK c [2], • 1 + K c • where G is the amount adsorbed, Gmax is the maximum adsorption capacity, K is the Langmuir constant, and c is the aqueous equilibrium concentration of the adsorbent. • If K and Gmax are known, then a given set of data can be predicted for any value of c. This is not as easy to do as it sounds. There are numerous errors that can be present in the optimization of these parameters. First, start them with a perfect scenario. The first thing the students will notice is that Equation [2] was too hard to solve in the BC (before computers) era. A least squares linear regression technique was invented by Gauss in 1795 when he was only 17 years old, and we can use this technique to solve the parameters in Equation [2]. We merely need to convert the equation into a linear form. The first thing to teach is that the mathematics of optimization does not care about how the equation is manipulated. If the data are perfect, and the equation is correct, than all kinds of different linear regression forms will work just as well. (You will later show them that this is not true in practice, but for now we make these necessary assumptions about the data being perfect.) There are four common linear forms of the Langmuir equation. The Lineweaver-Burk equation: 1 = 1 + 1 [3]. GGmaxGmaxK c The Eadie-Hofstee equation: G = Gmax- G [4]. K c The Scatchard equation: G = KGmax-KG [5]. c The linearized-Langmuir equation: c = c + 1 [6]. GGmaxKGmax Notice in Figure 1 that all regression methods yield the same isotherm prediction when the data are perfect (that is, the data have no errors present). Figure 1. Perfect data. (Data shown are hypothetical.) Lesson: All methods resulted in the same isotherm prediction. Second, introduce data error. Figure 2 shows the same data as Figure 1, but with +10% error present. The lesson here is that different regressions have different sensitivity (i.e., bias) to the data errors present. The data variation here is even and symmetrical. It is easy to show that if the data are not even and symmetrical, then you will have a much wider spread of results from each method used. Figure 2. Data with +10% error. (Data shown are hypothetical.) Error is even and symmetrical here. It can get worse. Lesson: Regression method can be biased. Third, introduce modern age of non-linear methods. The students will easily understand that a computer can be taught to find the best parameters that yield the least error. The trick, however, is deciding how error is to be defined. Each definition will yield a different result. Figure 3 illustrates two non-linear least squares methods (NLLS) for solving the Langmuir equation for an adsorption isotherm. The vertical-NLLS assumes that the best fit is the curve with the least error defined as the sum of the square of the errors of each datum point to the predicted curve measured vertically on the graph. The normal-NLLS assumes the same thing, but the error of each datum point is measured instead as its distance from the nearest point on the predicted curve. Why do we see a difference? The v-NLLS regression aggressively seeks to minimize the error on the lower-left corner because that is where we see the largest vertical change in the data. The v-NLLS regression is biased toward the data in the lower-left corner of the adsorption isotherm. Figure 3. Pb2+ adsorption on PO4-pretreated kaolin at pH 8. (Data from T.D. Ranatunga.) Lesson: The v-NLLS regression method is not free of bias. The n-NLLS regression is not biased toward any particular region of the graph. Also, the n-NLLS regression is arithmetically balanced (that is, while the sum of the square of the errors has been minimized, the sum of the errors also equals zero). Fourth, address the possibility of theory error. Theory error results when the adsorption reaction is not exactly following the reaction mechanism described by Equation [1]. This type of error is difficult to catch. Minimize the data scatter as much as possible before addressing this possibility. The adsorption isotherm illustrated below probably adsorbs too strongly at very low concentrations (e.g., at low c values). The data in the lower-left corner of the graph, although they are correct and reproducible, are probably too high for a Langmuir type of isotherm. Figure 4. PVA adsorption on Si oxide. (Data from Schulthess & Dey, 1996.) All points were duplicated. Lesson: If data error is low but different regression methods yield different results, then there may be a slight error with the theory. Another lesson: A slight theory error does not necessarily mean that the Langmuir equation should not be used. It is just not perfect. Fifth, let them play with the regression methods. Below is an adsorption isotherm with a great deal of variation in the regression results. Ask the students: Is this due to a slight theory error, or is it due to the data scatter? Which regression do you pick? Figure 5. Adsorption of Pb2+ on kaolin at pH 8. (Data from T.D. Ranatunga.) Question: Which regression result is correct? Note that if a single datum point is ignored in the analysis, than all but one (Eadie-Hofstee) of the regressions (linear and non-linear) yield nearly the same result (Figure 6). If two data points are ignored in the analysis, than all of the regressions yield nearly the same result (Figure 7). This suggests that the lack of agreement of the regression methods on the original complete data set may be due to data error, rather than theory error. Due to the impact of just one datum point on the regression results, it may be worthwhile to recheck the validity of that particular point. Ideally, reproduce your data as much as you can. In any case, the n-NLLS regression is not that sensitive to this slight data error. Figure 6. Same as Fig. 5, but one datum point is ignored for the regression analysis (triangle). Red line is n-NLLS. Figure 7. Same as Fig. 5, but two data points are ignored for the regression analysis (triangles). Lesson: The more data you have, the smaller the impact of a bad datum point or two on the regression method used. Notes: ► All figures made using LMMpro, distributed by www.alfisol.com. ► LMMpro easily manipulates the data on-the-fly for your classroom. ► Figures 1, 2, and 4 are demo data sets that come with LMMpro. ► Figure 4 data are from Schulthess & Tokunaga (1996, SSSAJ 60:86-91). ► Figures 3, 5, 6, and 7 data are from T.D. Ranatunga, Alabama A&M University, Normal, AL, with much gratitude. See also Ranatunga et al. (2008, Soil Sci. 173:321-331). ► If your analysis is sensitive to the type of regression used, then you have some error present. The error is either data error, theory error, or both. c.schulthess@uconn.edu