Artificial Neural Networks and Adaptive Systems

(for philosophers) Artificial Neural Networks and Adaptive Systems

Plan • Introduction: simple neurons, simple networks • Principles: adaptation and parallelism • Designing neural networks: types and parameters • Artificial vs natural neurons • Why neural networks matter to philosophers

Artificial neurons • An artificial neuron has three components: • Input connections (x1, x2, ...) with weights (w1, w2, ...) (the equivalent of dendrites). • An output (y) (the equivalent of an axon, eventually linked with other neurons’ “dendrites”). • A computing unit (the equivalent of a living neuron’s soma). The computing unit continuously sums the weighted inputs to produce a net value, from which the output is calculated using a non-linear activation function (f): net = w1·x1 + w2·x2 + ..., y = f(net).

One neuron as a network • Given that • w1 = 0.5 and w2 = 0.5 • The activation function is f(x) = [ 1 if x >= 1, -1 if x < 1 ] • x1 and x2 encode the truth values {1, -1} • The above neuron implements an AND function. • Most neural networks are used in this way: to implement functions.
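The AND claim can be checked by enumerating the four input combinations. The following is a minimal sketch using only the values given on the slide; the function names `activation` and `neuron` are illustrative choices, not part of the original presentation.

```python
def activation(net):
    """Threshold activation from the slide: 1 if net >= 1, otherwise -1."""
    return 1 if net >= 1 else -1


def neuron(inputs, weights):
    """Sum the weighted inputs, then apply the activation function."""
    net = sum(w * x for w, x in zip(weights, inputs))
    return activation(net)


WEIGHTS = (0.5, 0.5)  # w1 and w2 from the slide

# Truth values are encoded as 1 (true) and -1 (false).
for x1 in (1, -1):
    for x2 in (1, -1):
        print(x1, x2, "->", neuron((x1, x2), WEIGHTS))
```

Only the input (1, 1) gives a net value of 1, so it is the only case that reaches the threshold.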

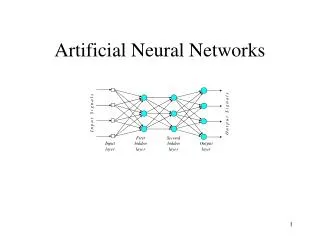

The Perceptron • The (multi-layer) perceptron is the kind of network Bentley alludes to in his allegory of the hierarchical enterprise. • In fig. 3, a perceptron embodies a function X -> Y, with X = X1 x X2 … (D dimensions) and Y = Y1 x Y2 … (m dimensions). • Generally, the output layer has fewer neurons (fewer dimensions) than the input layer, so the perceptron acts as a classifier. • A typical example of a perceptron would be one that takes a 2D array of bits representing points on a writing surface and returns a letter, that is, a character recogniser.

Limitations of perceptrons • Perceptrons without hidden layers can only recognize linearly separable classes. • Perceptrons with enough hidden units and connections can approximate any continuous function to arbitrary accuracy.
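XOR is the classic example of a class that is not linearly separable. The sketch below reuses the threshold activation and the {1, -1} encoding from the one-neuron example, and assumes each neuron may also have a bias term (the bias values and the hand-picked weights are assumptions chosen for illustration, not taken from the slides). It shows a small grid search failing to find a single neuron that computes XOR, and a network with one hidden layer of two neurons succeeding.

```python
def activation(net):
    """Threshold activation: 1 if net >= 1, otherwise -1."""
    return 1 if net >= 1 else -1


def neuron(inputs, weights, bias=0.0):
    """Weighted sum plus an (assumed) bias term, then the threshold."""
    net = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(net)


XOR_TABLE = {(1, 1): -1, (1, -1): 1, (-1, 1): 1, (-1, -1): -1}


def single_neuron_can_do_xor(grid):
    """Try every (w1, w2, bias) on the grid with a single neuron."""
    for w1 in grid:
        for w2 in grid:
            for bias in grid:
                if all(neuron(x, (w1, w2), bias) == target
                       for x, target in XOR_TABLE.items()):
                    return True
    return False


def xor_network(x1, x2):
    """Hidden layer computes OR and NAND; the output neuron ANDs them."""
    h_or = neuron((x1, x2), (0.5, 0.5), bias=1.0)
    h_nand = neuron((x1, x2), (-0.5, -0.5), bias=1.0)
    return neuron((h_or, h_nand), (0.5, 0.5))


GRID = [i / 4 for i in range(-8, 9)]  # -2.0, -1.75, ..., 2.0
print("single neuron solves XOR:", single_neuron_can_do_xor(GRID))
for inputs in XOR_TABLE:
    print(inputs, "->", xor_network(*inputs))
```

The grid search is only an illustration: the failure holds for all weights, because the four XOR constraints on a single threshold neuron contradict each other.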

Plan • Introduction: simple neurons, simple networks • Principles: adaptation and parallelism • Designing neural networks: types and parameters • Artificial vs natural neurons • Why neural networks matter to philosophers

Principles: adaptation and parallelism • Neural networks are characterized as adaptive distributed systems. • Neural networks are adaptive exactly like evolutionary systems. • Neural networks are distributed exactly like multi-agent systems (e.g. ant-based designs). • The following sections explain the general principles of computational adaptation and parallelism.

The idea of distributed computing • Conventional computers are designed following von Neumann’s tripartite architecture (processor, registers, memory). • A different design strategy is to have many CPUs, memory units and registers that communicate with one another over a network: to use a popularized expression, the network is the computer. • Different combinations of unit power and unit numbers are possible.

Kinds of distributed computing • There is a broad spectrum of possible combinations of quality and quantity that can be suited to solve hard computational problems.

Distributed representations? • Usually, a neuron in a neural network cannot be said to hold any kind of representation. • The network, however, can represent different functions and therefore classes, concepts, methods, etc. • This is why we say that networks are sub-symbolic: no symbol could (normally) be attached to a single continuous memory space (neuron). • The advantages of distributed representations are usually robustness and power.

The essence of adaptive systems • Adaptive systems provide an alternative to traditional system design: instead of creating a mechanism to perform function F, we create a mechanism that will learn or adapt itself in order to perform F. • An adaptive system generally has two distinct components: training (or feedback) and operation. • The advantages of such systems are often critical: flexibility and power.

Adaptive systems as function approximators • Most adaptive systems can be understood in terms of statistical regression.

The simplest method of linear regression is based on mean square error. It minimizes the quantity J:
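One common way to write this quantity, assuming N training pairs (x_i, d_i) and a fitted line y = wx + b, is shown below. The symbols N, x_i and d_i are assumptions introduced here for illustration, and the factor 1/2 is only a convention that simplifies the derivatives (some texts omit it).

```latex
J = \frac{1}{2N} \sum_{i=1}^{N} \bigl( d_i - (w x_i + b) \bigr)^2
\qquad
\frac{\partial J}{\partial w} = -\frac{1}{N} \sum_{i=1}^{N} \bigl( d_i - (w x_i + b) \bigr)\, x_i
\qquad
\frac{\partial J}{\partial b} = -\frac{1}{N} \sum_{i=1}^{N} \bigl( d_i - (w x_i + b) \bigr)
```

Setting both derivatives to zero gives the usual least-squares line.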

A one-neuron network with a threshold term can be used to represent the previous solution. • The neuron acts as a linear function y = wx + b, b being the threshold and w the weight (it has only one input link). • In the following discussion we will use this minimal one-neuron network:

Gradient descent • “Gradient descent” refers to a family of training methods. • Now that we have a better idea of what a network does and how its performance is evaluated, we can summarize some important factors that can prevent us from analytically determining the weights of a network: • Lack of an explicit representation of the performance surface (hence also of dJ/dw) • Impossibility of solving dJ/dw = 0 • Necessity to learn as examples are presented (online learning) • All gradient descent algorithms work as follows: • Start the network with random (but small) weights. • Calculate or approximate the gradient with these weights. • Change the weights in the direction opposite to the gradient. • Generally, the change is not as large as the magnitude of the gradient: it is scaled down by a step size.
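The three steps above can be sketched for the one-neuron network y = wx + b. This is a minimal illustration, assuming J is half the mean squared error over a small, made-up data set; the data, the step size of 0.1 and the function names are all assumptions, not values from the presentation.

```python
import random


def mean_square_error(w, b, data):
    """J: half the mean squared difference between targets and outputs."""
    return sum((d - (w * x + b)) ** 2 for x, d in data) / (2 * len(data))


def gradient(w, b, data):
    """Exact partial derivatives of J with respect to w and b."""
    n = len(data)
    dJ_dw = -sum((d - (w * x + b)) * x for x, d in data) / n
    dJ_db = -sum((d - (w * x + b)) for x, d in data) / n
    return dJ_dw, dJ_db


def steepest_descent(data, step_size=0.1, steps=2000, seed=0):
    rng = random.Random(seed)
    # Step 1: start with random (but small) weights.
    w = rng.uniform(-0.1, 0.1)
    b = rng.uniform(-0.1, 0.1)
    for _ in range(steps):
        # Step 2: calculate the gradient at the current weights.
        dJ_dw, dJ_db = gradient(w, b, data)
        # Step 3: move against the gradient, scaled by the step size.
        w -= step_size * dJ_dw
        b -= step_size * dJ_db
    return w, b


# Hypothetical training pairs (x, d) lying on the line d = 2x + 1.
DATA = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

w, b = steepest_descent(DATA)
print("w =", w, "b =", b, "J =", mean_square_error(w, b, DATA))
```

Here the gradient is computed exactly because J is known in closed form; in the situations listed above it would have to be approximated from the examples presented so far.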

Gradient descent: problems • There are many kinds of gradient descent algorithms, and their effectiveness depends on many factors. The one presented here is the steepest descent algorithm. Others are much more complex, yet still not perfect. • One problem that affects all local descent algorithms is their inherent short-sightedness. They can only use information about the surface around the current weights, which means that they often get stuck in local minima:
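A one-dimensional toy surface is enough to show the effect. The sketch below assumes a hypothetical performance surface J(w) = w^4 - 3w^2 + w, which has a shallow minimum on the right and a deeper one on the left; the surface, starting points and step size are illustrative choices only.

```python
def surface(w):
    """Hypothetical performance surface with two minima."""
    return w ** 4 - 3 * w ** 2 + w


def slope(w):
    """Derivative dJ/dw of the surface above."""
    return 4 * w ** 3 - 6 * w + 1


def descend(w, step_size=0.01, steps=1000):
    """Plain steepest descent from the starting weight w."""
    for _ in range(steps):
        w -= step_size * slope(w)
    return w


from_right = descend(2.0)   # settles in the shallow (local) minimum
from_left = descend(-2.0)   # settles in the deeper (global) minimum

print("start at  2.0 ->", from_right, "J =", surface(from_right))
print("start at -2.0 ->", from_left, "J =", surface(from_left))
```

Both runs stop where the slope vanishes, but only the run started on the left reaches the lower of the two minima: the outcome depends entirely on where the descent begins.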

Gradient descent: problems • Another problem one can face with gradient descent is an inappropriate learning step size. • A step size that is too small will require too much training, and one that is too large will cause ‘overshooting’: the weights jump past the minimum and may oscillate around it or even diverge. • Note the direct applicability of these considerations to other kinds of adaptive systems, namely human learning and ecosystems.
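The effect of the step size can be seen on the simplest possible surface. The sketch below assumes a hypothetical surface J(w) = w^2, whose gradient is 2w and whose minimum is at w = 0; the three step sizes are arbitrary values chosen to show the three regimes.

```python
def descend(step_size, steps=50, w=1.0):
    """Steepest descent on J(w) = w**2, whose gradient is 2 * w."""
    for _ in range(steps):
        w -= step_size * 2 * w
    return w


too_small = descend(0.001)   # barely moves away from the start
reasonable = descend(0.4)    # converges quickly towards 0
too_large = descend(1.1)     # overshoots further on every step

print("step 0.001 ->", too_small)
print("step 0.4   ->", reasonable)
print("step 1.1   ->", too_large)
```

Each update multiplies w by (1 - 2 × step size), so the run converges only when that factor has magnitude below 1, and converges fastest when it is close to 0.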

Gradient descent: conclusion • Gradient descent only works with reasonably smooth surfaces. It has several variants and always depends on a method for approximating dJ/dw. Intuitively, the more representative dJ/dw is of the surface (the neighbourhood, or even the whole curve), the more effective the method is. • At bottom, gradient descent is just a numerical method of nonlinear regression, but it is implemented using a network-like representation that can actually support other learning mechanisms. Note that the actual implementation of ANNs often uses only matrices and equations. • As Kauffman (2000) explains, gradient descent is no better than any other method (even a random method) over a random surface. That is the idea behind the no-free-lunch theorem.

Plan • Introduction: simple neurons, simple networks • Principles: adaptation and parallelism • Designing neural networks: types and parameters • Artificial vs natural neurons • Why neural networks matter to philosophers

Designing neural networks • Several factors must be taken into consideration. The most important are: • The topology • Number of units, density of connections, layers, cycles? • The encoding scheme and the input/output neurons • The learning mechanisms (including gradient approximation methods) • The step size • We will now explore some variations on the topology and learning mechanisms.

Traditional multi-layer perceptron • For pattern-recognition • Trained by steepest gradient descent with backpropagation.

Perceptron with Hebbian learning • “… When an axon of cell A is near enough to excite a cell B and repeatedly or persistently takes part in firing it, some growth process or metabolic change takes place in one or both cells such that A's efficiency, as one of the cells firing B, is increased” (Hebb 1949, p62) • For associative memory (content addressable) • Normally used with 2-layer linear perceptrons • Unsupervised learning
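Hebb's principle is often formalized as a weight change proportional to the product of input and output activity. The sketch below assumes that formalization for a 2-layer linear network used as an associative memory, with the output pattern held on the output units while the input is presented; the patterns, step size and function names are hypothetical.

```python
def hebbian_update(weights, x, y, step_size=1.0):
    """Strengthen weights[j][i] in proportion to input x[i] times output y[j]."""
    for j, y_j in enumerate(y):
        for i, x_i in enumerate(x):
            weights[j][i] += step_size * y_j * x_i


def recall(weights, x):
    """Linear output layer: each output is a weighted sum of the inputs."""
    return [sum(w * x_i for w, x_i in zip(row, x)) for row in weights]


# Hypothetical (input, output) pairs; the inputs are orthogonal unit vectors.
PAIRS = [
    ([1.0, 0.0, 0.0], [1.0, -1.0]),
    ([0.0, 1.0, 0.0], [-1.0, 1.0]),
]

weights = [[0.0] * 3 for _ in range(2)]  # 2 outputs, 3 inputs
for x, y in PAIRS:
    hebbian_update(weights, x, y)

for x, y in PAIRS:
    print(x, "->", recall(weights, x), "(stored:", y, ")")
```

Recall is exact here only because the input patterns are orthogonal; with correlated inputs the stored associations interfere with one another.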

Kohonen self-organizing maps • Winner-take-all topology with feedback • Always only one output active • Works as a kind of amplifier • Learning rule similar to Hebb’s rule. Update when activated:
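A standard form of the competitive update moves the winning unit's weight vector a fraction of the way towards the current input. The sketch below assumes that form and is not necessarily the exact rule shown on the slide; it also updates only the winner, whereas a full self-organizing map would update the winner's neighbours as well. The units, input and step size are hypothetical.

```python
def winner(units, x):
    """Index of the unit whose weight vector is closest to the input x."""
    def squared_distance(w):
        return sum((w_i - x_i) ** 2 for w_i, x_i in zip(w, x))
    return min(range(len(units)), key=lambda k: squared_distance(units[k]))


def update_winner(units, x, step_size=0.5):
    """Winner-take-all step: only the winning unit moves towards x."""
    k = winner(units, x)
    units[k] = [w_i + step_size * (x_i - w_i) for w_i, x_i in zip(units[k], x)]
    return k


units = [[0.0, 0.0], [1.0, 1.0]]  # two units with 2D weight vectors
k = update_winner(units, [0.8, 1.0])
print("winner:", k, "units after update:", units)
```

Repeating this step over many inputs pulls each unit towards the centre of the inputs it wins, which is what lets the units act as cluster prototypes.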

Kohonen clusters • A 2D to 1D cluster. Points are supposed to be mapped so that they are as close as possible in 1D to their neighbours in 2D.

Hopfield networks • Recurrent networks are evil. • However, if they can be made to work, they are very powerful. • Hopfield networks form a subclass of recurrent networks that are well controlled. • They act as noise-resistant associative memories. • The different patterns they can recall can be understood as attractors in the state space of the network.
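The attractor idea can be illustrated with a very small network. The sketch below assumes the usual textbook construction (states in {1, -1}, symmetric weights built from outer products of the stored patterns, no self-connections, units updated one at a time); the two stored patterns are hypothetical and were chosen to be orthogonal.

```python
def train(patterns):
    """Outer-product storage: weights[i][j] sums p[i] * p[j] over patterns."""
    n = len(patterns[0])
    weights = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += p[i] * p[j]
    return weights


def recall(weights, state, max_sweeps=10):
    """Update units one at a time until a full sweep changes nothing."""
    state = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i, row in enumerate(weights):
            net = sum(w * s for w, s in zip(row, state))
            new = state[i] if net == 0 else (1 if net > 0 else -1)
            if new != state[i]:
                state[i] = new
                changed = True
        if not changed:
            break
    return state


P1 = [1, 1, 1, 1, -1, -1, -1, -1]
P2 = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train([P1, P2])

noisy = [-1] + P1[1:]  # P1 with its first bit flipped
print("noisy input:", noisy)
print("recalled   :", recall(weights, noisy))
```

The corrupted input falls back to the nearest stored pattern, and each stored pattern is left unchanged by the update, which is what it means for the patterns to be attractors.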

Plan • Introduction: simple neurons, simple networks • Principles: adaptation and parallelism • Designing neural networks: types and parameters • Artificial vs natural neurons • Why neural networks matter to philosophers

The philosophical interest of neural and adaptive systems • Metaphysics: the key to an empirical justification of anti-realism. • Ethics and politics: the advantage of self-knowledge; neuroscience is one ingredient of tomorrow’s ethical dilemmas. • Language: the importance and power of sub-symbolic processes.

The End • There is a Philosophy and Neuroscience conference at Carleton University next weekend, with such speakers as Dan Dennett and Patricia Churchland. • (I am looking for a ride.) • This presentation is available online at www.dbourget.com • My main reference book is available at the Eng & CS library: QA 76.87 .P74 2000X • Thank you for listening.