Overview of Rhea Analysis and Post-Processing Cluster for High-Performance Computing

Rhea is the analysis and post-processing cluster at NCCS, offered first as an extra resource to INCITE and ALCC projects. It has 200 Dell PowerEdge C6220 nodes (196 compute, 4 login), each with 64 GB of RAM and two 8-core Intel Xeon CPUs. Its queue policy is meant to let small runs complete quickly, and its software stack covers visualization, compilers, scientific languages, data management, and debugging tools. Rhea replaces Lens and mounts the Atlas (Spider II) filesystem rather than Widow, so users need to move their data to Atlas.


Presentation Transcript

Rhea: Analysis & Post-processing Cluster • Robert D. French, NCCS User Assistance

Rhea Quick Overview • 200 Dell PowerEdge C6220 Nodes • 196 Compute / 4 Login • RHEL 6.4 • 2 x 8-Core Intel Xeon CPUs @ 2.0 GHz • Hyperthreading is enabled, so “top” shows 32 CPUs • 64GB of RAM • New 56Gb/s IB Fabric • Mounts Atlas • Does not mount Widow • Replaces Lens • No Preemptive Queue

Allocation & Billing • Rhea is prioritized as an extra resource for INCITE and ALCC users through the end of the year. • DD Projects may request access • 1 node hour charged per node per hour • Ex: 10 nodes for 2 hours = 20 node hours • Each project will be awarded 1,000 hours per Month • Separate from Titan / Eos usage • Request more if you run low
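The billing rule on this slide can be sketched as a small calculation. This is a minimal illustration, not an OLCF tool: the function names are hypothetical, and reading the 1,000-hour monthly award as node hours is an assumption.

```python
def node_hours(nodes: int, wall_hours: float) -> float:
    """Charge for one job: 1 node hour per node per hour of wall time."""
    if nodes < 0 or wall_hours < 0:
        raise ValueError("nodes and wall_hours must be non-negative")
    return nodes * wall_hours


def remaining_allocation(jobs, monthly_award: float = 1000.0) -> float:
    """Node hours left this month after a list of (nodes, wall_hours) jobs.

    Assumption: the monthly award is counted in node hours.
    """
    used = sum(node_hours(n, h) for n, h in jobs)
    return monthly_award - used


# The slide's example: 10 nodes for 2 hours is charged 20 node hours.
print(node_hours(10, 2))

# Hypothetical month with two jobs charged against the award.
print(remaining_allocation([(10, 2), (4, 12.5)]))
```

Because Rhea usage is tracked separately from Titan and Eos, a tally like this would cover Rhea jobs only.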

Rhea Queue Policy • Should minimize large jobs swamping the system • Small runs should complete quickly • Request a Reservation for more nodes / longer wall-times

Software Stack • Most Lens software will already be installed • Here are some highlights: • Visualization: ParaView, VisIt, VMD • Compilers: GCC, Intel, and PGI • Scientific Languages: MATLAB, Octave, R, SciPy • Data Management: Globus, BBCP, NetCDF, HDF5, Adios • Debugging: DDT, Vampir, Valgrind • Full list of installed software available on our website • If you can’t find what you need, just ask!

Transitioning to Rhea • Now (systems shown: Titan, Lens, Widow) • Titan and Lens mount Widow

Transitioning to Rhea • Soon, mid-to-late November (systems shown: Titan, Lens, Rhea, Widow, Atlas) • Titan will mount both Atlas and Widow • Move data to Atlas and take advantage of Rhea

Transitioning to Rhea • Near Future (systems shown: Titan, Rhea, Widow, Atlas) • Lens will be decommissioned • Rhea will be the center’s viz & analysis cluster

Spider II Directory Layout Changes • Chris Fuson

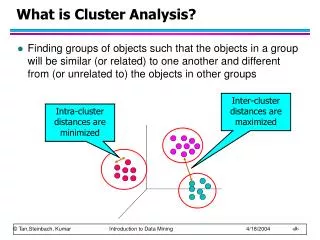

OLCF Center-wide File Systems • Spider • Center-wide scratch space • Temporary; not backed-up • Available from compute nodes • Fast access to job-related temporary files and for staging large files to and from archival storage • Contains multiple Lustre file systems

Spider I vs. Spider II
Spider I • Widow [1-3] • 240 GB/s • 10 PB • 3 MDS • 192 OSS • 1,344 OST • Current Center-wide Scratch • Decommissioned Early January, 2014
Spider II • Atlas [1-2] • 1 TB/s • 30 PB • 2 MDS • 288 OSS • 2,016 OST • Available on Additional OLCF Systems Soon

Spider II Change Overview Before using Spider II, please note the following: • New directory structure • Organized by project • Each project given a directory on one of the atlas filesystems • WORKDIR now within project areas • You may have multiple WORKDIRs • * Requires Change • Quota increases • Increased file system size allows for increased quotas • All areas purged • To help ensure space available for all projects

Spider II Directory Structure (ProjectID: Member Work, Project Work, World Work) • Member Work • Purpose: Batch job I/O • Path: $MEMBERWORK/<projid> • 10 TB quota • 14 day purge • Permissions: • User allowed to change permissions to share within project • No automatic permission changes

Spider II Directory Structure (ProjectID: Member Work, Project Work, World Work) • Project Work • Purpose: Data sharing within project • Path: $PROJWORK/<projid> • 100 TB quota • 90 day purge • Permissions: • Read, Write, Execute access for project members

Spider II Directory Structure (ProjectID: Member Work, Project Work, World Work) • World Work • Purpose: Data sharing with users who are not members of project • Path: $WORLDWORK/<projid> • 10 TB quota • 14 day purge • Permissions: • Read, Execute for world • Read, Write, Execute for project
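Since all three work areas are purged, it may help to check which files are approaching the purge window. The following is a minimal sketch, not an OLCF utility. The purge windows come from the slides; the function name, the `warn_days` parameter, the project ID `abc123`, and the use of modification time as the age measure are all assumptions, because the slides do not state how file age is determined.

```python
import os
import time
from pathlib import Path

# Purge windows in days, taken from the slides.
PURGE_DAYS = {"MEMBERWORK": 14, "PROJWORK": 90, "WORLDWORK": 14}


def files_near_purge(root, purge_days, warn_days=3, now=None):
    """Yield (path, age_days) for files within warn_days of the purge window.

    Assumption: age is measured from the last modification time; the
    actual purge criterion is not stated in the slides.
    """
    now = time.time() if now is None else now
    threshold = purge_days - warn_days
    for dirpath, _dirs, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                age_days = (now - path.stat().st_mtime) / 86400
            except OSError:
                continue  # file vanished or is unreadable; skip it
            if age_days >= threshold:
                yield path, age_days


if __name__ == "__main__":
    projid = "abc123"  # hypothetical project ID
    base = os.environ.get("MEMBERWORK")
    if base:
        area = Path(base) / projid
        for path, age in files_near_purge(area, PURGE_DAYS["MEMBERWORK"]):
            print(f"{age:5.1f} days  {path}")
```

Files reported by a check like this would need to be copied to longer-lived storage before the purge window closes.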

Spider II Directory Structure • New directory structure • Organized by project

Before Using Atlas • Modify scripts to point to new directory structure • /tmp/work/$USER and $WORKDIR -> $MEMBERWORK/<projid> • /tmp/proj/<projid> -> $PROJWORK/<projid> • Migrate data • You will need to transfer needed data onto Spider II (atlas)
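The script changes described on this slide can be sketched as a text rewrite. This is a minimal illustration under stated assumptions: the old work paths (/tmp/work/$USER and $WORKDIR) are taken to map to $MEMBERWORK/<projid> and /tmp/proj/<projid> to $PROJWORK/<projid>; `migrate_paths` and the project ID `abc123` are hypothetical; and scripts that spell out a literal username instead of $USER are not handled.

```python
import re


def migrate_paths(script_text: str, projid: str) -> str:
    """Rewrite old Widow-era paths in a script to the Spider II layout."""
    member = f"$MEMBERWORK/{projid}"
    proj = f"$PROJWORK/{projid}"
    # The lookaheads keep longer names such as $WORKDIR_OLD untouched.
    rules = [
        (r"/tmp/work/(?:\$\{USER\}|\$USER(?![A-Za-z0-9_]))", member),
        (r"\$\{WORKDIR\}|\$WORKDIR(?![A-Za-z0-9_])", member),
        (r"/tmp/proj/" + re.escape(projid) + r"(?![A-Za-z0-9_])", proj),
    ]
    for pattern, replacement in rules:
        # A function replacement avoids any escape processing of the text.
        script_text = re.sub(pattern, lambda _m, r=replacement: r, script_text)
    return script_text


old_script = (
    "cd /tmp/work/$USER/run1\n"
    "cp $WORKDIR/in.dat /tmp/proj/abc123/shared/\n"
)
print(migrate_paths(old_script, "abc123"))
```

Reviewing the rewritten script by hand before submitting a job is still advisable, since a project may have more than one work directory under the new layout.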

Questions? • More information: • www.olcf.ornl.gov/kb_articles/atlas-transition/ • Email: • help@olcf.ornl.gov

Other Items • Dec 17th - Titan to return to 100% • 2013 User Survey • Available on olcf.ornl.gov