Utility-Based Metaclassification: Enhancing Classifier Performance through Meta-Feature Optimization

This presentation from the 2009 IEEE Conference on Systems, Man, and Cybernetics describes a metaclassification approach in which a metaclassifier is trained to recognize when an ordinary (object-level) classifier is correct, rather than combining the outputs of multiple classifiers as bagging and boosting do. The object-level classifier is Bayesian, and the metaclassifier works from metalevel features of its probability distribution, such as the entropy and the highest probability. The slides compare accuracy-based measures with expected gain in utility as the criterion for selecting metalevel features, and show the effect on two classification examples.

Presentation Transcript

Utility-based Metaclassification 2009 IEEE Conference on Systems, Man, and Cybernetics

Classifier Systems
• Classifier: Labels object based on observed features
  • Decision tree, nearest neighbor, Bayesian, …
• Features: height, weight, income, …
• Classifier usually trained using objects of known type
• “Optimal” sets of features
  • Wrapper/Filter search methods

Metaclassifier
• Usual meaning: Combines output of multiple classifiers to improve performance
  • Bagging (voting)
  • Boosting (series of classifiers)
• Here: A “metaclassifier” is trained to recognize when an “ordinary” classifier is correct. It classifies the classifications of the lower-level (“object-level”) classifier.

“Object-level” vs. “metalevel”
• Object-level features: income, BMI, …
• Metalevel features:
  • Impurity of decision tree leaf nodes
  • Distance to nearest neighbor
• Our object-level classifier is “Bayesian”: For each known type, the probability that the object belongs to that type is calculated. Object is classified as belonging to type with highest probability.

Intuitively, the more uniform the probability distribution over the known types, the less confident we should feel about the classification:
Pr(Bolt|Data) = 0.34
Pr(Washer|Data) = 0.33
Pr(Nut|Data) = 0.33
=> Object is a bolt ???

We cannot “eyeball” the distributions. The metaclassifier is automated, just as the object-level classifier is.
• Metalevel features include:
  • Entropy of entire distribution
  • Highest probability
  • Log of ratio of highest to second highest
  • Entropy of distribution minus highest
  • Interquantile range
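As a minimal sketch of how a few of these metalevel features could be computed from the object-level posterior, the Python below evaluates the entropy, the highest probability, and the log ratio of the top two probabilities. The function names, the dictionary keys, the use of base-2 entropy, and the "peaked" comparison distribution are assumptions made for illustration; the slides do not give the exact definitions used by the authors.

```python
import math

def entropy(probs):
    """Shannon entropy in bits; zero-probability entries contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

def metalevel_features(posterior):
    """Compute three illustrative metalevel features from a posterior over types.

    `posterior` maps each known type to Pr(type | data). It is assumed to
    contain at least two types, each with non-zero probability.
    """
    ranked = sorted(posterior.values(), reverse=True)
    top, second = ranked[0], ranked[1]
    return {
        "entropy": entropy(ranked),                # entropy of entire distribution
        "maxprob": top,                            # highest probability
        "log_ratio_top2": math.log(top / second),  # log of highest / second highest
    }

# The near-uniform distribution from the bolt/washer/nut example.
near_uniform = {"Bolt": 0.34, "Washer": 0.33, "Nut": 0.33}
# A hypothetical confident distribution, for contrast.
peaked = {"Bolt": 0.90, "Washer": 0.05, "Nut": 0.05}

f_uniform = metalevel_features(near_uniform)
f_peaked = metalevel_features(peaked)
print(f_uniform)
print(f_peaked)
```

The near-uniform case has entropy close to the three-class maximum and a log ratio close to zero, which is the kind of signal a metaclassifier could use to reject the "bolt" classification.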

Feature Selection
• Object-level features usually chosen to maximize accuracy, or weighted accuracy (e.g., misclassifying a malignant tumor as benign is more costly than the opposite error).
• We could optimize metalevel features with respect to various forms of accuracy.
• What would “accuracy” mean for a metaclassifier?

“TP” (“true positive”): metaclassifier says object-level classifier was correct, and it was correct.
“FP”: metaclassifier says correct, but it wasn’t.
“TN”: metaclassifier says incorrect, and it was incorrect.
“FN”: metaclassifier says incorrect, but it was correct.
tp, fp, tn, fn: Experimentally observed frequencies of these outcomes

Object-level (unweighted) accuracy = (tp+fn)/(tp+tn+fp+fn)
• Metalevel measures:
  Metalevel classifier accuracy = (tp+tn)/(tp+tn+fp+fn)
  Net accuracy = tp/(tp+fp)
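The three measures can be sketched in a few lines of Python. The function name and dictionary keys are hypothetical; the denominator of the first two measures is the total count of all four outcomes. The counts in the usage line are taken from the one-feature classification matrix reported in the metafeature search results later in this transcript (108 and 42 in the RIGHT row, 31 and 88 in the WRONG row).

```python
def metalevel_measures(tp, fp, tn, fn):
    """Return the three accuracy measures defined on the slide."""
    total = tp + fp + tn + fn
    return {
        # The object-level classifier was right in the TP and FN cases.
        "object_level_accuracy": (tp + fn) / total,
        # The metaclassifier itself was right in the TP and TN cases.
        "metalevel_accuracy": (tp + tn) / total,
        # Accuracy among the classifications the metaclassifier accepted.
        "net_accuracy": tp / (tp + fp),
    }

measures = metalevel_measures(tp=108, fp=31, tn=88, fn=42)
for name, value in measures.items():
    print(f"{name}: {value:.4f}")
```

Net accuracy exceeds object-level accuracy here because the metaclassifier rejects more wrong classifications than right ones.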

Problems for these measures
• Metalevel classifier accuracy = (tp+tn)/(tp+tn+fp+fn)
  Can be increased by replacing a set of metafeatures with a different set where tp' – tp > fp' – fp.
  But the smaller increase in false positives may displease the end user far more than she is pleased by the larger TP increase.

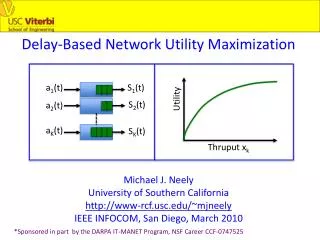

Alternative: Expected gain in utility
• Exp. utility at metalevel = u(TP) × tp/total + u(FN) × fn/total + u(FP) × fp/total + u(TN) × tn/total
• Exp. utility at object level = u(TP) × (tp + fn)/total + u(FP) × (fp + tn)/total

Expected gain equals difference: fn/total × (u(FN) – u(TP)) + tn/total × (u(TN) – u(FP))
• Gain can be positive, negative, or zero
• Gain is zero when metaclassifier fails to reject any object-level classifications: fn = tn = 0
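The gain formula can be checked with a short Python sketch. The function name and the dictionary layout for the utilities are assumptions; the utilities and outcome counts in the usage lines are the ones reported in the metafeature search results later in this transcript, and the computed gains agree with the scores listed there (0.17658, 0.231041, 0.317658).

```python
def expected_gain(tp, fp, tn, fn, u):
    """Expected utility with the metaclassifier minus expected utility without it."""
    total = tp + fp + tn + fn
    meta = (u["TP"] * tp + u["FN"] * fn + u["FP"] * fp + u["TN"] * tn) / total
    # Without a metaclassifier every classification is accepted, so correct
    # ones earn u(TP) and incorrect ones earn u(FP).
    obj = (u["TP"] * (tp + fn) + u["FP"] * (fp + tn)) / total
    return meta - obj

utilities = {"TP": 1.0, "FP": 0.0, "TN": 0.85, "FN": 0.35}

gain_1 = expected_gain(tp=108, fp=31, tn=88, fn=42, u=utilities)   # best 1-element set
gain_2 = expected_gain(tp=124, fp=26, tn=93, fn=26, u=utilities)   # best 2-element set
gain_8 = expected_gain(tp=135, fp=7, tn=112, fn=15, u=utilities)   # best 8-element sets
gain_none = expected_gain(tp=150, fp=119, tn=0, fn=0, u=utilities) # nothing rejected

print(round(gain_1, 6), round(gain_2, 6), round(gain_8, 6), gain_none)
```

Because only the fn and tn terms survive in the difference, a metaclassifier that never rejects anything has a gain of exactly zero regardless of the utilities chosen.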

Where do utilities come from?
• “Elicit” them from subject matter expert who cares very, very much about the performance of the two-level classifier
• u(TP)=1, u(FP)=0, u(TN)=?, u(FN)=??
• Series of lotteries terminating with, e.g., u(TN) = Pr(TP)×u(TP) + Pr(FP)×u(FP) => u(TN) = Pr(TP)

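Effect of metaclassifier
• Before:

| Class (columns: classified as) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 |
|---|---|---|---|---|---|---|---|---|---|---|
| (1): MIT | 26 | 4 | 5 | 0 | 2 | 1 | 0 | 2 | 2 | 0 |
| (2): NUC | 3 | 43 | 19 | 0 | 3 | 2 | 1 | 2 | 4 | 0 |
| (3): CYT | 5 | 21 | 43 | 0 | 2 | 6 | 2 | 2 | 2 | 0 |
| (4): ME1 | 0 | 0 | 0 | 3 | 1 | 0 | 3 | 1 | 0 | 0 |
| (5): ME2 | 0 | 0 | 0 | 1 | 5 | 2 | 1 | 3 | 1 | 0 |
| (6): VAC | 2 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |
| (7): EXC | 0 | 0 | 1 | 0 | 0 | 0 | 4 | 0 | 0 | 0 |
| (8): ME3 | 1 | 4 | 0 | 0 | 3 | 2 | 0 | 24 | 0 | 0 |
| (9): POX | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| (10): ERL | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 |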

Metafeature search

u(FN) = 0.35 u(TN) = 0.85 u(FP) = 0 u(TP) = 1

Best 1-element set(s):
0000010000000000 difftop2
Score = 0.17658
Number tied = 1
Classification matrix:

| Class (columns: classified as) | 1 | 2 |
|---|---|---|
| (1): class RIGHT | 108 | 42 |
| (2): class WRONG | 31 | 88 |

Best 2-element set(s):
0000000010010000 secondhighest 1+2+3
Score = 0.231041
Number tied = 1
Classification matrix:

| Class (columns: classified as) | 1 | 2 |
|---|---|---|
| (1): class RIGHT | 124 | 26 |
| (2): class WRONG | 26 | 93 |

Best 8-element set(s):
1011010000110011 entropy interXile_range total_below_Xth_Xile difftop2 thirdhighest 1+2+3 enttopthree entminusmax
Score = 0.317658
1001110000110011 entropy total_below_Xth_Xile maxprob difftop2 thirdhighest 1+2+3 enttopthree entminusmax
Score = 0.317658
Number tied = 2
Classification matrix:

| Class (columns: classified as) | 1 | 2 |
|---|---|---|
| (1): class RIGHT | 135 | 15 |
| (2): class WRONG | 7 | 112 |

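• After:

| Class (columns: classified as) | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 |
|---|---|---|---|---|---|---|---|---|---|---|---|
| (1): MIT | 25 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 17 |
| (2): NUC | 0 | 37 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 39 |
| (3): CYT | 0 | 5 | 37 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 41 |
| (4): ME1 | 0 | 0 | 0 | 3 | 0 | 0 | 0 | 0 | 0 | 0 | 5 |
| (5): ME2 | 0 | 0 | 0 | 0 | 5 | 0 | 0 | 0 | 0 | 0 | 8 |
| (6): VAC | 0 | 0 | 1 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 4 |
| (7): EXC | 0 | 0 | 0 | 0 | 0 | 0 | 4 | 0 | 0 | 0 | 1 |
| (8): ME3 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 23 | 0 | 0 | 11 |
| (9): POX | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 |
| (10): ERL | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 1 | 0 |
| (11): UNK | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 | 0 |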
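To make the before/after comparison concrete, the sketch below tallies accepted-correct, accepted-wrong, and rejected classifications from the two matrices. The helper name and the reading of rows as actual classes and columns as assigned classes (with column 11, UNK, holding the rejections) are assumptions; under that reading the "after" tallies agree with the 8-element result in the metafeature search (135 accepted and right, 7 accepted and wrong, 15 + 112 = 127 rejected).

```python
BEFORE = [
    [26, 4, 5, 0, 2, 1, 0, 2, 2, 0],    # MIT
    [3, 43, 19, 0, 3, 2, 1, 2, 4, 0],   # NUC
    [5, 21, 43, 0, 2, 6, 2, 2, 2, 0],   # CYT
    [0, 0, 0, 3, 1, 0, 3, 1, 0, 0],     # ME1
    [0, 0, 0, 1, 5, 2, 1, 3, 1, 0],     # ME2
    [2, 0, 3, 0, 0, 0, 0, 0, 0, 0],     # VAC
    [0, 0, 1, 0, 0, 0, 4, 0, 0, 0],     # EXC
    [1, 4, 0, 0, 3, 2, 0, 24, 0, 0],    # ME3
    [0, 0, 0, 0, 0, 0, 0, 0, 1, 0],     # POX
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 1],     # ERL
]

AFTER = [
    [25, 0, 0, 0, 0, 0, 0, 0, 0, 0, 17],   # MIT
    [0, 37, 1, 0, 0, 0, 0, 0, 0, 0, 39],   # NUC
    [0, 5, 37, 0, 0, 0, 0, 0, 0, 0, 41],   # CYT
    [0, 0, 0, 3, 0, 0, 0, 0, 0, 0, 5],     # ME1
    [0, 0, 0, 0, 5, 0, 0, 0, 0, 0, 8],     # ME2
    [0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 4],     # VAC
    [0, 0, 0, 0, 0, 0, 4, 0, 0, 0, 1],     # EXC
    [0, 0, 0, 0, 0, 0, 0, 23, 0, 0, 11],   # ME3
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1],     # POX
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 0],     # ERL
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0],     # UNK
]

def summarize(matrix, reject_col=None):
    """Return (accepted_correct, accepted_wrong, rejected) for a confusion matrix."""
    total = sum(sum(row) for row in matrix)
    rejected = 0 if reject_col is None else sum(row[reject_col] for row in matrix)
    # Diagonal entries are correct classifications; skip the rejection column.
    accepted_correct = sum(row[i] for i, row in enumerate(matrix) if i != reject_col)
    accepted_wrong = total - rejected - accepted_correct
    return accepted_correct, accepted_wrong, rejected

before = summarize(BEFORE)
after = summarize(AFTER, reject_col=10)
print("before:", before, "accuracy on accepted:", before[0] / (before[0] + before[1]))
print("after: ", after, "accuracy on accepted:", after[0] / (after[0] + after[1]))
```

The price of the higher accuracy on accepted objects is that 127 of the 269 objects are left unclassified, which is why the slides score metafeature sets by expected gain rather than by accuracy alone.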

More refined outcomes
u(“R”|“B”,B) = 0.8
u(“R”|“B”,M) = 0.0
u(“R”|“M”,M) = 1.0
u(“R”|“M”,B) = 0.6
u(“W”|“B”,M) = 0.8
u(“W”|“B”,B) = 0.7
u(“W”|“M”,B) = 0.5
u(“W”|“M”,M) = 0.1
“R”|“B”,M much worse than “R”|“M”,B but both are FP, generically.

Object-level features:
• concavity standard error
• concave points standard error
• symmetry standard error
• average of 3 largest symmetry values

| Class (columns: classified as) | 1 | 2 |
|---|---|---|
| (1): class M | 40 | 4 |
| (2): class B | 4 | 66 |

Best 1-element set(s): Maxprob
Score = 0.00175439
Number tied = 1
rbb = 66 rbm = 1 rmm = 38 rmb = 0 wbm = 3 wbb = 0 wmb = 4 wmm = 2

| Class (columns: classified as) | 1 | 2 | 3 |
|---|---|---|---|
| (1): class M | 38 | 1 | 5 |
| (2): class B | 0 | 66 | 4 |
| (3): class UNKNOWN | 0 | 0 | 0 |
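The reported score can be reproduced with a short sketch, under one reading of the notation that the slides do not spell out: each outcome is taken as (metaclassifier verdict, object-level label, true class), with R/W for "right"/"wrong" and B/M for the two classes, so that for example rbm counts objects the metaclassifier accepted, labeled B, that were truly M. The function and variable names are hypothetical. Without a metaclassifier every classification is accepted, so each outcome earns the "R" utility for the same label and true class.

```python
# Utilities from the "More refined outcomes" slide, keyed by
# (metaclassifier verdict, object-level label, true class).
UTILITY = {
    ("R", "B", "B"): 0.8, ("R", "B", "M"): 0.0,
    ("R", "M", "M"): 1.0, ("R", "M", "B"): 0.6,
    ("W", "B", "M"): 0.8, ("W", "B", "B"): 0.7,
    ("W", "M", "B"): 0.5, ("W", "M", "M"): 0.1,
}

# Observed counts rbb, rbm, rmm, rmb, wbm, wbb, wmb, wmm from the slide.
COUNTS = {
    ("R", "B", "B"): 66, ("R", "B", "M"): 1,
    ("R", "M", "M"): 38, ("R", "M", "B"): 0,
    ("W", "B", "M"): 3, ("W", "B", "B"): 0,
    ("W", "M", "B"): 4, ("W", "M", "M"): 2,
}

def refined_gain(counts, utility):
    """Expected utility with the metaclassifier minus expected utility without it."""
    total = sum(counts.values())
    with_meta = sum(utility[key] * n for key, n in counts.items())
    # Without the metaclassifier, every object-level label is accepted ("R").
    without_meta = sum(
        utility[("R", label, truth)] * n for (_, label, truth), n in counts.items()
    )
    return (with_meta - without_meta) / total

gain = refined_gain(COUNTS, UTILITY)
print(f"{gain:.8f}")
```

Under this reading the gain works out to 0.2/114, which agrees with the Score of 0.00175439 shown for the Maxprob feature.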

Very effective in context of classifying sequence of objects for which probability that consecutive objects are of same type is high
• Voting scheme over most recent N objects: K, K-1, …, 1 point
• K points to “UNKNOWN” if rejected, remaining 1+2+ … +K-1 points unallocated
• Points that would otherwise go to wrong class go instead to “UNKNOWN”, increasing object-level accuracy (vs. gain)