SIMULATED ANNEALING

SIMULATED ANNEALING Cole Ott

The next 15 minutes • I’ll tell you what Simulated Annealing is. • We’ll walk through how to apply it to a Markov Chain (via an example). • We’ll play with a simulation.

The Problem • State space $S$ • Scoring function $f : S \to \mathbb{R}$ • Objective: to find $s \in S$ minimizing $f(s)$
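
To make the setup concrete, here is a minimal Python sketch with a hypothetical toy state space (the integers 0 to 9) and a hypothetical scoring function `f`. For a state space this small the minimizer can be found by brute-force enumeration, which is exactly what stops being feasible once $S$ is large.

```python
# Hypothetical toy instance: S and f are illustrative assumptions, not from the slides.
S = list(range(10))

def f(s: int) -> int:
    """Score of state s; lower is better (the minimum is at s = 7)."""
    return (s - 7) ** 2

# Brute force: enumerate every state and keep the lowest-scoring one.
best = min(S, key=f)
print(best, f(best))  # prints: 7 0
```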

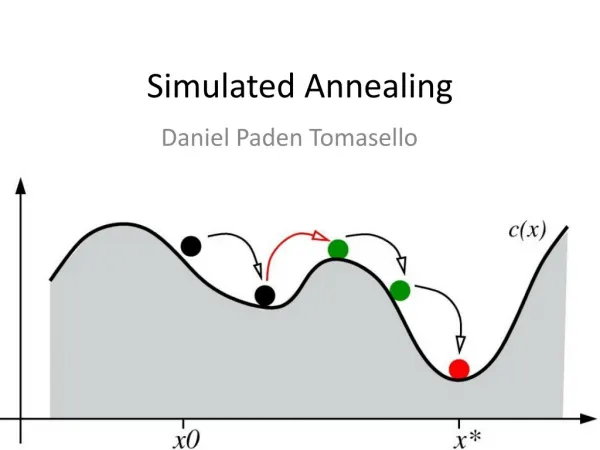

Idea • Construct a Markov Chain on $S$ whose stationary distribution $\pi_1$ gives higher probabilities to lower-scoring states. • Run this chain for a while, until it is likely to be in a low-scoring state $s_1$. • Construct a new chain whose stationary distribution $\pi_2$ has an even stronger preference for states minimizing $f$. • Run this chain from $s_1$. • Repeat, constructing another chain each time …

Idea (cont’d) • Basically, we are constructing an inhomogeneous Markov Chain $X_0, X_1, X_2, \ldots$ that gradually places more probability on $f$-minimizing states • We hope that, if we do this well, $\Pr(X_n \text{ minimizes } f)$ approaches $1$ as $n \to \infty$

Boltzmann Distribution • We will use the Boltzmann Distribution $\pi_{f,T}(s) = \frac{1}{Z_{f,T}} \exp\left(-\frac{f(s)}{T}\right)$, $s \in S$, for our stationary distributions

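Boltzmann Distribution (cont’d) • $Z_{f,T} = \sum_{s \in S} \exp(-f(s)/T)$ is a normalization term • We won’t have to care about it: the chain only ever compares ratios $\pi_{f,T}(s')/\pi_{f,T}(s) = \exp(-(f(s') - f(s))/T)$, in which $Z_{f,T}$ cancels.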
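
A quick numerical check of why $Z_{f,T}$ never has to be computed: the ratio of two Boltzmann probabilities depends only on the score difference. This is a minimal Python sketch; the helper name `boltzmann_ratio` and the three example scores are hypothetical.

```python
import math

def boltzmann_ratio(f_new: float, f_old: float, T: float) -> float:
    """pi(s_new) / pi(s_old) for the Boltzmann distribution at temperature T.

    Hypothetical helper: Z cancels in the ratio, so only f_new - f_old matters.
    """
    return math.exp(-(f_new - f_old) / T)

# Compare against the explicitly normalized distribution on hypothetical scores.
scores = {"a": 1.0, "b": 2.0, "c": 4.0}
T = 1.5
Z = sum(math.exp(-v / T) for v in scores.values())
pi = {s: math.exp(-v / T) / Z for s, v in scores.items()}

print(math.isclose(pi["c"] / pi["a"], boltzmann_ratio(scores["c"], scores["a"], T)))  # True
```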

Boltzmann Distribution (cont’d) • $T > 0$ is the Temperature • When $T$ is very large, $\pi_{f,T}$ approaches the uniform distribution on $S$ • When $T$ is very small, $\pi_{f,T}$ concentrates virtually all probability on $f$-minimizing states
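
The two limiting behaviours can be seen on a small example. The sketch below computes $\pi_{f,T}$ for four hypothetical states at a high and a low temperature; the function name `boltzmann` and the scores are assumptions made for illustration.

```python
import math
from typing import Dict, Hashable

def boltzmann(scores: Dict[Hashable, float], T: float) -> Dict[Hashable, float]:
    """pi_{f,T}(s) = exp(-f(s)/T) / Z_{f,T} for every state s in `scores`."""
    # Shift every score by the minimum before exponentiating to avoid underflow;
    # the common factor exp(min/T) cancels when we normalize.
    m = min(scores.values())
    weights = {s: math.exp(-(v - m) / T) for s, v in scores.items()}
    Z = sum(weights.values())
    return {s: w / Z for s, w in weights.items()}

# Hypothetical scores f(s) for four states; "b" is the unique minimizer.
scores = {"a": 3.0, "b": 1.0, "c": 2.0, "d": 5.0}

hot = boltzmann(scores, T=1000.0)   # large T: every probability is close to 1/4
cold = boltzmann(scores, T=0.05)    # small T: nearly all mass sits on "b"
print(hot)
print(cold["b"])
```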

Boltzmann Distribution (cont’d) • When we want to maximize $f$ rather than minimize it, we replace $f$ with $-f$

Def: Annealing FUN FACT! • Annealing is a process in metallurgy in which a metal is heated for a period of time and then slowly cooled. • The heat breaks bonds and causes the atoms to diffuse, moving the metal towards its equilibrium state and thus getting rid of impurities and crystal defects. • When performed correctly (i.e., with a proper cooling schedule), the process makes a metal more homogeneous and thus stronger and more ductile as a whole. • Parallels abound! Source: Wikipedia

Theorem (will not prove) • Let $p_T$ denote the probability that a random element chosen according to $\pi_{f,T}$ is a global minimum of $f$ • Then $\lim_{T \to 0} p_T = 1$ Source: Häggström
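
A one-line calculation suggests why the theorem holds when $S$ is finite (a sketch only, not the proof in Häggström). Take the Boltzmann distribution $\pi_{f,T}(s) = e^{-f(s)/T}/Z_{f,T}$, write $f_{\min}$ for the minimum value of $f$, $S_{\min}$ for the set of global minima, and $p_T = \pi_{f,T}(S_{\min})$. Multiplying numerator and denominator by $e^{f_{\min}/T}$ gives

```latex
p_T = \frac{\sum_{s \in S_{\min}} e^{-f(s)/T}}{\sum_{s \in S} e^{-f(s)/T}}
    = \frac{|S_{\min}|}{|S_{\min}| + \sum_{s \notin S_{\min}} e^{-(f(s) - f_{\min})/T}}
    \longrightarrow 1 \quad \text{as } T \to 0,
```

since every exponent $-(f(s) - f_{\min})/T$ with $f(s) > f_{\min}$ tends to $-\infty$.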

Designing an Algorithm • Design a MC on our chosen state space $S$ with stationary distribution $\pi_{f,T}$ • Design a cooling schedule: a sequence of integers $N_1, N_2, \ldots$ and a sequence of strictly decreasing temperature values $T_1 > T_2 > \cdots$ such that for each $i$ in sequence we run our MC at temperature $T_i$ for $N_i$ steps
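
The two design steps combine into a short loop. Below is a minimal Python sketch, assuming a symmetric proposal so that the Metropolis acceptance rule $\min\{1, e^{-(f(y) - f(x))/T}\}$ keeps $\pi_{f,T}$ stationary at each fixed temperature; the names `simulated_annealing` and `propose`, and the toy problem in the usage lines, are hypothetical.

```python
import math
import random
from typing import Callable, Sequence, Tuple, TypeVar

State = TypeVar("State")

def simulated_annealing(
    x0: State,
    f: Callable[[State], float],
    propose: Callable[[State, random.Random], State],
    schedule: Sequence[Tuple[float, int]],
    rng: random.Random,
) -> State:
    """Run the chain at T_1 for N_1 steps, then at T_2 for N_2 steps, and so on.

    Assumes `propose` is symmetric (proposing y from x is as likely as x from y),
    so the Metropolis rule below leaves pi_{f,T} stationary at each fixed T.
    """
    x, fx = x0, f(x0)
    for T, N in schedule:              # schedule = [(T_1, N_1), (T_2, N_2), ...]
        for _ in range(N):
            y = propose(x, rng)
            fy = f(y)
            # Accept with probability min(1, exp(-(f(y) - f(x)) / T)).
            if fy <= fx or rng.random() < math.exp(-(fy - fx) / T):
                x, fx = y, fy
    return x

# Hypothetical usage: minimize (s - 7)^2 on the cycle 0..19 using +/-1 moves.
def f(s: int) -> float:
    return (s - 7) ** 2

def propose(s: int, rng: random.Random) -> int:
    return (s + rng.choice((-1, 1))) % 20

schedule = [(T, 200) for T in (10.0, 3.0, 1.0, 0.3, 0.1, 0.03)]
x = simulated_annealing(0, f, propose, schedule, random.Random(0))
print(x, f(x))
```

This sketch returns the final state of the chain, as the slides describe; practical implementations often also remember the best state visited along the way.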

Notes on Cooling Schedules • Picking a cooling schedule is more of an art than a science. • Cooling too quickly can cause the chain to get caught in local minima • Tragically, cooling schedules with provably good chances of finding a global minimum can require more time than it would take to actually enumerate every element in the state space
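
As one illustration of the "art", a frequently used heuristic (not one proposed in these slides, and with no convergence guarantee) is geometric cooling, $T_{i+1} = \alpha T_i$ for some $0 < \alpha < 1$, with a fixed number of steps per temperature. The helper `geometric_schedule` and its parameter values are hypothetical.

```python
from typing import List, Tuple

def geometric_schedule(T0: float, alpha: float, n_stages: int,
                       steps_per_stage: int) -> List[Tuple[float, int]]:
    """Hypothetical helper: pairs (T_i, N_i) with T_i = T0 * alpha**i and N_i fixed.

    Requires 0 < alpha < 1 so the temperatures are strictly decreasing.
    """
    if not 0.0 < alpha < 1.0:
        raise ValueError("alpha must lie strictly between 0 and 1")
    return [(T0 * alpha ** i, steps_per_stage) for i in range(n_stages)]

schedule = geometric_schedule(T0=10.0, alpha=0.9, n_stages=50, steps_per_stage=100)
print(schedule[:3])
```

A smaller `alpha` cools faster, which is exactly the trade-off above: less running time, but a higher risk of freezing in a local minimum.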

Example: Traveling salesman

The Traveling Salesman Problem • $n$ cities • Want to find a path (a permutation of $\{1, \ldots, n\}$) that minimizes our distance function $f$ (the total length of the path).
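
A minimal Python sketch of the scoring function $f$ for this example, under two assumptions not stated on the slides: the cities are points in the plane with Euclidean distance, and the salesman returns to the starting city (drop the wrap-around leg for an open path). Cities are indexed from 0 here, and `tour_length` is a hypothetical name; `math.dist` needs Python 3.8 or later.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]

def tour_length(tour: Sequence[int], cities: List[Point]) -> float:
    """f(tour): total Euclidean length, including the leg back to the start."""
    n = len(tour)
    return sum(math.dist(cities[tour[k]], cities[tour[(k + 1) % n]]) for k in range(n))

# Hypothetical instance: the four corners of a unit square.
cities = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
print(tour_length([0, 1, 2, 3], cities))  # 4.0: around the square
print(tour_length([0, 2, 1, 3], cities))  # 2 + 2*sqrt(2): uses both diagonals
```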

Our Markov Chain • Pick u.a.r. vertices $x_i, x_j$ such that $i < j$ • With some probability, reverse the order of the substring $x_i, \ldots, x_j$ on our path
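
The proposal move can be sketched in a few lines of Python. Here `i` and `j` are read as positions along the path (0-indexed), and the names `reverse_segment` and `propose_reversal` are hypothetical. Reversing the same segment twice restores the path; together with the uniform choice of the pair, this makes the proposal symmetric, which is what the acceptance rule relies on.

```python
import random
from typing import List

def reverse_segment(tour: List[int], i: int, j: int) -> List[int]:
    """Copy of `tour` with the substring tour[i], ..., tour[j] (inclusive) reversed."""
    return tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:]

def propose_reversal(tour: List[int], rng: random.Random) -> List[int]:
    """Pick positions i < j uniformly at random and reverse tour[i..j]."""
    i, j = sorted(rng.sample(range(len(tour)), 2))
    return reverse_segment(tour, i, j)

x = [0, 1, 2, 3, 4, 5]
print(reverse_segment(x, 1, 4))                               # [0, 4, 3, 2, 1, 5]
print(reverse_segment(reverse_segment(x, 1, 4), 1, 4) == x)   # True: the move is its own inverse
```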

Our Markov Chain (cont’d) • Pick u.a.r. vertices $x_i, x_j$ such that $i < j$ • Let $x$ be the current state and let $x'$ be the state obtained by reversing the substring $x_i, \ldots, x_j$ in $x$ • With probability $\min\left\{1, e^{-(f(x') - f(x))/T}\right\}$ transition to $x'$, else do nothing.
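
Putting the pieces together, here is a self-contained Python sketch of this chain run under a cooling schedule. Assumptions not taken from the slides: Euclidean cities, a closed tour, a random starting tour, a hypothetical geometric schedule, and a hypothetical test instance of 8 cities evenly spaced on the unit circle (whose optimal tour visits them in angular order, with length $16 \sin(\pi/8)$). The function names are illustrative.

```python
import math
import random
from typing import List, Tuple

Point = Tuple[float, float]

def tour_length(tour: List[int], cities: List[Point]) -> float:
    """f(x): length of the closed tour (assumption: the salesman returns to the start)."""
    n = len(tour)
    return sum(math.dist(cities[tour[k]], cities[tour[(k + 1) % n]]) for k in range(n))

def metropolis_step(tour: List[int], cities: List[Point], T: float,
                    rng: random.Random) -> List[int]:
    """One step: reverse a random substring, accept w.p. min(1, exp(-(f(x') - f(x))/T))."""
    i, j = sorted(rng.sample(range(len(tour)), 2))       # u.a.r. positions with i < j
    proposal = tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:]
    delta = tour_length(proposal, cities) - tour_length(tour, cities)
    if delta <= 0 or rng.random() < math.exp(-delta / T):
        return proposal                                  # transition to x'
    return tour                                          # else do nothing

def anneal_tsp(cities: List[Point], schedule: List[Tuple[float, int]],
               rng: random.Random) -> List[int]:
    """Run the chain at each (T_i, N_i) of the schedule, starting from a random tour."""
    tour = list(range(len(cities)))
    rng.shuffle(tour)
    for T, N in schedule:
        for _ in range(N):
            tour = metropolis_step(tour, cities, T, rng)
    return tour

# Hypothetical instance: 8 cities evenly spaced on the unit circle.
cities = [(math.cos(2 * math.pi * k / 8), math.sin(2 * math.pi * k / 8)) for k in range(8)]
schedule = [(0.8 ** i, 200) for i in range(30)]          # hypothetical geometric schedule
best = anneal_tsp(cities, schedule, random.Random(42))
print(tour_length(best, cities), 16 * math.sin(math.pi / 8))
```

For clarity this sketch recomputes the whole tour length at every step; since a reversal changes only two edges of the tour, $f(x') - f(x)$ could instead be computed in constant time.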

Sources • “Annealing.” Wikipedia, The Free Encyclopedia, 22 Nov. 2010. Web. 3 Mar. 2011. • Häggström, Olle. Finite Markov Chains and Algorithmic Applications. Cambridge University Press.