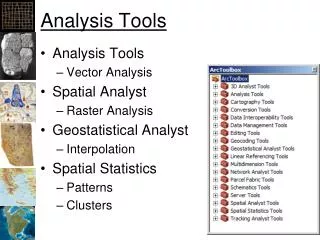

Analysis Tools

This analysis explores essential concepts in algorithm efficiency, focusing on how to compare different data structures, like Red-Black Trees and Hash Tables. We'll examine the importance of operations supported by each structure and how their efficiency can vary based on specific implementations. Additionally, we'll delve into Big-O notation, a critical tool for evaluating algorithm performance, exploring concepts of time complexity and space requirements. By understanding these principles, we can make informed decisions about which algorithms to implement in various applications.


Presentation Transcript

Analysis Tools Briana Morrison

The Big Idea
• How will we compare one data structure with another?
• How do I know when to use a Red-Black Tree versus a Hash Table?
• We must know something about the operations that each supports, and the efficiency of those operations given a specific implementation.
Analysis of Algorithms

Analysis of Algorithms
Input → Algorithm → Output
An algorithm is a step-by-step procedure for solving a problem in a finite amount of time.

Efficiency of Algorithms
By comparing the efficiency of algorithms, we have one basis of comparison that can help us determine the best technique to use in a particular situation.
Travel: by foot, by car, by train, by airplane

Analysis of Algorithms
• The measure of the amount of work an algorithm performs (time), or
• the space requirements of an implementation (space)
Complexity: the order of magnitude is a function of the number of data items.

Running Time (§3.1)
• Most algorithms transform input objects into output objects.
• The running time of an algorithm typically grows with the input size.
• Average-case time is often difficult to determine.
• We focus on the worst-case running time.
  • Easier to analyze
  • Crucial to applications such as games, finance and robotics

Theoretical Analysis
• Uses a high-level description of the algorithm instead of an implementation
• Characterizes running time as a function of the input size, $n$
• Takes into account all possible inputs
• Allows us to evaluate the speed of an algorithm independent of the hardware/software environment

Big-O notation
Big-O notation provides a machine-independent means for determining the efficiency of an algorithm.
For the selection sort, the number of comparisons is $T(n) = n^2/2 - n/2$.
For $n = 100$: $T(100) = 100^2/2 - 100/2 = 10000/2 - 100/2 = 5{,}000 - 50 = 4{,}950$
The entire expression is called the "Big-O" measure for the algorithm.
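The comparison count quoted for selection sort can be checked by instrumenting a straightforward implementation. The following is a minimal sketch, not the presenter's code; the function names `selection_sort_comparisons` and `predicted_comparisons` are illustrative assumptions.

```python
def selection_sort_comparisons(items):
    """Sort a copy of items with selection sort.

    Returns (sorted_list, comparison_count). The names here are
    illustrative and do not come from the slides.
    """
    data = list(items)
    comparisons = 0
    n = len(data)
    for i in range(n - 1):
        smallest = i
        # Scan the unsorted tail for the smallest remaining element.
        for j in range(i + 1, n):
            comparisons += 1
            if data[j] < data[smallest]:
                smallest = j
        data[i], data[smallest] = data[smallest], data[i]
    return data, comparisons


def predicted_comparisons(n):
    """T(n) = n^2/2 - n/2, computed with integer arithmetic."""
    return (n * n - n) // 2
```

For a list of 100 items the counter and the formula should agree, whatever the initial order, because selection sort always scans the whole unsorted tail.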

Big O
• Big-O notation measures the efficiency of an algorithm by estimating the number of certain operations that the algorithm must perform.
• For searching and sorting algorithms, the operation is data comparison.
• The Big-O measure is very useful for selecting among competing algorithms.

Big-O Notation
• Algorithm A requires time proportional to a function $F(N)$ given a reasonable implementation and computer.
• Big-O notation is then written as $O(F(N))$, where $N$ is the number of data items.
• You can ignore constants: $O(5n^2)$ is $O(n^2)$
• You can ignore low-order terms: $O(n^3 + n^2 + n)$ is $O(n^3)$

Growth Rates
• Growth rates of functions:
  • Linear: $n$
  • Quadratic: $n^2$
  • Cubic: $n^3$
• In a log-log chart, the slope of the line corresponds to the growth rate of the function.
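The log-log remark can be made concrete: for a power function, the slope between any two points on a log-log chart equals the exponent. Here is a small sketch under that assumption; `loglog_slope` is a hypothetical helper, not something named in the slides.

```python
import math


def loglog_slope(f, n1, n2):
    """Slope of f between n1 and n2 when both axes are logarithmic.

    Hypothetical helper for illustration: for f(n) = n**k this is k.
    """
    rise = math.log(f(n2)) - math.log(f(n1))
    run = math.log(n2) - math.log(n1)
    return rise / run


linear_slope = loglog_slope(lambda n: n, 10, 1000)
quadratic_slope = loglog_slope(lambda n: n ** 2, 10, 1000)
cubic_slope = loglog_slope(lambda n: n ** 3, 10, 1000)
```

The three slopes come out as approximately 1, 2 and 3, up to floating-point rounding.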

Constant Factors
• The growth rate is not affected by
  • constant factors or
  • lower-order terms
• Examples
  • $10^2 n + 10^5$ is a linear function
  • $10^5 n^2 + 10^8 n$ is a quadratic function

Big-Oh Notation (§3.5)
• Given functions $f(n)$ and $g(n)$, we say that $f(n)$ is $O(g(n))$ if there are positive constants $c$ and $n_0$ such that $f(n) \le c\,g(n)$ for $n \ge n_0$
• Example: $2n + 10$ is $O(n)$
  • $2n + 10 \le cn$
  • $(c - 2)\,n \ge 10$
  • $n \ge 10/(c - 2)$
  • Pick $c = 3$ and $n_0 = 10$

Big-Oh Example
• Example: the function $n^2$ is not $O(n)$
  • $n^2 \le cn$
  • $n \le c$
  • The above inequality cannot be satisfied since $c$ must be a constant
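The argument that no constant works for $n^2$ versus $n$ can be illustrated numerically. The sketch below searches for the first input where a proposed bound fails; `first_violation` is a hypothetical helper, and a finite scan like this only illustrates the definition rather than proving anything.

```python
def first_violation(f, g, c, n0=1, n_max=1_000_000):
    """Return the first n in [n0, n_max] with f(n) > c * g(n), or None.

    Hypothetical helper: a finite scan, so None means "no violation
    found up to n_max", not a proof that the bound holds forever.
    """
    for n in range(n0, n_max + 1):
        if f(n) > c * g(n):
            return n
    return None


def square(n):
    return n * n


def identity(n):
    return n
```

Whatever constant `c` is chosen, `first_violation(square, identity, c)` returns `c + 1`, which mirrors the inequality $n \le c$ failing as soon as $n$ exceeds $c$. By contrast, scanning $2n + 10$ against $3n$ from $n_0 = 10$ finds no violation.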

More Big-Oh Examples
• $7n - 2$ is $O(n)$
  need $c > 0$ and $n_0 \ge 1$ such that $7n - 2 \le c \cdot n$ for $n \ge n_0$
  this is true for $c = 7$ and $n_0 = 1$
• $3n^3 + 20n^2 + 5$ is $O(n^3)$
  need $c > 0$ and $n_0 \ge 1$ such that $3n^3 + 20n^2 + 5 \le c \cdot n^3$ for $n \ge n_0$
  this is true for $c = 4$ and $n_0 = 21$
• $3 \log n + \log \log n$ is $O(\log n)$
  need $c > 0$ and $n_0 \ge 1$ such that $3 \log n + \log \log n \le c \cdot \log n$ for $n \ge n_0$
  this is true for $c = 4$ and $n_0 = 2$
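The constants quoted in these examples can be recovered by a simple search. The sketch below scans downward from a cutoff to find the smallest threshold from which the bound holds; `smallest_n0` and the cutoff `n_max` are assumptions made for illustration, and base-2 logarithms are assumed for the third example.

```python
import math


def smallest_n0(f, g, c, n_min=1, n_max=10_000):
    """Smallest n0 >= n_min with f(n) <= c * g(n) for all n in [n0, n_max].

    Hypothetical helper; returns None if the bound already fails at n_max.
    Only a finite range is checked, so this is evidence, not a proof.
    """
    n0 = None
    for n in range(n_max, n_min - 1, -1):
        if f(n) <= c * g(n):
            n0 = n
        else:
            break
    return n0


linear_n0 = smallest_n0(lambda n: 7 * n - 2, lambda n: n, c=7)
cubic_n0 = smallest_n0(lambda n: 3 * n**3 + 20 * n**2 + 5, lambda n: n**3, c=4)
# log log n is only defined for n > 1, so this search starts at n = 2.
log_n0 = smallest_n0(
    lambda n: 3 * math.log2(n) + math.log2(math.log2(n)),
    lambda n: math.log2(n),
    c=4,
    n_min=2,
)
```

The search reproduces the thresholds 1, 21 and 2 given in the examples. For the cubic case the bound with $c = 4$ fails at $n = 20$, which is why 21 is the smallest workable $n_0$ for that constant.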

Big-Oh Rules
• If $f(n)$ is a polynomial of degree $d$, then $f(n)$ is $O(n^d)$, i.e.,
  • Drop lower-order terms
  • Drop constant factors
• Use the smallest possible class of functions
  • Say "$2n$ is $O(n)$" instead of "$2n$ is $O(n^2)$"
• Use the simplest expression of the class
  • Say "$3n + 5$ is $O(n)$" instead of "$3n + 5$ is $O(3n)$"

Growth Rates
• Constant, $O(1)$: print first item
• Linear, $O(n)$: print list of items
• Polynomial, $O(n^2)$: print table of items
• Logarithmic, $O(\log n)$: binary search
• Exponential, $O(2^n)$: listing all the subsets of a set
• Factorial, $O(n!)$: traveling salesperson problem
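The last two rows grow so quickly that they are easiest to appreciate by counting. The sketch below enumerates every subset and every ordering of a small collection; reading the traveling salesperson entry as a brute-force search over all orderings of the cities is an assumption here, and the function names are illustrative.

```python
from itertools import combinations, permutations


def all_subsets(items):
    """Return every subset of items as a tuple: 2**n of them."""
    items = list(items)
    subsets = []
    for size in range(len(items) + 1):
        subsets.extend(combinations(items, size))
    return subsets


def all_orderings(items):
    """Return every ordering of items: n! of them.

    A brute-force tour search would examine orderings like these
    (an assumption about what the slide's example refers to).
    """
    return list(permutations(items))
```

With only four items there are already 16 subsets and 24 orderings; with ten items the counts are 1,024 and 3,628,800.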

Constant Time Algorithms
An algorithm is $O(1)$ when its running time is independent of the number of data items. The algorithm runs in constant time.
For example, storing an element involves a simple assignment statement and thus has efficiency $O(1)$.

Linear Time Algorithms
An algorithm is $O(n)$ when its running time is proportional to the size of the list.
When the number of elements doubles, the number of operations doubles.

Polynomial Algorithms
• Algorithms with running time $O(n^2)$ are quadratic.
  • Practical only for relatively small values of $n$.
  • Whenever $n$ doubles, the running time of the algorithm increases by a factor of 4.
• Algorithms with running time $O(n^3)$ are cubic.
  • Efficiency is generally poor; doubling the size of $n$ increases the running time eight-fold.
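The doubling behaviour described for linear, quadratic and cubic running times can be observed by counting loop iterations instead of measuring wall-clock time. This is a minimal sketch; the three counting functions are illustrative stand-ins, not algorithms from the slides.

```python
def count_linear(n):
    """Number of basic steps in a single loop over n items."""
    ops = 0
    for _ in range(n):
        ops += 1
    return ops


def count_quadratic(n):
    """Number of basic steps in two nested loops over n items."""
    ops = 0
    for _ in range(n):
        for _ in range(n):
            ops += 1
    return ops


def count_cubic(n):
    """Number of basic steps in three nested loops over n items."""
    ops = 0
    for _ in range(n):
        for _ in range(n):
            for _ in range(n):
                ops += 1
    return ops


def doubling_ratio(counter, n):
    """How much the step count grows when n doubles."""
    return counter(2 * n) / counter(n)
```

Calling `doubling_ratio` with `n = 10` gives 2.0 for the linear counter, 4.0 for the quadratic one and 8.0 for the cubic one.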

Exponential Algorithms

Logarithmic Time Algorithms
The logarithm of $n$, base 2, is commonly used when analyzing computer algorithms.
Ex. $\log_2(2) = 1$, $\log_2(75) = 6.2288$
When compared to the functions $n$ and $n^2$, the function $\log_2 n$ grows very slowly.
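Binary search is the standard example of logarithmic growth: each comparison halves the remaining range, so the number of loop iterations never exceeds $\lfloor \log_2 n \rfloor + 1$. The sketch below counts those iterations; `binary_search_steps` is a hypothetical name, and the implementation is a generic one rather than code from the presentation.

```python
import math


def binary_search_steps(sorted_items, target):
    """Binary search that also counts loop iterations.

    Returns (index, steps), with index == -1 when target is absent.
    """
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return -1, steps


def worst_case_steps(n):
    """Largest step count over all successful searches in range(n)."""
    items = list(range(n))
    return max(binary_search_steps(items, t)[1] for t in items)
```

For a list of 75 items the worst case is 7 iterations, matching $\lfloor \log_2 75 \rfloor + 1$ with $\log_2 75 \approx 6.2288$. Growing the list to 1,024 items raises the worst case only to 11.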