Approximate String Matching



Approximate String Matching A Guided Tour to Approximate String Matching Gonzalo Navarro Justin Wiseman

Outline: • Definition of approximate string matching (ASM) • Applications of ASM • Algorithms • Conclusion

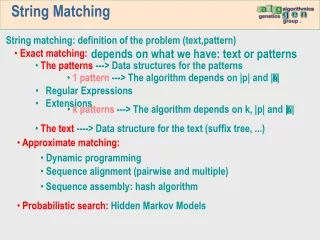

Approximate string matching • Approximate string matching is the process of finding strings that match a pattern approximately, that is, while allowing for a limited number of errors.

The edit distance • Strings are compared based on how close they are • This closeness is measured by the edit distance • The edit distance is the minimum number of operations required to transform one string into the other

Levenshtein / edit distance • Named after Vladimir Levenshtein, who introduced this distance in 1965 • Accounts for three basic operations: • Insertions, deletions, and replacements • In the simplified version, all operations have a cost of 1 • Example: “mash” and “march” have an edit distance of 2 (replace “s” with “r”, then insert “c”)

Other distance functions • Hamming distance: • Allows only substitutions, each costing one, so the two strings must have equal length • Episode distance: • Allows only insertions, each costing one, so the distance from x to y is either |y| − |x| or infinite • Longest Common Subsequence distance: • Allows only insertions and deletions, each costing one
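To make the differences concrete, here is a minimal Python sketch of the three restricted distances, assuming unit costs throughout. The function names `hamming`, `episode`, and `lcs_distance` are illustrative, and the LCS distance is computed through the usual identity |x| + |y| − 2·LCS(x, y).

```python
import math


def hamming(x: str, y: str) -> int:
    # Substitutions only, so both strings must have the same length.
    if len(x) != len(y):
        raise ValueError("Hamming distance needs equal-length strings")
    return sum(a != b for a, b in zip(x, y))


def episode(x: str, y: str) -> float:
    # Insertions only: x can be turned into y exactly when x is a
    # subsequence of y, and then len(y) - len(x) insertions are needed.
    remaining = iter(y)
    if all(ch in remaining for ch in x):   # `in` consumes the iterator
        return len(y) - len(x)
    return math.inf


def lcs_distance(x: str, y: str) -> int:
    # Insertions and deletions only: |x| + |y| - 2 * LCS(x, y).
    prev = [0] * (len(y) + 1)              # LCS lengths for the previous row
    for a in x:
        cur = [0]
        for j, b in enumerate(y, start=1):
            cur.append(prev[j - 1] + 1 if a == b else max(prev[j], cur[j - 1]))
        prev = cur
    return len(x) + len(y) - 2 * prev[-1]


print(hamming("karolin", "kathrin"))   # 3
print(episode("mch", "march"))         # 2
print(lcs_distance("mash", "march"))   # 3
```

Note that “mash” and “march” are 3 apart under the LCS distance but only 2 under Levenshtein: without replacements, turning “s” into “r” costs a deletion plus an insertion.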

Outline: • What is approximate string matching (ASM)? • What are the applications of ASM? • Algorithms • Conclusion

Applications • Computational biology • Signal processing • Information retrieval

Computational biology • DNA is composed of Adenine, Cytosine, Guanine, and Thymine (A, C, G, T) • One can think of the set {A, C, G, T} as the alphabet for DNA sequences • Used to find specific or similar DNA sequences • Knowing how different two sequences are can give insight into the evolutionary process.

Signal processing • Used heavily in speech recognition software • Error correction of received signals • Multimedia and song recognition

Information Retrieval • Spell checkers • Search engines • Web searches (Google) • Personal files (agrep for Unix) • Searching texts with errors, such as digitized books • Handwriting recognition

Outline: • What is approximate string matching (ASM)? • What are the applications of ASM? • Algorithms • Conclusion

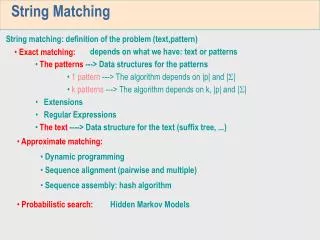

Algorithms • Definitions • Dynamic Programming algorithms • Automatons • Bit-parallelism • Filters

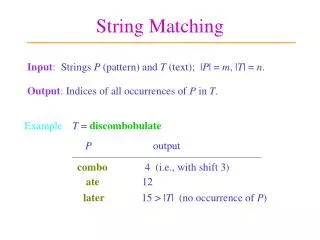

Definitions • Let $\Sigma$ be a finite alphabet of size $|\Sigma| = \sigma$ • Let $T \in \Sigma^*$ be a text of length $n = |T|$ • Let $P \in \Sigma^*$ be a pattern of length $m = |P|$ • Let $k \in \mathbb{R}$ be the maximum error allowed • Let $d : \Sigma^* \times \Sigma^* \to \mathbb{R}$ be a distance function • The problem: given T, P, k, and $d(\cdot)$, return the set of all text positions j such that there exists i with $d(P, T_{i..j}) \le k$
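The definition can be transcribed almost literally into code. This sketch assumes unit-cost edit distance for d and 1-based end positions j, as in the slides; `naive_search` is a hypothetical helper name, and because it tries every start i it is only useful as a reference for checking faster algorithms.

```python
def edit_distance(x: str, y: str) -> int:
    # Unit-cost Levenshtein distance (insert, delete, replace).
    prev = list(range(len(y) + 1))
    for i, a in enumerate(x, start=1):
        cur = [i]
        for j, b in enumerate(y, start=1):
            cur.append(min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + (a != b)))
        prev = cur
    return prev[-1]


def naive_search(P: str, T: str, k: int, d=edit_distance) -> list[int]:
    # Report every 1-based end position j for which some substring T[i..j]
    # (possibly empty) satisfies d(P, T[i..j]) <= k. Tries every start i,
    # so it is far too slow for real texts.
    ends = []
    for j in range(1, len(T) + 1):
        if any(d(P, T[i:j]) <= k for i in range(j + 1)):
            ends.append(j)
    return ends


print(naive_search("survey", "surgery", 2))   # [5, 6, 7]
```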

Algorithms • Definitions • Dynamic Programming algorithms • Automatons • Bit-parallelism • Filters

Dynamic Programming • The oldest approach to approximate string matching • Not very efficient • Runtime of $O(|x||y|)$ • However, space can be kept to $O(\min(|x|, |y|))$ by storing only one column (or row) at a time • Most flexible when adapting to different distance functions
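A minimal sketch of the space bound, assuming unit costs: each DP row depends only on the previous one, so two rows over the shorter string are enough. The function name `edit_distance_linear_space` is illustrative.

```python
def edit_distance_linear_space(x: str, y: str) -> int:
    # Time stays O(|x||y|), but only two rows of length min(|x|, |y|) + 1
    # are alive at once, so extra space is O(min(|x|, |y|)).
    if len(y) > len(x):
        x, y = y, x                       # unit-cost edit distance is symmetric
    prev = list(range(len(y) + 1))        # row 0: C[0][j] = j
    for i, a in enumerate(x, start=1):
        cur = [i] + [0] * len(y)          # C[i][0] = i
        for j, b in enumerate(y, start=1):
            cur[j] = min(prev[j] + 1,             # delete x_i
                         cur[j - 1] + 1,          # insert y_j
                         prev[j - 1] + (a != b))  # keep or replace
        prev = cur
    return prev[-1]


print(edit_distance_linear_space("mash", "march"))  # 2
```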

Computing the edit distance • To compute the edit distance ed(x, y): • Create a matrix $C_{0..|x|,\,0..|y|}$, where $C_{i,j}$ is the minimum number of operations needed to convert $x_{1..i}$ into $y_{1..j}$ • $C_{i,0} = i$ • $C_{0,j} = j$ • $C_{i,j} = C_{i-1,j-1}$ if $x_i = y_j$, else $1 + \min(C_{i-1,j}, C_{i,j-1}, C_{i-1,j-1})$ • The result is $ed(x, y) = C_{|x|,|y|}$

Edit distance example • $C_{i,0} = i$ • $C_{0,j} = j$ • If $x_i = y_j$ then $C_{i,j} = C_{i-1,j-1}$, else $C_{i,j} = 1 + \min(C_{i-1,j}, C_{i,j-1}, C_{i-1,j-1})$
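The recurrence translates directly into a full-matrix Python sketch (the function name `edit_distance_matrix` is hypothetical). Printing the matrix for the “mash”/“march” example shows the bottom-right cell holding the edit distance; rows are prefixes of “mash”, columns prefixes of “march”.

```python
def edit_distance_matrix(x: str, y: str) -> list[list[int]]:
    # C[i][j] = minimum number of operations to turn x[:i] into y[:j].
    C = [[0] * (len(y) + 1) for _ in range(len(x) + 1)]
    for i in range(len(x) + 1):
        C[i][0] = i                      # delete all of x[:i]
    for j in range(len(y) + 1):
        C[0][j] = j                      # insert all of y[:j]
    for i in range(1, len(x) + 1):
        for j in range(1, len(y) + 1):
            if x[i - 1] == y[j - 1]:     # Python strings are 0-indexed
                C[i][j] = C[i - 1][j - 1]
            else:
                C[i][j] = 1 + min(C[i - 1][j], C[i][j - 1], C[i - 1][j - 1])
    return C


C = edit_distance_matrix("mash", "march")
for row in C:
    print(row)
# [0, 1, 2, 3, 4, 5]
# [1, 0, 1, 2, 3, 4]
# [2, 1, 0, 1, 2, 3]
# [3, 2, 1, 1, 2, 3]
# [4, 3, 2, 2, 2, 2]
print("ed =", C[-1][-1])   # ed = 2
```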

Text searching • The previous algorithm can be converted to search a text for a given pattern with few changes • Let x = Pattern and y = Text • Set $C_{0,j} = 0$ so that any text position can be the start of a match • $C_{i,j} = C_{i-1,j-1}$ if $P_i = T_j$, else $1 + \min(C_{i-1,j}, C_{i,j-1}, C_{i-1,j-1})$ • A match ending at text position j is reported whenever $C_{m,j} \le k$

Text search example • In plain English: if the pattern and text characters at the current cell are equal, the cell takes the value of its top-left neighbor. If they differ, the cell is one plus the minimum of its left, top, and top-left neighbors.
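A sketch of the text-search variant, assuming the pattern indexes the rows and the text the columns, with k the error limit. Only the previous column is kept, and a match ending at 1-based text position j is reported when the last cell of column j is at most k. The name `approx_search` is hypothetical.

```python
def approx_search(P: str, T: str, k: int) -> list[int]:
    # Column-by-column DP over the text. Column j holds C[i][j] for
    # i = 0..m; row 0 is always 0 so an occurrence may start anywhere.
    m = len(P)
    col = list(range(m + 1))            # column 0: C[i][0] = i
    ends = []
    for j, t in enumerate(T, start=1):
        new = [0] * (m + 1)             # C[0][j] = 0
        for i in range(1, m + 1):
            if P[i - 1] == t:
                new[i] = col[i - 1]     # top-left (diagonal) value
            else:
                # left = col[i], top = new[i - 1], top-left = col[i - 1]
                new[i] = 1 + min(col[i], new[i - 1], col[i - 1])
        col = new
        if col[m] <= k:                 # whole pattern matched with <= k errors
            ends.append(j)
    return ends


print(approx_search("survey", "surgery", 2))   # [5, 6, 7]
```

With k = 1 the same call finds nothing, since every substring of “surgery” is at least two edits away from “survey”.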

Improvements • The algorithm shown above was the first of these • Many later DP-based algorithms improved on its search time • In 1992, Chang and Lampe produced a new algorithm called “column partitioning”, with an average search time of $O(kn/\sqrt{\sigma})$, where k = errors, n = text length, and σ = alphabet size

Algorithms • Definitions • Dynamic Programming algorithms • Automatons • Bit-parallelism • Filters

Automatons for approx. search • Model the search with a nondeterministic finite automaton (NFA) • 1985: Esko Ukkonen proposes a deterministic form (DFA) • Fast: the deterministic form has $O(n)$ worst-case search time • Large: the space required by the DFA grows exponentially with the pattern length

NFA example with k = 2: matching the pattern “survey” against the text “surgery”
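The NFA can be simulated directly by tracking its set of active states. In this sketch (the state encoding and the name `nfa_search` are assumptions, not taken from the slides), state (i, e) means that i pattern characters have been matched with e errors; matches move right, insertions move down, substitutions move diagonally on a text character, and deletions move diagonally without consuming text (ε-moves). The initial state keeps a self-loop so a match can begin at any text position.

```python
def nfa_search(P: str, T: str, k: int) -> list[int]:
    # State (i, e): the first i pattern characters matched with e errors.
    # Accepting states are (m, e) for any e <= k.
    m = len(P)

    def closure(states: set) -> set:
        # Epsilon moves model deleting a pattern character: (i, e) -> (i+1, e+1).
        result, stack = set(states), list(states)
        while stack:
            i, e = stack.pop()
            if i < m and e < k and (i + 1, e + 1) not in result:
                result.add((i + 1, e + 1))
                stack.append((i + 1, e + 1))
        return result

    active = closure({(0, 0)})
    ends = []
    for j, c in enumerate(T, start=1):
        nxt = {(0, 0)}                       # self-loop: a match may start anywhere
        for i, e in active:
            if i < m and P[i] == c:
                nxt.add((i + 1, e))          # match: advance with no new error
            if e < k:
                nxt.add((i, e + 1))          # insertion: extra text character
                if i < m:
                    nxt.add((i + 1, e + 1))  # substitution
        active = closure(nxt)
        if any(i == m for i, _ in active):
            ends.append(j)
    return ends


print(nfa_search("survey", "surgery", 2))  # [5, 6, 7]
```

On the example it accepts after reading text positions 5, 6, and 7, the same end positions the dynamic programming search reports.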

Improvements • 1996: Kurtz proposes building the DFA lazily, constructing only the states actually reached while scanning the text • Space requirements are reduced to $O(mn)$
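One way to picture lazy construction, assuming DFA states are DP columns whose entries are capped at k + 1 (a standard way to keep the set of states finite): compute each transition only the first time the scan needs it, then cache it. This is an illustrative sketch, not Kurtz's implementation; `lazy_dfa_search` and `delta` are hypothetical names.

```python
def lazy_dfa_search(P: str, T: str, k: int) -> tuple[list[int], int]:
    # Each DFA state is a DP column (C[0][j], ..., C[m][j]) with values capped
    # at k + 1: a cell above k only ever feeds cells above k, so its exact
    # value does not matter. Transitions are built on demand and cached.
    m, cap = len(P), k + 1
    state = tuple(min(i, cap) for i in range(m + 1))   # initial column
    delta: dict = {}                                   # (state, char) -> state
    ends = []
    for j, t in enumerate(T, start=1):
        key = (state, t)
        if key not in delta:                           # build lazily
            new = [0] * (m + 1)
            for i in range(1, m + 1):
                if P[i - 1] == t:
                    new[i] = state[i - 1]
                else:
                    new[i] = min(cap, 1 + min(state[i], new[i - 1], state[i - 1]))
            delta[key] = tuple(new)
        state = delta[key]
        if state[m] <= k:
            ends.append(j)
    return ends, len(delta)


ends, built = lazy_dfa_search("survey", "surgery " * 100, 2)
print(ends[:3])   # [5, 6, 7]
print(built)      # number of transitions actually constructed
```

On a repetitive text such as `"surgery " * 100`, only a handful of transitions are ever built, far fewer than the 800 characters scanned.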

Algorithms • Definitions • Dynamic Programming algorithms • Automatons • Bit-parallelism • Filters

Bit-parallelism • Takes advantage of the parallelism inherent in bitwise operations: one instruction processes all the bits of a computer word at once • Changes an existing algorithm to operate at the bit level • The number of operations can be reduced by up to a factor of w, where w is the number of bits in a machine word

Shift-Or • Was the first bit-parallel algorithm • Parallelizes the operation of an NFA that tries to match the pattern exactly • NFA has m+1 states

Builds a table B that stores a bit mask for every character c • In the mask B[c], bit $b_i$ is set if and only if $P_i = c$ • The search state is kept in a machine word $D = d_m \ldots d_1$ • $d_i$ is 1 whenever $P_{1..i}$ matches the end of the text scanned so far • A match is reported whenever $d_m = 1$

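To start, D is set to $0^m$, since no prefix of the pattern has been matched yet • Upon reading each new text character $T_j$, D is updated with $D' \leftarrow ((D \ll 1) \mid 0^{m-1}1) \,\&\, B[T_j]$ • Each bit acts as one NFA state: $d_i$ becomes 1 only if $d_{i-1}$ was 1 before (or i = 1) and $T_j = P_i$, so the final state is reached only after every previous state has been reached in turn • The original Shift-Or stores the complement of these bits, in which case D starts as $1^m$ and a match is signaled by $d_m = 0$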
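A minimal Python sketch of this update, using a Python integer as the machine word (so, unlike real hardware, m is not limited to w here). It uses the formulation where a 1 bit means “active” and D starts at $0^m$; the name `shift_and` is hypothetical, chosen because the original Shift-Or works on the complemented bits.

```python
def shift_and(P: str, T: str) -> list[int]:
    # Exact bit-parallel search. Bit i-1 of D stands for d_i: it is 1 when
    # P[1..i] matches the end of the text read so far.
    m = len(P)
    B: dict[str, int] = {}
    for i, c in enumerate(P):
        B[c] = B.get(c, 0) | (1 << i)   # bit i set iff P[i] == c (0-indexed)
    D = 0                               # 0^m: nothing matched yet
    high = 1 << (m - 1)                 # mask selecting d_m
    ends = []
    for j, t in enumerate(T, start=1):
        # Shift every active state forward, re-activate state 1, keep only
        # the states whose pattern character equals t.
        D = ((D << 1) | 1) & B.get(t, 0)
        if D & high:
            ends.append(j)              # 1-based end position of a match
    return ends


print(shift_and("survey", "a survey of surveys"))   # [8, 18]
```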

Algorithms • Definitions • Dynamic Programming algorithms • Automatons • Bit-parallelism • Filters

Filters • Originating in the 1990s • Filter algorithms try to discard large sections of text in which the pattern cannot occur • A different kind of algorithm is needed to check the portions of text that are not filtered out

Filters, conceptually • Filter algorithms are really exact pattern matchers • Exact pattern matching is much quicker • They break the original pattern into parts and search the text for those exact parts • Example from Navarro: if “sur” and “vey” don’t appear in a section of text, then “survey” can’t appear there with one error either

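Filters • Must be paired with a non-filter algorithm, such as one of the dynamic programming algorithms, to verify the candidate areas • Performance depends on the number of errors allowed: filtering works well only when k is small relative to m • For low error levels, they are the fastest of the algorithms surveyed • Best theoretical average cost: $O(n(k + \log_\sigma m)/m)$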
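A sketch of a complete filter plus verifier, assuming unit-cost edit distance: split P into k + 1 pieces, find each piece exactly with Python's `str.find`, and run the DP search only on the text window around each hit. This is the simple partition-into-(k + 1)-pieces filter, not the hierarchical verification method; `filtered_search` and `approx_search` are hypothetical names.

```python
def approx_search(P: str, T: str, k: int) -> list[int]:
    # Verification step: DP text search returning 1-based end positions.
    m = len(P)
    col = list(range(m + 1))
    ends = []
    for j, t in enumerate(T, start=1):
        new = [0] * (m + 1)
        for i in range(1, m + 1):
            if P[i - 1] == t:
                new[i] = col[i - 1]
            else:
                new[i] = 1 + min(col[i], new[i - 1], col[i - 1])
        col = new
        if col[m] <= k:
            ends.append(j)
    return ends


def filtered_search(P: str, T: str, k: int) -> list[int]:
    # Filter: cut P into k + 1 pieces; by the pigeonhole principle any
    # occurrence with <= k errors contains at least one piece unchanged.
    m, n = len(P), len(T)
    if k >= m:                        # pieces would be empty: nothing to filter
        return approx_search(P, T, k)
    parts = k + 1
    bounds = [m * p // parts for p in range(parts + 1)]
    ends = set()
    for p in range(parts):
        offset, piece = bounds[p], P[bounds[p]:bounds[p + 1]]
        pos = T.find(piece)           # exact search for the piece
        while pos != -1:
            # Any occurrence containing this piece lies inside T[lo:hi].
            lo = max(0, pos - offset - k)
            hi = min(n, pos - offset + m + k)
            ends.update(lo + j for j in approx_search(P, T[lo:hi], k))
            pos = T.find(piece, pos + 1)
    return sorted(ends)


print(filtered_search("survey", "surgery", 2))   # [5, 6, 7]
```

Here “survey” with k = 2 is cut into “su”, “rv”, and “ey”; only “su” occurs in “surgery”, so the verifier runs on a single window.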

Hierarchical verification method • Created by Navarro and Baeza-Yates in 1998 • The pattern is split recursively into halves, and each half is searched with ⌊k/2⌋ errors, where k is the error limit of its parent piece • In the example, when searching the text “xxxbbxxxxxx”, the leaf piece “bbb” returns a match with one error • Checking the parent piece shows that there is no match, so the whole pattern never needs to be verified there

Outline: • What is approximate string matching (ASM)? • What are the applications of ASM? • Algorithms • Conclusion

Conclusion • Generally, combining a fast filter with a fast verification algorithm is the fastest approach overall • Among non-filtering algorithms, an NFA bit-parallelized by diagonals is the fastest • Approximate string matching has greatly influenced the field of computer science and will play an important role in future technology.

References • “A Guided Tour to Approximate String Matching”, Gonzalo Navarro • “Implementation of a Bit-parallel Approximate String Matching Algorithm”, Mikael Onsjo and Osamu Watanabe • “A Partial Deterministic Automaton for Approximate String Matching”, Gonzalo Navarro • http://en.wikipedia.org/wiki/Approximate_string_matching
