Performance, Availability and Cost Analysis for Cloud Based Services Rahul Ghosh

840 likes | 864 Vues

This study examines performability and resiliency of IaaS cloud services, challenging aspects, analysis methods, and future research in cloud computing.

Performance, Availability and Cost Analysis for Cloud Based Services Rahul Ghosh

E N D

Presentation Transcript

Performance, Availability and Cost Analysis for Cloud Based Services Rahul Ghosh Preliminary Examination, Dept. of ECE Duke University Committee Members: Dr. Kishor S. Trivedi(Chair) Dr. ShivnathBabu, Dr. John Board, Dr. Vijay Naik, Dr. Loren Nolte September 23, 2010

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency for pure performance measures • Future Research • Conclusions

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency for pure performance measures • Future Research • Conclusions

Definition • Cloud computing is a model of Internet-based computing • Definition provided by National Institute of Standards and Technology (NIST): • “Cloud computing is a model for enabling convenient, • on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.” • Source: P. Mell and T. Grance, “The NIST Definition of Cloud Computing”, October 7, 2009

Definition • Cloud computing is a model of Internet-based computing • Definition provided by National Institute of Standards and Technology (NIST): • “Cloud computing is a model for enabling convenient, • on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.” • Source: P. Mell and T. Grance, “The NIST Definition of Cloud Computing”, October 7, 2009

Key characteristics • On-demand self-service: • Provisioning of computing capabilities, without human interactions • Resource pooling: • Shared physical and virtualized environment • Rapid elasticity: • Through standardization and automation, quick scaling at any time • Metered Service: • Pay-as-you-go model of computing • Source: P. Mell and T. Grance, “The NIST Definition of Cloud Computing”, October 7, 2009

Service models • Infrastructure-as-a-Service (IaaS) Cloud: • Examples: Amazon EC2, IBM Smart Business Development and Test Cloud • Platform-as-a-Service (PaaS) Cloud: • Examples: Micorsoft Windows Azure, Google AppEngine • Software-as-a-Service (SaaS) Cloud: • Examples: Gmail, Google Docs

Deployment models • Private Cloud: • Cloud infrastructure solely for an organization • Managed by the organization or third party • May exist on premise or off-premise • Public Cloud: • Cloud infrastructure available for use for general users • Owned by an organization providing cloud services • Hybrid Cloud: • - Composition of two or more clouds (private or public)

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency for pure performance measures • Future Research • Conclusions

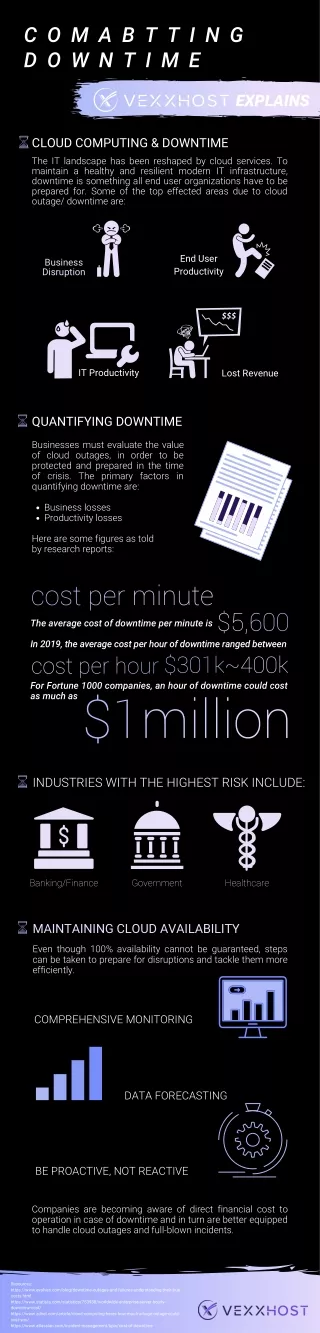

Key challenges • Two critical obstacles of a cloud: • Service (un)availability and performance unpredictability • Large number of parameters can affect performance and availability • Nature of workload (e.g., arrival rates, service rates) • Failure characteristics (e.g., failure rates, repair rates, modes of recovery) • Types of physical infrastructure (e.g., number of servers, number of cores per server, RAM and local storage per server, configuration of servers, network configurations) • Characteristics of virtualization infrastructures (VM placement, VM resource allocation and deployment) • Characteristics of different management and automation tools Performance and availability assessments are difficult!

Common approaches • Measurement-based evaluation: • Appealing because of high accuracy • Expensive to investigate all variations and configurations • Time consuming to observe enough events (e.g., failure events) to get statistically significant results • Lacks repeatability because of sheer scale of cloud • Discrete-event simulation models: • Provides reasonable fidelity but expensive to investigate many alternatives with statistically accurate results • Analytic models: • -Lower relative cost of solving the models • -May become intractable for a complex real sized cloud • -Simplifying the model results in loss of fidelity

Thesis goals • Developing a comprehensive modeling approach for joint analysis of availability and performance of cloud services • Developed models should have high fidelity to capture all the variations and configuration details • Proposed models need to be tractable and scalable • Applying these models to solve cloud design and operation related problems

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency of pure performance measures • Future Research • Conclusions

Key problems of interest: Characterize cloud service as a function of arrival rate, available capacity, service requirements, and failure properties Apply these characteristics in SLA analysis and management, admission control, cloud capacity planning, cloud economics Approach: We use interacting stochastic sub-models based approach Lower cost of solving the models while covering large parameter space Our approach is tractable and scalable Two key service quality measures: (1) service availability and (2) provisioning response delay Introduction These service quality measures are also performability measures.

Novelty of our approach • Single monolithic model vs. interacting sub-models approach • Even with a simple case of 6 physical machines and 1 virtual machine per physical machine, a monolithic model will have 126720 states. • In contrast, our approach of interacting sub-models has only 41 states. Clearly, for a real cloud, a naïve modeling approach will lead to very large analytical model. Solution of such model is practically impossible. Interacting sub-models approach is scalable, tractable and of high fidelity. Also, adding a new feature in an interacting sub-models approach, does not require reconstruction of the entire model. What are the different sub-models? How do they interact?

Main Assumptions All requests are homogenous, where each request is for one virtual machine (VM) with fixed size CPU cores, RAM, disk capacity. We use the term “job” to denote a user request for provisioning a VM. Submitted requests are served in FCFS basis by resource provisioning decision engine (RPDE). If a request can be accepted, it goes to a specific physical machine (PM) for VM provisioning. After getting the VM, the request runs in the cloud and releases the VM when it finishes. To reduce cost of operations, PMs can be grouped into multiple pools. We assume three pools – hot (running with VM instantiated), warm (turned on but VM not instantiated) and cold (turned off). All physical machines (PMs) in a particular type of pool are identical. System model

Provisioning and servicing steps: (i) resource provisioning decision, (ii) VM provisioning and (iii) run-time execution Life-cycle of a job inside a IaaS cloud Provisioning response delay VM deployment Provisioning Decision Actual Service Out Arrival Queuing Instantiation Resource Provisioning Decision Engine Run-time Execution Instance Creation Deploy Job rejection due to buffer full Job rejection due to insufficient capacity We translate these steps into analytical sub-models

Resource provisioning decision Provisioning response delay Resource Provisioning Decision Engine VM deployment Provisioning Decision Actual Service Out Run-time Execution Arrival Queuing Instantiation Instance Creation Deploy Admission control Job rejection due to buffer full Job rejection due to insufficient capacity

Flow-chart: Resource provisioning decision engine (RPDE)

Resource provisioning decision model: CTMC 0,h 1,h N-1,h i,s i = number of jobs in queue, s = pool (hot, warm or cold) … 0,0 0,w 1,w N-1,w … N-1,c 0,c 1,c …

Resource provisioning decision model: parameters & measures • Input Parameters: • –arrival rate: data collected from publicly available cloud • – mean search delays for resource provisioning decision engine: from searching algorithms or measurements • – probability of being able to provision: computed from VM provisioning model • N – maximum # jobs in RPDE: from system/server specification • Output Measures: • Job rejection probability due to buffer full (Pblock) • Job rejection probability due to insufficient capacity (Pdrop) • Total job rejection probability (Preject= Pblock+ Pdrop) • Mean queuing delay for an accepted job (E[Tq_dec]) • Mean decision delay for an accepted job (E[Tdecision])

VM provisioning Provisioning response delay Resource Provisioning Decision Engine VM deployment Provisioning Decision Actual Service Out Run-time Execution Arrival Queuing Instantiation Instance Creation Deploy Admission control Job rejection due to buffer full Job rejection due to insufficient capacity

VM provisioning model Hot PM Hot pool Resource Provisioning Decision Engine Warm pool Service out Accepted jobs Running VMs Idle resources in hot machine Cold pool Idle resources in warm machine Idle resources in cold machine

VM provisioning model for each hot PM Lh is the buffer size and m is max. # VMs that can run simultaneously on a PM 0,0,0 0,1,0 Lh,1,0 … 0,0,1 (Lh-1),1,1 Lh,1,1 … … … … … … … … 0,0,(m-1) 0,1,(m-1) (Lh-1),1,(m-1) Lh,1,(m-1) … 0,0,m 1,0,m Lh,0,m i = number of jobs in the queue, j = number of VMs being provisioned, k = number of VMs running i,j,k

VM provisioning model (for each hot PM) • Input Parameters: • can be measured experimentally • obtained from the lower level run-time model • obtained from the resource provisioning decision model • Hot pool model is the set of independent hot PM models • Output Measure: • = prob. that a job can be accepted in the hot pool = • where, is the steady state probability that a PM can accept job for provisioning - from the solution of the Markov model of a hot PM on the previous slide

VM provisioning model: Summary • For warm/cold PM, the VM provisioning model is similar to hot PM, with the following exceptions: • Effective job arrival rate • For the first job, warm/cold PM requires additional start-up work • Mean provisioning delay for a VM for the first job is longer • Buffer sizes are different • Outputs of hot, warm and cold pool models are the steady state probabilities that at least one PM in hot/warm/cold pool can accept a job for provisioning. These probabilities are denoted by and respectively • From VM provisioning model, we can also compute mean queuing delay for VM provisioning (E[Tvm_q]) and conditional mean provisioning delay (E[Tprov]). • Net mean response delay is given by: (E[Tresp]=E[Tq_dec]+E[Tdecision]+E[Tq_vm]+E[Tprov])

Run-time execution Provisioning response delay Resource Provisioning Decision Engine VM deployment Provisioning Decision Actual Service Out Run-time Execution Arrival Queuing Instantiation Instance Creation Deploy Admission control Job rejection due to buffer full Job rejection due to insufficient capacity

Run-time model: Markov chain 1 CPU Local I/O Global I/O 1 Finish values (for j = 0, 1) denote the transition probabilities of the Discrete Time Markov Chain (DTMC) 1 • Model output: Mean job service time / resource holding time

Model interactions: Pure performance Outputs from pure performance models Pure performance models Resource provisioning decision model Hot pool model Warm pool model Cold pool model VM provisioning models Run-time model

Availability model • Model outputs: Probability that the cloud service is available, downtime in minutes per year

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency for pure performance measures • Future Research • Conclusions

Introduction • Definition of resiliency • Resiliency is the persistence of service delivery that can justifiably be trusted when facing changes* • changes of interest in the context of IaaS cloud • Increase/decrease in workload, faultload • Increase/decrease in system capacity • Security attacks • Accidents or disasters • Our contributions: • General steps for quantifying resiliency • Metrics of resiliency quantification *[1] J. Laprie, “From Dependability to resiliency”, DSN 2008 [2] L. Simoncini, “Resilient Computing: An Engineering Discipline”, IPDPS 2009

General steps for quantifying resiliency • (1) Construct the model of the system • -We developed a stochastic reward net for pure performance analysis • (2) Determine the steady state behavior of the system • -Compute steady state values of performance measures • (3) Apply changes in the system model • -Change the values of input parameters • (4) Analyze the transient behavior of the system model to compute the transient performance measures using input parameters after change • -Initial probabilities for this transient analysis are obtained from the steady state probabilities as obtained in step (2) SPNP package allows to easily map the state space of step (2) model into state space of step (4) model

Resiliency metrics • We defined four metrics built around the pure performance measures: • Settling time ( ) • Peak overshoot (or undershoot) (PO) • Peak time ( ) • -percentile time ( ) • Notations used defining the metrics: • : Steady state value of the measure (for which resiliency is computed ) before the change • : Steady state value of the measure (for which resiliency is computed ) after the change • : Maximum deviation of the measure (for which resiliency is computed ) from its steady state value, after the change is applied

Resiliency metrics Performance measure Time Change applied

Settling time Performance measure Settling time Time Change applied

Peak overshoot Performance measure Peak overshoot or undershoot (%) = Time Change applied

Peak time Performance measure Peak time Time Change applied

γ-percentile time Performance measure γ-percentile time Time Change applied

Talk outline • An Overview of Cloud Computing • Definition, characteristics, service and deployment models • Motivation • Key challenges and thesis goals • Performability Analysis of IaaS Cloud • End-to-end service quality evaluation using interacting stochastic models • Resiliency Analysis of IaaS Cloud • Quantification of resiliency of pure performance measures • Future Research • Conclusions

Providers have two key costs for providing cloud based services Capital Expenditure (CapEx) and Operational Expenditure (OpEx) Capital Expenditure (CapEx) Example of CapEx includes infrastructure cost, software licensing cost Usually CapEx is fixed over time Operational Expenditure (OpEx) Example of OpEx includes power usage cost, cost or penalty due to violation of different SLA metrics, management costs OpEx is more interesting since it varies with time depending upon different factors like system configuration, management strategy or workload arrivals Cost analysis

Capacity planning (provider’s perspective) Failure of H/W, S/W Service times & priorities vary for different job types Workload demands varying over time Cloud service provider

SLA driven capacity planning What is the optimal #PMs so that total cost is minimized and SLA is upheld? Large sized cloud, large variability, fixed # configurations