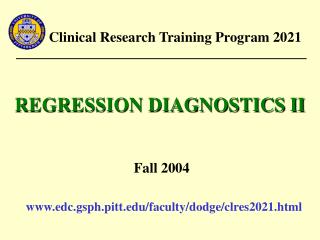

Clinical Research Training Program 2021

Clinical Research Training Program 2021. MULTIPLE REGRESSION. Fall 2004. www.edc.gsph.pitt.edu/faculty/dodge/clres2021.html. OUTLINE: Multiple (Multivariable) Regression Model; Estimation; ANOVA Table; Partial F tests; Multiple Partial F tests.


Clinical Research Training Program 2021 MULTIPLE REGRESSION Fall 2004 www.edc.gsph.pitt.edu/faculty/dodge/clres2021.html

OUTLINE • Multiple (Multivariable) Regression Model • Estimation • ANOVA Table • Partial F tests • Multiple Partial F tests

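MULTIPLE REGRESSION Model Suppose there are n subjects in the data set. For the ith individual, $y_i = \beta_0 + \beta_1 x_{1i} + \cdots + \beta_p x_{pi} + \varepsilon_i$, where $y_i$ is the observed outcome; $x_{1i}, \ldots, x_{pi}$ are predictors; $\beta_0$ is the unknown intercept; $\beta_1, \ldots, \beta_p$ are unknown regression coefficients corresponding to $x_1, \ldots, x_p$, respectively; and $\varepsilon_i$ is the random error.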

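MULTIPLE REGRESSION Model Assumptions
• Independence: the $y_i$ are independent of one another.
• Linearity: $E(y_i)$ is a linear function of $x_{1i}, \ldots, x_{pi}$. That is, $E(y_i \mid x_{1i}, \ldots, x_{pi}) = \beta_0 + \beta_1 x_{1i} + \cdots + \beta_p x_{pi}$.
• Homoscedasticity: $\mathrm{var}(y_i)$ is the same for any fixed combination of $x_{1i}, \ldots, x_{pi}$. That is, $\mathrm{var}(y_i \mid x_{1i}, \ldots, x_{pi}) = \sigma^2$.
• Normality: for any fixed combination of $x_{1i}, \ldots, x_{pi}$, $y_i \sim N(\beta_0 + \beta_1 x_{1i} + \cdots + \beta_p x_{pi},\ \sigma^2)$ or, equivalently, the error $\varepsilon_i = y_i - (\beta_0 + \beta_1 x_{1i} + \cdots + \beta_p x_{pi})$ satisfies $\varepsilon_i \sim N(0, \sigma^2)$.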

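ESTIMATION The Least-squares Method The least-squares solution gives the best-fitting regression plane by minimizing the sum of squares of the distances between the observed outcomes $y_i$ and the corresponding expected outcome values $\hat{y}_i = \hat{\beta}_0 + \hat{\beta}_1 x_{1i} + \cdots + \hat{\beta}_p x_{pi}$. That is, the quantity $\sum_{i=1}^{n} (y_i - \hat{y}_i)^2 = \sum_{i=1}^{n} (y_i - \hat{\beta}_0 - \hat{\beta}_1 x_{1i} - \cdots - \hat{\beta}_p x_{pi})^2$ is minimized to find the least-squares estimates $\hat{\beta}_0, \hat{\beta}_1, \ldots, \hat{\beta}_p$ of $\beta_0, \beta_1, \ldots, \beta_p$.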
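As a minimal sketch of the least-squares method described above, the following Python code solves the normal equations (X'X)b = X'y for a small data set with two predictors. The data values and the helper names `solve_linear_system`, `least_squares`, and `sse` are illustrative assumptions and do not come from the slides; a statistical package would normally do this work.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix [a | b], copied so the inputs are not modified.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def least_squares(predictors, y):
    """Return [b0, b1, ..., bp] minimizing sum((y_i - yhat_i)^2)."""
    # Design matrix with a leading column of ones for the intercept.
    X = [[1.0] + list(row) for row in predictors]
    k = len(X[0])
    # Normal equations: (X'X) b = X'y.
    xtx = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(X, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def sse(beta, predictors, y):
    """Residual sum of squares for a given coefficient vector."""
    total = 0.0
    for row, yi in zip(predictors, y):
        fitted = beta[0] + sum(b * x for b, x in zip(beta[1:], row))
        total += (yi - fitted) ** 2
    return total


# Hypothetical data: roughly y = 1 + 2*x1 + 3*x2 with small errors.
predictors = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 1), (1, 2)]
y = [1.1, 2.9, 4.2, 5.8, 8.1, 8.9]

beta_hat = least_squares(predictors, y)
print("estimates:", [round(b, 3) for b in beta_hat])
print("SSE at the estimates:", round(sse(beta_hat, predictors, y), 4))
```

Any other choice of coefficients gives a larger SSE on the same data, which is the defining property of the least-squares plane.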

[Figure: scatter of data points in (x1, x2, y) space with the best-fitting plane.]

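ESTIMATION The least-squares regression equation is the unique linear combination of the independent variables x1, …, xp that has maximum possible correlation with the dependent variable y. In other words, of all possible linear combinations of the form $b_0 + b_1 x_1 + \cdots + b_p x_p$, the linear combination $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \cdots + \hat{\beta}_p x_p$ is such that the correlation $r_{y,\hat{y}} = \dfrac{\sum_i (y_i - \bar{y})(\hat{y}_i - \bar{\hat{y}})}{\sqrt{\sum_i (y_i - \bar{y})^2 \sum_i (\hat{y}_i - \bar{\hat{y}})^2}}$ is a maximum. This maximum correlation is the multiple correlation coefficient, $R$.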

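ANOVA TABLE H0: $\beta_1 = \cdots = \beta_p = 0$

| Source | df | SS | MS | F |
|---|---|---|---|---|
| Regression | p | SSR | MSR = SSR/p | MSR/MSE |
| Residual | n − p − 1 | SSE | MSE = SSE/(n − p − 1) | |
| Total | n − 1 | SST | | |

SST (total sum of squares) = SSR (regression sum of squares) + SSE (residual sum of squares), where $SST = \sum_i (y_i - \bar{y})^2$, $SSR = \sum_i (\hat{y}_i - \bar{y})^2$, and $SSE = \sum_i (y_i - \hat{y}_i)^2$.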

ANOVA TABLE Null hypothesis for the ANOVA table (three equivalent statements)
• H0: All p independent variables considered together do not explain a significant amount of the variation in y.
• H0: There is no significant overall regression using all p independent variables in the model.
• H0: $\beta_1 = \beta_2 = \cdots = \beta_p = 0$

ANOVA TABLE Test for Significant Overall Regression Hypothesis: H0: $\beta_1 = \beta_2 = \cdots = \beta_p = 0$. Test statistic: $F = \dfrac{MSR}{MSE} = \dfrac{SSR/p}{SSE/(n-p-1)}$. Decision: If $F > F_{p,\,n-p-1,\,1-\alpha}$, then reject H0.

ANOVA TABLE Relationship between F and R² $F = \dfrac{R^2/p}{(1-R^2)/(n-p-1)}$. Note that SSR = R²(SST), and therefore SSE = (1 − R²)(SST).
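The overall F statistic and its relationship to R² can be checked numerically. The sketch below uses the sums of squares reported in this transcript's example ANOVA table for y regressed on x1, x2, and x3 (SSR = 693.06, SSE = 195.19). The sample size n = 12 is inferred from the total degrees of freedom (11) rather than stated in the slides.

```python
# Values from the example ANOVA table in this transcript.
ssr = 693.06   # regression sum of squares
sse = 195.19   # residual sum of squares
p = 3          # number of predictors (x1, x2, x3)
n = 12         # assumption: inferred from total df = n - 1 = 11

sst = ssr + sse                 # SST = SSR + SSE
r_squared = ssr / sst           # because SSR = R^2 * SST

# Overall F computed two ways: from mean squares and from R^2.
f_from_ms = (ssr / p) / (sse / (n - p - 1))
f_from_r2 = (r_squared / p) / ((1 - r_squared) / (n - p - 1))

print("SST =", round(sst, 2))
print("R^2 =", round(r_squared, 4))
print("F (mean squares) =", round(f_from_ms, 2))
print("F (from R^2)     =", round(f_from_r2, 2))
```

Both routes give the same F because dividing SSR and SSE by SST does not change their ratio.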

INTERPRETATIONS OF THE OVERALL TEST If H0: $\beta_1 = \beta_2 = \cdots = \beta_p = 0$ is not rejected, one of the following is true:
• For a true underlying linear model, linear combinations of x1, …, xp provide little or no help in predicting y; that is, the naive predictor $\bar{y}$ is essentially as good as $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \cdots + \hat{\beta}_p x_p$ for predicting y.
• The true model may involve quadratic, cubic, or other more complex functions of x1, …, xp.

INTERPRETATIONS OF THE OVERALL TEST If H0: $\beta_1 = \beta_2 = \cdots = \beta_p = 0$ is rejected,
• Linear combinations of x1, …, xp provide significant information for predicting y; that is, the model $\hat{y} = \hat{\beta}_0 + \hat{\beta}_1 x_1 + \cdots + \hat{\beta}_p x_p$ is far better than the naive model $\hat{y} = \bar{y}$ for predicting y.
• However, the result does not mean that all independent variables x1, …, xp are needed for significant prediction of y; perhaps only one or two of them are sufficient. In other words, a more parsimonious model than the one involving all p variables may be adequate.

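PARTIAL F TEST ANOVA table for y regressed on x1, x2, and x3.

| Source | SS | df | MS | F | P |
|---|---|---|---|---|---|
| Regression on x1, x2, x3 (overall test) | 693.06 | 3 | 231.02 | 9.47 | 0.005 |
| x1 | 588.92 | 1 | 588.92 | 24.1 | 0.001 |
| x2 \| x1 | 103.90 | 1 | 103.90 | 4.26 | 0.057 |
| x3 \| x1, x2 | 0.24 | 1 | 0.24 | 0.01 | 0.923 |
| Residual | 195.19 | 8 | 24.40 | | |
| Total | 888.25 | 11 | | | |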

PARTIAL F TEST
• In the previous ANOVA table, the regression sum of squares was partitioned into three components:
• SS(x1): the sum of squares explained by using only x1 to predict y.
• SS(x2 | x1): the extra sum of squares explained by using x2 in addition to x1 to predict y.
• SS(x3 | x1, x2): the extra sum of squares explained by using x3 in addition to x1 and x2 to predict y.

PARTIAL F TEST
• This extra information in the ANOVA table can answer the following questions:
• Does x1 alone significantly aid in predicting y?
• Does the addition of x2 significantly contribute to the prediction of y after we account (or control) for the contribution of x1?
• Does the addition of x3 significantly contribute to the prediction of y after we account (or control) for the contribution of x1 and x2?

PARTIAL F TEST
• To answer the first question, we simply fit the simple linear regression model using x1 as the single independent variable. The corresponding F statistic is used to test the significance of the contribution of x1.
• To answer the second and third questions, we must use what is called a partial F test. This test assesses whether the addition of any specific independent variable, given others already in the model, significantly contributes to the prediction of y.

PARTIAL F TEST Test for Significant Additional Information (I) • H0: $\beta^* = 0$. The addition of x* to a model already containing x1, …, xp does not significantly improve the prediction of y.

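PARTIAL F TEST Test for Significant Additional Information (I) The extra sum of squares from adding x*, given x1, …, xp, is
$SS(x^* \mid x_1, \ldots, x_p) = SSR(x_1, \ldots, x_p, x^*) - SSR(x_1, \ldots, x_p) = SSE(x_1, \ldots, x_p) - SSE(x_1, \ldots, x_p, x^*)$,
where $SSR(x_1, \ldots, x_p, x^*)$ is the SSR when x1, …, xp, x* are all in the model and $SSR(x_1, \ldots, x_p)$ is the SSR when x1, …, xp are all in the model.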

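PARTIAL F TEST Test for Significant Additional Information (I) • Test statistic (Partial F test): $F(x^* \mid x_1, \ldots, x_p) = \dfrac{SS(x^* \mid x_1, \ldots, x_p)}{MSE(x_1, \ldots, x_p, x^*)}$, where $MSE(x_1, \ldots, x_p, x^*) = SSE(x_1, \ldots, x_p, x^*)/(n-p-2)$. If $F > F_{1,\,n-p-2,\,1-\alpha}$, then reject H0.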

PARTIAL F TEST From the example,

PARTIAL F TEST From the example,
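The partial F statistics for the example can be recomputed from the sums of squares in the sequential ANOVA table. The sketch below is an illustration under two assumptions that the transcript does not state: n = 12 (inferred from the total df of 11), and a denominator equal to the MSE of the model that has just received the new variable, which matches the n − p − 2 residual degrees of freedom given for the test. The table's own F column appears to divide every sequential SS by the full-model MSE (195.19/8) instead, so both versions are shown; they agree only for the last variable entered. The function name `partial_f` is hypothetical.

```python
def partial_f(extra_ss, sse_with_new_var, df_resid):
    """Partial F: 1-df extra SS over the MSE of the model containing x*."""
    return extra_ss / (sse_with_new_var / df_resid)


n = 12                      # assumption: total df = n - 1 = 11
sst = 888.25                # total sum of squares
ss_x1 = 588.92              # SS(x1)
ss_x2_given_x1 = 103.90     # SS(x2 | x1)
ss_x3_given_x1_x2 = 0.24    # SS(x3 | x1, x2)

# SSE of each nested model = SST minus the SS explained so far.
sse_x1_x2 = sst - ss_x1 - ss_x2_given_x1
sse_x1_x2_x3 = sse_x1_x2 - ss_x3_given_x1_x2

# Adding x2 to a model with x1: p = 1, residual df = n - p - 2 = 9.
f_x2_given_x1 = partial_f(ss_x2_given_x1, sse_x1_x2, n - 1 - 2)

# Adding x3 to a model with x1, x2: p = 2, residual df = n - p - 2 = 8.
f_x3_given_x1_x2 = partial_f(ss_x3_given_x1_x2, sse_x1_x2_x3, n - 2 - 2)

# The table's F column: each sequential SS over the full-model MSE (8 df).
mse_full = sse_x1_x2_x3 / 8
f_x2_table_style = ss_x2_given_x1 / mse_full

print("F(x2 | x1), own-model MSE   :", round(f_x2_given_x1, 2))
print("F(x2 | x1), full-model MSE  :", round(f_x2_table_style, 2))
print("F(x3 | x1, x2)              :", round(f_x3_given_x1_x2, 2))
```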

MULTIPLE PARTIAL F TEST Test for Significant Additional Information (II) • H0: $\beta_1^* = \cdots = \beta_k^* = 0$. The addition of x1*, …, xk* to a model already containing x1, …, xp does not significantly improve the prediction of y.

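MULTIPLE PARTIAL F TEST Test for Significant Additional Information (II) The extra sum of squares from adding x1*, …, xk*, given x1, …, xp, is
$SS(x_1^*, \ldots, x_k^* \mid x_1, \ldots, x_p) = SSR(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*) - SSR(x_1, \ldots, x_p) = SSE(x_1, \ldots, x_p) - SSE(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*)$,
where $SSR(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*)$ is the SSR when x1, …, xp and x1*, …, xk* are all in the model and $SSR(x_1, \ldots, x_p)$ is the SSR when x1, …, xp are all in the model.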

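MULTIPLE PARTIAL F TEST Test for Significant Additional Information (II) • Test statistic (Multiple Partial F test): $F(x_1^*, \ldots, x_k^* \mid x_1, \ldots, x_p) = \dfrac{SS(x_1^*, \ldots, x_k^* \mid x_1, \ldots, x_p)/k}{MSE(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*)}$, where $MSE(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*) = SSE(x_1, \ldots, x_p, x_1^*, \ldots, x_k^*)/(n-p-k-1)$. If $F > F_{k,\,n-p-k-1,\,1-\alpha}$, then reject H0.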
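To make the multiple partial F test concrete, the sketch below tests whether x2 and x3 together add to a model that already contains x1, using the sums of squares from this transcript's example. The function name `multiple_partial_f` is hypothetical, n = 12 is inferred from the total df of 11, and the critical value is left to an F table or statistical software.

```python
def multiple_partial_f(ssr_full, ssr_reduced, sse_full, k, df_resid_full):
    """F for adding k variables: (extra SS / k) over the full-model MSE."""
    extra_ss = ssr_full - ssr_reduced
    return (extra_ss / k) / (sse_full / df_resid_full)


n = 12               # assumption: total df = n - 1 = 11
p = 1                # variables already in the model (x1)
k = 2                # variables being added (x2, x3)

ssr_full = 693.06    # SSR(x1, x2, x3)
ssr_reduced = 588.92 # SSR(x1)
sse_full = 195.19    # SSE(x1, x2, x3)

f_stat = multiple_partial_f(ssr_full, ssr_reduced, sse_full, k, n - p - k - 1)
print("extra SS =", round(ssr_full - ssr_reduced, 2))
print("F =", round(f_stat, 2), "on", k, "and", n - p - k - 1, "df")
```

With k = 1 the same function reduces to the single-variable partial F test.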

Should a variable be included in the model? Two methods:
1. Partial F tests for variables added in order (Type I F tests).
2. Partial F tests for variables added last (Type III F tests): the significance of a variable is tested as if it were the last variable to enter the model.

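TYPE III F TEST ANOVA table for y regressed on x1, x2, and x3.

| Source | SS | df | MS | F | P |
|---|---|---|---|---|---|
| Regression on x1, x2, x3 (overall test) | 693.06 | 3 | 231.02 | 9.47 | 0.005 |
| x1 \| x2, x3 | 166.58 | 1 | 166.58 | 6.83 | 0.031 |
| x2 \| x1, x3 | 101.81 | 1 | 101.81 | 4.17 | 0.075 |
| x3 \| x1, x2 | 0.24 | 1 | 0.24 | 0.01 | 0.923 |
| Residual | 195.19 | 8 | 24.40 | | |
| Total | 888.25 | 11 | | | |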

TYPE III F TEST From the example,

TYPE III F TEST From the example,

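Type I SS = sequential SS: the SS for the partial F test of each variable given the variables entered before it.
Type III SS = the SS for the partial F test of each variable given all other variables in the model (variable added last).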
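One way to see the difference between the two types is to add up each set of sums of squares from the example tables. In the sketch below (variable names are illustrative), the Type I SS add up to the overall regression SS, whereas the Type III SS do not, because each Type III term is adjusted for all the other predictors. The Type III F values are each SS divided by the full-model MSE.

```python
ssr_full = 693.06          # SSR(x1, x2, x3) from the example
mse_full = 195.19 / 8      # full-model MSE: SSE over residual df

# Sequential (Type I) and variable-added-last (Type III) sums of squares.
type1_ss = {"x1": 588.92, "x2|x1": 103.90, "x3|x1,x2": 0.24}
type3_ss = {"x1|x2,x3": 166.58, "x2|x1,x3": 101.81, "x3|x1,x2": 0.24}

type1_total = sum(type1_ss.values())
type3_total = sum(type3_ss.values())

# Each Type III F statistic uses the full-model MSE as its denominator.
type3_f = {term: ss / mse_full for term, ss in type3_ss.items()}

print("Type I total  :", round(type1_total, 2), "vs SSR", ssr_full)
print("Type III total:", round(type3_total, 2), "vs SSR", ssr_full)
for term, f_value in type3_f.items():
    print("F(" + term + ") =", round(f_value, 2))
```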

Forward addition Backward elimination