Monolithic vs Modular Approaches in Data Management and Reporting


Presentation Transcript

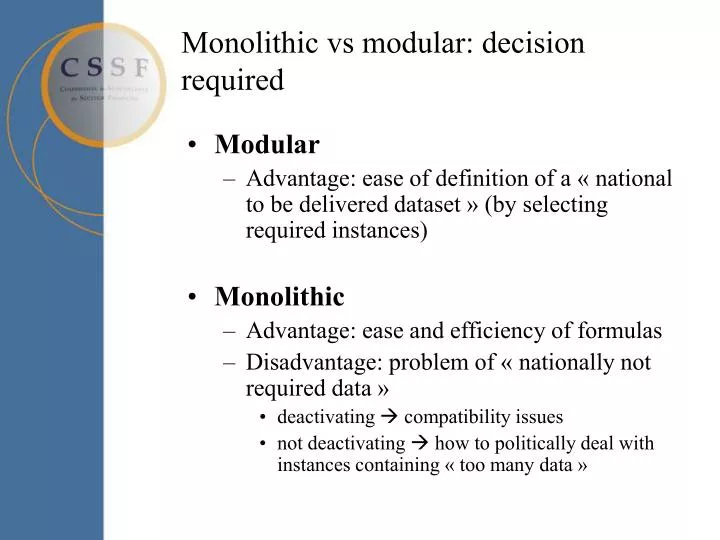

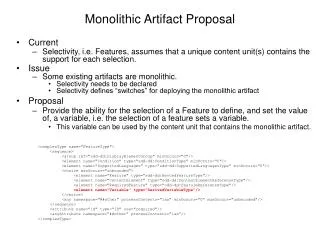

Monolithic vs modular: decision required
• Modular
  • Advantage: ease of defining a « national to be delivered dataset » (by selecting the required instances)
• Monolithic
  • Advantage: ease and efficiency of formulas
  • Disadvantage: problem of « nationally not required data »
    • deactivating it: compatibility issues
    • not deactivating it: how to deal politically with instances containing « too much data »

Rounding (two positions)
• “The rounding should be done by the financial regulator when consuming the data”
• “Correct rounding should be enforced by instance validation, e.g. in the formula validation part”
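The second position (rounding enforced at validation time) can be illustrated with a small check. This is only a sketch: the function name `is_rounded_to` is hypothetical, and it assumes the reported value arrives as a string together with an XBRL-style `decimals` setting, where a negative value means rounding to tens, hundreds, thousands and so on.

```python
from decimal import Decimal


def is_rounded_to(value: str, decimals: int) -> bool:
    """Return True if `value` has no digits beyond 10**(-decimals).

    decimals=2  -> multiples of 0.01 are accepted
    decimals=-3 -> multiples of 1000 are accepted
    """
    number = Decimal(value)
    # Smallest accepted step, e.g. 0.01 for decimals=2 or 1E+3 for decimals=-3.
    quantum = Decimal(1).scaleb(-decimals)
    return number % quantum == 0


print(is_rounded_to("1234.50", 2))   # value reported to the cent
print(is_rounded_to("12345", -3))    # value not rounded to thousands
```

Under the first position, the same function would not reject anything; the regulator would instead round on its side when reading the data.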

Instance « context »
• Consolidation status
  • consolidated
  • non consolidated head & branches
  • non consolidated head only
  • non consolidated branch
• Audit status
  • provisional
  • audited
• Consolidation scope
  • CRD
  • Insurance
  • OtherEntities
  • IFRS

Instance « context »
• Materialisation alternatives
  • As dimensions?
  • As part of some header?
  • As part of some external « envelope »?

Standard dataset
• one single reporting entity
• one single reporting period
• one single capital currency (+ pure)
• one single consolidation status
• one single audit status
• one single scope of consolidation
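The six « one single » conditions can be read as a uniqueness check over the facts of one delivery. The sketch below assumes a simplified, hypothetical `Fact` record (it is not an XBRL API) and treats the unit `pure` as always allowed alongside the one currency.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fact:
    # Hypothetical flattened view of one reported fact.
    entity: str
    period: str
    unit: str  # currency code, or "pure" for ratios and counts
    consolidation_status: str
    audit_status: str
    consolidation_scope: str


SINGLE_VALUED = (
    "entity",
    "period",
    "consolidation_status",
    "audit_status",
    "consolidation_scope",
)


def standard_dataset_violations(facts):
    """List the attributes that take more than one value across `facts`."""
    violations = [
        name
        for name in SINGLE_VALUED
        if len({getattr(fact, name) for fact in facts}) > 1
    ]
    # One single currency is allowed, plus "pure".
    currencies = {fact.unit for fact in facts} - {"pure"}
    if len(currencies) > 1:
        violations.append("unit")
    return violations
```

An empty result means the delivery qualifies as a standard dataset in the sense of this slide; any returned name points at the condition that was broken.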

Otherwise … Max-tax Country 1 Country 2

Dimension defaults
• Use of instances in a 2-step approach
  • first XBRL validation
  • then injection of values into DB (without taxonomy)
• Issues:
  • Transparency of data
  • Reidentification of hypercubes in a mapping-oriented system
• Keep it simple (& keep requirements low)
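One reading of the transparency issue: a dimension left at its default member does not appear in the instance, so a database loaded without the taxonomy cannot see it. The sketch below shows the second step (injection) with defaults written out explicitly. It assumes validation has already happened, and the table layout, the `load_facts` helper and the default used in the usage example are all hypothetical.

```python
import sqlite3


def explicit_dimensions(reported, defaults):
    """Merge taxonomy defaults with the dimensions found in the instance.

    Reported members win over defaults.
    """
    return {**defaults, **reported}


def load_facts(conn, facts, defaults):
    """Insert (concept, dimensions, value) triples, one row per dimension."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS fact "
        "(concept TEXT, dimension TEXT, member TEXT, value TEXT)"
    )
    for concept, dims, value in facts:
        merged = explicit_dimensions(dims, defaults)
        for dimension, member in sorted(merged.items()):
            conn.execute(
                "INSERT INTO fact VALUES (?, ?, ?, ?)",
                (concept, dimension, member, value),
            )
    conn.commit()
```

A possible use, with an in-memory database and a made-up default:

```python
conn = sqlite3.connect(":memory:")
load_facts(
    conn,
    [("Assets", {}, "100"), ("Assets", {"ConsolidationScope": "Insurance"}, "40")],
    {"ConsolidationScope": "CRD"},  # hypothetical default member
)
```

Writing defaults out costs extra rows, but every stored value then carries its full dimensional context without a taxonomy lookup.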

In scope of FINREP 2012
• Atomic XBRL instances that are structurally compatible
• Atomic XBRL instances that respect the CEBS formula set
• (Hopefully) no national formula sets required
• In case of a monolithic taxonomy structure, a standard way of handling « nationally not required data » should be defined: take it and ignore it, or refuse it?
• A « standard dataset » acceptable to all participating countries should be defined
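The two options for « nationally not required data » (take it and ignore it, or refuse it) can be contrasted in a few lines. This is an illustrative sketch only: the `Policy` enum, the `apply_policy` function and the idea of identifying data by concept name are assumptions, not part of any agreed standard.

```python
from enum import Enum


class Policy(Enum):
    IGNORE = "take it and ignore it"
    REFUSE = "refuse it"


def apply_policy(reported, required, policy):
    """Return the reported concepts that the national supervisor requires.

    Under Policy.REFUSE, any concept outside `required` rejects the
    whole instance by raising ValueError.
    """
    extra = [concept for concept in reported if concept not in required]
    if extra and policy is Policy.REFUSE:
        raise ValueError(f"nationally not required data: {extra}")
    return [concept for concept in reported if concept in required]
```

With `Policy.IGNORE` the filer can send the same instance to several supervisors; with `Policy.REFUSE` each supervisor receives exactly what it asked for, at the cost of country-specific instances.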

Out of scope of FINREP 2012
• To be determined by local supervisor (or within separate project)
  • File name convention
  • Transmission channel & encryption
  • Packaging and envelopes (XBRL pure, XBRL/XML mix, ZIP, ...)

Obsolete
• Nil
  • Nil values should be technically prohibited by instance validation
• Period / instant harmonization?
  • Can one single approach be defined for all data?
  • both unacceptable for IFRS compatibility reasons
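A technical prohibition of nil values could be as simple as scanning the instance for elements flagged with `xsi:nil`. The sketch below uses only the Python standard library; the function name `nil_facts` is hypothetical, and a real validator would more likely express this rule in the taxonomy or formula layer.

```python
import xml.etree.ElementTree as ET

# Attribute name of xsi:nil in ElementTree's {namespace}local notation.
XSI_NIL = "{http://www.w3.org/2001/XMLSchema-instance}nil"


def nil_facts(instance_xml: str) -> list[str]:
    """Return the qualified tag of every element reported with xsi:nil set."""
    root = ET.fromstring(instance_xml)
    return [
        element.tag
        for element in root.iter()
        if element.get(XSI_NIL, "").strip() in ("true", "1")
    ]
```

An instance would pass this check only when the returned list is empty.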

Min-Max Risk (Corep) Tn-max Tn-min Tn-country1 Tn-country2 Tn-countryn

Min-Max best practice
• National extensions should include either the min or the max version of Tn, but not a mix of them
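The best practice can be checked mechanically if table identifiers follow the `Tn-min` / `Tn-max` pattern used in these slides. The sketch below assumes that naming and reads the rule as "one variant for the whole extension"; the function names are hypothetical.

```python
def variants_used(table_ids):
    """Collect the suffix after the last '-' of each table identifier."""
    return {table_id.rpartition("-")[2] for table_id in table_ids}


def is_consistent_extension(table_ids):
    """True if every table is a min version, or every table is a max version."""
    variants = variants_used(table_ids)
    return variants <= {"min", "max"} and len(variants) == 1


print(is_consistent_extension(["T1-min", "T2-min"]))  # all min
print(is_consistent_extension(["T1-min", "T2-max"]))  # mixed
```

Identifiers that do not end in `-min` or `-max` make the check fail, which seems the safer default for an unknown variant.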

Finrep structure t1 t2 BS … tn t1 t2 P&L … tn

Corep structure T1-max T1-min Ca-sro … Tn-max Tn-min

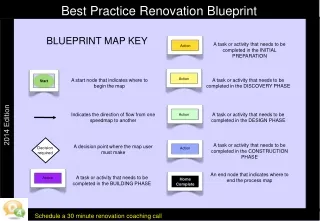

Finrep-Corep 2012 structure
[Diagram labels: Tn-min, Tn-min formulas, Main, Import, Tn-max, Tn-max formulas, Main formulas]
• Taxonomy-nmin with formulas
• Taxonomy-nmax imports Taxonomy-nmin and adds its own formulas

Discussion on requirements
• Formulas have to work properly and efficiently cross-instance in all XBRL tools
• Access to all required instances in the same environment, at the same time, in the same place
• Pre-defined file name convention for instances required?