Constraint Satisfaction Problems

Constraint Satisfaction Problems A Brief Summary of Basic Ideas

Outline • What is CSP? • Definitions and Terminologies • CSP Examples • Solving CSP by Search • Backtracking Search • Heuristics for CSP Search • Variable and Value Heuristics • Forward Checking and Constraint Propagation • Summary

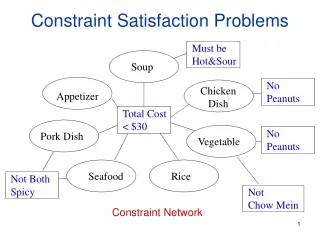

Definitions • A constraint-satisfaction problem (CSP) has • A set of variables • V = {X1, X2, …, Xn} • A domain of values for each variable • Xi ∊ Di = {di1, di2, …, din} • A set of constraints on the values the variables can take • C = {C1, C2, …, Cm} • A state is an assignment of values to some or all of the variables • S1 = {X1 = d12, X4 = d45}

CSP: Terminologies • Consistent (legal) state • An assignment that does not violate any of the constraints • Complete state • An assignment in which every variable has a value • Goal state • A consistent and complete assignment • Might not exist, in which case the search ends with a proof of inconsistency

Example: Map Colouring. Colour the map of the states of Australia with 3 colours (red, green, blue) so that no two adjacent states have the same colour. Binary CSP: each constraint relates two variables. Constraint graph: nodes are variables, arcs are constraints.

Example: Map Colouring. Variables: {WA, NT, SA, Q, NSW, V, T}. Domain: {R, G, B}. Constraints: {WA ≠ NT, WA ≠ SA, NT ≠ SA, NT ≠ Q, SA ≠ Q, SA ≠ NSW, SA ≠ V, Q ≠ NSW, NSW ≠ V}

Example: Map-Colouring Solutions are complete and consistent assignments, e.g., WA = red, NT = green, Q = red, NSW = green, V = red, SA = blue, T = green
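The map-colouring definitions above can be written down directly as data. The following is a minimal sketch, not part of the original slides; the names VARIABLES, CONSTRAINTS, is_consistent, is_complete and is_goal are illustrative assumptions. It checks that the solution given on the slide is both complete and consistent.

```python
# Map-colouring CSP for Australia as plain Python data (illustrative names).
VARIABLES = ["WA", "NT", "SA", "Q", "NSW", "V", "T"]
DOMAIN = ["red", "green", "blue"]
# Each pair (A, B) stands for the binary constraint A != B.
CONSTRAINTS = [("WA", "NT"), ("WA", "SA"), ("NT", "SA"), ("NT", "Q"),
               ("SA", "Q"), ("SA", "NSW"), ("SA", "V"), ("Q", "NSW"),
               ("NSW", "V")]

def is_consistent(assignment):
    """True if the (possibly partial) assignment violates no constraint."""
    return all(assignment[a] != assignment[b]
               for a, b in CONSTRAINTS
               if a in assignment and b in assignment)

def is_complete(assignment):
    """True if every variable has a value."""
    return all(v in assignment for v in VARIABLES)

def is_goal(assignment):
    """A goal state is a complete and consistent assignment."""
    return is_complete(assignment) and is_consistent(assignment)

# The solution quoted on the slide.
solution = {"WA": "red", "NT": "green", "Q": "red", "NSW": "green",
            "V": "red", "SA": "blue", "T": "green"}
print(is_goal(solution))
```

A partial assignment such as {"WA": "red"} is consistent but not complete, so it is a legal state without being a goal state.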

Example: 8-Queen • Variables • {Q1, Q2, Q3, Q4, Q5, Q6, Q7, Q8} • Domain • Q1, …, Q8 ∊ {(1,1), (1,2), …, (8,8)} • Alternatively Q1, …, Q8 ∊ {1, 2, …, 8} if each variable represents the queen in one column • Constraint set • No queen attacks any other
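For the one-queen-per-column formulation, the "no queen attacks any other" constraint reduces to a pairwise test on rows and diagonals. The sketch below assumes that each variable's value is the row of the queen in that column; the function names attacks and queens_consistent are hypothetical.

```python
def attacks(col_a, row_a, col_b, row_b):
    """Two queens in different columns attack each other if they share
    a row or a diagonal."""
    return row_a == row_b or abs(row_a - row_b) == abs(col_a - col_b)

def queens_consistent(rows):
    """rows maps column number -> row number for the queens placed so far.
    Returns True if no pair of placed queens attack each other."""
    cols = sorted(rows)
    return not any(attacks(a, rows[a], b, rows[b])
                   for i, a in enumerate(cols)
                   for b in cols[i + 1:])

# A complete 8-queen placement and a conflicting partial placement.
eight = dict(zip(range(1, 9), [1, 5, 8, 6, 3, 7, 2, 4]))
print(queens_consistent(eight))
print(queens_consistent({1: 1, 2: 2}))
```

Because every column holds exactly one queen by construction, column conflicts never need to be checked in this representation.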

Example: 4-Queens Problem. Variables X1, X2, X3, X4, each with domain {1, 2, 3, 4}. [Figure: 4 × 4 board with rows and columns numbered 1 to 4]

Varieties of CSPs. Discrete variables • finite domains: n variables with domain size d give O(d^n) complete assignments, e.g., map colouring, 8-queen • infinite domains: integers, strings, etc.; a constraint language is used instead of enumerating all values, e.g., job scheduling, where variables are start/end days for each job: StartJob1 + 5 ≤ StartJob3. Continuous variables • e.g., start/end times for Hubble Space Telescope observations • linear constraints are solvable in polynomial time by linear programming

Varieties of Constraints. Absolute constraints • Unary constraint, e.g. SA ≠ green • Binary constraint, e.g. SA ≠ WA • Higher-order constraint, e.g. crypt-arithmetic column constraints. Preference constraints • Many real-world CSPs have preferences, e.g. Prof. X might prefer teaching in the morning, red is better than yellow, etc. • Use an objective function to include a cost for each variable assignment • These are constrained optimisation problems

Real World CSPs • Resource allocation, e.g., crew assignments to flights • Timetabling problems, e.g., which class is offered when and where? • Transportation fleet planning • Manufacturing scheduling • Shop floor design and layout

Solving CSP by Search • States: sets of value assignments to some or all of the variables • Initial state: the empty assignment {} • Successor function: assign a value to any unassigned variable without conflicting with previously assigned variables • Goal test: check whether the current assignment is complete and consistent • Path cost: a constant cost for every step

Benefits The successor function and goal test can be written in a generic way that applies to all CSPs. We can develop effective, generic heuristics that require no additional domain-specific expertise. The structure of the constraint graph can be used to simplify the solution process.

Standard Search Formulation • Let’s try the standard search formulation (generate and test) • We need: • Initial state: none of the variables has a value (colour) {} • Successor state: one of the variables without a value will get some value. • Goal: all variables have a value and none of the constraints is violated. • The branching factor is n × d at the root, (n-1) × d at the next level, and so on down to depth n, so the tree has n! × d^n leaves, but there are only d^n distinct complete assignments! Assigning WA and then NT gives the same state as assigning NT and then WA.

Backtracking (Depth-First) Search • Special property of CSPs: they are commutative, which means that the order in which we assign variables does not matter (WA then NT = NT then WA). • Better search tree: first order the variables, then assign them values one by one. The tree then has d nodes at depth 1, d^2 at depth 2, and d^n leaves at depth n.
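The difference between the two search trees can be checked with a few lines of arithmetic. This is an illustrative sketch; the function names are assumptions, and the figures use the map-colouring instance (n = 7 variables, d = 3 colours).

```python
import math

def leaves_any_order(n, d):
    """Leaves when any unassigned variable may be assigned at each level:
    (n*d) * ((n-1)*d) * ... * (1*d) = n! * d**n."""
    leaves = 1
    for level in range(n):
        leaves *= (n - level) * d
    return leaves

def leaves_fixed_order(n, d):
    """Leaves when the variable order is fixed in advance: d**n."""
    return d ** n

n, d = 7, 3
print(leaves_any_order(n, d))    # 11022480
print(leaves_fixed_order(n, d))  # 2187
print(leaves_any_order(n, d) // leaves_fixed_order(n, d) == math.factorial(n))
```

Fixing the variable order removes the factor of n!, which is exactly the redundancy caused by ignoring commutativity.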

Backtracking Search • Depth-first search algorithm • Goes down one variable at a time • In a dead end, back up to last variable whose value can be changed without violating any of the constraints, and change it • If you backed up to the root and tried all values, then there are no solutions • Algorithm is complete • Will find a solution if one exists • Will expand the entire (finite) search space if necessary
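A minimal sketch of the backtracking search described above, assuming binary "not equal" constraints as in map colouring. The helper build_neighbours and the fixed variable order are illustrative choices, not something the slides prescribe.

```python
VARIABLES = ["WA", "NT", "SA", "Q", "NSW", "V", "T"]
CONSTRAINTS = [("WA", "NT"), ("WA", "SA"), ("NT", "SA"), ("NT", "Q"),
               ("SA", "Q"), ("SA", "NSW"), ("SA", "V"), ("Q", "NSW"),
               ("NSW", "V")]

def build_neighbours(variables, constraints):
    """Adjacency sets of the constraint graph."""
    neighbours = {v: set() for v in variables}
    for a, b in constraints:
        neighbours[a].add(b)
        neighbours[b].add(a)
    return neighbours

def backtrack(assignment, variables, domains, neighbours):
    """Depth-first search that assigns one variable per level.
    Returns a complete consistent assignment, or None if none exists."""
    if len(assignment) == len(variables):
        return assignment
    # Fixed variable order: take the first unassigned variable.
    var = next(v for v in variables if v not in assignment)
    for value in domains[var]:
        # Only try values that do not conflict with earlier assignments.
        if all(assignment.get(n) != value for n in neighbours[var]):
            assignment[var] = value
            result = backtrack(assignment, variables, domains, neighbours)
            if result is not None:
                return result
            del assignment[var]  # dead end: undo and try the next value
    return None  # every value failed, so the caller must back up

neighbours = build_neighbours(VARIABLES, CONSTRAINTS)
three = {v: ["R", "G", "B"] for v in VARIABLES}
two = {v: ["R", "G"] for v in VARIABLES}
print(backtrack({}, VARIABLES, three, neighbours))
print(backtrack({}, VARIABLES, two, neighbours))
```

With only two colours the search backs up to the root and returns None, because WA, NT and SA are mutually adjacent and cannot all differ.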

Backtracking Search [Figure: sequence of boards; cells are labelled Q for a placed queen and BT for a backtrack]

Heuristics • Backtracking searches are variations of depth-limited search with limit = n • Uninformed search technique • Can we make it an informed search? • Add some heuristics • Which variable to assign next? • In which order should the values be tried? • How to detect dead-ends early?

Variable & Value Heuristics • Most constrained variable • a.k.a. minimum remaining values (MRV) • Choose the variable with the fewest legal values remaining in its domain • Most constraining variable • a.k.a. degree heuristic • Choose the variable that’s part of the most constraints • For the first variable selection the MRV heuristic does not help at all, so pick the variable with the highest degree. Also useful for breaking MRV ties. • Least constraining value • Pick the value that rules out the fewest values for the neighbouring variables • Keeps maximum flexibility for future assignments

Rationale for MRV, DH, LCV. In all cases we want to enter the most promising branch, but we also want to detect inevitable failure as soon as possible. MRV: the variable that is most likely to cause failure in a branch is assigned first. The variable must be assigned at some point, so if it is doomed to fail, we’d better find out soon. This has the effect of pruning the search tree. DH: reduces the branching factor on future choices by selecting the variable that is involved in the largest number of constraints on other unassigned variables. LCV: tries to avoid failure by assigning values that leave maximal flexibility for the remaining variable assignments, so as to succeed as soon as possible. LCV is useful if only one solution is required; it makes no difference if all solutions have to be found or if the problem has no solution.
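The three heuristics can be sketched as two small functions: one that picks the next variable (MRV, with the degree heuristic as tie-break) and one that orders the values (LCV). The names legal_values, select_variable and order_values are hypothetical, and the constraints are assumed to be the binary "not equal" constraints of map colouring.

```python
CONSTRAINTS = [("WA", "NT"), ("WA", "SA"), ("NT", "SA"), ("NT", "Q"),
               ("SA", "Q"), ("SA", "NSW"), ("SA", "V"), ("Q", "NSW"),
               ("NSW", "V")]
DOMAINS = {v: ["R", "G", "B"] for v in
           ["WA", "NT", "SA", "Q", "NSW", "V", "T"]}
NEIGHBOURS = {v: set() for v in DOMAINS}
for a, b in CONSTRAINTS:
    NEIGHBOURS[a].add(b)
    NEIGHBOURS[b].add(a)

def legal_values(var, assignment):
    """Values of var that differ from every assigned neighbour."""
    return [x for x in DOMAINS[var]
            if all(assignment.get(n) != x for n in NEIGHBOURS[var])]

def select_variable(assignment):
    """MRV: fewest legal values first; ties go to the highest degree
    (number of constraints on other unassigned variables)."""
    def key(var):
        degree = sum(1 for n in NEIGHBOURS[var] if n not in assignment)
        return (len(legal_values(var, assignment)), -degree)
    return min((v for v in DOMAINS if v not in assignment), key=key)

def order_values(var, assignment):
    """LCV: try first the value that removes the fewest options from
    unassigned neighbours."""
    def ruled_out(value):
        return sum(1 for n in NEIGHBOURS[var]
                   if n not in assignment
                   and value in legal_values(n, assignment))
    return sorted(legal_values(var, assignment), key=ruled_out)

print(select_variable({}))                        # SA
print(select_variable({"WA": "R", "NT": "G"}))    # SA
print(order_values("Q", {"WA": "R", "NT": "G"}))  # ['R', 'B']
```

With nothing assigned every variable has three legal values, so the degree tie-break selects SA; after WA = R and NT = G, SA has a single legal value and MRV selects it directly.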

Heuristics for Early Failure Detection: Forward Checking. When a variable X is assigned, forward checking looks at each unassigned variable Y connected to X by a constraint and deletes from Y’s domain any value that is inconsistent with the value chosen for X. If any Y is left with an empty domain, undo this assignment and backtrack. If not, select the next variable with MRV. Forward checking is an obvious partner of the MRV heuristic: MRV is applied after forward checking has updated the domains.

Forward Checking Idea: Keep track of remaining legal values for unassigned variables Terminate search when any variable has no legal values
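A minimal sketch of forward checking for "not equal" constraints, tracing the map-colouring assignments WA = R, NT = G, Q = B. The function name forward_check and its signature are assumptions for illustration; it returns the pruned domains, or None when some unassigned neighbour is left with no legal value.

```python
CONSTRAINTS = [("WA", "NT"), ("WA", "SA"), ("NT", "SA"), ("NT", "Q"),
               ("SA", "Q"), ("SA", "NSW"), ("SA", "V"), ("Q", "NSW"),
               ("NSW", "V")]
VARIABLES = ["WA", "NT", "SA", "Q", "NSW", "V", "T"]
NEIGHBOURS = {v: set() for v in VARIABLES}
for a, b in CONSTRAINTS:
    NEIGHBOURS[a].add(b)
    NEIGHBOURS[b].add(a)

def forward_check(var, value, domains, assignment):
    """Assign var = value and prune that value from the domains of var's
    unassigned neighbours. Returns the new domains, or None if a
    neighbour's domain becomes empty (the caller should backtrack)."""
    new_domains = {v: list(vals) for v, vals in domains.items()}
    new_domains[var] = [value]
    for n in NEIGHBOURS[var]:
        if n not in assignment:
            new_domains[n] = [x for x in new_domains[n] if x != value]
            if not new_domains[n]:
                return None
    return new_domains

start = {v: ["R", "G", "B"] for v in VARIABLES}
d1 = forward_check("WA", "R", start, {})
d2 = forward_check("NT", "G", d1, {"WA": "R"})
d3 = forward_check("Q", "B", d2, {"WA": "R", "NT": "G"})
print(d1["NT"], d1["SA"])  # ['G', 'B'] ['G', 'B']
print(d2["Q"], d2["SA"])   # ['R', 'B'] ['B']
print(d3)                  # None: SA has no legal value left
```

Copying the domains on every call keeps the sketch simple; undoing an assignment then only requires discarding the returned dictionary.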

Forward Checking [Figure: sequence of boards; cells are labelled Q for a placed queen, FC for a value removed by forward checking, and BT for a backtrack]

Most Constrained Variable
Step 1, nothing assigned: WA = {R, G, B}, NT = {R, G, B}, SA = {R, G, B}, Q = {R, G, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
Step 2, after WA = R: NT = {G, B}, SA = {G, B}, Q = {R, G, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
Step 3, after NT = G: SA = {B}, Q = {R, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
SA now has the fewest remaining values, so it is the most constrained variable.

Most Constraining Variable
Step 1, nothing assigned (domain, degree): NT = {R, G, B}, 3; Q = {R, G, B}, 3; WA = {R, G, B}, 2; SA = {R, G, B}, 5; NSW = {R, G, B}, 3; V = {R, G, B}, 2; T = {R, G, B}, 0
Step 2, after SA = B: NT = {R, G}, Q = {R, G}, WA = {R, G}, NSW = {R, G}, V = {R, G}, T = {R, G, B}
Step 3, after NT = G: Q = {R}, WA = {R}, NSW = {R, G}, V = {R, G}, T = {R, G, B}

Least Constraining Value
Step 1, nothing assigned: WA = {R, G, B}, NT = {R, G, B}, SA = {R, G, B}, Q = {R, G, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
Step 2, after WA = R: NT = {G, B}, SA = {G, B}, Q = {R, G, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
Step 3, after NT = G: SA = {B}, Q = {R, B}, NSW = {R, G, B}, V = {R, G, B}, T = {R, G, B}
Step 4a, choosing Q = R: SA = {B}, NSW = {G, B}, V = {R, G, B}, T = {R, G, B}
Step 4b, choosing Q = B: SA = ? (no legal value), NSW = {R, G}, V = {R, G, B}, T = {R, G, B}
Red in Q is the least constraining value. Terminate search on a branch when any variable has no legal values.

Constraint Propagation. Forward checking only checks consistency between assigned and unassigned variables. How about constraints between two unassigned variables? NT and SA cannot both be blue! Constraint propagation repeatedly enforces constraints locally.

Checking for Consistency • Node consistency • Unary constraint (e.g. NT ≠ G) • A node is consistent if and only if all values in its domain satisfy all unary constraints • Arc consistency • Binary constraint (e.g. NT ≠ Q) • An arc Xi → Xj or (Xi, Xj) is consistent if and only if, for each value a in the domain of Xi, there is a value b in the domain of Xj that is permitted by the binary constraints between Xi and Xj. • Path consistency • Can be reduced to arc consistency

Arc Consistency. The simplest form of propagation makes each arc consistent. X → Y is consistent iff for every value x of X there is some allowed y for Y. Constraint propagation propagates arc consistency on the graph. [Figure label: consistent arc]

Arc Consistency. The simplest form of propagation makes each arc consistent. X → Y is consistent iff for every value x of X there is some allowed y for Y. [Figure labels: inconsistent arc, remove blue from the source; consistent arc]

Arc Consistency. The simplest form of propagation makes each arc consistent. X → Y is consistent iff for every value x of X there is some allowed y for Y. If X loses a value, neighbours of X need to be rechecked, i.e. incoming arcs can become inconsistent again (outgoing arcs will stay consistent). [Figure label: this arc just became inconsistent]

Arc Consistency. The simplest form of propagation makes each arc consistent. X → Y is consistent iff for every value x of X there is some allowed y for Y. If X loses a value, neighbours of X need to be rechecked. Arc consistency detects failure earlier than forward checking. It can be run as a preprocessor or after each assignment.
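The recheck-the-neighbours loop described above is usually implemented with a queue of arcs, as in the AC-3 algorithm from Russell and Norvig. The sketch below is an assumption-laden illustration for "not equal" constraints only; the names revise and ac3 are illustrative, and the domains are modified in place.

```python
from collections import deque

CONSTRAINTS = [("WA", "NT"), ("WA", "SA"), ("NT", "SA"), ("NT", "Q"),
               ("SA", "Q"), ("SA", "NSW"), ("SA", "V"), ("Q", "NSW"),
               ("NSW", "V")]
VARIABLES = ["WA", "NT", "SA", "Q", "NSW", "V", "T"]
NEIGHBOURS = {v: set() for v in VARIABLES}
for a, b in CONSTRAINTS:
    NEIGHBOURS[a].add(b)
    NEIGHBOURS[b].add(a)

def revise(domains, x, y):
    """Make the arc x -> y consistent for the constraint x != y by deleting
    every value of x that has no different value available in y."""
    removed = False
    for vx in list(domains[x]):
        if not any(vx != vy for vy in domains[y]):
            domains[x].remove(vx)
            removed = True
    return removed

def ac3(domains):
    """Enforce arc consistency on all arcs. Returns False as soon as a
    domain becomes empty, True otherwise."""
    queue = deque((x, y) for x in VARIABLES for y in NEIGHBOURS[x])
    while queue:
        x, y = queue.popleft()
        if revise(domains, x, y):
            if not domains[x]:
                return False
            # x lost a value, so arcs pointing into x must be rechecked.
            for z in NEIGHBOURS[x]:
                if z != y:
                    queue.append((z, x))
    return True

# After WA = R and Q = G, NT and SA can only be blue, yet they are adjacent.
domains = {v: ["R", "G", "B"] for v in VARIABLES}
domains["WA"] = ["R"]
domains["Q"] = ["G"]
print(ac3(domains))  # False
```

Forward checking alone would leave NT = {B} and SA = {B} in this situation without noticing the conflict; propagating along the NT and SA arc exposes it.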

Special Constraints – Alldiff. Problem-dependent constraints. Alldiff constraint: all variables involved must take different values. If m variables are involved in the constraint and they have n possible distinct values between them, then m > n makes the Alldiff constraint inconsistent. An example: 3 variables NT, SA, Q with NT, SA, Q ∊ {green, blue}; m = 3 > n = 2, so the constraint is inconsistent.
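The counting test for Alldiff is a one-line check. The sketch below uses a hypothetical function name, alldiff_possible, and implements only the necessary condition stated on the slide: it can prove inconsistency, but a True result does not guarantee that a solution exists.

```python
def alldiff_possible(domains):
    """Return False when m variables share fewer than m distinct values,
    in which case the Alldiff constraint cannot be satisfied."""
    values = set().union(*domains.values())
    return len(domains) <= len(values)

two_colours = {"NT": ["green", "blue"],
               "SA": ["green", "blue"],
               "Q": ["green", "blue"]}
three_colours = {"NT": ["red", "green", "blue"],
                 "SA": ["green", "blue"],
                 "Q": ["green", "blue"]}
print(alldiff_possible(two_colours))    # False: m = 3 > n = 2
print(alldiff_possible(three_colours))  # True: m = 3, n = 3
```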

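Special Constraints – Atmost. Resource constraint: the Atmost constraint (use of a limited resource). An example: in total, no more than 10 personnel should be assigned to jobs, written Atmost(10, PA1, PA2, PA3, PA4), where PA1 to PA4 are the personnel assigned to Job1 to Job4. If each domain is {3, 4, 5, 6}, the minimum value is 3 and 3 + 3 + 3 + 3 = 12 > 10 (minimum resource 12 but available resource 10), so the constraint is inconsistent. If each domain is {2, 3, 4, 5, 6}, then 2 + 2 + 2 + 2 = 8 < 10 (consistent), but 2 + 2 + 2 + 5 = 11 > 10 and 2 + 2 + 2 + 6 = 12 > 10 (inconsistent), so delete 5 and 6 from each domain.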
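The arithmetic in the Atmost example can be sketched as a small pruning routine. The function name atmost_prune is hypothetical, and the sketch assumes every domain is a non-empty list of numbers: it first compares the sum of the minimum values with the limit, then deletes any value that exceeds the limit even when all other variables take their minimum.

```python
def atmost_prune(limit, domains):
    """Return pruned domains for the constraint sum(values) <= limit,
    or None if even the minimum values exceed the limit."""
    mins = {v: min(vals) for v, vals in domains.items()}
    total_min = sum(mins.values())
    if total_min > limit:
        return None  # inconsistent: the minimum demand exceeds the resource
    return {v: [x for x in vals if total_min - mins[v] + x <= limit]
            for v, vals in domains.items()}

jobs = ["PA1", "PA2", "PA3", "PA4"]
print(atmost_prune(10, {j: [3, 4, 5, 6] for j in jobs}))     # None
print(atmost_prune(10, {j: [2, 3, 4, 5, 6] for j in jobs}))  # each [2, 3, 4]
```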

Summary • CSPs are a special kind of problem: states are defined by values of a fixed set of variables, and the goal test is defined by constraints on variable values • Backtracking = depth-first search with one variable assigned per node • Variable ordering and value selection heuristics help significantly • Forward checking prevents assignments that guarantee later failure • Constraint propagation (e.g., arc consistency) does additional work to constrain values and detect inconsistencies

Acknowledgements The lecture slides are adapted from the following sources: http://sharif.ir/~sani/courses/ai/ai5-csp2.ppt Artificial Intelligence: A Modern Approach by Russell and Norvig Slides from the AIMA instructor’s resource page at: http://aima.eecs.berkeley.edu/slides-ppt/m5-csp.ppt