Processes and Threads


Processes and Threads (Chapter 2) • 2.1 Processes • 2.2 Threads • 2.3 Interprocess communication • 2.4 Classical IPC problems • 2.5 Scheduling

Processes: The Process Model • In the process model, all runnable software is organized as a collection of processes. • Multiprogramming of four programs • Conceptual model of four independent, sequential processes • Only one program is active at any instant

Process Creation • Principal events that cause process creation • System initialization • Execution of a process creation system call • User request to create a new process • Initiation of a batch job • Foreground processes are those that interact with users and perform work for them. • Background processes that handle incoming requests are called daemons.

Process Creation • How to list the running processes? • In UNIX, use the ps command. • In Windows 95/98/Me, use Ctrl-Alt-Del. • In Windows NT/2000/XP, use the task manager. • In UNIX, a fork() system call is used to create a new process. • Initially, the parent and the child have the same memory image, the same environment strings, and the same open files. • But the parent and the child each have their own distinct address space, so a change made by one is not visible to the other. • The execve() system call can be used to load a new program into the child. • In Windows, CreateProcess handles both creating the process and loading the correct program into it.

Process Termination • Conditions that terminate processes • Normal exit (voluntary) • exit in UNIX and ExitProcess in Windows. • Error exit (voluntary) • Example: compilation errors. • Fatal error (involuntary) • Example: core dump. • Killed by another process (involuntary) • kill in UNIX and TerminateProcess in Windows.

Process Hierarchies • A parent creates a child process, and child processes can create their own processes • This forms a hierarchy • UNIX calls this a "process group" • In UNIX, for example: init → sshd → sh → ps • Windows has no concept of a process hierarchy • All processes are created equal

Process Model - Example • In UNIX, for example, a fork() system call is used to create child processes in such a hierarchy. A good example is a shell. • Consider the menu-driven shell given in programs/c/Ex3.c.

Process Model - Example • The algorithm (Ex3.c) is: • Display the menu and obtain the user's request (1=ls,2=ps,3=exit). • If the user wants to exit, then terminate the shell process. • Otherwise: • Fork off a child process. • The child process executes the option selected, while the parent waits for the child to complete. • The child exits. • The parent goes back to step 1.

Process States (1) • Possible process states • Running - using the CPU. • Ready - runnable (in the ready queue). • Blocked - unable to run until an external event occurs; e.g., waiting for a key to be pressed. • Transitions between states shown

Process States (2) • Lowest layer of process-structured OS (Scheduler) • handles interrupts, scheduling • Above that layer are sequential processes • User processes, disk processes, terminal processes

Implementation of Processes • The operating system maintains a process table with one entry (called a process control block (PCB)) for each process. • When a context switch occurs between processes P1 and P2, the current state of the RUNNING process, say P1, is saved in the PCB for process P1 and the state of a READY process, say P2, is restored from the PCB for process P2 to the CPU registers, etc. Then, process P2 begins RUNNING. • Note: This rapid switching between processes gives the illusion of true parallelism and is called pseudoparallelism.

Implementation of Processes (1) Fields of a process table entry

Implementation of Processes (2) Skeleton of what lowest level of OS does when an interrupt occurs

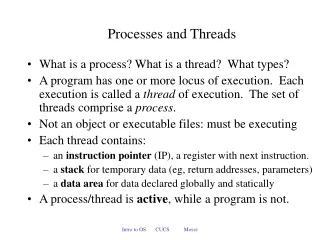

Thread vs. Process • A thread, also called a lightweight process (LWP), is a basic unit of CPU utilization. • It comprises a thread ID, a program counter, a register set, and a stack. • A traditional (heavyweight) process has a single thread of control. • If the process has multiple threads of control, it can do more than one task at a time. This situation is called multithreading.

Threads: The Thread Model (1) (a) Three processes each with one thread (b) One process with three threads

The Thread Model (2) • Items shared by all threads in a process • Items private to each thread

The Thread Model (3) Each thread has its own stack

Thread Usage • Why use threads? • Responsiveness: Multiple activities can be done at the same time, which can speed up the application. • Resource Sharing: Threads share the memory and the resources of the process to which they belong. • Economy: They are easy to create and destroy. • Utilization of MP (multiprocessor) Architectures: They are useful on multiple-CPU systems. • Example - Word Processor, Spreadsheet: • One thread interacts with the user. • One formats the document (spreadsheet). • One writes the file to disk periodically.

Thread Usage (1) A word processor with three threads

Thread Usage • Example – Web server: • One thread, the dispatcher, distributes the requests to a worker thread. • A worker thread handles the requests. • Example – data processing: • An input thread • A processing thread • An output thread

Thread Usage (2) A multithreaded Web server

Thread Usage (3) • Rough outline of code for previous slide (a) Dispatcher thread (b) Worker thread

Thread Usage (4) Three ways to construct a server

User Threads • Thread management done by user-level threads library • User-level threads are fast to create and manage. • Problem: If the kernel is single-threaded, then any user-level thread performing a blocking system call will cause the entire process to block. • Examples - POSIX Pthreads - Mach C-threads - Solaris UI-threads

Implementing Threads in User Space A user-level threads package

Kernel Threads • Supported by the Kernel: The kernel performs thread creation, scheduling, and management in kernel space. • Disadvantage: high cost • Examples - Windows 95/98/NT/2000/XP - Solaris - Tru64 UNIX - BeOS - OpenBSD - FreeBSD - Linux

Implementing Threads in the Kernel A threads package managed by the kernel

Hybrid Implementations Multiplexing user-level threads onto kernel-level threads

Scheduler Activations • Goal – mimic functionality of kernel threads • gain performance of user space threads • Avoids unnecessary user/kernel transitions • Kernel assigns virtual processors to each process • lets runtime system allocate threads to processors • Makes an upcall to the run-time system to switch threads. • Problem: Fundamental reliance on kernel (lower layer) calling procedures in user space (higher layer)

Pop-Up Threads • Creation of a new thread when message arrives (a) before message arrives (b) after message arrives

Making Single-Threaded Code Multithreaded Conflicts between threads over the use of a global variable

Making Single-Threaded Code Multithreaded Threads can have private global variables

Thread Programming • Pthread - a POSIX standard (IEEE 1003.1c) API for thread creation and synchronization, declared in <pthread.h>. • Solaris 2 is a version of UNIX with support for threads at the kernel and user levels, SMP, and real-time scheduling. • Solaris 2 implements the Pthread API and UI threads.

Thread Programming • Pthread - a POSIX standard (IEEE 1003.1c) API for thread creation and synchronization. • Example: thread-sum.c, pthread-ex.c, helloworld.cc • Solaris 2 threads: • Solaris 2 is a version of UNIX with support for threads at the kernel and user levels, SMP, and real-time scheduling. • Solaris 2 implements the Pthread API and UI threads. • Example: thread-ex.c, lwp.c

Thread Programming • Java threads may be created by: • Extending the Thread class • Implementing the Runnable interface • Calling the start method for the new object does two things: • It allocates memory and initializes a new thread in the JVM. • It calls the run method, making the thread eligible to be run by the JVM. • Java threads are managed by the JVM. • Example: ThreadEx.java, ThreadSum.java

Interprocess Communication • Three issues are involved in interprocess communication (IPC): • How one process can pass information to another. • How to make sure two or more processes do not get into each other’s way when engaging in critical activities. • Proper sequencing when dependencies are present. • Race conditions are situations in which several processes access shared data and the final result depends on the order of operations.

Interprocess Communication: Race Conditions Two processes want to access shared memory at the same time

Race Condition • Assume there are two shared variables: out, which points to the next file to be printed, and in, which points to the next free slot in the directory. • Assume in is currently 7. The following situation could happen: Process A reads in and stores the value 7 in a local variable. A switch to process B happens. Process B reads in, stores its file name in slot 7, and updates in to 8. Process A runs again, stores its file name in slot 7 (overwriting B's entry), and updates in to 8. • The file name left in slot 7 is determined by whichever process stored last, so the other file is never printed. This is a race condition.

Critical Regions • The key to avoiding race conditions is to prohibit more than one process from reading and writing the shared data at the same time. • Four conditions for a good solution • No two processes may be simultaneously inside their critical regions (mutual exclusion) • No assumptions may be made about speeds or numbers of CPUs • No process running outside its critical region may block another process (progress) • No process should have to wait forever to enter its critical region

Critical Regions (2) Mutual exclusion using critical regions

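Mutual Exclusion Solution - Disabling Interrupts • If a process disables all interrupts just after entering its critical region and re-enables them just before leaving it, no context switch can occur while it is inside. • The danger is that a user process might never re-enable interrupts, and on a multiprocessor the disable affects only one CPU. • Thus, it is unwise to allow user processes to disable interrupts. • However, it is convenient (and even necessary) for the kernel to disable interrupts while a context switch is being performed.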

Mutual Exclusion Solution - Lock Variable

    shared int lock = 0;

    /* entry_code: execute before entering critical section */
    while (lock != 0)
        /* do nothing */ ;
    lock = 1;

    - critical section -

    /* exit_code: execute after leaving critical section */
    lock = 0;

• This solution may violate property 1 (mutual exclusion). If a context switch occurs after one process finishes the while statement, but before it sets lock = 1, then two (or more) processes may be able to enter their critical sections at the same time.

Mutual Exclusion Solution Proposed solution to critical region problem (a) Process 0. (b) Process 1.

Mutual Exclusion Solution – Strict Alternation • This solution may violate the progress requirement. Since the processes must strictly alternate entering their critical sections, a process wanting to enter its critical section twice in a row will be blocked until the other process decides to enter (and leave) its critical section, as shown in the table below. • The solution of strict alternation is shown in Ex5.c. Be sure to note the way shared memory is allocated using shmget and shmat.

Mutual Exclusion with Busy Waiting Peterson's solution for achieving mutual exclusion

Mutual Exclusion Solution – Peterson’s • This solution satisfies all 4 properties of a good solution. Unfortunately, this solution involves busy waiting in the while loop. Busy waiting can lead to problems we will discuss below. • Challenge: Write the code for Peterson's solution using Ex5.c (the strict alternation code) as a starting point.

Hardware solution: Test-and-Set Locks (TSL) • The hardware must support a special instruction, tsl, which does 2 things in a single atomic action: tsl register, flag: (a) copy a value in memory (flag) to a CPU register and (b) set flag to 1.

Mutual Exclusion with Busy Waiting Entering and leaving a critical region using the TSL instruction

Mutual Exclusion with Busy Waiting • The last two solutions, 4 and 5, require BUSY-WAITING; that is, a process executing the entry code will sit in a tight loop using up CPU cycles, testing some condition over and over, until it becomes true. For example, in 5, in the enter_region code, a process keeps checking over and over to see if the flag has been set to 0. • Busy-waiting may lead to the PRIORITY-INVERSION PROBLEM if simple priority scheduling is used to schedule the processes: a high-priority process can busy-wait forever for a lock held by a low-priority process that never gets the CPU to release it.