Building Dependable Systems: Fault Tolerance and Reliability Concepts

Learn about fault tolerance, masking failures, and process resilience in computing systems to ensure reliability and avoid catastrophic failures.

Presentation Transcript

Fault Tolerance and Reliability in DS

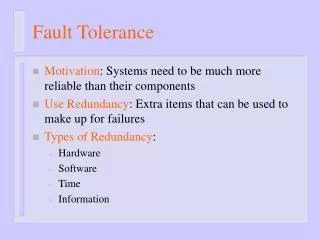

Fault Tolerance
• Basic concepts in fault tolerance
• Masking failure by redundancy
• Process resilience

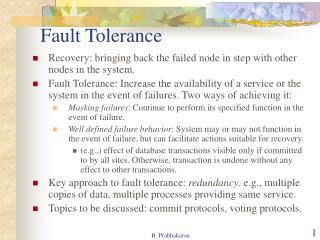

Motivation
• Single-machine systems
  • Failures are all or nothing
  • OS crash, disk failures
• Distributed systems: multiple independent nodes
  • Partial failures are also possible (some nodes fail)
• Question: Can we automatically recover from partial failures?
  • Important issue, since the probability of failure grows with the number of independent components (nodes) in the system
  • Prob(failure) = Prob(any one component fails) = 1 - P(no failure)
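
The formula on this slide can be made concrete with a small calculation. This is a minimal sketch, assuming every node fails independently with the same probability p (an assumption the slide does not state); the function name is illustrative.

```python
def system_failure_probability(p: float, n: int) -> float:
    """Probability that at least one of n components fails.

    Assumes (hypothetically) that components fail independently,
    each with probability p, so P(no failure) = (1 - p) ** n.
    """
    return 1.0 - (1.0 - p) ** n


if __name__ == "__main__":
    # The failure probability grows as nodes are added.
    for n in (1, 10, 100):
        print(n, system_failure_probability(0.01, n))
```

Under these assumptions, even a small per-node failure probability makes some failure likely once the system has many nodes.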

A Perspective
• Computing systems are not very reliable
  • OS crashes frequently (Windows), buggy software, unreliable hardware, software/hardware incompatibilities
• Until recently, computer users were “tech savvy”, i.e. they knew a lot about modern technology, especially computers
  • Could depend on users to reboot and troubleshoot problems
• Growing popularity of the Internet/World Wide Web
  • “Novice” users
  • Need to build more reliable/dependable systems
• Example: what if your TV (or car) broke down every day?
  • Users don’t want to “restart” the TV or fix it (by opening it up)
• Need to make computing systems more reliable

Basic Concepts
• Fault – a physical defect, imperfection, or flaw that occurs within a hardware or software unit.
• Error – the manifestation of a fault; a deviation from accuracy or correctness.
• Failure – occurs if an error results in the system performing one of its functions incorrectly.

Basic Concepts (cont’d)
• Need to build dependable systems
• Requirements for dependable systems
  • Availability: system should be available for use at any given time
    • 99.999% availability (five 9s) => very small down times
  • Reliability: system should run continuously without failure
  • Safety: temporary failures should not result in a catastrophe
    • Example: computing systems controlling an airplane, nuclear reactor
  • Maintainability: a failed system should be easy to repair
  • Security: avoidance or tolerance of deliberate attacks on the system
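
To see what "very small down times" means for five 9s, the availability percentage can be converted into a downtime budget. This is a minimal sketch, assuming a 365-day year; the constant and function names are illustrative.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumption: non-leap year


def allowed_downtime_seconds(availability_percent: float) -> float:
    """Maximum downtime per year that still meets the availability target."""
    return SECONDS_PER_YEAR * (1.0 - availability_percent / 100.0)


if __name__ == "__main__":
    for target in (99.0, 99.9, 99.999):
        minutes = allowed_downtime_seconds(target) / 60
        print(f"{target}% -> {minutes:.2f} minutes of downtime per year")
```

With this definition, 99.999% availability leaves roughly five minutes of downtime per year.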

Basic Concepts (cont’d)
• Fault tolerance: system should provide services despite faults
  • Transient faults: fault lasts for a very short period of time
  • Intermittent faults: fault comes and goes, e.g. due to a loose connection in a circuit
  • Permanent faults: fault persists until repaired, e.g. a burnt-out circuit

Transient faults
• A fault that lasts for a very short period of time.
• A transient fault occurs once and then disappears. If the operation is repeated, the fault goes away.
• E.g. a bird flying through the beam of a microwave transmitter may cause lost bits on some network. If the transmission times out and is retried, it will probably work the second time.
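
Retrying after a timeout, as in the microwave example, can be sketched as follows. This is an illustration only: `retry` and its `attempts` parameter are hypothetical names, and a timed-out transmission is modelled by Python's built-in `TimeoutError`.

```python
def retry(operation, attempts: int = 3):
    """Call operation() until it succeeds or the attempts are used up.

    Only TimeoutError is treated as a transient fault; any other
    exception propagates immediately.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    last_error = None
    for _ in range(attempts):
        try:
            return operation()
        except TimeoutError as err:
            last_error = err  # transient fault: try again
    raise last_error  # fault did not go away, so it is not transient
```

If the fault persists across all attempts, the error is re-raised, which is the point at which the caller should stop treating it as transient.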

Failure Models

| Type of failure | Description |
|---|---|
| Crash failure | A server halts, but is working correctly until it halts |
| Omission failure | A server fails to respond to incoming requests |
| – Receive omission | A server fails to receive incoming messages |
| – Send omission | A server fails to send messages |
| Timing failure | A server's response lies outside the specified time interval |
| Response failure | The server's response is incorrect |
| – Value failure | The value of the response is wrong |
| – State transition failure | The server deviates from the correct flow of control |
| Arbitrary failure | A server may produce arbitrary responses at arbitrary times |

Different types of failures.

Fault types
• Node (hardware) faults
• Program (software) faults
• Communication faults
• Timing faults
Implies types of redundancy

Types of redundancy
• Hardware redundancy: extra PE, I/O
• Software redundancy: N-version programming (NVP)
  • A software engineering method in which multiple functionally equivalent programs are independently developed from the same initial specification
• Information redundancy: error detection and correction methods
• Time redundancy: performing the same operation multiple times, such as multiple executions of a program or multiple transmissions of the same data

Fault handling methods
• Active replication – all replicated modules and their internal states are closely synchronized.
• Passive replication – only one module is active, but the other modules' internal states are regularly updated by means of checkpoints from the active module.
• Semi-active replication – hybrid of active and passive replication. Low recovery overhead.

Failure Masking by Redundancy
Triple modular redundancy.
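
In triple modular redundancy, three replicas compute the same result and a voter forwards the majority value, so one faulty replica is masked. The voter can be sketched as below; `majority_vote` is an illustrative name, and the sketch assumes the replica outputs are hashable values.

```python
from collections import Counter


def majority_vote(outputs):
    """Return the value produced by a strict majority of replicas.

    Raises RuntimeError when no value has a strict majority, in which
    case the fault cannot be masked.
    """
    value, count = Counter(outputs).most_common(1)[0]
    if count * 2 <= len(outputs):
        raise RuntimeError("no majority among replica outputs")
    return value
```

For example, `majority_vote([7, 7, 9])` returns 7: the single deviating replica is outvoted.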

Process Resilience
• Handling faulty processes: organize several processes into a group
  • All processes perform the same computation
  • All messages are sent to all members of the group
  • A majority needs to agree on the results of a computation
  • Ideally want multiple, independent implementations of the application (to prevent identical bugs)
• Use process groups to organize such processes

Flat Groups versus Hierarchical Groups
• Flat group:
  • Everyone has responsibility
  • Delay for consensus
  • Independent
• Advantages and disadvantages?

Agreement in Faulty Systems
• How should processes agree on the results of a computation?
• K-fault tolerant: system can survive k faults and yet function
• Assume processes fail silently
  • Need (k+1) redundancy to tolerate k faults
• Byzantine failures: processes run even if sick
  • Produce erroneous, random, or malicious replies
  • Byzantine failures are the most difficult to deal with
  • Need ? redundancy to handle Byzantine faults

Byzantine Faults
• Simplified scenario: two perfect processes with an unreliable channel
  • Need to reach agreement on a 1-bit message
• Two-army problem: two armies waiting to attack
  • Each army coordinates with a messenger
  • Messenger can be captured by the hostile army
  • Can the generals reach agreement?
  • Property: two perfect processes can never reach agreement in the presence of an unreliable channel
• Byzantine generals problem: can N generals reach agreement with a perfect channel?
  • M generals out of N may be traitors

Byzantine Generals Problem
• Recursive algorithm by Lamport
• The Byzantine generals problem for 3 loyal generals and 1 traitor.
  a) The generals announce their troop strengths (in units of 1 kilosoldiers).
  b) The vectors that each general assembles based on (a).
  c) The vectors that each general receives in step 3.

Byzantine Generals Problem Example
• The same as in the previous slide, except now with 2 loyal generals and 1 traitor.
• Property: with m faulty processes, agreement is possible only if 2m+1 processes function correctly [Lamport 82]
  • Need more than two-thirds of the processes to function correctly
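
The bound on this slide (2m+1 correct processes out of 3m+1 in total) can be checked against the two examples. This is a minimal sketch; the function name and parameters are illustrative.

```python
def agreement_possible(total: int, faulty: int) -> bool:
    """Check the bound from the slide: with m faulty processes,
    at least 2m + 1 correct ones are needed, i.e. total >= 3m + 1."""
    correct = total - faulty
    return correct >= 2 * faulty + 1


if __name__ == "__main__":
    print(agreement_possible(total=4, faulty=1))  # 3 loyal generals, 1 traitor
    print(agreement_possible(total=3, faulty=1))  # 2 loyal generals, 1 traitor
```

The first case satisfies the bound and the second does not, matching the two slide examples.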

More on Fault Tolerance
• Reliable communication
  • One-one communication
  • One-many communication
• Distributed commit
  • Two-phase commit
  • Three-phase commit
• Failure recovery
  • Checkpointing
  • Message logging

Reliable One-One Communication
• Issues were discussed in Lecture 3
  • Use reliable transport protocols (TCP) or handle at the application layer
• RPC semantics in the presence of failures
• Possibilities:
  • Client unable to locate server
  • Lost request messages
  • Server crashes after receiving the request
  • Lost reply messages
  • Client crashes after sending the request

Reliable One-Many Communication
• Reliable multicast
  • Lost messages => need to retransmit, i.e. guarantee receipt
• Possibilities
  • ACK-based schemes
    • Sender can become a bottleneck
  • NACK-based schemes

Atomic Multicast
• Atomic multicast: a guarantee that all processes receive the message or none at all
  • Replicated database example
• Problem: how to handle process crashes?
• Solution: group view
  • Each message is uniquely associated with a group of processes
    • View of the process group when the message was sent
    • All processes in the group should have the same view (and agree on it)
Figure: Virtually synchronous multicast. After some messages have been multicast, p3 crashes. Before crashing, it succeeded in multicasting a message to p2 and p4 but not to p1. Virtual synchrony guarantees that the message is not delivered at all, effectively establishing the situation that the message was never sent before p3 crashed.

Implementing Virtual Synchrony in Isis
• Communication: reliable, order-preserving, point-to-point
• Requirement: all messages are delivered to all nonfaulty processes in G
• Solution
  • each pj in G keeps a message in the hold-back queue until it knows that all pj in G have received it
  • a message received by all is called stable
  • only stable messages are allowed to be delivered
  • view change Gi => Gi+1 (e.g. a new process wants to join group Gi; there may also be the case of Gi-1, if a process wants to leave the group):
    • multicast all unstable messages to all pj in Gi+1
    • multicast a flush message to all pj in Gi+1
    • after having received a flush message from all: install the new view Gi+1
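
The hold-back rule (deliver a message only once it is stable) can be sketched in a few lines. This is not Isis code: the class, its methods, and the way acknowledgements arrive are assumptions made for illustration, and message ordering and view changes are left out.

```python
class HoldBackQueue:
    """Holds messages until every group member is known to have them."""

    def __init__(self, members):
        self.members = set(members)
        self.known_receivers = {}  # message id -> members known to have it
        self.delivered = []        # stable messages, in delivery order

    def note_received(self, msg_id, member):
        """Record that `member` has received `msg_id`; deliver if now stable."""
        receivers = self.known_receivers.setdefault(msg_id, set())
        receivers.add(member)
        if receivers >= self.members and msg_id not in self.delivered:
            self.delivered.append(msg_id)

    def unstable(self):
        """Messages that would have to be re-multicast on a view change."""
        return [m for m in self.known_receivers if m not in self.delivered]
```

On a view change, the messages returned by `unstable()` are the ones a member would multicast before sending its flush message.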

Implementing Virtual Synchrony in Isis
• Virtual synchrony in the case of process failures can be defined precisely in terms of process groups and changes to group membership
  a) Process 4 notices that process 7 has crashed, sends a view change
  b) Process 6 sends out all its unstable messages, followed by a flush message
  c) Process 6 installs the new view when it has received a flush message from everyone else

Distributed Commit
• Atomic multicast is an example of a more general problem
  • All processes in a group perform an operation, or none at all
  • Examples:
    • Reliable multicast: operation = delivery of a message
    • Distributed transaction: operation = commit transaction
• Problem of distributed commit
  • All-or-nothing operations in a group of processes
• Possible approaches
  • Two-phase commit (2PC) [Gray 1978]
  • Three-phase commit

Distributed commit protocols
• How to execute commit for distributed transactions
• Issue: how to ensure atomicity and durability
• One-phase commit (1PC): the coordinator communicates with all servers to commit. Problem: a server cannot abort a transaction.
• Two-phase commit (2PC): allows any server to abort its part of a transaction. Commonly used.
• Three-phase commit (3PC): avoids blocking servers in the presence of coordinator failure. Mostly discussed in the literature, rarely used in practice.

Two-Phase Commit (Coordinator)
• Phase 1: When the coordinator is ready to commit the transaction
  • Place Prepare(T) state in log on stable storage
  • Send Vote_request(T) message to all other participants
  • Wait for replies

Two-Phase Commit (Coordinator)
• Phase 2: Coordinator
  • If any participant replies Abort(T)
    • Place Abort(T) state in log on stable storage
    • Send Global_Abort(T) message to all participants
    • Locally abort transaction T
  • If all participants reply Ready_to_commit(T)
    • Place Commit(T) state in log on stable storage
    • Send Global_Commit(T) message to all participants
    • Proceed to commit transaction locally

Two-Phase Commit (Participant)
• Phase I: Participant gets Vote_request(T) from coordinator
  • Place Abort(T) or Ready(T) state in local log
  • Reply with Abort(T) or Ready_to_commit(T) message to coordinator
  • If Abort(T) state, locally abort transaction
• Phase II: Participant
  • Wait for Global_Abort(T) or Global_Commit(T) message from coordinator
  • Place Abort(T) or Commit(T) state in local log
  • Abort or commit locally per message

Implementing Two-Phase Commit
actions by coordinator:
    write START_2PC to local log;
    multicast VOTE_REQUEST to all participants;
    while not all votes have been collected {
        wait for any incoming vote;
        if timeout {
            write GLOBAL_ABORT to local log;
            multicast GLOBAL_ABORT to all participants;
            exit;
        }
        record vote;
    }
    if all participants sent VOTE_COMMIT and coordinator votes COMMIT {
        write GLOBAL_COMMIT to local log;
        multicast GLOBAL_COMMIT to all participants;
    } else {
        write GLOBAL_ABORT to local log;
        multicast GLOBAL_ABORT to all participants;
    }
Outline of the steps taken by the coordinator in a two-phase commit protocol
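
The coordinator's decision rule can be sketched as a small runnable simulation. This is an in-process illustration only, not a networked implementation: votes are passed in as a dictionary, a vote of `None` stands in for a timeout, and a Python list stands in for the log on stable storage. All names are illustrative.

```python
def coordinator_decide(participant_votes, coordinator_vote="COMMIT"):
    """Return (decision, log) for one run of two-phase commit.

    participant_votes maps a participant name to "VOTE_COMMIT",
    "VOTE_ABORT", or None (no vote arrived before the timeout).
    """
    log = ["START_2PC"]  # stand-in for the coordinator's local log
    all_commit = all(v == "VOTE_COMMIT" for v in participant_votes.values())
    if all_commit and coordinator_vote == "COMMIT":
        decision = "GLOBAL_COMMIT"
    else:
        # Any abort vote or any missing vote forces a global abort.
        decision = "GLOBAL_ABORT"
    log.append(decision)  # log the decision before multicasting it
    return decision, log
```

Writing the decision to the log before multicasting it is what lets a recovering coordinator resend the same decision.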

Implementing 2PC
actions by participant:
    write INIT to local log;
    wait for VOTE_REQUEST from coordinator;
    if timeout {
        write VOTE_ABORT to local log;
        exit;
    }
    if participant votes COMMIT {
        write VOTE_COMMIT to local log;
        send VOTE_COMMIT to coordinator;
        wait for DECISION from coordinator;
        if timeout {
            multicast DECISION_REQUEST to other participants;
            wait until DECISION is received; /* remain blocked */
            write DECISION to local log;
        }
        if DECISION == GLOBAL_COMMIT
            write GLOBAL_COMMIT to local log;
        else if DECISION == GLOBAL_ABORT
            write GLOBAL_ABORT to local log;
    } else {
        write VOTE_ABORT to local log;
        send VOTE_ABORT to coordinator;
    }
actions for handling decision requests: /* executed by separate thread */
    while true {
        wait until any incoming DECISION_REQUEST is received; /* remain blocked */
        read most recently recorded STATE from the local log;
        if STATE == GLOBAL_COMMIT
            send GLOBAL_COMMIT to requesting participant;
        else if STATE == INIT or STATE == GLOBAL_ABORT
            send GLOBAL_ABORT to requesting participant;
        else
            skip; /* participant remains blocked */
    }
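
The rule a participant applies when another participant asks it for the decision can be written as a pure function. This is a minimal sketch; the function name is illustrative, and `None` is used here to mean "no answer, the requester stays blocked".

```python
def answer_decision_request(state):
    """Reply to a DECISION_REQUEST based on the most recent logged state."""
    if state == "GLOBAL_COMMIT":
        return "GLOBAL_COMMIT"
    if state in ("INIT", "GLOBAL_ABORT"):
        # A participant still in INIT has not voted, so the coordinator
        # cannot have decided to commit: aborting is safe.
        return "GLOBAL_ABORT"
    # Any other state (e.g. VOTE_COMMIT): this participant is also
    # waiting for the decision and cannot help the requester.
    return None
```

If every reachable participant returns `None`, all of them remain blocked until the coordinator recovers, which is the blocking problem of 2PC.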

Three-Phase Commit
• As with two-phase commit, three-phase commit has a coordinator who initiates and coordinates the transaction. However, the three-phase protocol introduces a third phase called the pre-commit.
• The aim of this is to 'remove the uncertainty period for participants that have committed and are waiting for the global abort or commit message from the coordinator'.
• When receiving a pre-commit message, participants know that all others have voted to commit. If a pre-commit message has not been received, the participant will abort and release any blocked resources.

Three-Phase Commit
• Coordinator:
  • Phase 1: The coordinator receives a transaction request. If there is a failure at this point, the coordinator aborts the transaction (i.e. upon recovery, it will consider the transaction aborted). Otherwise, the coordinator sends a canCommit? message to the participants and moves to the waiting state.
  • Phase 2: If there is a failure or timeout, or if the coordinator receives a No message in the waiting state, the coordinator aborts the transaction and sends an abort message to all participants. Otherwise, the coordinator will receive Yes messages from all participants within the time window, so it sends preCommit messages to all participants and moves to the prepared state.
  • Phase 3: If the coordinator succeeds in the prepared state, it will move to the commit state. However, if the coordinator times out while waiting for an acknowledgement from a participant, it will abort the transaction. In the case where all acknowledgements are received, the coordinator moves to the commit state as well.

Three-Phase Commit
• Participants:
  • Phase 1: The participant receives a canCommit? message from the coordinator. If the participant agrees, it sends a Yes message to the coordinator and moves to the prepared state. Otherwise, it sends a No message and aborts. If there is a failure, it moves to the abort state.
  • Phase 2: In the prepared state, if the participant receives an abort message from the coordinator, fails, or times out waiting for a commit, it aborts. If the participant receives a preCommit message, it sends an ACK message back and awaits a final commit or abort.
  • Phase 3: If, after a participant receives a preCommit message, the coordinator fails or times out, the participant goes forward with the commit.

Three-Phase Commit
• Two-phase commit: problem if the coordinator crashes (processes block)
• Three-phase commit: variant of 2PC that avoids blocking

Recovery
• Techniques thus far allow failure handling
• Recovery: operations that must be performed after a failure to recover to a correct state
• Techniques:
  • Checkpointing:
    • Periodically checkpoint state
    • Upon a crash, roll back to a previous checkpoint with a consistent state

Independent Checkpointing
• Each process periodically checkpoints independently of other processes
• Upon a failure, work backwards to locate a consistent cut
• Problem: if the most recent checkpoints form an inconsistent cut, will need to keep rolling back until a consistent cut is found
• Cascading rollbacks can lead to a domino effect.
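
Whether a set of checkpoints forms a consistent cut can be tested with a simple rule: no checkpoint may record the receipt of a message that no checkpoint records as sent. This is a minimal sketch under an assumed representation, where each checkpoint stores the ids of messages sent and received so far; the data layout and names are hypothetical.

```python
def is_consistent_cut(checkpoints):
    """checkpoints maps a process name to a dict with two sets of
    message ids: "sent" and "received" (as of that checkpoint)."""
    sent_anywhere = set()
    for cp in checkpoints.values():
        sent_anywhere |= cp["sent"]
    # Every received message must have been sent within the cut.
    return all(cp["received"] <= sent_anywhere for cp in checkpoints.values())
```

When the latest checkpoints fail this test, recovery has to fall back to an earlier checkpoint on at least one process and test again, which is how cascading rollbacks arise.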

Coordinated Checkpointing
• Take a distributed snapshot
• Upon a failure, roll back to the latest snapshot
  • All processes restart from the latest snapshot

Message Logging
• Checkpointing is expensive
  • All processes restart from the previous consistent cut
  • Taking a snapshot is expensive
  • Infrequent snapshots => all computations after the previous snapshot will need to be redone [wasteful]
• Combine checkpointing (expensive) with message logging (cheap)
  • Take infrequent checkpoints
  • Log all messages between checkpoints to local stable storage
  • To recover: simply replay messages from the previous checkpoint
    • Avoids recomputations from the previous checkpoint
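
Combining an infrequent checkpoint with a message log can be sketched for a single process. This is an illustration under stated assumptions: message handling is deterministic, and in-memory Python objects stand in for stable storage. The class and its methods are hypothetical.

```python
import copy


class LoggedProcess:
    """Toy process whose state is a running total of received amounts."""

    def __init__(self):
        self.state = {"total": 0}
        self.checkpoint = copy.deepcopy(self.state)  # stand-in for stable storage
        self.log = []                                # messages since the checkpoint

    def handle(self, amount):
        self.log.append(amount)  # log the message before applying it
        self.state["total"] += amount

    def take_checkpoint(self):
        self.checkpoint = copy.deepcopy(self.state)
        self.log.clear()  # earlier messages are covered by the checkpoint

    def recover(self):
        """Restore the last checkpoint, then replay the logged messages."""
        self.state = copy.deepcopy(self.checkpoint)
        for amount in self.log:
            self.state["total"] += amount
```

Replay reproduces the pre-crash state only because handling is deterministic; nondeterministic events would have to be logged as well.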