INF6953G: Concepts avancés en infonuagique, Cloud Architectural Patterns

INF6953G: Concepts avancés en infonuagique, Cloud Architectural Patterns. Foutse Khomh, foutse.khomh@polymtl.ca, Room M-4123

Important Problem Areas to Consider When Designing Cloud Applications http://cloudacademy.com/blog/wp-content/uploads/2014/12/IC709534.png

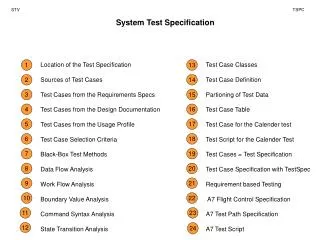

Patterns relevant for Data Partitioning • Sharding Pattern • Compensating Transaction Pattern • Command and Query Responsibility Segregation Pattern • Event Sourcing Pattern • Index Table Pattern • Materialized View Pattern

Command and Query Responsibility Segregation (CQRS) Pattern Description: • Segregate operations that read data from operations that update data using separate interfaces. • This pattern can maximize performance, scalability, and security.

Command and Query Responsibility Segregation (CQRS) Pattern Context and Problem: • In traditional databases all create, read, update, and delete (CRUD) operations are applied to the same representation of the entity.

Command and Query Responsibility Segregation (CQRS) Pattern Context and Problem: This CRUD design has the following disadvantages: • There can be a mismatch between the read and write representations of the data • For example, additional columns or properties that must be updated correctly even though they are not required as part of an operation. • In a collaborative environment, there is a risk of: • contention when records are locked in the data store, or • update conflicts caused by concurrent updates when optimistic locking is used. • There is also a risk of performance degradation due to load on the data store and data access layer, and the complexity of queries required to retrieve information. • Managing security and permissions is cumbersome because each entity is subject to both read and write operations, which might inadvertently expose data in the wrong context.

Command and Query Responsibility Segregation (CQRS) Pattern Solution • Segregate operations that read data (Queries) from operations that update data (Commands) using separate interfaces. • Use different data models for querying and updating. It is common to separate the data into two different stores to maximize performance, scalability, and security.
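The separation described in this slide can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Order` type, the `OrderCommands` and `OrderQueries` classes, and the two in-memory dictionaries standing in for the write and read stores are all hypothetical names, not part of the course material.

```python
from dataclasses import dataclass


@dataclass
class Order:
    """Write-side representation (hypothetical domain object)."""
    order_id: int
    customer: str
    total: float


class OrderCommands:
    """Command interface: changes state and returns no data."""

    def __init__(self, write_store, read_store):
        self._write_store = write_store
        self._read_store = read_store

    def place_order(self, order_id, customer, total):
        if total <= 0:
            raise ValueError("total must be positive")
        self._write_store[order_id] = Order(order_id, customer, total)
        self._project(customer)

    def _project(self, customer):
        # Rebuild the denormalized read model for one customer. Done inline
        # here for brevity; a real system would often do it asynchronously,
        # in which case reads can lag behind writes.
        orders = [o for o in self._write_store.values() if o.customer == customer]
        self._read_store[customer] = {
            "order_count": len(orders),
            "total_spent": sum(o.total for o in orders),
        }


class OrderQueries:
    """Query interface: reads the read model and never modifies it."""

    def __init__(self, read_store):
        self._read_store = read_store

    def customer_summary(self, customer):
        empty = {"order_count": 0, "total_spent": 0.0}
        return dict(self._read_store.get(customer, empty))


write_store, read_store = {}, {}
commands = OrderCommands(write_store, read_store)
queries = OrderQueries(read_store)
commands.place_order(1, "alice", 30.0)
commands.place_order(2, "alice", 12.5)
summary = queries.customer_summary("alice")
```

Note that the query side only ever sees the summary shape, which differs from the `Order` shape used for writes; that is the "different data models" point of the slide.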

Command and Query Responsibility Segregation (CQRS) Pattern Issues and Considerations • Dividing the data store into separate physical stores for read and write operations can add considerable complexity in terms of resiliency and eventual consistency. • The read model store must be updated to reflect changes to the write model store, and it may be difficult to detect when a user has issued a request based on stale read data (meaning that the operation cannot be completed). • Unlike CRUD designs, CQRS code cannot automatically be generated by using scaffold mechanisms.
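One common way to detect a request based on stale read data is to attach a version number to each record and reject commands that carry an outdated version. The slides do not prescribe this technique; the sketch below assumes it, and `WriteModel` and `StaleReadError` are hypothetical names.

```python
class StaleReadError(Exception):
    """Raised when a command was built from an outdated read."""


class WriteModel:
    def __init__(self):
        self._rows = {}  # key -> (version, value)

    def read(self, key):
        # Version 0 means "no record yet".
        return self._rows.get(key, (0, None))

    def update(self, key, new_value, expected_version):
        current_version, _ = self.read(key)
        if current_version != expected_version:
            raise StaleReadError(
                f"{key}: expected version {expected_version}, "
                f"store is at {current_version}"
            )
        self._rows[key] = (current_version + 1, new_value)
        return current_version + 1


store = WriteModel()
store.update("seat-12", "free", expected_version=0)
version_seen, _ = store.read("seat-12")  # two users both read this version
store.update("seat-12", "alice", expected_version=version_seen)
try:
    # The second user still holds the old version, so the command is rejected.
    store.update("seat-12", "bob", expected_version=version_seen)
    conflict_detected = False
except StaleReadError:
    conflict_detected = True
```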

Command and Query Responsibility Segregation (CQRS) Pattern When to Use this Pattern • Collaborative domains where multiple operations are performed in parallel on the same data. • CQRS lets you define commands with sufficient granularity to minimize merge conflicts. • Task-based user interfaces (where users are guided through a complex process as a series of steps) with complex domain models. • Scenarios where performance of data reads must be fine-tuned separately from performance of data writes, especially when the read/write ratio is very high, and when horizontal scaling is required. • Scenarios where business rules change regularly. • Integration with other systems, especially in combination with Event Sourcing, where the temporary failure of one subsystem should not affect the availability of the others.

Command and Query Responsibility Segregation (CQRS) Pattern When NOT to Use this Pattern • The domain or the business rules are simple. • A simple CRUD-style user interface and the related data access operations are sufficient. • For implementation across the whole system. There are specific components of an overall data management scenario where CQRS can be useful, but it can add considerable and often unnecessary complexity where it is not actually required.

Command and Query Responsibility Segregation (CQRS) Pattern • This pattern supports the evolution of the system over time through higher flexibility, and prevents update commands from causing merge conflicts at the domain level. • The read store can be a read-only replica of the write store, or the read and write stores may have a different structure altogether. • Using multiple read-only replicas of the read store can considerably increase query performance and application UI responsiveness, especially in distributed scenarios where read-only replicas are located close to the application instances. • Some database systems, such as SQL Server, provide additional features such as failover replicas to maximize availability.

Data Replication and Synchronization Strategies • When deploying applications to the cloud, it is important to consider carefully how to replicate and synchronize the data used by each instance of the application. • Data replication and synchronization are important to • maximize availability and performance, • ensure consistency, and • minimize data transfer costs between locations. • The two most common topologies for data replication and synchronization are Master-Master Replication and Master-Subordinate Replication.

Master-Master Replication • This topology requires a two-way synchronization mechanism to keep the replicas up to date and to resolve any conflicts that might occur. • In a cloud application, to ensure that response times are kept to a minimum and to reduce the impact of network latency, synchronization typically happens periodically. • The changes made to a replica are batched up and synchronized with other replicas according to a defined schedule. • While this approach reduces the overheads associated with synchronization, it can introduce some inconsistency between replicas before they are synchronized.
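The batching and two-way synchronization described above can be sketched as follows. The conflict rule used here (last writer wins, with the replica name as a tie-breaker) is an assumption chosen for brevity; the slides do not specify how conflicts are resolved. `Replica` and `synchronize` are hypothetical names.

```python
class Replica:
    def __init__(self, name):
        self.name = name
        self.data = {}     # key -> ((timestamp, origin), value)
        self.pending = []  # local changes batched until the next sync

    def write(self, key, value, timestamp):
        stamp = (timestamp, self.name)
        self.data[key] = (stamp, value)
        self.pending.append((key, stamp, value))

    def apply_remote(self, changes):
        for key, stamp, value in changes:
            local = self.data.get(key)
            # Assumed conflict rule: the change with the larger stamp wins.
            if local is None or stamp > local[0]:
                self.data[key] = (stamp, value)


def synchronize(a, b):
    """Exchange the batched changes in both directions."""
    batch_a, batch_b = a.pending, b.pending
    a.pending, b.pending = [], []
    b.apply_remote(batch_a)
    a.apply_remote(batch_b)


east, west = Replica("east"), Replica("west")
east.write("price", 10, timestamp=1)
west.write("price", 12, timestamp=2)
# Until the scheduled sync runs, the two replicas disagree.
inconsistent_before = east.data["price"][1] != west.data["price"][1]
synchronize(east, west)
```

After `synchronize` both replicas hold the later write, which illustrates the trade-off on this slide: cheaper synchronization in exchange for a window of inconsistency.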

Master-Master Replication https://i-msdn.sec.s-msft.com/dynimg/IC709536.png

Master-Subordinate Replication • In this topology, conflicts are unlikely to occur because only the data in the master is dynamic; the remaining replicas are read-only. • Hence, synchronization requirements are simpler than those of Master-Master Replication. • However, replicas can still be out of date until they are synchronized, so data consistency issues persist.

Master-Subordinate Replication https://i-msdn.sec.s-msft.com/dynimg/IC709537.png

Benefits of Replication To improve performance and scalability: • Use Master-Subordinate replication with read-only replicas to improve performance of queries. • Locate replicas close to the applications that access them; • Use simple one-way synchronization to push updates to them from a master database. • Use Master-Master replication to improve the scalability of write operations. • Applications can write more quickly to a local copy of the data; • However, there is additional complexity because two-way synchronization (and possibly conflict resolution) with other data stores is required. • Include in each replica any reference data that is relatively static and is required for queries executed against that replica, to avoid the requirement to cross the network to another datacenter.

Benefits of Replication To improve reliability: • Deploy replicas close to the applications and inside the network boundaries of the applications that use them to avoid delays caused by accessing data across the Internet. • Typically, the latency of the Internet and the correspondingly higher chance of connection failure are the major factors in poor reliability.

Benefits of Replication To improve security: • In a hybrid application, deploy only non-sensitive data to the cloud and keep the rest on-premises. • This approach may also be a regulatory requirement, a condition specified in a service level agreement (SLA), or a business requirement. • Replication and synchronization can take place over the non-sensitive data only.

Benefits of Replication To improve availability: • In a global reach scenario, use Master-Master replication in datacenters in each country or region where the application runs. • Each deployment of the application can use data located in the same datacenter as that deployment, in order to maximize performance and minimize any data transfer costs. • Use replication from the master database to replicas in order to provide failover and backup capabilities. • By keeping additional copies of the data up to date (according to a schedule or on demand) it may be possible to switch the application to use the backup data in case of a failure of the original data store.

Some Implementation Advice • Use a Master-Subordinate Replication topology wherever possible. • This topology requires only one-way synchronization from the master to the subordinates. • Segregate the data into several stores or partitions according to replication requirements. • Partition the data so that updates, and the resulting risk of conflicts, can occur only in the minimum number of places. • Version the data so that no overwriting is required. • When data is changed, a new version is added to the data store alongside the existing versions. • Use a quorum-based approach where a conflicting update is applied only if the majority of data stores vote to commit the update.
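The last two pieces of advice (versioning instead of overwriting, and quorum-based commits) can be sketched together. The voting criterion below, where a store votes to commit only if its latest version matches the version the writer expected, is an assumption made for the example; `VersionedStore` and `quorum_commit` are hypothetical names.

```python
class VersionedStore:
    """Append-only store: a change adds a version, nothing is overwritten."""

    def __init__(self):
        self._versions = {}  # key -> list of values, oldest first

    def put(self, key, value):
        history = self._versions.setdefault(key, [])
        history.append(value)
        return len(history)  # version number of the new entry (1-based)

    def get(self, key, version=None):
        history = self._versions[key]
        return history[-1] if version is None else history[version - 1]

    def latest_version(self, key):
        return len(self._versions.get(key, []))


def quorum_commit(stores, key, value, expected_version):
    """Apply the update only if a strict majority of stores vote to commit."""
    voters = [s for s in stores if s.latest_version(key) == expected_version]
    if 2 * len(voters) <= len(stores):
        return False
    # Only the stores that voted yes apply the change here; bringing the
    # others back in line is left out of this sketch.
    for store in voters:
        store.put(key, value)
    return True


stores = [VersionedStore() for _ in range(3)]
first = quorum_commit(stores, "cfg", "v1", expected_version=0)
stores[2].put("cfg", "rogue")  # one store diverges
second = quorum_commit(stores, "cfg", "v2", expected_version=1)  # 2 of 3 agree
third = quorum_commit(stores, "cfg", "v3", expected_version=0)   # nobody agrees
```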

Examples of Replication and Synchronization Implementations • The Azure SQL Data Sync service • The Microsoft Sync Framework • Azure storage geo-replication • SQL Server database replication

Service Load Balancing Pattern Description: • Provides redundant deployments to accommodate increasing workloads. • This pattern can increase scalability and availability.

Service Load Balancing Pattern Context and Problem: • A single cloud service implementation has a finite capacity, which leads to runtime exceptions, failure and performance degradation when its processing thresholds are exceeded.

Service Load Balancing Pattern Solution • Creates redundant deployments of the cloud service. • Add a load balancing system to dynamically distribute workloads across cloud service implementations. The load balancing agent intercepts messages sent by cloud service consumers (1) and forwards the messages at runtime to the virtual servers so that the workload processing is horizontally scaled (2)

Service Load Balancing Pattern Solution • The duplicate cloud service implementations are organized into a resource pool. • The load balancer is positioned as an external component or may be built-in, allowing hosting servers to balance workloads among themselves.
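As a minimal sketch of the solution above, the following load balancer forwards each incoming message to the next instance in a resource pool. Round-robin is only one possible distribution policy and is assumed here; `RoundRobinLoadBalancer` and `make_instance` are hypothetical names, and the "instances" are plain Python functions rather than real virtual servers.

```python
import itertools


class RoundRobinLoadBalancer:
    """Forwards each message to the next instance in the resource pool."""

    def __init__(self, pool):
        if not pool:
            raise ValueError("resource pool must not be empty")
        self._cycle = itertools.cycle(pool)

    def handle(self, message):
        instance = next(self._cycle)
        return instance(message)


def make_instance(name):
    """Create one redundant deployment of the (toy) cloud service."""
    def serve(message):
        return f"{name} handled {message}"
    return serve


pool = [make_instance(f"vm-{i}") for i in range(3)]
balancer = RoundRobinLoadBalancer(pool)
responses = [balancer.handle(f"req-{n}") for n in range(4)]
```

With three instances and four requests, the fourth request wraps around to the first instance again.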

Service Load Balancing Pattern Issues and Considerations • The load balancer can become a single point of failure • The load balancer is typically located on the communication path between the IT resources generating the workload and the IT resources performing the workload processing. • Existing load balancing mechanisms: • Multi-Layer Network Switch • Dedicated Load Balancer Hardware Appliance • Dedicated Software-Based System (common in server operating systems) • Service Agent (usually controlled by cloud management software)

Service Load Balancing Pattern When to Use this Pattern • Systems experiencing increasing workloads.

Usage Monitoring Pattern Description: • Measures the runtime usage of IT resources.

Usage Monitoring Pattern Context and Problem: • IT resources that are shared can generate a variety of runtime scenarios that, if not tracked and responded to, can cause numerous failure, performance, and security concerns and can further make usage-based reporting and billing impossible.

Usage Monitoring Pattern Solution • Use cloud usage monitors to track and measure the quantity and nature of runtime IT resource usage activity.
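A usage monitor can be approximated in-process with a decorator that records how often, and for how long, each consumer uses a resource. This is a sketch under assumptions: `UsageMonitor`, `store_blob`, and the tenant names are hypothetical, and a real cloud usage monitor would typically sit outside the service rather than inside it.

```python
import time
from collections import defaultdict
from functools import wraps


class UsageMonitor:
    """Tracks call counts and elapsed time per (consumer, resource)."""

    def __init__(self):
        self.calls = defaultdict(int)
        self.seconds = defaultdict(float)

    def track(self, resource):
        def decorator(func):
            @wraps(func)
            def wrapper(consumer, *args, **kwargs):
                start = time.perf_counter()
                try:
                    return func(consumer, *args, **kwargs)
                finally:
                    # Recorded even if the call fails, so failures are billed
                    # and visible too (a design choice of this sketch).
                    key = (consumer, resource)
                    self.calls[key] += 1
                    self.seconds[key] += time.perf_counter() - start
            return wrapper
        return decorator


monitor = UsageMonitor()


@monitor.track("storage")
def store_blob(consumer, payload):
    return len(payload)


store_blob("tenant-a", b"abc")
store_blob("tenant-a", b"abcdef")
store_blob("tenant-b", b"x")
```

The `calls` and `seconds` tables are the raw material for the usage-based reporting and billing mentioned in the problem statement.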

Usage Monitoring Pattern Issues and Considerations • Excessive logging can cause performance degradation.

OpenStack Design Tenets 1. Scalability and elasticity are the main goals 2. Any feature that limits the main goals must be optional 3. Everything should be asynchronous • If you can't do something asynchronously, see #2 4. All required components must be horizontally scalable 5. Always use shared nothing architecture (SN) or sharding • If you can't share nothing/shard, see #2 6. Distribute everything • Especially logic. Move logic to where state naturally exists. 7. Accept eventual consistency and use it where it is appropriate. 8. Test everything. • We require tests with submitted code. (We will help you if you need it) Sources: http://www.openstack.org/downloads/openstack-compute-datasheet.pdf http://wiki.openstack.org/BasicDesignTenets

OpenStack Architecture Source: http://ken.pepple.info/openstack/2012/09/25/openstack-folsom-architecture/

OpenStack comprises 7 core projects that form a complete IaaS solution • Provision and manage virtual resources: Compute (Nova), Storage (Cinder), Network (Quantum) • Self-service portal: Dashboard (Horizon) • Catalog and manage server images: Image (Glance) • Unified authentication, integrates with existing systems: Identity (Keystone) • Petabytes of secure, reliable object storage: Object Storage (Swift) Source: http://ken.pepple.info/openstack/2012/09/25/openstack-folsom-architecture/

Compute: a fully featured, redundant, and scalable cloud computing platform • Key Capabilities: • Manage virtualized server resources • CPU/Memory/Disk/Network Interfaces • API with rate limiting and authentication • Distributed and asynchronous architecture • Massively scalable and highly available system • Live guest migration • Move running guests between physical hosts • Live VM management (Instance) • Run, reboot, suspend, resize, terminate instances • Security Groups • Role Based Access Control (RBAC) • Ensure security by user, role and project • Projects & Quotas • VNC Proxy through web browser Sources: http://ken.pepple.info/openstack/2012/09/25/openstack-folsom-architecture/ http://openstack.org/projects/compute/

The Compute management stack control plane is built on a queue and a database Key Capabilities: Responsible for providing a communications hub and managing data persistence • RabbitMQ is the default queue and MySQL the default database • Documented HA methods • ZeroMQ implementation available to decentralize the queue • A single “cell” (1 Queue, 1 Database) typically scales to between 500 and 1000 physical machines • Cells can be rolled up to support larger deployments • Communications route through the queue • API requests are validated and placed on the queue • Workers listen to queues based on role or role + hostname • Responses are dispatched back through the queue
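The message flow in the last four bullets can be sketched with an in-memory stand-in for the queue. Nothing here uses the real RabbitMQ or Nova APIs: `MessageBus`, `api_submit`, `worker_poll`, the `role.hostname` topic naming, and the `replies` topic are all assumptions made for illustration.

```python
from collections import defaultdict, deque


class MessageBus:
    """In-memory stand-in for the message queue."""

    def __init__(self):
        self.queues = defaultdict(deque)

    def publish(self, topic, message):
        self.queues[topic].append(message)

    def consume(self, topic):
        queue = self.queues[topic]
        return queue.popleft() if queue else None


def api_submit(bus, request, role="compute"):
    """Validate an API request and place it on the queue."""
    if "action" not in request:
        raise ValueError("request needs an 'action'")
    host = request.get("host")
    # Role-wide topic when any worker may serve the request,
    # role + hostname topic when it targets one specific node.
    topic = role if host is None else f"{role}.{host}"
    bus.publish(topic, request)
    return topic


def worker_poll(bus, role, hostname):
    """Check the host-specific queue first, then the shared role queue."""
    for topic in (f"{role}.{hostname}", role):
        message = bus.consume(topic)
        if message is not None:
            # Responses are dispatched back through the queue as well.
            reply = {"handled_by": hostname, "action": message["action"]}
            bus.publish("replies", reply)
            return message
    return None


bus = MessageBus()
topic_any = api_submit(bus, {"action": "run_instance"})
topic_host = api_submit(bus, {"action": "reboot", "host": "node2"})
got_node1 = worker_poll(bus, "compute", "node1")
got_node2 = worker_poll(bus, "compute", "node2")
got_idle = worker_poll(bus, "compute", "node1")
```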

nova-compute manages individual hypervisors and compute nodes Key Capabilities: Responsible for managing all interactions with individual endpoints providing compute resources, e.g. • Attach an iSCSI volume to a physical host, map it to a guest as an additional HDD • Implementations go directly to native hypervisor APIs • Avoids abstraction layers that bring lowest-common-denominator support • Enables easier exploitation of hypervisor differentiators • A service instance runs on every physical compute node, which helps to minimize the failure domain • Support for security groups that define firewall rules • Support for • KVM • LXC • VMware ESX/ESXi (4.1 update 1) • Xen (XenServer 5.5, Xen Cloud Platform) • Hyper-V

nova-scheduler allocates virtual resources to physical hardware Key Capabilities: • Determines which physical hardware to allocate to a virtual resource • The default scheduler uses a series of filters to reduce the set of applicable hosts and uses costing functions to assign a weight to each remaining host • Not a focus point for OpenStack • The default implementation finds the first fit • The shorter the workload lifespan, the less critical the placement decision • If the default does not work, deployers often have specific requirements and develop custom solutions.
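The filter-then-weigh idea can be sketched as below. This is not the Nova scheduler code or its API: `schedule`, the two filters, the weigher, and the host dictionaries are hypothetical, and picking the host with the highest total weight is an assumption about how the weights are used.

```python
def schedule(hosts, request, filters, weighers):
    """Return the best host for the request, or None if none qualifies."""
    # Step 1: filters reduce the set of applicable hosts.
    candidates = [h for h in hosts if all(f(h, request) for f in filters)]
    if not candidates:
        return None
    # Step 2: weighers score the survivors; the highest total wins.
    return max(candidates, key=lambda h: sum(w(h, request) for w in weighers))


def ram_filter(host, request):
    return host["free_ram_mb"] >= request["ram_mb"]


def disk_filter(host, request):
    return host["free_disk_gb"] >= request["disk_gb"]


def free_ram_weigher(host, request):
    # Prefer hosts with the most free memory (spreads load across hosts).
    return host["free_ram_mb"]


hosts = [
    {"name": "node1", "free_ram_mb": 2048, "free_disk_gb": 100},
    {"name": "node2", "free_ram_mb": 8192, "free_disk_gb": 20},
    {"name": "node3", "free_ram_mb": 4096, "free_disk_gb": 200},
]
filters = [ram_filter, disk_filter]
weighers = [free_ram_weigher]
chosen = schedule(hosts, {"ram_mb": 2048, "disk_gb": 50}, filters, weighers)
too_big = schedule(hosts, {"ram_mb": 16384, "disk_gb": 50}, filters, weighers)
```

Here node2 has the most free memory but is removed by the disk filter, so the weigher chooses between node1 and node3.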

nova-api supports multiple API implementations and is the entry point into the cloud Key Capabilities: • APIs supported • OpenStack Compute API (REST-based) • Similar to Rackspace APIs • EC2 API (subset) • Can be excluded • Admin API (nova-manage) • Robust extensions mechanism to add new capabilities

Network automates management of networks and attachments (network connectivity as a service) Key Capabilities: Responsible for managing networks, ports, and attachments on infrastructure for virtual resources • Create/delete tenant-specific L2 networks • L3 support (Floating IPs, DHCP, routing) • Moving to L4 and above in Grizzly • Attach / Detach host to network • Similar to dynamic VLAN support • Support for • Open vSwitch • OpenFlow (NEC & Floodlight controllers) • Cisco Nexus • Nicira

Cinder manages block-based storage, enables persistent storage Key Capabilities: • Responsible for managing the lifecycle of volumes and exposing them for attachment • Its structure is a copy of Compute (Nova), sharing the same characteristics and structure in API server, scheduler, etc. • Enables additional attached persistent block storage for virtual machines • Support for booting virtual machines from nova-volume backed storage • Allows multiple volumes to be attached per virtual machine • Supports the following • iSCSI • RADOS block devices (e.g. Ceph distributed file system) • Sheepdog • Zadara

Identity service offers unified, project-wide identity, token, service catalog, and policy service Key Capabilities: • Identity service provides authentication credential validation and data about Users, Tenants and Roles • Token service validates and manages tokens used to authenticate requests after initial credential verification • Catalog service provides an endpoint registry used for endpoint discovery. • Policy service provides a rule-based authorization engine and the associated rule management interface. • Each service is configured to serve data from a pluggable backend • Key-Value, SQL, PAM, LDAP, Templates • REST-based APIs

Image service provides basic discovery, registration, and delivery services for virtual disk images Key Capabilities: • Think Image Registry, not Image Repository • REST-based APIs • Query for information on public and private disk images • Register new disk images • Disk images can be stored in and delivered from a variety of stores (e.g. SoNFS, Swift) • Supported formats • Raw • Machine (a.k.a. AMI) • VHD (Hyper-V) • VDI (VirtualBox) • qcow2 (Qemu/KVM) • VMDK (VMware) • OVF (VMware, others) References: http://openstack.org/projects/image-service/

Dashboard enables administrators and users to access and provision cloud-based resources through a self-service portal Key Capabilities: • Thin wrapper over APIs, no local state • Registration pattern for applications to hook into • Ships with three central dashboards: a “User Dashboard”, a “System Dashboard”, and a “Settings Dashboard” • Out-of-the-box support for all core OpenStack projects • Nova, Glance, Swift, Quantum • Anyone can add a new component as a “first-class citizen”. • Follow design and style guide. • Visual and interaction paradigms are maintained throughout. • Console Access References: http://horizon.openstack.org/intro.html

Some OpenStack Public Use Cases • Internap • http://www.internap.com/press-release/internap-announces-world%E2%80%99s-first-commercially-available-openstack-cloud-compute-service/ • Rackspace Cloud Servers, Powered by OpenStack • http://www.rackspace.com/blog/rackspace-cloud-servers-powered-by-openstack-beta/ • Deutsche Telekom • http://www.telekom.com/media/media-kits/104982 • AT&T • http://arstechnica.com/business/news/2012/01/att-joins-openstack-as-it-launches-cloud-for-developers.ars • MercadoLibre • http://openstack.org/user-stories/mercadolibre-inc/mercadolibre-s-bid-for-cloud-automation/ • NeCTAR • http://nectar.org.au/ • San Diego Supercomputing Center • http://openstack.org/user-stories/sdsc/