Evaluation Framework for the Canada Health Infostructure Partnerships Program (CHIPP)


Evaluation Framework for the Canada Health Infostructure Partnerships Program (CHIPP). Robert Hanson and Sandra Chatterton Health Canada Joint Conference of the American Evaluation Association and the Canadian Evaluation Society Toronto Ontario October 29, 2005.



Presentation Transcript

Evaluation Framework for the Canada Health Infostructure Partnerships Program (CHIPP) Robert Hanson and Sandra Chatterton Health Canada Joint Conference of the American Evaluation Association and the Canadian Evaluation Society Toronto Ontario October 29, 2005

“There is hardly any activity, any enterprise, which is started out with such tremendous hopes and expectations, and yet which fails so regularly, as love.” (Erich Fromm) – with the possible exception of federal health technologies projects.* (* In 1997, the Auditor General of Canada reported that 89% of federal health technologies projects failed!)

Overview of Presentation Background CHIPP Evaluation Framework What happened Lessons Learned

What is Telehealth? Telehealth is the use of information and communication technology (ICT) to deliver health services, expertise and information over distance.[1] It is sometimes called “telemedicine”. In concert with electronic health record (EHR) systems, it has come to be more commonly referred to in Canada as “e-Health”. [1] Source: Glossary of Telehealth Related Terms, Acronyms and Abbreviations, Health Telematics Unit, University of Calgary, 2002

Examples of telehealth applications Above: Remote viewing and discussion of chest x-ray from Baker Lake NU in Pond Inlet NU Left: Remote inner ear examination in Pond Inlet NU transmitted to Baker Lake NU

Some Context and Policy Issues • Interoperability and standards • Privacy and security of personal information • Sustainability - high failure rate for health technologies funded by federal government (Auditor General, 1997) • Licensure and reimbursement – care delivery across jurisdictions • Best practices - expected to emerge from projects • Resource allocation and usage

CHIPP Launched in 2000 • Canada Health Infostructure Partnerships Program (CHIPP) launched in 2000 as a two-year, $80 million, shared-cost program to support the implementation of innovative telehealth/EHR applications in health services delivery • Applicants were required to include evaluation, risk management, and sustainability plans in their project proposals • 29 out of 185 proposals were funded, with projects in every province and territory • CHIPP paid up to 50% of implementation costs, with contributions ranging from $436K to $12M

CHIPP-funded Projects by Region • Yukon Telehealth Network • IIU Telehealth Network • WestNet Tele-Ophthalmology • SLICK (Teleophthalmology) • Rural telemedicine in Temiscamingue • CLSC du futur • Regional tele-clinic in Trois Rivieres • Vitrine Laval • MOXXI • BC Telehealth • Central BC & Yukon Teleradiology Initiative • MHECCU Tele-psychiatry • Health Link • Bridges to Better Health • Integrated Community Mental Health Information System • SYNAPSE (mental health information system) • WHIC Provider Registry • Manitoba Telehealth • Saskatchewan Telehealth • North Network • Northern Radiology Network • East Ontario Telehealth Network • South West Ontario Telehealth Network • Centre for Minimal Access Surgery • Project Outreach • Regionally Accessible Secure Cardiac Health Record • COMPETE/MOXXI • Health Infostructure Atlantic • Tele-Oncology

Why develop an evaluation framework? • To facilitate accountability – theirs and ours! • To provide direction / clarify expectations • To assist project planning • To promote evaluation as a management tool • To facilitate thematic syntheses/analysis • To lay foundation for ongoing evaluation

Adapting a Framework “That’s good in theory, but not in practice!” If something is good in theory but not in practice, you might wonder if it is a good theory. But, consider how it’s applied or may be adapted. Or, in other words… you don’t have to re-invent the wheel!

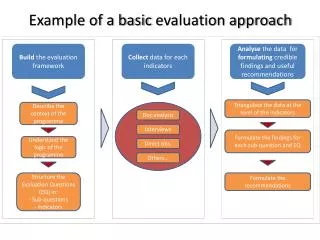

Development of the Framework • Program staff tasked with evaluation planning for the program, including development of evaluation framework for the projects to use • Working group to identify program-specific issues • Don’t want to re-invent the wheel • Search for usable models elsewhere: • Literature review – few models existed; limited applicability! • Consultation and review of other funding programs for evaluation approaches and issues • Framework needed to be adaptable to broad range of project types and sizes • CHIPP framework built upon a model developed by the Institute of Medicine (IOM)

Focus of the IOM Model[1] The IOM model addresses the main factors for success and sustainability of telecommunications in health care: • Quality • Accessibility • Cost • Acceptability [1] Institute of Medicine, Telemedicine – A Guide to Assessing Telecommunications in Health Care, National Academy Press, Washington DC, 1996

Additional CHIPP-specific Issues • Integration into the health system • Health and related impacts • Technology performance • Privacy • Rationale • Lessons learned

CHIPP Evaluation Issues • Quality • Accessibility • Cost • Acceptability • Integration into the health system • Health and related impacts • Technology performance • Privacy • Rationale • Lessons learned

Evaluation Framework Provided at Outset • Evaluation guidelines, including examples of indicators, provided in the call for proposals • Varying degrees of detail and suitability of evaluation plans that were contained in proposals • After projects were selected, regional workshops on evaluation organized to inform and assist with compliance with evaluation framework • Projects and their evaluation were monitored by program staff to varying degrees

What happened? • Demand for project evaluations outstripped evaluative capacity • Project evaluations completed with varying degrees of expertise, rigour, compliance and usefulness • Project implementation took much longer than anticipated. Not enough time for impacts of projects to be properly assessed, even with a two-year extension of the funding end date • Program evaluation conducted in 2004-2005, but not yet released • CHIPP wrapped up in 2004-05. Currently, no provision for post-implementation or impact evaluation at project or program level • Canada Health Infoway has the mandate for promotion of ICT in health, in collaboration with provinces and territories (see http://www.infoway.ca/)

Evaluation Contribution • Providing a foundation for evaluation strategies, definition development and measurement, including common and innovative indicators • Contributes to National Telehealth Outcome Indicators Project (NTOIP) funded by Richard Ivey Foundation (4 preliminary measurements: quality, accessibility, acceptance, cost-effectiveness, i.e. IOM issues) • Series of policy briefs to come on evaluation, standards, change management, integration of e-health within continuum of care, health human resources, and continuing education • Canadian Telehealth Society – CHIPP presentations plus formal agreement to share CHIPP reports • Canadian Telematics Unit at University of Calgary will be repository for CHIPP Database (reports and analyses)

Lessons Learned with CHIPP Evaluation Framework • Important to communicate expectations and best practices (e.g. benchmarking, quasi-experimental design) • Stipulate specific indicators and measures at the outset, to avoid a difficult-to-aggregate proliferation of measures • Important to acknowledge what can be evaluated when, i.e. evaluability! • Ensure that achievement of objectives at program and project levels is explicitly addressed, to facilitate assessment of project and program performance • Important to ensure common understanding of terminology (e.g. utilization of a new system without any consideration of the status quo was interpreted by some to be impact on access) • Promote strategic approaches and usage of project evaluations (e.g. for documentation of business case, summative purposes, etc.)

Any questions? Sandra Chatterton, sandra_chatterton@hc-sc.gc.ca, Telephone: 613-954-8769

Sources for More Information Health Canada Website: - information on telehealth/e-health: http://www.hc-sc.gc.ca/hcs-sss/ehealth-esante/index_e.html - information on completed projects: http://www.hc-sc.gc.ca/hcs-sss/pubs/chipp-ppics/index_e.html - archived information on CHIPP: http://www.hc-sc.gc.ca/hcs-sss/ehealth-esante/infostructure/finance/chipp-ppics/index_e.html Contacts: Sandra Chatterton, sandra_chatterton@hc-sc.gc.ca; Robert Hanson, robert_hanson@hc-sc.gc.ca

Rationale Why was this project considered a good idea? Is this an idea that should be pursued further? Why? What proved to be the most innovative aspects of this project?

Improvements to Health Services From the perspective of patients and providers, how does this project affect the quality of services/care provided? Examples of indicators: - changes in satisfaction of various stakeholders with the quality of service offered - changes in satisfaction with the quality of patient/provider relationship - changes in technical appropriateness of intervention How does this project affect access to, or utilization of, health services? Examples of indicators: - changes in access to available services - changes in the mix of services or nature of services - changes in location or accommodation where services are provided

Integration of Health Services In what ways does this project foster integration, coordination and/or collaboration of health services across the continuum of care (e.g. from primary care to acute care to community and home care)? Examples of indicators: - evidence of improved linkages across the continuum of care (e.g. information sharing, joint planning, cross-training of staff)

Health and Related Impacts/Effects What kinds of health and related impacts have occurred as a result of your project, and on what basis did you draw these conclusions? Examples of indicators: - changes to measurable health status indicators: changes in morbidity (i.e. decline in incidence rates per 1000 population, harm reduction or secondary symptom reduction) - population perspective on changes to health status (i.e. client assessment) - unintended or unanticipated results (e.g. project does not reach a relevant population or reaches an unintended population, or unanticipated impacts on other aspects of the health system) - broader societal and non-health–related impacts
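As an illustration of the morbidity indicator above (decline in incidence rates per 1000 population), the arithmetic might be sketched as follows. This is a minimal sketch, not part of the CHIPP framework: the function names are hypothetical and the figures are invented for illustration only.

```python
def incidence_per_1000(new_cases: int, population: int) -> float:
    """Incidence rate expressed as new cases per 1000 population."""
    if population <= 0:
        raise ValueError("population must be positive")
    return 1000 * new_cases / population


def change_from_baseline(baseline_rate: float, followup_rate: float) -> float:
    """Absolute change in rate; a negative value indicates a decline."""
    return followup_rate - baseline_rate


# Hypothetical figures, for illustration only (not CHIPP data).
baseline = incidence_per_1000(new_cases=48, population=12000)
followup = incidence_per_1000(new_cases=36, population=12000)
change = change_from_baseline(baseline, followup)
print(f"baseline={baseline}, follow-up={followup}, change={change}")
```

A comparison of this kind is only meaningful when a baseline was measured before implementation, which is why baseline data is asked for elsewhere in the framework.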

Cost-effectiveness Does the project contribute to a more cost-effective service than what is currently being provided? Examples of indicators: - Does comparative baseline information exist? Who benefits? Patients, health system, health institutions, health practitioners, others? How do they benefit? How does the project contribute to a more cost-effective service than what is currently being provided? Examples of indicators: - faster response time - fewer steps necessary to provide service - reduction in transportation time for patients - fewer interventions by medical practitioners required

Lessons Learned What lessons have you learned in developing and implementing this project, that might be useful to other jurisdictions/regions/settings, and to other programs? Examples of indicators: What relationships were found to exist between the characteristics of the implementation and the outcomes of this project? What elements of this project seem particularly transferable to other jurisdictions? How did the project affect the interactions between the members of the health team and was this helpful or problematic? Specify the positive and negative effects or results experienced during the life of your project. What were the consequences of these results, and, where appropriate, how were they dealt with? Examples of indicators: - Identify the problems and obstacles that resulted in negative consequences for the project; how could they have been anticipated and overcome?

Technology Performance How well has the technology met the project’s requirements? Examples of indicators: - vendor supportive of project - ease of extraction of data - ease in updating and reporting technology-health team interactions

Electronic Health Records: Privacy In what ways have the means for collecting, using and disclosing personal health information been improved to ensure privacy? Examples of indicators: - the amount of personal information being collected is minimized - direct individual consent on information collected In what ways are the project participants’ (providers, clients, patients, administrators, others) satisfied with the protection of information? Examples of indicators: - Personal information can be accessed by authorized individuals and only on a need to know basis - A log is kept of all access to personal information and access violations are dealt with promptly

Electronic Health Record Privacy (continued) In what ways are the project participants’ (providers, clients, patients, administrators, others) satisfied with the protection of information? Example of indicator: - results of client and health service provider satisfaction survey (compared to baseline data)