Supervised Distance Metric Learning

Supervised Distance Metric Learning. Presented at CMU’s Computer Vision Misc-Read Reading Group May 9, 2007 by Tomasz Malisiewicz. Overview. Short Metric Learning Overview Eric Xing et al (2002) Kilian Weinberger et al (2005) Local Distance Functions Andrea Frome et al (2006).


Supervised Distance Metric Learning Presented at CMU’s Computer Vision Misc-Read Reading Group May 9, 2007 by Tomasz Malisiewicz

Overview • Short Metric Learning Overview • Eric Xing et al (2002) • Kilian Weinberger et al (2005) • Local Distance Functions • Andrea Frome et al (2006)

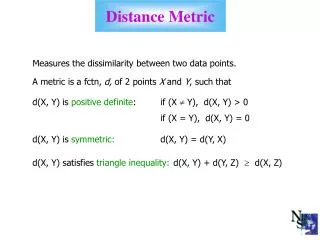

What is a metric? • Technically, a metric on a space X is a function d : X × X → ℝ • It satisfies non-negativity, identity (d(x, y) = 0 only when x = y), symmetry, and the triangle inequality • Forget about the technicalities, and think of it as a distance function that measures similarity • Depending on context and mathematical properties, other useful names: comparison function, distance function, distance metric, similarity measure, kernel function, matching measure
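The four axioms can be checked numerically on a handful of points. The sketch below is only an illustration: the helper name `violates_metric_axioms` and the sample points are assumptions made here, and passing the check on a finite sample does not prove that a function is a metric.

```python
import itertools
import math

def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def squared_euclidean(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))

def violates_metric_axioms(d, points, tol=1e-12):
    """Return the name of the first axiom violated on `points`, or None.

    Hypothetical helper for illustration; it only tests the given sample.
    """
    for x, y in itertools.product(points, repeat=2):
        if d(x, y) < -tol:
            return "non-negativity"
        if (d(x, y) <= tol) != (x == y):
            return "identity"
        if abs(d(x, y) - d(y, x)) > tol:
            return "symmetry"
    for x, y, z in itertools.product(points, repeat=3):
        if d(x, z) > d(x, y) + d(y, z) + tol:
            return "triangle inequality"
    return None

points = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (0.0, 3.0)]
print(violates_metric_axioms(euclidean, points))
print(violates_metric_axioms(squared_euclidean, points))
```

Squared Euclidean distance is a common "distance function" that is not a metric in the technical sense: for the collinear points above, 4 > 1 + 1, so the triangle inequality fails.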

When are distance functions used? • Almost everywhere • Density estimation (e.g. Parzen windows) • Clustering (e.g. k-means) • Instance-based classifiers (e.g. nearest neighbor, NN)

How to choose a distance function? • A priori • Euclidean distance • L1 distance • Cross-validation within a small class of functions • e.g. choosing the order of the polynomial for a kernel

Learning distance function from data • Unsupervised Metric Learning (aka Manifold Learning) • Linear: e.g. PCA • Non-linear: e.g. LLE, Isomap • Supervised Metric Learning (using labels associated with points) • Global Learning • Local Learning

A Mahalanobis Distance Metric • Most commonly used distance metric in the machine learning community • Equivalent to first applying a linear transformation y = Ax, then using Euclidean distance in the new space of y’s
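The equivalence stated on this slide can be verified directly. The sketch below assumes the usual parameterization M = AᵀA, so that the Mahalanobis distance is sqrt((x − y)ᵀ M (x − y)); the matrix A and the two points are arbitrary values chosen for illustration.

```python
import math

def matvec(A, x):
    return [sum(a * xi for a, xi in zip(row, x)) for row in A]

def euclidean(x, y):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def gram(A):
    """Return M = A^T A, which is positive semidefinite by construction."""
    cols = list(zip(*A))
    return [[sum(a * b for a, b in zip(ci, cj)) for cj in cols] for ci in cols]

def mahalanobis(x, y, M):
    """sqrt((x - y)^T M (x - y)) for a positive semidefinite matrix M."""
    diff = [a - b for a, b in zip(x, y)]
    return math.sqrt(sum(d * md for d, md in zip(diff, matvec(M, diff))))

A = [[2.0, 0.0], [1.0, 1.0]]   # illustrative linear transformation
M = gram(A)
x, y = [1.0, 2.0], [3.0, -1.0]

d_m = mahalanobis(x, y, M)                    # distance under M in the original space
d_e = euclidean(matvec(A, x), matvec(A, y))   # Euclidean distance after y = Ax
print(d_m, d_e)
```

Both numbers agree (up to floating-point rounding), which is why learning a Mahalanobis metric is often described as learning a linear transformation of the input space.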

Supervised Distance Metric Learning Global vs Local

Supervised Global Distance Metric Learning (Xing et al 2002) • Input data has labels (C classes) • Consider all pairs of points from the same class (equivalence class) • Consider all pairs of points from different classes (inequivalence class) • Learn a Mahalanobis distance metric that brings equivalent points closer together while keeping inequivalent points far apart • E. Xing, A. Ng, M. Jordan, and S. Russell, “Distance metric learning with application to clustering with side information,” in NIPS, 2003.

Supervised Global Distance Metric Learning (Xing et al 2002) • Convex optimization problem • Minimize pairwise distances between all similarly labeled examples, subject to keeping differently labeled examples apart
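Written out, the optimization problem takes roughly the following form. This follows the formulation in the cited Xing et al. paper; the symbols are notation assumed here rather than taken from the slides: S is the set of same-class pairs, D the set of different-class pairs, and M the learned positive semidefinite matrix.

```latex
\min_{M \succeq 0} \; \sum_{(x_i, x_j) \in \mathcal{S}} \lVert x_i - x_j \rVert_M^2
\quad \text{subject to} \quad
\sum_{(x_i, x_j) \in \mathcal{D}} \lVert x_i - x_j \rVert_M \ge 1,
\qquad \text{where } \lVert x - y \rVert_M = \sqrt{(x - y)^\top M \, (x - y)}.
```

The constraint on D rules out the trivial solution M = 0, which would collapse all points together.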

Supervised Global Distance Metric Learning (Xing et al 2002) • Anybody see a problem with this approach?

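Problems with Global Distance Metric Learning • Problem with multimodal classes: pulling all same-class points together is the wrong goal when a class consists of several separate clusters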

Supervised Local Distance Metric Learning • Many different supervised distance metric learning algorithms that do not try to bring all points from same class together • Many approaches still try to learn Mahalanobis distance metric • Account for multimodal data by integrating only local constraints

Supervised Local Distance Metric Learning (Weinberger et al 2005) • Consider a KNN classifier: for each query point x, we want the K nearest neighbors of the same class to become closer to x in the new metric • K.Q. Weinberger, J. Blitzer, and L.K. Saul, “Distance metric learning for large margin nearest neighbor classification,” in NIPS, 2005.

Supervised Local Distance Metric Learning (Weinberger et al 2005) • Convex objective function (SDP) • Penalize large distances between each input and its target neighbors • Penalize small distances between each input and all other points of a different class • Points from different classes are separated by a large margin
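The two penalty terms can be made concrete by evaluating them for a fixed linear map L. The sketch below only computes the loss (a "pull" term over target neighbors plus a hinge "push" term with unit margin); it does not solve the SDP. The function name `lmnn_loss`, the trade-off constant `c`, and the toy data are assumptions made for illustration.

```python
def sq_dist(L, x, y):
    """Squared Euclidean distance between Lx and Ly."""
    diff = [a - b for a, b in zip(x, y)]
    proj = [sum(l * d for l, d in zip(row, diff)) for row in L]
    return sum(p * p for p in proj)

def lmnn_loss(L, X, labels, targets, c=1.0):
    """Large-margin nearest neighbor loss for a fixed map L (illustrative).

    targets[i] lists the indices of the target neighbors of X[i]
    (same-class points we want to end up close to X[i]).
    """
    pull = push = 0.0
    for i, neighbors in enumerate(targets):
        for j in neighbors:
            d_ij = sq_dist(L, X[i], X[j])
            pull += d_ij                      # penalize far target neighbors
            for l in range(len(X)):
                if labels[l] != labels[i]:    # differently labeled point
                    # hinge: it should be at least one unit farther than x_j
                    push += max(0.0, 1.0 + d_ij - sq_dist(L, X[i], X[l]))
    return pull + c * push

# Two classes laid out as horizontal rows; the nearest point is in the wrong class.
X = [[0.0, 0.0], [2.0, 0.0], [0.0, 1.0], [2.0, 1.0]]
labels = [0, 0, 1, 1]
targets = [[1], [0], [3], [2]]

identity = [[1.0, 0.0], [0.0, 1.0]]
stretched = [[0.5, 0.0], [0.0, 2.0]]   # shrink x-axis, stretch y-axis

print(lmnn_loss(identity, X, labels, targets))
print(lmnn_loss(stretched, X, labels, targets))
```

On this toy layout the stretched map has a much lower loss than the identity, because it shrinks the direction along which same-class points differ and stretches the direction that separates the classes.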

Distance Metric Learning by Andrea Frome • A. Frome, Y. Singer, and J. Malik, “Image Retrieval and Classification Using Local Distance Functions,” in NIPS 2006. • Finally we get something more interesting than Mahalanobis Distance Metric!

Per-exemplar distance function • Goal: instead of learning a deformation of the space of exemplars, want to learn a distance function for each exemplar (think KNN classifier)

Distance functions and Learning Procedure • If there are N training images, we will solve N separate learning problems (the training image for a particular learning problem is referred to as the focal image) • Each learning problem solved with a subset of the remaining training images (called the learning set)

Elementary Distances • Distance functions built on top of elementary distance measures between patch-based features • Each input is not treated as a fixed-length vector

Elementary Patch-based Distances • Distance function is a combination of elementary patch-based distances (distance from patch to image) • M patches in focal image F • Image-to-image distance is a linear combination of patch-to-image distances: D(F, I) = Σ_j w_j d_j(F, I), where d_j(F, I) is the distance between the j-th patch in F and the entire image I

Learning from triplets • Goal is to learn w for each focal image from triplets of (focal image F, similar image, different image) • Each triplet gives us one constraint: F should be closer to the similar image than to the different image

Max Margin Formulation • Learn w for each focal image independently • Weights must be non-negative • T triplets for each learning problem • Slack variables (like a non-separable SVM)
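One way to see how these ingredients fit together is to minimize ½‖w‖² + C Σ_t max(0, 1 − w·x_t) subject to w ≥ 0, where x_t is the vector of elementary distances to the different image minus those to the similar image. The sketch below uses projected subgradient descent, which is a substitute chosen here for brevity and not the solver used in the paper; the function name, step size, and toy triplets are all assumptions.

```python
def learn_weights(triplets, C=1.0, lr=0.01, epochs=500):
    """Learn non-negative weights for one focal image (illustrative sketch).

    triplets: list of (d_similar, d_different) pairs, each a length-M list of
    elementary patch-to-image distances from the focal image.
    """
    M = len(triplets[0][0])
    w = [0.0] * M
    for _ in range(epochs):
        grad = list(w)                                  # gradient of 0.5 * ||w||^2
        for d_sim, d_diff in triplets:
            x = [b - a for a, b in zip(d_sim, d_diff)]
            if sum(wi * xi for wi, xi in zip(w, x)) < 1.0:   # margin violated
                grad = [g - C * xi for g, xi in zip(grad, x)]
        # gradient step followed by projection onto w >= 0
        w = [max(0.0, wi - lr * g) for wi, g in zip(w, grad)]
    return w

# Patch 0 is informative (small for similar, large for different images);
# patch 1 is misleading and should receive zero weight.
triplets = [([0.1, 0.9], [0.9, 0.1]),
            ([0.2, 0.8], [0.8, 0.3])]
w = learn_weights(triplets)
print(w)
```

Here the non-negativity constraint drives the weight of the misleading patch to exactly zero, which is the sense in which this kind of metric learning resembles feature selection.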

Visual Features and Patch-to-Image Distance • Geometric Blur descriptors (for shape) at two different scales • Naïve Color Histogram descriptor • Sampled from 400 or fewer edge points • No geometric relations between features • Distance between feature f and image I is the smallest L2 distance between f and feature f’ in I of the same feature type
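The patch-to-image distance described above, and the weighted image-to-image distance built from it, can be sketched as follows. Features are represented here as hypothetical (type, vector) pairs with made-up values; real geometric blur or color descriptors would be much higher-dimensional, and the sketch assumes the other image contains at least one feature of each type.

```python
import math

def l2(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def patch_to_image(feature, image):
    """Smallest L2 distance from `feature` to any same-type feature in `image`.

    Assumes `image` has at least one feature of the same type.
    """
    ftype, fvec = feature
    return min(l2(fvec, v) for t, v in image if t == ftype)

def image_distance(focal, image, weights):
    """Weighted sum of patch-to-image distances, one weight per focal patch."""
    return sum(w * patch_to_image(f, image) for w, f in zip(weights, focal))

focal = [("shape", [0.0, 0.0]), ("color", [1.0, 1.0])]
image = [("shape", [3.0, 4.0]), ("shape", [0.0, 1.0]), ("color", [1.0, 3.0])]
weights = [2.0, 0.5]   # illustrative learned weights for the focal image

print(patch_to_image(focal[0], image))
print(image_distance(focal, image, weights))
```

Because the weights and the patches belong to the focal image, this distance is not symmetric: swapping the roles of the two images generally gives a different value.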

Image Browsing Example • Given a Focal Image, sort all training images by distance from Focal Image

Image Retrieval • Given a novel Image Q (not in training set), want to sort all training images with respect to distance to Q • Problem: local distances are not directly comparable (weights learned independently and not on same scale)

Second Round of Training: Logistic Regression to the Rescue • For each focal image I, fit a logistic regression model to the binary (in-class versus out-of-class) training labels and learned distances • To classify a query image Q, we can get the distance from Q to each training image I_i, then use the logistic function to get the probability that Q is in the same class as I_i • Assign Q to the class with the highest mean (or sum) probability
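A minimal sketch of this second round is given below, assuming a one-dimensional logistic model per focal image fitted by plain gradient descent. The internal standardization of distances, the function names, and the toy numbers are assumptions made for illustration; the point is only that two focal images whose distances live on different scales become comparable once mapped to probabilities.

```python
import math
from collections import defaultdict

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fit_logistic(distances, in_class, lr=0.5, epochs=1000):
    """Fit p(same class | d) = sigmoid(a * d + b) for one focal image.

    Distances are standardized internally so gradient descent behaves the
    same regardless of the focal image's distance scale (an assumption here).
    """
    n = len(distances)
    mu = sum(distances) / n
    sd = math.sqrt(sum((d - mu) ** 2 for d in distances) / n)
    zs = [(d - mu) / sd for d in distances]
    a = b = 0.0
    for _ in range(epochs):
        errs = [sigmoid(a * z + b) - y for z, y in zip(zs, in_class)]
        a -= lr * sum(e * z for e, z in zip(errs, zs)) / n
        b -= lr * sum(errs) / n
    return a / sd, b - a * mu / sd      # coefficients in original distance units

def classify(query_distances, models, labels):
    """query_distances[i] is the learned distance from training image i to Q."""
    probs = defaultdict(list)
    for d, (a, b), label in zip(query_distances, models, labels):
        probs[label].append(sigmoid(a * d + b))
    return max(probs, key=lambda c: sum(probs[c]) / len(probs[c]))

# Two focal images whose learned distances differ in scale by a factor of 10.
models = [fit_logistic([1.0, 2.0, 8.0, 9.0], [1, 1, 0, 0]),
          fit_logistic([10.0, 20.0, 80.0, 90.0], [1, 1, 0, 0])]
labels = ["cat", "dog"]

print(classify([2.0, 70.0], models, labels))
```

Comparing the raw distances 2.0 and 70.0 would be meaningless, but each is small or large relative to its own focal image, and the fitted logistic functions capture exactly that.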

Choosing Triplets for Learning • For Focal Image I, images in same category are “similar” images and images from different category are “different” images • Want to select images that are similar to the focal image according to at least one elementary distance function

Choosing Triplets for Learning • For each of the M elementary distances in focal image F, we find the top K closest images • If the top K contain both in-class and out-of-class images, create all triplets from (F, in-class, out-of-class) combinations • If the top K are all in-class images, get the closest out-of-class image, then make K triplets (reverse if all K are out-of-class)
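The selection procedure above can be sketched as a short function. The data layout (`elementary[m][i]` as the m-th elementary distance from F to training image i) and the use of a set to drop duplicate triplets are assumptions made here, not details given in the slides.

```python
def choose_triplets(elementary, in_class, K):
    """Pick (similar, different) index pairs for one focal image F.

    elementary[m][i]: m-th elementary distance from F to training image i.
    in_class[i]: True if training image i shares F's category.
    """
    n = len(in_class)
    triplets = set()
    for dists in elementary:
        ranked = sorted(range(n), key=lambda i: dists[i])
        top = ranked[:K]
        similar = [i for i in top if in_class[i]]
        different = [i for i in top if not in_class[i]]
        if not different:
            # top K all in-class: pair them with the closest out-of-class image
            different = [i for i in ranked if not in_class[i]][:1]
        elif not similar:
            # top K all out-of-class: pair them with the closest in-class image
            similar = [i for i in ranked if in_class[i]][:1]
        triplets.update((s, d) for s in similar for d in different)
    return triplets

in_class = [True, True, False, False, True]
elementary = [
    [0.1, 0.2, 0.9, 0.8, 0.5],   # patch 0: top 2 are both in-class
    [0.7, 0.3, 0.2, 0.9, 0.6],   # patch 1: top 2 are mixed
]
print(sorted(choose_triplets(elementary, in_class, K=2)))
```

For patch 0 the two nearest images are both in-class, so each is paired with the closest out-of-class image (index 3); for patch 1 the top two are mixed, giving the single pair (1, 2).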

Conclusions • Metric Learning similar to Feature Selection • Seems like for different visual object categories, different notions of similarity are needed: color informative for some classes, local shape for others, geometry for others • Metric Learning well suited for instance-based classifiers like KNN • Local learning more meaningful than global learning

References • NIPS 06 Workshop on Learning to Compare Examples • Liu Yang’s Distance Metric Learning Comprehensive Survey • Xing et al, Weinberger et al, Frome et al

Questions? • Thanks for listening