5. independence

CSE 312, 2012 Autumn, W.L.Ruzzo. 5. independence.

CSE 312, 2012 Autumn, W.L.Ruzzo 5. independence

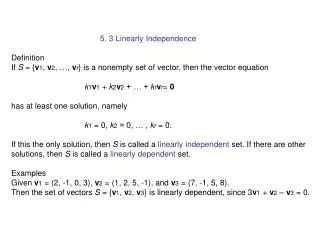

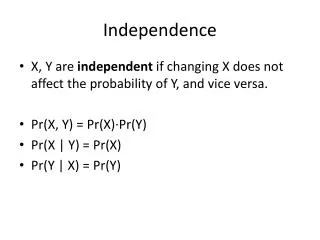

independence
Defn: Two events E and F are independent if P(EF) = P(E) P(F).
If P(F) > 0, this is equivalent to P(E|F) = P(E) (proof below).
Otherwise, they are called dependent.

independence
• Roll two dice, yielding values D1 and D2
• 1) E = { D1 = 1 }, F = { D2 = 1 }
• P(E) = 1/6, P(F) = 1/6, P(EF) = 1/36
• P(EF) = P(E)•P(F) ⇒ E and F independent
• Intuitive; the two dice are not physically coupled
• 2) G = { D1 + D2 = 5 } = {(1,4),(2,3),(3,2),(4,1)}
• P(E) = 1/6, P(G) = 4/36 = 1/9, P(EG) = 1/36
• P(EG) = 1/36 ≠ 1/54 = P(E)•P(G): not independent!
• E, G are dependent events
• The dice are still not physically coupled, but “D1 + D2 = 5” couples them mathematically: info about D1 constrains D2. (But dependence/independence is not always intuitively obvious; “use the definition, Luke”.)
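The two dice checks above can be reproduced by brute-force enumeration. The following is a minimal sketch, not part of the original slides; the helper names `prob` and `independent` are made up for illustration, and exact fractions are used so the comparison with the definition is not affected by rounding.

```python
from fractions import Fraction
from itertools import product

# Sample space: all 36 equally likely (D1, D2) outcomes.
OUTCOMES = list(product(range(1, 7), repeat=2))

def prob(event):
    """Exact probability of an event given as a predicate on (d1, d2)."""
    return Fraction(sum(1 for o in OUTCOMES if event(o)), len(OUTCOMES))

def independent(a, b):
    """Apply the definition directly: P(AB) == P(A) P(B)."""
    return prob(lambda o: a(o) and b(o)) == prob(a) * prob(b)

E = lambda o: o[0] == 1          # D1 = 1
F = lambda o: o[1] == 1          # D2 = 1
G = lambda o: o[0] + o[1] == 5   # D1 + D2 = 5

print("E, F independent:", independent(E, F))
print("E, G independent:", independent(E, G))
```

Using the definition rather than intuition is the point: the same `independent` call settles both cases.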

independence
Two events E and F are independent if P(EF) = P(E) P(F).
If P(F) > 0, this is equivalent to P(E|F) = P(E).
Otherwise, they are called dependent.
Three events E, F, G are independent if
P(EF) = P(E) P(F), P(EG) = P(E) P(G), P(FG) = P(F) P(G), and P(EFG) = P(E) P(F) P(G).
Example: Let X, Y each be -1 or 1 with equal probability, independently; E = {X = 1}, F = {Y = 1}, G = {XY = 1}.
P(EF) = P(E)P(F), P(EG) = P(E)P(G), P(FG) = P(F)P(G), but P(EFG) = 1/4 ≠ 1/8 = P(E)P(F)P(G) (because P(G|EF) = 1).

independence
In general, events E1, E2, …, En are independent if for every subset S of {1,2,…, n}, we have
$P\left(\bigcap_{i \in S} E_i\right) = \prod_{i \in S} P(E_i)$
(Sometimes this property holds only for small subsets S. E.g., E, F, G on the previous slide are pairwise independent, but not fully independent.)
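The "every subset" condition can be checked mechanically on a small sample space. This is an illustrative sketch (the function names are assumptions, not from the slides) applied to the E, F, G example from the previous slide, where X and Y are independent fair ±1 values.

```python
from fractions import Fraction
from itertools import combinations, product

def prob(event, outcomes):
    """Exact probability of a predicate over equally likely outcomes."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))

def mutually_independent(events, outcomes):
    """Check P(all events in S) == product of P(E_i) for every subset S, |S| >= 2."""
    for size in range(2, len(events) + 1):
        for subset in combinations(events, size):
            joint = prob(lambda o: all(e(o) for e in subset), outcomes)
            expected = Fraction(1)
            for e in subset:
                expected *= prob(e, outcomes)
            if joint != expected:
                return False
    return True

def pairwise_independent(events, outcomes):
    """Only the subsets of size 2 are checked."""
    return all(mutually_independent(list(pair), outcomes)
               for pair in combinations(events, 2))

XY = list(product([-1, 1], repeat=2))   # four equally likely (X, Y) pairs
E = lambda o: o[0] == 1                 # X = 1
F = lambda o: o[1] == 1                 # Y = 1
G = lambda o: o[0] * o[1] == 1          # XY = 1

print("pairwise:", pairwise_independent([E, F, G], XY))
print("mutual:  ", mutually_independent([E, F, G], XY))
```

Subsets of size 0 and 1 are skipped because the product condition holds trivially for them.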

independence
Theorem: E, F independent ⇒ E, F^c independent
Proof: E = EF ∪ EF^c is a disjoint union, so
P(EF^c) = P(E) - P(EF) = P(E) - P(E) P(F) = P(E) (1 - P(F)) = P(E) P(F^c)
[Figure: events E and F inside sample space S]
Theorem: if P(E) > 0, P(F) > 0, then E, F independent ⇔ P(E|F) = P(E) ⇔ P(F|E) = P(F)
Proof: Note P(EF) = P(E|F) P(F), regardless of in/dependence.
Assume independent. Then P(E)P(F) = P(EF) = P(E|F) P(F) ⇒ P(E|F) = P(E) (÷ by P(F)).
Conversely, P(E|F) = P(E) ⇒ P(E)P(F) = P(EF) (× by P(F)).

biased coin
Suppose a biased coin comes up heads with probability p, independent of other flips
P(n heads in n flips) = $p^n$
P(n tails in n flips) = $(1-p)^n$
P(exactly k heads in n flips) = $\binom{n}{k} p^k (1-p)^{n-k}$
Aside: note that the probability of some number of heads = $\sum_{k=0}^{n} \binom{n}{k} p^k (1-p)^{n-k} = (p + (1-p))^n = 1$, as it should, by the binomial theorem.

biased coin
• Suppose a biased coin comes up heads with probability p, independent of other flips
• P(exactly k heads in n flips) = $\binom{n}{k} p^k (1-p)^{n-k}$
• Note when p = 1/2, this is the same result we would have gotten by considering n flips in the “equally likely outcomes” scenario. But p ≠ 1/2 makes that inapplicable. Instead, the independence assumption allows us to conveniently assign a probability to each of the $2^n$ outcomes, e.g.:
• Pr(HHTHTTT) = $p^2 (1-p)\, p\, (1-p)^3 = p^{\#H} (1-p)^{\#T}$
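As a sanity check on the biased-coin formulas, the sketch below compares the standard binomial expression C(n,k) p^k (1-p)^(n-k) with a direct sum over all 2^n flip sequences, each weighted by p^#H (1-p)^#T. The function names and the value p = 0.3 are illustrative assumptions.

```python
from itertools import product
from math import comb, isclose

def binomial_pmf(k, n, p):
    """P(exactly k heads in n independent flips) via the binomial formula."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

def outcome_prob(flips, p):
    """Probability of one specific sequence such as 'HHTHTTT'."""
    heads = flips.count("H")
    return p**heads * (1 - p)**(len(flips) - heads)

def pmf_by_enumeration(k, n, p):
    """Same probability, summing over every sequence with exactly k heads."""
    return sum(outcome_prob("".join(seq), p)
               for seq in product("HT", repeat=n)
               if seq.count("H") == k)

p, n = 0.3, 7
print(isclose(binomial_pmf(3, n, p), pmf_by_enumeration(3, n, p)))
print(isclose(sum(binomial_pmf(k, n, p) for k in range(n + 1)), 1.0))
```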

hashing
• A data structure problem: fast access to a small subset of data drawn from a large space.
• A solution: a hash function h: D → {0,...,n-1} crunches/scrambles names from the large space into a small one. E.g., if x is an integer:
• h(x) = x mod n
• Good hash functions approximately randomize placement.
[Figure: h maps x ∈ D, the (large) space of potential data items (say names or SSNs, only a few of which are actually used), to i = h(x) ∈ R = {0, ..., n-1}, the (small) hash table containing the actual data]

hashing
m strings hashed (uniformly) into a table with n buckets
Each string hashed is an independent trial
E = at least one string hashed to first bucket
What is P(E)?
Solution:
Fi = string i not hashed into first bucket (i = 1,2,…,m)
P(Fi) = 1 - 1/n = (n-1)/n for all i = 1,2,…,m
Event (F1 F2 ⋯ Fm) = no strings hashed to first bucket
P(E) = 1 - P(F1 F2 ⋯ Fm)
= 1 - P(F1) P(F2) ⋯ P(Fm) (by independence)
= 1 - ((n-1)/n)^m
≈ 1 - exp(-m/n)
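The closed form 1 - ((n-1)/n)^m and its exponential approximation can be compared against a quick simulation. This is a rough sketch under the slide's assumptions (uniform, independent hashing); bucket 0 stands in for the "first bucket", and the function names, trial count, and seed are arbitrary choices.

```python
import math
import random

def p_first_bucket_hit(m, n):
    """Exact P(at least one of m strings lands in the first of n buckets)."""
    return 1 - ((n - 1) / n) ** m

def simulate(m, n, trials=20000, seed=0):
    """Estimate the same probability by hashing m strings uniformly at random."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        # Each string independently picks one of n buckets; bucket 0 is "first".
        if any(rng.randrange(n) == 0 for _ in range(m)):
            hits += 1
    return hits / trials

m, n = 20, 50
print("exact:      ", p_first_bucket_hit(m, n))
print("approx:     ", 1 - math.exp(-m / n))
print("simulation: ", simulate(m, n))
```

The approximation improves as n grows, since (1 - 1/n)^m approaches exp(-m/n).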

hashing
m strings hashed (non-uniformly) to a table with n buckets
Each string hashed is an independent trial, with probability pi of getting hashed to bucket i
E = at least 1 of buckets 1 to k gets ≥ 1 string
What is P(E)?
Solution:
Fi = at least one string hashed into i-th bucket
P(E) = P(F1 ∪ ⋯ ∪ Fk) = 1 - P((F1 ∪ ⋯ ∪ Fk)^c)
= 1 - P(F1^c F2^c ⋯ Fk^c)
= 1 - P(no strings hashed to buckets 1 to k)
= 1 - (1 - p1 - p2 - ⋯ - pk)^m
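For small m and n, the non-uniform result can be verified exactly by summing over every possible assignment of strings to buckets. The sketch below is illustrative only; the bucket probabilities are invented, and buckets are indexed from 0, so "buckets 1 to k" become indices 0 to k-1.

```python
from itertools import product
from math import isclose, prod

def p_some_bucket_hit(probs, k, m):
    """Closed form: 1 - (1 - p_1 - ... - p_k)^m."""
    return 1 - (1 - sum(probs[:k])) ** m

def p_by_enumeration(probs, k, m):
    """Sum the probability of every assignment that touches one of the first k buckets."""
    total = 0.0
    for assignment in product(range(len(probs)), repeat=m):
        if any(bucket < k for bucket in assignment):
            # Independent trials: multiply the per-string bucket probabilities.
            total += prod(probs[bucket] for bucket in assignment)
    return total

probs = [0.5, 0.2, 0.2, 0.1]   # hypothetical bucket probabilities, summing to 1
print(p_some_bucket_hit(probs, k=2, m=3))
print(p_by_enumeration(probs, k=2, m=3))
```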

hashing
• Let D0 ⊆ D be a fixed set of m strings, R = {0,...,n-1}. A hash function h: D → R is perfect for D0 if h: D0 → R is injective (no collisions). How hard is it to find a perfect hash function?
• Fix h; pick the m elements of D0 independently at random from D
• Suppose h maps ≈ (1/n)th of D to each element of R. This is like the birthday problem:
• P(h is perfect for D0) ≈ $\frac{n}{n} \cdot \frac{n-1}{n} \cdots \frac{n-m+1}{n}$

hashing
• Let D0 ⊆ D be a fixed set of m strings, R = {0,...,n-1}. A hash function h: D → R is perfect for D0 if h: D0 → R is injective (no collisions). How hard is it to find a perfect hash function?
• Fix D0; pick h at random
• E.g., if m = |D0| = 23 and n = 365, then there is a ~50% chance that h is perfect for this fixed D0. If it isn’t, pick h’, h’’, etc. With high probability, you’ll quickly find a perfect one!
• “Picking a random function h” is easier said than done, but, empirically, picking among a set of functions like
• h(x) = (a•x + b) mod n
• where a, b are random 64-bit ints is a start.
Caution: this analysis is heuristic, not rigorous, but still useful.
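The ~50% figure for m = 23, n = 365 is the birthday-problem product, and the "keep picking until perfect" idea can be sketched as a retry loop. As a simplifying assumption, a "random function" is modeled here as an independent uniform bucket for each key, which is the idealized case rather than the (a•x + b) mod n family; all names below are hypothetical.

```python
import random

def perfect_probability(m, n):
    """P(m independent uniform choices from n buckets are all distinct)."""
    p = 1.0
    for i in range(m):
        p *= (n - i) / n
    return p

def find_perfect_hash(keys, n, rng, max_tries=10_000):
    """Draw idealized random functions until one is injective on the keys."""
    keys = list(keys)
    for tries in range(1, max_tries + 1):
        h = {key: rng.randrange(n) for key in keys}
        if len(set(h.values())) == len(keys):   # no collisions
            return h, tries
    raise RuntimeError("no perfect hash found within max_tries")

print(perfect_probability(23, 365))
h, tries = find_perfect_hash(range(23), 365, random.Random(1))
print("tries needed:", tries)
```

Because each attempt succeeds with probability close to 1/2, the number of attempts is small with high probability.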

network failure
Consider the following parallel network:
[Figure: n routers in parallel, with failure probabilities p1, p2, …, pn]
n routers, ith has probability pi of failing, independently
P(there is functional path) = 1 - P(all routers fail) = 1 - p1 p2 ⋯ pn

network failure
Contrast: a series network
[Figure: n routers in series, with failure probabilities p1, p2, …, pn]
n routers, ith has probability pi of failing, independently
P(there is functional path) = P(no routers fail) = (1 - p1)(1 - p2) ⋯ (1 - pn)
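The parallel and series formulas differ only in which product is taken, which a short sketch makes concrete. The function names and the failure probabilities are made up for illustration; independence of router failures is assumed, as on the slides.

```python
from math import prod

def parallel_path_prob(fail_probs):
    """Parallel network: a path exists unless every router fails."""
    return 1 - prod(fail_probs)

def series_path_prob(fail_probs):
    """Series network: a path exists only if no router fails."""
    return prod(1 - p for p in fail_probs)

fail_probs = [0.1, 0.2, 0.5]   # hypothetical per-router failure probabilities
print("parallel:", parallel_path_prob(fail_probs))
print("series:  ", series_path_prob(fail_probs))
```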

deeper into independence
Recall: Two events E and F are independent if P(EF) = P(E) P(F).
If E & F are independent, does that tell us anything about P(EF|G), P(E|G), P(F|G), when G is an arbitrary event?
In particular, is P(EF|G) = P(E|G) P(F|G)?
In general, no.

deeper into independence
Roll two 6-sided dice, yielding values D1 and D2
E = { D1 = 1 }
F = { D2 = 6 }
G = { D1 + D2 = 7 }
E and F are independent
P(E|G) = 1/6, P(F|G) = 1/6, but P(EF|G) = 1/6, not 1/36
so E|G and F|G are not independent!

conditional independence
• Definition:
• Two events E and F are called conditionally independent given G, if
• P(EF|G) = P(E|G) P(F|G)
• Or, equivalently (assuming P(FG) > 0),
• P(E|FG) = P(E|G)
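The definition of conditional independence can be tested the same way as ordinary independence, by restricting the sample space to G. This sketch (helper names are assumptions) revisits the two-dice example with E = {D1 = 1}, F = {D2 = 6}, G = {D1 + D2 = 7}.

```python
from fractions import Fraction
from itertools import product

OUTCOMES = list(product(range(1, 7), repeat=2))   # equally likely (D1, D2)

def prob(event, given=lambda o: True):
    """P(event | given), computed by counting within the conditioning event."""
    conditioned = [o for o in OUTCOMES if given(o)]
    return Fraction(sum(1 for o in conditioned if event(o)), len(conditioned))

def cond_independent(a, b, given=lambda o: True):
    """Check P(AB|G) == P(A|G) P(B|G)."""
    both = lambda o: a(o) and b(o)
    return prob(both, given) == prob(a, given) * prob(b, given)

E = lambda o: o[0] == 1
F = lambda o: o[1] == 6
G = lambda o: o[0] + o[1] == 7

print("independent:          ", cond_independent(E, F))
print("independent given G:  ", cond_independent(E, F, G))
```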

do CSE majors get fewer A’s?
• Say you are in a dorm with 100 students
• 10 are CS majors: P(C) = 0.1
• 30 get straight A’s: P(A) = 0.3
• 3 are CS majors who get straight A’s: P(CA) = 0.03
• P(CA) = P(C) P(A), so C and A independent
• At faculty night, only CS majors and A students show up
• So 37 students arrive
• Of 37 students, 10 are CS ⇒ P(C | C or A) = 10/37 = 0.27 < .3 = P(A)
• Seems CS major lowers your chance of straight A’s ☹
• Weren’t they supposed to be independent?
• In fact, C and A are conditionally dependent at faculty night: P(CA | C or A) = 3/37 ≈ 0.08, but P(C | C or A) P(A | C or A) = (10/37)(30/37) ≈ 0.22

conditioning can also break DEPENDENCE
Randomly choose a day of the week
A = { It is not a Monday }
B = { It is a Saturday }
C = { It is the weekend }
A and B are dependent events:
P(A) = 6/7, P(B) = 1/7, P(AB) = 1/7 ≠ 6/49 = P(A) P(B).
Now condition both A and B on C:
P(A|C) = 1, P(B|C) = ½, P(AB|C) = ½
P(AB|C) = P(A|C) P(B|C) ⇒ A|C and B|C independent
Dependent events can become independent by conditioning on additional information!
Another reason why conditioning is so useful
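The day-of-the-week example is small enough to check with plain set arithmetic. A minimal sketch, assuming each day is equally likely; the helper `p` and the day labels are illustrative.

```python
from fractions import Fraction

DAYS = {"Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"}
A = DAYS - {"Mon"}      # it is not a Monday
B = {"Sat"}             # it is a Saturday
C = {"Sat", "Sun"}      # it is the weekend

def p(event, given=DAYS):
    """P(event | given) for a uniformly random day."""
    return Fraction(len(event & given), len(given))

# Unconditionally dependent: P(AB) differs from P(A) P(B).
print(p(A & B), "vs", p(A) * p(B))
# Conditionally independent given C: both sides agree.
print(p(A & B, C), "vs", p(A, C) * p(B, C))
```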

independence: summary
• Events E & F are independent if
• P(EF) = P(E) P(F), or, equivalently P(E|F) = P(E) (if P(F) > 0)
• More than 2 events are independent if, for all subsets, joint probability = product of separate event probabilities
• Independence can greatly simplify calculations
• For fixed G, conditioning on G gives a probability measure, P(E|G)
• But “conditioning” and “independence” are orthogonal:
• Events E & F that are (unconditionally) independent may become dependent when conditioned on G
• Events that are (unconditionally) dependent may become independent when conditioned on G

CSE 312, 2012 Autumn, W.L.Ruzzo 6. random variables

random variables
• A random variable is some numeric function of the outcome, not the outcome itself. (Technically, neither random nor a variable, but...)
• Ex.
• Let H be the number of Heads when 20 coins are tossed
• Let T be the total of 2 dice rolls
• Let X be the number of coin tosses needed to see 1st head
• Note: even if the underlying experiment has “equally likely outcomes,” the values of the associated random variable may not be equally likely

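first head (memorize me!)
• Flip a (biased) coin repeatedly until 1st head observed
• How many flips? Let X be that number.
• P(X=1) = P(H) = $p$
• P(X=2) = P(TH) = $(1-p)p$
• P(X=3) = P(TTH) = $(1-p)^2 p$
• ...
• P(X=i) = $(1-p)^{i-1} p$
• Check that it is a valid probability distribution:
• 1) $P(X=i) \ge 0$ for all $i \ge 1$
• 2) $\sum_{i \ge 1} (1-p)^{i-1} p = p \cdot \frac{1}{1-(1-p)} = 1$ (geometric series, for p > 0)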
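The "sums to 1" check for the first-head distribution can be illustrated numerically: the first N terms of the geometric series add up to 1 - (1-p)^N, which approaches 1. This is a small sketch with assumed names and an arbitrary p = 0.3.

```python
from math import isclose

def first_head_pmf(i, p):
    """P(X = i): i-1 tails followed by the first head."""
    return (1 - p) ** (i - 1) * p

def partial_sum(p, terms):
    """Sum of P(X = i) for i = 1..terms."""
    return sum(first_head_pmf(i, p) for i in range(1, terms + 1))

p = 0.3
print(partial_sum(p, 10), "vs", 1 - (1 - p) ** 10)
print(partial_sum(p, 200))
```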

head count
[Figure: head-count plots, panels n = 2 and n = 8]

pmf and cdf (cumulative distribution function)
NB: for discrete random variables, be careful about “≤” vs “<”
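The "≤" vs "<" warning is easy to see on a concrete discrete variable. The sketch below uses the standard definitions p(x) = P(X = x) and F(x) = P(X ≤ x), with the total of two dice as the example; the function names are illustrative assumptions.

```python
from fractions import Fraction
from itertools import product

def pmf_two_dice():
    """p(t) = P(T = t) for T = total of two fair dice."""
    pmf = {}
    for d1, d2 in product(range(1, 7), repeat=2):
        pmf[d1 + d2] = pmf.get(d1 + d2, Fraction(0)) + Fraction(1, 36)
    return pmf

def cdf(pmf, x):
    """F(x) = P(X <= x)."""
    return sum((p for v, p in pmf.items() if v <= x), Fraction(0))

def prob_less_than(pmf, x):
    """P(X < x): differs from F(x) by exactly p(x) when x is a possible value."""
    return sum((p for v, p in pmf.items() if v < x), Fraction(0))

pmf = pmf_two_dice()
print("P(T <= 7) =", cdf(pmf, 7))
print("P(T <  7) =", prob_less_than(pmf, 7))
```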

why random variables
• Why use random variables?
• A. Often we just care about numbers
• If I win $1 per head when 20 coins are tossed, what are my average winnings? What is the most likely number? What is the probability that I win < $5? ...
• B. It cleanly abstracts away from unnecessary detail about the experiment/sample space; the PMF is all we need.
• Flip 7 coins, roll 2 dice, and throw a dart; if dart landed in sector = dice roll mod #heads, then X = ...

expectation
E[X] = Σx x p(x): the average of the random values, weighted by their respective probabilities

expectation (slide 35): average of random values, weighted by their respective probabilities

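first head
X = number of flips up to and including the 1st head, so P(X=i) = $(1-p)^{i-1} p$. Writing y = 1-p:
$E[X] = \sum_{i \ge 1} i\, y^{i-1} p = p \sum_{i \ge 0} \frac{d}{dy} y^i = p\, \frac{d}{dy} \frac{1}{1-y} = \frac{p}{(1-y)^2} = \frac{1}{p}$
(the i = 0 term can be included because $dy^0/dy = 0$)
How much would you pay to play?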

how many heads How much would you pay to play?

expectation of a function of a random variable
E[X] = Σi i p(i) = 252/36 = 7
E[Y] = Σj j q(j) = 72/36 = 2

expectation of a function of a random variable
E[Y] = Σj j q(j) = 72/36 = 2
E[g(X)] = Σi g(i) p(i) = 72/36 = 2
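The two ways of computing E[g(X)] can be compared directly. The transcript does not state what X and g are; the sketch below assumes X is the total of two dice (which gives 252/36 = 7) and, purely as an assumption that happens to reproduce the 72/36 = 2 figure, g(x) = x mod 5. Function names are illustrative.

```python
from fractions import Fraction
from itertools import product

def pmf_two_dice():
    """p(i) = P(X = i) for X = total of two fair dice (assumed example)."""
    pmf = {}
    for d1, d2 in product(range(1, 7), repeat=2):
        pmf[d1 + d2] = pmf.get(d1 + d2, Fraction(0)) + Fraction(1, 36)
    return pmf

def expectation(pmf):
    """E[X] = sum over values of value * probability."""
    return sum(v * p for v, p in pmf.items())

def pmf_of_function(pmf, g):
    """q(j) = P(g(X) = j), obtained by pooling all x with g(x) = j."""
    q = {}
    for x, p in pmf.items():
        q[g(x)] = q.get(g(x), Fraction(0)) + p
    return q

g = lambda x: x % 5   # ASSUMPTION: not given in the transcript

p = pmf_two_dice()
via_q = expectation(pmf_of_function(p, g))      # sum_j j q(j)
via_p = sum(g(i) * pi for i, pi in p.items())   # sum_i g(i) p(i)
print(expectation(p), via_q, via_p)
```

Both routes agree because grouping the x values by g(x) only reorders the terms of the sum.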

expectation of a function of a random variable
[Figure: g maps the values xi of X onto the values yj of Y = g(X)]
Note that the sets Sj = { xi | g(xi) = yj } form a partition of the domain of g.
BT pg. 84-85

properties of expectation
• A & B each bet $1, then flip 2 coins:
• Let X be A’s net gain: +1, 0, -1, with probabilities 1/4, 1/2, 1/4, resp.
• What is E[X]?
• E[X] = 1•1/4 + 0•1/2 + (-1)•1/4 = 0
• What is E[X^2]?
• E[X^2] = 1^2•1/4 + 0^2•1/2 + (-1)^2•1/4 = 1/2
Note: E[X^2] ≠ E[X]^2

properties of expectation
• Linearity of expectation, I
• For any constants a, b: E[aX + b] = aE[X] + b
• Proof: E[aX + b] = Σx (ax + b) p(x) = a Σx x p(x) + b Σx p(x) = aE[X] + b
• Example:
• Q: In the 2-person coin game above, what is E[2X+1]?
• A: E[2X+1] = 2E[X] + 1 = 2•0 + 1 = 1

properties of expectation
• Linearity, II
• Let X and Y be two random variables derived from outcomes of a single experiment. Then E[X+Y] = E[X] + E[Y]. True even if X, Y dependent.
• Proof: Assume the sample space S is countable. (The result is true without this assumption, but I won’t prove it.) Let X(s), Y(s) be the values of these r.v.’s for outcome s ∈ S.
• Claim: E[X] = Σs∈S X(s) p(s)
• Proof: similar to that for “expectation of a function of an r.v.,” i.e., the events “X=x” partition S, so the sum above can be rearranged to match the definition of E[X].
• Then: E[X+Y] = Σs∈S (X(s) + Y(s)) p(s) = Σs∈S X(s) p(s) + Σs∈S Y(s) p(s) = E[X] + E[Y]

properties of expectation
• Example
• X = # of heads in one coin flip, where P(X=1) = p.
• What is E[X]?
• E[X] = 1•p + 0•(1-p) = p
• Let Xi, 1 ≤ i ≤ n, be # of H in flip of coin with P(Xi=1) = pi
• What is the expected number of heads when all are flipped?
• E[Σi Xi] = Σi E[Xi] = Σi pi
• Special case: p1 = p2 = ... = p:
• E[# of heads in n flips] = np
• ☜ Compare to slide 35

properties of expectation
• Note:
• Linearity is special!
• It is not true in general that
• E[X•Y] = E[X] • E[Y]
• E[X^2] = E[X]^2 ← counterexample above
• E[X/Y] = E[X] / E[Y]
• E[asinh(X)] = asinh(E[X])
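Linearity holding for dependent variables, and the product rule failing for them, can both be seen on two dice. In this sketch (names and the choice of variables are assumptions for illustration) X is the first die and Y is the total, so Y clearly depends on X.

```python
from fractions import Fraction
from itertools import product

OUTCOMES = list(product(range(1, 7), repeat=2))   # equally likely outcomes s

def expectation(rv):
    """E[rv] = sum over outcomes s of rv(s) p(s), with p(s) = 1/36."""
    return sum(Fraction(rv(s)) for s in OUTCOMES) / len(OUTCOMES)

X = lambda s: s[0]            # first die
Y = lambda s: s[0] + s[1]     # total of both dice, dependent on X

lhs_sum = expectation(lambda s: X(s) + Y(s))
lhs_prod = expectation(lambda s: X(s) * Y(s))
print("E[X+Y] =", lhs_sum, " E[X]+E[Y] =", expectation(X) + expectation(Y))
print("E[XY]  =", lhs_prod, " E[X]E[Y]  =", expectation(X) * expectation(Y))
```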

risk • Alice & Bob are gambling (again). X = Alice’s gain per flip: • E[X] = 0 • . . . Time passes . . . • Alice (yawning) says “let’s raise the stakes” • E[Y] = 0, as before. • Are you (Bob) equally happy to play the new game?

• E[X] measures the “average” or “central tendency” of X.
• What about its variability?
• If E[X] = μ, then E[|X-μ|] seems like a natural quantity to look at: how much do we expect X to deviate from its average. Unfortunately, it’s a bit inconvenient mathematically; the following is easier/more common.
• Definition
• The variance of a random variable X with mean E[X] = μ is
• Var[X] = E[(X-μ)^2], often denoted σ^2.
• The standard deviation of X is σ = √Var[X]

what does variance tell us?
• The variance of a random variable X with mean E[X] = μ is
• Var[X] = E[(X-μ)^2], often denoted σ^2.
• I: Square always ≥ 0, and exaggerated as X moves away from μ, so Var[X] emphasizes deviation from the mean.
• II: Numbers vary a lot depending on exact distribution of X, but typically X is
• within μ ± σ ~66% of the time, and
• within μ ± 2σ ~95% of the time.
• (We’ll see the reasons for this soon.)
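The definition Var[X] = E[(X-μ)^2] translates directly into code. The sketch below applies it to the earlier coin game (net gain +1, 0, -1 with probabilities 1/4, 1/2, 1/4) and also checks the standard identity Var[X] = E[X^2] - E[X]^2, which is a well-known fact rather than something stated on these slides. Function names are illustrative.

```python
from fractions import Fraction

def mean(pmf):
    """E[X] for a pmf given as {value: probability}."""
    return sum(x * p for x, p in pmf.items())

def variance(pmf):
    """Var[X] = E[(X - mu)^2]."""
    mu = mean(pmf)
    return sum((x - mu) ** 2 * p for x, p in pmf.items())

def second_moment(pmf):
    """E[X^2]."""
    return sum(x ** 2 * p for x, p in pmf.items())

X = {1: Fraction(1, 4), 0: Fraction(1, 2), -1: Fraction(1, 4)}
print("E[X]   =", mean(X))
print("Var[X] =", variance(X))
print("E[X^2] - E[X]^2 =", second_moment(X) - mean(X) ** 2)
```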

mean and variance
• μ = E[X] is about location; σ = √Var(X) is about spread
[Figure: # heads in 20 flips, p = .5, with μ marked; σ ≈ 2.2]
[Figure: # heads in 150 flips, p = .5, with μ marked; σ ≈ 6.1]
(and note σ is bigger in absolute terms in the second example, but smaller as a proportion of the max.)

risk • Alice & Bob are gambling (again). X = Alice’s gain per flip: • E[X] = 0 Var[X] = 1 • . . . Time passes . . . • Alice (yawning) says “let’s raise the stakes” • E[Y] = 0, as before. Var[Y] = 1,000,000 • Are you (Bob) equally happy to play the new game?

example
• Two games:
• a) flip 1 coin, win Y = $100 if heads, $-100 if tails
• b) flip 100 coins, win Z = (#(heads) - #(tails)) dollars
• Same expectation in both: E[Y] = E[Z] = 0
• Same extremes in both: max gain = $100; max loss = $100
• But variability is very different: σY = 100, σZ = 10
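The two standard deviations quoted for games (a) and (b) can be recomputed from the distributions. This is a minimal sketch assuming fair coins; Z is built from the binomial distribution of the number of heads k in 100 flips, using Z = k - (100 - k) = 2k - 100. The helper name `std_dev` is illustrative.

```python
from math import comb, isclose, sqrt

def std_dev(pmf):
    """Standard deviation of a pmf given as {value: probability}."""
    mu = sum(x * p for x, p in pmf.items())
    return sqrt(sum((x - mu) ** 2 * p for x, p in pmf.items()))

# Game (a): one fair flip, win or lose $100.
Y = {100: 0.5, -100: 0.5}

# Game (b): 100 fair flips; k heads gives Z = #heads - #tails = 2k - 100.
Z = {2 * k - 100: comb(100, k) / 2 ** 100 for k in range(101)}

print("sigma_Y =", std_dev(Y))
print("sigma_Z =", std_dev(Z))
```

Both games have the same mean and the same extremes, so the spread is the only thing separating them.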