From Crash-and-Recover to Sense-and-Adapt: Our Evolving Models of Computing Machines



Presentation Transcript

From Crash-and-Recover to Sense-and-Adapt: Our Evolving Models of Computing Machines
Rajesh K. Gupta, UC San Diego

To a software designer, all chips look alike. To a hardware engineer, a chip is delivered as per contract in a data-sheet.

Reality is: Computers are Built on STUFF THAT IS IMPERFECT AND…

…Changing
From Chiseled Objects to Molecular Assemblies
[Figure: 45nm implementation of LEON3 processor core. Courtesy: P. Gupta, UCLA]

Engineers Know How to “Sandbag”
• PVTA margins add to guardbands
• Static Process variation: effective transistor channel length and threshold voltage
• Dynamic variations: Temperature fluctuations, supply Voltage droops, and device Aging (NBTI, HCI)
[Figure: clock period made up of actual circuit delay plus guardband; guardband components: across-wafer frequency, VCC droop, temperature, aging]
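The stacking of margins can be made concrete with a small sketch in C. The struct, the function names, and the idea of expressing each margin in picoseconds are assumptions made for illustration, not values or code from the talk; the only point carried over from the slide is that each PVTA source adds its own margin on top of the actual circuit delay.

```c
#include <assert.h>

/* Hypothetical per-source timing margins, in picoseconds. */
typedef struct {
    int process_ps;      /* static process variation */
    int voltage_ps;      /* supply voltage droop */
    int temperature_ps;  /* temperature fluctuation */
    int aging_ps;        /* device aging (NBTI, HCI) */
} pvta_margins;

/* Worst-case design: the guardband is the sum of all margins. */
int guardband_ps(pvta_margins m) {
    assert(m.process_ps >= 0 && m.voltage_ps >= 0 &&
           m.temperature_ps >= 0 && m.aging_ps >= 0);
    return m.process_ps + m.voltage_ps + m.temperature_ps + m.aging_ps;
}

/* Clock period = actual circuit delay + guardband. */
int clock_period_ps(int actual_delay_ps, pvta_margins m) {
    return actual_delay_ps + guardband_ps(m);
}
```

With made-up margins of 50, 100, 60, and 40 ps on a 1000 ps path, the clock would be set to 1250 ps, so a fifth of the period is spent on conditions that may rarely occur together.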

Uncertainty Means Unpredictability
• VLSI Designer: Eliminate It
  • Capture physics into models
  • Statistical or plain-old Monte Carlo
  • Manufacturing, temperature effects
• Architect: Average it out
  • Workload (Dynamic) Variations
• Software, OS: Deny It
  • Simplify, re-organize OS/tasks, breaking these into parts that are precise (W.C.) and imprecise (Ave.)
Simulate ‘degraded’ netlist with model input changes (ΔVth).
Deterministic simulations capture known physical processes (e.g., aging).
Multiple (Monte Carlo) simulations wrapped around a nominal model.
Each doing their own thing, massive overdesign…

Let us step back a bit: HW-SW Stack
[Figure: stack of Applications, Operating System, and Hardware Abstraction Layer (HAL); x-axis: time or part]

HW-SW Stack: Overdesigned Hardware
• 20x in sleep power
• 50% in performance
• 40% larger chip
• 35% more active power
• 60% more sleep power
[Figure: Applications, Operating System, and Hardware Abstraction Layer (HAL) on top of overdesigned hardware; x-axis: time or part]

What if? Underdesigned Hardware
[Figure: Applications, Operating System, and Hardware Abstraction Layer (HAL) on top of underdesigned hardware; x-axis: time or part]

New Hardware-Software Interface: Underdesigned Hardware, Opportunistic Software
• Minimal variability handling in hardware
[Figure: Applications, Operating System, and Hardware Abstraction Layer (HAL) on top of underdesigned hardware, contrasted with traditional fault-tolerance; x-axis: time or part]

UnO Computing Machines Seek Opportunities Based on Sensing Results
• Do Nothing (Elastic User, Robust App)
• Change Algorithm Parameters (Codec Setting, Duty Cycle Ratio)
• Change Algorithm Implementation (Alternate code path, Dynamic recompilation)
• Change Hardware Operating Point (Disabling parts of the cache, Changing V-f)
Metadata Mechanisms: Reflection, Introspection
• Variability signatures: cache bit map, CPU speed-power map, memory access time, ALU error rates
Models, Sensors
• Variability manifestations: faulty cache bits, delay variation, power variation

UnO Computing Machines: Taxonomy of Underdesign
[Figure labels: Nominal Design, Performance Constraints, Manufacturing, Manufactured Die, Hardware Characterization Tests, Burn In, Signature, Manufactured Die With Stored Signatures, Die Specific Adaptation (Software, Hardware). Credit: Puneet Gupta/UCLA]

Several Fundamental Questions
• How do we distinguish code that needs to be accurate from code that does not?
• How fine-grained are these distinctions (or do they have to be)?
• How do we communicate this information across the stack in a manner that is robust and portable, and error-controllable (= safe)?
• What is the model of error that should be used in designing UnO machines?

Building Machines that Move from Crash & Recover to Sense & Adapt

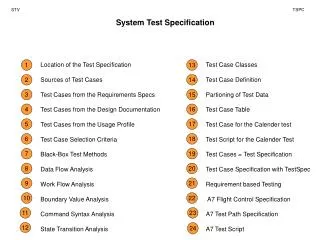

Expedition Grand Challenge & Questions
“Can microelectronic variability be controlled and utilized in building better computer systems?”
Three Goals:
• Address fundamental technical challenges (understand the problem)
• Create experimental systems (proof-of-concept prototypes)
• Pursue educational and broader-impact opportunities (ensure training for future talent)
Questions:
• What are the most effective ways to detect variability?
• What are software-visible manifestations?
• What are software mechanisms to exploit variability?
• How can designers and tools leverage adaptation?
• How do we verify and test hw-sw interfaces?

Thrusts traverse institutions on testbed vehicles seeding various projects

Observe and Control Variability Across Stack
The steps to build variability abstractions up to the SW layer:
• Monitor manifestations from the instruction level to the task level: instruction-level (ILV), sequence-level (SLV), procedure-level (PLV), and task-level (TLV) vulnerability.
• By the time we get to TLV, we are in a parallel software context: instruct the OpenMP scheduler, or even create an abstraction for programmers to express irregular and unstructured parallelism (code refactoring).
[Rahimi et al., DATE’12, ISLPED’12, TC’13, DATE’13]

Closer to HW: Uncertainty Manifestations
• The most immediate manifestations of variability are path delay and power variations.
• Path delay variations have been addressed extensively in delay fault detection by the test community.
• With variability, it is possible to do better by focusing on the actual mechanisms.
• For instance, a major source of timing variation is voltage droops, and errors matter when they end up in a state change.
Combine these two observations and you get a rich literature in recent years on handling variability-induced errors: Razor, EDS, TRC, …

Detecting and Correcting Timing Errors
• Detect errors, tune the supply voltage to reach a target error rate, borrow time, stretch the clock
• Exploit detection circuits (e.g., for voltage droops), double sampling with shadow latches, and the data dependence of circuit delays
• Enable reduction in voltage margin
• Manage timing guardbands and voltage margins
• Tunable replica circuits allow non-intrusive operation
[Figure: voltage droop waveforms]
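The idea of tuning the supply voltage toward a target error rate can be sketched as a simple step controller in C. This is an illustrative assumption about how such a loop might look in software, not the control law of Razor or any circuit cited in the talk; the struct, the function name, and the fixed step size are all hypothetical.

```c
#include <assert.h>

/* Hypothetical controller state; all voltages in millivolts. */
typedef struct {
    int vdd_mv;   /* current supply voltage */
    int vmin_mv;  /* lowest allowed voltage */
    int vmax_mv;  /* highest allowed voltage */
    int step_mv;  /* adjustment applied per control step */
} vdd_controller;

/* One control step. `errors` is the number of timing errors flagged by the
 * detection circuits over a window of `cycles` clock cycles. */
int vdd_update(vdd_controller *c, unsigned errors, unsigned cycles,
               double target_rate) {
    assert(c != 0 && cycles > 0 && c->step_mv > 0);
    double rate = (double)errors / (double)cycles;
    if (rate > target_rate)
        c->vdd_mv += c->step_mv;      /* too many errors: add margin */
    else if (rate < target_rate)
        c->vdd_mv -= c->step_mv;      /* spare margin: reclaim it */
    /* Keep the voltage inside the allowed range. */
    if (c->vdd_mv > c->vmax_mv) c->vdd_mv = c->vmax_mv;
    if (c->vdd_mv < c->vmin_mv) c->vdd_mv = c->vmin_mv;
    return c->vdd_mv;
}
```

Called once per observation window, the controller lowers the voltage while errors are rarer than the target and raises it again when a droop pushes the error rate up, which is how a detection circuit lets the static voltage margin shrink.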

Sensing: Razor, Razor II, EDS, Bubble Razor
• Transition Detector with Time Borrowing [Bowman’09]
• Double Sampling (Razor I) [Ernst’03]
• Double Sampling with Time Borrowing [Bowman’09]
• Razor II [Das’09]
• EDS [Bowman’11]

Task Ingredients: Model, Sense, Predict, Adapt
• Sense & Adapt: observation using in situ monitors (Razor, EDS) with cycle-by-cycle corrections (leveraging CMOS knobs or replay)
• Predict & Prevent: relying on external or replica monitors; model-based rules derive an adaptive guardband to prevent errors
[Figure labels: Sense (detect), Adapt (correct), Prevent, Model, Sensors]

Don’t Fear Errors: Bits Flip, Instructions Don’t Always Execute Correctly
CHARACTERIZE, MODEL, PREDICT
Bit Error Rate, Timing Error Rate, Instruction Error Rate, …

Characterize Instructions and Instruction Sequences for Vulnerability to Timing Errors
• Characterize LEON3 in 65nm TSMC across the full range of operating conditions: (−40°C to 125°C, 0.72V to 1.1V)
• Dynamic variations cause the critical path delay to increase by a factor of 6.1×.
[Figure: critical path (ns) across operating conditions]

Generate ILV, SLV “Metadata”
• The ILV (SLV) for each instruction $i$ (sequence $i$) at every operating condition is quantified as:
$$\mathrm{ILV}_i = \frac{1}{N_i}\sum_{j=1}^{N_i}\mathrm{Violation}_j, \qquad \mathrm{SLV}_i = \frac{1}{M_i}\sum_{j=1}^{M_i}\mathrm{Violation}_j$$
• where $N_i$ ($M_i$) is the total number of clock cycles in the Monte Carlo simulation of instruction $i$ (sequence $i$) with random operands.
• $\mathrm{Violation}_j$ indicates whether there is a violated stage at clock cycle $j$ (1) or not (0).
• $\mathrm{ILV}_i$ ($\mathrm{SLV}_i$) is thus the total number of violated cycles over the total simulated cycles for instruction $i$ (sequence $i$).
Now, I am going to make a jump over characterization data…
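The ILV/SLV definition above reduces to a ratio of violated cycles to simulated cycles. A minimal sketch in C follows; the function name and the byte-array encoding of the per-cycle violation flags are assumptions for illustration, since the talk does not show how the simulation traces are stored.

```c
#include <assert.h>
#include <stddef.h>

/* violation[j] is nonzero if any pipeline stage violated timing at clock
 * cycle j of the Monte Carlo simulation, and zero otherwise.
 * Returns violated cycles / total simulated cycles, i.e. the ILV of an
 * instruction or the SLV of a sequence, depending on what was simulated. */
double vulnerability(const unsigned char *violation, size_t n_cycles) {
    assert(violation != NULL && n_cycles > 0);
    size_t violated = 0;
    for (size_t j = 0; j < n_cycles; ++j)
        violated += (violation[j] != 0);
    return (double)violated / (double)n_cycles;
}
```

For a trace of 8 cycles with violations in 2 of them, the function returns 0.25. One such value would be stored per instruction (or sequence) and per operating condition.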

Connect the Dots from Paths to Instructions
• Observe: The execute and memory parts are sensitive to V/T variations, and also exhibit a large number of critical paths in comparison to the rest of the processor.
• Hypothesis: Instructions that significantly exercise the execute and memory stages are likely to be more vulnerable to V/T variations.
Instruction-level Vulnerability (ILV)
• For SPARC V8 instructions, (V, T, F) are varied and ILV_i is evaluated for every instruction_i with random operands; SLV_i is evaluated for a high-frequency sequence_i of instructions.
[Figure panels: T = 125°C; VDD = 1.1V]

ILV and SLV: Partition Them into Groups According to Their Vulnerability to Timing Errors
• For every operating condition: ILV (3rd Class) ≥ ILV (2nd Class) ≥ ILV (1st Class); SLV (Class II) ≥ SLV (Class I)
• ILV: 1st class = logical and arithmetic; 2nd class = memory; 3rd class = multiply and divide.
• SLV: Class II = mixtures of memory, logic, control; Class I = logical and arithmetic.
• SLV covers the top 20 high-frequency sequences from 80 billion dynamic instructions of 32 benchmarks.
ILV and SLV classification for integer SPARC V8 ISA.
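The three ILV classes can be written down as a lookup from instruction kind to class. The sketch below, in C, uses a hypothetical `op_kind` enumeration rather than real SPARC V8 opcodes; only the grouping itself comes from the slide.

```c
#include <assert.h>

/* Hypothetical coarse instruction kinds (not actual SPARC V8 opcodes).
 * OP_ARITHMETIC means add/subtract-style operations; multiply and divide
 * are listed separately because the slide puts them in their own class. */
typedef enum {
    OP_LOGICAL,
    OP_ARITHMETIC,
    OP_MEMORY,
    OP_MULTIPLY,
    OP_DIVIDE
} op_kind;

/* Returns the ILV class (1 = least vulnerable, 3 = most vulnerable). */
int ilv_class(op_kind k) {
    switch (k) {
    case OP_LOGICAL:
    case OP_ARITHMETIC:
        return 1;
    case OP_MEMORY:
        return 2;
    case OP_MULTIPLY:
    case OP_DIVIDE:
        return 3;
    }
    assert(!"unknown op_kind");
    return 3; /* conservative fallback: treat as most vulnerable */
}
```

A compiler pass or a runtime table could use such a mapping to decide how much guardband an instruction needs at a given operating condition.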

Use Instruction Vulnerabilities to Generate Better Code, Call/Returns APPLY: STATICALLY TO ACHIEVE HIGHER INSTRUCTION THROUGHPUT, LOWER POWER

Now Use ILV, SLV to Dynamically Adapt Guardbands
Application code (compile time, variability-aware (VA) compiler using ILV and SLV, per application type):
• Error-tolerant applications
  • Duplication of critical instructions
  • Satisfying the fidelity metric
• Error-intolerant applications
  • Increasing the percentage of Class I sequences, i.e., increasing the number of arithmetic instructions relative to the memory and control-flow instructions, e.g., through loop unrolling
Adaptive guardbanding via memory-mapped I/O (runtime):
• Adaptive clock scaling for each class of sequences mitigates the conservative inter- and intra-corner guardbanding.
• At runtime, in every cycle, the PLUT module sends the desired frequency to the adaptive clocking circuit, utilizing the characterized SLV metadata of the current sequence and the operating condition (V, T) monitored by the CPM.
[Figure: LEON3 core with pipeline stages IF, ID, RA, EX, ME, WB, plus I$, D$, PLUT, CPM, and adaptive clocking]
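The PLUT step described above amounts to a table lookup keyed by the class of the current sequence and the operating condition reported by the CPM. A sketch in C is given below; the number of (V, T) bins, the frequencies in the table, and all identifiers are made-up placeholders, since the talk does not give the table contents.

```c
#include <assert.h>

enum { SLV_CLASS_I = 0, SLV_CLASS_II = 1, NUM_CLASSES = 2 };
enum { NUM_CORNERS = 3 };  /* hypothetical (V,T) bins reported by the CPM */

/* Hypothetical PLUT contents: safe clock frequency in MHz for each
 * operating-condition bin and sequence class. Class I sequences are less
 * vulnerable, so they are given the higher frequency in every bin. */
static const int plut_mhz[NUM_CORNERS][NUM_CLASSES] = {
    { 400, 350 },  /* bin 0: slowest operating condition */
    { 600, 520 },  /* bin 1 */
    { 800, 700 },  /* bin 2: fastest operating condition */
};

/* Frequency to request from the adaptive clocking circuit. */
int plut_lookup(int corner, int slv_class) {
    assert(corner >= 0 && corner < NUM_CORNERS);
    assert(slv_class >= 0 && slv_class < NUM_CLASSES);
    return plut_mhz[corner][slv_class];
}
```

Indexing by operating condition removes the inter-corner guardband, and indexing by sequence class removes part of the intra-corner guardband, which is the split the slide describes.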

Utilizing SLV at Compile Time
• Applying loop unrolling produces a longer chain of ALU instructions, and as a result the percentage of Class I sequences increases by up to 41%, and by 31% on average.
• Hence, adaptive guardbanding benefits from this compiler transformation to further reduce the guardband for Class I sequences.
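As a reminder of what the transformation does, here is a hand-unrolled reduction in C next to its rolled form. The functions are illustrative only; a variability-aware compiler would apply the transformation automatically, and the unroll factor of 4 is an arbitrary choice.

```c
#include <assert.h>
#include <stddef.h>

/* Rolled loop: every addition is followed by loop-control instructions
 * (increment, compare, branch). */
int sum_rolled(const int *a, size_t n) {
    int s = 0;
    for (size_t i = 0; i < n; ++i)
        s += a[i];
    return s;
}

/* Unrolled by 4: four additions per loop-control sequence, so arithmetic
 * makes up a larger share of the dynamic instruction stream. */
int sum_unrolled4(const int *a, size_t n) {
    int s = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        s += a[i];
        s += a[i + 1];
        s += a[i + 2];
        s += a[i + 3];
    }
    for (; i < n; ++i)  /* remainder iterations */
        s += a[i];
    return s;
}
```

Both functions return the same result; the unrolled version simply executes fewer control-flow instructions per arithmetic instruction, which is what raises the share of Class I sequences.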

Effectiveness of Adaptive Guardbanding
• Using online SLV coupled with offline compiler techniques enables the processor to achieve a 1.6× average speedup for error-intolerant applications compared to recent work [Hoang’11], by adapting the cycle time to dynamic variations (inter-corner) and to different instruction sequences (intra-corner).
• Adaptive guardbanding achieves up to 1.9× performance improvement for error-tolerant (probabilistic) applications in comparison to the traditional worst-case design.

Example: Procedure Hopping in a Clustered CPU, Each Core with Its Own Voltage Domain
• Statically characterize each procedure for PLV
• A core increases its voltage if the monitored delay is high
• A procedure hops from one core to another if its voltage variation is high
• Less than 1% cycle overhead in EEMBC
[Figure: cores at VDD = 0.81V and VDD = 0.99V; VA-VDD-Hopping = (0.81V, 0.99V)]

HW/SW Collaborative Architecture to Support Intra-cluster Procedure Hopping
• The code is easily accessible via the shared L1 I$.
• The data and parameters are passed through the shared stack in the TCDM (Tightly Coupled Data Memory).
• A procedure hopping information table (PHIT) keeps the status of a migrated procedure.

Combine Characterization with Online Recognition APPLY: MODEL, SENSE, and ADAPT DYNAMICALLY

Consider a Full Permutation of PVTA Parameters
• 10 32-bit integer and 15 single-precision FP functional units (FUs)
• For each FU_i working with tclk under a given PVTA variation, we defined a Timing Error Rate (TER).

Parametric Model Fitting
• We used supervised learning (linear discriminant analysis) to generate a parametric model at the FU level that relates the variation of PVTA parameters and tclk to classes of TER.
• On average, across all FUs, the resubstitution error is 0.036, meaning the models classify nearly all data correctly.
• For extra characterization points, the model makes correct estimates for 97% of out-of-sample data. The remaining 3% is misclassified into the high-error-rate class, C_H, and thus still gets a safe guardband.
[Figure: HFG ASIC analysis flow for TER; PVTA and tclk feed linear discriminant analysis, producing classes of TER and the parametric model]
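Once fitted, a linear discriminant model classifies a point by evaluating one linear score per class and picking the largest. The C sketch below shows only that decision rule; the feature encoding (P, V, T, A, tclk as five numbers), the struct layout, and any weights passed in are hypothetical, and the offline fitting step is not shown.

```c
#include <assert.h>
#include <stddef.h>

#define N_FEATURES 5  /* hypothetical encoding: P, V, T, A, tclk */

/* One linear discriminant per TER class: score(x) = w . x + b. */
typedef struct {
    double w[N_FEATURES];
    double b;
} discriminant;

/* Returns the index of the TER class with the largest score for the
 * operating point x. Ties go to the lower class index. */
size_t classify_ter(const discriminant *classes, size_t n_classes,
                    const double x[N_FEATURES]) {
    assert(classes != NULL && n_classes > 0);
    size_t best = 0;
    double best_score = 0.0;
    for (size_t k = 0; k < n_classes; ++k) {
        double score = classes[k].b;
        for (size_t f = 0; f < N_FEATURES; ++f)
            score += classes[k].w[f] * x[f];
        if (k == 0 || score > best_score) {
            best = k;
            best_score = score;
        }
    }
    return best;
}
```

At runtime, the sensed PVTA values and the candidate clock period would form `x`, and the predicted class would tell the controller whether that clock period is safe for the functional unit.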

Delay Variation and TER Characterization
• At design time, the delay of the FP adder has a large uncertainty of [0.73ns, 1.32ns], since the actual values of the PVTA parameters are unknown.

Hierarchical Sensors Observability
• The question is: what mix of monitors would be useful?
• The more sensors we provide for an FU, the more its conservative guardband can be reduced.
• The guardband of the FP adder can be reduced by up to 8% (P_sensor), 24% (PA_sensors), 28% (PAT_sensors), 44% (PATV_sensors)
• In-situ PVT sensors impose 1−3% area overhead [Bowman’09]
• Five replica PVT sensors increase area by 0.2% [Lefurgy’11]
• Banks of 96 NBTI aging sensors occupy less than 0.01% of the core's area [Singh’11]

Online Utilization of Guardbanding
The control system tunes the clock frequency through an online model-based rule.
• Fine-grained: instruction-by-instruction monitoring and adaptation that uses signals of PATV sensors from individual FUs
• Coarse-grained: kernel-level monitoring that uses representative PATV sensors for the entire execution stage of the pipeline

Throughput Benefit of HFG (target TER = 0)
• Kernel-level monitoring improves throughput by 70% from P to PATV sensors.
• Instruction-level monitoring improves throughput by 1.8-2.1×.

Putting It Together: Coordinated Adaptation to Propagate Errors Towards the Application
Consider shared 8-FPU, 16-core architectures.

Accurate, Approximate Operating Modes
Modeled after the STM P2012 16-core machine
• Accurate mode: every pipeline uses (with 3.8% area overhead)
  • EDS circuit sensors to detect any timing errors
  • an ECU to correct errors using a multiple-issue operation replay mechanism (without changing frequency)

Accuracy-Configurable Architecture
• In the approximate mode:
  • The pipeline disables the EDS sensors on the N least significant bits of the fraction, where N is reprogrammable through a memory-mapped register.
  • The sign and the exponent bits are always protected by EDS.
  • Thus the pipeline ignores any timing error in the N least significant bits of the fraction and saves on the recovery cost.
• Switching between modes partially disables/enables the error detection circuits on N bits of the fraction, so the FP pipeline can efficiently execute subsequent interleaved accurate or approximate software blocks.
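The effect of the threshold N can be modeled in software as a bit mask over a single-precision result (1 sign bit, 8 exponent bits, 23 fraction bits in IEEE 754 binary32). The C sketch below is a behavioral model under that assumption; the function names and the idea of representing detected errors as a per-bit word are illustrative, not the hardware interface.

```c
#include <assert.h>
#include <stdint.h>

#define FRACTION_BITS 23  /* IEEE 754 binary32 fraction width */

/* Mask of result bits still monitored by EDS when the n least significant
 * fraction bits are left unprotected. Sign and exponent are always kept. */
uint32_t eds_protected_mask(unsigned n) {
    assert(n <= FRACTION_BITS);
    uint32_t unprotected = (UINT32_C(1) << n) - 1u;
    return (uint32_t)~unprotected;
}

/* error_bits has a 1 for every result bit that saw a timing error.
 * Returns 1 if the error must be corrected (replayed), 0 if ignorable. */
int needs_recovery(uint32_t error_bits, unsigned n) {
    return (error_bits & eds_protected_mask(n)) != 0;
}
```

With N = 20, only the sign, the exponent, and the top 3 fraction bits remain protected (mask `0xFFF00000`), so an error confined to fraction bit 10 is ignored while an error in bit 22 still triggers recovery. N = 0 corresponds to the accurate mode.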

Fine-grain Interleaving Possible Through Coordination and Controlled Approximation
• Architecture: accuracy-reconfigurable FPUs that are shared among tightly-coupled processors and support online FPV characterization
• Compiler: OpenMP pragmas for approximate FP computations; profiling technique to identify tolerable error significance and error rate
• Runtime: the scheduler utilizes FPV metadata and promotes FPUs to accurate mode, or demotes them to approximate mode, depending on the code region requirements.
Either ignore the timing errors (in approximate regions) or reduce the frequency of errors by assigning computations to correctable hardware resources, at a cost. Ensure the safety of ignoring errors through a set of rules.

FP Vulnerability Dynamically Monitored and Controlled by ECU
• The percentage of cycles with timing errors, as reported by EDS sensors, is captured as FPV metadata.
• Metadata is visible to the software through memory-mapped registers.
• Enables the runtime scheduler to perform online selection of the best FP pipeline candidates.
• Use low-FPV units for accurate blocks, or steer errors without correction to the application.
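The selection of the best FP pipeline candidate for an accurate block can be sketched as a minimum search over the FPV metadata. In the C sketch below, the `fpv` array stands in for values the scheduler would read from the memory-mapped registers; the function name and the array representation are assumptions, and real scheduling would also have to account for which units are busy.

```c
#include <assert.h>
#include <stddef.h>

/* fpv[k] is the fraction of cycles with timing errors observed on FPU k.
 * Returns the index of the unit with the lowest FPV; ties go to the
 * lowest index. */
size_t pick_accurate_fpu(const double *fpv, size_t n_fpus) {
    assert(fpv != NULL && n_fpus > 0);
    size_t best = 0;
    for (size_t k = 1; k < n_fpus; ++k)
        if (fpv[k] < fpv[best])
            best = k;
    return best;
}
```

Running accurate blocks on the least vulnerable unit keeps the number of replays low, while the remaining units can serve approximate blocks whose errors are passed on uncorrected.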

OpenMP Compiler Extension
New directives and clause:

    #pragma omp accurate
        structured-block
    #pragma omp approximate [clause]
        structured-block
    error_significance_threshold(<value N>)

Code snippet for Gaussian filter utilizing OpenMP variability-aware directives:

    #pragma omp parallel
    {
      #pragma omp accurate
      #pragma omp for
      for (i = K/2; i < (IMG_M - K/2); ++i) {        // iterate over image
        for (j = K/2; j < (IMG_N - K/2); ++j) {
          float sum = 0;
          int ii, jj;
          for (ii = -K/2; ii <= K/2; ++ii) {         // iterate over kernel
            for (jj = -K/2; jj <= K/2; ++jj) {
              float data = in[i+ii][j+jj];
              float coef = coeffs[ii+K/2][jj+K/2];
              float result;
              #pragma omp approximate error_significance_threshold(20)
              {
                result = data * coef;
                sum += result;
              }
            }
          }
          out[i][j] = sum/scale;
        }
      }
    }

The approximate block is lowered to calls that invoke the runtime FPU scheduler, which programs the FPU:

    int ID = GOMP_resolve_FP (GOMP_APPROX, GOMP_MUL, 20);
    GOMP_FP (ID, data, coef, &result);
    ID = GOMP_resolve_FP (GOMP_APPROX, GOMP_ADD, 20);
    GOMP_FP (ID, sum, result, &sum);

FPV Metadata Can Even Drive Synthesis!
• Utilizing fast, leaky standard cells (low-VTH) for these paths
• Utilizing the regular and slow standard cells (regular-VTH and high-VTH) for the rest of the paths, since errors can be ignored!

Save Recovery Time, Energy Using FPV Monitoring (TSMC 45nm)
• Error-tolerant applications: Gaussian, Sobel filters
  • PSNR results show an error significance threshold at N=20 while maintaining >30 dB
  • 36% more energy-efficient FPUs, recovery cycles reduced by 46%
• Error-intolerant applications: 5 kernel codes
  • 22% average energy savings

Expedition Experimental Platforms & Artifacts
• Interesting and unique challenges in building research testbeds that drive our explorations
• Mock-ups don’t go far since variability is at the heart of microelectronic scaling. Need platforms that capture scaling and integration aspects.
• Testbeds to observe (Molecule, GreenLight, Ming), control (Oven, ERSA)
[Figure: Molecule, Ming the Merciless, Red Cooper, ERSA@BEE3]

Red Cooper Testbed
• Customized chip with processor + speed/leakage sensors
• Testbed board to finish the sensor feedback loop on board
• Used in building a duty-cycled OS based on variability sensors
[Figure labels: Applications, Runtime, Microarchitecture and Compilers; Power, Performance, Errors; Vendor, Process, Ambient, Aging; CPU, Mem, Storage, Accelerators, Network, Energy Source (Batteries)]