Floating Point Theory, Notation, MIPS 19,21 June 2007

320 likes | 631 Vues

CDA 3101 Summer 2007 Introduction to Computer Organization. Floating Point Theory, Notation, MIPS 19,21 June 2007. Overview. Floating point numbers Scientific notation Decimal scientific notation Binary scientific notation

Floating Point Theory, Notation, MIPS 19,21 June 2007

E N D

Presentation Transcript

CDA 3101 Summer 2007Introduction to Computer Organization Floating Point Theory, Notation, MIPS 19,21 June 2007

Overview • Floating point numbers • Scientific notation • Decimal scientific notation • Binary scientific notation • IEEE 754 FP Standard • Floating point representation inside a computer • Greater range vs. precision • Decimal to Floating Point conversion • Type is not associated with data • MIPS floating point instructions, registers

Computer Numbers • Computers are made to deal with numbers • What can we represent in n bits? • Unsigned integers: 0 to 2n - 1 • Signed integers: -2(n-1) to 2(n-1) - 1 • What about other numbers? • Very large numbers? (seconds/century) 3,155,760,00010 (3.1557610 x 109) • Very small numbers? (atomic diameter) 0.0000000110 (1.010 x 10-8) • Rationals (repeating pattern) 2/3 (0.666666666. . .) • Irrationals: 21/2 (1.414213562373. . .) • Transcendentals : e (2.718...), (3.141...)

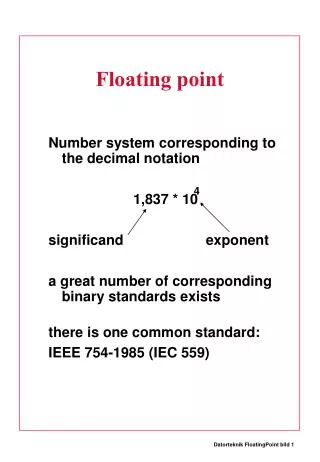

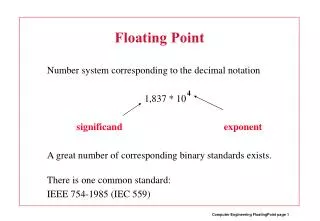

exponent mantissa radix (base) decimal point Scientific Notation 6.02 x 1023 • Normalized form: no leadings 0s (exactly one digit to left of decimal point) • Alternatives to representing 1/1,000,000,000 • Normalized: 1.0 x 10-9 • Not normalized: 0.1 x 10-8, 10.0 x 10-10

Exponent radix (base) “binary point” Binary Scientific Notation Mantissa • Floating point arithmetic • Binary point is not fixed (as it is for integers) • Declare such variable in C as float 1.0two x 2-1

31 30 23 22 0 S Exponent Significand 1 bit 8 bits 23 bits Floating Point Representation • Normal format:+1.xxxxxxxxxxtwo*2yyyytwo • Multiple of Word Size (32 bits) • S represents SignExponent represents y’s Significand represents x’s • Represent numbers as small as 2.0 x 10-38 to as large as 2.0 x 1038

Overflow and Underflow • Overflow • Result is too large (> 2.0x1038 ) • Exponent larger than represented in 8-bit Exponent field • Underflow • Result is too small • >0, < 2.0x10-38 • Negative exponent larger than represented in 8-bit Exponent field • How to reduce chances of overflow or underflow?

Double Precision FP 31 30 20 19 0 • Use two words (64 bits) S Exponent Significand 1 bit 11 bits 20 bits Significand (cont’d) 32 bits • C variable declared as double • Represent numbers almost as small as 2.0 x 10-308 to almost as large as 2.0 x 10308 • Primary advantage is greater accuracy (52 bits)

Floating Point Representation 31 30 23 22 0 Single Precision Exponent Significand S 1 bit 8 bits 23 bits 31 30 20 19 0 Significand S Exponent Double Precision 1 bit 11 bits 20 bits Significand (cont’d) 32 bits 127 (SP) 1023 (DP) Bias Normalized scientific notation: +1.xxxxtwo*2yyyytwo Exponent: biased notation Significand: sign – magnitude notation

IEEE 754 FP Standard • Used in almost all computers (since 1980) • Porting of FP programs • Quality of FP computer arithmetic • Sign bit: • Significand: • Leading 1 implicit for normalized numbers • 1 + 23 bits single, 1 + 52 bits double • always true: 0 < Significand < 1 • 0 has no leading 1 • Reserve exponent value 0 just for number 0 1 means negative 0 means positive (-1)S * (1 + Significand) * 2Exp

IEEE 754 Exponent • Use FP numbers even without FP hardware • Sort records with FP numbers using integer compares • Break FP number into 3 parts: compare signs, then compare exponents, then compare significands • Faster (single comparison, ideally) • Highest order bit is sign ( negative < positive) • Exponent next, so big exponent => bigger # • Significand last: exponents same => bigger #

Biased Notation for Exponents • Two’s complement does not work for exponent • Most negative exponent: 00000001two • Most positive exponent: 11111110two • Bias: number added to real exponent • 127 for single precision • 1023 for double precision • 1.0 * 2-1 0 0111 1110 0000 0000 0000 0000 0000 000 (-1)S * (1 + Significand) * 2(Exponent - Bias)

Significand • Method 1 (Fractions): • In decimal: 0.34010 => 34010/100010 => 3410/10010 • In binary: 0.1102 => 1102/10002 (610/810) => 112/1002 (310/410) • Helps understand the meaning of the significand • Method 2 (Place Values): • Convert from scientific notation • In decimal: 1.6732 = (1x100) + (6x10-1) + (7x10-2) + (3x10-3) + (2x10-4) • In binary: 1.1001 = (1x20) + (1x2-1) + (0x2-2) + (0x2-3) + (1x2-4) • Interpretation of value in each position extends beyond the decimal/binary point • Good for quickly calculating significand value • Use this method for translating FP numbers

0 0110 1000 101 0101 0100 0011 0100 0010 Binary to Decimal FP • Sign: 0 => positive • Exponent: • 0110 1000two = 104ten • Bias adjustment: 104 - 127 = -23 • Significand: • 1 + 1x2-1+ 0x2-2 + 1x2-3 + 0x2-4 + 1x2-5 +...=1+2-1+2-3 +2-5 +2-7 +2-9 +2-14 +2-15 +2-17 +2-22= 1.0 + 0.666115 • Represents: 1.666115*2-23 ~ 1.986*10-7

1 0111 1110 100 0000 0000 0000 0000 0000 Decimal to Binary FP (1/2) • Simple Case: If denominator is a power of 2 (2, 4, 8, 16, etc.), then it’s easy. • Example: Binary FP representation of -0.75 • -0.75 = -3/4 • -11two/100two = -0.11two • Normalized to -1.1two x 2-1 • (-1)S x (1 + Significand) x 2(Exponent-127) • (-1)1 x (1 + .100 0000 ... 0000) x 2(126-127)

Decimal to Binary FP (2/2) • Denominator is not an exponent of 2 • Number can not be represented precisely • Lots of significand bits for precision • Difficult part: get the significand • Rational numbers have a repeating pattern • Conversion • Write out binary number with repeating pattern. • Cut it off after correct number of bits (different for single vs. double precision). • Derive sign, exponent and significand fields.

Decimal to Binary 1 1000 0000 101 0101 0101 0101 0101 0101 0.33333333 x 2 0 .66666666 0.66666666 x 2 1 .33333332 0.33333332 x 2 0 .66666664 - 1 1 . 0 1 0 1 0 1 0 . . . - 3 . 3 3 3 3 3 3… => - 1.1010101.. x 21 • Significand: 101 0101 0101 0101 0101 0101 • Sign: negative => 1 • Exponent: 1+ 127 = 128ten= 1000 0000two

Types and Data 0011 0100 0101 0101 0100 0011 0100 0010 • 1.986 *10-7 • 878,003,010 • “4UCB” ori $s5, $v0, 17218 • Data can be anything; operation of instruction that accesses operand determines its type! • Power/danger of unrestricted addresses/pointers: • Use ASCII as FP, instructions as data, integers as instructions, ... • Security holes in programs

IEEE 754 Special Values Negative Underflow Positive Underflow Negative Overflow Expressible Negative Numbers Expressible Positive Numbers Positive Overflow -(1-2-24)*2128 -.5*2-127 0 .5*2-127 (1-2-24)*2128

Value: Not a Number • What is the result of: sqrt(-4.0)or 0/0? • If infinity is not an error, these shouldn’t be either. • Called Not a Number (NaN) • Exponent = 255, Significand nonzero • Applications • NaNs help with debugging • They contaminate: op(NaN, X) = NaN • Don’t use NaN • Ask math majors

b Gap! - + 0 a - + 0 Value: Denorms • Problem: There’s a gap among representable FP numbers around 0 • Smallest pos num: a = 1.0… 2 * 2-126 = 2-126 • 2nd smallest pos num: b = 1.001 2 * 2-126 = 2-126 + 2-150 • a - 0 = 2-126 • b - a = 2-150 • Solution: • Denormalized numbers: no leading 1 • Smallest pos num: a = 2-150 • 2nd smallest num: b = 2-149

Rounding • Math on real numbers => rounding • Rounding also occurs when converting types • Double single precision integer • Round towards +infinity • ALWAYS round “up”: 2.001 => 3; -2.001 => -2 • Round towards -infinity • ALWAYS round “down”: 1.999 => 1; -1.999 => -2 • Truncate • Just drop the last bits (round towards 0) • Round to (nearest) even (default) • 2.5 => 2; 3.5 => 4

FP Fallacy • FP Add, subtract associative: FALSE! • x = – 1.5 x 1038, y = 1.5 x 1038, and z = 1.0 • x + (y + z) = –1.5x1038 + (1.5x1038 + 1.0) = –1.5x1038 + (1.5x1038) = 0.0 • (x + y) + z = (–1.5x1038 + 1.5x1038) + 1.0 = (0.0) + 1.0 = 1.0 • Floating Point add, subtract are not associative! • Why? FP result approximates real result • 1.5 x 1038 is so much larger than 1.0 that 1.5 x 1038 + 1.0 in floating point representation is still 1.5 x 1038

FP Addition / Subtraction • Much more difficult than with integers • Can’t just add significands • Algorithm • De-normalize to match exponents • Add (subtract) significands to get resulting one • Keep the same exponent • Normalize (possibly changing exponent) • Note: If signs differ, just perform a subtract instead.

MIPS FP Architecture (1/2) • Separate floating point instructions: • Single Precision: add.s, sub.s, mul.s, div.s • Double Precision: add.d, sub.d, mul.d, div.d • These instructions are far more complicated than their integer counterparts • Problems: • It’s inefficient to have different instructions take vastly differing amounts of time. • Generally, a particular piece of data will not change from FP to int, or vice versa, within a program. • Some programs do not do floating point calculations • It takes lots of hardware relative to integers to do FP fast

MIPS FP Architecture (2/2) • 1990 Solution: separate chip that handles only FP. • Coprocessor 1: FP chip • Contains 32 32-bit registers: $f0, $f1, … • Most registers specified in .s and .d instructions ($f) • Separate load and store: lwc1 and swc1(“load word coprocessor 1”, “store …”) • Double Precision: by convention, even/odd pair contain one DP FP number: $f0/$f1, … , $f30/$f31 • 1990 Computer contains multiple separate chips: • Processor: handles all the normal stuff • Coprocessor 1: handles FP and only FP; • Move data between main processor and coprocessors: • mfc0, mtc0, mfc1, mtc1, etc.

C => MIPS float f2c (float fahr) { return ((5.0 / 9.0) * (fahr – 32.0)); } F2c: lwc1 $f16, const5($gp) # $f16 = 5.0 lwc1 $f18, const9($gp) # $f18 = 9.0 div.s $f16, $f16, $f18 # $f16 = 5.0/9.0 lwc1 $f20, const32($gp) # $f20 = 32.0 sub.s $f20, $f12, $f20 # $f20 = fahr – 32.0 mul.s $f0, $f16, $f20 # $f0 = (5/9)*(fahr-32) jr $ra # return

Conclusion • Floating Point numbers approximate values that we want to use. • IEEE 754 Floating Point Standard is most widely accepted attempt to standardize FP arithmetic • New MIPS architectural elements • Registers ($f0-$f31) • Single Precision (32 bits, 2x10-38… 2x1038) • add.s, sub.s, mul.s, div.s • Double Precision (64 bits , 2x10-308…2x10308) • add.d, sub.d, mul.d, div.d • Type is not associated with data, bits have no meaning unless given in context (e.g., int vs. float)