CS 213: Parallel Processing Architectures

Laxmi Narayan Bhuyan, http://www.cs.ucr.edu/~bhuyan
Lecture 4: Commercial Multiprocessors


Origin2000 System
• CC-NUMA architecture
• Up to 512 nodes (1024 processors)
• 195 MHz MIPS R10K processor, peak 390 MFLOPS or 780 MIPS per processor
• Peak SysAD bus bandwidth is 780 MB/s, and so is the Hub-to-memory bandwidth
• Hub to router chip and Hub to Xbow are each 1.56 GB/s (both links are off-board)
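The per-processor peak figures above fit together by simple arithmetic. A minimal Python sketch, assuming peak rate = clock rate × operations retired per cycle, and assuming (for the full-system number) that every processor runs at peak simultaneously; the constant and function names are illustrative only:

```python
# Peak-rate arithmetic for the Origin2000 figures quoted above.
# Assumption: peak rate = clock rate x operations retired per cycle, and the
# full-system figure assumes all processors run at peak at the same time.

CLOCK_MHZ = 195        # MIPS R10K clock rate
PEAK_MFLOPS = 390      # peak floating-point rate per processor
PEAK_MIPS = 780        # peak instruction rate per processor
MAX_PROCESSORS = 1024  # 512 nodes x 2 processors per node


def ops_per_cycle(rate_millions_per_s: float, clock_mhz: float) -> float:
    """Operations per clock cycle implied by a peak rate (both in millions/s)."""
    return rate_millions_per_s / clock_mhz


def system_peak_gflops(processors: int, mflops_per_proc: float) -> float:
    """Aggregate peak in GFLOPS if every processor runs at its peak."""
    return processors * mflops_per_proc / 1000.0


flops_per_cycle = ops_per_cycle(PEAK_MFLOPS, CLOCK_MHZ)
instrs_per_cycle = ops_per_cycle(PEAK_MIPS, CLOCK_MHZ)
full_system_gflops = system_peak_gflops(MAX_PROCESSORS, PEAK_MFLOPS)

print(f"{flops_per_cycle:.0f} FLOPs/cycle, {instrs_per_cycle:.0f} instructions/cycle")
print(f"Peak of a 1024-processor system: {full_system_gflops:.2f} GFLOPS")
```

It reports 2 FLOPs and 4 instructions per cycle per processor, and about 399 GFLOPS for a fully configured machine, a bound that real codes will not reach.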

Origin Network
• Each router has six pairs of 1.56 GB/s unidirectional links
• Two to nodes, four to other routers
• Latency: 41 ns pin to pin across a router
• Flexible cables up to 3 ft long
• Four “virtual channels”: request, reply, and two others for priority or I/O

SGI® Altix® 4700 Servers
• HPC solution stack running on industry-standard Linux® operating systems
• Shared-memory NUMAflex™ (CC-NUMA?) architecture
• 512 sockets or 1024 cores under one instance of Linux, with as much as 128 TB of globally addressable memory
• Dual-core Intel® Itanium® 2 Series 9000 CPUs
• High-bandwidth fat-tree interconnection network, called NUMAlink
• SGI® RASC™ Blade: two high-performance Xilinx Virtex-4 LX200 FPGA chips with 160K logic cells

The basic building block of the Altix 4700 system is the compute/memory blade. A compute blade contains one or two processor sockets, memory DIMMs, and a SHub2 ASIC; each socket holds one Intel Itanium 2 processor with on-chip L1, L2, and L3 caches.

Cray T3D – Shared Memory
• Build up info in the ‘shell’ (the custom support circuitry around each processor)
• Remote memory operations encoded in the address
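To make "remote memory operations encoded in the address" concrete, here is a minimal Python sketch that packs a processing-element (PE) number and a local offset into one global address and decides from the address alone whether an access is remote. The field widths `OFFSET_BITS` and `PE_BITS` and all function names are hypothetical; this does not reproduce the T3D's actual address format or translation hardware.

```python
from typing import Tuple

# Hypothetical field widths, for illustration only; these are NOT the real
# Cray T3D address format.
OFFSET_BITS = 26   # low bits: word offset within the target PE's local memory
PE_BITS = 11       # next bits: target processing element (PE) number


def encode(pe: int, offset: int) -> int:
    """Pack a PE number and a local offset into one global address."""
    if not 0 <= pe < (1 << PE_BITS):
        raise ValueError("PE number out of range")
    if not 0 <= offset < (1 << OFFSET_BITS):
        raise ValueError("offset out of range")
    return (pe << OFFSET_BITS) | offset


def decode(addr: int) -> Tuple[int, int]:
    """Split a global address back into (PE number, local offset)."""
    return addr >> OFFSET_BITS, addr & ((1 << OFFSET_BITS) - 1)


def is_remote(addr: int, my_pe: int) -> bool:
    """An access is remote when the PE field names some other PE."""
    return decode(addr)[0] != my_pe


addr = encode(pe=5, offset=0x1234)
print(decode(addr), is_remote(addr, my_pe=3), is_remote(addr, my_pe=5))
```

The point of the design is that an ordinary load or store carries everything needed: the shell logic can route the request to another node without any explicit message-passing call in software.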

Cray XD1
• 2- or 4-way SMP nodes based on AMD 64-bit Opteron processors
• FPGA accelerator at each SMP

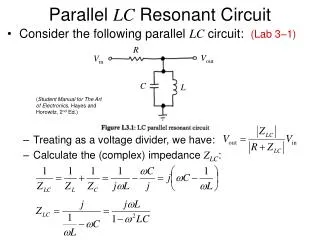

Low-Latency Message Passing Across Clusters in XD1
The interconnection topology, shown in Fig. 1, has three levels of latency: CPUs inside one blade communicate through shared memory; blades within a chassis communicate by very fast message passing; and CPUs in two different chassis communicate by slower message passing.
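The three latency levels amount to a classification of each source/destination pair. A minimal Python sketch, assuming each CPU is identified by a hypothetical `(chassis, blade, cpu)` triple in which blade numbers are local to a chassis:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuLocation:
    """Hypothetical CPU identifier; blade numbers are local to a chassis."""
    chassis: int
    blade: int
    cpu: int


def comm_level(src: CpuLocation, dst: CpuLocation) -> str:
    """Return which of the three XD1 latency levels a transfer uses."""
    if src.chassis != dst.chassis:
        return "inter-chassis message passing (slower)"
    if src.blade != dst.blade:
        return "intra-chassis message passing (very fast)"
    return "shared memory within the blade"


print(comm_level(CpuLocation(0, 2, 0), CpuLocation(0, 2, 1)))
print(comm_level(CpuLocation(0, 2, 0), CpuLocation(0, 5, 0)))
print(comm_level(CpuLocation(0, 2, 0), CpuLocation(1, 2, 0)))
```

The chassis is checked first because blade numbers only identify a blade within one chassis. A placement-aware runtime could use such a test to keep heavily communicating processes on the same blade.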

NOW – Message Passing
• General-purpose processor embedded in the NIC to implement VIA (to be discussed later)

IBM SP Architecture
• SMP nodes and message passing between nodes
• Switch architecture for high performance

SIMD Model
• Operations can be performed in parallel on each element of a large regular data structure, such as an array
• One Control Processor (CP) broadcasts to many PEs: the CP reads an instruction from the control memory, decodes it, and broadcasts control signals to all PEs
• Condition flag per PE, so that individual PEs can skip an instruction
• Data distributed across the PEs' memories
• Early 1980s VLSI => SIMD rebirth: a single chip with 32 1-bit PEs plus memory became the PE building block
• Data parallel programming languages lay out data across processors
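The broadcast-plus-condition-flag mechanism can be simulated in a few lines. A minimal Python sketch, assuming a hypothetical two-kind instruction set: `"flags"` makes every PE set its condition flag from its local datum, and `"op"` is carried out only by PEs whose flag is set. Each list element stands for the one datum in one PE's local memory.

```python
from typing import Callable, List, Sequence, Tuple

# One instruction = (kind, function). "flags" sets each PE's condition flag
# from its local datum; "op" is applied only by PEs whose flag is set.
Instruction = Tuple[str, Callable]


def run_simd(program: Sequence[Instruction], pe_data: List[float]) -> List[float]:
    """Simulate a control processor broadcasting each instruction to all PEs.

    pe_data[k] is the single datum held in PE k's local memory.
    """
    flags = [True] * len(pe_data)          # all PEs enabled initially
    data = list(pe_data)
    for kind, fn in program:               # CP fetches and decodes in order
        if kind == "flags":
            flags = [bool(fn(x)) for x in data]   # every PE sets its flag
        elif kind == "op":
            # Broadcast: every PE sees the same op; disabled PEs skip it.
            data = [fn(x) if f else x for x, f in zip(data, flags)]
        else:
            raise ValueError(f"unknown instruction kind: {kind}")
    return data


# Absolute value: enable only the PEs holding negative values, then
# broadcast "negate" to everyone.
abs_program = [("flags", lambda x: x < 0), ("op", lambda x: -x)]
print(run_simd(abs_program, [3, -1, 4, -5]))
```

There is a single instruction stream: data-dependent behavior comes only from masking, which is why an `if` in SIMD code costs the time of both branches.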

Data Parallel Model (SPMD)
• Vector processors have similar ISAs, but no data placement restriction
• SIMD led to data parallel programming languages
• Advancing VLSI led to single-chip FPUs and whole fast µProcs (making SIMD less attractive)
• The SIMD programming model led to the Single Program Multiple Data (SPMD) model
• All processors execute an identical program
• Data parallel programming languages are still useful; they do communication all at once: “Bulk Synchronous” phases in which all processors communicate after a global barrier
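A bulk-synchronous SPMD step (compute on local data, global barrier, then communicate) can be sketched with Python threads standing in for processors. This is a minimal sketch under that assumption: shared lists stand in for communication, and `run_spmd` and its arguments are hypothetical names; only `threading.Barrier` is a real library class.

```python
import threading
from typing import List


def run_spmd(p: int, n: int) -> List[int]:
    """Run one bulk-synchronous superstep of an SPMD program on p threads.

    Every thread executes the same function `program`; only `rank` differs.
    """
    data = list(range(n))
    partial = [0] * p          # one slot per rank, written in the compute phase
    result = [0] * p           # what each rank computes after communicating
    barrier = threading.Barrier(p)

    def program(rank: int) -> None:
        lo, hi = rank * n // p, (rank + 1) * n // p
        partial[rank] = sum(data[lo:hi])   # compute phase: own block only
        barrier.wait()                     # global barrier ends the phase
        result[rank] = sum(partial)        # communication phase: read all

    threads = [threading.Thread(target=program, args=(r,)) for r in range(p)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return result


print(run_spmd(4, 16))
```

Without the barrier, a fast rank could read `partial` before slower ranks had written their sums; the barrier is what makes the communication phase see a consistent snapshot.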

SPMD Programming – High-Performance Fortran (HPF)
• Single Program Multiple Data (SPMD)
• FORALL construct, similar to a fork: FORALL (I=1:N) A(I) = B(I) + C(I), or the equivalent multi-line FORALL (I=1:N) ... END FORALL block
• Data mapping in HPF aims
  1. To reduce interprocessor communication
  2. To balance load among processors
• http://www.npac.syr.edu/hpfa/ and http://www.crpc.rice.edu/HPFF/
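HPF expresses data mapping with directives such as `!HPF$ DISTRIBUTE A(BLOCK)` and `!HPF$ DISTRIBUTE A(CYCLIC)`, and the two mapping goals above can pull in opposite directions. A minimal Python sketch (0-based indices; all function names hypothetical) computes each element's owner under both distributions, then counts neighbor pairs split across processors for a hypothetical stencil like A(I) = B(I-1) + B(I), and per-processor load for work that grows with the index:

```python
from typing import List


def block_owner(i: int, n: int, p: int) -> int:
    """Owner of element i (0-based) under BLOCK: contiguous chunks of ceil(n/p)."""
    chunk = -(-n // p)  # ceiling division
    return i // chunk


def cyclic_owner(i: int, p: int) -> int:
    """Owner of element i (0-based) under CYCLIC: elements dealt round-robin."""
    return i % p


def neighbor_messages(owners: List[int]) -> int:
    """Pairs (i, i+1) on different processors, e.g. for A(I) = B(I-1) + B(I)."""
    return sum(1 for a, b in zip(owners, owners[1:]) if a != b)


def work_per_processor(owners: List[int], work: List[int], p: int) -> List[int]:
    """Total work assigned to each processor."""
    totals = [0] * p
    for owner, w in zip(owners, work):
        totals[owner] += w
    return totals


N, P = 16, 4
block = [block_owner(i, N, P) for i in range(N)]
cyclic = [cyclic_owner(i, P) for i in range(N)]
triangular = [i + 1 for i in range(N)]   # assumed: work grows with the index

print(neighbor_messages(block), neighbor_messages(cyclic))
print(work_per_processor(block, triangular, P))
print(work_per_processor(cyclic, triangular, P))
```

With 16 elements on 4 processors, BLOCK splits only 3 neighbor pairs versus 15 for CYCLIC, but for this triangular workload CYCLIC spreads the work much more evenly ([28, 32, 36, 40] versus [10, 26, 42, 58]).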

Parallel Applications: SPMD and MIMD
• Commercial Workload
• Multiprogramming and OS Workload
• Scientific/Technical Applications

Parallel App: Commercial Workload
• Online transaction processing (OLTP) workload (like TPC-B or TPC-C)
• Decision support system (DSS) (like TPC-D)
• Web index search (AltaVista)

Parallel App: Scientific/Technical
• FFT Kernel: 1D complex-number FFT
  • 2 matrix transpose phases => all-to-all communication
  • Sequential time for n data points: O(n log n)
  • Example is a 1-million-point data set
• LU Kernel: dense matrix factorization
  • Blocking (16 x 16 blocks) reduces the cache miss rate
  • Sequential time for an n x n matrix: O(n^3)
  • Example is a 512 x 512 matrix
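The loop structure of a blocked LU factorization shows where the 16 x 16 blocking comes in: most of the work is the trailing-matrix update, which repeatedly reuses one block row and one block column. A minimal Python sketch of a right-looking blocked LU, assuming no pivoting (so it is only safe for matrices such as the diagonally dominant example below); `blocked_lu` is a hypothetical name, and pure Python will not show the cache benefit itself:

```python
from typing import List

Matrix = List[List[float]]


def blocked_lu(a: Matrix, b: int = 16) -> Matrix:
    """Right-looking blocked LU factorization, in place, WITHOUT pivoting.

    On return, the strict lower triangle of `a` holds L (unit diagonal implied)
    and the upper triangle holds U. `b` is the block size.
    """
    n = len(a)
    for k0 in range(0, n, b):
        k1 = min(k0 + b, n)
        # 1. Factor the current block column (diagonal block plus panel below).
        for k in range(k0, k1):
            pivot = a[k][k]
            for i in range(k + 1, n):
                a[i][k] /= pivot
                for j in range(k + 1, k1):
                    a[i][j] -= a[i][k] * a[k][j]
        # 2. Block row of U: forward-substitute with the unit-lower diagonal block.
        for k in range(k0, k1):
            for i in range(k + 1, k1):
                for j in range(k1, n):
                    a[i][j] -= a[i][k] * a[k][j]
        # 3. Trailing update A22 -= L21 * U12; each block of L21 and U12 is
        #    reused across the whole trailing matrix, which is where blocking
        #    pays off in cache.
        for i in range(k1, n):
            for k in range(k0, k1):
                lik = a[i][k]
                for j in range(k1, n):
                    a[i][j] -= lik * a[k][j]
    return a


# Diagonally dominant example, so factoring without pivoting is safe.
A = [[10.0, 1.0, 2.0, 0.5, 1.0],
     [1.0, 10.0, 1.0, 2.0, 0.0],
     [2.0, 1.0, 10.0, 1.0, 1.0],
     [0.5, 2.0, 1.0, 10.0, 1.0],
     [1.0, 0.0, 1.0, 1.0, 10.0]]
LU = blocked_lu([row[:] for row in A], b=2)
```

A block size of 2 on a 5 x 5 matrix exercises the uneven last block; with `b >= n` the same function reduces to ordinary unblocked LU and gives the same factors.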

Parallel App: Scientific/Technical
• Barnes App: Barnes-Hut n-body algorithm solving a problem in galaxy evolution
  • n-body algorithms rely on forces dropping off with distance (e.g., gravity falls off as 1/d^2): if a group of bodies is far enough away, its individual bodies can be ignored and the group treated as a single body
  • Sequential time for n data points: O(n log n)
  • Example is 16,384 bodies
• Ocean App: Gauss-Seidel multigrid technique to solve a set of elliptic partial differential equations
  • Red-black Gauss-Seidel colors the grid points so that each point is updated consistently from the values of its adjacent neighbors
  • Multigrid solves the finite-difference equations by iteration on a hierarchy of grids
  • Communication occurs when a subgrid's boundary is accessed by an adjacent subgrid
  • Sequential time for an n x n grid: O(n^2)
  • Input: 130 x 130 grid points, 5 iterations
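The red-black ordering used in Ocean can be sketched on a single grid level. A minimal Python sketch, assuming the simplest elliptic case (Laplace's equation with the 5-point stencil and fixed boundary values) and omitting the multigrid hierarchy; `red_black_sweep` is a hypothetical name:

```python
from typing import List

Grid = List[List[float]]


def red_black_sweep(u: Grid) -> None:
    """One red-black Gauss-Seidel sweep for Laplace's equation, in place.

    Boundary values stay fixed. A point (i, j) is "red" if i + j is even and
    "black" if odd, so every neighbor of a red point is black and vice versa.
    All red points are updated first (from black values), then all black
    points (from the new red values); within one color the update order does
    not matter, so each color's points could be split among processors.
    """
    rows, cols = len(u), len(u[0])
    for color in (0, 1):                       # 0 = red, 1 = black
        for i in range(1, rows - 1):
            for j in range(1, cols - 1):
                if (i + j) % 2 == color:
                    u[i][j] = 0.25 * (u[i - 1][j] + u[i + 1][j]
                                      + u[i][j - 1] + u[i][j + 1])


# 6 x 6 grid: boundary held at 1.0, interior starts at 0.0.
grid = [[1.0 if i in (0, 5) or j in (0, 5) else 0.0 for j in range(6)]
        for i in range(6)]
for _ in range(200):
    red_black_sweep(grid)
```

With a constant boundary the exact solution is constant, so after enough sweeps every interior point approaches 1.0. In a parallel version, each processor owns a subgrid and needs its neighbors' boundary rows and columns before each half-sweep, which is the boundary communication noted above.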

Parallel Scientific App: Scaling
• p is the number of processors
• n is the data size
• Computation scales up with n (see the sequential times above) and scales down linearly as p is increased
• Communication
  • FFT is all-to-all, so n
  • LU and Ocean communicate at boundaries, so n^{1/2}
  • Barnes is complex: n^{1/2} for greater distance, times log n to maintain the relationships among bodies
  • All scale down as 1/p^{1/2}
• Keep n the same, but increase p?
• Increase n to keep communication the same as p increases?
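For Ocean, the two closing questions can be answered with the slide's own scaling: computation per processor grows as n/p and boundary communication as n^{1/2}/p^{1/2}, so their ratio grows as (n/p)^{1/2}. A minimal Python sketch of that model, assuming square subgrids, one unit of work per grid point, and one word exchanged per subgrid boundary point (the factor of 4 and the function names are illustrative assumptions):

```python
import math
from typing import Tuple


def ocean_costs(n: int, p: int) -> Tuple[float, float]:
    """Per-processor computation and communication for one sweep of an
    n-point grid split into p square subgrids (illustrative model).

    Assumptions: one unit of work per grid point, and one word exchanged per
    subgrid boundary point with the four nearest neighbors (4 * sqrt(n/p)).
    """
    points = n / p
    return points, 4 * math.sqrt(points)


def comp_to_comm(n: int, p: int) -> float:
    """Computation-to-communication ratio, equal to sqrt(n/p) / 4."""
    computation, communication = ocean_costs(n, p)
    return computation / communication


print(comp_to_comm(65536, 16))    # baseline
print(comp_to_comm(65536, 64))    # 4x processors, same n
print(comp_to_comm(262144, 64))   # 4x processors and 4x data
```

Keeping n fixed while quadrupling p halves the ratio (16.0 to 8.0), while growing n in proportion to p restores it (back to 16.0).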