Model choice

Model choice. Gil McVean, Department of Statistics Tuesday 17 th February 2007. Questions to ask…. What is a generalised linear model? What does it mean to say that a variable is a ‘significant’ predictor? Is the best model just the one with all the ‘significant’ predictors?

Model choice

E N D

Presentation Transcript

Model choice Gil McVean, Department of Statistics Tuesday 17th February 2007

Questions to ask… • What is a generalised linear model? • What does it mean to say that a variable is a ‘significant’ predictor? • Is the best model just the one with all the ‘significant’ predictors? • How do I choose between competing models as explanations for observed data? • How can I effectively explore model space when there are many possible explanatory variables?

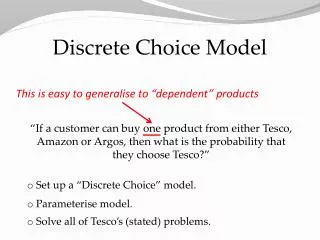

What is a linear model? Intercept Linear relationships with explanatory variables Interaction term Gaussian error Response variable • In a linear model, the expectation of the response variable is defined as a linear combination of explanatory variables • Explanatory variables can include any function of the original data • But the link between E(Y) and X (or some function of X) is ALWAYS linear and the error is ALWAYS Gaussian

What is a GLM? • There are many settings where the error is non-Gaussian and/or the link between E(Y) and X is not necessarily linear • Discrete data (e.g. counts in multinomial or Poisson experiments) • Categorical data (e.g. Disease status) • Highly-skewed data (e.g. Income, ratios) • Generalised linear models keep the notion of linearity, but enable the use of non-Gaussian error models • g is called the link function • In linear models, the link function is the identity • The response variable can be drawn from any distribution of interest (the distribution function) • In linear models this is Gaussian

Example: logistic regression When only two types of outcome are possible (e.g. disease/not-disease) we can model counts by the binomial If we want to perform inference about the factors that influence the probability of ‘success’ it is usual to use the logistic model The link function here is the logit

Fitting the model to data • In linear modelling we can use the beautiful compactness of linear algebra to find MLEs and estimates of the variance for parameters • Consider an n by k+1 data matrix, X, where n is the number of observations and k is the number of explanatory variables • the first column is ‘1’ for the intercept term • The MLEs for the coefficients (b) can be estimated using • In GLMs, there is usually no such compact analytical expression for the MLEs • Use numerical methods to maximise the likelihood

Example: testing for genotype association 2 b0 = -4 b1 = 2 1 0 In a cohort study, we observe the number of individuals in a population that get a particular disease We want to ask whether a particular genotype is associated with increased risk The simplest test is one in which we consider a single coefficient for the genotypic value

Cont. Suppose in a given study we observe the following counts We can fit a GLM using the logit link function and binomial probabilities We have genotype data stored in the vector gt and disease status in the vector status

Interpreting results Measure of contribution of individual observations to overall goodness of fit (for MLE model) MLE for coefficients Call: glm(formula = status ~ gt, family = binomial) Deviance Residuals: Min 1Q Median 3Q Max -0.8554 -0.4806 -0.2583 -0.2583 2.6141 Coefficients: Estimate Std. Error z value Pr(>|z|) (Intercept) -4.6667 0.2652 -17.598 <2e-16 *** gt 1.2833 0.1407 9.123 <2e-16 *** --- Signif. codes: 0 ‘***’ 0.001 ‘**’ 0.01 ‘*’ 0.05 ‘.’ 0.1 ‘ ’ 1 (Dispersion parameter for binomial family taken to be 1) Null deviance: 967.36 on 1999 degrees of freedom Residual deviance: 886.28 on 1998 degrees of freedom AIC: 890.28 Number of Fisher Scoring iterations: 6 Standard error for estimates Estimate/std. error P-value from normal distribution Measure of goodness of fit of null (compared to saturated model) Measure of goodness of fit of fitted model Penalised likelihood used in model choice Number of iterations used to find MLE

Extending the analysis In this simple example I had a single genetic locus about which I was asking questions In general, you may have data on 1000s (or millions) of loci, as well as additional covariates such as age, sex, weight, height, smoking habit,… We cannot jointly estimate the parameters for all factors (and even if we could we wouldn’t be able to interpret them) We would like to build a repatively simple model that captures the key features and relationships

Example • Suppose we wish to be able to predict whether someone will be likely to develop diabetes in later age • We have clinical records on 200 individuals listing whether they became diabetic • We also have information on the absence/presence of specific mutations at 20 genes that have been hypothesised as being associated with late-onset diabetes • How should we build a model?

What do we want the model to do? • A good model will • Allow us to identify key features of the underlying process • Enable estimation of key parameters • Be sufficiently simple that it remains coherent • Provide predictive power for future observations • A bad model will • Be so complex as to be impossible to understand • Be fitted too closely to the observed data • Have poor predictive power

Variable selection • We wish to identify from among the many variables measured those that are relevant to outcome • We wish our end point to be a model of sufficient complexity to capture the essential details, but no more • Occam’s razor • We wish to be able to work in situations where there may be more variables than data points

The problem of over-fitting • Models fitted too closely to past events will be poor at predicting future events • Adding additional parameters to a model will only increase the likelihood (it can never decrease it) • The most complex model is the one that maximises the probability of observing the data on which the model is fitted

Example • In a simulated data set the first 10 genes have ‘real’ effects of varying magnitude and the second 10 genes have no effect Coefficients: Estimate Std. Error z value Pr(>|z|) (Intercept) 34.05359 2910.79683 0.012 0.99067 data$gene1 -0.73766 0.45941 -1.606 0.10835 data$gene2 -0.99981 0.30628 -3.264 0.00110 ** data$gene3 0.66909 0.26177 2.556 0.01059 * data$gene4 -0.93047 1.15316 -0.807 0.41974 data$gene5 -16.33647 1455.39768 -0.011 0.99104 data$gene6 0.04707 0.60876 0.077 0.93837 data$gene7 -1.94350 1.21402 -1.601 0.10940 data$gene8 -0.32492 0.28443 -1.142 0.25330 data$gene9 0.23051 0.26882 0.858 0.39116 data$gene10 0.83958 0.25710 3.266 0.00109 ** data$gene11 0.55105 0.37792 1.458 0.14481 data$gene12 -0.59682 0.37278 -1.601 0.10938 data$gene13 -16.81804 1455.39765 -0.012 0.99078 data$gene14 0.20485 0.26589 0.770 0.44105 data$gene15 0.41384 0.28000 1.478 0.13941 data$gene16 0.37951 0.45013 0.843 0.39917 data$gene17 0.06568 0.36846 0.178 0.85853 data$gene18 0.37563 0.32853 1.143 0.25289 data$gene19 0.04099 0.44159 0.093 0.92605 data$gene20 -0.06147 0.46208 -0.133 0.89417 --- Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1 Truth 0 -0.3716689 -0.4409581 0.8322316 -0.4222148 0.01153955 0.03125722 -0.3132983 -0.1919051 0.1974780 0.8168223 0 0 0 0 0 0 0 0 0 0

Model performance • Which explanatory variables are explanatory? With disease Without Individual – ranked by predicted value

Why can’t we just use likelihood ratios? • A natural approach would be to perform parameter estimation under the complete model and then remove non-significant variables • More generally, we could use the asymptotic theory of likelihood ratio tests to ask whether we can reject simple models in favour of more complex ones • There are two problems • Likelihood ratio tests only deal with nested models • It turns out that this approach tends to give too much weight to the more complex model Under M1 mle More complex model

Model scoring • We need to find a way of scoring models • One approach is to include a penalty for the number of parameters fitted. This gives penalised likelihood schemes • Another is to estimate the prediction potential through cross-validation

Penalised likelihood • A simple approach to guard against over-fitting is to add a penalty to the log likelihood whenever an additional parameter is added • All penalties are of the form • The two most popular penalties are Log likelihood Penalty relating to sample size, n, and number of fitted parameters, pi Akaike information criterion Bayesian (Schwartz) information criterion

Example • Which is the best model: gene1, gene2 or gene1+gene2?

A note on likelihoods • Note that the log likelihood in a linear model (i.e. with a normal distribution) with the coefficients estimated by maximum likelihood can be written as a function of the sample size and the sum of the squares of the residuals (RSS) • In generalised linear models the log likelihood at the fitted coefficients is of the form

Cross-validation • The idea is to use a subset of the data to train the model and assess its prediction performance on the remaining data • Predictive power is assess through some function of the residuals (the difference between observed and predicted values) • The approach throws away information, but does have useful robustness properties • Approaches include • Leave-one-out cross validation (leave out each observation in turn and predict its value from the remaining) • k-fold cross validation (divide the data into k sets and for each subset use the k-1 other subsets for parameter estimation).

Choosing the score • We need a way of scoring the predictive value of the model • One possibility is to compute some function of the residuals (the difference between predicted and observed values) • Mean absolute error • Mean square error (Brier’s score) • Root mean square error • An alternative is to compute the (log) likelihood for the novel data. • Poor prediction will mean that future observations are very unlikely

Model search • Having defined ways of scoring models, we need to efficiently search through model space • In the diabetes example there are 2100 possible models excluding any interaction terms • There are no generic methods of model searching (beyond exhaustive enumeration) that guarantee finding the optimum • However, stepwise methods generally perform well

Forward selection • Start with the simplest model – just an intercept • Find the single ‘best’ variable by searching over all of the possible variables • Add in the next best • Repeat the procedure until the score begins to decrease

Backward elimination • Start with the full model • Find the single ‘worst’ variable (i.e. the one whose removal leads to the greatest increase in model score) • Remove the next worst • Repeat the elimination procedure until the score begins to decrease

Issues • Often it is useful to combine the procedures – starting with the addition step then attempting to remove each of the current variables in turn to see if any have become redundant • Note that both algorithms are deterministic • In general, hill-climbing methods that include some stochasticity (e.g. simulated annealing) will find better solutions because the allow a broader range of search paths

Example Coefficients: Estimate Std. Error z value Pr(>|z|) (Intercept) 33.9250 2910.7953 0.012 0.990701 data$gene1 -0.8406 0.4407 -1.907 0.056494 . data$gene2 -0.9921 0.2928 -3.389 0.000703 *** data$gene3 0.6203 0.2428 2.555 0.010618 * data$gene5 -16.5845 1455.3976 -0.011 0.990908 data$gene7 -2.1143 1.1944 -1.770 0.076683 . data$gene10 0.7944 0.2425 3.275 0.001056 ** data$gene12 -0.7013 0.3578 -1.960 0.049985 * data$gene13 -17.0354 1455.3976 -0.012 0.990661 --- Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 This is not a real effect This is not a ‘significant’ effect

Example: 10-fold cross validation • For the complete model, with all 20 genes included, the mean absolute error is 0.395 (the root mean square error is 0.473) • By comparison the null model (intercept alone) has a MAE of 0.468 (RMSE of 0.484 • The model selected by the stepwise method using AIC has a MAE of 0.392 (RMSE of 0.456)

Ridge regression and lasso • In penalised likelihood, there is a fixed penalty for the number of parameters • However, we might consider alternative penalties, for example some function of the magnitude of the regression coefficients • For example, • The l parameter determines the strength of the penalty • Note that explanatory variables must be scaled to have zero mean and unit variance

Example Ridge regression Lasso Note that coefficients can change sign Note that there are sharp transitions from zero to non-zero parameter estimates

A look forward to Bayesian methods • In Bayesian statistics, model choice is typically drive by the use of Bayes factors • Whereas likelihood ratios compare the probability of observing the data at specified parameter values, Bayes factors compare the relative posterior probabilities of two models to their prior ratios • Unlike likelihood ratios, Bayes factors can be used to compare any models – i.e. they need not be nested • The log Bayes factor is often referred to as the weight of evidence • It is worth noting that model selection by BIC is an approximation to model selection by Bayes factors