Noise Sensitivity and Noise Stability

510 likes | 689 Vues

Noise Sensitivity and Noise Stability. Gil Kalai Hebrew University of Jeusalem and Yale University NY-Chicago-TA-Barcelona 08-09. (We start with a one-slide summary of the lecture followed by a 4 slides very informal summary of its four main parts.). Plan of the talk. 1) Planar percolation

Noise Sensitivity and Noise Stability

E N D

Presentation Transcript

Noise Sensitivity and Noise Stability Gil Kalai Hebrew University of Jeusalem and Yale University NY-Chicago-TA-Barcelona 08-09

(We start with a one-slide summary of the lecture followed by a 4 slides very informal summary of its four main parts.)

Plan of the talk • 1) Planar percolation • 2) Boolean functions, influences. • Noise sensitivity – The primal description • Noise sensitivity - The Fourier description • 3) Percolation – noise sensitivity, the spectrum, and dynamic percolation • 4) The Majority is Stabelest theorem, MAX CUT, and voting.

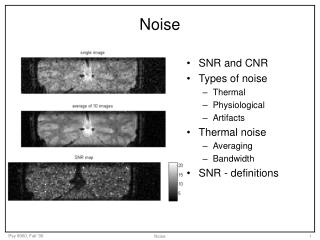

We consider a BOOLEAN FUNCTION f :{-1,1}n {-1,1} f(x1 ,x2,...,xn) Boolean functions are of importance in combinatorics, probability theory, computer science and other areas. The influence of the kth variable xk on f is the probability that flipping the value of the kth variable will flip the value of f. Boolean functions and influences

The infinite model: we have an infinite lattice grid in the plane. Every edge (bond) is open with probability p. All these probabilities are statistically independent. Basic questions: What is the probability of an infinite open cluster? What is the probability of an infinite open cluster containing the origin? Critical properties of percolation. Planar Percolation

Primal description - Functions (random variables) that are extremely sensitive to small random changes (which respect the overall underlying distribution.) Such functions cannot be measured by (even slightly) noisy measurements. Dual description– Spectrum concentrated on “large sets” Examples: Critical percolation, and many others Basic insight: Noise sensitivity is common and forced in various general situations. The notions of noise stability and noise sensitivity were introduced by Benjamini, Kalai and Schramm. Closely related notions (black noise; non-Fock models) were introduced by Tsirelson and Vershik. Noise sensitivity

Garban, Pete and Schramm achieved wonderful understanding of noise sensitivity for critical percolation. Detailed understanding of the scaling limit for the spectral distribution, and of dynamic percolation followed. Mossel, O’Donnell and Oleszkiewicz Proved that from all Boolean functions with diminishing influence the majority function is asymptotically most stable. This settled open problems regarding hardness of approximation for MAXCUT and the Condorcet Paradox. Two recent breakthroughs

Part I Planar Percolation

The critical probability Limit conjectures and Conformal invariance SLE and scaling limits Noise sensitivity and spectral description Critical Percolation: problems and progress

Kesten’s Theorem (1980): The critical probability for percolation in the plane is ½. If the probability p for a bond to be open (or for a hexagon to be grey) is below ½ the probability for an infinite cluster is 0. If the probability for a bond to be open is > ½ then the probability for an infinite cluster is 1. (Q: And when p is precisely ½?) (A: The probability for an infinite cluster is 0) Kesten: Critical probability 1/2

Conjecture: The probability for the crossing event for an n by m rectangular grid tends to a limit if the ratio m/n tends to a real number a, a>0, as n tends to infinity. (Sounds almost obvious, yet very difficult to prove) Note: we have moved from infinite models to finite ones. Limit conjectures

Conjecture: Crossing events in percolation are conformally invariant!! Sounds very surprising. (But there is no case of a planar percolation model where the limit conjectures are proven and conformal invariance is not.) Langlands, Pouliot, Saint-Aubin, Cardy, Aizenman: Conformal invariance conjectures

Oded Schramm defined a one parameter planar stochastic models SLE(κ). Lawler, Schramm and Werner extensively studied the SLE processes, found relations to several planar processes, and computed various critical exponents. SLE(6) describes the scaling limit of percolation. Schramm: SLE

Smirnov proved that for the model of site percolation on the triangular grid, equivalently For the white/grey hexagonal model (simply HEX), the conformal invariance conjecture is correct! (An incredibly simple form of Cardy’s formulas in this case found by Carleson was of importance.) Smirnov: Conformal Invariance

Combining Smirnov results with the works of Lawler Schramm and Werner (and some earlier works of Kesten) all critical exponents for percolation predicted by physicists and quite a few more (and for quite a few other planar models) were computed. (rigorously) (For the model of bond percolation with square grid this is yet to be done.) Putting things together

Part II Boolean Functions and Noise Sensitivity

We consider a BOOLEAN FUNCTION f :{-1,1}n {-1,1} f(x1 ,x2,...,xn) It is convenient to regard{-1,1}n as a probability space with the uniform probability distribution. Boolean Functions

We consider a BOOLEAN FUNCTION f :{-1,1}n {-1,1} The influence of the kth variable xk on f, denoted by Ik(f) is the probability that flipping the value of the kth variable will flip the value of f. Influence

1) Dictatorship f(x1 ,x2,...,xn) =x1 Ik(f) = 0 for k>1 I1(f)=1 2) Majority f(x1 ,x2,...,xn) =1 iff x1 + x2+...+xn > 0 Ik(f) behaves like n-1/2 for every k. Examples

3) The crossing event for percolations For percolation, every hexagon corresponds to a variable. xi =-1 if the hexagon is white and xi =1 if it is grey. f=1 if there is a left to right grey crossing. Ik(f) behaves like n-3/8 for every k but few. Examples (cont.)

We consider a BOOLEAN FUNCTION f :{-1,1}n {-1,1} f(x1 ,x2,...,xn) Given x1 ,x2,...,xn we define y1 ,y2,...,yn as follows: xi = yi with probability 1-t xi = -yi with probability t Noise Sensitivity: The Primal description

Let C(f;t) be the correlation between f(x1 , x2,...,xn) and f(y1,y2,...,yn) A sequence of Boolean function (fn ) is (completely) noise-sensitive if for every t>0, C(fn,t) tends to zero with n. Noise Sensitivity: The Primal description (cont.)

Part III Fourier Analysis, noise sensitivity and percolation

Theorem [BKS]: The crossing event for critical planar percolation model is noise- sensitive Basic argument: 1) Fourier description of noise sensitivity; 2) hypercontractivity This argument applies to very general cases. Percolation is Noise sensitive

Imagine two separate pictures of n by n hexagonal models for percolation. A hexagon is grey with probability ½. If the grey and white hexagons are independent in the two pictures the probability for crossing in both is ¼. If for each hexagon the correlation between its colors in the two pictures is 0.99, still the probability for crossing in both pictures is very close to ¼ as n grows! If you put one drawing on top of the other you will hardly notice a difference! Percolation is Noise sensitive

Given a Boolean function f :{-1,1}n {-1,1}, we write f(x) as a sum of multilinear (square free) monomials. f(x) = Σfˆ(S)W(S), where W(S) =∏{xs : s є S}. f^(S) is the Fourier-Walsh coefficient corresponding to S. Used by Kahn, Kalai and Linial (1988) to settle a conjecture by Ben-Or and Linial on “influences”. Fourier-Walsh expansion

The spectral distribution of f is a probability distribution assigning to a subset S the probability (f^(S))2 For a sequence of Boolean function fn :{-1,1}n {-1,1} (fn) is noise sensitive if for every k the overall spectral probability for non empty sets of size at most k tends to 0 as n tends to infinity. Noise sensitivity – the dual Description

Thaorem (Benjamini, K. Schramm 1999) For a sequence of Boolean functions fn ; If H(f) the sum of squares of the influences tends to 0 then (fn ) is noise sensitive. (Easier; a simple application of Beckner’s estimates.) If H(f) < n-b for some b>o then most of the spectral distribution of f is above the log n level. Our theorem

This was an attempt towards limits and conformal invariance conjectures. (Second attempt with records for Oded and Itai.) Understanding the spectrum of percolation looked interesting; One critical exponent (correlation length) has a simple description. (Late) Percolation on certain random planar graphs arises here naturally. (KPZ) The motivations

Dynamic percolation was introduced and first studied byHäggström, Peres and Steif (1997). The model was introduced independently by Itai Benjamini. Häggström, Peres and Steif proved that above the critical probability we have infinite clusters at all times, and below the critical probability there are infinite clusters at no times. Schramm and Steif proved that for critical dynamic percolation on the HEX model there are exceptional times. The proof is based on their strong versions of noise sensitivity for planar percolation. They needed stronger results about noise sensitivity of percolation. An application: Dynamic percolation

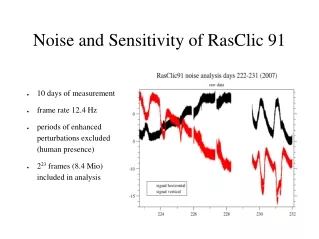

Benjamini, Kalai, and Schramm: Most Fourier Coefficients are abovelog n Schramm and Steif: Most Fourier coefficients are abovenb (b>0) Schramm and Smirnov: Scaling limit for spectral distribution for Percolation exists (*) Garban, Pete and Schramm: Spectral distributions concentrated on sets of sizen3/4(1+o(1)).(*) (*) – proved only for models where Smirnov’s result apply. The scaling limit for the spectral distribution of percolation is described by Cantor sets of dimension ¾. Fourier Description of Crossing events of Percolation

Garban, Pete and Schramm: Spectral distributions concentrated on sets of sizen3/4(1+o(1)).(*) (*) – proved only for models where Smirnov’s result apply. The scaling limit for the spectral distribution of percolation is described by Cantor sets of dimension ¾. Towards a full understanding of the scaling limit for dynamic percolation. Much, much more… Garbon, Pete and Schramm

An ingredient in the proof The first two moments of the spectral distribution coincide with those of the pivotal distribution. h(x) is the number of neighbors of x where f attains a different value. Σ^f2 (S)|S| = ΣIk (f) = Σ2-n h(x) (KKL) Σ^f2 (S)|S|2 = Σ2-n h2 (x)

First Passage Percolation (Benjamini, Kalai, Schramm) A recursive example by Ben-Or and Linial (BKS) Eigenvalues of random Gaussian matrices (Essentially follows from the work of Tracy-Widom) Here, we leave the Boolean setting. Examples related to random walks (required replacing the discrete cube by trees) and more... Other cases of noise sensitivity

Part IV Majority is most stable Stabe

Can we sample according (approximately) to the spectral distribution of the crossing event of percolation? This is unknown and it might be hard on digital computers. But... it is known to be easy for... quantum computers. For every Boolean function where f is computable in polynomial time. (Quantum computers are hypothetical devices based on QM which allow superior computational power.) Diversion: Simulating and computing the spectrum for percolation

Majority is noise stable Sheppard Theorem: (’99) Suppose that there is a probability t for a mistake in counting each vote. The probability that the outcome of the election are reversed is: arccos(1-t)/π

Majority is noise stable (cont.) Sheppard Theorem: (1899): Suppose that there is a probability t for a mistake in counting each vote. The probability that the outcome of the election are reversed is: arccos(1-t)/π When t is small this behaves like t1/2

Majority is noise stable (cont.) Suppose that there is a probability t for a mistake in counting each vote. The probability that the outcome of the election are reversed is: arccos(1-t)/π When t is small this behaves like t1/2 Is there a more stable voting rule? Sure! dictatorship

Majority is stablest! Theorem: Mossel, O’Donnell and Oleszkiewicz: (2005) Let (fn ) be a sequence of Boolean functions with diminishing maximum influence. I.e., lim max Ik(f) -> 0 Then the probability that the outcome of the election are reversed when for every vote there is a probability t it is flipped is at least (1-o(1)) arccos(1-t)/π

Majority is stablest!: Two applications • The probabilities of cyclic outcomes for voting rules with diminishing influences are minimized for the majority voting rule! • Improving the Goemans-Williamson 0.878567 approximation algorithm is hard, unique-game-hard!

The remarkable story of PCP and Hardness of approximation is related to Fourier analysis of Boolean functions. Khot Kindler Mossel and Oddonell showed that majority is stablest implies hardness of improving Goemans-Williamson MAX CUT algorithm

This is where the talk officially ends: Thank you! A little more on noise sensitivity follows.

Closly related notions to “noise sensitivity” were studied by Tsirelson and Vershik . In their terminology “noise sensitivity” translates to “non Fock processes”, “black noise”, and “non-classical stochastic processes”. Their motivation is closer to mathematical quantum physics. Noise sensitivity, and non-classical stochastic processes; black noise

The terminology is confusing but here is the dictionary: Noise stable – White noise; classical stochastic process; Fock model Noise sensitive – Black noise; non classical stochastic process; non-Fock model. Tsirelson and Vershik pointed out a connection between noise sensitivity and non-linearity. (Well within the realm of QM.) Tsirelson and Vershik: Non Fock spaces; black noise; non classical stochastic processes (cont)