Ensemble System Design: Modules, Infrastructure, and Community Engagement

Discusses design issues, system requirements, revision control, and community involvement of an ensemble system with six modules. Covers computational resources, system considerations, and community collaboration.

Presentation Transcript

DET Overall Design • Paula McCaslin¹, Tara Jensen², and Linda Wharton¹ • ¹ NOAA/GSD, Boulder, CO • ² NCAR/RAL, Boulder, CO

Motivation • Each of the six modules has specific goals that fall outside the scope of the structure, system design, etc. • This is a discussion of the: • Design Issues and Considerations • System Requirements • Revision Control Approach, Archiving • Community Involvement

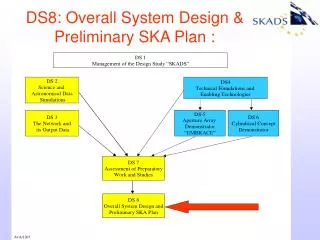

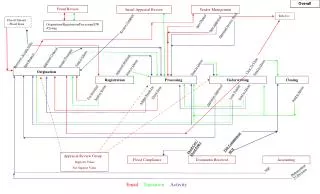

How Modules Fit • External Sources (HMT, HWT, HFIP, etc…) • Module 1: Ensemble Configuration • Module 2: Initial Perturbations • Module 3: Physics Perturbations • Module 4: Statistical Post-Processing • Module 5: Products/Displays • Module 6: Verification

Basic Infrastructure • Ensemble Generation: Ensemble Configuration, Initial Perturbations, Physics Perturbations (Modules 1-3) • Need to run on massively parallel processing systems • Need to be portable across available systems as a subgroup • Use of Information: Statistical Post-Processing, Product Generation, Verification (Modules 4-6) • Less intensive CPU demands • Likely to run at one location, the DTC • Available for model grids from ensembles run elsewhere

Basic Infrastructure (cont’d) • Systems, software, procedures, format specifications, data, and protocols needed to carry out testing and evaluation of community methods • Benchmarks used as a reference when testing and evaluating

Design Issues • Modularity to facilitate transition to operations • Portability for ease of execution wherever computational resources are available • Flexibility to allow testing of new ideas (plug and play) • Considerations for real-time and retrospective use • Research-to-operations (R2O) focus
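The modularity and plug-and-play goals above can be illustrated with a minimal sketch. It is not DET code: the stage names are taken from the six modules listed in the slides, while `Stage`, `passthrough`, `run_pipeline`, and `experimental_verification` are hypothetical names invented for this example. The assumption is that each module can be modeled as a function that takes and returns a shared state dictionary, so one module can be swapped without touching the others.

```python
from typing import Callable, Dict, Optional

# Hypothetical interface: every module maps a state dict to a new state dict.
Stage = Callable[[dict], dict]

# Order follows the six modules named in the slides.
MODULE_ORDER = (
    "ensemble_configuration",
    "initial_perturbations",
    "physics_perturbations",
    "statistical_post_processing",
    "products_displays",
    "verification",
)


def passthrough(name: str) -> Stage:
    """Build a placeholder stage that only records that it ran."""
    def stage(state: dict) -> dict:
        return {**state, "history": state.get("history", []) + [name]}
    return stage


def run_pipeline(stages: Dict[str, Stage], state: Optional[dict] = None) -> dict:
    """Run the stages in module order, passing the state along."""
    state = dict(state or {})
    for name in MODULE_ORDER:
        state = stages[name](state)
    return state


def experimental_verification(state: dict) -> dict:
    """Hypothetical replacement for Module 6, plugged in for testing."""
    return {**state, "history": state.get("history", []) + ["verification (experimental)"]}


stages = {name: passthrough(name) for name in MODULE_ORDER}
stages["verification"] = experimental_verification  # swap one module only
result = run_pipeline(stages)
print(result["history"])
```

Because each stage depends only on the shared state, replacing the verification stage leaves Modules 1-5 untouched, which is the property the plug-and-play bullet asks for.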

System Considerations: Compute Resources • DTC resources: Jet and Bluefire • HPC Consortium resources (GAUs = wall-clock time on processors * reserved processors * computer factor, based on CPU power * job queue charge factor) • TeraGrid (GAUs allocated on a quarterly basis; 200K GAUs available for a “test drive”) • DOE INCITE (allocations made on a yearly basis; proposals due by Oct 2010 for 2011 GAUs) • NSF Centers (both Tier 1 and Tier 2 centers, e.g. the NCAR-Wyoming Supercomputing Center is an NSF Tier 2 center) • TACC (Texas Advanced Computing Center; you can request use directly) • Oak Ridge National Lab’s Jaguar
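The GAU charge formula quoted above is a product of four terms, which the following sketch computes. The function name `gau_charge`, the choice of hours as the unit of wall-clock time, and all numeric values are assumptions made for illustration; the slides do not give units or actual charge factors.

```python
def gau_charge(wall_clock_hours: float,
               reserved_processors: int,
               computer_factor: float,
               queue_charge_factor: float) -> float:
    """GAUs = wall-clock time * reserved processors * computer factor * queue charge factor.

    Units are an assumption (hours); the source formula does not state them.
    """
    values = (wall_clock_hours, reserved_processors, computer_factor, queue_charge_factor)
    if any(v < 0 for v in values):
        raise ValueError("all inputs must be non-negative")
    return wall_clock_hours * reserved_processors * computer_factor * queue_charge_factor


# Hypothetical job: 2 hours on 64 reserved processors,
# computer factor 1.5, regular queue (factor 1.0).
charge = gau_charge(2.0, 64, 1.5, 1.0)
print(charge)  # 192.0

# Share of the 200K-GAU "test drive" allocation mentioned in the slide.
print(charge / 200_000)
```

An estimate like this makes it easier to judge how many ensemble runs a given allocation could support before submitting a request.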

System Considerations (cont’d) • Petascale: a computer system capable of reaching performance in excess of one petaflop, i.e. one quadrillion floating-point operations per second • Disk space requirements • Archiving • NCAR mass storage system • Jet mass storage system • Data Service • Making the datasets we use for testing and evaluation available via a website (future idea)

System Requirements • Output data from model: • Standard model output formats • NetCDF • GRIB1, GRIB2 • Output data from products: • AWIPS-II NetCDF • CF-Compliant NetCDF • Climate and Forecast (CF) conventions • Different from AWIPS-II NetCDF

System Requirements (cont’d) • Proposed Languages: • Model: Fortran, C • Products: Python, Fortran 90, C++ • Display: Python, JavaScript, Java • Scripts • XML • Shell scripts (ksh, csh, …) • Compilers • PGI • Intel • Others

Revision Control • Release versions will use Subversion (SVN) for NCEP and other users • DET may use SVN or other revision control systems for local work, then check release versions into SVN

Community Involvement • Manage community ensemble codes • Feedback from workshops and forums • Communicate through website (http://www.dtcenter.org/det) • Documents • Testing and evaluation plans • Reports • Results • Collaborative tools, Forum • Tutorials