Supervised Learning Networks

Delve into the structure and applications of hierarchical fuzzy neural networks, including modular network designs and three-level hierarchy implementations. Understand the synergy between experts-in-class and classes-in-expert networks for improved supervised learning. Learn about the strengths of multi-layer perceptrons and decision-based neural networks in neural network structures.

Presentation Transcript

Supervised Learning Networks
• Linear perceptron networks
• Multi-layer perceptrons
• Mixture of experts
• Decision-based neural networks
• Hierarchical neural networks

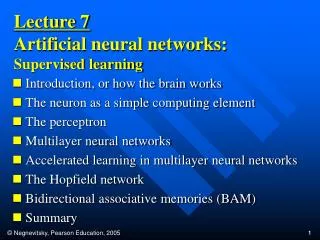

Hierarchical Neural Network Structures
One-level: (a) multi-layer perceptrons.
Two-level: (b) linear perceptron networks, (c) decision-based neural network, (d) mixture-of-experts network.
Three-level: (e) experts-in-class network, (f) classes-in-expert network.

Hierarchical Structure of NN
• 1-level hierarchy: BP
• 2-level hierarchy: MOE, DBNN
• 3-level hierarchy: PDBNN
“Synergistic Modeling and Applications of Hierarchical Fuzzy Neural Networks”, by S.Y. Kung, et al., Proceedings of the IEEE, Special Issue on Computational Intelligence, Sept. 1999

All Classes in One Net: multi-layer perceptron

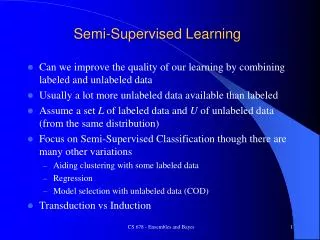

Modular Structures (two-level)
Divide-and-conquer principle: divide the task into modules and then integrate the individual results into a collective decision. Two typical modular networks: (1) mixture-of-experts (MOE), which uses expert-level modules, and (2) decision-based neural networks (DBNN), which are based on class-level modules.

Expert-level (Rule-level) Modules:
• Each expert serves the function of
  • extracting local features and
  • making local recommendations.
• The rules in the gating network decide how to combine the recommendations from several local experts, each weighted by its corresponding degree of confidence.
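The combination step described above can be sketched as follows. This is a minimal illustration that assumes a softmax gating network; the transcript does not say how the confidences are computed, and the function names and numbers are hypothetical.

```python
import math


def softmax(scores):
    """Turn raw gating scores into confidences that are positive and sum to 1."""
    m = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mixture_of_experts(expert_outputs, gating_scores):
    """Combine local recommendations, weighting each expert by its confidence."""
    confidences = softmax(gating_scores)
    n_outputs = len(expert_outputs[0])
    return [
        sum(g * out[k] for g, out in zip(confidences, expert_outputs))
        for k in range(n_outputs)
    ]


# Hypothetical values: three experts each recommend a two-class score vector.
expert_outputs = [[0.9, 0.1], [0.2, 0.8], [0.6, 0.4]]
gating_scores = [2.0, 0.0, 0.0]  # the gate trusts the first expert most
combined = mixture_of_experts(expert_outputs, gating_scores)
```

Because the confidences sum to 1, the combined recommendation is a weighted average of the local recommendations and is pulled toward the expert the gate trusts most.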

Class-level modules:
Class-level modules are natural basic partitioning units, where each module specializes in distinguishing its own class from the others. In contrast to expert-level partitioning, this one-class-in-one-network (OCON) structure facilitates a global (or mutual) supervised training scheme. In global inter-class supervised learning, any dispute over a pattern region between two or more competing classes can be resolved by resorting to the teacher's guidance.
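One way to picture how the teacher resolves such a dispute is the sketch below. It assumes one linear discriminant per class module and a reinforce/anti-reinforce update applied only on misclassified patterns; the transcript does not spell out the learning rule, so the update, the function names, and the toy data are illustrative assumptions.

```python
def discriminants(weights, x):
    """One linear discriminant score per class module (bias stored last)."""
    return [sum(wi * xi for wi, xi in zip(w[:-1], x)) + w[-1] for w in weights]


def decision_based_update(weights, x, target, lr=0.1):
    """Update only when the winning class differs from the teacher's label."""
    scores = discriminants(weights, x)
    winner = max(range(len(scores)), key=scores.__getitem__)
    if winner == target:
        return False  # correct decision: no module is changed
    grad = list(x) + [1.0]  # gradient of a linear discriminant w.r.t. its weights
    for k, g in enumerate(grad):
        weights[target][k] += lr * g  # reinforce the correct class module
        weights[winner][k] -= lr * g  # anti-reinforce the wrongly winning module
    return True


# Hypothetical toy data: two classes in 2-D, one module (weight vector) per class.
data = [([0.0, 0.0], 0), ([0.2, 0.1], 0), ([1.0, 1.0], 1), ([0.9, 1.2], 1)]
weights = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
for _ in range(20):
    for x, label in data:
        decision_based_update(weights, x, label)
predictions = [
    max(range(2), key=discriminants(weights, x).__getitem__) for x, _ in data
]
```

Only the two modules involved in the dispute are modified, which is what distinguishes this scheme from training every output on every pattern.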

Three-level hierarchical structures:
Apply the divide-and-conquer principle twice: once at the expert level and once at the class level.
• Depending on the order used, there are two kinds of hierarchical networks:
  • one has an experts-in-class construct and
  • the other a classes-in-expert construct.

BP Multi-Layer Perceptron (MLP)
A BP multi-layer perceptron (MLP) has adaptive learning abilities: it can estimate sampled functions, represent the samples, encode structural knowledge, and infer outputs from inputs by association. Its main strength lies in its hidden units: with a sufficiently large number of them, the network has a large number of interconnections, which enhances its ability to learn and generalize from training data. In particular, with enough hidden units an MLP can approximate any continuous function on a bounded domain to arbitrary accuracy.

RBF NN is More Suitable for Probabilistic Pattern Classification
[Figure: hyperplane (MLP) vs. kernel function (RBF)]
The probability density function (also called the conditional density function or likelihood) of the k-th class is written $p(x \mid C_k)$.

RBF BP Neural Network
The centers and widths of the RBF Gaussian kernels are deterministic functions of the training data.

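RBF Output as Probability Function
• According to Bayes' theorem, the posterior probability is $P(C_k \mid x) = \dfrac{p(x \mid C_k)\,P(C_k)}{p(x)}$, where $P(C_k)$ is the prior probability and $p(x) = \sum_j p(x \mid C_j)\,P(C_j)$.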
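As a small worked example of Bayes' theorem in this setting, the sketch below turns class likelihoods and priors into posterior probabilities. The function name and the numbers are hypothetical.

```python
def posteriors(likelihoods, priors):
    """Return P(C_k | x) for every class from p(x | C_k) and P(C_k)."""
    joint = [lik * prior for lik, prior in zip(likelihoods, priors)]
    evidence = sum(joint)  # p(x) = sum_j p(x | C_j) P(C_j)
    return [j / evidence for j in joint]


# Hypothetical numbers: likelihoods of one input x under two classes.
likelihoods = [0.30, 0.10]
priors = [0.5, 0.5]
post = posteriors(likelihoods, priors)
```

With equal priors the posteriors are just the normalized likelihoods, here 0.75 and 0.25.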

MLPs are highly nonlinear in the parameter space, so gradient descent can get trapped in local minima.
• RBF networks address this problem by dividing the learning into two independent processes.
• Use the K-means algorithm to find $c_i$ and determine the weights w using the least-squares method
• RBF learning by gradient descent

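RBF learning process
[Diagram: inputs $x_p$; K-means yields the centers $c_i$; K-nearest neighbor yields the widths $\sigma_i$; the basis-function outputs (A) feed linear regression, which yields the weights w]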

RBF networks implement the function $f(x) = \sum_i w_i\,\phi_i(\lVert x - c_i \rVert)$
• $w_i$, $\sigma_i$ and $c_i$ can be determined separately
• Fast learning algorithm
• Basis function types

Finding the RBF Parameters
(1) Use the K-means algorithm to find $c_i$
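A minimal sketch of the K-means step in plain Python follows. The initial centers are passed in explicitly; how to initialize them is not stated in the transcript, so that choice and the toy points are assumptions.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two points."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b))


def k_means(points, initial_centers, n_iter=100):
    """Alternate nearest-center assignment and mean updates until stable."""
    centers = [list(c) for c in initial_centers]
    for _ in range(n_iter):
        # Assignment step: attach every point to its nearest center.
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(
                range(len(centers)),
                key=lambda i: squared_distance(p, centers[i]),
            )
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster
        # (an empty cluster keeps its previous center).
        new_centers = [
            [sum(col) / len(cluster) for col in zip(*cluster)] if cluster else center
            for cluster, center in zip(clusters, centers)
        ]
        if new_centers == centers:
            break  # assignments no longer change the centers
        centers = new_centers
    return centers


# Hypothetical toy data: two well-separated groups of three points each.
points = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [5.0, 5.0], [5.0, 6.0], [6.0, 5.0]]
centers = k_means(points, initial_centers=[points[0], points[3]])
```

The returned centers play the role of the $c_i$ in the RBF network, one per hidden unit.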

Use the K-nearest-neighbor rule to find the function width $\sigma_i$ from the k-th nearest neighbor of $c_i$
• The objective is to cover the training points so that a smooth fit of the training samples can be achieved
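The exact width formula is not preserved in the transcript. One common heuristic, shown here purely as an assumption, sets each width to the distance between a center and its k-th nearest neighboring center, so that neighboring basis functions overlap and cover the space between them.

```python
import math


def widths_from_neighbors(centers, k=1):
    """Set each width to the distance from c_i to its k-th nearest other center."""
    widths = []
    for i, c in enumerate(centers):
        dists = sorted(
            math.dist(c, other) for j, other in enumerate(centers) if j != i
        )
        widths.append(dists[k - 1])
    return widths


# Hypothetical centers, e.g. as produced by a clustering step.
centers = [[0.0, 0.0], [3.0, 4.0], [0.0, 1.0]]
sigma = widths_from_neighbors(centers, k=1)
```

Isolated centers receive wide basis functions and crowded centers receive narrow ones, which is the smooth-coverage behavior the slide asks for.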

For Gaussian basis functions
• Assume the variances across all dimensions are equal
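With equal variance in every dimension, each Gaussian basis function needs only one scalar width. The sketch below assumes the standard isotropic form $\exp(-\lVert x - c_i \rVert^2 / (2\sigma_i^2))$ and a weighted-sum output; the function names and values are hypothetical.

```python
import math


def gaussian_basis(x, center, sigma):
    """Isotropic Gaussian: exp(-||x - c||^2 / (2 sigma^2))."""
    sq_dist = sum((xi - ci) ** 2 for xi, ci in zip(x, center))
    return math.exp(-sq_dist / (2.0 * sigma ** 2))


def rbf_output(x, centers, sigmas, weights):
    """Network output: weighted sum of the basis-function activations."""
    return sum(
        w * gaussian_basis(x, c, s) for w, c, s in zip(weights, centers, sigmas)
    )


# Hypothetical two-unit network evaluated at the first center.
centers = [[0.0, 0.0], [1.0, 1.0]]
sigmas = [1.0, 1.0]
weights = [1.0, -1.0]
y = rbf_output([0.0, 0.0], centers, sigmas, weights)
```

At its own center a basis function outputs exactly 1, and its response decays with distance at a rate set by the width.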

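Determining the weights w using the least-squares method
Minimize the squared error $E = \frac{1}{2}\sum_p \bigl(d_p - f(x_p)\bigr)^2$ between the desired outputs and the network outputs $f(x_p)$, where $d_p$ is the desired output for pattern p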
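Once the centers and widths are fixed, the output is linear in w, so the least-squares weights can be found in closed form. The sketch below solves the normal equations $(\Phi^T \Phi)\,w = \Phi^T d$ in plain Python to stay dependency-free; the matrix name `phi` and the toy data are assumptions, and a library least-squares routine would normally be preferable for numerical stability.

```python
def solve_linear_system(a, b):
    """Solve a w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    w = [0.0] * n
    for r in reversed(range(n)):
        known = sum(aug[r][c] * w[c] for c in range(r + 1, n))
        w[r] = (aug[r][n] - known) / aug[r][r]
    return w


def least_squares_weights(phi, d):
    """Minimize sum_p (d_p - sum_i w_i phi[p][i])^2 via the normal equations."""
    m = len(phi[0])
    gram = [[sum(row[i] * row[j] for row in phi) for j in range(m)] for i in range(m)]
    rhs = [sum(row[i] * dp for row, dp in zip(phi, d)) for i in range(m)]
    return solve_linear_system(gram, rhs)


# Hypothetical design matrix: phi[p][i] is basis function i evaluated on pattern p.
phi = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
d = [1.0, 2.0, 3.0]  # desired outputs d_p
w = least_squares_weights(phi, d)
```

In this toy case the targets are exactly reproducible, so the solution is w = [1, 2] with zero residual error.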

(2) RBF learning by gradient descent
Compute the gradient of the error with respect to each parameter, then apply the gradient-descent update.
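The slide's own equations are not preserved in the transcript. As a sketch under stated assumptions (squared-error cost, isotropic Gaussian basis functions, and a learning rate $\eta$ that is introduced here for illustration), the gradients and update take the following form:

```latex
\begin{aligned}
E &= \frac{1}{2} \sum_{p} e_p^2, \qquad e_p = d_p - f(x_p), \qquad
f(x) = \sum_{i} w_i \,\phi_i(x), \qquad
\phi_i(x) = \exp\!\left(-\frac{\lVert x - c_i \rVert^2}{2\sigma_i^2}\right) \\[4pt]
\frac{\partial E}{\partial w_i} &= -\sum_{p} e_p \,\phi_i(x_p) \\[4pt]
\frac{\partial E}{\partial c_i} &= -\sum_{p} e_p \, w_i \,\phi_i(x_p)\, \frac{x_p - c_i}{\sigma_i^2} \\[4pt]
\frac{\partial E}{\partial \sigma_i} &= -\sum_{p} e_p \, w_i \,\phi_i(x_p)\, \frac{\lVert x_p - c_i \rVert^2}{\sigma_i^3} \\[4pt]
\theta &\leftarrow \theta - \eta \,\frac{\partial E}{\partial \theta}
\qquad \text{for each } \theta \in \{w_i,\, c_i,\, \sigma_i\}
\end{aligned}
```

Unlike the two-stage procedure, this approach adjusts the weights, centers, and widths jointly, at the cost of reintroducing a nonlinear optimization.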

Elliptical Basis Function networks
Each basis function is specified by its function center and its covariance matrix.
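A minimal sketch of an elliptical basis function is given below, assuming the usual Gaussian form $\exp\bigl(-\tfrac{1}{2}(x-\mu)^T \Sigma^{-1} (x-\mu)\bigr)$. The symbols $\mu$ (center) and $\Sigma$ (covariance matrix) are assumed notation, and the code is restricted to two dimensions so that the matrix inverse can be written out explicitly.

```python
import math


def ebf_2d(x, mu, cov):
    """exp(-0.5 * (x - mu)^T cov^{-1} (x - mu)) for a 2x2 covariance matrix."""
    (a, b), (c, d) = cov
    det = a * d - b * c
    inv = [[d / det, -b / det], [-c / det, a / det]]  # explicit 2x2 inverse
    dx = [x[0] - mu[0], x[1] - mu[1]]
    mahalanobis_sq = sum(
        dx[i] * inv[i][j] * dx[j] for i in range(2) for j in range(2)
    )
    return math.exp(-0.5 * mahalanobis_sq)


# Hypothetical covariance: variance 4 along the first axis, 1 along the second.
mu = [0.0, 0.0]
cov = [[4.0, 0.0], [0.0, 1.0]]
along_wide_axis = ebf_2d([2.0, 0.0], mu, cov)
along_narrow_axis = ebf_2d([0.0, 2.0], mu, cov)
```

Two points at the same Euclidean distance from the center get different activations, which is what lets an EBF unit fit an elongated cluster that a radially symmetric RBF unit cannot. With the identity covariance the function reduces to an RBF with unit width.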

EBF vs. RBF networks
[Figure: RBFN with 4 centers; EBFN with 4 centers]

MATLAB Assignment #3: RBF BP network to separate 2 classes (ratio = 2:1)
• RBF BP with 4 hidden units
• EBF BP with 4 hidden units