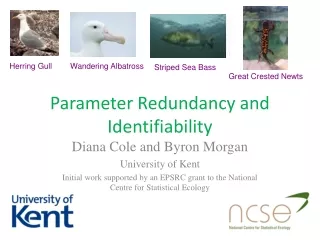

Navigating Identifiability Challenges in Statistical Modeling


Explore the concept of identifiability in statistical modeling through illustrative examples such as Newton's gravity and mixture distributions. Learn what lack of identifiability means, which solutions are available, and how they affect data analysis.


Presentation Transcript

The scourge of statistical modelling: Lack of identifiability

Overview • Definition • Examples (many) • Possible solutions

Identifiability defined • Vague def: A model is identifiable if all elements of it can be estimated from the data available. • Statistical def: A statistical model is identifiable if different sets of parameter values always give different probability distributions for the possible outcomes (the likelihood). • Lack of identifiability: You have a problem. It pops up every so often!

Example 1: A toy example We want to estimate the length of a specific lizard. We’ve got multiple measurements. We model the measurements of total length as “body length” + “tail length” + noise, but we only measure total length! The parameters (Lb,Lt) are not identifiable. (Figure labels: total length, L; body length, Lb; tail length, Lt.)

Example 1(b): The likelihood of the toy example Plotting Lb on the x axis and Lt on the y axis, the likelihood surface has a “ridge” on a diagonal, going from (0,mean) to (mean,0).
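The ridge can be checked numerically. The following is a minimal sketch, assuming Gaussian noise with a known standard deviation of 1 and a handful of made-up measurements; the function name and the data are illustrative and not from the slides.

```python
import math

def log_likelihood(lb, lt, data, sigma=1.0):
    """Gaussian log-likelihood of total-length measurements.

    Only the sum lb + lt enters the model, which is what creates the ridge.
    """
    mean = lb + lt
    return sum(
        -0.5 * math.log(2 * math.pi * sigma**2) - (x - mean) ** 2 / (2 * sigma**2)
        for x in data
    )

data = [10.2, 9.8, 10.1, 9.9, 10.0]  # made-up total lengths
sample_mean = sum(data) / len(data)

# Every point on the diagonal lb + lt = sample mean has the same (maximal) likelihood.
ridge = [
    log_likelihood(lb, sample_mean - lb, data)
    for lb in (0.0, 2.5, 5.0, 7.5, sample_mean)
]

# A point off the diagonal has a lower likelihood.
off_ridge = log_likelihood(5.0, 6.0, data)
```

All entries of `ridge` agree up to rounding, while `off_ridge` is smaller, so the data say nothing about where on the diagonal the true (Lb, Lt) lies.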

Example 2: Variation and measurement noise We want to study the variation in the length of a species of lizard. We’ve got multiple measurements, but only one per organism. We model the measurements as normally distributed variation in individual length plus normally distributed measurement noise. The two variation parameters (the standard deviation of individual length and the standard deviation of the measurement noise) are also not identifiable, since only the sum of the two variances affects the distribution of the measurements.
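A small sketch of why this happens, assuming a known population mean and made-up data: the sum of two independent normal terms is normal with variance equal to the sum of the two variances, so any split of that total variance gives the same likelihood. The names `sd_individual` and `sd_noise` are illustrative.

```python
import math

def log_likelihood(sd_individual, sd_noise, data, mu):
    """Log-likelihood with one measurement per organism.

    Individual variation and measurement noise are independent normals,
    so each measurement is normal with variance sd_individual^2 + sd_noise^2.
    """
    total_var = sd_individual**2 + sd_noise**2
    return sum(
        -0.5 * math.log(2 * math.pi * total_var) - (x - mu) ** 2 / (2 * total_var)
        for x in data
    )

data = [102.0, 95.0, 110.0, 99.0, 94.0]  # made-up lengths, one per organism
mu = 100.0                               # assumed known mean, for simplicity

ll_a = log_likelihood(3.0, 4.0, data, mu)  # mostly measurement noise
ll_b = log_likelihood(4.0, 3.0, data, mu)  # mostly individual variation
ll_c = log_likelihood(5.0, 0.0, data, mu)  # no measurement noise at all
```

All three parameter sets have total variance 25 and therefore identical likelihoods.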

Example 3: Occupancy • Occupancy analysis consists of trying to establish how many areas are populated with a given organism. • It is performed by doing multiple transects in multiple areas. One is interested in the occupancy frequency, po. If the area is occupied, there is a detection frequency, pd, associated with each transect. • What if we only have one transect per area? The encounters are then binomially distributed with frequency po*pd, so the detection and occupancy frequencies are not identifiable. • If po*pd=50%, that can either be because all the areas are occupied but the chance of detecting the organism is only 50%, or because the chance of detection is 100% and 50% of the areas are occupied (or something in between).
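The single-transect case can be sketched as follows, assuming 20 areas with 10 detections (made-up numbers). The binomial likelihood sees only the product of the two frequencies; the function and argument names are illustrative.

```python
import math

def log_likelihood(p_occupancy, p_detection, detections, n_areas):
    """Binomial log-likelihood with one transect per area.

    The encounter probability is the product p_occupancy * p_detection.
    """
    p = p_occupancy * p_detection
    return (
        math.log(math.comb(n_areas, detections))
        + detections * math.log(p)
        + (n_areas - detections) * math.log(1 - p)
    )

detections, n_areas = 10, 20  # made-up survey result

ll_all_occupied = log_likelihood(1.0, 0.5, detections, n_areas)
ll_perfect_detection = log_likelihood(0.5, 1.0, detections, n_areas)
ll_in_between = log_likelihood(0.8, 0.625, detections, n_areas)
```

The three scenarios all have po*pd = 0.5 and cannot be told apart by the likelihood.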

Example 4: Newton’s gravity • Newton’s law of gravity gave a mechanistic description of the elliptical orbits of the planets around the Sun, the deviations from perfect ellipses due to the planets’ effects on each other, Earth’s effect on us, the Moon’s orbit around Earth, the tides, the orbits of comets and the orbits of the moons of Jupiter. In short, a spectacular success! • It did however contain an identifiability problem. Each orbit could be described by the acceleration caused by other bodies. But that acceleration only involves the product of the gravitational constant and the mass. The masses themselves could thus not be identified, only their ratios to each other!

Example 5: ANOVA / Example 6: Regression • A standard (one-way) ANOVA model is defined as $y_{ij} = \mu + \alpha_i + \varepsilon_{ij}$, where i denotes the group and j denotes the measurement index of that group. But $\mu$ and $\alpha_i$ are not identifiable, since adding a constant to $\mu$ and subtracting it from every $\alpha_i$ gives the same model! • If you do linear regression on linearly dependent covariates, you get the same problem. This is bound to happen if you have more covariates than measurements.
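A minimal sketch of the ANOVA case, using the usual notation of an overall mean `mu` and group effects `alphas` (assumed here, with made-up values): shifting a constant between the two leaves every group mean, and hence the likelihood, unchanged.

```python
def group_means(mu, alphas):
    """Expected value of each group in a one-way ANOVA: mu + alpha_i."""
    return [mu + a for a in alphas]

# Two different parameter sets (made-up values) ...
params_a = (10.0, [1.0, -1.0, 0.0])
params_b = (12.0, [-1.0, -3.0, -2.0])  # mu raised by 2, every alpha lowered by 2

# ... that describe exactly the same group means.
means_a = group_means(*params_a)
means_b = group_means(*params_b)
```

Both calls return `[11.0, 9.0, 10.0]`. In regression terms, the intercept column is the sum of the group indicator columns, so the covariates are linearly dependent.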

Example 7: Mixture distributions Sometimes a complicated distribution is needed for describing variation in nature. One way of modeling this is by mixture distributions. Think of this as first drawing a parametric distribution and then drawing from that distribution. For a mixture of two normal distributions, $f(x) = p\, f_N(x; \mu_1, \sigma_1) + (1-p)\, f_N(x; \mu_2, \sigma_2)$, where $f_N$ is the normal distribution with expectation $\mu_k$ and standard deviation $\sigma_k$. What constitutes peak number one and two is not clear though. However, if we could determine that, the ratio, $p$, the individual expectations, $\mu_1, \mu_2$, and the standard deviations, $\sigma_1, \sigma_2$, are possible to estimate. (Figure labels: “Peak 2 or 1?”, “Peak 1 or 2?”)
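The labelling problem can be sketched as follows, assuming a two-component normal mixture with made-up parameter values. Swapping the component labels (and replacing p by 1 - p) gives exactly the same density at every point.

```python
import math

def normal_pdf(x, mu, sd):
    """Density of the normal distribution with expectation mu and standard deviation sd."""
    return math.exp(-0.5 * ((x - mu) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))

def mixture_pdf(x, p, mu1, sd1, mu2, sd2):
    """Two-component normal mixture: component 1 with probability p, else component 2."""
    return p * normal_pdf(x, mu1, sd1) + (1 - p) * normal_pdf(x, mu2, sd2)

xs = [-2.0, -0.5, 0.0, 1.0, 2.5, 4.0]

# Made-up parameters, and the same mixture with the labels swapped.
original = [mixture_pdf(x, 0.25, 0.0, 1.0, 2.0, 0.5) for x in xs]
swapped = [mixture_pdf(x, 0.75, 2.0, 0.5, 0.0, 1.0) for x in xs]
```

`original` and `swapped` agree at every x, so the likelihood has two separate, equally high optima.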

Example 8: Capture data The hare/lynx capture data from the Hudson’s Bay Company is often used for studying fluctuations in hare/lynx abundance. But the abundances themselves are not possible to estimate from this data. The data can be modeled as capture = abundance * hunting intensity * hunting success. By assuming hunting intensity and hunting success to be constant, one can at least get relative abundance from year to year, but the absolute abundance is not identifiable. Additional processes, like the abundance of the plants that the hares are eating, will also be subject to identification problems.

Example 9: Phenotypic evolution and fluctuations in the optimum Phenotypic evolution of a single trait (fixed optimum) can be modelled as an Ornstein-Uhlenbeck (OU) process. Aside from location and spread, the dynamics are given by a characteristic time, t. The optimum can also change (perhaps as another OU process?). Phenotypic evolution would then be linear tracking (natural selection) of this underlying process. The presence and the characteristic times of both dynamics can be found given enough fossil data. Which dynamics belong to which process can however not be identified. If we find one slow-moving and one rapidly moving component in our phenotypic data, then either: • Natural selection is rapidly tracking slow-moving changes in the optimum, or • Natural selection is slowly tracking fast-moving changes in the optimum! (Figure labels: band from -1.96 s to 1.96 s, characteristic time t; panels: slow optimum with fast selection, fast optimum with slow selection.)

Solution 1: Get better data If you really are interested in all the parameters in your model and can’t identify them, then get better data! Examples: • (1) If you want to know a lizard’s body and tail lengths, then measure them! • (2) If you want to separate the variation of a species from measurement noise, do multiple measurements on each organism. • (3) If you want to estimate the occupancy rate, do multiple transects in each area. • (4) If you really are interested in the gravitational constant and the masses of the planets and the Sun, measure the gravity between objects of known mass at a known distance. Cavendish did, about 110 years after Newton made his law of gravity! (Figure: the Cavendish experiment.) • (6) If you have too few measurements to estimate all regression parameters, get more data! Note: For ANOVA (5), it’s the definition of the parameters which is the problem. For hare-lynx (8), it is the dynamics, not the absolute abundances, that are of interest. For separating selection from optimum dynamics (9), getting data on the optimum in the past is not feasible!

Solution 2(a): Get rid of unnecessary parameters If you have no interest in the parameters that are causing problems, try to get rid of them! Examples: • (1) If we are only interested in the total length of a lizard, modelling each measurement as (body length + tail length + noise) makes no sense. Replace these two parameters with a single parameter representing total length, LT. • (3) If the probability of encountering a species is what is of interest, don’t model that probability as po*pd. Use one encounter probability, p, instead.

Solution 2(b): Fix problematic parameters If you aren’t interested in all the problematic parameters, but can’t get rid of them, fix them to a value. Examples: • (5) In ANOVA the identifiability problem between the overall mean $\mu$ and the group effects $\alpha_i$ can be fixed either by setting $\sum_i \alpha_i = 0$ (sum contrast) or by comparing all groups to a single default group, $\alpha_1 = 0$ (treatment contrast). • (4) Since any value of the gravitational constant could be used (pre-Cavendish), one can fix it so that it yields a “reasonable” estimate for the mass of the Earth. • (8) If it’s the dynamics of the ecology which is of interest, not absolute abundances, fix the hunting success parameters to something reasonable. Note: This will not work in cases (7) and (9), since the identifiability problem there is discrete. Nor will it work in case (6), since the regression parameters themselves are of interest.
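The two ANOVA contrasts in (5) can be sketched as follows, assuming the group means have already been estimated (the values below are made up). Each contrast picks exactly one parameter set out of the infinitely many that give the same group means; the function names are illustrative.

```python
def sum_contrast(group_means):
    """Constrain the group effects to sum to zero: mu becomes the mean of the group means."""
    mu = sum(group_means) / len(group_means)
    return mu, [g - mu for g in group_means]

def treatment_contrast(group_means):
    """Constrain the first group effect to zero: mu becomes the mean of the default group."""
    mu = group_means[0]
    return mu, [g - mu for g in group_means]

estimated_group_means = [11.0, 9.0, 10.0]  # made-up estimates

mu_sum, alphas_sum = sum_contrast(estimated_group_means)
mu_trt, alphas_trt = treatment_contrast(estimated_group_means)
```

The sum contrast gives `mu_sum = 10.0` with effects `[1.0, -1.0, 0.0]`, and the treatment contrast gives `mu_trt = 11.0` with effects `[0.0, -2.0, -1.0]`. Both reproduce the same group means, so the choice affects interpretation and not fit.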

Solution 3: Put restrictions on the parameter space If multiple but discrete places in parameter space yield the same likelihood, try putting restrictions on the parameters. Examples: • (7) In order to identify distributions one and two in a mixture, put a restriction on the mixture probability, $p > 0.5$, or on the peaks (expectations) of the distributions, $\mu_1 < \mu_2$, or on the spreads (standard deviations) of the distributions, $\sigma_1 > \sigma_2$. • (9) Assume natural selection to move faster than the optimum, t1<t2. Note: In Bayesian analysis, you might be tempted to give the same prior distribution for each characteristic time as you would for a one-layer model. But when you then impose the restriction, each prior gets narrower and the two-layer model gets an unfair advantage in model comparison! (Figure: characteristic time priors; one-layer prior, lower two-layer prior, upper two-layer prior.)
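One way to picture the ordering restriction in (7) is as a relabelling step that maps both equivalent parameter sets of a two-component mixture to a single canonical one. This is a minimal sketch with an assumed parameter layout `(p, mu1, sd1, mu2, sd2)`, where p is the probability of component 1; it is not taken from the slides.

```python
def enforce_ordering(params):
    """Relabel a two-component mixture so that mu1 < mu2.

    params is (p, mu1, sd1, mu2, sd2); swapping the labels also turns p into 1 - p.
    """
    p, mu1, sd1, mu2, sd2 = params
    if mu1 > mu2:
        return (1 - p, mu2, sd2, mu1, sd1)
    return params

# The same mixture written with the two possible labellings (made-up values).
labelling_a = (0.25, 0.0, 1.0, 2.0, 0.5)
labelling_b = (0.75, 2.0, 0.5, 0.0, 1.0)

canonical_a = enforce_ordering(labelling_a)
canonical_b = enforce_ordering(labelling_b)
```

Both labellings end up as `(0.25, 0.0, 1.0, 2.0, 0.5)`. In an actual fit the restriction would typically be imposed on the parameter space during estimation instead of afterwards.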

Solution 4(a): Ignore the problem when using ML So what if there are multiple solutions? They yield the same predictions. So if you do ML optimization, just start from somewhere and seek an optimum until you can’t get any higher. You’ll get different estimates when starting from different places, but predictions-wise this doesn’t matter. Examples: • (1) You will get an estimate for body length between 0 and your mean total length. The tail length will be the mean minus that. Total length will be estimated as the mean. • (9) Depending on where you start the optimization, you will get t1=ta and t2=tb, or vice versa, t1=tb and t2=ta. Notes: • You probably will not get an estimate of the parameter uncertainty. • Other people using your model will get different answers and wonder why. • The actual values you get will be a result of your optimization scheme.
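The behaviour in example (1) can be sketched with a plain gradient ascent on the lizard log-likelihood, assuming Gaussian noise with standard deviation 1 and made-up data. The optimizer, step size and starting points are illustrative choices, not a recommendation.

```python
def fit(lb, lt, data, step=0.05, iters=500):
    """Gradient ascent on the Gaussian log-likelihood (sigma = 1) of total length.

    The partial derivatives with respect to lb and lt are identical,
    so both parameters always move by the same amount.
    """
    for _ in range(iters):
        grad = sum(x - (lb + lt) for x in data)
        lb += step * grad
        lt += step * grad
    return lb, lt

data = [10.2, 9.8, 10.1, 9.9, 10.0]  # made-up total lengths, mean 10

estimate_1 = fit(1.0, 1.0, data)  # ends near (5, 5)
estimate_2 = fit(0.0, 6.0, data)  # ends near (2, 8)
```

The two runs give different body and tail lengths, but in both cases they add up to the sample mean, so the predicted total length is the same.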

Solution 4(b): Ignore the problem when using Bayesian inference An informative prior will give different probabilities to different parameter sets even when the likelihood is the same. Examples: • (1) As with ML, you will get a distribution for body length between 0 and your mean total length (maybe a little further). If you make your prior so that body length and tail length are approximately the same, that will be the result for the posterior distribution also. • (2) A strong prior on the size of the measurement noise may yield reasonable results for the species variation, while taking the uncertainty due to the size of the measurement noise into account. • (6) A sensible prior can give regression estimates even when there are fewer measurements than covariates! This can be viewed as the principle behind ridge regression and lasso regression. • (9) You will get a distribution for each characteristic time having two peaks, though they may be of different height due to the prior distribution. (Same with 7.) Notes: • In the direction in parameter space dominated by the identifiability problem, only the prior will have any real say in the outcome! • This method is however much easier than the restriction method for model comparison!
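Example (6) can be sketched in its smallest form: one measurement and two covariates, with a ridge penalty playing the role of a zero-centred Gaussian prior. For a single row of covariates x, minimizing (y - x·b)² + λ‖b‖² has the closed form b = x·y / (‖x‖² + λ). The numbers and function names below are made up for illustration.

```python
def ridge_single_row(x, y, lam):
    """Ridge estimate for one measurement y with covariate row x.

    Minimizes (y - x.b)^2 + lam * |b|^2, which gives b = x * y / (|x|^2 + lam).
    """
    scale = y / (sum(v * v for v in x) + lam)
    return [v * scale for v in x]

def objective(b, x, y, lam):
    """Penalized squared error that the ridge estimate minimizes."""
    fit = sum(bi * xi for bi, xi in zip(b, x))
    return (y - fit) ** 2 + lam * sum(bi * bi for bi in b)

x, y = [1.0, 2.0], 5.0  # one measurement, two covariates

b_ridge = ridge_single_row(x, y, lam=1.0)          # unique estimate, shrunk toward zero
b_almost_free = ridge_single_row(x, y, lam=1e-9)   # nearly the minimum-norm exact fit
```

Without the penalty, every b with b1 + 2·b2 = 5 fits the measurement perfectly. The penalty selects one of them, and along that unidentified direction the answer is decided entirely by the prior.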