Data Freeway: Scaling Out to Realtime


This presentation by Eric Hwang and Sam Rash details the implementation and evolution of Facebook's Data Freeway system. It focuses on the real-time data processing needed to support more than 500 million active users. Key components discussed include Scribe, Calligraphus, HDFS, and Puma, with an overview of their architecture and their reliability and scalability challenges. The team emphasizes the need for low-latency data handling, reliable performance, and the ability to sustain the massive data rates that user activity generates. Future work includes enhancements to streaming analytics and HBase integration.




Data Freeway: Scaling Out to Realtime • Authors: Eric Hwang, Sam Rash {ehwang,rash}@fb.com • Speaker: Haiping Wang ctqlwhp1022@gamil.com

Agenda • Data at Facebook • Realtime Requirements • Data Freeway System Overview • Realtime Components • Calligraphus/Scribe • HDFS use case and modifications • Calligraphus: a ZooKeeper use case • ptail • Puma • Future Work

Big Data, Big Applications / Data at Facebook • Lots of data • More than 500 million active users • 50 million users update their statuses at least once each day • More than 1 billion photos uploaded each month • More than 1 billion pieces of content (web links, news stories, blog posts, notes, photos, etc.) shared each week • Data rate: over 7 GB/second • Numerous products can leverage the data • Revenue-related: Ads Targeting • Product/User Growth-related: AYML, PYMK, etc. • Engineering/Operations-related: Automatic Debugging • Puma: streaming queries

Example: User-related Application • Major challenges: scalability, latency

Realtime Requirements • Scalability: 10-15 GBytes/second • Reliability: No single point of failure • Data loss SLA: 0.01% • Loss due to hardware: at most 1 out of 10,000 machines can lose data • Delay of less than 10 s for 99% of data • Typically we see 2 s • Easy to use: as simple as ‘tail -f /var/log/my-log-file’
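The latency and loss targets above are concrete enough to check mechanically. The sketch below assumes per-message end-to-end delays are available in seconds, along with counts of lost and total messages. The function names and sample data are hypothetical and not part of Data Freeway. "Less than 10 s for 99% of data" is read as a nearest-rank p99 bound, and 0.01% is read as a fraction of 0.0001 (1 in 10,000).

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty sequence."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def delay_ok(delays_s: Sequence[float], limit_s: float = 10.0, pct: float = 99) -> bool:
    # "Delay of less than 10 s for 99% of data": the p99 delay must be under the limit.
    return percentile(delays_s, pct) < limit_s


def loss_rate_ok(lost: int, total: int, sla: float = 0.0001) -> bool:
    # 0.01% as a fraction is 0.0001, i.e. at most 1 lost in 10,000.
    return total > 0 and lost / total <= sla


print(delay_ok([2.0] * 99 + [12.0]))      # True: only 1% of messages exceed 10 s
print(delay_ok([2.0] * 98 + [12.0] * 2))  # False: 2% of messages exceed 10 s
print(loss_rate_ok(1, 10_000))            # True
```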

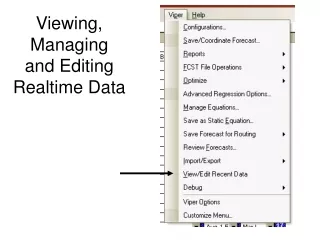

Data Freeway System Diagram • Scribe & Calligraphus get data into the system • HDFS at the core • ptail gets data out • Puma is an emerging streaming analytics platform

Scribe • Scalable distributed logging framework • Very easy to use: • scribe_log(string category, string message) • Mechanics: • Built on top of Thrift • Runs on every machine at Facebook and collects log data into a set of destinations • Buffers data on local disk if the network is down • History: • 2007: Started at Facebook • October 2008: Open-sourced
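The "buffer data on local disk if network is down" behavior can be sketched as a client that spools entries locally and drains the spool before sending anything new. This is a minimal sketch, loosely modeled on the `scribe_log(category, message)` call above. The `BufferedLogger` class, the `fake_send` transport, and the JSON-lines spool format are all hypothetical. Real Scribe speaks Thrift between servers rather than calling a Python callback.

```python
import json
import os
import tempfile
from typing import Callable, List, Tuple

Entry = Tuple[str, str]  # (category, message), as in scribe_log(category, message)


class BufferedLogger:
    """Hypothetical Scribe-like client: forwards (category, message) pairs and
    spools them to a local file while the downstream server is unreachable."""

    def __init__(self, send: Callable[[List[Entry]], bool], spool_path: str):
        self.send = send              # returns True on success, False for "try later"
        self.spool_path = spool_path

    def log(self, category: str, message: str) -> None:
        # Drain older spooled entries first so delivery order is preserved.
        self._flush_spool()
        if os.path.exists(self.spool_path) or not self.send([(category, message)]):
            self._spool(category, message)

    def _spool(self, category: str, message: str) -> None:
        # One JSON array per line, so messages may safely contain tabs or newlines.
        with open(self.spool_path, "a", encoding="utf-8") as f:
            f.write(json.dumps([category, message]) + "\n")

    def _flush_spool(self) -> None:
        if not os.path.exists(self.spool_path):
            return
        with open(self.spool_path, encoding="utf-8") as f:
            entries = [tuple(json.loads(line)) for line in f if line.strip()]
        if self.send(entries):
            os.remove(self.spool_path)


# Usage: a fake downstream that stays "down" until network_up is flipped.
network_up = False
received: List[Entry] = []

def fake_send(entries: List[Entry]) -> bool:
    if network_up:
        received.extend(entries)
    return network_up

path = os.path.join(tempfile.mkdtemp(), "spool.jsonl")
logger = BufferedLogger(fake_send, path)
logger.log("ads", "click 1")   # network down: buffered on local disk
network_up = True
logger.log("ads", "click 2")   # spool drained first, then the new entry is sent
print(received)  # [('ads', 'click 1'), ('ads', 'click 2')]
```

Draining the spool before each new send keeps per-client ordering intact. The trade-off is that one slow retry delays fresh messages.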

Calligraphus • What • Scribe-compatible server written in Java • Emphasis on a modular, testable codebase and on performance • Why? • Extract a simpler design from the existing Scribe architecture • Cleaner integration with the Hadoop ecosystem • HDFS, ZooKeeper, HBase, Hive • History • In production since November 2010 • ZooKeeper integration since March 2011

HDFS: a different use case • Message hub • Add concurrent reader support and sync • Writers plus concurrent readers form a pub/sub model

HDFS: add Sync • Sync • Implemented in 0.20 (HDFS-200) • Partial chunks are flushed • Blocks are persisted • Provides durability • Lowers write-to-read latency
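A toy in-memory model shows why flushing partial chunks lowers write-to-read latency. Full chunks become visible as they fill up, but a trailing partial chunk stays invisible to readers until `sync()` pushes it out. `SyncableFile` and `chunk_size` are hypothetical names. This is not the HDFS client API, and the model ignores replication and on-disk persistence.

```python
class SyncableFile:
    """Toy model of sync semantics (not the HDFS API): written bytes sit in a
    client buffer until a full chunk forms or sync() flushes the partial chunk."""

    def __init__(self, chunk_size: int = 512) -> None:
        self.chunk_size = chunk_size
        self._buffer = bytearray()   # written but not yet visible to readers
        self._visible = bytearray()  # what a concurrent reader can see

    def write(self, data: bytes) -> None:
        self._buffer += data
        # Full chunks are shipped as soon as they fill up.
        while len(self._buffer) >= self.chunk_size:
            self._visible += self._buffer[:self.chunk_size]
            del self._buffer[:self.chunk_size]

    def sync(self) -> None:
        # Flush the partial chunk too, so readers see everything written so far.
        self._visible += self._buffer
        self._buffer.clear()

    def read_visible(self) -> bytes:
        return bytes(self._visible)


f = SyncableFile(chunk_size=4)
f.write(b"abcdef")
print(f.read_visible())  # b'abcd': the partial chunk "ef" is still buffered
f.sync()
print(f.read_visible())  # b'abcdef'
```

Without sync, a low-volume category could leave data invisible for a long time, because a chunk fills slowly. That delay would break the sub-10-second target.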

HDFS: Concurrent Reads Overview • Without changes, stock Hadoop 0.20 does not allow access to the block being written • Realtime apps need to read the block being written in order to achieve < 10 s latency

HDFS: Concurrent Reads Implementation • DFSClient asks the Namenode for blocks and their locations • DFSClient asks the Datanode for the current length of the block being written • DFSClient opens the last block
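The three steps above can be sketched with in-memory stand-ins. The Namenode lists the blocks, but its length for the block under construction is stale. The reader therefore asks the Datanode how many bytes are readable before opening that last block. `FakeNamenode`, `FakeDatanode`, `BlockInfo`, the method names, and the file path are all hypothetical. This is not the DFSClient code, and it uses a single Datanode for simplicity.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class BlockInfo:
    block_id: int
    length: int               # length as last reported to the Namenode
    under_construction: bool  # True for the block currently being written


class FakeNamenode:
    def __init__(self, blocks: List[BlockInfo]):
        self.blocks = blocks

    def get_block_locations(self, path: str) -> List[BlockInfo]:
        return self.blocks    # a real Namenode would also return Datanode locations


class FakeDatanode:
    def __init__(self, data: Dict[int, bytes]):
        self.data = data

    def visible_length(self, block_id: int) -> int:
        return len(self.data[block_id])

    def read(self, block_id: int, length: int) -> bytes:
        return self.data[block_id][:length]


def read_file(path: str, namenode: FakeNamenode, datanode: FakeDatanode) -> bytes:
    out = bytearray()
    for blk in namenode.get_block_locations(path):   # step 1: blocks and locations
        length = blk.length
        if blk.under_construction:
            # Step 2: the Namenode's length for the last block is stale,
            # so ask the Datanode how many bytes are readable right now.
            length = datanode.visible_length(blk.block_id)
        out += datanode.read(blk.block_id, length)    # step 3: open and read
    return bytes(out)


nn = FakeNamenode([BlockInfo(1, 4, False), BlockInfo(2, 0, True)])
dn = FakeDatanode({1: b"abcd", 2: b"ef"})
print(read_file("/logs/ads/part-0", nn, dn))  # b'abcdef'
```

If the reader trusted the Namenode's length of 0 for block 2, it would see only `b'abcd'`.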

Calligraphus: Log Writer • (Diagram: Scribe categories 1-3 flow to Calligraphus servers, which write to HDFS) • How to persist to HDFS?

Calligraphus (Simple) • (Diagram: every Calligraphus server receives Scribe categories 1-3 and writes each of them to HDFS) • Total number of directories = Number of categories × Number of servers

Calligraphus (Stream Consolidation) • (Diagram: Calligraphus servers split into Router and Writer roles coordinated by ZooKeeper; routers forward each of Scribe categories 1-3 to one writer, which persists it to HDFS) • Total number of directories = Number of categories
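The two directory-count formulas, and the routing step that makes consolidation possible, can be sketched as follows. This assumes each category has exactly one owning writer at a time. The `owners` dict stands in for ownership state that Calligraphus keeps in ZooKeeper. All function names and writer IDs are hypothetical. The 3 × 3 example mirrors the diagram's three categories and three servers.

```python
from typing import Dict, List, Tuple


def simple_directory_count(num_categories: int, num_servers: int) -> int:
    # Simple scheme: every server writes its own files for every category.
    return num_categories * num_servers


def consolidated_directory_count(num_categories: int) -> int:
    # Stream consolidation: exactly one writer owns each category.
    return num_categories


def consolidate(messages: List[Tuple[str, str]],
                owners: Dict[str, str]) -> Dict[str, Dict[str, List[str]]]:
    """Router side: send each (category, message) to the writer that owns
    the category. The owners dict stands in for state kept in ZooKeeper."""
    per_writer: Dict[str, Dict[str, List[str]]] = {}
    for category, message in messages:
        writer = owners[category]
        per_writer.setdefault(writer, {}).setdefault(category, []).append(message)
    return per_writer


print(simple_directory_count(3, 3))       # 9
print(consolidated_directory_count(3))    # 3
owners = {"category1": "writer-a", "category2": "writer-b", "category3": "writer-c"}
print(consolidate([("category1", "m1"), ("category2", "m2"), ("category1", "m3")], owners))
# {'writer-a': {'category1': ['m1', 'm3']}, 'writer-b': {'category2': ['m2']}}
```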

ZooKeeper: Distributed Map • Design • ZooKeeper paths as tasks (e.g. /root/<category>/<bucket>) • Canonical ZooKeeper leader elections under each bucket for bucket ownership • Independent load management: leaders can release tasks • Reader-side caches • Frequent sync with policy db • (Diagram: a tree with Root, categories A-D under it, and buckets 1-5 under each category)
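The "canonical ZooKeeper leader election" recipe works like this. Each candidate creates an ephemeral, sequential znode under the election path, and the owner of the lowest sequence number is the leader. When that node disappears (session loss, or the leader releasing the task), the next-lowest candidate takes over. The sketch below simulates the recipe in memory rather than using a real ZooKeeper client. `FakeZooKeeper`, its method names, and the category and server names are hypothetical.

```python
import itertools
from typing import Dict, List, Optional


class FakeZooKeeper:
    """In-memory stand-in for the ZooKeeper features this recipe needs:
    sequential children under a path, and removal when a session ends."""

    def __init__(self) -> None:
        self.children: Dict[str, Dict[str, str]] = {}  # path -> {znode name: owner}
        self._seq = itertools.count()

    def create_ephemeral_sequential(self, path: str, owner: str) -> str:
        name = f"n_{next(self._seq):010d}"  # zero-padded so names sort numerically
        self.children.setdefault(path, {})[name] = owner
        return name

    def get_children(self, path: str) -> List[str]:
        return sorted(self.children.get(path, {}))

    def close_session(self, owner: str) -> None:
        # Ephemeral nodes vanish when their session ends (crash or voluntary release).
        for nodes in self.children.values():
            for name in [n for n, o in nodes.items() if o == owner]:
                del nodes[name]


def leader(zk: FakeZooKeeper, path: str) -> Optional[str]:
    """The owner of the lowest sequence number under path leads (owns the bucket)."""
    kids = zk.get_children(path)
    return zk.children[path][kids[0]] if kids else None


zk = FakeZooKeeper()
bucket = "/root/ads_clicks/3"  # /root/<category>/<bucket>, names hypothetical
for server in ["calli-1", "calli-2", "calli-3"]:
    zk.create_ephemeral_sequential(bucket, server)
print(leader(zk, bucket))    # calli-1
zk.close_session("calli-1")  # leader dies or releases the bucket
print(leader(zk, bucket))    # calli-2
```

In real ZooKeeper, each non-leader would set a watch on the node immediately before its own. Watching only that predecessor avoids waking every candidate when the leader goes away.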

Canonical Realtime ptail Application • Hides the fact that we have many HDFS instances: a user can specify a category and get a stream • Checkpointing • (Diagram: ptail feeding Puma)
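The core ptail idea can be sketched as merging several sources into one stream while tracking a per-source checkpoint. After a failure, a consumer resumes from its last saved checkpoint instead of replaying or skipping data. This minimal sketch makes several simplifying assumptions. Sources are in-memory lists rather than growing HDFS files. Offsets count records rather than bytes. The generator stops at the end of the data instead of polling like `tail -f`. The `ptail` function name mirrors the tool, but its signature is hypothetical.

```python
from typing import Dict, Iterator, List, Tuple

Checkpoint = Dict[str, int]  # source name -> number of records already consumed


def ptail(sources: Dict[str, List[str]],
          checkpoint: Checkpoint) -> Iterator[Tuple[str, str, Checkpoint]]:
    """Yield (source, record, checkpoint_after_record), round-robin across
    sources, starting from the offsets in checkpoint."""
    offsets = dict(checkpoint)
    progressed = True
    while progressed:
        progressed = False
        for name in sorted(sources):
            pos = offsets.get(name, 0)
            if pos < len(sources[name]):
                offsets[name] = pos + 1
                progressed = True
                yield name, sources[name][pos], dict(offsets)


sources = {"hdfs-a": ["a1", "a2"], "hdfs-b": ["b1"]}
saved: Checkpoint = {}
for i, (_, record, ckpt) in enumerate(ptail(sources, {})):
    saved = ckpt   # a consumer would persist this atomically with its own state
    if i == 1:
        break      # simulate a crash after two records
resumed = [rec for _, rec, _ in ptail(sources, saved)]
print(resumed)  # ['a2']
```

Each checkpoint is yielded alongside its record, so a consumer such as Puma can store the two together. This is what makes recovery exact rather than approximate.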

Puma Overview • Realtime analytics platform • Metrics • count, sum, unique count, average, percentile • Uses ptail checkpointing for accurate calculations in the case of failure • Puma nodes are sharded by keys in the input stream • HBase for persistence
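Key-based sharding and the listed metrics can be sketched as follows. A stable hash routes every event for a key to the same shard, and that shard keeps per-key aggregates. This is an illustration, not Puma's implementation. `ShardAggregator`, `shard_for`, and the event tuples are hypothetical. The aggregates are exact and store every value, whereas at this scale one would typically switch to approximate sketches for unique counts and percentiles. Checkpointing and HBase persistence are omitted.

```python
import math
import zlib
from collections import defaultdict
from typing import Dict, List, Set


def shard_for(key: str, num_shards: int) -> int:
    # Stable hash, so every event for a key reaches the same node.
    return zlib.crc32(key.encode("utf-8")) % num_shards


class ShardAggregator:
    """Exact per-key aggregates held by one shard (hypothetical class)."""

    def __init__(self) -> None:
        self.values: Dict[str, List[float]] = defaultdict(list)
        self.users: Dict[str, Set[str]] = defaultdict(set)

    def add(self, key: str, value: float, user: str) -> None:
        self.values[key].append(value)
        self.users[key].add(user)

    def report(self, key: str, pct: float = 50) -> Dict[str, float]:
        vals = sorted(self.values.get(key, []))
        if not vals:
            raise KeyError(key)
        n = len(vals)
        return {
            "count": n,
            "sum": sum(vals),
            "unique": len(self.users[key]),
            "average": sum(vals) / n,
            # nearest-rank percentile
            "percentile": vals[max(math.ceil(pct * n / 100), 1) - 1],
        }


NUM_SHARDS = 4
shards = [ShardAggregator() for _ in range(NUM_SHARDS)]
events = [("ad:42", 1.0, "u1"), ("ad:42", 3.0, "u2"), ("ad:42", 2.0, "u1"), ("ad:7", 5.0, "u3")]
for key, value, user in events:
    shards[shard_for(key, NUM_SHARDS)].add(key, value, user)
print(shards[shard_for("ad:42", NUM_SHARDS)].report("ad:42"))
# {'count': 3, 'sum': 6.0, 'unique': 2, 'average': 2.0, 'percentile': 2.0}
```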

Puma Read Path • Performance • Elapsed time typically 200-300 ms for 30-day queries • 99th percentile, cross-country: < 500 ms for 30-day queries

Future Work • Puma • Enhance functionality: add application-level transactions on HBase • Streaming SQL interface • Compression