Bayesian Networks

Reverend Thomas Bayes (1702-1761). Bayesian Networks. Outline: Probability theory; BN as knowledge model; Bayes in Court; Dazzle examples; Conclusions. Jenneke IJzerman, Bayesiaanse Statistiek in de Rechtspraak (Bayesian Statistics in the Courts), VU Amsterdam, September 2004.

Expert Systems 8. IJzerman's thesis: http://www.few.vu.nl/onderwijs/stage/werkstuk/werkstukken/werkstuk-ijzerman.doc

Thought Experiment: Hypothesis Selection
Imagine two types of bag, each holding 1000 balls of two colors:
• BagA: 250 + 750
• BagB: 750 + 250
Take 5 balls from a bag (without replacement). Result: 4 + 1. What is the type of the bag?
• Probability of this result from BagA: 0.0144
• Probability of this result from BagB: 0.396
Conclusion: the bag is BagB.
But…
• We don’t know how the bag was selected.
• We don’t even know that type BagB exists.
• The experiment is meaningful only in light of the hypotheses posed a priori (BagA, BagB) and the probabilities we assume for them.
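
The two probabilities on this slide can be reproduced with the hypergeometric distribution, i.e. drawing 5 balls without replacement from a bag of 1000. A minimal Python sketch; the function name `draw_probability` is ours, and "250 + 750" is read as 250 balls of the first color and 750 of the second:

```python
from math import comb


def draw_probability(n_first: int, n_second: int, k_first: int, k_second: int) -> float:
    """Probability of drawing exactly k_first balls of the first color and
    k_second of the second color, without replacement (hypergeometric)."""
    n_total = n_first + n_second
    k_total = k_first + k_second
    return comb(n_first, k_first) * comb(n_second, k_second) / comb(n_total, k_total)


# Result 4 + 1 from each bag type
p_bag_a = draw_probability(250, 750, 4, 1)
p_bag_b = draw_probability(750, 250, 4, 1)
print(f"BagA: {p_bag_a:.4f}  BagB: {p_bag_b:.3f}")  # BagA: 0.0144  BagB: 0.396
```

Drawing with replacement (binomial) gives almost the same numbers here (about 0.0146 and 0.396), because 5 balls is small compared to 1000.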

Classical and Bayesian statistics
Classical statistics:
• Compute the probability of your data, assuming a hypothesis.
• Reject a hypothesis if the data become unlikely.
Bayesian statistics:
• Compute the probability of a hypothesis, given your data.
• Requires an a priori probability for each hypothesis; these are extremely important!
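
To see why the a priori probabilities matter, the sketch below combines the two likelihoods from the bag experiment (0.0144 and 0.396) with a few priors for BagB. The prior values are illustrative assumptions, not part of the slide:

```python
# Likelihoods of the result 4 + 1 under each hypothesis (from the bag experiment)
LIKELIHOOD = {"BagA": 0.0144, "BagB": 0.396}


def posterior_bag_b(prior_b: float) -> float:
    """Pr(BagB | 4+1) for a given prior Pr(BagB); Pr(BagA) = 1 - prior_b."""
    weight_b = prior_b * LIKELIHOOD["BagB"]
    weight_a = (1.0 - prior_b) * LIKELIHOOD["BagA"]
    return weight_b / (weight_a + weight_b)


for prior in (0.5, 0.1, 0.01):  # illustrative priors for BagB
    print(f"Pr(BagB) = {prior:<5} ->  Pr(BagB | 4+1) = {posterior_bag_b(prior):.3f}")
```

With equal priors the data strongly favour BagB (about 0.96); if BagB bags are rare (prior 0.01), the very same data leave BagA the more probable hypothesis (Pr(BagB | 4+1) drops to about 0.22).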

Part I: Probability theory
What is a probability?
• Frequentist: relative frequency of occurrence.
• Subjectivist: amount of belief.
• Mathematician: axioms (Kolmogorov); an assignment of non-negative numbers to a set of states, summing to 1 (100%).
A state consists of several variables, so the set of states is a product space. With n binary variables there are 2^n states. Variables may also be multi-valued.
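
The product-space remark can be made concrete: with n binary variables the states are all n-tuples of truth values, and a distribution gives each state a non-negative number, with total 1. A small Python sketch; the variable names, the uniform distribution and the domain sizes are only illustrative:

```python
from itertools import product
from math import prod

variables = ["pa", "ha", "br"]  # three binary variables (illustrative)

# The product space: every combination of True/False values
states = list(product([True, False], repeat=len(variables)))
print(len(states))  # 8 = 2 ** 3

# A (uniform) probability distribution over the product space
distribution = {state: 1 / len(states) for state in states}
assert all(p >= 0 for p in distribution.values())
assert abs(sum(distribution.values()) - 1.0) < 1e-12

# Multi-valued variables: the number of states is the product of the domain sizes
domain_sizes = [3, 2, 4]  # hypothetical domains
print(prod(domain_sizes))  # 24
```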

Conditional Probability: Using evidence
• First table: probability for any woman to deliver a blond baby.
• Second table: the same, described separately for blond and non-blond mothers.
• Third table: described only for a blond mother; the blond-mother row is rescaled by dividing it by its weight, so that it sums to 1.
Def. conditional probability: Pr(A|B) = Pr(A & B) / Pr(B)
Rewrite: Pr(A & B) = Pr(B) x Pr(A|B)
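
The definition can be tried on the party/headache numbers from the causality slide (Pr(party) = 50%, Pr(ha | party) = 10%, Pr(ha | ¬party) = 2%). Conditioning on "party" keeps that row of the joint table and rescales it by its weight Pr(party). A minimal sketch; the function names are ours:

```python
# Joint table Pr(party, headache), built from Pr(pa) = 0.5,
# Pr(ha | pa) = 0.10 and Pr(ha | not pa) = 0.02
joint = {
    (True, True): 0.5 * 0.10,    # party, headache
    (True, False): 0.5 * 0.90,   # party, no headache
    (False, True): 0.5 * 0.02,   # no party, headache
    (False, False): 0.5 * 0.98,  # no party, no headache
}


def pr_party(value: bool) -> float:
    """Marginal Pr(party = value): the total weight of that row."""
    return sum(p for (pa, _), p in joint.items() if pa == value)


def pr_headache_given_party(ha: bool, pa: bool) -> float:
    """Pr(ha | pa) = Pr(pa & ha) / Pr(pa): the row rescaled by its weight."""
    return joint[(pa, ha)] / pr_party(pa)


print(round(pr_headache_given_party(True, True), 3))  # 0.1
# The rewritten form: Pr(pa & ha) = Pr(pa) x Pr(ha | pa)
assert abs(joint[(True, True)] - pr_party(True) * pr_headache_given_party(True, True)) < 1e-12
```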

Dependence and Independence
• The probability of a blond child is 30%, but it is larger for a blond mother and smaller for a non-blond mother.
• The probability of a boy is 50%, also for blond mothers and also for non-blond mothers.
Def.: A and B are independent: Pr(A|B) = Pr(A)
Exercise: Show that Pr(A|B) = Pr(A) is equivalent to Pr(B|A) = Pr(B) (i.e. B and A are independent).
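
One way to do the exercise, assuming Pr(A) > 0 and Pr(B) > 0 so that both conditional probabilities are defined: start from the definition, substitute the rewrite rule, then use the assumption Pr(A|B) = Pr(A).

```latex
\begin{aligned}
\Pr(B \mid A) &= \frac{\Pr(A \mathbin{\&} B)}{\Pr(A)}
               = \frac{\Pr(B) \times \Pr(A \mid B)}{\Pr(A)} \\
              &= \frac{\Pr(B) \times \Pr(A)}{\Pr(A)}
               = \Pr(B)
\end{aligned}
```

The converse direction follows by swapping the roles of A and B.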

Bayes’ Rule: from data to hypothesis
• Observe that Pr(A & B) = Pr(A) x Pr(B|A) = Pr(B) x Pr(A|B)
• Conclude Bayes’ Rule: Pr(A|B) = Pr(A) x Pr(B|A) / Pr(B)
• Classical probability theory: 0.0144 is the relative weight of 4+1 in the ROW of BagA.
• Bayesian theory describes the distribution over the COLUMN of 4+1.
Classical statistics: ROW distribution. Bayesian statistics: COLUMN distribution.
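
The ROW/COLUMN picture can be sketched as a joint table with one row per bag type and one column per outcome (the number of first-color balls among the 5 drawn). Equal priors for BagA and BagB are our assumption; the slides stress that they must be chosen explicitly:

```python
from math import comb

FIRST_COLOR = {"BagA": 250, "BagB": 750}  # balls of the first color, out of 1000
PRIOR = {"BagA": 0.5, "BagB": 0.5}        # assumed a priori probabilities
N_BALLS, N_DRAWN = 1000, 5


def likelihood(bag: str, k: int) -> float:
    """Pr(k first-color balls among 5 | bag), drawing without replacement."""
    n1 = FIRST_COLOR[bag]
    return comb(n1, k) * comb(N_BALLS - n1, N_DRAWN - k) / comb(N_BALLS, N_DRAWN)


# Joint table: Pr(bag & k) = Pr(bag) x Pr(k | bag)
joint = {(bag, k): PRIOR[bag] * likelihood(bag, k)
         for bag in PRIOR for k in range(N_DRAWN + 1)}

# Classical view: the ROW of BagA, rescaled to Pr(k | BagA)
row_bag_a = {k: joint[("BagA", k)] / PRIOR["BagA"] for k in range(N_DRAWN + 1)}

# Bayesian view: the COLUMN of 4+1, rescaled to Pr(bag | k = 4)
column_weight = sum(joint[(bag, 4)] for bag in PRIOR)
column_4 = {bag: joint[(bag, 4)] / column_weight for bag in PRIOR}

print(round(row_bag_a[4], 4))  # 0.0144
print({bag: round(p, 3) for bag, p in column_4.items()})
```

Rescaling a row needs no priors; rescaling a column does. Under the equal-prior assumption the 4+1 column gives BagB a posterior of about 0.965.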

Reasons for Dependence 1: Causality
• Dependency: P(B|A) ≠ P(B)
• Positive correlation: P(B|A) > P(B)
• Negative correlation: P(B|A) < P(B)
Possible explanation: A causes B. Example: P(headache) = 6%, P(ha | party) = 10%, P(ha | ¬party) = 2%.
Alternative explanation: B causes A. In the same example: P(party) = 50%, P(party | h.a.) = 83%, P(party | no h.a.) = 48%: “Headaches make students go to parties.”
In statistics, correlation has no direction.
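
The numbers for the "alternative explanation" are not extra data: they follow from the first three by the law of total probability and Bayes' rule. A short Python check (the variable names are ours):

```python
p_party = 0.50
p_ha_given_party = 0.10
p_ha_given_no_party = 0.02

# Total probability: Pr(ha) = Pr(pa) Pr(ha | pa) + Pr(not pa) Pr(ha | not pa)
p_ha = p_party * p_ha_given_party + (1 - p_party) * p_ha_given_no_party

# Bayes' rule in the other direction
p_party_given_ha = p_party * p_ha_given_party / p_ha
p_party_given_no_ha = p_party * (1 - p_ha_given_party) / (1 - p_ha)

print(f"{p_ha:.0%} {p_party_given_ha:.0%} {p_party_given_no_ha:.0%}")  # 6% 83% 48%
```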

Reasons for Dependence 2: Common cause
1. The student party may lead to headache and is costly (money versus broke).
2. Table of headache and money: Pr(broke) = 30%, Pr(broke | h.a.) = 50%.
3. Table of headache and money for party attendants only.
This dependency disappears if the value of the common cause variable is known.
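
A small enumeration makes the common-cause effect visible. The headache numbers are those of the causality slide; the broke table Pr(broke | party) = 0.6, Pr(broke | no party) = 0 is our assumption, chosen because it reproduces the slide's Pr(broke) = 30% and Pr(broke | h.a.) = 50% when headache and broke depend only on party:

```python
from itertools import product

P_PARTY = 0.5
P_HA = {True: 0.10, False: 0.02}     # Pr(headache | party)
P_BROKE = {True: 0.60, False: 0.00}  # Pr(broke | party), assumed values


def joint(pa: bool, ha: bool, br: bool) -> float:
    """Pr(pa, ha, br) when party is the common cause of headache and broke."""
    p = P_PARTY if pa else 1 - P_PARTY
    p *= P_HA[pa] if ha else 1 - P_HA[pa]
    p *= P_BROKE[pa] if br else 1 - P_BROKE[pa]
    return p


def prob(event, given=lambda pa, ha, br: True) -> float:
    """Pr(event | given), summing the joint over all 8 states."""
    states = list(product([True, False], repeat=3))
    num = sum(joint(*s) for s in states if event(*s) and given(*s))
    den = sum(joint(*s) for s in states if given(*s))
    return num / den


def broke(pa: bool, ha: bool, br: bool) -> bool:
    return br


print(round(prob(broke), 3), round(prob(broke, lambda pa, ha, br: ha), 3))  # 0.3 0.5
# Among party attendants, headache no longer tells us anything about money
print(round(prob(broke, lambda pa, ha, br: pa), 3),
      round(prob(broke, lambda pa, ha, br: pa and ha), 3))  # 0.6 0.6
```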

Reasons for Dependence 3: Common effect
A and B are independent (and hence so are B and A): Pr(B) = 80%, Pr(B|A) = 80%.
Their combination stimulates C. For instances satisfying C: Pr(B) = 90%, Pr(B|A) = 93%, Pr(B|¬A) = 80%.
This dependency appears if the value of the common effect variable is known.
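
The common-effect pattern can be reproduced with a hypothetical model. Pr(B) = 80% is from the slide; Pr(A) = 50% and the table for C (likely only when A and B occur together) are our own illustrative choices. The resulting conditional numbers therefore differ from the slide's 90%/93%/80%, but they show the same pattern:

```python
from itertools import product

P_A, P_B = 0.5, 0.8  # Pr(A) is assumed; Pr(B) = 80% as on the slide
# Pr(C | A, B): the combination of A and B stimulates C (assumed values)
P_C = {(True, True): 0.9, (True, False): 0.1, (False, True): 0.1, (False, False): 0.1}


def joint(a: bool, b: bool, c: bool) -> float:
    """Pr(a, b, c) for the network A -> C <- B."""
    p = (P_A if a else 1 - P_A) * (P_B if b else 1 - P_B)
    return p * (P_C[(a, b)] if c else 1 - P_C[(a, b)])


def pr_b(condition) -> float:
    """Pr(B | condition), by summing the joint over all states."""
    states = [s for s in product([True, False], repeat=3) if condition(*s)]
    return sum(joint(*s) for s in states if s[1]) / sum(joint(*s) for s in states)


print(round(pr_b(lambda a, b, c: a), 3))            # 0.8: A and B independent
print(round(pr_b(lambda a, b, c: c), 3))            # Pr(B | C)
print(round(pr_b(lambda a, b, c: c and a), 3))      # Pr(B | C, A): higher
print(round(pr_b(lambda a, b, c: c and not a), 3))  # Pr(B | C, not A): 0.8
```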

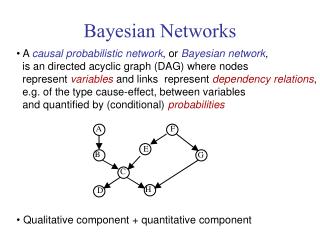

Part II: Bayesian Networks
Also known as:
• Probabilistic Graphical Model
• Probabilistic Network
• Bayesian Network
• Belief Network
Consists of:
• Variables (n)
• Domains (here binary)
• An acyclic arc set, modeling the statistical influences
• Per variable V (in-degree k): Pr(V | E) for each of the 2^k value combinations E of V's parents
The information in a node is exponential in its in-degree.
[Figure: example networks with nodes pa, ha, br and A, B, C]
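
To make "2^k cases of E" concrete: a node with k binary parents needs one number Pr(V = true | E) for every combination E of parent values. A sketch that enumerates these rows; the parent names are illustrative:

```python
from itertools import product


def cpt_rows(parents: list[str]) -> list[dict[str, bool]]:
    """All 2**k parent configurations E for which Pr(V | E) must be specified."""
    return [dict(zip(parents, values))
            for values in product([True, False], repeat=len(parents))]


for k, parents in enumerate([[], ["A"], ["A", "B"], ["A", "B", "X"]]):
    print(k, len(cpt_rows(parents)))  # 1, 2, 4, 8 rows
```

A root node (k = 0) still has one row: its a priori probability.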

The Bayesian Network Model
Closed World Assumption
• Rule based: IF x attends party THEN x has headache WITH cf = .10. What if x didn't attend?
• Bayesian model: Pr(ha|¬pa) is included as well: the claim is that all relevant information is modeled.
Direction of arcs and correlation
[Figure: two networks over pa and ha with opposite arc directions]
• A BN does not necessarily model causality.
• It is built upon the human expert's (HE) understanding of relationships, which is often causal.
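
That the arc direction is not forced by the probabilities can be checked numerically: the network pa → ha, with the numbers from the causality slide, and the reversed network ha → pa, with tables obtained by Bayes' rule, define exactly the same joint distribution. A minimal sketch:

```python
from itertools import product

# Network 1: pa -> ha
p_pa = 0.5
p_ha_given = {True: 0.10, False: 0.02}  # Pr(ha | pa)

# Network 2: ha -> pa, with tables derived by Bayes' rule
p_ha = p_pa * p_ha_given[True] + (1 - p_pa) * p_ha_given[False]
p_pa_given = {
    True: p_pa * p_ha_given[True] / p_ha,               # Pr(pa | ha)
    False: p_pa * (1 - p_ha_given[True]) / (1 - p_ha),  # Pr(pa | not ha)
}


def bern(p: float, value: bool) -> float:
    """Pr(X = value) for a binary X with Pr(X = true) = p."""
    return p if value else 1 - p


for pa, ha in product([True, False], repeat=2):
    forward = bern(p_pa, pa) * bern(p_ha_given[pa], ha)
    backward = bern(p_ha, ha) * bern(p_pa_given[ha], pa)
    assert abs(forward - backward) < 1e-12  # same distribution, opposite arc
```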

A little theorem
• A Bayesian network on n binary variables uniquely defines a probability distribution over the associated set of 2^n states.
• Such a distribution has 2^n parameters (numbers in [0..1] with sum 1).
• A typical network has in-degree 2 to 3: it is represented by 4n to 8n parameters (PIGLET!!).
• Bayesian networks are an efficient representation.
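
The counting argument in a few lines. The explicit table needs 2^n - 1 free numbers (the last one is fixed by the sum 1), while a network in which every node has at most k parents needs at most n x 2^k, one Pr(V = true | E) per parent configuration. The values of n are only examples:

```python
def full_joint_parameters(n: int) -> int:
    """Free parameters of an explicit distribution over 2**n states."""
    return 2 ** n - 1


def network_parameters(n: int, max_indegree: int) -> int:
    """Upper bound on CPT entries for n binary nodes with at most max_indegree parents."""
    return n * 2 ** max_indegree


for n in (10, 20, 40):
    print(n, full_joint_parameters(n), network_parameters(n, 2), network_parameters(n, 3))
```

For n = 40 the explicit table needs about 1.1 x 10^12 numbers, against at most 160 (in-degree 2) or 320 (in-degree 3) for the network.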

The Utrecht DSS group
• Initiated by Prof. Linda van der Gaag from ~1990
• Focus: development of BN support tools
• Uses experience from building several actual BNs
• Medical applications
• Oesoca, ~40 nodes
• Courses: Probabilistic Reasoning; Network Algorithms (Master ACS)

How to obtain a BN model
Describe human expert knowledge: Metastatic cancer (MC) may be detected by an increased level of serum calcium (SC). A brain tumor (BT) may be seen on a CT scan (CT). Severe headaches (SH) are indicative of the presence of a brain tumor. Both a brain tumor and an increased level of serum calcium may bring the patient into a coma (Co).
[Figure: network with nodes mc, bt, ct, sh, sc, co]
Probabilities: expert guess or statistical study.
Learn the BN structure automatically from data by means of data mining:
• Research of Carsten
• Models are not intuitive
• Not considered in XS
• Helpful addition to knowledge acquisition from the human expert
• Master ACS
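
The expert's sentences translate directly into arcs (cause → effect). A sketch that collects the arcs named in the text and checks with Kahn's algorithm that they form an acyclic graph. Only the arcs stated above are included; in particular the text does not say whether MC influences BT, so no such arc is assumed:

```python
from collections import deque

# (cause, effect) pairs read off the expert's statements
arcs = [
    ("MC", "SC"),  # metastatic cancer -> increased serum calcium
    ("BT", "CT"),  # brain tumor -> visible on CT scan
    ("BT", "SH"),  # brain tumor -> severe headaches
    ("BT", "Co"),  # brain tumor -> coma
    ("SC", "Co"),  # increased serum calcium -> coma
]
nodes = sorted({v for arc in arcs for v in arc})
parents = {v: [a for a, b in arcs if b == v] for v in nodes}


def topological_order(nodes, arcs):
    """Kahn's algorithm: an order of the nodes, or None if there is a directed cycle."""
    indegree = {v: 0 for v in nodes}
    for _, b in arcs:
        indegree[b] += 1
    queue = deque(v for v in nodes if indegree[v] == 0)
    order = []
    while queue:
        v = queue.popleft()
        order.append(v)
        for a, b in arcs:
            if a == v:
                indegree[b] -= 1
                if indegree[b] == 0:
                    queue.append(b)
    return order if len(order) == len(nodes) else None


print(topological_order(nodes, arcs))
print(parents["Co"])  # ['BT', 'SC']
```

The parent lists are exactly what the expert then has to quantify: Co, with two parents, needs four conditional probabilities.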

Inference in Bayesian Networks
• The probability of a state S = (v1, .., vn): multiply Pr(vi | values of the parents of Vi in S).
• The marginal (overall) probability of each variable.
• Sampling: produce a series of cases, distributed according to the probability distribution implicit in the BN.
Example network pa → ha, pa → br: Pr(pa) = 50%, Pr(ha) = 6%, Pr(br) = 20%.
Pr(pa, ¬ha, ¬br) = 0.50 * 0.90 * 0.60 = 0.27
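
A sketch of the three computations on the party network. Pr(pa) = 0.5, Pr(¬ha | pa) = 0.9 and Pr(¬br | pa) = 0.6 are the numbers in the example; Pr(ha | ¬pa) = 0.02 is taken from the causality slide, and Pr(br | ¬pa) = 0 is derived by us from the stated marginal Pr(br) = 20%. The seed and sample size are arbitrary:

```python
import random
from itertools import product

P_PA = 0.5
P_HA = {True: 0.10, False: 0.02}  # Pr(ha | pa)
P_BR = {True: 0.40, False: 0.00}  # Pr(br | pa); the 0.00 is derived, see above


def bern(p: float, value: bool) -> float:
    """Pr(X = value) for a binary X with Pr(X = true) = p."""
    return p if value else 1 - p


def state_probability(pa: bool, ha: bool, br: bool) -> float:
    """Multiply Pr(v_i | values of the parents of V_i) over all nodes."""
    return bern(P_PA, pa) * bern(P_HA[pa], ha) * bern(P_BR[pa], br)


STATES = list(product([True, False], repeat=3))

# Marginals by summing the state probabilities
marginal_ha = sum(state_probability(*s) for s in STATES if s[1])
marginal_br = sum(state_probability(*s) for s in STATES if s[2])


def sample(rng: random.Random) -> tuple[bool, bool, bool]:
    """Forward sampling: draw each node after its parents."""
    pa = rng.random() < P_PA
    ha = rng.random() < P_HA[pa]
    br = rng.random() < P_BR[pa]
    return pa, ha, br


rng = random.Random(8)
cases = [sample(rng) for _ in range(100_000)]
print(round(state_probability(True, False, False), 2))  # 0.27
print(round(marginal_ha, 2), round(marginal_br, 2))     # 0.06 0.2
print(sum(br for _, _, br in cases) / len(cases))       # close to 0.2
```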

Consultation: Entering Evidence
Consultation applies the BN knowledge to a specific case:
• Known variable values can be entered into the network.
• Probability tables for all nodes are updated.
• This yields (something like) a new BN modeling the conditional distribution.
• Again, show distributions and state probabilities.
• Backward and forward propagation.
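
Entering evidence can be sketched as inference by enumeration: keep the states that agree with the evidence and renormalize. On the party network (same tables as in the inference example, with Pr(br | ¬pa) = 0 derived from Pr(br) = 20%), observing a headache updates the parent pa (backward) and, through it, the other child br (forward). Real tools use far more efficient algorithms; this brute-force version is only for illustration:

```python
from itertools import product

P_PA = 0.5
P_HA = {True: 0.10, False: 0.02}  # Pr(ha | pa)
P_BR = {True: 0.40, False: 0.00}  # Pr(br | pa)
NAMES = ("pa", "ha", "br")


def bern(p: float, value: bool) -> float:
    return p if value else 1 - p


def joint(pa: bool, ha: bool, br: bool) -> float:
    return bern(P_PA, pa) * bern(P_HA[pa], ha) * bern(P_BR[pa], br)


def posterior(query: str, evidence: dict[str, bool]) -> float:
    """Pr(query = true | evidence) by enumerating all states consistent with the evidence."""
    all_states = [dict(zip(NAMES, s)) for s in product([True, False], repeat=3)]
    consistent = [s for s in all_states if all(s[k] == v for k, v in evidence.items())]
    total = sum(joint(**s) for s in consistent)
    return sum(joint(**s) for s in consistent if s[query]) / total


print(round(posterior("pa", {"ha": True}), 3))  # 0.833 (backward)
print(round(posterior("br", {"ha": True}), 3))  # 0.333 (forward, via pa)
```

Without evidence Pr(br) is 0.2; the headache raises it to one third, although ha and br are not directly connected.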

Test Selection (Danielle)
• In consultation, enter data until the goal variable is known with sufficient probability.
• Data items are obtained at a specific cost.
• Data items influence the distribution of the goal.
Problem:
• Given the current state of the consultation, find the best variable to test next.
Started CS study 1996, PhD thesis defense Oct 2005.
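
The slide does not say which selection criterion this work uses. As one common, simple heuristic, the sketch below picks the test with the largest expected reduction in entropy of the goal variable per unit cost. The goal (pa), the candidate tests (ha, br) and the costs are hypothetical, reusing the party network:

```python
from math import log2

P_PA = 0.5
LIKELIHOOD = {  # Pr(test = true | pa = True), Pr(test = true | pa = False)
    "ha": {True: 0.10, False: 0.02},
    "br": {True: 0.40, False: 0.00},
}
COST = {"ha": 1.0, "br": 1.0}  # hypothetical test costs


def entropy(p: float) -> float:
    """Entropy in bits of a binary variable with Pr(true) = p."""
    return -sum(q * log2(q) for q in (p, 1 - p) if q > 0)


def expected_entropy_after(test: str) -> float:
    """Expected entropy of pa after observing the outcome of `test`."""
    result = 0.0
    for outcome in (True, False):
        # Pr(test = outcome | pa) for pa = True / False
        lik = {pa: (p if outcome else 1 - p) for pa, p in LIKELIHOOD[test].items()}
        p_outcome = P_PA * lik[True] + (1 - P_PA) * lik[False]
        if p_outcome > 0:
            result += p_outcome * entropy(P_PA * lik[True] / p_outcome)
    return result


gain = {t: (entropy(P_PA) - expected_entropy_after(t)) / COST[t] for t in LIKELIHOOD}
best = max(gain, key=gain.get)
print({t: round(g, 3) for t, g in gain.items()}, best)
```

This greedy, one-step look-ahead is myopic: it ignores that a combination of tests may be worth more than each test alone.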

Complexity of Network Design (Johan)
• A Boolean formula can be coded into a BN.
• SAT problems can be reformulated as BN problems.
• Monotonicity, Kth MPE, inference
• Complete for PP^PP^NP
• Started PhD Oct 2005
• Graduated Oct 2009
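
The idea behind coding a Boolean formula into a BN can be sketched as follows: each propositional variable becomes a root node with prior 1/2, and the formula becomes one child node with a deterministic table (probability 1 exactly when the formula is true for its parents' values). Then Pr(formula = true) > 0 if and only if the formula is satisfiable, so exact inference is at least as hard as SAT. The example formulas are ours:

```python
from itertools import product
from typing import Callable


def pr_formula_true(formula: Callable[..., bool], n_vars: int) -> float:
    """Pr(F = true) in a BN with n uniform root nodes x1..xn and one
    deterministic child F; computed by brute-force enumeration (2**n states)."""
    total = 0.0
    for values in product([True, False], repeat=n_vars):
        prior = 0.5 ** n_vars                                  # independent uniform roots
        pr_f_given_parents = 1.0 if formula(*values) else 0.0  # deterministic CPT
        total += prior * pr_f_given_parents
    return total


def sat(x1: bool, x2: bool) -> bool:
    return (x1 or x2) and not x1             # satisfiable: x1 = False, x2 = True


def unsat(x1: bool, x2: bool) -> bool:
    return (x1 or x2) and not x1 and not x2  # unsatisfiable


print(pr_formula_true(sat, 2), pr_formula_true(unsat, 2))  # 0.25 0.0
```

Pr(F = true) even counts the satisfying assignments (divided by 2^n), which is why BN inference is related to counting classes such as PP.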

Some more work done in Linda’s DSS group
• Sensitivity analysis: numerical parameters in the BN may be inaccurate; how does this influence the consultation outcome?
• More efficient inferencing: inferencing is costly, especially in the presence of cycles in the underlying undirected graph (NB: there are no directed cycles!) and of nodes with a high in-degree. Approaches: approximate reasoning, network decompositions, …
• Writing a program tool: Dazzle
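
A one-way sensitivity analysis varies a single parameter and watches an output probability. As an illustrative sketch on the party network: how does the posterior Pr(pa | ha) react if the estimate Pr(ha | ¬pa) = 0.02 is inaccurate? The range of values tried is hypothetical:

```python
def posterior_party_given_headache(p_ha_no_party: float,
                                   p_party: float = 0.5,
                                   p_ha_party: float = 0.10) -> float:
    """Pr(pa | ha) as a function of the uncertain parameter Pr(ha | not pa)."""
    numerator = p_party * p_ha_party
    return numerator / (numerator + (1 - p_party) * p_ha_no_party)


for x in (0.01, 0.02, 0.03, 0.05):
    print(f"Pr(ha | not pa) = {x:.2f}  ->  Pr(pa | ha) = {posterior_party_given_headache(x):.3f}")
```

Here the posterior is a quotient of two linear functions of the parameter; an error of a factor 2.5 in Pr(ha | ¬pa) (0.02 versus 0.05) moves Pr(pa | ha) from about 0.83 to about 0.67.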

Part III: In the Courtroom
What happens in a trial?
• Prosecutor and defense collect information.
• The judge decides whether there is sufficient evidence that the person is guilty.
Forensic tests are far more conclusive than medical ones, but still probabilistic in nature! For example, Pr(symptom|sick) = 80% (medical) versus Pr(trace|innocent) = 0.01% (forensic).
It is tempting to forget statistics. A priori probabilities are needed.
Jenneke IJzerman, Bayesiaanse Statistiek in de Rechtspraak, VU Amsterdam, September 2004.

Prosecutor’s Fallacy
The story:
• A DNA sample was taken from the crime scene.
• The probability that samples of two different people match is 1 in 10,000.
• 20,000 inhabitants are sampled.
• John’s DNA matches the sample.
• Prosecutor: the chance that John is innocent is 1 in 10,000.
• The judge convicts John.
The analysis:
• The prosecutor confuses Pr(inn | evid) (a) with Pr(evid | inn) (b).
• Forensic experts can only shed light on (b).
• The judge must find (a); a priori probabilities are needed!! (Bayes)
• It is dangerous to convict on DNA samples alone: Pr(at least one innocent person matches) = 86%; Pr(exactly one such match) = 27%.
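
The two percentages can be reproduced under the assumption that the 20,000 sampled inhabitants are innocent and each matches independently with probability 1 in 10,000:

```python
n_sampled = 20_000
p_match = 1 / 10_000  # chance that an innocent person's DNA matches

# Probability that at least one innocent person in the sample matches
p_some_innocent_match = 1 - (1 - p_match) ** n_sampled
# Probability that exactly one innocent person matches (binomial)
p_exactly_one = n_sampled * p_match * (1 - p_match) ** (n_sampled - 1)

print(f"{p_some_innocent_match:.0%} {p_exactly_one:.0%}")  # 86% 27%
```

So "1 in 10,000" is Pr(evid | inn) for one person; with 20,000 people tested, a match with some innocent person is much more likely than not.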

Defender’s Fallacy
The story:
• The town has 100,001 people.
• We expect 11 to match (1 guilty plus 10 innocent).
• The probability that John is guilty is 9%.
• John must be released.
Implicit assumptions:
• The offender is from the town.
• Equal a priori probability for each inhabitant.
It is necessary to take other circumstances into account: why was John prosecuted, and what other evidence exists?
Conclusions:
• PF (Prosecutor’s Fallacy): it is necessary to take Bayes and a priori probabilities into account.
• DF (Defender’s Fallacy): estimating the a priori probabilities is crucial for the outcome.
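
The 9% follows from Bayes' rule under the two implicit assumptions on the slide (offender from the town, equal priors for all 100,001 inhabitants) and a match probability of 1 in 10,000 for an innocent person. Exact fractions keep the arithmetic transparent:

```python
from fractions import Fraction

population = 100_001
p_match_innocent = Fraction(1, 10_000)
prior_guilty = Fraction(1, population)  # equal a priori probability for everyone

# Pr(match) = Pr(G) * 1 + Pr(not G) * Pr(match | innocent)
p_match = prior_guilty * 1 + (1 - prior_guilty) * p_match_innocent
posterior_guilty = prior_guilty / p_match

print(posterior_guilty, f"{float(posterior_guilty):.0%}")  # 1/11 9%
```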

IJzerman’s ideas about the trial:
• The forensic expert may not claim a priori or a posteriori probabilities (Dutch Code of Criminal Procedure, art. 344-1.4).
• The judge must set the a priori probabilities.
• The judge must compute the a posteriori probabilities, based on the statements of experts.
• The judge must have an explicit probability threshold for "beyond reasonable doubt".
• The threshold should be made explicit in law.
Is this realistic?
• Avoiding confusion of Pr(G|E) and Pr(E|G) is a good idea.
• A priori probabilities are extremely important; they almost pre-determine the verdict. How is this done?
• A Bayesian network designed and controlled by the judge?
• No judge will obey a mathematical formula.
• Public agreement and acceptance?
Experts’ and Judge’s task: "reports of experts, containing their opinion concerning what their expertise teaches them about that which has been submitted to their judgment"
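
The claim that the a priori probabilities almost pre-determine the verdict can be illustrated with the odds form of Bayes' rule: posterior odds = prior odds x likelihood ratio. In this sketch the likelihood ratio 10,000 corresponds to a match probability of 1 in 10,000 for an innocent person; both the priors and the 99% threshold for "beyond reasonable doubt" are hypothetical:

```python
LIKELIHOOD_RATIO = 10_000  # Pr(match | guilty) / Pr(match | innocent) = 1 / (1/10,000)
THRESHOLD = 0.99           # hypothetical legal threshold for "beyond reasonable doubt"


def posterior_guilt(prior: float) -> float:
    """Posterior Pr(G | E) from prior Pr(G) via the odds form of Bayes' rule."""
    posterior_odds = prior / (1 - prior) * LIKELIHOOD_RATIO
    return posterior_odds / (1 + posterior_odds)


for prior in (1 / 100_001, 0.001, 0.1):  # hypothetical a priori probabilities
    p = posterior_guilt(prior)
    print(f"prior {prior:.6f}: Pr(G | E) = {p:.4f}, convict: {p >= THRESHOLD}")
```

The same forensic evidence leads to acquittal under the first two priors and to conviction under the third, which is exactly why the choice of priors must be made explicit.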

Bayesian Alcoholism Test
• Driving under the influence of alcohol leads to a penalty.
• An administrative procedure may void the licence.
• The judge must decide whether the subject is an alcohol addict: incidental or regular (harmful) drinking?
• Psychiatrists advise the court by determining whether the drinking was incidental or regular.
• Goal HHAU: Harmful and Hazardous Alcohol Use.
• Probabilistically confirmed or denied by clinical tests.
• Bayesian Alcoholism Test: developed 1999-2004 by A. Korzec, Amsterdam.

Variables in Bayesian Alcoholism Test
Hidden variables:
• HHAU: alcoholism
• Liver disease
Observable causes:
• Hepatitis risk
• Social factors
• BMI, diabetes
Observable effects:
• Skin color
• Lab: blood, breath
• Level of Response
• Smoking
• CAGE questionnaire

Knowledge Elicitation for BAT
Knowledge in the network:
• Qualitative: which variables are relevant; how they interrelate.
• Quantitative: a priori probabilities; conditional probabilities for hidden diseases; conditional probabilities for effects; response of lab tests to hidden diseases.
How it was obtained:
• Network structure: ?? (IJzerman does not report on this)
• Probabilities: literature studies (40% of probabilities); expert opinions (60% of probabilities).

Consultation with BAT
Enter evidence about the subject:
• Clinical signs: skin, smoking, LRA; CAGE
• Lab results
• Social factors
The network returns:
• The probability that the subject has HHAU
• Probabilities for liver disease and diabetes
The responsible human medical expert (HME) converts this probability to a YES/NO for the judge (interpretation phase). The HME may take other data into account (e.g. a rare disease).
Knowing what the CAGE is used for may influence the answers that the subject gives.

Part IV: Bayes in the Field
The Dazzle program
• Tool for designing and analysing BNs
• Mouse-click the network; fill in the probabilities
• Consult by submitting evidence
• Read posterior probabilities
• Development 2004-2006
• Written in Haskell
• Arjen van IJzendoorn, Martijn Schrage
• www.cs.uu.nl/dazzle

Importance of a good model
In 1998, Donna Anthony (31) was convicted of murdering her two children. She spent seven years in prison, while maintaining that her children had died of cot death.
Prosecutor: the probability of two cot deaths in one family is too small, unless the mother is guilty.

The Evidence against Donna Anthony
• A BN with priors eliminates the Prosecutor’s Fallacy.
• Enter the evidence: both children died.
• The a priori probability is very small (1 in 1,000,000).
• Dazzle establishes a 97.6% probability of guilt.
• Name of the expert: Prof. Sir Roy Meadow (born 1933).
• His testimony helped put a dozen mothers in prison within a decade.

A More Refined Model
Allow for genetic or social circumstances for which the parent is not liable.
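
Why such a circumstance matters can be sketched with a hypothetical two-child model: a genetic factor G (for which the parent is not liable) raises the risk of cot death for each child, and the two deaths are independent given G. All numbers below are invented for illustration and are not from the case:

```python
P_G = 0.001                           # hypothetical prevalence of the genetic factor
P_DEATH = {True: 0.2, False: 0.0005}  # hypothetical Pr(cot death | G)

# Both children die: sum over the hidden factor G
p_both = P_G * P_DEATH[True] ** 2 + (1 - P_G) * P_DEATH[False] ** 2
p_one = P_G * P_DEATH[True] + (1 - P_G) * P_DEATH[False]

# Naive model: treat the two deaths as independent events
p_both_naive = p_one ** 2

# Posterior probability of the genetic factor given two deaths
p_g_given_both = P_G * P_DEATH[True] ** 2 / p_both

print(f"{p_both:.2e} vs naive {p_both_naive:.2e}; Pr(G | two deaths) = {p_g_given_both:.3f}")
```

Under these invented numbers two deaths are almost a hundred times more likely than the naive product suggests, and the hidden factor explains nearly all such cases. A full model would still need the guilt hypothesis and realistic priors.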

The Evidence against Donna?
• Refined model: a genetic defect is the most likely cause of the repeated deaths.
• Donna Anthony was released in 2005 after 7 years in prison.
• 6/2005: Meadow struck from the GMC register.
• 7/2005: Appeal by Meadow.
• 2/2006: Appeal granted; otherwise experts would refuse to testify.

Classical Swine Fever, Petra Geenen
• Swine fever is a costly disease.
• Development 2004/5
• 42 variables, 80 arcs
• 2454 probabilities, but many are 0
• Pig/herd level
• Prior extremely small
• Probability elicitation with a questionnaire

Conclusions
• Mathematically sound model to reason with uncertainty.
• Applicable to areas where knowledge is highly statistical.
• Acquisition: instead of the classical IF a THEN b (WITH c), obtain both Pr(b|a) and Pr(b|¬a).
• More work, but a more powerful model.
• One formalism allows both diagnostic and prognostic reasoning.
• Danger: apparent exactness is deceiving.
• Disadvantages: lack of explanation facilities (a research topic); the model is quite transparent, but consultations are not; design questions have high complexity (beyond NP-complete).
• Increasing popularity, despite the difficulty of building networks.