Understanding Bayesian Networks and Conditional Independence

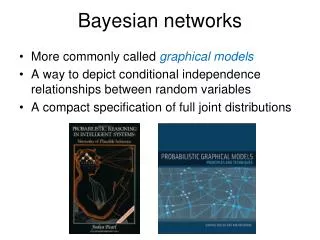

Explore the significance of conditional independence in Bayesian networks through examples like grades, intelligence, and SAT scores. Learn how to represent and interpret causal relationships using directed acyclic graphs.

Presentation Transcript

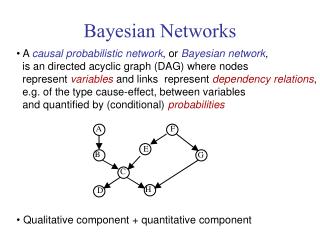

Significance of Conditional independence • Consider Grade(CS101), Intelligence, and SAT • Ostensibly, the grade in a course doesn’t have a direct relationship with SAT scores • but good students are more likely to get good SAT scores, so they are not independent… • It is reasonable to believe that Grade(CS101) and SAT are conditionally independent given Intelligence

Bayesian Network • Explicitly represents independence among propositions • Notice that Intelligence is the “cause” of both Grade and SAT, and the causality is represented explicitly • P(I,G,S) = P(G,S|I) P(I) = P(G|I) P(S|I) P(I) [Figure: Intelligence → Grade and Intelligence → SAT, annotated with P(I), P(G|I), and P(S|I)]
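The factorization P(I,G,S) = P(G|I) P(S|I) P(I) can be checked numerically. The sketch below assumes boolean variables and made-up CPT values (none of these numbers come from the slides); it suggests that Grade and SAT are dependent marginally but independent once Intelligence is known.

```python
from itertools import product

# Hypothetical CPTs for illustration only (these numbers are not from the slides).
P_I = 0.3                               # P(Intelligence = true)
P_G_GIVEN_I = {True: 0.8, False: 0.4}   # P(Grade = good | Intelligence)
P_S_GIVEN_I = {True: 0.9, False: 0.2}   # P(SAT = high | Intelligence)

def bern(p_true, value):
    """Probability that a boolean variable takes `value` when P(true) = p_true."""
    return p_true if value else 1.0 - p_true

def joint(i, g, s):
    """P(I, G, S) = P(G | I) P(S | I) P(I), the factorization on the slide."""
    return bern(P_G_GIVEN_I[i], g) * bern(P_S_GIVEN_I[i], s) * bern(P_I, i)

def prob(event):
    """Sum the joint over all assignments for which `event` holds."""
    return sum(joint(i, g, s)
               for i, g, s in product([True, False], repeat=3)
               if event(i, g, s))

# Marginal probabilities: P(G, S) differs from P(G) P(S).
p_g = prob(lambda i, g, s: g)
p_s = prob(lambda i, g, s: s)
p_gs = prob(lambda i, g, s: g and s)

# Conditioned on Intelligence = true: P(G, S | I) equals P(G | I) P(S | I).
p_i = prob(lambda i, g, s: i)
p_g_i = prob(lambda i, g, s: i and g) / p_i
p_s_i = prob(lambda i, g, s: i and s) / p_i
p_gs_i = prob(lambda i, g, s: i and g and s) / p_i

print(p_gs, p_g * p_s)
print(p_gs_i, p_g_i * p_s_i)
```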

A More Complex BN • Directed acyclic graph • Intuitive meaning of an arc from x to y: “x has direct influence on y” [Figure: Burglary and Earthquake (causes) → Alarm → JohnCalls and MaryCalls (effects)]

A More Complex BN • Size of the CPT for a node with k parents: 2^k • 10 probabilities (1 + 1 + 4 + 2 + 2), instead of 2^5 − 1 = 31 for the full joint distribution [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls]
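The count of 10 versus 31 can be reproduced from the graph structure alone. This is a minimal sketch assuming boolean variables and the parent sets implied by the slides; the dictionary name `PARENTS` is illustrative.

```python
# Parent sets of the burglary network, as drawn on the slides.
PARENTS = {
    "Burglary": [],
    "Earthquake": [],
    "Alarm": ["Burglary", "Earthquake"],
    "JohnCalls": ["Alarm"],
    "MaryCalls": ["Alarm"],
}

# A boolean node with k parents needs 2^k probabilities (one per parent assignment).
cpt_sizes = {node: 2 ** len(parents) for node, parents in PARENTS.items()}
bn_params = sum(cpt_sizes.values())

# The full joint table over n boolean variables has 2^n entries, one of which
# is determined by the others because the entries sum to 1.
joint_params = 2 ** len(PARENTS) - 1

print(cpt_sizes)
print(bn_params, joint_params)
```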

Significance of Bayesian Networks • If we know that some variables are conditionally independent, we should be able to decompose joint distribution to take advantage of it • Bayesian networks are a way of efficiently factoring the joint distribution into conditional probabilities • And also building complex joint distributions from smaller models of probabilistic relationships • But… • What knowledge does the BN encode about the distribution? • How do we use a BN to compute probabilities of variables that we are interested in?

What does the BN encode? [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Each of the beliefs JohnCalls and MaryCalls is independent of Burglary and Earthquake given Alarm or ¬Alarm • P(B∧J) ≠ P(B) P(J), but P(B∧J|A) = P(B|A) P(J|A) • For example, John does not observe any burglaries directly

What does the BN encode? [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • The beliefs JohnCalls and MaryCalls are independent given Alarm or ¬Alarm • P(B∧J|A) = P(B|A) P(J|A) • P(J∧M|A) = P(J|A) P(M|A) • For instance, the reasons why John and Mary may not call if there is an alarm are unrelated • A node is independent of its non-descendants given its parents

What does the BN encode? [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Burglary and Earthquake are independent • The beliefs JohnCalls and MaryCalls are independent given Alarm or ¬Alarm • For instance, the reasons why John and Mary may not call if there is an alarm are unrelated • A node is independent of its non-descendants given its parents

Locally Structured World • A world is locally structured (or sparse) if each of its components interacts directly with relatively few other components • In a sparse world, the CPTs are small and the BN contains far fewer probabilities than the full joint distribution • If the # of entries in each CPT is bounded by a constant, i.e., O(1), then the # of probabilities in a BN is linear in n (the # of propositions) instead of 2^n for the joint distribution
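To get a feel for the gap between linear and exponential growth, the sketch below compares the two counts. The values n = 30 and k = 5 are hypothetical, and `bn_size` is an upper bound that assumes boolean variables with at most k parents each.

```python
def bn_size(n, k):
    """Upper bound on the number of CPT entries: n nodes, each with at most k parents."""
    return n * 2 ** k

def joint_size(n):
    """Number of entries in the full joint table over n boolean variables."""
    return 2 ** n

n, k = 30, 5  # hypothetical network size and parent bound
print(bn_size(n, k))   # grows linearly in n for fixed k
print(joint_size(n))   # grows exponentially in n
```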

But does a BN represent a belief state? In other words, can we compute the full joint distribution of the propositions from it?

Calculation of Joint Probability [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • P(J∧M∧A∧¬B∧¬E) = ??

[Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • P(J∧M∧A∧¬B∧¬E) = P(J∧M|A,¬B,¬E) P(A∧¬B∧¬E) = P(J|A,¬B,¬E) P(M|A,¬B,¬E) P(A∧¬B∧¬E) (J and M are independent given A) • P(J|A,¬B,¬E) = P(J|A) (J and B, and J and E, are independent given A) • P(M|A,¬B,¬E) = P(M|A) • P(A∧¬B∧¬E) = P(A|¬B,¬E) P(¬B|¬E) P(¬E) = P(A|¬B,¬E) P(¬B) P(¬E) (B and E are independent) • P(J∧M∧A∧¬B∧¬E) = P(J|A) P(M|A) P(A|¬B,¬E) P(¬B) P(¬E)

Calculation of Joint Probability [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls, next to the full joint distribution table] • P(J∧M∧A∧¬B∧¬E) = P(J|A) P(M|A) P(A|¬B,¬E) P(¬B) P(¬E) = 0.9 × 0.7 × 0.001 × 0.999 × 0.998 ≈ 0.000628 • In general, P(x1∧x2∧…∧xn) = Πi=1,…,n P(xi | parents(Xi)) • Since a BN defines the full joint distribution of a set of propositions, it represents a belief state
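The product formula can be written as a small function over the network's CPTs. The sketch below uses the CPT values that appear in the slides' calculations; the value P(J|¬A) = 0.05 does not appear in this transcript and is an assumption taken from the usual textbook version of this example.

```python
from itertools import product

P_B = 0.001                       # P(Burglary)
P_E = 0.002                       # P(Earthquake)
P_A = {(True, True): 0.95,        # P(Alarm | Burglary, Earthquake)
       (True, False): 0.94,
       (False, True): 0.29,
       (False, False): 0.001}
P_J = {True: 0.90, False: 0.05}   # P(JohnCalls | Alarm); 0.05 is an assumed value
P_M = {True: 0.70, False: 0.01}   # P(MaryCalls | Alarm)

def bern(p_true, value):
    """Probability that a boolean variable takes `value` when P(true) = p_true."""
    return p_true if value else 1.0 - p_true

def joint(j, m, a, b, e):
    """Product of each node's probability given its parents."""
    return (bern(P_J[a], j) * bern(P_M[a], m)
            * bern(P_A[(b, e)], a) * bern(P_B, b) * bern(P_E, e))

# P(J and M and A and not B and not E)
p = joint(True, True, True, False, False)

# Sanity check: the 32 joint entries should sum to 1.
total = sum(joint(*values) for values in product([True, False], repeat=5))

print(p, total)
```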

What does the BN encode? [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Burglary ⊥ Earthquake • JohnCalls ⊥ MaryCalls | Alarm • JohnCalls ⊥ Burglary | Alarm • JohnCalls ⊥ Earthquake | Alarm • MaryCalls ⊥ Burglary | Alarm • MaryCalls ⊥ Earthquake | Alarm • A node is independent of its non-descendants, given its parents

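Reading off independence relationships [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • How about Burglary ⊥ Earthquake | Alarm? • No! Why? • P(B∧E|A) = P(A|B,E) P(B∧E) / P(A) = 0.00075 • P(B|A) P(E|A) = 0.086 • The two values differ, so Burglary and Earthquake are not independent given Alarm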
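Both numbers can be reproduced by enumerating Burglary and Earthquake with Alarm fixed to true. This is a sketch using the CPT values from the slides; small differences from the slide's figures come from rounding P(A) to 0.00252.

```python
from itertools import product

P_B = 0.001
P_E = 0.002
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}

def bern(p_true, value):
    """Probability that a boolean variable takes `value` when P(true) = p_true."""
    return p_true if value else 1.0 - p_true

# P(A = true, b, e) for each assignment of Burglary and Earthquake.
joint_a = {(b, e): P_A[(b, e)] * bern(P_B, b) * bern(P_E, e)
           for b, e in product([True, False], repeat=2)}
p_a = sum(joint_a.values())

p_be_given_a = joint_a[(True, True)] / p_a
p_b_given_a = (joint_a[(True, True)] + joint_a[(True, False)]) / p_a
p_e_given_a = (joint_a[(True, True)] + joint_a[(False, True)]) / p_a
product_of_marginals = p_b_given_a * p_e_given_a

# If B and E were independent given A, these two values would be equal.
print(p_be_given_a, product_of_marginals)
```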

Reading off independence relationships [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • How about Burglary ⊥ Earthquake | JohnCalls? • No! Why? • Knowing JohnCalls affects the probability of Alarm, which makes Burglary and Earthquake dependent

Independence relationships • Rough intuition (this holds for tree-like graphs, polytrees): • Evidence on the (directed) road between two variables makes them independent • Evidence on an “A” node makes descendants independent • Evidence on a “V” node, or below the V, makes the ancestors of the variables dependent (otherwise they are independent) • Formal property in the general case: d-separation implies independence (see R&N)

Benefits of Sparse Models • Modeling • Fewer relationships need to be encoded (either through understanding or statistics) • Large networks can be built up from smaller ones • Intuition • Dependencies/independencies between variables can be inferred through network structures • Tractable probabilistic inference

Probabilistic Inference • Is the following problem: • Given: • A belief state P(X1,…,Xn) in some form (e.g., a Bayes net or a joint probability table) • A query variable indexed by q • Some subset of evidence variables indexed by e1,…,ek • Find: • P(Xq | Xe1, …, Xek)

Probabilistic Inference without Evidence • For the moment we’ll assume no evidence variables • Find P(Xq)

Probabilistic Inference without Evidence • In a joint probability table, we can find P(Xq) through marginalization • Computational complexity: 2^(n−1) terms to sum (assuming boolean random variables) • In Bayesian networks, we find P(Xq) using top-down inference
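Marginalizing a joint table means summing every entry in which the query variable is true. The sketch below builds a small hypothetical joint table over n = 4 boolean variables (the weights are arbitrary, chosen only so that the table is not uniform) and counts how many terms the sum touches.

```python
from itertools import product

n = 4   # number of boolean variables (hypothetical)
q = 2   # index of the query variable

# Hypothetical joint table: weight 1 + (number of true variables), then normalize.
assignments = list(product([False, True], repeat=n))
weights = {x: 1 + sum(x) for x in assignments}
z = sum(weights.values())
joint = {x: w / z for x, w in weights.items()}

# P(X_q = true) sums over all assignments of the other n - 1 variables.
terms = [joint[x] for x in assignments if x[q]]
p_q = sum(terms)

print(len(terms), p_q)
```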

Top-Down inference [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Suppose we want to compute P(Alarm) • P(A) = Σb,e P(A,b,e) • P(A) = Σb,e P(A|b,e) P(b) P(e) • P(A) = P(A|B,E) P(B) P(E) + P(A|B,¬E) P(B) P(¬E) + P(A|¬B,E) P(¬B) P(E) + P(A|¬B,¬E) P(¬B) P(¬E) • P(A) = 0.95*0.001*0.002 + 0.94*0.001*0.998 + 0.29*0.999*0.002 + 0.001*0.999*0.998 = 0.00252
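The sum over b and e can be sketched directly in code. The CPT values are those used in the slide's arithmetic; the helper `bern` is an illustrative name.

```python
from itertools import product

P_B = 0.001
P_E = 0.002
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}

def bern(p_true, value):
    """Probability that a boolean variable takes `value` when P(true) = p_true."""
    return p_true if value else 1.0 - p_true

# P(A) = sum over b, e of P(A | b, e) P(b) P(e)
p_alarm = sum(P_A[(b, e)] * bern(P_B, b) * bern(P_E, e)
              for b, e in product([True, False], repeat=2))

print(round(p_alarm, 5))
```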

Top-Down inference [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Now, suppose we want to compute P(MaryCalls) • P(M) = P(M|A) P(A) + P(M|¬A) P(¬A) • P(M) = 0.70*0.00252 + 0.01*(1 − 0.00252) = 0.0117
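Once P(A) is known, P(M) follows from one more application of the same rule, using P(¬A) = 1 − P(A) = 1 − 0.00252. A minimal sketch, with `total_probability` as an illustrative helper name:

```python
def total_probability(p_child_if_true, p_child_if_false, p_parent):
    """P(child) = P(child | parent) P(parent) + P(child | not parent) P(not parent)."""
    return p_child_if_true * p_parent + p_child_if_false * (1.0 - p_parent)

p_alarm = 0.00252  # P(A), the rounded value computed top-down from B and E
p_mary = total_probability(0.70, 0.01, p_alarm)

print(round(p_mary, 4))
```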

Top-Down inference with Evidence [Figure: Burglary and Earthquake → Alarm → JohnCalls and MaryCalls] • Suppose we want to compute P(Alarm|Earthquake), written P(A|e) • P(A|e) = Σb P(A,b|e) • P(A|e) = Σb P(A|b,e) P(b) • P(A|e) = 0.95*0.001 + 0.29*0.999 = 0.29066
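With Earthquake observed to be true, only Burglary remains to be summed out. The sketch below uses the two CPT rows that apply when e is true.

```python
P_B = 0.001
# P(Alarm | Burglary = b, Earthquake = true), from the slide's CPT values
P_A_GIVEN_B_AND_E = {True: 0.95, False: 0.29}

# P(A | e) = sum over b of P(A | b, e) P(b); B is independent of E, so P(b | e) = P(b).
p_alarm_given_e = (P_A_GIVEN_B_AND_E[True] * P_B
                   + P_A_GIVEN_B_AND_E[False] * (1.0 - P_B))

print(round(p_alarm_given_e, 5))
```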

Top-Down inference • Only works if the graph of ancestors of a variable is a polytree • Evidence given on ancestor(s) of the query variable • Efficient: • O(d 2^k) time, where d is the number of ancestors of a variable, with k a bound on # of parents • Evidence on an ancestor cuts off influence of portion of graph above evidence node

Querying the BN [Figure: Cavity → Toothache] • The BN gives P(T|C) • What about P(C|T)?

Bayes’ Rule • P(A∧B) = P(A|B) P(B) = P(B|A) P(A) • So… P(A|B) = P(B|A) P(A) / P(B)

Applying Bayes’ Rule • Let A be a cause, B be an effect, and let’s say we know P(B|A) and P(A) (conditional probability tables) • What’s P(B)? • P(B) = Σa P(B,A=a) [marginalization] • P(B,A=a) = P(B|A=a) P(A=a) [conditional probability] • So, P(B) = Σa P(B|A=a) P(A=a)

Applying Bayes’ Rule • Let A be a cause, B be an effect, and let’s say we know P(B|A) and P(A) (conditional probability tables) • What’s P(A|B)? • P(A|B) = P(B|A) P(A) / P(B) [Bayes’ rule] • P(B) = Σa P(B|A=a) P(A=a) [Last slide] • So, P(A|B) = P(B|A) P(A) / [Σa P(B|A=a) P(A=a)]

How do we read this? • P(A|B) = P(B|A) P(A) / [Σa P(B|A=a) P(A=a)] • [An equation that holds for all values A can take on, and all values B can take on] • P(A=a|B=b) = P(B=b|A=a) P(A=a) / [Σa P(B=b|A=a) P(A=a)] • Are these the same a? NO!

How do we read this? • P(A|B) = P(B|A) P(A) / [Σa P(B|A=a) P(A=a)] • [An equation that holds for all values A can take on, and all values B can take on] • P(A=a|B=b) = P(B=b|A=a) P(A=a) / [Σa’ P(B=b|A=a’) P(A=a’)] • Be careful about indices!
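The index issue is easy to see in code, where the summation variable in the denominator must be a different name from the value being queried. The sketch below assumes a three-valued cause A and one fixed observed value b; all numbers are hypothetical.

```python
# Hypothetical tables for a cause A with three values and one observed effect value b.
prior = {"a1": 0.5, "a2": 0.3, "a3": 0.2}        # P(A = a)
likelihood = {"a1": 0.1, "a2": 0.6, "a3": 0.9}   # P(B = b | A = a)

def posterior(a):
    """P(A = a | B = b) by Bayes' rule."""
    # The denominator sums over every value a_prime of A, not only the queried a.
    evidence = sum(likelihood[a_prime] * prior[a_prime] for a_prime in prior)
    return likelihood[a] * prior[a] / evidence

post = {a: posterior(a) for a in prior}
print(post)
```

Because the denominator is the same for every queried value, the posteriors sum to 1.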

Querying the BN [Figure: Cavity → Toothache] • The BN gives P(T|C) • What about P(C|T)? • P(Cavity|Toothache) = P(Toothache|Cavity) P(Cavity) / P(Toothache) [Bayes’ rule] • Denominator computed by summing out the numerator over Cavity and ¬Cavity • Querying a BN is just applying Bayes’ rule on a larger scale…

Naïve Bayes Models • P(Cause,Effect1,…,Effectn) = P(Cause) Πi P(Effecti | Cause) [Figure: Cause → Effect1, Effect2, …, Effectn]

Naïve Bayes Classifier • P(Class,Feature1,…,Featuren) = P(Class) Πi P(Featurei | Class) • Given features, what class? • P(C|F1,…,Fk) = P(C,F1,…,Fk) / P(F1,…,Fk) = 1/Z P(C) Πi P(Fi|C) [Figure: Class → Feature1, Feature2, …, Featuren; example classes: Spam / Not Spam, English / French / Latin …; example features: word occurrences]
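A naïve Bayes classifier computes P(C) Πi P(Fi|C) for each class and divides by their sum Z. The sketch below assumes a two-class spam example with boolean word-occurrence features; the class names, words, and probabilities are all hypothetical.

```python
# Hypothetical model: P(Class) and P(word occurs | Class).
PRIOR = {"spam": 0.4, "not_spam": 0.6}
P_WORD = {
    "spam": {"offer": 0.7, "meeting": 0.1},
    "not_spam": {"offer": 0.1, "meeting": 0.5},
}

def posterior(features):
    """Return P(Class | features) for a dict mapping each word to True/False."""
    unnormalized = {}
    for cls, p_cls in PRIOR.items():
        score = p_cls
        for word, present in features.items():
            p = P_WORD[cls][word]
            score *= p if present else 1.0 - p
        unnormalized[cls] = score
    z = sum(unnormalized.values())   # Z = P(F1, ..., Fk)
    return {cls: score / z for cls, score in unnormalized.items()}

post = posterior({"offer": True, "meeting": False})
print(post)
```

In practice the product is usually accumulated as a sum of logarithms to avoid underflow when there are many features; the direct product is kept here to mirror the formula on the slide.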