Bayesian networks

Bayesian networks. Chapter 14 . Outline. Syntax Semantics. Bayesian networks. A simple, graphical notation for conditional independence assertions and hence for compact specification of full joint distributions Syntax: a set of nodes, one per variable

Bayesian networks

E N D

Presentation Transcript

Bayesian networks Chapter 14

Outline • Syntax • Semantics

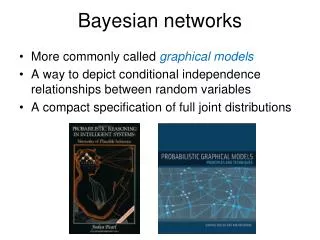

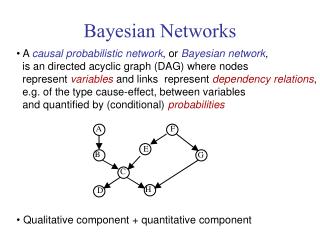

Bayesian networks • A simple, graphical notation for conditional independence assertions and hence for compact specification of full joint distributions • Syntax: • a set of nodes, one per variable • a directed, acyclic graph (link ≈ "directly influences") • a conditional distribution for each node given its parents: P (Xi | Parents (Xi)) • In the simplest case, conditional distribution represented as a conditional probability table (CPT) giving the distribution over Xi for each combination of parent values

Example • Topology of network encodes conditional independence assertions: • Weather is independent of the other variables • Toothache and Catch are conditionally independent given Cavity

Example • I'm at work, neighbor John calls to say my alarm is ringing, but neighbor Mary doesn't call. Sometimes it's set off by minor earthquakes. Is there a burglar? • Variables: Burglary, Earthquake, Alarm, JohnCalls, MaryCalls • Network topology reflects "causal" knowledge: • A burglar can set the alarm off • An earthquake can set the alarm off • The alarm can cause Mary to call • The alarm can cause John to call

Compactness • A CPT for Boolean Xi with k Boolean parents has 2k rows for the combinations of parent values • Each row requires one number p for Xi = true(the number for Xi = false is just 1-p) • If each variable has no more than k parents, the complete network requires O(n · 2k) numbers • I.e., grows linearly with n, vs. O(2n)for the full joint distribution • For burglary net, 1 + 1 + 4 + 2 + 2 = 10 numbers (vs. 25-1 = 31)

Semantics The full joint distribution is defined as the product of the local conditional distributions: P (X1, … ,Xn) = πi = 1P (Xi | Parents(Xi)) e.g., P(j m a b e) = P (j | a) P (m | a) P (a | b, e) P (b) P (e) n

Constructing Bayesian networks • 1. Choose an ordering of variables X1, … ,Xn • 2. For i = 1 to n • add Xi to the network • select parents from X1, … ,Xi-1 such that P (Xi | Parents(Xi)) = P (Xi | X1, ... Xi-1) This choice of parents guarantees: P (X1, … ,Xn) = πi =1P (Xi | X1, … , Xi-1) (chain rule) = πi =1P (Xi | Parents(Xi)) (by construction) n n

Example • Suppose we choose the ordering M, J, A, B, E P(J | M) = P(J)?

Example • Suppose we choose the ordering M, J, A, B, E P(J | M) = P(J) No P(A | J, M) = P(A | J)?P(A | J, M) = P(A)?

Example • Suppose we choose the ordering M, J, A, B, E P(J | M) = P(J) No P(A | J, M) = P(A | J)?P(A | J, M) = P(A)? No P(B | A, J, M) = P(B | A)? P(B | A, J, M) = P(B)?

Example • Suppose we choose the ordering M, J, A, B, E P(J | M) = P(J) No P(A | J, M) = P(A | J)?P(A | J, M) = P(A)? No P(B | A, J, M) = P(B | A)? Yes P(B | A, J, M) = P(B)? No P(E | B, A ,J, M) = P(E | A)? P(E | B, A, J, M) = P(E | A, B)?

Example • Suppose we choose the ordering M, J, A, B, E P(J | M) = P(J) No P(A | J, M) = P(A | J)?P(A | J, M) = P(A)? No P(B | A, J, M) = P(B | A)? Yes P(B | A, J, M) = P(B)? No P(E | B, A ,J, M) = P(E | A)? No P(E | B, A, J, M) = P(E | A, B)? Yes

Example contd. • Deciding conditional independence is hard in noncausal directions • (Causal models and conditional independence seem hardwired for humans!) • Network is less compact: 1 + 2 + 4 + 2 + 4 = 13 numbers needed

Inference • Complexity of inference Bayes Net • Bayes Net reduces space complexity • Bayes Net does not reduce time complexity for general case

Conditional independence and D-separation • Two sets of nodes, X and Y, are conditionally independent given an evidence set of nodes, E if every undirected path from a node in X to a node in Y is d-seperated by E. • A set of nodes, E d-separates to sets of nodes, X and Y, if every undirected path from a node in X to a node in Y is blocked by E • A path is blocked given E if there is a node Z on the path for which one of the following holds:

Inference • Inference by enumeration P(B | j, m) = P(B, j, m) / P(j, m) = P(B, j, m) = a eP(B, e, a, j, m) = P(B)e P(e)aP(a | B,e) P(j | a) P(m | a)

Time complexity • Dynamic programming • Works well for polytrees (where there is at most one path between any two nodes) • Doesn’t work for multiply connected networks

Inference in multiply connected networks • Clustering • Cutset conditioning • Stochastic methods • Monte Carlo • likelihood weighting • Markov Chain Monte Carlo • Will be skipped in this course

Clustering • Merge variables, replace their CPTs by their combined CPT

Cutset conditioning • cutting off cycles to obtain polytree. • Sum over all instantiations of cutset variables.

Cutset conditioning - example P(W|S) =RCP(W|S,R,C) P(R,C|S) = RCP(W|S,R) P(R,C|S) P(R,C|S) = P(R,S|C) P(C) / P(S) = P(R|C) P(S|C) P(C) / P(S) (Notice here that node C d-separates R and S) P(C = +) + P(C = -)

Stochastic Inference • It’s expensive to work with the full joint distribution… whether as a table or as a Bayes Network • Is approximation good enough? • Monte Carlo

Monte Carlo • Use samples to approximate solution • Simulated annealing used Monte Carlo theories to justify why random guesses and sometimes going uphill can lead to optimality • More samples = better approximation • How many are needed? • Where should you take the samples?

Prior sampling • An ability to model the prior probabilities of a set of random variables

Approximating true distribution • With enough samples, perfect modeling is possible

Rejection sampling • Compute P(X | e) • Use PriorSample (SPS) and create N samples • Denote Ne number of samples consistent with e (namely E = e) • Denote Nex number of samples consistent with E = e) AND X = x • P(X | e) can be computed from Nex / Ne

Example • P(Rain | Sprinkler = true) • Use Bayes Net to generate 100 samples • Suppose 73 have Sprinkler=false • Suppose 27 have Sprinkler=true • 8 have Rain=true • 19 have Rain=false • P(Rain | Sprinkler=true) = <8/27; 19/27>

Problems with rejection sampling • Standard deviation of the error in probability is proportional to 1/sqrt(n), where n is the number of samples consistent with evidence • As problems become complex, number of samples consistent with evidence becomes small and it becomes harder to construct accurate estimates

Likelihood weighting • We only want to generate samples that are consistent with the evidence, e • We’ll sample the Bayes Net, but we won’t let every random variable be sampled, some will be forced to produce a specific output

Example • P (Rain | Sprinkler=true, WetGrass=true)

Example • P (Rain | Sprinkler=true, WetGrass=true) • First, weight vector, w, set to 1.0

Example • Notice that weight is reduced according to how likely an evidence variable’s output is given its parents • So final probability is a function of what comes from sampling the free variables while constraining the evidence variables

Comparing techniques • In likelihood weighting, attention is paid to evidence variables before samples are collected • In rejection sampling, evidence variables are considered after the sampling • Likelihood weighting isn’t as accurate as the true posterior distribution, P(z | e) because the sampled variables ignore evidence among z’s non-ancestors

Likelihood weighting • Uses all the samples • As evidence variables increase, it becomes harder to keep the weighting constant high and estimate quality drops

Gibbs sampling • Start with an arbitrary state of the network, with Evidence variables set to their observed values • Randomly sample a value for a non evidence variable, conditioned on its: parents, children and childrens parents (Markov blanket) • Each state sampled contributes equally to the estimate of the query

Some Applications of BN • Medical diagnosis, e.g., lymph-node diseases • Troubleshooting of hardware/software systems • Fraud/uncollectible debt detection • Data mining • Analysis of genetic sequences • Data interpretation, computer vision, image understanding