CRANDEM: Conditional Random Fields for ASR


This presentation explores the application of Conditional Random Fields (CRFs) to Automatic Speech Recognition (ASR) by integrating them with Tandem Hidden Markov Models (HMMs). It covers the foundations of CRFs, their strength as sequence labelers, and the problem of combining CRF outputs with standard HMM systems. It details how per-frame posterior probability vectors are generated with the Forward-Backward algorithm, and evaluates the approach on phone recognition (TIMIT) and word recognition (WSJ, with initial models trained on TIMIT), reporting significant improvements for some model configurations.



Presentation Transcript

CRANDEM: Conditional Random Fields for ASR Jeremy Morris 11/21/2008

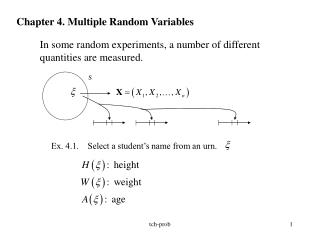

Outline • Background – Tandem HMMs & CRFs • Crandem HMM • Phone recognition • Word recognition

Background • Conditional Random Fields (CRFs) • Discriminative probabilistic sequence model • Directly defines a posterior probability of a label sequence given a set of observations
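For reference, the usual linear-chain CRF form of this posterior is written out below. The slides do not specify the feature set, so the state feature functions s_i, the transition feature functions f_j, and their weights lambda_i and mu_j are generic placeholders rather than the exact features used in this work.

```latex
\begin{align*}
P(\mathbf{y} \mid \mathbf{x}) &= \frac{1}{Z(\mathbf{x})}
  \exp\Big( \sum_{t=1}^{T} \sum_i \lambda_i\, s_i(y_t, \mathbf{x}, t)
  + \sum_{t=2}^{T} \sum_j \mu_j\, f_j(y_{t-1}, y_t, \mathbf{x}, t) \Big) \\
Z(\mathbf{x}) &= \sum_{\mathbf{y}'} \exp\Big( \sum_{t=1}^{T} \sum_i \lambda_i\, s_i(y'_t, \mathbf{x}, t)
  + \sum_{t=2}^{T} \sum_j \mu_j\, f_j(y'_{t-1}, y'_t, \mathbf{x}, t) \Big)
\end{align*}
```

The normalizer Z(x) sums over every possible label sequence, which is why the model assigns a probability to a whole sequence rather than to individual frames.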

Background • Problem: How do we make use of CRF classification for word recognition? • Attempt to use CRFs directly? • Attempt to fit CRFs into current state-of-the-art models for speech recognition? • Here we focus on the latter approach • How can we integrate what we learn from the CRF into a standard HMM-based ASR system?

Background • Tandem HMM • Generative probabilistic sequence model • Uses outputs of a discriminative model (e.g. ANN MLPs) as input feature vectors for a standard HMM

Background • Tandem HMM • ANN MLP classifiers are trained on labeled speech data • Classifiers can be phone classifiers or phonological feature classifiers • Classifiers output posterior probabilities for each frame of data • E.g. P(Q|X), where Q is the phone class label and X is the input speech feature vector

Background • Tandem HMM • Posterior feature vectors are used by an HMM as inputs • In practice, posteriors are not used directly • Log posterior outputs or “linear” outputs are more frequently used • “linear” here means outputs of the MLP with no application of the softmax function to transform them into probabilities • Since HMMs model phones as Gaussian mixtures, the goal is to make these outputs look more “Gaussian” • Additionally, Principal Component Analysis (PCA) is applied to decorrelate the features so that diagonal covariance matrices can be used
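A minimal sketch of the Tandem feature post-processing described above, assuming NumPy, a matrix of per-frame linear MLP outputs (one row per frame), and a PCA projection estimated by SVD. The function names and the random 61-dimensional input are hypothetical stand-ins, not the original system's code.

```python
import numpy as np

def log_softmax(linear_outputs):
    """Convert linear (pre-softmax) MLP outputs to log posteriors, frame by frame."""
    # Subtract the per-frame max before exponentiating for numerical stability.
    shifted = linear_outputs - linear_outputs.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

def fit_pca(features, n_components):
    """Estimate a PCA projection (mean and principal axes) from training frames."""
    mean = features.mean(axis=0)
    # The right singular vectors of the centered data are the principal axes.
    _, _, vt = np.linalg.svd(features - mean, full_matrices=False)
    return mean, vt[:n_components].T

def apply_pca(features, mean, components):
    """Project frames onto the principal axes, decorrelating the dimensions."""
    return (features - mean) @ components

# Hypothetical data: 500 frames of 61-dimensional linear MLP outputs.
rng = np.random.default_rng(0)
linear = rng.normal(size=(500, 61))
log_post = log_softmax(linear)
mean, comps = fit_pca(log_post, n_components=61)
tandem_feats = apply_pca(log_post, mean, comps)
```

For the "linear" variant, the raw outputs would be passed to `fit_pca` and `apply_pca` without the `log_softmax` step. In a real system the projection would be estimated once on training data and then applied unchanged to test data.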

Idea: Crandem • Use a CRF classifier to create inputs to a Tandem-style HMM • CRF labels provide better per-frame accuracy than the input MLPs • We’ve shown CRFs to provide better phone recognition than a Tandem system with the same inputs • This suggests that we may get some gain from using CRF features in an HMM

Idea: Crandem • Problem: CRF output doesn’t match MLP output • MLP output is a per-frame vector of posteriors • CRF outputs a probability across the entire sequence • Solution: Use Forward-Backward algorithm to generate a vector of posterior probabilities

Forward-Backward Algorithm • The Forward-Backward algorithm is already used during CRF training • Similar to the forward-backward algorithm for HMMs • Forward pass collects feature functions for the timesteps prior to the current timestep • Backward pass collects feature functions for the timesteps following the current timestep • Information from both passes is combined to determine the probability of being in a given state at a particular timestep
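A minimal NumPy sketch of forward-backward for a linear-chain CRF, computed in log space to avoid underflow. It assumes the weighted feature functions have already been summed into a (T, K) matrix of per-frame state scores and a single (K, K) matrix of transition scores; treating transition scores as independent of the observations and of t is a simplification. The function names are hypothetical, and the brute-force enumeration is included only to check the result on a tiny example.

```python
import itertools
import numpy as np

def logsumexp(a, axis):
    """Numerically stable log(sum(exp(a))) along one axis."""
    m = np.max(a, axis=axis, keepdims=True)
    return np.squeeze(m + np.log(np.sum(np.exp(a - m), axis=axis, keepdims=True)), axis=axis)

def crf_marginals(state_scores, trans_scores):
    """Per-frame label posteriors P(y_t = k | x) for a linear-chain CRF.

    state_scores: (T, K) weighted state feature scores per frame and label.
    trans_scores: (K, K) weighted transition scores for label i -> label j.
    """
    T, K = state_scores.shape
    log_alpha = np.zeros((T, K))
    log_beta = np.zeros((T, K))  # log beta at the last frame is 0 (beta = 1)
    # Forward pass: scores of all label prefixes ending in each label at frame t.
    log_alpha[0] = state_scores[0]
    for t in range(1, T):
        log_alpha[t] = state_scores[t] + logsumexp(log_alpha[t - 1][:, None] + trans_scores, axis=0)
    # Backward pass: scores of all label suffixes following each label at frame t.
    for t in range(T - 2, -1, -1):
        log_beta[t] = logsumexp(trans_scores + state_scores[t + 1] + log_beta[t + 1], axis=1)
    log_z = logsumexp(log_alpha[-1], axis=0)
    # Combine both passes and normalize by the partition function Z(x).
    return np.exp(log_alpha + log_beta - log_z)

def brute_force_marginals(state_scores, trans_scores):
    """Reference answer by enumerating every label sequence (tiny T and K only)."""
    T, K = state_scores.shape
    marg = np.zeros((T, K))
    for seq in itertools.product(range(K), repeat=T):
        score = sum(state_scores[t, seq[t]] for t in range(T))
        score += sum(trans_scores[seq[t - 1], seq[t]] for t in range(1, T))
        for t, k in enumerate(seq):
            marg[t, k] += np.exp(score)
    return marg / marg.sum(axis=1, keepdims=True)

rng = np.random.default_rng(1)
S = rng.normal(size=(5, 3))  # 5 frames, 3 labels (hypothetical scores)
A = rng.normal(size=(3, 3))
post = crf_marginals(S, A)
```

Each row of `post` is a per-frame posterior vector, which is the same shape of output an MLP produces and is what makes the CRF usable as a Tandem front end.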

Forward-Backward Algorithm • This form allows us to use the CRF to compute a vector of local posteriors y at any timestep t • We use this to generate features for a Tandem-style system • Take the log of these features and decorrelate them with PCA
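Written out under the standard linear-chain assumptions, the local posterior vector at timestep t is given below. Here psi and phi are shorthand introduced for the weighted state and transition scores (the feature functions s_i, f_j and weights lambda_i, mu_j are generic placeholders, not notation taken from the slides).

```latex
\begin{align*}
\psi_t(k) &= \sum_i \lambda_i\, s_i(k, \mathbf{x}, t),
  \qquad \phi_t(k', k) = \sum_j \mu_j\, f_j(k', k, \mathbf{x}, t) \\
\alpha_1(k) &= e^{\psi_1(k)},
  \qquad \alpha_t(k) = e^{\psi_t(k)} \sum_{k'} \alpha_{t-1}(k')\, e^{\phi_t(k', k)} \\
\beta_T(k) &= 1,
  \qquad \beta_t(k) = \sum_{k'} e^{\phi_{t+1}(k, k') + \psi_{t+1}(k')}\, \beta_{t+1}(k') \\
P(y_t = k \mid \mathbf{x}) &= \frac{\alpha_t(k)\, \beta_t(k)}{Z(\mathbf{x})},
  \qquad Z(\mathbf{x}) = \sum_k \alpha_T(k)
\end{align*}
```

The Crandem feature for frame t is then the vector of log P(y_t = k | x) over all labels k, which is decorrelated with PCA before being passed to the HMM.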

Phone Recognition • Pilot task – phone recognition on TIMIT • 61 feature MLPs trained on TIMIT, mapped down to 39 features for evaluation • Crandem compared to Tandem and a standard PLP HMM baseline model • As with previous CRF work, we use the outputs of an ANN MLP as inputs to our CRF • Various CRF models examined (state feature functions only, state+transition functions), and various input feature spaces examined (phone classifier and phonological feature classifier)

Phone Recognition • Phonological feature attributes • Detector outputs describe phonetic features of a speech signal • Place, Manner, Voicing, Vowel Height, Backness, etc. • A phone is described with a vector of feature values • Phone class attributes • Detector outputs describe the phone label associated with a portion of the speech signal • /t/, /d/, /aa/, etc.
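To make "a phone is described with a vector of feature values" concrete, the sketch below encodes each phone as concatenated one-hot attribute values. The attribute inventory and value names are hypothetical and much smaller than a real detector set; the slides do not state which encoding the original system used.

```python
# Hypothetical attribute inventory; the real detector set is larger and not listed in the slides.
ATTRIBUTES = {
    "place": ["alveolar", "labial", "velar", "none"],
    "manner": ["stop", "fricative", "vowel"],
    "voicing": ["voiced", "voiceless"],
    "height": ["high", "mid", "low", "n/a"],
    "backness": ["front", "central", "back", "n/a"],
}

# Illustrative descriptions for the example phones /t/, /d/ and /aa/.
PHONES = {
    "t": {"place": "alveolar", "manner": "stop", "voicing": "voiceless", "height": "n/a", "backness": "n/a"},
    "d": {"place": "alveolar", "manner": "stop", "voicing": "voiced", "height": "n/a", "backness": "n/a"},
    "aa": {"place": "none", "manner": "vowel", "voicing": "voiced", "height": "low", "backness": "back"},
}

def phone_to_vector(phone):
    """Concatenate one-hot encodings of each attribute value into one vector."""
    vec = []
    for attr, values in ATTRIBUTES.items():
        vec.extend(1.0 if v == PHONES[phone][attr] else 0.0 for v in values)
    return vec
```

With this encoding /t/ and /d/ differ only in the two voicing positions, which is the kind of shared structure phonological feature detectors are meant to expose, whereas a phone class detector treats them as unrelated labels.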

Phone Recognition - Results

Results (Fosler-Lussier & Morris 08) * Significant (p<0.05) improvement at 0.6% difference between models


Word Recognition • Second task – Word recognition • Dictionary for word recognition has 54 distinct phones instead of 48, so new CRFs and MLPs trained to provide input features • MLPs and CRFs again trained on TIMIT to provide both phone classifier output and phonological feature classifier output • Initial experiments – use MLPs and CRFs trained on TIMIT to generate features for WSJ recognition • Next pass – use MLPs and CRFs trained on TIMIT to align label files for WSJ, then train MLPs and CRFs for WSJ recognition

Initial Results *Significant (p≤0.05) improvement at roughly 1% difference between models



Word Recognition • Problems • Some of the models show a slight but significant improvement over their Tandem counterparts • Unfortunately, which configurations will produce an improvement is not yet predictable • Transition features give a slight degradation when used on their own, but a slight improvement when the classifier outputs are combined with MFCCs • Retraining directly on WSJ data does not give an improvement for the CRF • Gains from CRF training are wiped away if we just retrain the MLPs on WSJ data

Word Recognition • Problems (cont.) • The only model that gives improvement for the Crandem system is a CRF model trained on linear outputs from MLPs • Softmax outputs – much worse than baseline • Log softmax outputs – ditto • This doesn’t seem right, especially given the results from the Crandem phone recognition experiments • These were trained on softmax outputs • I suspect “implementor error” here, though I haven’t tracked down my mistake yet

Word Recognition • Problems (cont.) • Because of the “linear inputs only” issue, certain features have yet to be examined fully • “Hifny”-style Gaussian scores have not provided any gain – scaling of these features may be preventing them from being useful

Current Work • Sort out problems with CRF models • Why is it so sensitive to the input feature type? (linear vs. log vs. softmax) • If this sensitivity is “built in” to the model, how can I appropriately scale features to include them in the model that works? • Move on to next problem – direct decoding on CRF lattices