Information Bottleneck versus Maximum Likelihood

Felix Polyakov. Overview of the talk: a brief review of the Information Bottleneck; Maximum Likelihood; Information Bottleneck and Maximum Likelihood; an example from image segmentation.


Information Bottleneck versus Maximum Likelihood Felix Polyakov

Overview of the talk • Brief review of the Information Bottleneck • Maximum Likelihood • Information Bottleneck and Maximum Likelihood • Example from Image Segmentation

A new compact representation • The document clusters preserve the relevant information between the documents and the words

Feature Selection? • NO ASSUMPTIONS about the source of the data • Extracting relevant structure from data • functions of the data (statistics) that preserve information • Information about what? • Need a principle that is both general and precise.

Documents Words

The information bottleneck, or relevance through distortion (N. Tishby, F. Pereira, and W. Bialek) • We would like the relevant partitioning T to compress X as much as possible and to capture as much information about Y as possible

Goal: find q(T | X) • Note the Markovian independence relation T ↔ X ↔ Y

Variational problem Iterative algorithm
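The equations on this slide did not survive extraction. As a hedged reminder, the IB variational problem is usually stated as minimising I(T;X) − β I(T;Y) over q(t|x), and the iterative algorithm alternates the three self-consistent updates for q(t), q(y|t) and q(t|x). The sketch below is one minimal way to write those updates in plain Python; the function name `iterative_ib`, the toy joint distribution `p_xy`, the initial assignment `q0` and the value of `beta` are all illustrative assumptions, not taken from the talk.

```python
import math

def kl(p, q):
    """Kullback-Leibler divergence D[p || q] in nats (terms with p = 0 are skipped)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def iterative_ib(p_xy, q_t_given_x, beta, n_iter=200):
    """Iterate the IB self-consistent equations.

    p_xy[x][y]        joint distribution (assumed strictly positive here)
    q_t_given_x[x][t] initial soft assignment of x to clusters t
    """
    nx, ny, nt = len(p_xy), len(p_xy[0]), len(q_t_given_x[0])
    p_x = [sum(row) for row in p_xy]
    p_y_given_x = [[p_xy[x][y] / p_x[x] for y in range(ny)] for x in range(nx)]
    q = [row[:] for row in q_t_given_x]
    for _ in range(n_iter):
        # q(t) = sum_x p(x) q(t|x)
        q_t = [sum(p_x[x] * q[x][t] for x in range(nx)) for t in range(nt)]
        # q(y|t) = (1 / q(t)) sum_x p(x, y) q(t|x)
        q_y_given_t = [
            [sum(p_xy[x][y] * q[x][t] for x in range(nx)) / q_t[t] for y in range(ny)]
            for t in range(nt)
        ]
        # q(t|x) proportional to q(t) exp(-beta * KL[p(y|x) || q(y|t)])
        for x in range(nx):
            w = [q_t[t] * math.exp(-beta * kl(p_y_given_x[x], q_y_given_t[t]))
                 for t in range(nt)]
            z = sum(w)  # normalization factor Z(x, beta)
            q[x] = [wi / z for wi in w]
    return q, q_t, q_y_given_t

# Hypothetical toy data: 4 "documents" (rows) and 3 "words" (columns).
p_xy = [
    [0.20, 0.04, 0.01],
    [0.18, 0.05, 0.02],
    [0.01, 0.04, 0.20],
    [0.02, 0.05, 0.18],
]
q0 = [[0.60, 0.40], [0.55, 0.45], [0.40, 0.60], [0.45, 0.55]]
q, q_t, q_y_given_t = iterative_ib(p_xy, q0, beta=5.0)
for x, row in enumerate(q):
    print(x, [round(v, 3) for v in row])
```

The sketch has no guard against empty clusters or numerical underflow at very large β, so it is only meant for small, strictly positive toy inputs like the one above.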

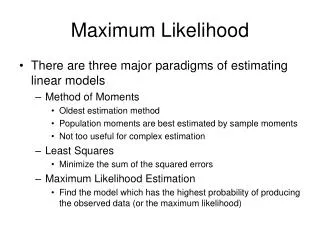

Overview of the talk • Short review of the Information Bottleneck • Maximum Likelihood • Information Bottleneck and Maximum Likelihood • Example from Image Segmentation

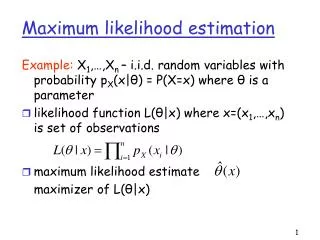

A simple example... • A coin is known to be biased • The coin is tossed three times: two heads and one tail • Use ML to estimate the probability of throwing a head • Model: p(head) = P, p(tail) = 1 - P • Try P = 0.2: L(O) = 0.2 * 0.2 * 0.8 = 0.032 • Try P = 0.4: L(O) = 0.4 * 0.4 * 0.6 = 0.096 • Try P = 0.6: L(O) = 0.6 * 0.6 * 0.4 = 0.144 • Try P = 0.8: L(O) = 0.8 * 0.8 * 0.2 = 0.128 • Plot: likelihood of the data against the probability of a head
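The trial values on this slide can be reproduced with a few lines of Python. The sketch below also scans a fine grid of P to locate the maximum; for two heads and one tail the closed-form ML estimate is heads / (heads + tails) = 2/3. The function name `likelihood` and the grid resolution are illustrative choices.

```python
def likelihood(p, heads=2, tails=1):
    """Likelihood of one fixed toss sequence with the given head/tail counts."""
    return p ** heads * (1 - p) ** tails

# The four trial values from the slide.
for p in (0.2, 0.4, 0.6, 0.8):
    print(f"P = {p:.1f}  L(O) = {likelihood(p):.3f}")

# Closed-form ML estimate and a brute-force check on a grid of step 0.001.
p_ml = 2 / 3
grid = [i / 1000 for i in range(1001)]
p_grid = max(grid, key=likelihood)
print(f"grid maximum at P = {p_grid:.3f}, closed form P = {p_ml:.3f}")
```

None of the four trial values is the maximizer; the likelihood at P = 2/3 is slightly higher than at P = 0.6.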

A slightly more complicated example: a mixture model • Three baskets B1, B2, B3 with white (O = 1), grey (O = 2), and black (O = 3) balls • 15 balls were drawn as follows: • Choose a basket according to p(i) = bi • Draw a ball of colour j from basket i according to that basket's colour distribution • Use ML to estimate the model parameters given the observations: the sequence of the balls' colours

Likelihood of observations • Log Likelihood of observations • Maximal Likelihood of observations
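The three quantities named on this slide can be written out for the basket example as follows. This is a sketch in assumed notation: o_1, …, o_15 are the observed colours, b_i is the probability of choosing basket i, and a_{ij} (a symbol introduced here, not recovered from the slides) is the probability of drawing colour j from basket i. The draws are assumed to be independent.

```latex
\begin{align}
L(O) &= \prod_{n=1}^{15} \sum_{i=1}^{3} b_i \, a_{i\,o_n} \\
\log L(O) &= \sum_{n=1}^{15} \log \sum_{i=1}^{3} b_i \, a_{i\,o_n} \\
(\hat{b}, \hat{a}) &= \arg\max_{b,\,a} \; \log L(O)
\end{align}
```

The sum inside the logarithm is what makes direct maximization awkward, since the basket behind each ball is hidden.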

Likelihood of the observed data • x – hidden random variables [e.g. basket] • y – observed random variables [e.g. color] • θ – model parameters [e.g. they define p(y|x)] • θ⁰ – current estimate of model parameters

Expectation-maximization algorithm (I) • 1. Expectation • Compute • Get • 2. Maximization • The EM algorithm converges to a local maximum
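To make the two steps concrete, here is a minimal EM sketch for the basket mixture: the E-step computes the posterior probability of each basket for each observed ball, and the M-step re-estimates the parameters from those posteriors. The observation sequence, the initial parameters and the names `em_baskets`, `b` and `a` are hypothetical (the talk's actual sequence is not in the transcript), and colours are indexed from 0 rather than 1.

```python
import math

def em_baskets(obs, b, a, n_iter=50):
    """EM for a mixture of categorical distributions.

    obs     list of observed colour indices
    b[i]    probability of choosing basket i
    a[i][j] probability of colour j given basket i
    Returns the final (b, a) and the log-likelihood before each update.
    """
    k, m = len(b), len(a[0])
    history = []
    for _ in range(n_iter):
        # E-step: posterior of each basket given each observed colour.
        resp, loglik = [], 0.0
        for o in obs:
            joint = [b[i] * a[i][o] for i in range(k)]
            total = sum(joint)
            loglik += math.log(total)
            resp.append([v / total for v in joint])
        history.append(loglik)
        # M-step: re-estimate parameters from the expected counts.
        weight = [sum(r[i] for r in resp) for i in range(k)]
        b = [w / len(obs) for w in weight]
        a = [
            [sum(r[i] for r, o in zip(resp, obs) if o == j) / weight[i]
             for j in range(m)]
            for i in range(k)
        ]
    return b, a, history

# Hypothetical sequence of 15 colours (0 = white, 1 = grey, 2 = black).
obs = [0, 0, 1, 2, 2, 0, 1, 1, 2, 0, 0, 2, 1, 0, 2]
b0 = [0.5, 0.3, 0.2]
a0 = [[0.6, 0.3, 0.1], [0.2, 0.5, 0.3], [0.1, 0.2, 0.7]]
b, a, history = em_baskets(obs, b0, a0)
print("log-likelihood: first", round(history[0], 4), "last", round(history[-1], 4))
```

With a single colour observed per draw, the likelihood depends only on the mixture's overall colour distribution, so many parameter settings reach the same maximum. The run still shows the defining property: the log-likelihood never decreases from one iteration to the next.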

EM – another approach • Goal: • Jensen's inequality for a concave function
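The role of Jensen's inequality here can be sketched in one line. With x the hidden variables, y the observed variables, θ the model parameters (θ is assumed notation, since the symbol is missing from the transcript) and q(x) any distribution over the hidden variables, concavity of the logarithm gives a lower bound on the log-likelihood:

```latex
\begin{align}
\log p(y \mid \theta)
  &= \log \sum_x q(x) \, \frac{p(x, y \mid \theta)}{q(x)} \\
  &\ge \sum_x q(x) \, \log \frac{p(x, y \mid \theta)}{q(x)}
\end{align}
```

The bound is tight when q(x) = p(x | y, θ). Choosing q that way is the expectation step, and maximizing the bound over θ with q held fixed is the maximization step.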

Expectation-maximization algorithm (II) • 1. Expectation • 2. Maximization • (I) and (II) are equivalent

Scheme of the approach Expectation Maximization

Overview of the talk • Short review of the Information Bottleneck • Maximum Likelihood • Information Bottleneck and Maximum Likelihood for a toy problem • Example from Image Segmentation

Documents - X • Words - Y • Topics - t • t ~ π(t) • x ~ π(x) • y|t ~ β(y|t)

Sampling algorithm • For i = 1:N • choose xi by sampling from π(x) • choose yi by sampling from β(y|t(xi)) • increase n(xi, yi) by one • Example • xi = 9 • t(9) = 2 • sample from β(y|2) • get yi = “Drug” • set n(9, “Drug”) = n(9, “Drug”) + 1
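The sampling loop on this slide translates almost line for line into code. The sketch below assumes the topic assignment t(x) is already fixed for every document; the function name `sample_counts`, the argument names `p_x`, `t_of_x` and `p_y_given_t`, and all numeric values and words are hypothetical placeholders for the model parameters.

```python
import random
from collections import Counter

def sample_counts(p_x, t_of_x, p_y_given_t, n_samples, seed=0):
    """Generate a document-word count table n(x, y) from the mixture model."""
    rng = random.Random(seed)
    docs = list(range(len(p_x)))
    counts = Counter()
    for _ in range(n_samples):
        # choose x_i by sampling from the document distribution
        x = rng.choices(docs, weights=p_x)[0]
        # look up the topic of that document, then sample a word from it
        word_dist = p_y_given_t[t_of_x[x]]
        y = rng.choices(list(word_dist), weights=list(word_dist.values()))[0]
        # increase n(x_i, y_i) by one
        counts[(x, y)] += 1
    return counts

# Hypothetical parameters: 4 documents, 2 topics, 3 words.
p_x = [0.25, 0.25, 0.25, 0.25]
t_of_x = [0, 0, 1, 1]
p_y_given_t = {
    0: {"Drug": 0.7, "Cell": 0.2, "Ball": 0.1},
    1: {"Drug": 0.1, "Cell": 0.2, "Ball": 0.7},
}
counts = sample_counts(p_x, t_of_x, p_y_given_t, n_samples=1000)
print(counts.most_common(3))
```

The resulting table `counts` plays the role of n(x, y), the observed co-occurrence counts from which the parameters are later estimated.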

β(y|t=1) • β(y|t=2) • β(y|t=3) • X • t(X)

Toy problem: which parameters maximize the likelihood? • T = topics • X = documents • Y = words • Parameters: t(x), β(y|t(x))

Normalization factor EM approach • E-step • M-step

IB approach Normalization factor

ML ↔ IB mapping • r is a scaling constant

IB ↔ ML mapping • X is uniformly distributed • r = |X| • The EM algorithm is equivalent to the iterative IB algorithm

IB ↔ ML mapping • X is uniformly distributed • β = n(x) • All the fixed points of the likelihood L are mapped to all the fixed points of the IB functional L_IB = I(T;X) − β I(T;Y) • At the fixed points, −log L ∝ L_IB + const

X is uniformly distributed • β = n(x) • −(1/r) F − H(Y) = L_IB • −F ∝ L_IB + const • An algorithm increases F iff it decreases L_IB

Deterministic case • N → ∞ (or β → ∞) • EM: • IB:

N → ∞ (or β → ∞) • No assumption about the uniformity of X is made here • All the fixed points of L are mapped to all the fixed points of L_IB • −F ∝ L_IB + const • Every algorithm that finds a fixed point of L induces a fixed point of L_IB, and vice versa • When there are several different fixed points, the solution that maximizes L is mapped to the solution that minimizes L_IB.

Example • This does not mean that q(t) = π(t)

When N → ∞, every algorithm increases F iff it decreases L_IB with β → ∞ • How large must N (or β) be? • How is it related to the “amount of uniformity” in n(x)?

Simulations • 200 runs = 100 (small N) + 100 (large N) • In 58 runs, iterative IB converged to a smaller value of (−F) than EM • In 46 runs, EM converged to a value of (−F) corresponding to a smaller value of L_IB

Quality estimation for the EM solution • The quality of an IB solution is measured through the theoretical upper bound • Using the IB ↔ ML mapping, one can adapt this measure to the ML estimation problem, for large enough N

Summary: IB versus ML • The ML and IB approaches are equivalent under certain conditions • Model comparison • The mixture model assumes that Y is independent of X given T(X): X → T → Y • In the IB framework, T is defined through the IB Markovian independence relation: T ↔ X ↔ Y • The quality estimation measure from IB can be adapted to the ML estimation problem, for large N

Overview of the talk • Brief review of the Information Bottleneck • Maximum Likelihood • Information Bottleneck and Maximum Likelihood • Example from Image Segmentation (L. Hermes et al.)

The clustering model • Pixels o_i, i = 1, …, n • Deterministic clusters c_ν, ν = 1, …, k • Boolean assignment matrix M ∈ {0, 1}^(n × k), Σ_ν M_iν = 1 • Observations

oi q r • Observations

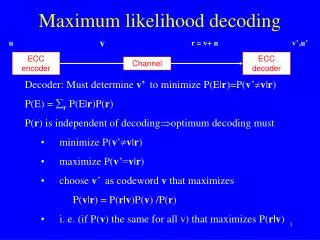

Likelihood • Discretization of the color space into intervals Ij • Set • Data likelihood

Log-likelihood • Assume that n_i = const and set β = n_i; then L_IB = −log L, where L_IB is the IB functional