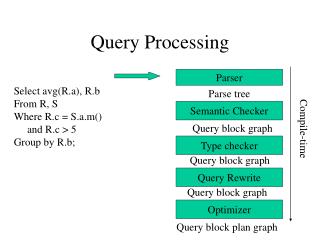

Query processing: optimizations

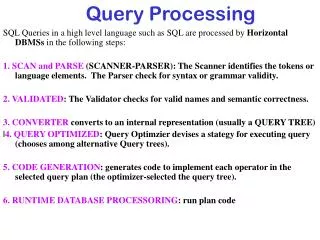

Query processing: optimizations. Paolo Ferragina Dipartimento di Informatica Università di Pisa. Reading 2.3. Sec. 2.3. 128. 17. 2. 4. 8. 41. 48. 64. 1. 2. 3. 8. 11. 21. Augment postings with skip pointers (at indexing time). How do we deploy them ? Where do we place them ?.

Query processing: optimizations

E N D

Presentation Transcript

Query processing: optimizations Paolo Ferragina Dipartimento di Informatica Università di Pisa Reading 2.3

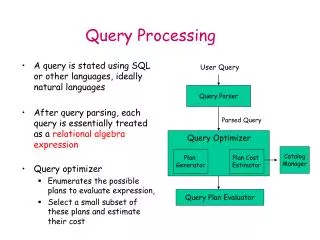

Sec. 2.3 Augment postings with skip pointers (at indexing time) • How do we deploy them? • Where do we place them? [Figure: two postings lists with skip pointers: 2 4 8 41 48 64 128 (skip pointers to 41 and 128) and 1 2 3 8 11 17 21 31 (skip pointers to 11 and 31)]

Sec. 2.3 Using skips • Suppose we’ve stepped through the lists until we process 8 on each list. We match it and advance. • We then have 41 on the upper list and 11 on the lower list. 11 is smaller. • But the skip successor of 11 on the lower list is 31, and 31 ≤ 41, so we can skip ahead past the intervening postings (17 and 21).
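A minimal Python sketch of the merge with skip pointers walked through above. It assumes postings are sorted Python lists and represents skip pointers as a hypothetical dict from a list index to the index its pointer reaches; the example lists are 2 4 8 41 48 64 128 (skips 2 → 41 → 128) and 1 2 3 8 11 17 21 31 (skips 1 → 11 → 31).

```python
def intersect_with_skips(p1, skips1, p2, skips2):
    """Intersect two sorted postings lists, following skip pointers when safe.

    skips1 / skips2 map an index in the list to the index its skip pointer
    reaches (a representation chosen for this sketch only).
    """
    answer = []
    i = j = 0
    while i < len(p1) and j < len(p2):
        if p1[i] == p2[j]:
            answer.append(p1[i])
            i += 1
            j += 1
        elif p1[i] < p2[j]:
            # Follow skips on p1 while the skip target does not overshoot p2[j].
            if i in skips1 and p1[skips1[i]] <= p2[j]:
                while i in skips1 and p1[skips1[i]] <= p2[j]:
                    i = skips1[i]
            else:
                i += 1
        else:
            # Symmetric case: follow skips on p2 while they stay <= p1[i].
            if j in skips2 and p2[skips2[j]] <= p1[i]:
                while j in skips2 and p2[skips2[j]] <= p1[i]:
                    j = skips2[j]
            else:
                j += 1
    return answer


upper = [2, 4, 8, 41, 48, 64, 128]
lower = [1, 2, 3, 8, 11, 17, 21, 31]
upper_skips = {0: 3, 3: 6}   # 2 -> 41 -> 128
lower_skips = {0: 4, 4: 7}   # 1 -> 11 -> 31
print(intersect_with_skips(upper, upper_skips, lower, lower_skips))  # [2, 8]
```

When the lower list sits at 11 and the upper at 41, the pointer 11 → 31 is taken because 31 ≤ 41, exactly as in the slide; 17 and 21 are never compared.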

Sec. 2.3 Placing skips • Tradeoff: • More skips → shorter skip spans → more likely to skip. But lots of comparisons to skip pointers. • Fewer skips → longer skip spans → few successful skips. Fewer pointer comparisons.

Sec. 2.3 Placing skips • Simple heuristic: for postings of length L, use √L evenly-spaced skip pointers. • This ignores the distribution of query terms. • Easy if the index is relatively static. • This is definitely useful for in-memory indexes. • For on-disk indexes, the I/O cost of loading a bigger list can outweigh the gains!
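The √L heuristic can be sketched as follows, assuming skip pointers are stored as a hypothetical dict from origin index to target index (not a prescribed on-disk layout). The span is about √L, so roughly √L pointers are created.

```python
import math


def sqrt_skips(n):
    """Evenly spaced skip pointers for a postings list of length n.

    The span between pointer origins is round(sqrt(n)); each origin points
    one span ahead, as long as the target is still inside the list.
    """
    if n < 2:
        return {}
    span = max(1, round(math.sqrt(n)))
    return {start: start + span for start in range(0, n - span, span)}


print(sqrt_skips(16))   # {0: 4, 4: 8, 8: 12}
```

Because the positions depend only on the list length, they are cheap to recompute when the index is static, but every update to the list shifts them.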

Sec. 2.3 Placing skips, contd
• What if the distribution of accesses $p_k$ to the $k$-th element of the inverted list is known?
• $w(i,j) = \sum_{k=i}^{j} p_k$
• $L^0(i,j)$ = average cost of accessing an item in the sublist from $i$ to $j$ by scanning it: $L^0(i,j) = \sum_{k=i}^{j} p_k \, (k-i+1)$
• $L^1(1,n) = 1 + \min_{u>1} \left[ w(1,u-1) \, L^0(1,u-1) + w(u,n) \, L^1(u,n) \right]$, where 1 is the first skip comparison and the minimized term is the expected cost of then accessing the correct sublist
• $L^0(i,j)$ can be tabulated in $O(n^2)$ time
• Computing $L^1(i,n)$ takes $O(n)$ time, given $L^1(j,n)$ for all $j>i$
• Computing the total $L^1(1,n)$ therefore takes $O(n^2)$ time
• You can solve it as a shortest-path problem
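A sketch of the $O(n^2)$ dynamic program for $L^1(1,n)$, taking the slide's formulas literally: $L^0$ is the $p$-weighted sum as written (not divided by $w(i,j)$), and $L^1(i,n)$ minimizes over $u > i$. The slide gives no base case, so this sketch assumes $L^1(n,n) = L^0(n,n)$ (a single posting, nothing to skip). As written, the recurrence keeps placing skips until one posting is left.

```python
def single_level_skip_cost(p):
    """Evaluate L^1(1, n) from the slide by dynamic programming.

    p[k-1] is the access probability p_k of the k-th posting (k = 1..n).
    Returns (cost, best), where best[i] is the skip target u chosen for
    the sublist i..n.
    """
    n = len(p)
    P = [0.0] + list(p)                      # 1-based copy: P[k] = p_k
    prefix = [0.0] * (n + 1)
    for k in range(1, n + 1):
        prefix[k] = prefix[k - 1] + P[k]

    def w(i, j):                             # w(i, j) = sum_{k=i..j} p_k
        return prefix[j] - prefix[i - 1]

    # L0[i][j] = sum_{k=i..j} p_k * (k - i + 1), tabulated in O(n^2).
    L0 = [[0.0] * (n + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        for j in range(i, n + 1):
            L0[i][j] = L0[i][j - 1] + P[j] * (j - i + 1)

    # L1[i] = L^1(i, n), filled right to left; each entry is an O(n) min.
    L1 = [0.0] * (n + 1)
    best = [None] * (n + 1)
    L1[n] = L0[n][n]                         # ASSUMPTION: base case, not on the slide
    for i in range(n - 1, 0, -1):
        cost, u = min((w(i, u - 1) * L0[i][u - 1] + w(u, n) * L1[u], u)
                      for u in range(i + 1, n + 1))
        L1[i] = 1 + cost
        best[i] = u
    return L1[1], best


cost, best = single_level_skip_cost([0.25, 0.25, 0.25, 0.25])
print(cost, best[1])   # 1.9375 3
```

Each state `i` depends only on states `u > i`, which is why the same computation can be read as a shortest path over a DAG whose nodes are the sublist start positions.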

Sec. 2.3 Placing skips, contd
• What if the number $p$ of skip pointers that can be allocated is also fixed?
• Same as before, but we add $p$ as a parameter:
• $L^1_p(1,n) = 1 + \min_{u>1} \left[ w(1,u-1) \, L^0(1,u-1) + w(u,n) \, L^1_{p-1}(u,n) \right]$
• $L^1_0(i,j) = L^0(i,j)$, i.e. with no pointers left we scan
• $L^1_i(j,n)$ takes $O(n)$ time [min calculation] if the values $L^1_{i-1}(h,n)$ with $h > j$ are available
• So $L^1_p(1,n)$ takes $O(pn^2)$ time
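The budgeted recurrence can be sketched with memoization over the states (pointers left, sublist start), giving $O(pn^2)$ work. It follows the slide's formula with $L^1_0 = L^0$; stopping when the sublist has a single posting is an assumption of this sketch, since the slide does not state that base case.

```python
from functools import lru_cache


def budgeted_skip_cost(p, budget):
    """Evaluate L^1_budget(1, n) from the slide, memoized: O(budget * n^2).

    p[k-1] is the access probability p_k of the k-th posting.
    """
    n = len(p)
    P = [0.0] + list(p)                      # 1-based copy: P[k] = p_k
    prefix = [0.0] * (n + 1)
    for k in range(1, n + 1):
        prefix[k] = prefix[k - 1] + P[k]

    def w(i, j):                             # w(i, j) = sum_{k=i..j} p_k
        return prefix[j] - prefix[i - 1]

    L0 = [[0.0] * (n + 1) for _ in range(n + 1)]
    for i in range(1, n + 1):
        for j in range(i, n + 1):
            L0[i][j] = L0[i][j - 1] + P[j] * (j - i + 1)

    @lru_cache(maxsize=None)
    def L1(q, i):
        # q = pointers still available, i = start of the sublist i..n.
        if q == 0 or i == n:                 # slide: no pointers left -> scan; i == n is assumed
            return L0[i][n]
        return 1 + min(w(i, u - 1) * L0[i][u - 1] + w(u, n) * L1(q - 1, u)
                       for u in range(i + 1, n + 1))

    return L1(budget, 1)


p = [0.25, 0.25, 0.25, 0.25]
print(budgeted_skip_cost(p, 0), budgeted_skip_cost(p, 1), budgeted_skip_cost(p, 2))
# 2.5 1.75 1.9375
```

Read literally, the recurrence spends a pointer at every level while any remain and the sublist has more than one posting, so a larger budget can give a higher cost (1.9375 for two pointers vs. 1.75 for one above). If "at most $p$ pointers" is intended, one option is to take the minimum of `budgeted_skip_cost(p, q)` over `q = 0..p`.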

Faster query = caching? Two opposite approaches: • Cache the query results (exploits query locality) • Cache pages of posting lists (exploits term locality)

Query processing: phrase queries and positional indexes Paolo Ferragina Dipartimento di Informatica Università di Pisa Reading 2.4

Sec. 2.4 Phrase queries • We want to be able to answer queries such as “stanford university” as a phrase • Thus the sentence “I went to Stanford, my university” is not a match.

Sec. 2.4.1 Solution #1: Biword indexes • For example, the text “Friends, Romans, Countrymen” would generate the biwords • friends romans • romans countrymen • Each of these biwords is now an entry in the dictionary • Two-word phrase query processing is immediate.

Sec. 2.4.1 Longer phrase queries • Longer phrases are processed by reducing them to an AND of biword queries: stanford university palo alto can be broken into the Boolean query on biwords stanford university AND university palo AND palo alto • Can have false positives! We need the docs to verify • Index blows up • They are combined with other solutions
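A minimal sketch of a biword index and the AND-of-biwords query for longer phrases, including the verification step against the documents. The tokenizer (lower-cased alphanumeric runs) and the toy documents are assumptions made for illustration.

```python
import re
from collections import defaultdict


def tokenize(text):
    # Lower-case alphanumeric tokens; punctuation is dropped (simplifying assumption).
    return re.findall(r"[a-z0-9]+", text.lower())


def build_biword_index(docs):
    """Map each pair of consecutive terms ("biword") to the doc ids containing it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        tokens = tokenize(text)
        for a, b in zip(tokens, tokens[1:]):
            index[f"{a} {b}"].add(doc_id)
    return index


def biword_query(index, phrase):
    """AND together the biwords of a phrase; the result may contain false positives."""
    tokens = tokenize(phrase)
    biwords = [f"{a} {b}" for a, b in zip(tokens, tokens[1:])]
    if not biwords:
        return set()
    result = set(index.get(biwords[0], set()))
    for bw in biwords[1:]:
        result &= index.get(bw, set())
    return result


def verify(docs, candidates, phrase):
    """Keep only candidates whose token sequence really contains the phrase."""
    target = tokenize(phrase)
    m = len(target)
    confirmed = set()
    for d in candidates:
        tokens = tokenize(docs[d])
        if any(tokens[s:s + m] == target for s in range(len(tokens) - m + 1)):
            confirmed.add(d)
    return confirmed


docs = {  # toy documents
    1: "Stanford University, Palo Alto campus",
    2: "Stanford University beat University Palo Verde near Palo Alto",
    3: "Friends, Romans, Countrymen",
}
index = build_biword_index(docs)
candidates = biword_query(index, "stanford university palo alto")
print(sorted(candidates))                                                  # [1, 2]
print(sorted(verify(docs, candidates, "stanford university palo alto")))  # [1]
```

Doc 2 contains all three biwords but not the phrase, which is the false positive the slide warns about; only a pass over the document text removes it.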

Sec. 2.4.2 Solution #2: Positional indexes • In the postings, store for each term and document the position(s) in which that term occurs: <term, number of docs containing term; doc1: position1, position2 … ; doc2: position1, position2 … ; etc.>

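Sec. 2.4.2 Processing a phrase query • “to be or not to be” • to: 2: 1,17,74,222,551; 4: 8,16,190,429,433; 7: 13,23,191; ... • be: 1: 17,19; 4: 17,191,291,430,434; 5: 14,19,101; ... • Among the entries shown, only doc 4 contains both terms, and there “to” at 16, 190, 429, 433 is followed by “be” one position later (17, 191, 430, 434) • Same general method for proximity searches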
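A sketch of the positional merge on the postings shown above (truncated where the slide shows "..."). For brevity it binary-searches the second position list instead of doing a linear merge. The phrase query is the special case in which the second term sits exactly one position after the first. For proximity searches, the window is `|pos1 - pos2| <= k`.

```python
from bisect import bisect_left, bisect_right

# Positional postings from the slide (doc id -> positions), truncated as shown there.
to_postings = {2: [1, 17, 74, 222, 551], 4: [8, 16, 190, 429, 433], 7: [13, 23, 191]}
be_postings = {1: [17, 19], 4: [17, 191, 291, 430, 434], 5: [14, 19, 101]}


def positional_intersect(pl1, pl2, k):
    """Return (doc, pos1, pos2) triples with |pos1 - pos2| <= k."""
    answer = []
    for doc in sorted(pl1.keys() & pl2.keys()):      # docs containing both terms
        positions2 = pl2[doc]
        for p1 in pl1[doc]:
            lo = bisect_left(positions2, p1 - k)
            hi = bisect_right(positions2, p1 + k)
            answer.extend((doc, p1, p2) for p2 in positions2[lo:hi])
    return answer


def phrase_matches(pl1, pl2):
    """Two-word phrase: the second term must occur exactly one position later."""
    return [(doc, p1) for doc, p1, p2 in positional_intersect(pl1, pl2, 1)
            if p2 == p1 + 1]


print(phrase_matches(to_postings, be_postings))
# [(4, 16), (4, 190), (4, 429), (4, 433)]
```

Longer phrases can be handled by chaining the same check term by term with increasing offsets.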

Sec. 7.2.2 Query term proximity • Free text queries: just a set of terms typed into the query box – common on the web • Users prefer docs in which query terms occur within close proximity of each other • We would like the scoring function to take this into account – how?

Sec. 2.4.2 Positional index size • You can compress position values/offsets • Nevertheless, a positional index expands postings storage by a factor of 2–4 in English • Still, a positional index is now commonly used because of the power and usefulness of phrase and proximity queries … whether used explicitly or implicitly in a ranked retrieval system.
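One common way to compress position values is to store gaps between consecutive positions and encode them with a variable-byte code. The slide does not name a specific code; variable-byte is swapped in here as an illustration. This version uses 7 payload bits per byte and sets the high bit on the last byte of each number.

```python
def to_gaps(positions):
    """First position, then differences between consecutive positions."""
    if not positions:
        return []
    return [positions[0]] + [b - a for a, b in zip(positions, positions[1:])]


def from_gaps(gaps):
    """Undo to_gaps by running sums."""
    out, total = [], 0
    for g in gaps:
        total += g
        out.append(total)
    return out


def vbyte_encode(numbers):
    """Variable-byte code: 7 payload bits per byte, high bit set on the last byte."""
    out = bytearray()
    for n in numbers:
        chunk = [n % 128]
        n //= 128
        while n > 0:
            chunk.insert(0, n % 128)
            n //= 128
        chunk[-1] += 128                 # terminator flag on the final byte
        out.extend(chunk)
    return bytes(out)


def vbyte_decode(data):
    numbers, n = [], 0
    for byte in data:
        if byte < 128:
            n = 128 * n + byte
        else:
            numbers.append(128 * n + byte - 128)
            n = 0
    return numbers


positions = [8, 16, 190, 429, 433]      # "to" in doc 4, from the phrase-query slide
encoded = vbyte_encode(to_gaps(positions))
print(to_gaps(positions), len(encoded))  # [8, 8, 174, 239, 4] 7
```

Small gaps fit in one byte, so positions of frequent terms, which are close together, compress best.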

Sec. 2.4.3 Combination schemes • Biword + positional index is a profitable combination • Biwords are especially useful for particular phrases (“Michael Jackson”, “Britney Spears”) • More complicated mixing strategies do exist!

Sec. 7.2.3 Soft-AND • E.g. query rising interest rates • Run the query as a phrase query • If fewer than K docs contain the phrase rising interest rates, run the two phrase queries rising interest and interest rates • If we still have fewer than K docs, run the “vector space query” rising interest rates (…see next…) • “Rank” the matching docs (…see next…)
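The Soft-AND cascade can be sketched as a function that widens the query only while fewer than K docs have been found. `phrase_search` and `vector_search` are hypothetical callbacks supplied by the caller, and the toy corpus and stand-ins below exist only for illustration. Ranking the collected docs is left out, as on the slide.

```python
from typing import Callable, List, Set


def soft_and(terms: List[str], K: int,
             phrase_search: Callable[[List[str]], Set[int]],
             vector_search: Callable[[List[str]], Set[int]]) -> Set[int]:
    """Full phrase, then its two-word sub-phrases, then a vector space query."""
    docs = set(phrase_search(terms))
    if len(docs) < K and len(terms) > 2:
        for a, b in zip(terms, terms[1:]):          # e.g. rising interest, interest rates
            docs |= phrase_search([a, b])
    if len(docs) < K:
        docs |= vector_search(terms)
    return docs


# Toy corpus and callbacks, for illustration only.
corpus = {
    1: "rising interest rates worry markets".split(),
    2: "interest rates fall".split(),
    3: "a rising tide of interest".split(),
    4: "markets rally".split(),
}


def toy_phrase_search(terms):
    m = len(terms)
    return {d for d, toks in corpus.items()
            if any(toks[s:s + m] == terms for s in range(len(toks) - m + 1))}


def toy_vector_search(terms):
    # Stand-in for a vector space query: any doc sharing at least one term.
    return {d for d, toks in corpus.items() if set(terms) & set(toks)}


query = "rising interest rates".split()
print(soft_and(query, 2, toy_phrase_search, toy_vector_search))   # {1, 2}
```

With K = 2 the phrase alone finds only doc 1, the sub-phrase interest rates adds doc 2, and the vector space step is never reached.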

Query processing: other sophisticated queries Paolo Ferragina Dipartimento di Informatica Università di Pisa Reading 3.2 and 3.3

Sec. 3.2 Wild-card queries: * • mon*: find all docs containing words beginning with “mon”. • Use a prefix-search data structure • *mon: find words ending in “mon” • Maintain a prefix-search data structure for reversed terms. • How can we solve the wild-card query pro*cent?
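A sketch using a sorted term list plus binary search as the prefix-search data structure. Terms sharing a prefix form a contiguous run in sorted order. The suffix query uses the same search on reversed terms. For pro*cent, the sketch intersects the pro* and *cent results, the way co* AND *tion is discussed next. It also applies a length check so that the prefix and suffix cannot overlap inside one term. The lexicon is a toy assumption.

```python
from bisect import bisect_left


def prefix_search(sorted_terms, prefix):
    """All terms starting with prefix; they form a contiguous run in sorted order."""
    i = bisect_left(sorted_terms, prefix)
    out = []
    while i < len(sorted_terms) and sorted_terms[i].startswith(prefix):
        out.append(sorted_terms[i])
        i += 1
    return out


lexicon = sorted(["cent", "lemon", "monday", "month", "percent",
                  "pro", "procent", "process", "prominent", "salmon"])   # toy lexicon
reversed_lexicon = sorted(t[::-1] for t in lexicon)


def suffix_search(suffix):
    """*mon: prefix search on the reversed terms, then reverse the hits back."""
    return [t[::-1] for t in prefix_search(reversed_lexicon, suffix[::-1])]


def prefix_suffix_query(prefix, suffix):
    """pro*cent: intersect pro* and *cent, rejecting terms where the parts overlap."""
    hits = set(prefix_search(lexicon, prefix)) & set(suffix_search(suffix))
    return sorted(t for t in hits if len(t) >= len(prefix) + len(suffix))


print(prefix_search(lexicon, "mon"))        # ['monday', 'month']
print(suffix_search("mon"))                 # ['lemon', 'salmon']
print(prefix_suffix_query("pro", "cent"))   # ['procent']
```

The intersection step is the expensive part on a large lexicon, since both intermediate lists can be long.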

Sec. 3.2 What about * in the middle? • co*tion • We could look up co* AND *tion and intersect the two lists • Expensive • se*ate AND fil*er: this may result in many Boolean AND queries. • The solution: transform wild-card queries so that the *’s occur at the end • This gives rise to the Permuterm Index.

Sec. 3.2.1 Permuterm index • For term hello, index under: • hello$, ello$h, llo$he, lo$hel, o$hell, $hello where $ is a special symbol. • Queries: • X lookup on X$ • X* lookup on $X* • *X lookup on X$* • *X* lookup on X* • X*Y lookup on Y$X* • X*Y*Z ??? Exercise!

Sec. 3.2.1 Permuterm query processing • Rotate query wild-card to the right • P*Q → Q$P* • Now use prefix-search data structure • Permuterm problem: ≈ 4× lexicon size (empirical observation for English).
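A sketch of a permuterm index, assuming a sorted list of (rotation, term) pairs as the prefix-search structure. Each query is rotated so that its `*` ends up at the end, following the lookup rules above. X*Y*Z is deliberately left unimplemented, since the slide leaves it as an exercise.

```python
from bisect import bisect_left


def rotations(term):
    """All rotations of term + '$' (the permuterm vocabulary entries for term)."""
    t = term + "$"
    return [t[i:] + t[:i] for i in range(len(t))]


def build_permuterm(lexicon):
    # Sorted (rotation, term) pairs act as a simple prefix-search structure.
    return sorted((rot, term) for term in lexicon for rot in rotations(term))


def permuterm_lookup(index, query):
    """Answer X, X*, *X, *X* and X*Y by rotating the '*' to the end, then prefix search."""
    stars = query.count("*")
    if stars == 0:                                   # X   -> lookup on X$
        key, exact = query + "$", True
    elif stars == 1:                                 # X*Y -> lookup on Y$X*
        x, y = query.split("*")
        key, exact = y + "$" + x, False
    elif stars == 2 and query.startswith("*") and query.endswith("*"):
        key, exact = query[1:-1], False              # *X* -> lookup on X*
    else:
        raise NotImplementedError("X*Y*Z is left as the exercise on the slide")
    hits = set()
    i = bisect_left(index, (key,))
    while i < len(index) and index[i][0].startswith(key):
        rot, term = index[i]
        if not exact or rot == key:
            hits.add(term)
        i += 1
    return sorted(hits)


index = build_permuterm(["hello", "help", "yellow", "hell", "shell"])
print(permuterm_lookup(index, "hel*"))    # ['hell', 'hello', 'help']
print(permuterm_lookup(index, "h*o"))     # ['hello']
print(permuterm_lookup(index, "*ell*"))   # ['hell', 'hello', 'shell', 'yellow']
```

Every term contributes len(term) + 1 rotations, which is where the lexicon blow-up mentioned on the slide comes from.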

Sec. 3.2.2 K-gram indexes • The k-gram index finds terms based on a query consisting of k-grams (here k=2). [Figure: example 2-gram postings: $m → mace, madden; mo → among, amortize; on → among, arond]

Sec. 3.2.2 K-grams for wild-card queries • Query mon* can now be run as • $m AND mo AND on • Gets terms that match the AND version of our wildcard query (e.g., moon contains $m, mo and on, yet does not match mon*). • Must post-filter these terms against the query.
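A sketch of a 2-gram index with `$` boundary markers, the AND of the query's k-grams, and the post-filter. The post-filter uses Python's `fnmatch.fnmatchcase` as a stand-in wild-card matcher. Note that it also treats `?` and `[...]` specially, which is an assumption of this sketch. The lexicon is a toy.

```python
import fnmatch
from collections import defaultdict


def kgrams(term, k=2):
    """k-grams of $term$, where $ marks the word boundaries."""
    t = "$" + term + "$"
    return {t[i:i + k] for i in range(len(t) - k + 1)}


def build_kgram_index(lexicon, k=2):
    index = defaultdict(set)
    for term in lexicon:
        for g in kgrams(term, k):
            index[g].add(term)
    return index


def query_kgrams(query, k=2):
    """k-grams every match must contain: split $query$ at '*', keep grams inside pieces."""
    grams = set()
    for piece in ("$" + query + "$").split("*"):
        grams |= {piece[i:i + k] for i in range(len(piece) - k + 1)}
    return grams


def wildcard_search(index, lexicon, query, k=2):
    candidates = set(lexicon)
    for g in query_kgrams(query, k):         # AND of the k-gram postings
        candidates &= index.get(g, set())
    # Post-filter: the AND admits terms (like moon) that do not match the query.
    return candidates, sorted(t for t in candidates if fnmatch.fnmatchcase(t, query))


lexicon = ["mace", "madden", "among", "amortize", "moon", "month", "monday", "lemon"]
index = build_kgram_index(lexicon)
candidates, matches = wildcard_search(index, lexicon, "mon*")
print(sorted(candidates), matches)   # ['monday', 'month', 'moon'] ['monday', 'month']
```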

Sec. 3.3.2 Isolated word correction • Given a lexicon and a character sequence Q, return the words in the lexicon closest to Q • What’s “closest”? • Edit distance (Levenshtein distance) • Weighted edit distance • n-gram overlap • Useful for misspelled queries

Sec. 3.3.3 Edit distance • Given two strings S1 and S2, the minimum number of operations to convert one to the other • Operations are typically character-level • Insert, Delete, Replace, (Transposition) • E.g., the edit distance from dof to dog is 1 • From cat to act is 2 (just 1 with transposition) • From cat to dog is 3. • Generally found by dynamic programming.

DynProg for Edit Distance • Let $E(i,j)$ = edit distance between $T_{1..j}$ and $P_{1..i}$. • $E(i,0) = E(0,i) = i$ • $E(i,j) = E(i-1,j-1)$ if $P_i = T_j$ • $E(i,j) = \min\{E(i,j-1),\ E(i-1,j),\ E(i-1,j-1)\} + 1$ if $P_i \neq T_j$
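A direct Python transcription of the $E(i,j)$ recurrence above, with insert, delete and replace at unit cost and no transposition. Python strings are 0-based, so $P_i$ is `P[i - 1]`.

```python
def edit_distance(P, T):
    """Levenshtein distance via the E(i, j) table (insert, delete, replace)."""
    m, n = len(P), len(T)
    E = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(m + 1):
        E[i][0] = i                      # delete all of P[1..i]
    for j in range(n + 1):
        E[0][j] = j                      # insert all of T[1..j]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if P[i - 1] == T[j - 1]:     # P_i = T_j
                E[i][j] = E[i - 1][j - 1]
            else:
                E[i][j] = min(E[i][j - 1], E[i - 1][j], E[i - 1][j - 1]) + 1
    return E[m][n]


print(edit_distance("dof", "dog"), edit_distance("cat", "act"), edit_distance("cat", "dog"))  # 1 2 3
```

The table has (|P| + 1)(|T| + 1) cells, each filled in constant time.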

Sec. 3.3.3 Weighted edit distance • As above, but the weight of an operation depends on the character(s) involved • Meant to capture OCR or keyboard errors, e.g. m is more likely to be mis-typed as n than as q • Therefore, replacing m by n has a smaller edit cost than replacing it by q • Requires a weight matrix as input • Modify dynamic programming to handle weights
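A sketch of the weighted variant. Replacement costs come from a caller-supplied function, and insertions and deletions keep a uniform cost, a simplification of this sketch. The weight table below is hypothetical: it only makes the adjacent QWERTY keys m and n cheaper to confuse.

```python
def weighted_edit_distance(P, T, sub_cost, ins_cost=1.0, del_cost=1.0):
    """Edit distance where replacing a by b costs sub_cost(a, b) instead of 1."""
    m, n = len(P), len(T)
    E = [[0.0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        E[i][0] = E[i - 1][0] + del_cost
    for j in range(1, n + 1):
        E[0][j] = E[0][j - 1] + ins_cost
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            a, b = P[i - 1], T[j - 1]
            replace = 0.0 if a == b else sub_cost(a, b)
            E[i][j] = min(E[i - 1][j] + del_cost,       # delete a
                          E[i][j - 1] + ins_cost,       # insert b
                          E[i - 1][j - 1] + replace)    # keep or replace
    return E[m][n]


# Hypothetical weight matrix: m and n are neighbours on a QWERTY keyboard.
CHEAP_PAIRS = {("m", "n"): 0.5, ("n", "m"): 0.5}


def keyboard_cost(a, b):
    return CHEAP_PAIRS.get((a, b), 1.0)


print(weighted_edit_distance("mat", "nat", keyboard_cost))   # 0.5
print(weighted_edit_distance("mat", "qat", keyboard_cost))   # 1.0
```

With every replacement cost set to 1 the function reduces to the unweighted distance.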

Sec. 3.3.4 k-gram overlap for Edit Distance • Enumerate all the k-grams in the query string as well as in the lexicon • Use the k-gram index (recall wild-card search) to retrieve all lexicon terms matching any of the query k-grams • Threshold by number of matching k-grams • If the query term is L chars long, it has L−k+1 k-grams • If E is the number of allowed errors, each error kills at most k of them, so at most E·k k-grams are killed • Hence at least (L−k+1) − E·k of the query k-grams must match a dictionary term for it to be a candidate answer.
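A sketch of the k-gram overlap filter. It counts, for each lexicon term, how many of the query's L − k + 1 k-gram occurrences it contains, then keeps terms reaching (L − k + 1) − E·k. There are no `$` markers here, so that the k-gram count matches the slide's L − k + 1. Terms sharing no k-gram are never retrieved from the index, even when the threshold is ≤ 0. The lexicon is a toy assumption, and survivors are only candidates for a real edit-distance check.

```python
from collections import Counter, defaultdict


def kgram_list(s, k):
    """The len(s) - k + 1 k-grams of s, without boundary markers."""
    return [s[i:i + k] for i in range(len(s) - k + 1)]


def build_index(lexicon, k):
    index = defaultdict(set)
    for term in lexicon:
        for g in kgram_list(term, k):
            index[g].add(term)
    return index


def overlap_candidates(index, query, k, E):
    """Terms sharing at least (L - k + 1) - E*k of the query's k-grams."""
    grams = kgram_list(query, k)
    threshold = len(grams) - E * k
    hits = Counter()
    for g in grams:                      # one count per query k-gram occurrence
        for term in index.get(g, ()):
            hits[term] += 1
    return sorted(t for t, c in hits.items() if c >= threshold)


lexicon = ["boarder", "bordered", "broader", "lord", "morbid", "order"]   # toy lexicon
index = build_index(lexicon, 2)
print(overlap_candidates(index, "border", 2, 1))   # ['boarder', 'bordered', 'order']
```

Here "border" has 5 bigrams and E = 1 kills at most 2, so a candidate needs at least 3 shared bigrams. "bordered" passes the filter although its edit distance is 2, which is why the survivors still need verification.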

Sec. 3.3.5 Context-sensitive spell correction • Text: I flew from Heathrow to Narita. • Consider the phrase query “flew form Heathrow” • We’d like to respond Did you mean “flew from Heathrow”? because no docs matched the query phrase.

Zone indexes Paolo Ferragina Dipartimento di Informatica Università di Pisa Reading 6.1

Sec. 6.1 Parametric and zone indexes • Thus far, a doc has been a term sequence • But documents have multiple parts: • Author • Title • Date of publication • Language • Format • etc. • These are the metadata about a document

Sec. 6.1 Zone • A zone is a region of the doc that can contain an arbitrary amount of text e.g., • Title • Abstract • References … • Build inverted indexes on fields AND zones to permit querying • E.g., “find docs with merchant in the title zone and matching the query gentle rain”

Sec. 6.1 Example zone indexes Encode zones in dictionary vs. postings.
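A sketch contrasting the two encodings on the title-zone example from the previous slide, "merchant in the title zone and matching the query gentle rain". The toy documents, the two zone names, and reading "matching gentle rain" as "both terms in any zone" are assumptions of this sketch.

```python
import re
from collections import defaultdict

# Toy documents with two zones each (illustrative data).
docs = {
    1: {"title": "The Merchant of Venice", "body": "it droppeth as the gentle rain from heaven"},
    2: {"title": "Gentle Rain", "body": "a merchant walks home"},
    3: {"title": "Merchant Ships", "body": "a storm at sea"},
}
ZONES = ("title", "body")


def tokens(text):
    return re.findall(r"[a-z]+", text.lower())


# Encoding 1: zones in the dictionary, e.g. "merchant.title" -> {doc ids}.
dict_index = defaultdict(set)
# Encoding 2: zones in the postings, e.g. "merchant" -> {doc id: {zones}}.
post_index = defaultdict(lambda: defaultdict(set))
for doc_id, zones in docs.items():
    for zone, text in zones.items():
        for term in tokens(text):
            dict_index[f"{term}.{zone}"].add(doc_id)
            post_index[term][doc_id].add(zone)


def query_dict_encoding(title_term, terms):
    result = set(dict_index.get(f"{title_term}.title", set()))
    for t in terms:   # the term may occur in any zone: union over its zone entries
        result &= set().union(*(dict_index.get(f"{t}.{z}", set()) for z in ZONES))
    return result


def query_postings_encoding(title_term, terms):
    result = {d for d, zs in post_index.get(title_term, {}).items() if "title" in zs}
    for t in terms:
        result &= set(post_index.get(t, {}))
    return result


print(query_dict_encoding("merchant", ["gentle", "rain"]))      # {1}
print(query_postings_encoding("merchant", ["gentle", "rain"]))  # {1}
```

The dictionary encoding multiplies dictionary entries by the number of zones. The postings encoding keeps one entry per term and moves the zone information into each posting.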

Sec. 7.2.1 Tiered indexes • Break postings up into a hierarchy of lists • Most important • … • Least important • Inverted index thus broken up into tiers of decreasing importance • At query time use top tier unless it fails to yield K docs • If so drop to lower tiers
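A sketch of query processing over a tiered index. The layout is hypothetical: a list of per-tier dicts from term to doc ids, most important tier first. The AND query starts with the top tier and folds in the next tier's postings only while fewer than K docs match.

```python
def tiered_query(tiers, terms, K):
    """AND query over a tiered index.

    tiers: list of {term: set(doc ids)}, most important first (hypothetical layout).
    Returns (index of the last tier used, sorted matching doc ids).
    """
    if not terms or not tiers:
        return -1, []
    merged = {t: set() for t in terms}
    hits = set()
    for level, tier in enumerate(tiers):
        for t in terms:                              # add this tier's postings
            merged[t] |= tier.get(t, set())
        hits = set.intersection(*(merged[t] for t in terms))
        if len(hits) >= K:                           # enough docs: stop descending
            break
    return level, sorted(hits)


tiers = [
    {"gentle": {1}, "rain": {1, 2}},        # tier 1: most important postings
    {"gentle": {2, 3}, "rain": {3}},        # tier 2
    {"gentle": {4}, "rain": {4}},           # tier 3: least important
]
print(tiered_query(tiers, ["gentle", "rain"], 1))   # (0, [1])
print(tiered_query(tiers, ["gentle", "rain"], 2))   # (1, [1, 2, 3])
```

Merging tiers cumulatively lets a doc match even if its postings for different terms live in different tiers.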

Sec. 7.2.1 Example tiered index