Bulk Data Transfer Activities



Presentation Transcript

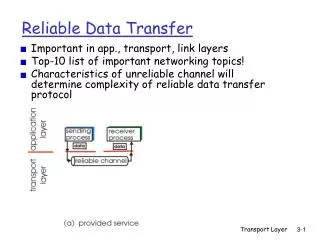

[Figure: three pipeline configurations, numbered 1, 2, and 3. Each shows a submit site holding a DAG file of "Move X from ... to ..." and "Remove X from ..." jobs, an SRB server, and a UniTree server. Labeled steps: SRB get, globus-url-copy, MSS put. Labeled staging areas: SDSC cache, NCSA cache.]

• We regard data transfers as “first class citizens,” just like computational jobs.
• We have transferred ~3 TB of DPOSS data (2611 x 1.1 GB files) from SRB to UniTree using 3 different pipeline configurations.
• The pipelines are built using the Condor and Stork scheduling technologies. The whole process is managed by DAGMan.

• Stork is to data placement activities (i.e., transfer, replication, reservation, staging) in the Grid what a batch system is to computational jobs: it schedules, runs, and monitors data placement jobs and ensures that they complete.
• Stork can interact easily with heterogeneous middleware and end-storage systems and can recover from failures.
• Stork makes data placement a first class citizen of Grid computing.
• http://www.cs.wisc.edu/condor/stork

• Condor is a specialized workload management system for compute-intensive jobs.
• Condor provides a job queuing mechanism, scheduling policy, priority scheme, resource monitoring, and resource management.
• Condor chooses when and where to run jobs based on a policy, carefully monitors their progress, and ultimately informs the user upon completion.
• http://www.cs.wisc.edu/condor

DAGMan
• DAGMan (Directed Acyclic Graph Manager) is a meta-scheduler for Condor. It manages dependencies between jobs at a higher level than the Condor scheduler. DAGMan can now also interact with Stork.
• http://www.cs.wisc.edu/condor/dagman

• We used the native file transfer mechanism of each underlying system (SRB, Globus GridFTP, and UniTree) for the transfers.
• We described each data transfer with a five-stage pipeline, resulting in a 5x2611 node workflow (DAG) managed by DAGMan.
• We obtained an end-to-end throughput (from SRB to UniTree) of 11 files per hour (3.2 MB/sec).

GridFTP:
• High-performance, secure, reliable data transfer protocol from Globus
• http://www.globus.org/datagrid/gridftp.html

SRB: Storage Resource Broker
• Client-server middleware that provides a uniform interface for connecting to heterogeneous data resources
• http://www.npaci.edu/DICE/SRB

UniTree:
• NCSA’s high-speed, high-capacity mass storage system
• http://www.ncsa.uiuc.edu/Divisions/CC/HPDM/unitree

• We used the experimental DiskRouter tool instead of Globus GridFTP for cache-to-cache transfers.
• We obtained an end-to-end throughput (from SRB to UniTree) of 20 files per hour (5.95 MB/sec).

DiskRouter
• Moves large amounts of data (on the order of terabytes) efficiently
• Uses disk as a buffer to aid in large data transfers
• Performs application-level routing
• Increases network throughput by using multiple sockets and setting TCP buffer sizes explicitly
• http://www.cs.wisc.edu/condor/diskrouter

[Figure annotations: UniTree not responding; SDSC cache reboot and UW CS network outage; DiskRouter reconfigured and restarted; SRB server maintenance]

• We skipped the SDSC cache and performed direct SRB transfers from the SRB server to the NCSA cache.
• We described each data transfer with a three-stage pipeline, resulting in a 3x2611 node workflow (DAG).
• We obtained an end-to-end throughput (from SRB to UniTree) of 17 files per hour (5.00 MB/sec).
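
To make the "5x2611 node" and "3x2611 node" workflow sizes concrete, the following is a minimal Python sketch that emits one chain of jobs per file using DAGMan's JOB and PARENT ... CHILD lines. The stage names, the per-node submit file names, and the strictly linear ordering of stages are assumptions made for illustration; they are inferred from the figure labels and are not taken from the authors' DAG file. Every node is written as a generic JOB line for simplicity, since the poster does not show how the Stork data placement nodes were declared.

```python
# Hypothetical stage names, inferred from the figure labels (not from the authors' DAG).
FIVE_STAGE = ["srb_get", "cache_to_cache", "rm_sdsc_cache", "mss_put", "rm_ncsa_cache"]
THREE_STAGE = ["srb_get", "mss_put", "rm_ncsa_cache"]


def build_dag(num_files, stages):
    """Return DAG description lines: one linear chain of stages per file."""
    lines = []
    for i in range(num_files):
        names = [f"{stage}_{i}" for stage in stages]
        # One node per (file, stage); the submit file name is a placeholder.
        for name in names:
            lines.append(f"JOB {name} {name}.submit")
        # Each stage may start only after the previous stage for the same file.
        for parent, child in zip(names, names[1:]):
            lines.append(f"PARENT {parent} CHILD {child}")
    return lines


def count_nodes(lines):
    """Count the job nodes in a list of DAG description lines."""
    return sum(1 for line in lines if line.startswith("JOB "))


if __name__ == "__main__":
    for label, stages in (("five-stage", FIVE_STAGE), ("three-stage", THREE_STAGE)):
        dag = build_dag(2611, stages)
        print(label, "nodes:", count_nodes(dag))
```

Chains for different files share no edges in this sketch, so a scheduler is free to overlap the transfer of one file with the staging or cleanup of another, subject to whatever throttling the real setup applied.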
PDQ Expedition

[Figure annotations: UniTree maintenance; SRB server problem]
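
The throughput figures reported for the three pipeline configurations can be cross-checked with simple arithmetic. The Python sketch below derives the average file size implied by each pair of reported rates (files per hour and MB/sec) and estimates how long 2611 files would take at that rate. The configuration labels are descriptive names chosen here, and the duration estimate assumes the reported rate is sustained with no outages, which is an idealization.

```python
NUM_FILES = 2611

# (files per hour, MB per second) as reported for each configuration.
REPORTED = {
    "five-stage, GridFTP": (11, 3.2),
    "five-stage, DiskRouter": (20, 5.95),
    "three-stage, direct SRB": (17, 5.00),
}


def implied_file_size_mb(files_per_hour, mb_per_sec):
    """Average file size in MB implied by the two reported rates."""
    return mb_per_sec * 3600 / files_per_hour


def total_hours(num_files, files_per_hour):
    """Hours needed to move num_files at a constant files-per-hour rate."""
    return num_files / files_per_hour


if __name__ == "__main__":
    for label, (fph, mbps) in REPORTED.items():
        size = implied_file_size_mb(fph, mbps)
        hours = total_hours(NUM_FILES, fph)
        print(f"{label}: ~{size:.0f} MB per file, ~{hours / 24:.1f} days for {NUM_FILES} files")
```

The implied average file sizes fall between about 1,047 and 1,071 MB, which is consistent with the stated file size of roughly 1.1 GB.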