Maximizing Performance with Stanford Hydra Chip Multiprocessor CMP


Explore the Stanford Hydra Chip Multiprocessor (CMP) architecture aiming to optimize performance through data/thread level speculation. The design overview, motivation behind CMPs, advantages, drawbacks, and the Hydra prototype layout are discussed. Learn about the challenges of parallelizing applications and the Hydra's approach to extract parallelism. Discover the potential of Thread-Level Speculation (TLS) in increasing application parallelization.


Presentation Transcript

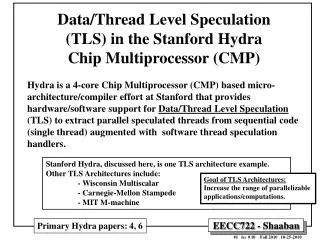

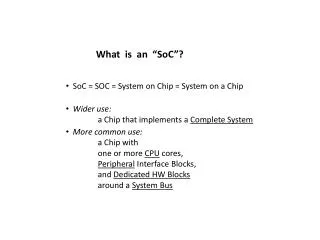

Data/Thread Level Speculation (TLS) in the Stanford Hydra Chip Multiprocessor (CMP) • Hydra is a 4-core CMP-based microarchitecture/compiler effort at Stanford that provides hardware/software support for Data/Thread Level Speculation (TLS): it extracts parallel speculated threads from sequential (single-threaded) code augmented with software thread speculation handlers. • Stanford Hydra, discussed here, is one example of a TLS architecture. Other TLS architectures include Wisconsin Multiscalar, Carnegie-Mellon Stampede, and MIT M-machine. • Goal of TLS architectures: increase the range of parallelizable applications/computations. • Primary Hydra papers: 4, 6

Motivation for Chip Multiprocessors (CMPs) • Chip Multiprocessors (CMPs) offer implementation benefits: • High-speed signals are localized in individual CPUs • A proven CPU design is replicated across the die (including SMT processors, e.g. IBM Power 5) • Overcome the diminishing performance/transistor return problem (limited ILP) in single-threaded superscalar processors (similar motivation to SMT) • Transistors are used today mostly for ILP extraction • CMPs use transistors to run multiple threads (exploit thread-level parallelism, TLP): • On parallelized (multi-threaded) programs • With multi-programmed workloads (multi-tasking): a number of single-threaded applications executing on different CPUs • Fast inter-processor communication (using the shared L2 cache) eases parallelization of code, but is slower than logical processor communication in SMT • Potential drawbacks of CMPs: • High power/heat issues using current VLSI processes due to core duplication. • Limited ILP/poor latency hiding within individual cores (SMT addresses this).

Stanford Hydra CMP Approach Goals • Exploit all levels of program parallelism, from coarse grain to fine grain. How? • On multiple CPU cores within a single CMP or multiple CMPs • On multiple CPU cores within a single CMP using Thread Level Speculation (TLS) • Within a single CPU core • Develop a single-chip multiprocessor architecture that simplifies microprocessor design and achieves high performance. • Make the multiprocessor transparent to the average user. • Integrate use of parallelizing compiler technology in the design of a microarchitecture that supports data/thread level speculation (TLS).

Hydra Prototype Overview To support Thread-Level Speculation (TLS) • 4 CPU cores with modified private L1 caches. • Speculative coprocessor (for each processor core) • Speculative memory reference controller. • Speculative interrupt screening mechanism. • Statistics mechanisms for performance evaluation and to allow feedback for code tuning. • Memory system • Read and write buses. • Controllers for all resources. • On-chip shared L2 cache. • L2 Speculation write buffers. • Simple off-chip main memory controller. • I/O and debugging interface.

The Basic Hydra CMP • 4 processor cores and a shared secondary (L2) cache on a chip • 2 buses connect processors and memory • Cache coherence: writes are broadcast on the write bus

Hydra Memory Hierarchy Characteristics • L1 is write-through to L2 (not to main memory)

Hydra Prototype Layout [Figure: die layout showing the shared L2, the private split L1 caches per core (I-L1 and D-L1), the L2 speculation write buffers (one per core), and the main memory controller.] 250 MHz clock rate target, circa 1999.

CMP Parallel Performance • Varying levels of performance: • Multiprogrammed workloads work well • Very parallel apps with high data parallelism/LLP (matrix-based FP and multimedia) are excellent • Acceptable only with a few less parallel (i.e. integer) general applications, which are normally hard to parallelize (multi-thread); these are the target applications for Thread Level Speculation (TLS) • Results given here are without Thread Level Speculation (TLS)

The Parallelization Problem • Current automated parallelization software (parallel compilers) is limited • Parallel compilers are generally successful for scientific applications with high data parallelism/LLP and statically known dependencies (e.g. dense matrix computations). • Automated parallelization of general-purpose applications provides poor parallel performance, especially for integer applications, due to ambiguous data dependencies. Causes of ambiguous dependencies: • Significant pointer use: pointer aliasing (pointer disambiguation problem) • Dynamic loop limits • Complex control flow • Irregular array accesses • Inter-procedural dependencies • Ambiguous data dependencies limit extracted parallelism/performance: • Complicate static dependency analysis • Introduce imprecision into dependence relations • Force conservative, performance-degrading synchronization to safely handle potential dependencies. Parallelism may exist in the algorithm, but the code hides it. • Manual parallelization can provide good performance on a much wider range of applications, but: • Requires different initial program design/data structures/algorithms • Requires programmers with additional skills • Handling ambiguous dependencies present in general-purpose applications may still force conservative synchronization, greatly limiting parallel performance • Can hardware help the situation? Outcome: hardware-supported Thread Level Speculation (TLS).

Possible Limited Parallel Software Solution: Data Speculation & Thread Level Speculation (TLS) • Data speculation and Thread Level Speculation (TLS) enable parallelization without regard for data dependencies: • A normal sequential program is broken up into multiple parallel speculated threads. • Speculative threads are then run in parallel on multiple physical CPUs (e.g. CMP) and/or logical CPUs (e.g. SMT). • The approach assumes (speculates) that no data dependencies exist among the created threads, and therefore runs them in parallel. • The speculation hardware (TLS processor architecture) ensures correctness: no name/data dependence violations occur among speculative threads, even if dependencies actually exist. • Parallel software implications • Loop parallelization is now easily automated. • Ambiguous dependencies are resolved dynamically without conservative synchronization. • More “arbitrary” threads are possible (subroutines). • Synchronization is added only for performance. • Thread Level Speculation (TLS) hardware support mechanisms • Speculative thread control mechanism (e.g. speculative thread creation, restart, termination) • Five fundamental speculation hardware/memory system requirements for correct data/thread speculation (given later).

Subroutine Thread Speculation Speculated Thread (subroutine return code) Speculate Speculated threads communicate results through shared memory locations

Loop Iteration Speculative Threads • A simple example of a speculatively executed loop using Data/Thread Level Speculation (TLS): the original sequential (single-thread) loop is split into speculated threads, shown here with one iteration per speculated thread; later iterations are more speculative. • This is the most common application of TLS. • Speculated threads communicate results through shared memory locations.

Speculative Thread Creation in Hydra More Speculative Threads Register Passing Buffer (RPB)

Overview of Loop-Iteration Thread Speculation • Parallel regions (loop iterations) are annotated by the compiler, e.g. Begin_Speculation … End_Speculation. • The hardware uses these annotations to run loop iterations in parallel as speculated threads on a number of CPU cores (i.e. it assumes no data dependencies). • Each CPU core knows which loop iteration it is running; a later iteration is assigned a more speculated thread. • CPUs dynamically prevent data/name dependency violations: • “Later” iterations can’t use data before it is written by “earlier” iterations (prevents data dependency violations, RAW hazards). • “Earlier” iterations never see writes by “later” iterations (WAR hazards prevented). How? Memory renaming: multiple views of memory are created by the TLS hardware. • If a “later” iteration (more speculated thread) has used data that an “earlier” iteration (less speculated thread) writes, before that data is actually written (a data dependency violation, i.e. a RAW hazard, which must be detected by the TLS hardware), the later iteration is restarted. • All following iterations are halted and restarted as well. • All writes by the later iteration are discarded (undo speculated work). • Speculated threads communicate results through shared memory locations.
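The detect-and-restart behavior described above can be sketched as a small software model. This is a toy illustration only, not Hydra's hardware: the names `execute` and `run_speculative` are hypothetical, every iteration is assumed to run speculatively against the pre-loop memory, and violations are checked when an iteration commits rather than at the moment of the conflicting write. For simplicity each following iteration is checked individually instead of being restarted unconditionally.

```python
def execute(view, i, body):
    """Run iteration i against `view`, recording its read set and buffered writes."""
    reads, writes = set(), {}

    def load(addr):
        if addr in writes:          # a thread always sees its own earlier stores
            return writes[addr]
        reads.add(addr)             # remember reads that could turn out too early
        return view[addr]

    def store(addr, value):
        writes[addr] = value        # buffered: not yet visible to anyone else

    body(i, load, store)
    return reads, writes


def run_speculative(memory, n_iters, body):
    """Return (final_memory, restarts) for a loop run as speculated threads."""
    # Assumption: every iteration first runs speculatively on the pre-loop memory.
    speculated = [execute(memory, i, body) for i in range(n_iters)]
    committed = dict(memory)
    written_by_earlier = set()
    restarts = 0
    for i in range(n_iters):                  # commit strictly in program order
        reads, writes = speculated[i]
        if reads & written_by_earlier:        # RAW violation: value read too early
            restarts += 1
            # Discard the speculative work and re-execute on committed state.
            reads, writes = execute(committed, i, body)
        committed.update(writes)
        written_by_earlier.update(writes)     # adds the written addresses
    return committed, restarts


# Hypothetical loop bodies used to exercise the model.
def double(i, load, store):                   # independent iterations
    store(("a", i), 2 * load(("a", i)))


def accumulate(i, load, store):               # each iteration needs the previous sum
    store("sum", load("sum") + load(("a", i)))


mem = {("a", k): k + 1 for k in range(4)}
mem["sum"] = 0
doubled, doubled_restarts = run_speculative(mem, 4, double)
summed, summed_restarts = run_speculative(mem, 4, accumulate)
```

With independent iterations no restart is needed, while the accumulating loop restarts every iteration after the first and still ends with the sequential result. That is the trade-off the slide describes: speculation is always correct, but only profitable when violations are rare.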

Hydra’s Data & Thread Speculation Operations • A restart happens once a RAW hazard is detected by hardware. • Speculated threads must commit results in order (when no longer speculative).

Hydra Loop Compiling for Speculation Create Speculated Threads

Loop Execution with Thread Speculation: Data Dependency Violation (RAW Hazard) Handling Example • If a “later” iteration (more speculated thread) has used data that an “earlier” iteration (less speculated thread) writes, before that data is actually written (data dependency violation, RAW hazard), the later iteration is restarted. • All following iterations are halted and restarted as well. • All writes by the later iteration are discarded (undo speculated work). [Figure: an earlier (less speculative) thread and a later (more speculative) thread; the later thread reads a value too early, causing a data dependency violation (RAW hazard).] • Speculated threads communicate results through shared memory locations.

Data Hazard/Dependence Classification • I and J are two accesses to a shared name (a register or memory location), with I before J in program order. • I (Write), J (Read): true data dependence; Read after Write (RAW) hazard if the data dependence is violated. e.g. I: S.D. F4, 0(R1) and J: L.D. F6, 0(R1) • I (Read), J (Write): a name dependence (antidependence); Write after Read (WAR) hazard if the antidependence is violated. e.g. I: L.D. F6, 0(R1) and J: S.D. F4, 0(R1) • I (Write), J (Write): a name dependence (output dependence); Write after Write (WAW) hazard if the output dependence is violated. e.g. two stores, S.D. F4, 0(R1) and S.D. F6, 0(R1) • I (Read), J (Read): no dependence; Read after Read (RAR) is not a hazard. e.g. two loads, L.D. F6, 0(R1) and L.D. F4, 0(R1) • Here, speculated threads communicate results through shared memory locations.
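The classification above amounts to a four-entry lookup on the access types of I and J. The sketch below is illustrative only; the function name `classify` and the `TLS_MECHANISM` table are hypothetical, and the table simply restates the TLS responses listed elsewhere in this presentation.

```python
def classify(first, second):
    """Classify two accesses ('R' or 'W') to the same name, given in program order."""
    hazards = {
        ("W", "R"): "RAW",   # true data dependence
        ("R", "W"): "WAR",   # antidependence (a name dependence)
        ("W", "W"): "WAW",   # output dependence (a name dependence)
        ("R", "R"): None,    # read after read: not a hazard
    }
    return hazards[(first, second)]


# How a TLS system responds to each hazard, as summarized in this presentation.
TLS_MECHANISM = {
    "RAW": "forward data, or detect the violation and restart",
    "WAR": "memory renaming (multiple views of memory)",
    "WAW": "commit speculative writes in program order",
}

store_then_load = classify("W", "R")   # e.g. S.D. F4, 0(R1) followed by L.D. F6, 0(R1)
load_then_store = classify("R", "W")   # e.g. L.D. F6, 0(R1) followed by S.D. F4, 0(R1)
```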

Speculative Data Access in Speculated Threads [Figure: accesses to the same memory location by thread i (less speculated) and thread i+1 (more speculated), classified as RAW, WAR, and WAW, both for access in correct program order (i before i+1) and for reversed access order (i+1 before i). In the reversed case, a read by i+1 before the write by i is a data dependency violation (detect and restart), and a write by i+1 is not seen by i.] Speculated threads communicate results through shared memory locations.

Speculative Data Access in Speculated Threads To provide the desired (correct) memory behavior for speculative data access, the data/thread speculation hardware must provide: 1. A method for detecting true memory data dependencies, in order to determine when a dependency has been violated (RAW hazard). 2. A method for restarting (backing up and re-executing) speculative loads and any instructions that may be dependent upon them when the load causes a violation. 3. A method for buffering any data written during a speculative region of a program (speculative results) so that it may be discarded when a violation (i.e. a RAW hazard) occurs, or permanently committed at the right time (i.e. when the thread is no longer speculative) and in the correct order (to prevent WAW hazards).

Five Fundamental Speculation Hardware Requirements For Correct Data/Thread Speculation (TLS) 1. Forward data between parallel threads (prevent RAW). A speculative system must be able to forward shared data quickly and efficiently from an earlier thread running on one processor to a later thread running on another. 2. Detect when reads occur too early (RAW hazard occurred). If a data value is read by a later thread and subsequently written by an earlier thread, the hardware must notice that the read retrieved incorrect data, since a true dependence violation (RAW hazard) has occurred. 3. Safely discard speculative state after violations (RAW hazards). All speculative changes to the machine state must be discarded after a violation, while no permanent machine state may be lost in the process. 4. Retire speculative writes in the correct order (prevent WAW hazards). Once speculative threads have completed successfully (and are no longer speculative), their state must be added (committed) to the permanent state of the machine in the correct program order, considering the original sequencing of the threads. 5. Provide memory renaming (prevent WAR hazards). The speculative hardware must ensure that the older thread cannot “see” any changes made by later (i.e. more speculative) threads, as these would not have occurred yet (i.e. future computation) in the original sequential program (i.e. multiple views of memory).

Speculative Hardware/Memory Requirements 1-2 [Figure: less speculated thread i and more speculated thread i+1. Requirement 1: forward data between the threads (prevent RAW). Requirement 2: detect when a read is too early (RAW hazard or violation).]

Speculative Hardware/Memory Requirements 3-4 [Figure: Requirement 3: once a RAW hazard has occurred and been detected, the more speculated thread discards its speculative state and restarts. Requirement 4: commit speculative writes in correct program order (prevent WAW hazards).]

Speculative Hardware/Memory Requirement 5: Memory Renaming [Figure: less speculated thread i, more speculated thread i+1, and even more speculated thread i+2. A write to X by i+1 is not visible to less speculative threads (thread i here), i.e. no WAR hazard, but it is visible to the more speculative thread i+2.] Memory renaming prevents WAR hazards.

Hydra Thread Level Speculation (TLS) Hardware [Figure: per-core speculation coprocessor, Data L1 caches with modified cache flags, and L2 cache speculation write buffers (one per core), which are needed to hold speculative data/state.]

Hydra Thread Level Speculation (TLS) Support: How the Five Fundamental TLS Hardware Requirements Are Met (Summary) [Table not recovered; surviving annotations: “multiple memory views or memory renaming” and “i.e. restart”.]

L1 Cache Modifications To Support Speculation: Data L1 Cache Tag Details [Figure: the additional Data L1 cache tag bits, numbered 1-4; one of them records writes of more speculated threads.]

L2 Speculation Write Buffer Details • The L2 speculation write buffers hold speculative state (i.e. stores). • The L2 speculation write buffers are committed to L2 (which holds permanent non-speculative state) in correct program order (when no longer speculative), to prevent WAW hazards (basic requirement 4). • Speculative loads are shown next.

The Operation of Speculative Loads • Data L1 hit: the load is satisfied by the local Data L1 cache. • On a local Data L1 miss: first check own write buffer, then the write buffers of less speculated (earlier) threads, then the L2 cache (checked last). This meets requirement 1: forward results (prevent RAW). • On a local Data L1 miss, do not check the write buffers of more speculated (later) threads: later writes must not be visible (otherwise WAR). This meets requirement 5: multiple memory views (memory renaming). • This operation of speculative loads provides multiple memory views (memory renaming), in which more speculative writes are not visible to less speculative threads; this prevents WAR hazards (Requirement 5) and satisfies data dependencies (forward data, Requirement 1).

Speculative Load Operation: Reading L2 Cache Speculative Buffers (similar to the previous slide) • On a local Data L1 miss: first check own write buffer, then less speculated (earlier) write buffers, then the L2 cache. This meets requirement 1: forward results (prevent RAW). • On a local Data L1 miss, do not check more speculated (later) write buffers: later writes are not visible (otherwise WAR). This meets requirement 5: multiple memory views (memory renaming).

The Operation of Speculative Stores • On a store (i.e. a Data L1 cache write hit): write to L1 and to own L2 speculation write buffer. • The write is also seen by more speculated threads, similar to invalidate cache coherency protocols. This provides RAW detection (Req. 2): detect RAW violations and restart (this satisfies basic speculative hardware/memory requirements 2-3). • L2 speculation write buffers are committed to L2 (which holds permanent non-speculative state) in correct program order (when no longer speculative). This satisfies fundamental TLS requirement 4 (prevent WAW).

Hydra’s Handling of Five Basic Speculation Hardware Requirements For Correct Data/Thread Speculation 1. Forward data between parallel threads (RAW). • When a speculative thread writes data over the write bus, all more-speculative threads that may need the data have their current copy of that cache line in the primary cache (Data L1 cache) invalidated. • This is similar to the way the system works during non-speculative operation (invalidate cache coherency protocol). • If any of the threads subsequently need the new speculative data forwarded to them, they will miss in their primary cache and access the secondary cache (a speculative load, as seen earlier). • The speculative data contained in the write buffers of the current or older threads (own L2 write buffer and less speculated L2 buffers) replaces data returned from the secondary cache on a byte-by-byte basis just before the composite line is returned to the processor and primary cache.
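The byte-by-byte merge in the last bullet can be illustrated with a short sketch. It is a simplified model under stated assumptions, not Hydra's buffer logic: the function name `speculative_line_read` is hypothetical, a single cache line is modeled, and each write buffer is represented as a mapping from byte offset to value, with index 0 being the least speculative thread.

```python
def speculative_line_read(l2_line, write_buffers, thread):
    """Build the composite line that `thread` sees after a Data L1 miss.

    l2_line       -- bytes holding the committed (non-speculative) line from L2
    write_buffers -- one dict per thread, byte offset -> value; index 0 is oldest
    thread        -- index of the thread performing the speculative load
    """
    line = bytearray(l2_line)
    # Overlay buffers from the oldest thread up to the reader itself, so the
    # most recent eligible write to each byte wins. Buffers of more speculative
    # threads are never consulted (memory renaming, requirement 5).
    for t in range(thread + 1):
        for offset, value in write_buffers[t].items():
            line[offset] = value
    return bytes(line)


committed_line = bytes([0, 0, 0, 0])
buffers = [{0: 1}, {0: 2, 1: 5}, {2: 9}]      # threads 0, 1, 2
view_of_thread_0 = speculative_line_read(committed_line, buffers, 0)
view_of_thread_1 = speculative_line_read(committed_line, buffers, 1)
view_of_thread_2 = speculative_line_read(committed_line, buffers, 2)
```

Each thread ends up with its own view of the same line: thread 0 never observes the bytes written by threads 1 and 2, while thread 2 observes everything written before it.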

Hydra’s Handling of Five Basic Speculation Hardware Requirements For Correct Data/Thread Speculation 2. Detect when reads occur too early (detect RAW hazards). • Primary cache (Data L1) read bits are set to mark any reads that may cause violations. • Subsequently, if a write to that address from an earlier (less speculated) thread invalidates the address, a violation is detected, and the thread is restarted. 3. Safely discard speculative state after violations (RAW hazards): discard speculative state in the Data L1 and the L2 speculation buffers. • Since all permanent machine state in Hydra is always maintained within the secondary cache, anything in the primary caches and secondary cache speculation buffers may be invalidated at any time without risking a loss of permanent state. • As a result, any lines in the primary cache containing speculative data (marked with a special modified bit) may simply be invalidated all at once to clear any speculative state from a primary cache. • In parallel with this operation, the secondary cache buffer for the thread may be emptied to discard any speculative data written by the thread.
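A toy model of the read and modified bits makes the two mechanisms above concrete. The class `SpeculativeL1` and its method names are hypothetical, it tracks only per-line bits (no data), and it assumes a load that follows the thread's own store to the same line does not need a read bit; the real tag logic is more detailed than this.

```python
class SpeculativeL1:
    """Toy primary (Data L1) cache that tracks only per-line speculation bits."""

    def __init__(self):
        self.lines = {}   # line address -> {"read": bool, "modified": bool}

    def _line(self, addr):
        return self.lines.setdefault(addr, {"read": False, "modified": False})

    def load(self, addr):
        line = self._line(addr)
        if not line["modified"]:      # assumption: reading our own store is safe
            line["read"] = True       # this read may have happened too early

    def store(self, addr):
        self._line(addr)["modified"] = True   # line now holds speculative data

    def write_from_earlier_thread(self, addr):
        """Invalidate the line; return True if this exposes a RAW violation."""
        line = self.lines.pop(addr, None)
        return line is not None and line["read"]

    def discard_speculative_state(self):
        """Invalidate speculatively written lines and clear all read bits."""
        self.lines = {
            addr: {"read": False, "modified": False}
            for addr, line in self.lines.items()
            if not line["modified"]
        }


cache = SpeculativeL1()
cache.load(0x40)
violation = cache.write_from_earlier_thread(0x40)      # read bit was set
no_violation = cache.write_from_earlier_thread(0x80)   # line was never read
cache.load(0x200)
cache.store(0x300)
cache.discard_speculative_state()
```

Because permanent state lives only in the secondary cache, the discard step here is nothing more than dropping lines and clearing bits, which is why it can be done all at once.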

Hydra’s Handling of Five Basic Speculation Hardware Requirements For Correct Data/Thread Speculation 4. Retire speculative writes in the correct order (prevent WAW hazards). • Separate secondary cache speculation buffers are maintained for each thread. As long as these are drained (committed) into the secondary (L2) cache in the original program sequence of the threads (when the threads/work are no longer speculative), they will reorder speculative memory references correctly. 5. Provide memory renaming (prevent WAR hazards) through multiple memory views. • Each processor can only read data written by itself or earlier threads (less speculated threads) when reading its own primary cache or the secondary cache speculation buffers (as seen earlier in the speculative load operation). • Writes from later (more speculative) threads don’t cause immediate invalidations in the primary cache, since these writes should not be visible to earlier (less speculative) threads. • Why pre-invalidate? These “ignored” invalidations (i.e. those generated by more speculative threads) are recorded using an additional pre-invalidate primary cache bit associated with each line, because they must be processed before a different speculative or non-speculative thread executes on this processor. • If future threads have written to a particular line in the primary cache, the pre-invalidate bit for that line is set. When the current thread completes, these bits allow the processor to quickly simulate the effect of all stored invalidations caused by all writes from later processors all at once, before a new thread begins execution on this processor.
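Requirement 4 can be sketched as a queue of per-thread write buffers that may only be drained from the head. The class `CommitQueue` and its methods are hypothetical names for illustration; L2 is modeled as a plain dictionary and the buffers as dictionaries of address to value.

```python
class CommitQueue:
    """Per-thread speculation write buffers drained into L2 in program order."""

    def __init__(self, n_threads):
        self.buffers = [{} for _ in range(n_threads)]   # index 0 = least speculative
        self.finished = [False] * n_threads
        self.head = 0                                   # oldest uncommitted thread

    def store(self, thread, addr, value):
        self.buffers[thread][addr] = value              # speculative, not yet in L2

    def finish(self, thread, l2):
        """Mark a thread complete and commit every buffer that is now in order."""
        self.finished[thread] = True
        while self.head < len(self.buffers) and self.finished[self.head]:
            l2.update(self.buffers[self.head])          # drain into permanent state
            self.buffers[self.head].clear()
            self.head += 1


l2 = {"x": 0}
queue = CommitQueue(2)
queue.store(0, "x", 1)
queue.store(1, "x", 2)
queue.finish(1, l2)            # the later thread finishes first
x_after_early_finish = l2["x"]
queue.finish(0, l2)            # now both buffers drain, oldest first
x_after_commit = l2["x"]
```

Even though the more speculative thread finishes first, its write reaches L2 last, so the final value is the one the sequential program would have produced.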

Thread Speculation Performance • Results representative of entire uniprocessor applications • Simulated with accurate modeling of Hydra’s memory and hardware speculation support. (No TLS)

Hydra Conclusions • Hydra offers a number of advantages: • Good performance on parallel applications. • Promising performance on difficult-to-parallelize sequential (single-threaded) applications using data/Thread Level Speculation (TLS) mechanisms. • Scalable, modular design. • Low-overhead hardware support for speculative thread parallelism (compared to other TLS architectures) that nevertheless greatly increases the number of parallelizable applications (the main goal of TLS).

Other Thread Level Speculation (TLS) Efforts: Wisconsin Multiscalar (1995) • This CMP-based design proposed the first reasonable hardware to implement TLS. • Unlike Hydra, Multiscalar implements a ring-like network between all of the processors to allow direct register-to-register communication. • Along with hardware-based thread sequencing, this type of communication allows much smaller threads to be exploited at the expense of more complex processor cores. • The designers proposed two different speculative memory systems to support the Multiscalar core. • The first was a unified primary cache, or address resolution buffer (ARB). Unfortunately, the ARB has most of the complexity of Hydra’s secondary cache buffers at the primary cache level, making it difficult to implement. • Later, they proposed the speculative versioning cache (SVC). • The SVC uses write-back primary caches to buffer speculative writes in the primary caches, using a sophisticated coherence scheme.

Other Thread Level Speculation (TLS) Efforts: Carnegie-Mellon Stampede • This CMP-with-TLS proposal is very similar to Hydra, including the use of software speculation handlers. • However, the hardware is simpler than Hydra’s. • The design uses write-back primary caches to buffer writes (similar to those in the SVC) and sophisticated compiler technology to explicitly mark all memory references that require forwarding to another speculative thread. • Their simplified SVC must drain its speculative contents as each thread completes, unfortunately resulting in heavy bursts of bus activity.

Other Thread Level Speculation (TLS) Efforts: MIT M-machine • This CMP design has three processors that share a primary cache and can communicate register-to-register through a crossbar. • Each processor can also switch dynamically among several threads (fine-grain multi-threaded, not SMT). • As a result, the hardware connecting processors together is quite complex and slow. • However, programs executed on the M-machine can be parallelized using very fine-grain mechanisms that are impossible on an architecture that shares outside of the processor cores, like Hydra. • Performance results show that on typical applications extremely fine-grained parallelization is often not as effective as parallelism at the levels that Hydra can exploit. The overhead incurred by frequent synchronizations reduces the effectiveness.