Reinforcement Learning

Explore the concepts of Supervised vs. Unsupervised Learning, Sequential Decision Problems, Model Structures, Value Iteration, Utility Functions, Dynamic Programming, and Reinforcement Learning in this detailed introduction by Alp Sardağ.


Presentation Transcript

Reinforcement Learning Introduction Presented by Alp Sardağ

Supervised vs Unsupervised Learning • Any situation in which both the inputs and outputs of a component can be perceived is called Supervised Learning. • Learning when there is no hint at all about the correct outputs is called Unsupervised Learning. • Reinforcement Learning lies in between: the agent receives some evaluation of its action but is not told the correct action.

Sequential Decision Problems • In single-decision problems, the utility of each action's outcome is well known. [Diagram: from state Si, actions Aj1, Aj2, ..., Ajn lead to states Sj1, Sj2, ..., Sjn with utilities Uj1, Uj2, ..., Ujn; choose the next action with Max(U).]

Sequential Decision Problems • In sequential decision problems, the agent's utility depends on a sequence of actions. • The difference is that what is returned is not a single action but a policy, arrived at by calculating the utilities for each state.

Example • The available actions (A): North, South, East and West. • P(IE | A) = 0.8; P(¬IE | A) = 0.2 (* IE: the intended action takes effect). • Terminal States: The environment terminates when the agent reaches one of the states marked +1 or –1. • Model: The set of probabilities associated with the possible transitions between states after any given action. The notation $M^a_{ij}$ means the probability of reaching state j if action a is done in state i. (In an accessible environment this is an MDP: the next state depends only on the current state and the action.)

[Figure: Model obtained by simulation. A grid with terminal states +1 and -1 and transition probabilities (1.0, 0.5, 0.33) marked on the transitions between states.]

Example • There is no utility for the states other than the terminal states (T). • We have to base the utility function on a sequence of states rather than on a single state, e.g. $U_{ex}(s_1,\dots,s_n) = -\frac{1}{25}\,n + U(T)$. • To select the next action: consider sequences as one long action and apply the basic maximum expected utility principle to sequences. • $\max_a EU(a \mid i) = \max_a \sum_j M^a_{ij}\, U(j)$ • Result: the first action of the optimal sequence.

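Drawback • Consider the action sequence [North, East] starting from state (3,2). Because an action has its intended effect only with probability 0.8, the agent may not be in the state the fixed sequence assumes after the first step. • It is therefore better to calculate a utility function for each state.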

VALUE ITERATION • The basic idea is to calculate the utility of each state, U(state), and then use the state utilities to select an optimal action in each state. • Policy: A complete mapping from states to actions. • H(state, policy): The history tree starting from the state and taking actions according to the policy. • $U(i) \equiv EU(H(i,\mathrm{policy}^*) \mid M) \equiv \sum P(H(i,\mathrm{policy}^*) \mid M)\, U_h(H(i,\mathrm{policy}^*))$

The Property of Utility Function • For a utility function on states (U) to make sense, we require that the utility function on histories ($U_h$) have the property of separability: $U_h([s_0,s_1,\dots,s_n]) = f(s_0, U_h([s_1,\dots,s_n]))$ • The simplest form of separable utility function is additive: $U_h([s_0,s_1,\dots,s_n]) = R(s_0) + U_h([s_1,\dots,s_n])$, where R is called the Reward function. • Under additivity, the utility of a history is the sum of the rewards from its first state until the terminal state is reached. *Notice: Additivity was implicit in our use of path cost functions in heuristic search algorithms.

Refreshing • We have to base the utility function on a sequence of states rather than on a single state, e.g. $U_{ex}(s_1,\dots,s_n) = -\frac{1}{25}\,n + U(T)$. • In that case R(si) = -1/25 for non-terminal states, +1 for state (4,3) and –1 for state (4,2).
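A minimal sketch of the additive form, using the reward values from the slide. The function names and the sample history are made up for illustration; the history follows the [North, East] sequence from (3,2) mentioned earlier and assumes both moves succeed.

```python
from fractions import Fraction

STEP_REWARD = Fraction(-1, 25)               # R(s) for every non-terminal state
TERMINAL_REWARD = {(4, 3): 1, (4, 2): -1}    # R(s) for the two terminal states


def reward(state):
    """Reward function R as given on the slide."""
    return TERMINAL_REWARD.get(state, STEP_REWARD)


def history_utility(history):
    """Additive utility: Uh([s0, ..., sn]) = R(s0) + Uh([s1, ..., sn])."""
    if not history:
        return 0
    return reward(history[0]) + history_utility(history[1:])


# Hypothetical history: two non-terminal states, then the +1 terminal state.
example = [(3, 2), (3, 3), (4, 3)]
print(history_utility(example))              # two step costs plus the terminal reward
```

Exact fractions are used so that the -1/25 step cost is not blurred by floating-point rounding.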

Utility of States • Given a separable utility function $U_h$, the utility of a state can be expressed in terms of the utility of its successors: $U(i) = R(i) + \max_a \sum_j M^a_{ij}\, U(j)$ • The above equation is the basis for dynamic programming.

Dynamic Programming • There are two approaches. • The first approach starts by calculating the utilities of all states at step n-1 in terms of the utilities of the terminal states, then at step n-2, and so on. • The second approach approximates the utilities of states to any degree of accuracy using an iterative procedure. This is used because in most decision problems the environment histories are potentially of unbounded length.

Algorithm
Function DP(M, R) Returns Utility Function
Begin
  // Initialization
  U = R; U' = R
  Repeat
    U ← U'
    For Each State i do
      U'[i] ← R[i] + max_a Σ_j M^a_ij U[j]
    end
  Until |U' − U| < ε
End
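The DP procedure above can be sketched in Python as follows. This is an illustration under stated assumptions, not the presenter's code: terminal states are assumed to keep U = R, the stopping test is read as the largest absolute change falling below a small threshold, and the three-state problem at the bottom is hypothetical.

```python
def value_iteration(M, R, terminal, eps=1e-9):
    """Iterate U'[i] = R[i] + max_a sum_j M[a][i][j] * U[j] until U stops changing.

    M[a][i][j] is the probability of reaching state j from state i under action a,
    R[i] is the reward of state i, and `terminal` is the set of terminal states.
    """
    n_states, n_actions = len(R), len(M)
    U_new = list(R)                      # initialization: U' = R
    while True:
        U = list(U_new)                  # U <- U'
        for i in range(n_states):
            if i in terminal:            # assumption: a terminal state keeps U = R
                continue
            U_new[i] = R[i] + max(
                sum(M[a][i][j] * U[j] for j in range(n_states))
                for a in range(n_actions)
            )
        # assumption: the stopping test is the largest absolute change
        if max(abs(x - y) for x, y in zip(U_new, U)) < eps:
            return U_new


# Hypothetical 3-state problem: state 0 is the start, states 1 and 2 are terminal.
R = [-0.04, 1.0, -1.0]
M = [
    [[0.0, 0.8, 0.2], [0, 0, 0], [0, 0, 0]],   # action 0: mostly reaches the +1 state
    [[0.0, 0.2, 0.8], [0, 0, 0], [0, 0, 0]],   # action 1: mostly reaches the -1 state
]
U = value_iteration(M, R, terminal={1, 2})
print(U)
```

Without a discount factor the iteration is only guaranteed to settle when every policy eventually reaches a terminal state, which holds for this toy problem.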

Policy • Policy Function: $\mathrm{policy}^*(i) = \arg\max_a \sum_j M^a_{ij}\, U(j)$
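Once utilities are known, the policy function picks, in each state, the action with the highest expected successor utility. A small sketch, assuming the model is stored as `M[a][i][j]`; the function name, the toy model, and the utility values are hypothetical.

```python
def greedy_policy(M, U, terminal=()):
    """Return policy[i] = argmax_a sum_j M[a][i][j] * U[j] (None for terminal states)."""
    n_states, n_actions = len(U), len(M)
    policy = []
    for i in range(n_states):
        if i in terminal:
            policy.append(None)          # no action is chosen in a terminal state
            continue
        expected = [
            sum(M[a][i][j] * U[j] for j in range(n_states))
            for a in range(n_actions)
        ]
        policy.append(max(range(n_actions), key=lambda a: expected[a]))
    return policy


# Hypothetical model: action 0 mostly reaches state 1 (+1), action 1 mostly state 2 (-1).
M = [
    [[0.0, 0.8, 0.2], [0, 0, 0], [0, 0, 0]],
    [[0.0, 0.2, 0.8], [0, 0, 0], [0, 0, 0]],
]
U = [0.56, 1.0, -1.0]                    # assumed utilities for the three states
policy = greedy_policy(M, U, terminal={1, 2})
print(policy)
```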

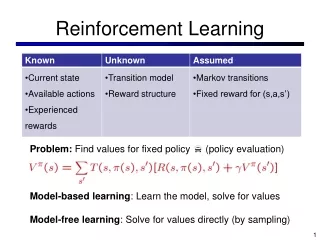

Reinforcement Learning • The task is to use rewards and punishments to learn a successful agent function (policy). • Difficult: the agent is never told what the right actions are, nor which reward is due to which action. The agent starts with no model and no utility function. • In many complex domains, RL is the only feasible way to train a program to perform at high levels.

Example: An agent learning to play chess • Supervised learning: it is very hard for a teacher to supply accurate evaluations for a large number of positions so that the program can be trained directly from examples. • In RL the program is told when it has won or lost, and can use this information to learn an evaluation function.

Two Basic Designs • The agent learns a utility function on states (or histories) and uses it to select actions that maximize the expected utility of their outcomes. • The agent learns an action-value function giving the expected utility of taking a given action in a given state. This is called Q-learning. The agent does not need to know the outcomes of its actions, so no model is required.

Active & Passive Learner • A passive learner simply watches the world going by and tries to learn the utility of being in various states. • An active learner must also act using the learned information, and can use its problem generator to suggest explorations of unknown portions of the environment.

Comparison of Basic Designs • The policy for an agent that learns a utility function on states is: $\mathrm{policy}^*(i) = \arg\max_a \sum_j M^a_{ij}\, U(j)$ • The policy for an agent that learns an action-value function is: $\mathrm{policy}^*(i) = \arg\max_a Q(a,i)$
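The practical difference is that the action-value policy needs only a table lookup and no transition model. A short sketch, assuming Q is stored as a dictionary keyed by (action, state); the function name and the Q-values below are made up for illustration.

```python
def q_policy(Q, state, actions):
    """Return argmax_a Q(a, state); no transition model is consulted."""
    return max(actions, key=lambda a: Q[(a, state)])


ACTIONS = ["North", "South", "East", "West"]

# Hypothetical Q-values for state (3,2); real values would be learned from rewards.
Q = {
    ("North", (3, 2)): 0.62,
    ("South", (3, 2)): 0.31,
    ("East", (3, 2)): -0.70,
    ("West", (3, 2)): 0.55,
}
best = q_policy(Q, (3, 2), ACTIONS)
print(best)
```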

Passive Learning [Figure: (a) a simple stochastic environment, with transition probabilities 1.0, 0.5 and 0.33 marked on the transitions; (b) $M_{ij}$ is provided in passive learning (PL), $M^a_{ij}$ is provided in active learning (AL); (c) the exact utility values.]

Calculation of Utility on States for PL • Dynamic Programming (ADP): $U(i) \leftarrow R(i) + \sum_j M_{ij}\, U(j)$. Because the agent is passive, there is no maximization over actions. • Temporal Difference Learning: $U(i) \leftarrow U(i) + \alpha\,(R(i) + U(j) - U(i))$, where $\alpha$ is the learning rate. This makes U(i) agree more closely with its observed successor.
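The temporal-difference rule can be sketched as a one-line update applied to each observed transition in a training sequence. The state names, rewards, and learning rate below are hypothetical, and the terminal state's utility is assumed to be fixed at its reward.

```python
def td_update(U, R, i, j, alpha):
    """Apply U(i) <- U(i) + alpha * (R(i) + U(j) - U(i)) for one observed step i -> j."""
    U[i] = U[i] + alpha * (R[i] + U[j] - U[i])


def td_learn_sequence(U, R, sequence, alpha=0.5):
    """Run the TD update over every consecutive pair of states in one training sequence."""
    for i, j in zip(sequence, sequence[1:]):
        td_update(U, R, i, j, alpha)
    return U


# Hypothetical passive run: a -> b -> goal
R = {"a": -0.04, "b": -0.04, "goal": 1.0}
U = {"a": 0.0, "b": 0.0, "goal": 1.0}    # assumption: terminal utility equals its reward
td_learn_sequence(U, R, ["a", "b", "goal"], alpha=0.5)
print(U)
```

Note that after a single pass only "b" has felt the terminal reward; the information reaches "a" on later training sequences, which is one way to see why TD needs more transitions than ADP.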

Comparison of ADP & TD • ADP converges faster than TD because ADP knows the current environment model. • ADP uses the full model; TD uses no model, just information about the connectedness of states from the current training sequence. • TD adjusts a state to agree with its observed successor, whereas ADP adjusts the state to agree with all of its successors. This difference disappears when the effects of TD adjustments are averaged over a large number of transitions. • Full ADP may be intractable when the number of states is large. The prioritized-sweeping heuristic prefers to make adjustments to states whose likely successors have just undergone a large adjustment in their own utility.

Calculation of Utility on States for AL • Dynamic Programming (ADP): $U(i) \leftarrow R(i) + \max_a \sum_j M^a_{ij}\, U(j)$ • Temporal Difference Learning: $U(i) \leftarrow U(i) + \alpha\,(R(i) + U(j) - U(i))$

Problem of Exploration in AL • An active learner acts using the learned information, and can use its problem generator to suggest explorations of unknown portions of the environment. • There is a trade-off between immediate good and long-term well-being. • One idea: change the constraint equation so that it assigns a higher utility estimate to relatively unexplored action-state pairs. • $U(i) \leftarrow R(i) + \max_a F\big(\sum_j M^a_{ij}\, U(j),\; N(a,i)\big)$, where $F(u,n) = R^+$ if $n < N_e$, and $F(u,n) = u$ otherwise.
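A sketch of how the exploration function F changes the backup. The slide does not define R+ and Ne, so they are read here as an optimistic reward estimate and a minimum number of tries per action-state pair; this reading, the function names, and all numbers below are assumptions.

```python
def exploration_value(u, n, r_plus, n_e):
    """F(u, n): return the optimistic value R+ until the pair has been tried Ne times."""
    return r_plus if n < n_e else u


def optimistic_backup(i, M, R, U, N, r_plus, n_e):
    """U(i) <- R(i) + max_a F(sum_j M[a][i][j] * U[j], N[a][i])."""
    n_states = len(U)
    return R[i] + max(
        exploration_value(
            sum(M[a][i][j] * U[j] for j in range(n_states)), N[a][i], r_plus, n_e
        )
        for a in range(len(M))
    )


# Hypothetical model: action 0 mostly reaches state 1 (+1), action 1 mostly state 2 (-1).
M = [
    [[0.0, 0.8, 0.2], [0, 0, 0], [0, 0, 0]],
    [[0.0, 0.2, 0.8], [0, 0, 0], [0, 0, 0]],
]
R = [-0.04, 1.0, -1.0]
U = [0.0, 1.0, -1.0]
N = [[10, 0, 0], [0, 0, 0]]              # N[a][i]: action 0 tried 10 times in state 0

u0 = optimistic_backup(0, M, R, U, N, r_plus=2.0, n_e=5)
print(u0)                                # the untried action 1 is valued at R+
```

With these counts the untried action looks better than the well-explored one, so an agent acting greedily on the optimistic estimate would try it next.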

Learning an Action-Value Function • The function assigns an expected utility to taking a given action in a given state. Q(a,i): the expected utility of taking action a in state i. • Like condition-action rules, action values suffice for decision making. • Unlike condition-action rules, they can be learned directly from reward feedback.

Calculation of Action-Value Function • Dynamic Programming: $Q(a,i) \leftarrow R(i) + \sum_j M^a_{ij}\, \max_{a'} Q(a',j)$ • Temporal Difference Learning: $Q(a,i) \leftarrow Q(a,i) + \alpha\,(R(i) + \max_{a'} Q(a',j) - Q(a,i))$, where $\alpha$ is the learning rate.
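The temporal-difference form needs no model: each observed transition updates one table entry. A minimal sketch, assuming Q is a dictionary keyed by (action, state) with unseen entries defaulting to 0; the state names, action names, and numbers are hypothetical.

```python
from collections import defaultdict


def q_update(Q, a, i, j, reward_i, actions, alpha):
    """Apply Q(a,i) <- Q(a,i) + alpha * (R(i) + max_a' Q(a',j) - Q(a,i))."""
    best_next = max(Q[(a2, j)] for a2 in actions)
    Q[(a, i)] += alpha * (reward_i + best_next - Q[(a, i)])


ACTIONS = ["North", "East"]
Q = defaultdict(float)                   # unseen (action, state) pairs start at 0
Q[("East", "s1")] = 1.0                  # assumed value already learned for state s1

# One observed step: action North taken in s0 (reward -0.04), landing in s1.
q_update(Q, "North", "s0", "s1", reward_i=-0.04, actions=ACTIONS, alpha=0.5)
print(Q[("North", "s0")])
```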

Question & The Answer that Refused to be Found • Is it better to learn a utility function or to learn an action-value function?