ERSDAC Research Initiatives and Network Integration Agenda

Discover how ERSDAC collaborates with international partners like NASA for Earth remote sensing data analysis research. Learn about large-volume data transfer, future challenges, and network infrastructure.

Presentation Transcript

23rd APAN Meeting
ASTER Data Transfer System
Earth Remote Sensing Data Analysis Center
Kunjuro Omagari
ERSDAC

Agenda
• Research Purposes
• Network Structure
• Data Transfer / Archive
• Data Transfer / Distribution
• Future Challenges
• Summary

Research Purposes - International cooperation -
• NASA Goddard Space Flight Center (GSFC/US)
• Jet Propulsion Laboratory (JPL/US)
• USGS / National Center for Earth Resources Observation & Science (EROS/US)
• ERSDAC/JAPAN

Research Purposes - Domestic cooperation -
• AIST/JAPAN
• ERSDAC/JAPAN
Transfer all ASTER L0 data to AIST for research and development of the AIST GEO Grid supercomputer system.

Research Purposes - Reduced distribution time -
• Carry out research and development of a system that reduces the time between observation and data acquisition by researchers, and provide the infrastructure for it
  ・Time between observation and data acquisition
  ・Old system: about 14 days (by sending a tape)
  ・Target: about 4 days (by network transfer)

Research Purposes - Large-volume data transfer -
• Amount of received data (NASA -> ERSDAC): 500 observed scenes/day, about 60 GB of Level 0 data/day
• Amount of sent data (ERSDAC -> EROS): 500 scenes/day, about 60 GB of processed Level 1 data/day

Research Purposes - Large-volume data transfer -
• Transfer data for research and development of supercomputer technology (AIST GEO Grid System)
• 800 GB/day of ASTER L0 data transfer in operation
• Transfer newly arrived ASTER L0 data
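To put the daily volumes on these two slides in terms of link capacity, the sketch below converts GB/day into an average bit rate. It assumes 1 GB = 10^9 bytes and traffic spread evenly over 24 hours; real transfers are burstier, so peak rates will be higher. The names `average_mbps` and `FLOWS` are illustrative only.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 s
ACCESS_LINE_MBPS = 1000         # 1 Gbps access line (changed from 100 Mbps in 2006/Nov)


def average_mbps(gigabytes_per_day: float) -> float:
    """Average rate in Mbit/s if the daily volume were spread evenly over 24 h."""
    bits_per_day = gigabytes_per_day * 10**9 * 8  # assumes 1 GB = 10**9 bytes
    return bits_per_day / SECONDS_PER_DAY / 10**6


# Daily volumes quoted on the slides (GB/day)
FLOWS = {
    "NASA -> ERSDAC, Level 0": 60,
    "ERSDAC -> EROS, Level 1": 60,
    "ERSDAC -> AIST, Level 0": 800,
}

for name, gb in FLOWS.items():
    rate = average_mbps(gb)
    share = 100 * rate / ACCESS_LINE_MBPS
    print(f"{name}: {gb} GB/day = about {rate:.1f} Mbit/s ({share:.1f}% of 1 Gbps)")
```

By this estimate, 60 GB/day averages about 5.6 Mbit/s and 800 GB/day about 74 Mbit/s, before protocol overhead. On the earlier 100 Mbps line, the 800 GB/day flow alone would have averaged roughly three quarters of capacity.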

Research Purposes - Support for mission management -
• Send and receive observation schedules etc. (FTP)
• Receive satellite telemetry data (GRE tunnel + multicast)
• Voice hotline (VoIP)
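On the receiving host, getting multicast telemetry generally means joining the multicast group on a UDP socket; the GRE tunnel itself is handled by the routers. The sketch below shows that step with the standard `IP_ADD_MEMBERSHIP` socket option. The helper names, group address, and port are hypothetical; the real telemetry addresses are not given on the slide.

```python
import ipaddress
import socket
import struct


def make_membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Build the ip_mreq structure (group address + local interface) for IP_ADD_MEMBERSHIP."""
    if not ipaddress.IPv4Address(group).is_multicast:
        raise ValueError(f"{group} is not an IPv4 multicast address")
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def open_multicast_receiver(group: str, port: int) -> socket.socket:
    """Open a UDP socket that receives datagrams sent to group:port (hypothetical helper)."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))  # listen on the group's port on all interfaces
    # Ask the kernel to join the group so the multicast router forwards its traffic here
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                    make_membership_request(group))
    return sock
```

For example, `open_multicast_receiver("239.1.1.1", 5000)` followed by `sock.recvfrom(65535)` would block until a telemetry datagram arrives on that (hypothetical) group and port.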

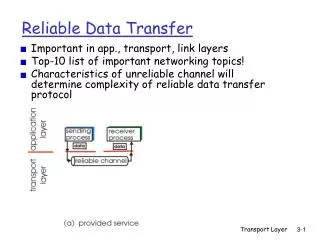

Network Structure - Network overview -
[Figure: network diagram showing the ERSDAC router on a 1G Ether access line, Tokyo XP/APAN, JGN2/TransPAC2, StarLight Chicago, MAX/Abilene, vBNS+, EBnet, and the LAN routers at Goddard Space Flight Center and EROS/JPL]
• Using 1 Gbps Ethernet as the access line (changed from 100 Mbps to 1 Gbps in 2006/Nov)
• Connecting from JGN2 to StarLight Chicago (Internet2) via Tokyo XP/APAN, with TransPAC2 as the backup line
• Connecting from StarLight Chicago to NASA Goddard Space Flight Center, USGS EROS, and JPL via MAX/vBNS+ etc.
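The primary/backup arrangement on this slide (JGN2 as the main path, TransPAC2 as the backup) can be modeled as a simple preference rule. This is a hypothetical sketch only: in practice the choice is made by the routers' routing protocol, and the hop lists below are illustrative rather than exact.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Path:
    """One route from ERSDAC toward StarLight Chicago (hypothetical model)."""
    name: str
    hops: tuple[str, ...]
    up: bool = True


PRIMARY = Path("JGN2", ("ERSDAC", "Tokyo XP/APAN", "JGN2", "StarLight Chicago"))
BACKUP = Path("TransPAC2", ("ERSDAC", "Tokyo XP/APAN", "TransPAC2", "StarLight Chicago"))


def select_path(primary: Path, backup: Path) -> Path:
    """Prefer the primary path; use the backup only while the primary is down."""
    if primary.up:
        return primary
    if backup.up:
        return backup
    raise RuntimeError("no trans-Pacific path available")


print(select_path(PRIMARY, BACKUP).name)                       # normal operation
print(select_path(replace(PRIMARY, up=False), BACKUP).name)    # primary failed
```

Making `Path` frozen and using `dataclasses.replace` keeps each failure scenario a separate value, so testing one case cannot accidentally change another.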

Network Structure - Observation system overview -
[Figure: ASTER observation and data flow between the U.S. (Tracking and Data Relay Satellite System, EOSDIS/NASA, GSFC, JPL, EROS, StarLight Chicago) and Japan (Tokyo XP/APAN, JGN2/TransPAC2, ERSDAC)]
• Observation schedules, control commands, data acquisition requests, and data processing requests are exchanged between the ASTER ground data system and NASA
• After observation by the ASTER sensor (one of 5 sensors onboard TERRA), the observation data and telemetry data are received from TERRA in the U.S. via the Tracking and Data Relay Satellite System
• NASA (EOSDIS) processes the data to Level 0 and transfers it to ERSDAC via the network (StarLight Chicago, JGN2/TransPAC2, Tokyo XP/APAN); telemetry data and VoIP traffic are also carried over this route
• ERSDAC processes the data to Level 1 and transfers it to EROS
• The data is archived at both EROS and ERSDAC, and each distributes it to users
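The end-to-end flow on this slide can be summarized as an ordered list of stages. The stage names below are illustrative labels, not names used by the real systems; the sketch only makes explicit where each processing level is produced and handed on.

```python
# Simplified, hypothetical model of the ASTER data flow described on the slide.
# Each tuple is (stage, where it happens, product handed to the next stage).
STAGES = [
    ("Observe with ASTER", "TERRA", "observation data"),
    ("Downlink via TDRSS", "U.S.", "observation data"),
    ("Process to Level 0", "NASA (EOSDIS)", "Level 0 data"),
    ("Network transfer", "NASA -> ERSDAC", "Level 0 data"),
    ("Process to Level 1", "ERSDAC", "Level 1 data"),
    ("Network transfer", "ERSDAC -> EROS", "Level 1 data"),
    ("Archive and distribute", "ERSDAC and EROS", "Level 1 data"),
]


def trace(stages):
    """Yield one readable line per stage, numbered in processing order."""
    for step, (name, where, product) in enumerate(stages, start=1):
        yield f"{step}. {name} [{where}] -> {product}"


for line in trace(STAGES):
    print(line)
```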

Network Structure - Communication protocol -
• IPv4 TCP/UDP network
• Installed the hotline between NASA and ERSDAC as two VoIP lines
• Receiving telemetry data with GRE tunnel + multicast packets
• Transferring data by multi-session FTP, with confirmation by e-mail
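Multi-session transfer raises throughput on a long, high-latency path by running several TCP sessions in parallel. One common way to divide a single large file among sessions is to give each a contiguous byte range; the slide does not say whether ERSDAC's tool splits by file or by range, so this is an assumption. `split_ranges` is a hypothetical helper.

```python
def split_ranges(file_size: int, sessions: int) -> list[tuple[int, int]]:
    """Split a file into contiguous [start, end) byte ranges, one per session.

    The first (file_size % sessions) ranges get one extra byte, so every
    byte is covered exactly once and range sizes differ by at most one.
    """
    if file_size < 0 or sessions < 1:
        raise ValueError("file_size must be >= 0 and sessions >= 1")
    base, extra = divmod(file_size, sessions)
    ranges = []
    start = 0
    for i in range(sessions):
        length = base + (1 if i < extra else 0)
        ranges.append((start, start + length))
        start += length
    return ranges


print(split_ranges(10, 3))
```

With FTP, each session could start at its offset by sending `REST <offset>` before `RETR` (Python's `ftplib.FTP.retrbinary` exposes this as its `rest` argument) and stop reading once it reaches the end of its range.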

Change of the GRE tunnel end - Before change -
• The GRE tunnel used for multicast packet transfer was terminated at the WAN-connecting router.
• The firewall that ERSDAC currently has cannot pass multicast packets.
=> The firewall could not be used.

Change of the GRE tunnel end - After change -
• Extended the end of the GRE tunnel to the connecting router (Cisco8201) of the segment that requires the data, and duplicated the tunnel.
=> The existing firewall can now be used effectively.
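A GRE tunnel carries the multicast datagram inside an ordinary unicast IPv4 packet (IP protocol 47) between the two tunnel ends, which is presumably why moving the tunnel end inside the network lets the firewall handle the traffic. The sketch shows the minimal GRE header from RFC 2784 (no checksum, version 0, Protocol Type 0x0800 for an IPv4 payload); it illustrates the encapsulation only and is not the Cisco router's implementation.

```python
import struct

ETHERTYPE_IPV4 = 0x0800  # GRE Protocol Type meaning "the payload is an IPv4 packet"


def gre_encapsulate(inner_packet: bytes) -> bytes:
    """Prepend a minimal RFC 2784 GRE header: no checksum, version 0."""
    flags_and_version = 0x0000  # C bit, reserved bits and version all zero
    return struct.pack("!HH", flags_and_version, ETHERTYPE_IPV4) + inner_packet


def gre_decapsulate(gre_payload: bytes) -> bytes:
    """Strip the 4-byte GRE header at the tunnel end and return the inner packet."""
    if len(gre_payload) < 4:
        raise ValueError("too short for a GRE header")
    flags_and_version, protocol_type = struct.unpack("!HH", gre_payload[:4])
    if flags_and_version != 0:
        raise ValueError("only the minimal GRE header is handled in this sketch")
    if protocol_type != ETHERTYPE_IPV4:
        raise ValueError(f"unexpected protocol type 0x{protocol_type:04x}")
    return gre_payload[4:]
```

In a real tunnel the result of `gre_encapsulate` becomes the payload of an outer IPv4 packet addressed to the far tunnel end, and `gre_decapsulate` runs at that end before the inner multicast packet is forwarded onto the local segment.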

Data Transfer / Archive - ERSDAC internal system -
[Figure: ERSDAC internal system (data transfer server, data processing server, data distribution control server, distribution server, tape storage; links to Tokyo XP/APAN, JGN2, and the Internet)]
• Receive L0 data from NASA (via JGN2 and Tokyo XP/APAN)
• Transfer the received L0 data to AIST
• Generate primary data with the data processor
• Archive the primary data
• Put the data on DTF-2 tapes into tape storage

Data Transfer / Distribution - ERSDAC internal system -
[Figure: ERSDAC internal system (data transfer server, data processing server, data distribution control server, distribution server, tape storage; links to Tokyo XP/APAN, JGN2, and the Internet)]
• Send the primary data to EROS
• Distribute the primary data to users
• Retrieve products for high-level processing
• Archive the high-level data
• Distribute the high-level data to users

Future Challenges
• Apply the results of AIST's research and development of supercomputer technology (GEO Grid System)
• Assess the transfer of not only ASTER data but also PALSAR data (TBD)

Summary
• The interval between observation and Level 1 data distribution has now been reduced to about 4 days, down from about 12 days. (The interval between observation and data generation has been reduced to about 2 days.)
• We plan to review and change the archive method so that data can be processed immediately after it is received. (Currently, we wait until two days' worth of data has been stored and then process it.) (TBD)
• We started data transfer for development of the AIST GEO Grid system at 800 GB/day (2007/Nov).
• We plan to use the GEO Grid system.
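The planned change to the archive method replaces batch processing (waiting for two days of data) with processing on arrival. The sketch below estimates the waiting time that batching adds, assuming fixed 48-hour windows with processing starting at the end of each window and ignoring processing time itself. These assumptions are illustrative; the slide does not describe the batch schedule in detail.

```python
BATCH_WINDOW_HOURS = 48  # "wait for two days' data to be stored"


def batch_wait_hours(arrival_hour: float, window: float = BATCH_WINDOW_HOURS) -> float:
    """Hours a scene waits before processing starts under fixed-window batching.

    Assumes batch k collects scenes arriving in [k*window, (k+1)*window)
    and that processing starts at the end of that window.
    """
    if arrival_hour < 0:
        raise ValueError("arrival_hour must be non-negative")
    return (arrival_hour // window + 1) * window - arrival_hour


# One scene arriving at the start of every hour within a single window
hourly = [batch_wait_hours(h) for h in range(BATCH_WINDOW_HOURS)]
print(f"worst case {max(hourly)} h, mean {sum(hourly) / len(hourly)} h")
```

Under these assumptions a scene waits up to 48 hours and about a day on average before processing begins; processing on arrival would remove that wait entirely.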