Support Vector Machines

Support Vector Machines. Jordan Smith MUMT 611 14 February 2008. Topics to cover. What do Support Vector Machines (SVMS) do? How do SVMs work? Linear data Non-linear data (Kernel functions) Unseparable data (added Cost function) Search optimization Why?. What SVMs do. What SVMs do.

Support Vector Machines

E N D

Presentation Transcript

Support Vector Machines Jordan Smith MUMT 611 14 February 2008

Topics to cover • What do Support Vector Machines (SVMS) do? • How do SVMs work? • Linear data • Non-linear data (Kernel functions) • Unseparable data (added Cost function) • Search optimization • Why?

What SVMs do = margin

What SVMs do = margin

What SVMs do = margin = support vector

What SVMs do (optimum separating hyperplane) = margin = support vector

What SVMs do (optimum separating hyperplane) = margin = support vector

What SVMs do Sherrod 230

Topics to cover • What do Support Vector Machines (SVMS) do? • How do SVMs work? • Linear data • Non-linear data (Kernel functions) • Unseparable data (added Cost function) • Search optimization • Why?

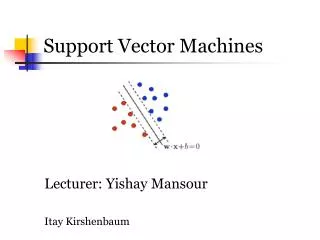

The linear, separable case • Training data {xi, yi} • Separating hyperplane defined by normal vector w • hyperplane equation: w·x + b = 0 • distance from plane to origin: |b|/|w| • Distances from hyperplane to nearest point in each collection are d+ and d- Goal: maximize d+ + d- (margins)

Lagrange multipliers The linear, separable case 1) xi·w + b ≥ +1 (for yi = +1) 2) xi·w + b ≤ -1 (for yi = -1) • yi(xi·w + b) - 1 ≥ 0 • for our support vectors, distance from origin to plane = |1-b|/|w| Algebra d+ + d- = 2 / |w| New goal: maximize: 2 /|w| i.e., minimize: |w|

Nonlinear SVMs Sherrod 235

Nonlinear SVMs Kernel trick: • Map data into a higher-dimensional space using : Rd H • Training problems involve only the dot product, so H can even be of infinite dimension • Kernel trick makes nonlinear solutions linear again! • youtube example

Nonlinear SVMs • Radial basis function: Sherrod 236

Nonlinear SVMs • Sigmoid Sherrod 237

Another demonstration • applet

The unseparable case • Classifiers need to have a balanced capacity: • Bad botanist: “It has 847 leaves. Not a tree!” • Bad botanist: “It’s green. That’s a tree!”

The unseparable case Sherrod 237

The unseparable case = error = fuzzy margin

Quadratic Programming The unseparable case Add a cost function: • xi·w + b ≥ +1 - i (for yi = +1) • xi·w + b ≤ -1 + i (for yi = -1) • i ≥ 0 old goal: minimize |w|2/2 new goal: minimize |w|2/2 + C(∑i i)k

Optimizing your search • To find the separating hyperplane, you must manipulate many parameters, depending on which kernel function you select: • C, the cost constant • Gamma, i, etc. • There are two basic methods: • Grid search • Pattern search

Topics to cover • What do Support Vector Machines (SVMS) do? • How do SVMs work? • Linear data • Non-linear data (Kernel functions) • Unseparable data (added Cost function) • Search optimization • Why?

Why use SVMs? Uses: • Optical character recognition • Spam detection • MIR • genre, artist classification (Mandel 2004, 2005) • mood classification (Laurier 2007) • popularity classification, based on lyrics (Dhanaraj 2005)

Why use SVMs? • Machine learner of choice for high-dimensional data, such as text, images, music! • Conceptually simple. • Generalizable and efficient. Next slides: results of a benchmark study (Meyer 2004) comparing SVMs and other learning techniques

Key References Burges, C. J. C. "A tutorial on support vector machines for pattern recognition." Data Mining and Knowledge Discovery, 2:955-974, 1998. http://citeseer.ist.psu.edu/burges98tutorial.html Cortes, C. and V. Vapnik. "Support-Vector Networks." Machine Learning, 20:273-297, Sept 1995. http://citeseer.ist.psu.edu/cortes95supportvector.html Sherrod, Phillip H. 2008. DTREG: Predictive Modeling Software. (User’s guide) 227-41. <http://www.dtreg.com/DTREG.pdf” Smola, A. J. and B. Scholkopf. 1998. “A tutorial on support vector regression.” NEUROCOLT Technical report NC-TR-98-030. Royal Holloway college, London.