Parallel and Multiprocessor Architectures

Parallel and Multiprocessor Architectures. Chapter 9.4. By Eric Neto. Parallel & Multiprocessor Architecture. In making processors faster, we run into certain limitations. Physical Economic Solution: When necessary, use more processors, working in sync.

Parallel and Multiprocessor Architectures

E N D

Presentation Transcript

Parallel and Multiprocessor Architectures Chapter 9.4 By Eric Neto

Parallel & Multiprocessor Architecture • In making processors faster, we run into certain limitations. • Physical • Economic • Solution: When necessary, use more processors, working in sync.

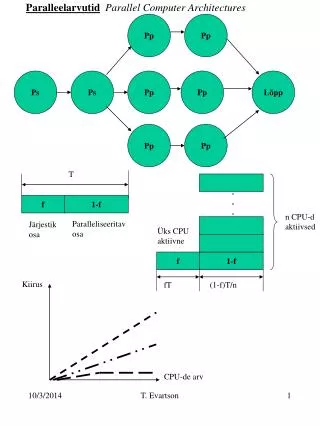

Parallel & Multiprocessor Limitations • Though parallel processing can speed up performance, the amount is limited. • Intuitively, you’d expect N processors to do the work in 1/N time, but processes sometimes work in sequences, so there will be some downtime while dormant processors wait for the active processor to finish. • Therefore, the more sequential a process is, the less cost-effective it is to implement parallelism.

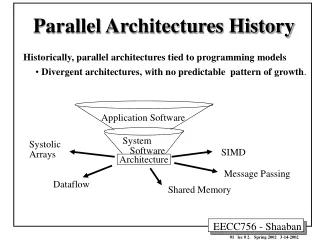

Parallel and Multiprocessing Architectures • Superscalar • VLIW • Vector • Interconnection Networks • Shared Memory • Distributed Computing

Superscalar Architecture • Allow multiple instructions to be executed simultaneously in each cycle. • Contain • Execution units – Each allows for one process to execute. • Specialized instruction fetch unit – Fetch multiple instructions at once, send them to decoding unit. • Decoding unit – Determines whether the given instructions are independent of one another.

VLIW Architecture • Similar to superscalar, but relies on compiler rather than specific hardware. • Puts independent instructions into one “Very Long Instruction Words” • Advantages: • More simple hardware • Disadvantages: • Instructions fixed at compile time, so some modifications could affect execution of instructions

Vector Processors • Use vector pipelines to store and perform operations on many values at once, as opposed to Scalar processing, which only performs operations on individual values. • Since it uses fewer instructions, there is less decoding, control unit overhead, and memory bandwidth usage. • Can be SIMD or MIMD. LDV V1, R1 LDV V2, R2 ADDV R3, V1, V2 STV R3, V3 Xn= X1 + X2 ; Yn= Y1 + Y2 ; Zn= Z1 + Z2 ; Wn= W1 + W2 ; … VS.

Interconnection Networks • Each processor has it’s own memory, that can be accessed and shared by other processors through an interconnected network. • Efficiency of messages shared through the network is limited based on: • Bandwidth • Message latency • Transport latency • Overhead • In general, the amount of messages sent and distances they must travel are minimized.

Topologies • Connections between networks can be either static or dynamic. • Different configurations of static processors are more useful for different tasks. Completely Connected Star Ring

More Topologies Tree Mesh Hypercube

Dynamic Networks • Busses, Crossbars, Switches, Multistage connections. • As you implement more processors, these get exponentially more expensive.

Dynamic Networking:Crossbar Network • Efficient • Direct • Expensive

Dynamic Networking:Switch Network • Complex • Moderately Efficient • Cheaper

Dynamic Networking:Bus • Simple • Slow • Inefficient • Cheap

Shared Memory Multiprocessors • Memory is shared either globally or locally, or a combination of the two.

Shared Memory Access • Uniform Memory Access systems use a shared memory pool, where all memory takes the same amount of time to access. • Quickly becomes expensive when more processors are added.

Shared Memory Access • Non-Uniform Memory Access systems have memory distributed across all the processors, and it takes less time for a processor to read from its own local memory than from non-local memory. • Prone to cache coherence problems, which occur when a local cache isn’t in sync with non-local caches representing the same data. • Dealing with these problems require extra mechanisms to ensure coherence.

Distributed Computing • Multi-Computer processing • Works on the same principal as multi-processors on a larger scale. • Uses a large network of computers to solve small parts of a very large problem.