Chapter 3, Section 9 Discrete Random Variables

Moment-Generating Functions. John J Currano, 12/15/2008.


Chapter 3, Section 9: Discrete Random Variables. Moment-Generating Functions. John J Currano, 12/15/2008

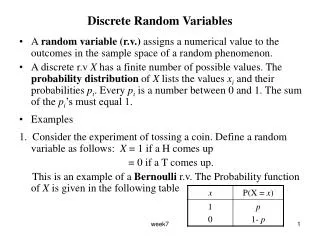

Definitions. Let Y be a discrete random variable with probability function p(y), and let k = 1, 2, 3, .... Then:
$\mu_k' = E(Y^k)$ is the kth moment of Y (about the origin);
$E[(Y - c)^k] = \sum_{j=0}^{k} \binom{k}{j} (-c)^{k-j} E(Y^j)$ is the kth moment of Y about c;
$\mu_k = E[(Y - E(Y))^k] = E[(Y - \mu)^k]$ is the kth central moment of Y.
Notes. $\mu = E(Y)$ = the first moment of Y $(= \mu_1')$.
$\sigma^2 = V(Y)$ = the second central moment of Y $(= \mu_2)$ $= E(Y^2) - [E(Y)]^2 = \mu_2' - (\mu_1')^2$.
What is the first central moment of Y?
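The three kinds of moment can be checked by direct computation. The Python sketch below is only an illustration: it stores a probability function as a dict {y: p(y)} (a representation chosen here, not taken from the slides), borrows the distribution p(1) = 1/6, p(2) = 2/6, p(3) = 3/6 from Example 3.155, and uses exact fractions so that the binomial-expansion formula for $E[(Y - c)^k]$ and the shortcut $\sigma^2 = \mu_2' - (\mu_1')^2$ can be compared with direct sums. The helper names are hypothetical.

```python
from fractions import Fraction
from math import comb

# Illustrative probability function p(y), stored as {y: p(y)} (hypothetical choice).
pmf = {1: Fraction(1, 6), 2: Fraction(2, 6), 3: Fraction(3, 6)}

def raw_moment(pmf, k):
    """kth moment about the origin: E(Y^k) = sum over y of y^k p(y)."""
    return sum(y**k * p for y, p in pmf.items())

def moment_about(pmf, k, c):
    """kth moment about c, summed directly: E[(Y - c)^k]."""
    return sum((y - c)**k * p for y, p in pmf.items())

def moment_about_via_binomial(pmf, k, c):
    """The same moment via sum_{j=0}^{k} C(k, j) (-c)^(k-j) E(Y^j)."""
    return sum(comb(k, j) * (-c)**(k - j) * raw_moment(pmf, j) for j in range(k + 1))

mu = raw_moment(pmf, 1)                  # mu = E(Y) = mu'_1
sigma2 = moment_about(pmf, 2, mu)        # sigma^2 = mu_2, the second central moment
shortcut = raw_moment(pmf, 2) - mu**2    # mu'_2 - (mu'_1)^2

print(mu, sigma2, shortcut)              # 7/3 5/9 5/9
for k in range(1, 5):
    # Binomial expansion and direct sum should agree for every k (here with c = 2).
    print(k, moment_about(pmf, k, 2) == moment_about_via_binomial(pmf, k, 2))
```

Exact rational arithmetic is used so that the comparisons are equalities rather than floating-point approximations.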

Definition. If Y is a discrete random variable, its moment-generating function (mgf) is the function $m(t) = E(e^{tY})$, provided this expectation exists (converges) for all t in some open interval containing 0. Note. The mgf, when it exists, characterizes the distribution better than the mean and variance do: it completely determines the distribution, in the sense that if two random variables have the same mgf, then they have the same distribution. So to find the distribution of a random variable, we can find its mgf and compare it with a list of known mgfs; if the mgf is in the list, we have found the distribution. We shall also see that the mgf gives us an easy way to find the distribution’s mean and variance.

Why it is called the moment-generating function: using the Maclaurin series $e^x = \sum_{k=0}^{\infty} \frac{x^k}{k!}$, we derive
$m(t) = E(e^{tY}) = \sum_y e^{ty} p(y)$   by Theorem 3.2
$= \sum_y p(y) \sum_{k=0}^{\infty} \frac{(ty)^k}{k!}$   using the Maclaurin series for $e^{ty}$
$= \sum_{k=0}^{\infty} \sum_y \frac{(ty)^k}{k!} p(y)$   interchanging the summations
$= \sum_{k=0}^{\infty} \frac{t^k}{k!} \sum_y y^k p(y)$   factoring out $\frac{t^k}{k!}$
$= \sum_{k=0}^{\infty} E(Y^k) \frac{t^k}{k!}$   by Theorem 3.2
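The series identity can be checked numerically. In this Python sketch (plain floats; the distribution is the illustrative p(1) = 1/6, p(2) = 2/6, p(3) = 3/6, and the 30-term cutoff is an arbitrary choice), a partial sum of $\sum_k E(Y^k) t^k/k!$ is compared with the direct sum $\sum_y e^{ty} p(y)$ at a few values of t.

```python
import math

# Illustrative probability function {y: p(y)} (hypothetical choice for this sketch).
pmf = {1: 1/6, 2: 2/6, 3: 3/6}

def mgf(pmf, t):
    """m(t) = E(e^{tY}) = sum over y of e^{ty} p(y)."""
    return sum(math.exp(t * y) * p for y, p in pmf.items())

def mgf_series(pmf, t, terms=30):
    """Partial sum of sum_{k>=0} E(Y^k) t^k / k! (the moment expansion of m(t))."""
    total = 0.0
    for k in range(terms):
        ek = sum(y**k * p for y, p in pmf.items())   # E(Y^k)
        total += ek * t**k / math.factorial(k)
    return total

for t in (-0.5, 0.0, 0.25, 0.5):
    print(f"t = {t:5.2f}   direct = {mgf(pmf, t):.12f}   series = {mgf_series(pmf, t):.12f}")
```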

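Why it is called the moment-generating function (continued): differentiating the series term by term,
$m'(t) = \sum_{k=1}^{\infty} \frac{k\,t^{k-1}}{k!} E(Y^k)$, so $m'(0) = E(Y)$;
$m''(t) = \sum_{k=2}^{\infty} \frac{k(k-1)\,t^{k-2}}{k!} E(Y^k)$, so $m''(0) = \frac{2 \cdot 1}{2!} E(Y^2) = E(Y^2)$;
et cetera.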

Theorem. $m_Y^{(k)}(0) = E(Y^k)$ for k = 1, 2, 3, ..., where $m_Y(t)$ is the mgf of Y (provided it exists). Also, $m_Y(0) = E(e^{0 \cdot Y}) = E(1) = 1$ for any RV, Y.
Example (p. 142 #3.155). Given that $m(t) = \frac{1}{6}e^{t} + \frac{2}{6}e^{2t} + \frac{3}{6}e^{3t}$, find: (a) E(Y); (b) V(Y); (c) the distribution of Y.
(a) $m'(t) = \frac{1}{6}e^{t} + \frac{4}{6}e^{2t} + \frac{9}{6}e^{3t}$, so $E(Y) = m'(0) = \frac{14}{6} = \frac{7}{3}$.
(b) $m''(t) = \frac{1}{6}e^{t} + \frac{8}{6}e^{2t} + \frac{27}{6}e^{3t}$, so $E(Y^2) = m''(0) = \frac{36}{6} = 6$. Thus, $V(Y) = E(Y^2) - [E(Y)]^2 = 6 - \left(\frac{7}{3}\right)^2 = \frac{5}{9}$.
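The theorem $m^{(k)}(0) = E(Y^k)$ can also be seen numerically: approximating $m'(0)$ and $m''(0)$ by central finite differences of the mgf from Example 3.155 should reproduce E(Y) = 7/3, E(Y²) = 6 and V(Y) = 5/9 up to a small discretization error. This Python sketch is only a numerical check, not the symbolic differentiation used above; the step size h = 1e-4 is an arbitrary choice.

```python
import math

def m(t):
    """mgf from Example 3.155: (1/6)e^t + (2/6)e^{2t} + (3/6)e^{3t}."""
    return (math.exp(t) + 2 * math.exp(2 * t) + 3 * math.exp(3 * t)) / 6

h = 1e-4                                  # finite-difference step (arbitrary)
ey = (m(h) - m(-h)) / (2 * h)             # central difference for m'(0) = E(Y)
ey2 = (m(h) - 2 * m(0) + m(-h)) / h**2    # central difference for m''(0) = E(Y^2)
var = ey2 - ey**2                         # V(Y) = E(Y^2) - [E(Y)]^2

print(f"m(0) = {m(0)}")                   # every mgf satisfies m(0) = 1
print(f"E(Y) ~ {ey:.6f}, E(Y^2) ~ {ey2:.6f}, V(Y) ~ {var:.6f}")
```

A smaller h reduces the truncation error of the differences but increases floating-point cancellation, so very small steps are not automatically better.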

(c) Since $m(t) = E(e^{tY}) = \sum_y e^{ty} p(y) = \frac{1}{6}e^{t} + \frac{2}{6}e^{2t} + \frac{3}{6}e^{3t}$, and the support of Y consists of the coefficients of t in the exponents of e in the nonzero terms of this sum, Y has support {1, 2, 3}. The probability that Y assumes each of these values is the coefficient of the exponential in the corresponding term, so Y = 1, 2, 3 with probabilities $\frac{1}{6}, \frac{2}{6}, \frac{3}{6}$, respectively.
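Part (c) is a bookkeeping step that is easy to mechanize when the mgf is a finite sum of exponentials with nonnegative coefficients. In the Python sketch below, the mgf is represented as a list of (coefficient, a) pairs standing for coefficient · $e^{at}$, a representation invented for this illustration; the hypothetical helper collects the coefficient of each $e^{at}$ as P(Y = a) and checks that the probabilities sum to m(0) = 1.

```python
from fractions import Fraction

# m(t) = (1/6)e^t + (2/6)e^{2t} + (3/6)e^{3t}, as (coefficient, a) pairs for coefficient * e^{a t}.
# This list representation is a hypothetical choice made for the sketch.
mgf_terms = [(Fraction(1, 6), 1), (Fraction(2, 6), 2), (Fraction(3, 6), 3)]

def pmf_from_mgf_terms(terms):
    """Read off the distribution: each a with a nonzero coefficient is a support point,
    and the combined coefficient of e^{a t} is P(Y = a)."""
    pmf = {}
    for coef, a in terms:
        pmf[a] = pmf.get(a, 0) + coef        # combine like terms e^{a t}
    pmf = {a: c for a, c in pmf.items() if c != 0}
    if any(c < 0 for c in pmf.values()) or sum(pmf.values()) != 1:
        raise ValueError("not the mgf of a finite discrete distribution")   # m(0) must equal 1
    return pmf

pmf = pmf_from_mgf_terms(mgf_terms)
for y in sorted(pmf):
    print(f"P(Y = {y}) = {pmf[y]}")
```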

Example. Find the moment-generating function of Y ~ bin(n, p) and use it to find E(Y) and V(Y).
Solution. By Theorem 3.2,
$m(t) = E(e^{tY}) = \sum_{y=0}^{n} e^{ty} \binom{n}{y} p^y q^{n-y} = \sum_{y=0}^{n} \binom{n}{y} (pe^t)^y q^{n-y} = (pe^t + q)^n$   by the Binomial Theorem.
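A quick numerical check of the Binomial Theorem step: the Python sketch below sums $e^{ty}\binom{n}{y}p^y q^{n-y}$ term by term and compares it with the closed form $(pe^t + q)^n$. The parameter values n = 10, p = 0.3 and the sample values of t are arbitrary illustrations.

```python
import math

def binom_mgf_direct(t, n, p):
    """E(e^{tY}) for Y ~ bin(n, p), summed term by term over y = 0, ..., n."""
    q = 1 - p
    return sum(math.exp(t * y) * math.comb(n, y) * p**y * q**(n - y) for y in range(n + 1))

def binom_mgf_closed(t, n, p):
    """Closed form (p e^t + q)^n obtained from the Binomial Theorem."""
    q = 1 - p
    return (p * math.exp(t) + q) ** n

n, p = 10, 0.3  # illustrative parameters
for t in (-1.0, 0.0, 0.7):
    print(t, binom_mgf_direct(t, n, p), binom_mgf_closed(t, n, p))
```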

Theorem. If Y ~ bin(n, p), then its mgf is $m(t) = (pe^t + q)^n$. Now differentiate to find E(Y) and V(Y):
(a) $m'(t) = n(pe^t + q)^{n-1} \cdot pe^t \;\Rightarrow\; E(Y) = m'(0) = n(p + q)^{n-1} \cdot p \cdot 1 = n(1)^{n-1} p = np$.
(b) $m''(t) = n\left[(pe^t + q)^{n-1} \cdot pe^t + (n-1)(pe^t + q)^{n-2} \cdot pe^t \cdot pe^t\right]$
$\Rightarrow E(Y^2) = m''(0) = n(n-1)(1)^{n-2} \cdot p \cdot 1 \cdot p \cdot 1 + n(1)^{n-1} \cdot p \cdot 1 = n(n-1)p^2 + np = n^2p^2 - np^2 + np$
$\Rightarrow V(Y) = E(Y^2) - [E(Y)]^2 = n^2p^2 - np^2 + np - (np)^2 = np - np^2 = np(1 - p) = npq$.
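The formulas E(Y) = np, E(Y²) = n(n − 1)p² + np and V(Y) = npq can be confirmed exactly for particular parameter values by summing over the binomial probability function with rational arithmetic. The Python sketch below does this for the arbitrary illustrative choice n = 7, p = 2/5; it checks the results above rather than replacing the derivation, and the helper name is hypothetical.

```python
from fractions import Fraction
from math import comb

def binomial_moments(n, p):
    """Exact E(Y), E(Y^2) and V(Y) for Y ~ bin(n, p), summed from the probability function."""
    q = 1 - p
    probs = {y: comb(n, y) * p**y * q**(n - y) for y in range(n + 1)}
    ey = sum(y * pr for y, pr in probs.items())
    ey2 = sum(y * y * pr for y, pr in probs.items())
    return ey, ey2, ey2 - ey**2

n, p = 7, Fraction(2, 5)  # arbitrary illustrative parameters
q = 1 - p
ey, ey2, var = binomial_moments(n, p)
print(ey == n * p, ey2 == n * (n - 1) * p**2 + n * p, var == n * p * q)  # True True True
```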

Example. If the moment-generating function of a random variable Y is $m(t) = \left(\frac{2}{3}e^t + \frac{1}{3}\right)^5$, find Pr(Y = 2 or 3).
Solution. From the form of the moment-generating function, we know that Y ~ bin(5, 2/3), so that
$P(Y = 2 \text{ or } 3) = P(Y = 2) + P(Y = 3) = \binom{5}{2}\left(\frac{2}{3}\right)^2\left(\frac{1}{3}\right)^3 + \binom{5}{3}\left(\frac{2}{3}\right)^3\left(\frac{1}{3}\right)^2 = \frac{40}{243} + \frac{80}{243} = \frac{40}{81}$.
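The arithmetic can be checked with exact fractions. The Python sketch below evaluates the bin(5, 2/3) probability function at y = 2 and y = 3; the helper name binom_pmf is introduced here for illustration only.

```python
from fractions import Fraction
from math import comb

n, p = 5, Fraction(2, 3)   # Y ~ bin(5, 2/3), read off from the form of the mgf
q = 1 - p

def binom_pmf(y):
    """P(Y = y) = C(n, y) p^y q^(n - y)."""
    return comb(n, y) * p**y * q**(n - y)

prob = binom_pmf(2) + binom_pmf(3)
print(binom_pmf(2), binom_pmf(3), prob)  # 40/243 80/243 40/81
```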

Homework Problem Results (pp. 142-143)
3.147 If Y ~ Geom(p), then $m_Y(t) = \frac{pe^t}{1 - qe^t}$ for $t < -\ln q$.
3.158 If Y is a random variable with moment-generating function $m_Y(t)$, and W = aY + b where a and b are constants, then $m_W(t) = m_{aY+b}(t) = e^{tb}\, m_Y(at)$.
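Both results can be spot-checked numerically. The Python sketch below uses arbitrarily chosen values (p = 0.4 for the geometric case; a = 2, b = −1 and the pmf p(1) = 1/6, p(2) = 2/6, p(3) = 3/6 from Example 3.155 for the linear transformation). It compares a long partial sum of $\sum_{y \ge 1} e^{ty} q^{y-1} p$ with $pe^t/(1 - qe^t)$ for t below $-\ln q$, and compares the mgf of W = aY + b computed directly with $e^{tb} m_Y(at)$. All function names are hypothetical.

```python
import math

def geom_mgf_series(t, p, terms=500):
    """Partial sum of sum_{y>=1} e^{ty} q^(y-1) p (geometric on y = 1, 2, ...)."""
    q = 1 - p
    r = q * math.exp(t)                    # common ratio q e^t; the series needs r < 1
    return sum(p * math.exp(t) * r**(y - 1) for y in range(1, terms + 1))

def geom_mgf_closed(t, p):
    """Closed form p e^t / (1 - q e^t), valid for t < -ln q."""
    q = 1 - p
    return p * math.exp(t) / (1 - q * math.exp(t))

# Linear transformation W = aY + b, illustrated with a small finite distribution.
pmf = {1: 1/6, 2: 2/6, 3: 3/6}
a, b = 2.0, -1.0

def mgf(t):
    """m_Y(t) = sum over y of e^{ty} p(y)."""
    return sum(math.exp(t * y) * pr for y, pr in pmf.items())

def mgf_W_direct(t):
    """m_W(t) = E(e^{t(aY + b)}), summed directly over the support of Y."""
    return sum(math.exp(t * (a * y + b)) * pr for y, pr in pmf.items())

def mgf_W_rule(t):
    """m_W(t) = e^{tb} m_Y(at), the result of Exercise 3.158."""
    return math.exp(t * b) * mgf(a * t)

p = 0.4
print("-ln q =", -math.log(1 - p))
for t in (-1.0, 0.0, 0.3):
    print(t, geom_mgf_series(t, p), geom_mgf_closed(t, p), mgf_W_direct(t), mgf_W_rule(t))
```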

Other Moment-Generating Functions
1. If Y ~ NegBin(r, p), then $m_Y(t) = \left(\frac{pe^t}{1 - qe^t}\right)^r$ for $t < -\ln q$. This is most easily proved using results in Chapter 6. Exercise. Use $m_Y(t)$ to find E(Y) and V(Y).
2. If Y ~ Poisson(λ), then $m_Y(t) = e^{\lambda(e^t - 1)}$. This is Example 3.23 on p. 140, where it is proved and then used (in Example 3.24) to find E(Y) and V(Y).
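As a numerical illustration of item 2 (not a substitute for the proof in Example 3.23), the Python sketch below compares a truncated sum $\sum_y e^{ty} e^{-\lambda}\lambda^y/y!$ with $e^{\lambda(e^t - 1)}$, then uses central finite differences at t = 0 to approximate E(Y) and V(Y), both of which should be close to λ. The value λ = 3, the 100-term cutoff and the step size h = 1e-4 are arbitrary choices, and the function names are hypothetical.

```python
import math

def poisson_mgf_closed(t, lam):
    """m_Y(t) = e^{lam (e^t - 1)} for Y ~ Poisson(lam)."""
    return math.exp(lam * (math.exp(t) - 1))

def poisson_mgf_series(t, lam, terms=100):
    """Truncated sum of e^{ty} e^{-lam} lam^y / y! over y = 0, ..., terms - 1."""
    total = 0.0
    term = math.exp(-lam)                    # the y = 0 term
    for y in range(terms):
        total += term
        term *= lam * math.exp(t) / (y + 1)  # ratio of consecutive terms
    return total

lam = 3.0
for t in (-0.5, 0.0, 0.5):
    print(t, poisson_mgf_series(t, lam), poisson_mgf_closed(t, lam))

def m(t):
    """The Poisson(3) mgf as a function of t alone."""
    return poisson_mgf_closed(t, lam)

# Central differences approximate m'(0) = E(Y) and m''(0) = E(Y^2).
h = 1e-4
ey = (m(h) - m(-h)) / (2 * h)
ey2 = (m(h) - 2 * m(0) + m(-h)) / h**2
print(f"E(Y) ~ {ey:.5f}, V(Y) ~ {ey2 - ey**2:.5f}")
```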