Random Variables

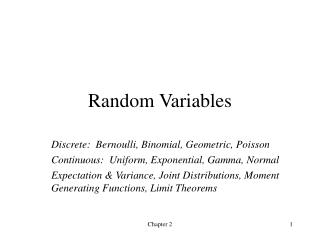


Random Variables
• Discrete: Bernoulli, Binomial, Geometric, Poisson
• Continuous: Uniform, Exponential, Gamma, Normal
• Expectation & Variance, Joint Distributions, Moment Generating Functions, Limit Theorems
Chapter 2

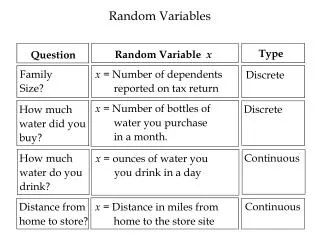

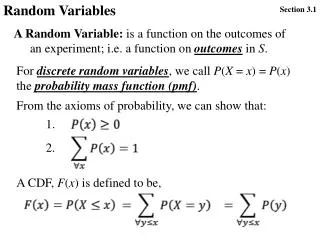

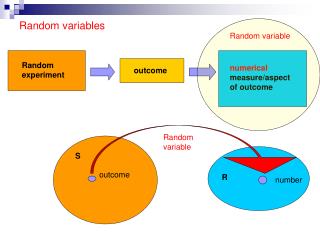

Definition of random variable
A random variable is a function that assigns a number to each outcome in a sample space.
• If the set of all possible values of a random variable X is countable, then X is discrete. The distribution of X is described by a probability mass function: $p(a) = P\{X = a\}$.
• If instead there is a nonnegative function f(x), defined for all real numbers x, such that for any set B of real numbers $P\{X \in B\} = \int_B f(x)\,dx$, then X is a continuous random variable. f(x) is called the probability density function of X.
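To make "a function on the sample space" concrete, here is a minimal Python sketch. The experiment (two tosses of a fair coin) and the variable X = number of heads are illustrative assumptions, not taken from the text; the pmf is obtained by adding up the probabilities of all outcomes that X maps to the same value.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Illustrative sample space: two tosses of a fair coin, each outcome has probability 1/4
sample_space = list(product("HT", repeat=2))

def X(outcome):
    """The random variable: maps an outcome such as ('H', 'T') to its number of heads."""
    return outcome.count("H")

# pmf p(a) = P{X = a}: total probability of the outcomes that X maps to a
counts = Counter(X(w) for w in sample_space)
pmf = {a: Fraction(c, len(sample_space)) for a, c in sorted(counts.items())}
print(pmf)  # {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}
```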

pmf’s and cdf’s
• The probability mass function (pmf) of a discrete random variable is positive for at most a countable number of values x1, x2, …, and $\sum_{i=1}^{\infty} p(x_i) = 1$.
• The cumulative distribution function (cdf) of any random variable X is $F(x) = P\{X \le x\}$. F(x) is a nondecreasing function with $\lim_{x \to -\infty} F(x) = 0$ and $\lim_{x \to \infty} F(x) = 1$.
• For a discrete random variable X, $F(a) = \sum_{x_i \le a} p(x_i)$.
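The discrete cdf formula $F(a) = \sum_{x_i \le a} p(x_i)$ can be sketched in a few lines of Python. The fair die is an illustrative example, and `discrete_cdf` is a hypothetical helper name; exact fractions are used so the sums come out exactly.

```python
from fractions import Fraction

def discrete_cdf(pmf, a):
    """Hypothetical helper: F(a) = sum of p(x_i) over all support points x_i <= a."""
    return sum((p for x, p in pmf.items() if x <= a), Fraction(0))

# Illustrative pmf: a fair six-sided die, p(x) = 1/6 for x = 1, ..., 6
die = {x: Fraction(1, 6) for x in range(1, 7)}

print(sum(die.values()))        # 1: the pmf sums to 1
print(discrete_cdf(die, 0))     # 0: below every support point
print(discrete_cdf(die, 3.5))   # 1/2: the points 1, 2, 3 are <= 3.5
print(discrete_cdf(die, 6))     # 1: all support points are <= 6
```

Note that F is a step function: it only jumps at the support points and is flat in between.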

Bernoulli Random Variable
• An experiment has two possible outcomes, called “success” and “failure”; such an experiment is sometimes called a Bernoulli trial.
• The probability of success is p.
• X = 1 if success occurs, X = 0 if failure occurs.
Then p(0) = P{X = 0} = 1 − p and p(1) = P{X = 1} = p, and X is a Bernoulli random variable with parameter p.

Binomial Random Variable
• A sequence of n independent Bernoulli trials is performed, where the probability of success on each trial is p.
• X is the number of successes.
Then for i = 0, 1, …, n,
$$p(i) = P\{X = i\} = \binom{n}{i} p^i (1-p)^{n-i}, \quad \text{where } \binom{n}{i} = \frac{n!}{i!\,(n-i)!},$$
and X is a binomial random variable with parameters n and p.
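A minimal Python sketch of the binomial pmf, using `math.comb` (Python 3.8+) for the binomial coefficient. The parameters n = 10 and p = 0.3 are illustrative; the check confirms that the probabilities over i = 0, …, n sum to 1.

```python
from math import comb

def binomial_pmf(i, n, p):
    """P{X = i} = C(n, i) p^i (1 - p)^(n - i) for i = 0, 1, ..., n."""
    return comb(n, i) * p**i * (1 - p) ** (n - i)

n, p = 10, 0.3                                  # illustrative parameters
probs = [binomial_pmf(i, n, p) for i in range(n + 1)]
print(round(sum(probs), 12))                    # 1.0
print(round(probs[3], 4))                       # 0.2668 = 120 * 0.3^3 * 0.7^7
```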

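Geometric Random Variable
• A sequence of independent Bernoulli trials is performed with p = P(success).
• X is the number of trials up to and including the first success.
Then X may equal 1, 2, …, and
$$p(n) = P\{X = n\} = (1-p)^{n-1} p, \quad n = 1, 2, \ldots$$
X is named after the geometric series $\sum_{n=0}^{\infty} r^n = \frac{1}{1-r}$ for $|r| < 1$. Use this to verify that
$$\sum_{n=1}^{\infty} p(n) = p \sum_{n=1}^{\infty} (1-p)^{n-1} = \frac{p}{1-(1-p)} = 1.$$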
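The geometric-series argument can be checked numerically. In this minimal Python sketch (p = 0.25 is an illustrative value), the partial sum of the first N terms equals 1 − (1 − p)^N, so after 200 terms it is 1 up to floating-point error.

```python
def geometric_pmf(n, p):
    """P{X = n} = (1 - p)^(n - 1) p for n = 1, 2, ..."""
    return (1 - p) ** (n - 1) * p

p = 0.25                                              # illustrative success probability
partial = sum(geometric_pmf(n, p) for n in range(1, 201))
# The first N terms sum to 1 - (1 - p)^N, and 0.75^200 is about 1e-25
print(abs(partial - 1) < 1e-12)                       # True
```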

Poisson Random Variable
X is a Poisson random variable with parameter λ > 0 if
$$p(i) = P\{X = i\} = e^{-\lambda} \frac{\lambda^i}{i!}, \quad i = 0, 1, 2, \ldots$$
Note: X can represent the number of “rare events” that occur during an interval of specified length.
A Poisson random variable can also approximate a binomial random variable with large n and small p if λ = np: split the interval into n subintervals, and label the occurrence of an event during a subinterval as a “success”.
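The binomial approximation can be seen numerically. This minimal Python sketch uses illustrative values n = 1000 and p = 0.003 (so λ = np = 3) and measures the largest pointwise gap between the two pmfs over i = 0, …, 20.

```python
from math import comb, exp, factorial

def poisson_pmf(i, lam):
    """P{X = i} = e^{-lam} lam^i / i!"""
    return exp(-lam) * lam**i / factorial(i)

def binomial_pmf(i, n, p):
    """P{X = i} = C(n, i) p^i (1 - p)^(n - i)"""
    return comb(n, i) * p**i * (1 - p) ** (n - i)

n, p = 1000, 0.003          # illustrative: large n, small p
lam = n * p                 # lambda = np = 3
max_gap = max(abs(binomial_pmf(i, n, p) - poisson_pmf(i, lam)) for i in range(21))
print(max_gap < 1e-3)       # True: the two pmfs agree to within 0.001 at every i
```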

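Continuous random variables
A probability density function (pdf) must satisfy
$$f(x) \ge 0 \text{ for all } x, \qquad \int_{-\infty}^{\infty} f(x)\,dx = 1.$$
The cdf is
$$F(a) = P\{X \le a\} = \int_{-\infty}^{a} f(x)\,dx, \quad \text{so } \frac{d}{da} F(a) = f(a) \text{ wherever } f \text{ is continuous}.$$
For small ε,
$$P\left\{a - \frac{\varepsilon}{2} \le X \le a + \frac{\varepsilon}{2}\right\} = \int_{a - \varepsilon/2}^{a + \varepsilon/2} f(x)\,dx \approx \varepsilon f(a),$$
which means that f(a) measures how likely X is to be near a.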
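A minimal Python sketch of these pdf facts, assuming the hypothetical example density f(x) = 3x² on (0, 1) with cdf F(a) = a³. A midpoint-rule integral (a swapped-in numerical method, not from the text) checks that f integrates to 1, and the probability of a small window around a = 0.5 is compared with ε f(a).

```python
def f(x):
    """Hypothetical example pdf: f(x) = 3x^2 on (0, 1), zero elsewhere."""
    return 3 * x**2 if 0 < x < 1 else 0.0

def F(a):
    """Its cdf: 0 for a <= 0, a^3 on (0, 1), 1 for a >= 1."""
    return 0.0 if a <= 0 else (1.0 if a >= 1 else a**3)

def integrate(g, lo, hi, steps=10_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / steps
    return h * sum(g(lo + (k + 0.5) * h) for k in range(steps))

total = integrate(f, 0.0, 1.0)              # numerically 1
a, eps = 0.5, 0.01
near_a = F(a + eps / 2) - F(a - eps / 2)    # P{a - eps/2 <= X <= a + eps/2}
print(total, near_a, eps * f(a))            # near_a = 0.00750025, close to eps * f(a) = 0.0075
```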

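Uniform random variable
X is uniformly distributed over an interval (a, b) if its pdf is
$$f(x) = \begin{cases} \dfrac{1}{b-a}, & a < x < b \\ 0, & \text{otherwise} \end{cases}$$
Then its cdf is
$$F(x) = \begin{cases} 0, & x \le a \\ \dfrac{x-a}{b-a}, & a < x < b \\ 1, & x \ge b \end{cases}$$
This models the situation where all we know about X is that it takes a value between a and b.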
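A minimal Python sketch of the uniform cdf. The interval (2, 5) is an illustrative choice; the comparison shows that two subintervals of equal length receive equal probability, which is what "all we know is that X lies between a and b" amounts to.

```python
def uniform_cdf(x, a, b):
    """F(x) for X uniform on (a, b): 0 below a, (x - a)/(b - a) inside, 1 above b."""
    if x <= a:
        return 0.0
    if x >= b:
        return 1.0
    return (x - a) / (b - a)

a, b = 2.0, 5.0                                                # illustrative interval
first = uniform_cdf(3.0, a, b) - uniform_cdf(2.5, a, b)        # P{2.5 < X < 3}
second = uniform_cdf(4.5, a, b) - uniform_cdf(4.0, a, b)       # P{4 < X < 4.5}
print(first, second)   # both about 1/6: only the length of the subinterval matters
```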

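Exponential random variable
X has an exponential distribution with parameter λ > 0 if its pdf is
$$f(x) = \begin{cases} \lambda e^{-\lambda x}, & x \ge 0 \\ 0, & x < 0 \end{cases}$$
Then its cdf is
$$F(x) = \begin{cases} 1 - e^{-\lambda x}, & x \ge 0 \\ 0, & x < 0 \end{cases}$$
This distribution has special properties, notably memorylessness, $P\{X > s + t \mid X > t\} = P\{X > s\}$ for all s, t ≥ 0, that we will use often!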
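The memoryless property follows directly from the cdf, since P{X > x} = e^{−λx}. This minimal Python sketch checks it for illustrative values λ = 0.5, s = 2, t = 3; the helper names `exp_cdf` and `exp_tail` are hypothetical.

```python
from math import exp

def exp_cdf(x, lam):
    """F(x) = 1 - e^{-lam x} for x >= 0, and 0 for x < 0."""
    return 1 - exp(-lam * x) if x >= 0 else 0.0

def exp_tail(x, lam):
    """P{X > x} = 1 - F(x)."""
    return 1 - exp_cdf(x, lam)

lam, s, t = 0.5, 2.0, 3.0   # illustrative values
# P{X > s + t | X > t} = P{X > s + t} / P{X > t} = e^{-lam s} = P{X > s}
conditional = exp_tail(s + t, lam) / exp_tail(t, lam)
print(abs(conditional - exp_tail(s, lam)) < 1e-12)  # True
```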

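Gamma random variable
X has a gamma distribution with parameters λ > 0 and α > 0 if its pdf is
$$f(x) = \begin{cases} \dfrac{\lambda e^{-\lambda x} (\lambda x)^{\alpha - 1}}{\Gamma(\alpha)}, & x \ge 0 \\ 0, & x < 0 \end{cases}$$
It gets its name from the gamma function
$$\Gamma(\alpha) = \int_0^{\infty} e^{-x} x^{\alpha - 1}\,dx.$$
If α is an integer, then Γ(α) = (α − 1)!.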
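A minimal Python sketch of the gamma pdf using `math.gamma`. It checks Γ(α) = (α − 1)! for small integers and shows that α = 1 reduces the gamma density to the exponential density λe^{−λx}; the parameter values are illustrative.

```python
from math import exp, factorial, gamma, isclose

def gamma_pdf(x, lam, alpha):
    """Gamma density lam e^{-lam x} (lam x)^(alpha - 1) / Gamma(alpha) for x > 0, else 0.
    (The value at the single point x = 0 does not affect any probability.)"""
    if x <= 0:
        return 0.0
    return lam * exp(-lam * x) * (lam * x) ** (alpha - 1) / gamma(alpha)

# For integer alpha, Gamma(alpha) = (alpha - 1)!
for alpha in range(1, 8):
    assert isclose(gamma(alpha), factorial(alpha - 1))

# With alpha = 1 the gamma density reduces to the exponential density lam e^{-lam x}
lam, x = 0.5, 2.0   # illustrative values
print(gamma_pdf(x, lam, 1), lam * exp(-lam * x))
```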

Normal random variable
X has a normal distribution with parameters μ and σ² if its pdf is
$$f(x) = \frac{1}{\sqrt{2\pi}\,\sigma} e^{-(x - \mu)^2 / (2\sigma^2)}, \quad -\infty < x < \infty.$$
This is the classic “bell-shaped” distribution widely used in statistics. It has the useful property that a linear function Y = aX + b is normally distributed with parameters aμ + b and (aσ)². In particular, Z = (X − μ)/σ has the standard normal distribution, with parameters 0 and 1.
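Standardization can be checked with Python's `statistics.NormalDist` (Python 3.8+), which takes the mean and the standard deviation σ (not the variance). The parameters μ = 100, σ = 15 and the transform Y = 2X − 5 are illustrative.

```python
from statistics import NormalDist

mu, sigma = 100.0, 15.0           # illustrative parameters
X = NormalDist(mu, sigma)         # NormalDist takes the standard deviation, not sigma^2
Z = NormalDist(0.0, 1.0)          # standard normal

x = 130.0
p_x = X.cdf(x)                    # P{X <= x}
p_z = Z.cdf((x - mu) / sigma)     # P{Z <= (x - mu)/sigma}, the same probability

# Y = aX + b is normal with mean a*mu + b and standard deviation |a|*sigma
a, b = 2.0, -5.0
Y = NormalDist(a * mu + b, abs(a) * sigma)
p_y = Y.cdf(a * x + b)            # equals p_x because a > 0, so aX + b <= ax + b iff X <= x
print(p_x, p_z, p_y)
```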

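Expectation
The expected value (mean) of a random variable is
$$E[X] = \sum_{x:\,p(x) > 0} x\,p(x) \ \text{(discrete)}, \qquad E[X] = \int_{-\infty}^{\infty} x f(x)\,dx \ \text{(continuous)}.$$
It is also called the first moment, by analogy with moments in mechanics: E[X] is the center of mass of the probability distribution. If the experiment is repeated and the random variable observed many times, E[X] represents the long-run average value of the r.v.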
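The long-run-average interpretation can be illustrated by simulation. This minimal Python sketch assumes a fair die as the example, computes E[X] = Σ x p(x) exactly, and compares it with the average of many seeded random rolls.

```python
import random

pmf = {x: 1 / 6 for x in range(1, 7)}            # illustrative: a fair die
exact_mean = sum(x * p for x, p in pmf.items())   # E[X] = sum of x p(x) = 3.5

rng = random.Random(12345)                        # fixed seed so the run is reproducible
n = 200_000
sample_mean = sum(rng.randint(1, 6) for _ in range(n)) / n
print(exact_mean, sample_mean)                    # the long-run average settles near E[X]
```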

Expectations of Discrete Random Variables
• Bernoulli: E[X] = 1(p) + 0(1 − p) = p
• Binomial: E[X] = np
• Geometric: E[X] = 1/p (by a trick, see text)
• Poisson: E[X] = λ: the parameter is the expected (average) number of “rare events” per interval; the random variable is the number of events in a particular interval chosen at random
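The four formulas can be checked by summing x p(x) directly. In this minimal Python sketch the parameters are illustrative, and the geometric and Poisson supports are truncated at points beyond which the remaining probability is negligible (an assumption of the sketch).

```python
from math import comb, exp, factorial

n, p, lam = 12, 0.3, 4.0                  # illustrative parameters

def mean(pmf, support):
    """E[X] = sum of x p(x) over the (possibly truncated) support."""
    return sum(x * pmf(x) for x in support)

bernoulli = mean(lambda x: p if x == 1 else 1 - p, [0, 1])
binomial = mean(lambda i: comb(n, i) * p**i * (1 - p) ** (n - i), range(n + 1))
geometric = mean(lambda k: (1 - p) ** (k - 1) * p, range(1, 500))   # tail beyond 500 is negligible
poisson = mean(lambda i: exp(-lam) * lam**i / factorial(i), range(100))

print(bernoulli, p)
print(binomial, n * p)
print(geometric, 1 / p)
print(poisson, lam)
```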

Expectations of Continuous Random Variables
• Uniform: E[X] = (a + b)/2
• Exponential: E[X] = 1/λ
• Gamma: E[X] = α/λ
• Normal: E[X] = μ: the first parameter is the expected value; note that its density is symmetric about x = μ
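A minimal Python sketch that checks the uniform, exponential and gamma means by integrating x f(x) with the midpoint rule (a swapped-in numerical method). The parameters are illustrative, and the exponential and gamma integrals are truncated at a hypothetical cutoff of 200, where the remaining tail mass is negligible for λ = 0.5.

```python
from math import exp, gamma

def integrate(g, lo, hi, steps=100_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / steps
    return h * sum(g(lo + (k + 0.5) * h) for k in range(steps))

a, b, lam, alpha = 2.0, 5.0, 0.5, 3.0   # illustrative parameters
upper = 200.0                            # hypothetical cutoff for the exponential/gamma tails

def uniform_pdf(x):
    return 1 / (b - a)

def exp_pdf(x):
    return lam * exp(-lam * x)

def gamma_pdf(x):
    return lam * exp(-lam * x) * (lam * x) ** (alpha - 1) / gamma(alpha)

uniform_mean = integrate(lambda x: x * uniform_pdf(x), a, b)
exp_mean = integrate(lambda x: x * exp_pdf(x), 0.0, upper)
gamma_mean = integrate(lambda x: x * gamma_pdf(x), 0.0, upper)
print(uniform_mean, (a + b) / 2)
print(exp_mean, 1 / lam)
print(gamma_mean, alpha / lam)
```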

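Expectation of a function of a r.v.
• First way: If X is a r.v., then Y = g(X) is a r.v. Find the distribution of Y, then find $E[Y] = \sum_y y\,p_Y(y)$ (discrete) or $E[Y] = \int_{-\infty}^{\infty} y f_Y(y)\,dy$ (continuous).
• Second way: If X is a random variable, then for any real-valued function g,
$$E[g(X)] = \sum_{x:\,p(x) > 0} g(x)\,p(x) \ \text{(discrete)}, \qquad E[g(X)] = \int_{-\infty}^{\infty} g(x) f(x)\,dx \ \text{(continuous)}.$$
If g(X) is a linear function of X: E[aX + b] = aE[X] + b.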
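Both ways of computing E[g(X)] can be compared on a small example. This minimal Python sketch assumes X uniform on {−1, 0, 1, 2} and g(x) = x² (illustrative choices); note that −1 and 1 both map to Y = 1, which is why the first way needs the distribution of Y to be assembled.

```python
from collections import defaultdict
from fractions import Fraction

# Illustrative r.v.: X uniform on {-1, 0, 1, 2}, and g(x) = x^2
pmf_X = {x: Fraction(1, 4) for x in (-1, 0, 1, 2)}

def g(x):
    return x * x

# First way: find the distribution of Y = g(X), then E[Y] = sum of y p_Y(y)
pmf_Y = defaultdict(Fraction)          # Fraction() == 0, so missing keys start at 0
for x, px in pmf_X.items():
    pmf_Y[g(x)] += px
first = sum(y * py for y, py in pmf_Y.items())

# Second way: E[g(X)] = sum of g(x) p(x), without finding the distribution of Y
second = sum(g(x) * px for x, px in pmf_X.items())

# Linear case: E[aX + b] = a E[X] + b
a, b = 3, 1
mean_X = sum(x * px for x, px in pmf_X.items())
linear = sum((a * x + b) * px for x, px in pmf_X.items())

print(first, second)                 # 3/2 3/2
print(linear, a * mean_X + b)        # 5/2 5/2
```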

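Higher-order moments
The nth moment of X is E[X^n]:
$$E[X^n] = \sum_{x:\,p(x) > 0} x^n p(x) \ \text{(discrete)}, \qquad E[X^n] = \int_{-\infty}^{\infty} x^n f(x)\,dx \ \text{(continuous)}.$$
The variance is
$$\operatorname{Var}(X) = E\big[(X - E[X])^2\big].$$
It is sometimes easier to calculate as
$$\operatorname{Var}(X) = E[X^2] - (E[X])^2.$$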
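A minimal Python sketch comparing the definition of the variance with the shortcut E[X²] − (E[X])², using a fair die as an illustrative pmf and exact fractions; `moment` is a hypothetical helper name.

```python
from fractions import Fraction

pmf = {x: Fraction(1, 6) for x in range(1, 7)}   # illustrative: a fair die

def moment(pmf, n):
    """Hypothetical helper: nth moment E[X^n] = sum of x^n p(x)."""
    return sum(x**n * p for x, p in pmf.items())

mu = moment(pmf, 1)                                                # 7/2
var_definition = sum((x - mu) ** 2 * p for x, p in pmf.items())    # E[(X - E[X])^2]
var_shortcut = moment(pmf, 2) - mu**2                              # E[X^2] - (E[X])^2 = 91/6 - 49/4
print(var_definition, var_shortcut)                                # 35/12 35/12
```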

Variances of Discrete Random Variables
• Bernoulli: E[X²] = 1(p) + 0(1 − p) = p; Var(X) = p − p² = p(1 − p)
• Binomial: Var(X) = np(1 − p)
• Geometric: Var(X) = (1 − p)/p² (similar trick as for E[X])
• Poisson: Var(X) = λ: the parameter is also the variance of the number of “rare events” per interval!
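The variance formulas can be checked with Var(X) = E[X²] − (E[X])² summed over each pmf. As in the expectation check, the parameters are illustrative and the geometric and Poisson supports are truncated where the remaining probability is negligible.

```python
from math import comb, exp, factorial

n, p, lam = 12, 0.3, 4.0   # illustrative parameters

def variance(pmf, support):
    """Var(X) = E[X^2] - (E[X])^2, summing over a (possibly truncated) support."""
    m1 = sum(x * pmf(x) for x in support)
    m2 = sum(x * x * pmf(x) for x in support)
    return m2 - m1 * m1

checks = {
    "Bernoulli": (variance(lambda x: p if x == 1 else 1 - p, [0, 1]), p * (1 - p)),
    "Binomial": (variance(lambda i: comb(n, i) * p**i * (1 - p) ** (n - i), range(n + 1)),
                 n * p * (1 - p)),
    "Geometric": (variance(lambda k: (1 - p) ** (k - 1) * p, range(1, 500)), (1 - p) / p**2),
    "Poisson": (variance(lambda i: exp(-lam) * lam**i / factorial(i), range(100)), lam),
}
for name, (numeric, formula) in checks.items():
    print(f"{name}: {numeric:.6f} vs {formula:.6f}")
```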

Variances of Continuous Random Variables
• Uniform: Var(X) = (b − a)²/12
• Exponential: Var(X) = 1/λ²
• Gamma: Var(X) = α/λ²
• Normal: Var(X) = σ²: the second parameter is the variance
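A minimal Python sketch that checks the uniform, exponential and gamma variances by numerically integrating the first two moments with the midpoint rule (a swapped-in method). Parameters are illustrative, and the hypothetical cutoff of 200 truncates tails that are negligible for λ = 0.5.

```python
from math import exp, gamma

def integrate(g, lo, hi, steps=100_000):
    """Midpoint-rule approximation of the integral of g over [lo, hi]."""
    h = (hi - lo) / steps
    return h * sum(g(lo + (k + 0.5) * h) for k in range(steps))

def variance(pdf, lo, hi):
    """Var(X) = E[X^2] - (E[X])^2, with both moments computed by numerical integration."""
    m1 = integrate(lambda x: x * pdf(x), lo, hi)
    m2 = integrate(lambda x: x * x * pdf(x), lo, hi)
    return m2 - m1 * m1

a, b, lam, alpha = 2.0, 5.0, 0.5, 3.0   # illustrative parameters
upper = 200.0                            # hypothetical cutoff for the exponential/gamma tails

uniform_var = variance(lambda x: 1 / (b - a), a, b)
exp_var = variance(lambda x: lam * exp(-lam * x), 0.0, upper)
gamma_var = variance(lambda x: lam * exp(-lam * x) * (lam * x) ** (alpha - 1) / gamma(alpha),
                     0.0, upper)
print(uniform_var, (b - a) ** 2 / 12)
print(exp_var, 1 / lam**2)
print(gamma_var, alpha / lam**2)
```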

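Jointly Distributed Random Variables
See text pages 46-47 for definitions of joint cdf, pmf, pdf, and marginal distributions. Main results that we will use:
$$E[aX + bY] = aE[X] + bE[Y], \qquad E\Big[\sum_{i=1}^{n} X_i\Big] = \sum_{i=1}^{n} E[X_i].$$
These are especially useful with indicator r.v.’s: I_A = 1 if A occurs, 0 otherwise, so that E[I_A] = P(A).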
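Linearity of expectation, E[X1 + … + Xn] = E[X1] + … + E[Xn], combined with E[I_A] = P(A), often avoids computing a full distribution. This minimal Python sketch uses an illustrative example not taken from the text: X = number of fixed points of a uniformly random permutation of n = 5 items. Brute-force enumeration and the indicator argument give the same mean.

```python
from fractions import Fraction
from itertools import permutations

n = 5                                   # illustrative size
perms = list(permutations(range(n)))    # all n! equally likely permutations

def fixed_points(perm):
    """Number of positions i with perm[i] == i."""
    return sum(1 for i, v in enumerate(perm) if v == i)

# Direct computation of E[X] over the whole sample space
direct = Fraction(sum(fixed_points(s) for s in perms), len(perms))

# Indicator approach: X = I_1 + ... + I_n, where I_i = 1 if position i is fixed.
# E[I_1] = P{position 1 is fixed}; by symmetry every E[I_i] is the same,
# so linearity gives E[X] = n * E[I_1].
p_fixed = Fraction(sum(1 for s in perms if s[0] == 0), len(perms))   # 1/n
indicator = n * p_fixed

print(direct, indicator)   # 1 1
```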

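Independent Random Variables
X and Y are independent if
$$P\{X \le a, Y \le b\} = P\{X \le a\}\,P\{Y \le b\} \quad \text{for all } a, b.$$
This implies that $p(x, y) = p_X(x)\,p_Y(y)$ (discrete) or $f(x, y) = f_X(x)\,f_Y(y)$ (continuous).
Also, if X and Y are independent, then for any functions h and g,
$$E[g(X)\,h(Y)] = E[g(X)]\,E[h(Y)].$$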
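A minimal Python sketch of the product rule E[g(X)h(Y)] = E[g(X)]E[h(Y)] for independent discrete X and Y. The marginal pmfs and the functions g, h are arbitrary illustrative choices; independence is modeled by building the joint pmf as the product of the marginals.

```python
from fractions import Fraction
from itertools import product

# Illustrative marginals; independence means p(x, y) = p_X(x) p_Y(y)
p_X = {0: Fraction(1, 3), 1: Fraction(2, 3)}
p_Y = {1: Fraction(1, 2), 2: Fraction(1, 4), 3: Fraction(1, 4)}
joint = {(x, y): p_X[x] * p_Y[y] for x, y in product(p_X, p_Y)}

def g(x):   # arbitrary function, for illustration
    return x + 2

def h(y):   # arbitrary function, for illustration
    return y * y

lhs = sum(g(x) * h(y) * pxy for (x, y), pxy in joint.items())     # E[g(X) h(Y)]
rhs = (sum(g(x) * px for x, px in p_X.items())
       * sum(h(y) * py for y, py in p_Y.items()))                  # E[g(X)] E[h(Y)]
print(lhs == rhs)   # True
```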

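Covariance
The covariance of X and Y is
$$\operatorname{Cov}(X, Y) = E\big[(X - E[X])(Y - E[Y])\big] = E[XY] - E[X]\,E[Y].$$
If X and Y are independent, then Cov(X, Y) = 0. Properties:
• Cov(X, X) = Var(X)
• Cov(X, Y) = Cov(Y, X)
• Cov(cX, Y) = c Cov(X, Y)
• Cov(X, Y + Z) = Cov(X, Y) + Cov(X, Z)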
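A minimal Python sketch computing Cov(X, Y) = E[XY] − E[X]E[Y] from a joint pmf stored as a dictionary (a representation chosen for the sketch). The two illustrative joint pmfs are two independent fair coin flips, and a fair coin paired with itself (Y = X), which shows Cov(X, X) = Var(X).

```python
from fractions import Fraction

def expect(joint, func):
    """E[func(X, Y)] for a joint pmf given as {(x, y): p(x, y)}."""
    return sum(func(x, y) * p for (x, y), p in joint.items())

def cov(joint):
    """Cov(X, Y) = E[XY] - E[X] E[Y]."""
    e_xy = expect(joint, lambda x, y: x * y)
    return e_xy - expect(joint, lambda x, y: x) * expect(joint, lambda x, y: y)

q = Fraction(1, 4)
independent = {(x, y): q for x in (0, 1) for y in (0, 1)}   # two independent fair coin flips
same = {(0, 0): Fraction(1, 2), (1, 1): Fraction(1, 2)}     # Y = X for one fair coin

print(cov(independent))   # 0
print(cov(same))          # 1/4, which is Var(X) for a fair coin, since Cov(X, X) = Var(X)
```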

Variance of a sum of r.v.’s
If X1, X2, …, Xn are independent, then
$$\operatorname{Var}\Big(\sum_{i=1}^{n} X_i\Big) = \sum_{i=1}^{n} \operatorname{Var}(X_i).$$
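A minimal Python sketch of the rule for two independent fair dice (an illustrative example). It builds the exact distribution of X1 + X2 by summing p(x1)p(x2) over pairs, then compares its variance with Var(X1) + Var(X2).

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

def var(pmf):
    """Var(X) = E[X^2] - (E[X])^2 for a pmf given as {x: p(x)}."""
    m1 = sum(x * p for x, p in pmf.items())
    return sum(x * x * p for x, p in pmf.items()) - m1 * m1

die = {x: Fraction(1, 6) for x in range(1, 7)}   # illustrative: a fair die

# Independent X1, X2: P{X1 + X2 = s} = sum of p(x1) p(x2) over pairs with x1 + x2 = s
total = defaultdict(Fraction)
for (x1, p1), (x2, p2) in product(die.items(), die.items()):
    total[x1 + x2] += p1 * p2

print(var(total), var(die) + var(die))   # 35/6 35/6
```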

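Moment generating function
The moment generating function of a r.v. X is
$$\phi(t) = E\big[e^{tX}\big].$$
Its name comes from the fact that its derivatives at 0 generate the moments:
$$\phi^{(n)}(0) = E[X^n], \quad n \ge 1.$$
Also, if X and Y are independent, then
$$\phi_{X+Y}(t) = \phi_X(t)\,\phi_Y(t).$$
And there is a one-to-one correspondence between the m.g.f. and the distribution function of a r.v. This helps to identify distributions with the reproductive property, where a sum of independent r.v.’s of a given type again has a distribution of that type (for example, a sum of independent Poisson r.v.’s is Poisson).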
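A minimal Python sketch of both m.g.f. facts for the Poisson case, assuming the standard closed form φ(t) = exp(λ(eᵗ − 1)) for a Poisson(λ) r.v. (not derived on the slide). A central finite difference approximates φ′(0) = E[X] = λ, and the product of two Poisson m.g.f.'s matches the Poisson(λ1 + λ2) m.g.f., which is the reproductive property. The values of λ1, λ2, t and the step h are illustrative.

```python
from math import exp

def poisson_mgf(t, lam):
    """phi(t) = E[e^{tX}] = exp(lam (e^t - 1)) for a Poisson(lam) random variable."""
    return exp(lam * (exp(t) - 1))

lam1, lam2, h = 2.0, 3.5, 1e-5   # illustrative parameters and finite-difference step

# phi'(0) = E[X]: central-difference approximation of the derivative at t = 0
first_moment = (poisson_mgf(h, lam1) - poisson_mgf(-h, lam1)) / (2 * h)

# Independence: phi_{X+Y}(t) = phi_X(t) phi_Y(t), which is the Poisson(lam1 + lam2) mgf
t = 0.7
product_mgf = poisson_mgf(t, lam1) * poisson_mgf(t, lam2)
rel_err = abs(product_mgf - poisson_mgf(t, lam1 + lam2)) / product_mgf
print(round(first_moment, 6), rel_err < 1e-12)   # 2.0 True
```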