Understanding Data Center Traffic Characteristics

This research explores the low-level traffic characteristics of 19 data centers, focusing on the packet arrival process and how it differs from wide-area network traffic. The study finds that ON-OFF traffic patterns predominate and measures link utilization and loss rates across the network's layers. Key insights are that packet loss is localized to a few links and that many links go unused, which suggests room for better traffic management. The authors also develop a traffic generator that reproduces realistic traffic conditions for evaluating data center designs, emphasizing the importance of traffic behavior in infrastructure optimization.

Understanding Data Center Traffic Characteristics
Theophilus Benson¹, Ashok Anand¹, Aditya Akella¹, Ming Zhang²
¹University of Wisconsin–Madison, ²Microsoft Research

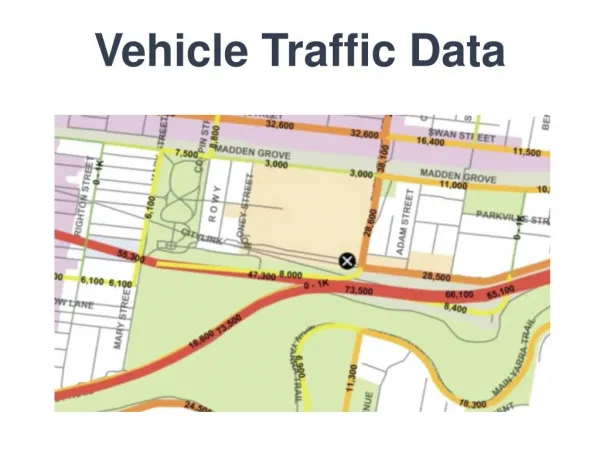

Data Centers Background
• Built to optimize cost and performance
• Tiered architecture
  • Three layers: edge, aggregation, core
  • Cheap devices at the edge and expensive devices at the core
• Over-subscription of links closer to the core
  • Fewer links toward the core reduce cost
  • Trade negligible loss/delay for fewer devices and links
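As a rough illustration of over-subscription, the sketch below divides a switch's downstream (server-facing) capacity by its upstream capacity; a ratio above 1 means the uplinks cannot carry everything the downlinks could offer at once. The function name and the port counts in the example are hypothetical, not values from the study.

```python
def oversubscription_ratio(down_ports: int, down_gbps: float,
                           up_ports: int, up_gbps: float) -> float:
    """Downstream capacity divided by upstream capacity for one switch.

    1.0 means the uplinks can carry all traffic the downlinks can offer;
    larger values mean the uplinks are over-subscribed.
    """
    if up_ports <= 0 or up_gbps <= 0:
        raise ValueError("switch needs at least one uplink with positive capacity")
    return (down_ports * down_gbps) / (up_ports * up_gbps)


# Hypothetical edge switch: 48 x 1 Gbps server ports, 2 x 10 Gbps uplinks.
print(oversubscription_ratio(48, 1, 2, 10))  # 2.4
```

Adding uplinks lowers the ratio but costs more ports and cabling toward the expensive core, which is the cost/performance trade-off the slide describes.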

Data Centers Today
[Figure: Cisco canonical DC architecture, annotated "Few large links: expensive and scarce" and "Many little links: cheap and abundant"]

Challenges in Designing for Data Centers
• Very little is known about data centers
• No models for evaluation
• Lack of knowledge affects evaluation
  • Use properties of wide-area network traffic
  • Make up traffic matrices/random traffic patterns
• Insufficient for the following reasons:
  • Can't accurately compare techniques
  • Oblivious to the actual characteristics of data centers

Data Center Traffic Characterization
• Goals of our project
  • Understand low-level characteristics of traffic in data centers
  • What is the arrival process?
  • Is it similar to or distinct from wide-area network traffic?
  • How does low-level traffic impact the data center?

Data Center Traffic Characterization
• In studying data center traffic we found that:
  • Few links experience loss
  • Many links are unutilized
  • Traffic follows an ON-OFF pattern
  • The arrival process is log-normal

Outline
• Background
• Goals
• Data sets
• Observations and insights
• Overview of traffic generator (see paper for details)
• Conclusion

Data Sets
• Data from 19 data centers
  • Differences in size and architecture
  • Data for intranet and extranet server farms
  • Applications: messaging, search, video streaming, email
• Data consists of:
  • Packet traces from edge switches in one data center
  • SNMP MIB data from devices in all data centers
• Data collected over a span of 10 days

Analyzing SNMP Data
• Analyze link utilization and drops
• Analysis of one 5-minute interval
• Many unutilized links
  • Backup/redundant links
• Aggregation layer has the most used links
  • Funneling of traffic from aggregation
• Very few links with losses

Analyzing SNMP Data: Link Utilization
• 95th-percentile utilization used
• Core > Edge > Aggregation
  • Core has the fewest links
  • Edge has smaller (1 Gbps) links with higher utilization than aggregation links
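The slides report 95th-percentile utilization without saying how it was computed. One plausible sketch, assuming utilization is derived from successive SNMP octet counters (for example IF-MIB's ifHCInOctets) polled at a fixed interval, and using the nearest-rank percentile definition; both choices are assumptions, and the function names are hypothetical:

```python
import math


def utilization_series(octet_counters, interval_s, capacity_bps, counter_bits=64):
    """Per-interval utilization (0..1) from successive octet-counter readings.

    Assumes one reading every `interval_s` seconds; counter wrap-around is
    handled modulo 2**counter_bits (use 32 for legacy 32-bit counters).
    """
    modulus = 2 ** counter_bits
    utils = []
    for prev, cur in zip(octet_counters, octet_counters[1:]):
        delta_bytes = (cur - prev) % modulus
        utils.append(delta_bytes * 8 / (interval_s * capacity_bps))
    return utils


def percentile_nearest_rank(values, p):
    """Nearest-rank percentile, p in 0..100."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


if __name__ == "__main__":
    # Hypothetical 1 Gbps link polled every 300 s (5 minutes).
    counters = [0, 3_750_000_000, 11_250_000_000, 11_250_000_000]
    utils = utilization_series(counters, 300, 1_000_000_000)
    print(utils)                               # [0.1, 0.2, 0.0]
    print(percentile_nearest_rank(utils, 95))  # 0.2
```

The 95th percentile discards the few busiest intervals, so a link is ranked by its sustained load rather than a single spike.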

Analyzing SNMP Data: Link Loss Rates
• Loss rate: Aggregation > Edge > Core
• Utilization: Core > Edge > Aggregation
• Core has relatively little loss but high utilization
  • All links lose less than 2% of packets
  • Aggregation of flows leads to stability
• Edge and aggregation have significantly higher losses
  • Few links (20%) experience high losses (over 40%)
  • Most likely due to bursty traffic
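A minimal sketch of ranking layers by loss, assuming a link's loss rate is discards divided by packets offered (sent plus discarded), as could be derived from SNMP counters such as IF-MIB's ifOutDiscards, and assuming layers are compared by their median per-link loss rate. The study's exact definitions may differ; the function names and example readings are hypothetical.

```python
from statistics import median


def loss_rate(discards, packets_sent):
    """Fraction of offered packets that were dropped: discards / (sent + discards).

    Assumed definition; discards could come from a counter such as ifOutDiscards.
    """
    offered = packets_sent + discards
    return discards / offered if offered else 0.0


def rank_layers_by_loss(links):
    """Order layers from lossiest to least lossy by median per-link loss rate.

    links: iterable of (layer_name, discards, packets_sent) tuples.
    Using the median is an illustrative choice, not the study's stated method.
    """
    rates_by_layer = {}
    for layer, discards, sent in links:
        rates_by_layer.setdefault(layer, []).append(loss_rate(discards, sent))
    medians = {layer: median(rates) for layer, rates in rates_by_layer.items()}
    return sorted(medians, key=medians.get, reverse=True)


if __name__ == "__main__":
    # Hypothetical counter readings for one polling interval.
    links = [("core", 1, 999), ("core", 0, 1000),
             ("edge", 10, 90), ("edge", 5, 95),
             ("aggregation", 50, 50)]
    print(rank_layers_by_loss(links))  # ['aggregation', 'edge', 'core']
```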

Insights from SNMP
• Loss is localized to a few links (4%)
• Loss may be avoided by utilizing all links
  • 40% of links are unused in some areas
  • Reroute traffic
  • Move applications/migrate virtual machines
• Inverse correlation between loss and utilization
  • Should examine low-level packet traces
  • Traces from the same 10 days as the SNMP data
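Under stated assumptions, the three SNMP insights can be summarized from per-link (utilization, loss rate) pairs: the fraction of unused links, the fraction of lossy links, and a correlation coefficient as one way to check for an inverse relationship. The thresholds, the function names, and the choice of Pearson correlation are all hypothetical illustrations, not the study's method.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation undefined for a constant sequence")
    return cov / (sx * sy)


def snmp_insights(links, unused_threshold=0.0, loss_threshold=0.0):
    """Summarize (utilization, loss_rate) pairs for a set of links in one interval.

    Hypothetical thresholds: a link is 'unused' if utilization <= unused_threshold
    and 'lossy' if loss_rate > loss_threshold.
    """
    if not links:
        raise ValueError("no links")
    utils = [u for u, _ in links]
    losses = [loss for _, loss in links]
    return {
        "unused_fraction": sum(u <= unused_threshold for u in utils) / len(links),
        "lossy_fraction": sum(loss > loss_threshold for loss in losses) / len(links),
        "pearson_r": pearson(utils, losses),
    }


if __name__ == "__main__":
    # Hypothetical (utilization, loss_rate) pairs for five links.
    print(snmp_insights([(0.0, 0.0), (0.0, 0.0), (0.2, 0.3), (0.5, 0.1), (0.9, 0.0)]))
```

A negative coefficient only indicates that lossy links tend to be less utilized on average; explaining why requires finer-grained data, which motivates looking at packet traces.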

Analyzing Packet Traces
• Time series of traffic on an edge link
• ON-OFF traffic at edges
  • Time series shows ON-OFF patterns
  • Binned in 15 ms and 100 ms intervals
  • ON-OFF pattern persists at both bin sizes
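A sketch of the binning step that turns a packet trace into ON and OFF periods. It assumes a bin counts as ON when it contains at least one packet, since the slides do not define ON; the function name is hypothetical.

```python
def on_off_periods(timestamps_s, bin_ms):
    """Bin packet timestamps and return (on_durations_ms, off_durations_ms).

    Assumption: a bin is ON if it holds at least one packet, OFF otherwise;
    consecutive bins in the same state form one period.
    """
    if not timestamps_s:
        return [], []
    bin_s = bin_ms / 1000
    start = min(timestamps_s)
    n_bins = int((max(timestamps_s) - start) // bin_s) + 1
    counts = [0] * n_bins
    for t in timestamps_s:
        counts[int((t - start) // bin_s)] += 1

    on, off = [], []
    run_state, run_len = counts[0] > 0, 0
    for c in counts:
        state = c > 0
        if state == run_state:
            run_len += 1
        else:
            # State changed: close the previous run and start a new one.
            (on if run_state else off).append(run_len * bin_ms)
            run_state, run_len = state, 1
    (on if run_state else off).append(run_len * bin_ms)
    return on, off


if __name__ == "__main__":
    trace = [0.000, 0.005, 0.010, 0.050, 0.051]  # hypothetical timestamps (s)
    print(on_off_periods(trace, 15))   # ([15, 15], [30])
    print(on_off_periods(trace, 100))  # ([100], [])
```

The second call shows why bin size matters: a coarse bin can merge a short gap into one ON period, so checking that the pattern persists at several bin sizes guards against a binning artifact.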

Analyzing Packet Traces
• What is the arrival process?
• MATLAB curve fitting (least mean squares)
  • Candidates: Weibull, log-normal, Pareto, exponential
• Log-normal gives the best fit for all three distributions:
  • Inter-arrival times, ON-times, OFF-times
• All switches exhibit identical patterns
• Different from Pareto (WAN) traffic
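The slides fit four candidate distributions with MATLAB least-squares curve fitting. The sketch below swaps in a simpler procedure and compares only two of the candidates: it estimates log-normal and exponential parameters by maximum likelihood, then scores each fit by the sum of squared errors against the empirical CDF. Weibull and Pareto are omitted for brevity, and all names are hypothetical.

```python
import math
import random


def lognormal_fit(samples):
    """Maximum-likelihood (mu, sigma) for a log-normal from positive samples."""
    logs = [math.log(x) for x in samples]
    mu = sum(logs) / len(logs)
    sigma = math.sqrt(sum((v - mu) ** 2 for v in logs) / len(logs))
    if sigma == 0:
        raise ValueError("all samples identical; log-normal fit is degenerate")
    return mu, sigma


def lognormal_cdf(x, mu, sigma):
    return 0.5 * (1 + math.erf((math.log(x) - mu) / (sigma * math.sqrt(2))))


def exponential_cdf(x, rate):
    return 1 - math.exp(-rate * x)


def sse_against_empirical(samples, cdf):
    """Sum of squared differences between the empirical CDF and a model CDF."""
    ordered = sorted(samples)
    n = len(ordered)
    return sum((cdf(x) - (i + 1) / n) ** 2 for i, x in enumerate(ordered))


def best_fit(samples):
    """Return (name of lowest-SSE model, {model: SSE})."""
    mu, sigma = lognormal_fit(samples)
    rate = len(samples) / sum(samples)  # exponential MLE: 1 / sample mean
    scores = {
        "log-normal": sse_against_empirical(samples, lambda x: lognormal_cdf(x, mu, sigma)),
        "exponential": sse_against_empirical(samples, lambda x: exponential_cdf(x, rate)),
    }
    return min(scores, key=scores.get), scores


if __name__ == "__main__":
    # Hypothetical data: inter-arrival times drawn from a log-normal.
    rng = random.Random(1)
    samples = [rng.lognormvariate(0.0, 1.5) for _ in range(2000)]
    name, scores = best_fit(samples)
    print(name, {k: round(v, 2) for k, v in scores.items()})
```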

Data Center Traffic Generator
• Based on our insights, we created a traffic generator
• Goal: produce a stream of packets that exhibits an ON-OFF arrival pattern
• Input: distribution of traffic volumes and loss rates from SNMP polls for a link
• Output: the parameters for a fine-grained arrival process that will reproduce the input distribution
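A minimal ON-OFF packet source, assuming ON durations, OFF durations, and inter-arrival times within ON periods are all log-normal, in line with the fits reported for edge links. The function name and the (mu, sigma) values in the example are hypothetical; the slides do not give fitted parameters.

```python
import random


def generate_on_off(duration_s, on_params, off_params, iat_params, seed=None):
    """Return packet timestamps (seconds) from a log-normal ON-OFF process.

    Each *_params is a hypothetical (mu, sigma) pair passed to
    random.lognormvariate, so the sampled values are in seconds.
    Packets are emitted only during ON periods.
    """
    rng = random.Random(seed)
    t, timestamps = 0.0, []
    while t < duration_s:
        # Start an ON period at t and draw its end (clipped to the horizon).
        on_end = min(t + rng.lognormvariate(*on_params), duration_s)
        t += rng.lognormvariate(*iat_params)
        while t < on_end:
            timestamps.append(t)
            t += rng.lognormvariate(*iat_params)
        # Silent OFF period before the next ON period.
        t = on_end + rng.lognormvariate(*off_params)
    return timestamps


if __name__ == "__main__":
    # Hypothetical medians: ON ~50 ms, OFF ~100 ms, inter-arrival ~1 ms.
    ts = generate_on_off(10.0, (-3.0, 0.5), (-2.3, 0.5), (-6.9, 0.5), seed=7)
    print(len(ts), ts[:3])
```

Passing a seed makes runs reproducible, which helps when the same synthetic workload must be replayed against several candidate designs.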

Data Center Traffic Generator
• Approach
  • Search the space of available parameters
  • Simulate each set of parameters
  • Accept parameters that pass a similarity test with high confidence
  • Wilcoxon test used for similarity
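The search loop can be sketched as a grid search. The slides name only "Wilcoxon", so this sketch assumes the two-sample Wilcoxon rank-sum test with a normal approximation. `simulate(params, rng)` is a hypothetical caller-supplied function standing in for the generator plus aggregation into per-interval volumes. Treating a p-value above `alpha` as "similar" is a simplification: failing to reject the null hypothesis does not prove the distributions match.

```python
import math
import random
from itertools import product


def rank_sum_p_value(a, b):
    """Two-sided p-value of the Wilcoxon rank-sum test (normal approximation).

    Ties get average ranks; no tie correction is applied to the variance.
    """
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[k] = avg
        i = j + 1
    n1, n2 = len(a), len(b)
    w = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    mean = n1 * (n1 + n2 + 1) / 2
    sd = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (w - mean) / sd
    return math.erfc(abs(z) / math.sqrt(2))  # = 2 * (1 - Phi(|z|))


def search_parameters(target, simulate, grid, alpha=0.05, seed=0):
    """Return (params, p) for grid points whose simulated samples the test
    cannot distinguish from `target`, best p-value first."""
    rng = random.Random(seed)
    accepted = []
    for params in grid:
        p = rank_sum_p_value(target, simulate(params, rng))
        if p > alpha:
            accepted.append((params, p))
    return sorted(accepted, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical target: per-interval volumes from a known log-normal.
    data_rng = random.Random(3)
    target = [data_rng.lognormvariate(1.0, 0.5) for _ in range(500)]

    def simulate(params, rng):
        mu, sigma = params
        return [rng.lognormvariate(mu, sigma) for _ in range(500)]

    grid = product([0.0, 0.5, 1.0, 1.5, 2.0], [0.25, 0.5, 1.0])
    for params, p in search_parameters(target, simulate, grid):
        print(params, round(p, 3))
```

A rank-based test makes no assumption about the shape of the volume distribution, which suits heavy-tailed traffic data.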

Sharing Insights
• Implications for research and operations
  • Evaluate designs with the traffic generator
• Implications for Fat-tree
  • Fat-tree: congestion eliminated through no over-subscription and traffic balancing
  • Parameterization: traffic engineering and flow classification assume traffic is stable on the order of T seconds
  • Our work can inform the setting of T

Conclusion
• Analyzed traffic from 19 data centers
  • Bottleneck: aggregation layer
  • Characterized the arrival process at edge links
• Described a traffic generator for data centers
  • Used for evaluation of data center designs
• Future work
  • Analyze packet traces
  • Stability of the traffic matrix
  • Ratio of inter-/intra-DC communication