Probability theory

This overview covers the Week 2 lecture, "Probability theory," from LING 570 by Fei Xia. The main topic is elementary probability theory (M&S 2.1): sample spaces, events, random variables, and their distributions. The lecture also reviews the results of Quiz #1, Unix commands for data manipulation, and basic linguistic notions such as part-of-speech tags and syntactic structure. The probability part covers joint, conditional, and marginal probability, independence and conditional independence, maximum likelihood estimation, and the chain rule.

Presentation Transcript

Probability theory LING 570 Fei Xia Week 2: 10/01/07

Misc. • Patas account and dropbox • Course website, “Collect it”, and GoPost. • Mailing list • Received message on Thursday? • Questions about hw1?

Outline • Quiz #1 • Unix commands • Linguistics • Elementary Probability theory: M&S 2.1

Quiz #1 • Five areas, listed as weight (class average) • Programming: 4.0 (3.74); try Perl or Python • Unix commands: 1.2 (0.99) • Probability: 2.0 (1.09) • Regular expressions: 2.0 (1.62) • Linguistics knowledge: 0.8 (0.71)

Results (score range: number of students) • 9.0-10: 4 • 8.0-8.9: 8 • < 8.0: 8

Unix commands • ls (list), cp (copy), rm (remove) • more, less, cat • cd, mkdir, rmdir, pwd • chmod: to change file permissions • tar, gzip: to archive/compress files • ssh, sftp: to log in to a remote machine or transfer files • man: to read a command's manual page

Unix commands (cont) • compilers/interpreters: javac, gcc, g++, perl, … • ps, top • which • Pipe: cat input_file | eng_tokenizer.sh | make_voc.sh > output_file • sort, uniq, awk, grep: grep "the" voc | awk '{print $2}' | sort | uniq -c | sort -nr
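For readers who try Python, the frequency pipeline above can be approximated in a few lines. This is a rough sketch, not a drop-in replacement. The slides do not describe the format of `voc`, so the sample lines are hypothetical, and the name `count_second_fields` is ours. Ties may also come out in a different order than GNU `sort -nr` would produce.

```python
from collections import Counter

def count_second_fields(lines, pattern="the"):
    """Rough analogue of: grep "the" voc | awk '{print $2}' | sort | uniq -c | sort -nr"""
    counts = Counter()
    for line in lines:
        # grep "the": a plain substring test, equivalent to grep only for
        # patterns without regex metacharacters
        if pattern in line:
            fields = line.split()  # awk splits on runs of whitespace
            second = fields[1] if len(fields) > 1 else ""  # awk prints "" if $2 is missing
            counts[second] += 1    # sort | uniq -c: count identical values
    # sort -nr: highest count first (Python's stable sort keeps first-seen order for ties)
    return sorted(counts.items(), key=lambda item: item[1], reverse=True)

# Hypothetical input lines; the real format of voc is not given in the slides
sample = ["the DT", "other JJ", "cat NN", "then RB", "the DT"]
print(count_second_fields(sample))  # [('DT', 2), ('JJ', 1), ('RB', 1)]
```

As with `grep`, lines such as "other JJ" and "then RB" match, because "the" occurs inside them.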

Examples • Set the permission of foo.pl so it is readable and executable by the user and the group: rwx rwx rwx => r-x r-x --- => 101 101 000 => chmod 550 foo.pl • Move a file, foo.pl, from your home dir to /tmp: mv ~/foo.pl /tmp
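The rwx-to-octal step in the chmod example can be checked mechanically. This is a minimal sketch. The helper name `mode_from_symbolic` is hypothetical, and special bits (setuid, setgid, sticky) are not handled.

```python
def mode_from_symbolic(perm):
    """Convert a 9-character string like 'r-xr-x---' to octal digits like '550'."""
    if len(perm) != 9:
        raise ValueError("expected 9 characters, e.g. 'rwxr-x---'")
    digits = []
    for i in range(0, 9, 3):  # user, group, other
        triple = perm[i:i + 3]
        bits = ""
        for char, letter in zip(triple, "rwx"):
            if char not in (letter, "-"):
                raise ValueError(f"unexpected character {char!r} in {perm!r}")
            bits += "1" if char == letter else "0"  # r/w/x present -> 1, '-' -> 0
        digits.append(str(int(bits, 2)))  # e.g. '101' -> 5
    return "".join(digits)

print(mode_from_symbolic("r-xr-x---"))  # 550
```

The result is an octal number. In Python, `int("550", 8)` gives the integer mode that `os.chmod` expects.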

Linguistics: POS tags • Open class: noun, verb, adjective, adverb • Auxiliary verb/modal: can, will, might, … • Temporal noun: tomorrow • Adverb: adj+ly, always, still, not, … • Closed class: preposition, conjunction, determiner, pronoun • Conjunction: CC (coordinating: and), SC (subordinating: if, although) • Complementizer: that

Linguistics: syntactic structure • Two kinds: • Phrase structure (a.k.a. parse tree) • Dependency structure • Example: • John said that he would call Mary tomorrow

Basic concepts • Sample space, event, event space • Random variable and random vector • Conditional probability, joint probability, marginal probability (prior)

Sample space, event, event space • Sample space (Ω): the set of all possible outcomes. • Ex: toss a coin three times: Ω = {HHH, HHT, HTH, HTT, …} • Event: an event is a subset of Ω. • Ex: the event "exactly two heads" is {HHT, HTH, THH} • Event space ($2^{\Omega}$): the set of all possible events, i.e., all subsets of Ω.
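The sample space and event space for three tosses can be enumerated directly. This makes the sizes concrete: |Ω| = 2^3 = 8 outcomes and |2^Ω| = 2^8 = 256 events. A small Python sketch:

```python
from itertools import combinations, product

# Sample space for three tosses: all 2**3 = 8 outcomes
omega = ["".join(t) for t in product("HT", repeat=3)]

# An event is a subset of omega, e.g. "exactly two heads"
two_heads = {o for o in omega if o.count("H") == 2}

# Event space 2^omega: every subset of omega (the power set)
event_space = [set(c) for r in range(len(omega) + 1) for c in combinations(omega, r)]

print(len(omega), sorted(two_heads), len(event_space))  # 8 ['HHT', 'HTH', 'THH'] 256
```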

Probability function • A probability function (a.k.a. a probability distribution) distributes a probability mass of 1 throughout the sample space Ω. • It is a function $P: 2^{\Omega} \to [0,1]$ such that: $P(\Omega) = 1$; for any disjoint sets $A_j \in 2^{\Omega}$, $P\left(\bigcup_j A_j\right) = \sum_j P(A_j)$ • Ex: P({HHT, HTH, HTT}) = P({HHT}) + P({HTH}) + P({HTT})

The coin example • The prob of getting a head is 0.1 for one toss. What is the prob of getting two heads out of three tosses? • P(“Getting two heads”) = P({HHT, HTH, THH}) = P(HHT) + P(HTH) + P(THH) = 0.1*0.1*0.9 + 0.1*0.9*0.1+0.9*0.1*0.1 = 3*0.1*0.1*0.9
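The calculation above applies the additivity rule for disjoint events: the event is split into its single outcomes, and their probabilities are added. Below is a Python sketch. It assumes that the three tosses are independent, as the slide's arithmetic implicitly does. The function names are illustrative.

```python
from itertools import product

P_HEAD = 0.1  # probability of a head on one toss, from the slide

def outcome_prob(outcome, p=P_HEAD):
    """Probability of a single outcome such as 'HHT', assuming independent tosses."""
    prob = 1.0
    for toss in outcome:
        prob *= p if toss == "H" else 1 - p
    return prob

def event_prob(event, p=P_HEAD):
    """P(A) for an event A: the sum over its outcomes, which are disjoint."""
    return sum(outcome_prob(o, p) for o in event)

omega = {"".join(t) for t in product("HT", repeat=3)}
two_heads = {"HHT", "HTH", "THH"}

print(round(event_prob(omega), 10))      # 1.0, i.e. P(Omega) = 1
print(round(event_prob(two_heads), 10))  # 0.027 = 3 * 0.1 * 0.1 * 0.9
```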

Random variable • The outcome of an experiment need not be a number. • We often want to represent outcomes as numbers. • A random variable X is a function $X: \Omega \to \mathbb{R}$. • Ex: the number of heads with three tosses: X(HHT)=2, X(HTH)=2, X(HTT)=1, …

The coin example (cont) • X = the number of heads with three tosses • P(X=2) = P({HHT, HTH, THH}) = P({HHT}) + P({HTH}) + P({THH})
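If X is treated literally as a function on outcomes, P(X = k) is the sum of the probabilities of all outcomes that X maps to k. This sketch reuses the head probability of 0.1 from the earlier slide and assumes independent tosses. It uses exact fractions so the result can be compared with the closed form 3 * 0.1 * 0.1 * 0.9.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import product

def X(outcome):
    """Random variable: maps an outcome such as 'HHT' to its number of heads."""
    return outcome.count("H")

def distribution(p):
    """P(X = k) for k = 0..3: add up P(outcome) over all outcomes with X(outcome) = k."""
    dist = defaultdict(Fraction)  # Fraction() == 0
    for tosses in product("HT", repeat=3):
        outcome = "".join(tosses)
        prob = Fraction(1)
        for t in outcome:
            prob *= p if t == "H" else 1 - p
        dist[X(outcome)] += prob
    return dict(dist)

dist = distribution(Fraction(1, 10))
print(dist[2])  # 27/1000
```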

Two types of random variables • Discrete: X takes on only a countable number of possible values. • Ex: toss a coin three times; X is the number of heads. • Continuous: X takes on an uncountable number of possible values. • Ex: X is the speed of a car

Common trick #1: Maximum likelihood estimation • An example: toss a coin 3 times and get two heads. What is the probability of getting a head with one toss? • Maximum likelihood (ML): $\theta^* = \arg\max_{\theta} P(\text{data} \mid \theta)$ • In the example, θ is p, the probability of a head, and P(X=2) = 3 * p * p * (1-p); e.g., the prob is 3/8 when p=1/2 and 12/27 when p=2/3. Since 3/8 < 12/27, p=2/3 makes the observed data more likely.
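The comparison of 3/8 and 12/27 can be extended to a search over many candidate values of p. The grid search below is only a crude illustration of arg max, not how ML estimates are usually computed. Note that `Fraction` prints 12/27 in lowest terms, as 4/9.

```python
from fractions import Fraction

def likelihood(p):
    """P(X=2 | p): probability of exactly two heads in three independent tosses."""
    return 3 * p * p * (1 - p)

print(likelihood(Fraction(1, 2)), likelihood(Fraction(2, 3)))  # 3/8 4/9

# Crude ML estimate: pick the candidate p in [0, 1] with the largest likelihood
grid = [Fraction(i, 300) for i in range(301)]
p_ml = max(grid, key=likelihood)
print(p_ml)  # 2/3
```

You can reach the same answer analytically. Set the derivative of 3p^2(1 - p), which is 6p - 9p^2 = 3p(2 - 3p), to zero. This gives p = 2/3, the relative frequency of heads in the data.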

Random vector • A random vector is a finite-dimensional vector of random variables: X = [X1, …, Xn]. • P(x) = P(x1, x2, …, xn) = P(X1=x1, …, Xn=xn) • Ex: P(w1, …, wn, t1, …, tn), the joint probability of a word sequence and its tag sequence

Notation • X, Y, Xi, Yi are random variables. • x, y, xi are values. • P(X=x) is written as P(x) • P(X=x | Y=y) is written as P(x | y).

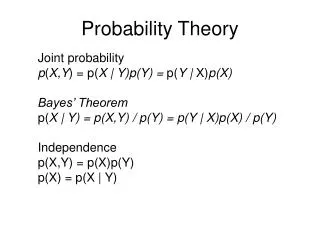

Three types of probability • Joint prob: P(x,y) = prob of X=x and Y=y happening together • Conditional prob: P(x | y) = prob of X=x given a specific value Y=y • Marginal prob: P(x) = prob of X=x regardless of the value of Y, i.e., P(x) = Σ_y P(x, y)

An example • There are two coins. Choose a coin and then toss it. Do that 10 times. • Coin 1 is chosen 4 times: one head and three tails. • Coin 2 is chosen six times: four heads and two tails. • Let’s calculate the probabilities.

Probabilities • P(C=1) = 4/10, P(C=2) = 6/10 • P(X=h) = 5/10, P(X=t) = 5/10 • P(X=h | C=1) = ¼, P(X=h | C=2) = 4/6 • P(X=t | C=1) = ¾, P(X=t | C=2) = 2/6 • P(X=h, C=1) = 1/10, P(X=h, C=2) = 4/10 • P(X=t, C=1) = 3/10, P(X=t, C=2) = 2/10

Relation between different types of probabilities • Joint from prior and conditional: P(X=h, C=1) = P(C=1) * P(X=h | C=1) = 4/10 * ¼ = 1/10 • Marginal from joint: P(X=h) = P(X=h, C=1) + P(X=h, C=2) = 1/10 + 4/10 = 5/10
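All the probabilities on the last two slides can be computed from the ten (coin, outcome) observations. Below is a sketch using exact fractions. Joint probabilities are relative frequencies (the ML estimates), marginals are sums of joints, and conditionals are joints divided by marginals. The function names are illustrative.

```python
from collections import Counter
from fractions import Fraction

# (coin, outcome) pairs from the slide: coin 1 -> 1 head, 3 tails; coin 2 -> 4 heads, 2 tails
trials = [(1, "h")] * 1 + [(1, "t")] * 3 + [(2, "h")] * 4 + [(2, "t")] * 2
N = len(trials)
counts = Counter(trials)

def joint(x, c):        # P(X=x, C=c): relative frequency of the pair
    return Fraction(counts[(c, x)], N)

def marginal_c(c):      # P(C=c): sum the joint over all values of X
    return sum(joint(x, c) for x in "ht")

def marginal_x(x):      # P(X=x): sum the joint over all values of C
    return sum(joint(x, c) for c in (1, 2))

def conditional(x, c):  # P(X=x | C=c) = P(X=x, C=c) / P(C=c)
    return joint(x, c) / marginal_c(c)

print(joint("h", 1), marginal_x("h"), conditional("h", 2))  # 1/10 1/2 2/3
```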

Independent random variables • Two random variables X and Y are independent iff knowing the value of one tells us nothing about the value of the other: • P(X,Y) = P(X) P(Y) • P(Y|X) = P(Y) • P(X|Y) = P(X) • In the two-coin example, X and C are not independent: P(X=h, C=1) = 1/10, but P(X=h) P(C=1) = 5/10 * 4/10 = 2/10.
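Independence can be tested directly against the definition P(X, Y) = P(X) P(Y) by checking every pair of values. The sketch below uses the joint distribution from the two-coin example. The helper name `independent` is hypothetical.

```python
from fractions import Fraction

# Joint distribution P(X, C) from the two-coin example (10 trials)
joint = {("h", 1): Fraction(1, 10), ("t", 1): Fraction(3, 10),
         ("h", 2): Fraction(4, 10), ("t", 2): Fraction(2, 10)}

# Marginals: sum the joint over the other variable
p_x = {x: sum(p for (xx, c), p in joint.items() if xx == x) for x in "ht"}
p_c = {c: sum(p for (x, cc), p in joint.items() if cc == c) for c in (1, 2)}

def independent(joint, p_x, p_c):
    """True iff P(x, c) == P(x) * P(c) for every pair of values."""
    return all(joint[(x, c)] == p_x[x] * p_c[c] for x in p_x for c in p_c)

print(independent(joint, p_x, p_c))        # False
print(joint[("h", 1)], p_x["h"] * p_c[1])  # 1/10 1/5
```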

Conditional independence • A and B are conditionally independent given C iff, once we know C, the value of A does not affect the value of B and vice versa: • P(A,B | C) = P(A|C) P(B|C) • P(A|B,C) = P(A|C) • P(B|A,C) = P(B|C)

Independence and conditional independence • If A and B are independent, are they conditionally independent given C? • Example: • Burglar, Earthquake • Alarm
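The slide leaves the question open. One standard way to see that independence does not imply conditional independence is the burglar/earthquake/alarm setup. There, observing the alarm makes the two causes dependent ("explaining away"). The probabilities below are hypothetical and chosen only for easy arithmetic. The sketch also assumes the alarm rings exactly when there is a burglar or an earthquake.

```python
from fractions import Fraction
from itertools import product

p_b = Fraction(1, 10)  # hypothetical P(Burglar)
p_e = Fraction(1, 10)  # hypothetical P(Earthquake)

def alarm(b, e):
    # Hypothetical: the alarm rings iff there is a burglar or an earthquake
    return int(b or e)

# Joint over (B, E, A); B and E are independent by construction
joint = {}
for b, e in product([0, 1], repeat=2):
    pb = p_b if b else 1 - p_b
    pe = p_e if e else 1 - p_e
    joint[(b, e, alarm(b, e))] = pb * pe

def prob(cond):
    """Sum the joint over all (b, e, a) satisfying cond."""
    return sum(p for k, p in joint.items() if cond(*k))

p_a = prob(lambda b, e, a: a == 1)
p_b_given_a = prob(lambda b, e, a: b == 1 and a == 1) / p_a
p_e_given_a = prob(lambda b, e, a: e == 1 and a == 1) / p_a
p_be_given_a = prob(lambda b, e, a: b == 1 and e == 1 and a == 1) / p_a

# Independent before observing the alarm...
assert prob(lambda b, e, a: b == 1 and e == 1) == p_b * p_e
# ...but not conditionally independent given A = 1
print(p_be_given_a, p_b_given_a * p_e_given_a)  # 1/19 100/361
```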

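An example • Chain rule, then a bigram independence assumption: $P(w_1 w_2 \ldots w_n) = P(w_1)\, P(w_2 \mid w_1)\, P(w_3 \mid w_1 w_2) \cdots P(w_n \mid w_1 \ldots w_{n-1}) \approx P(w_1)\, P(w_2 \mid w_1) \cdots P(w_n \mid w_{n-1})$ • Why do we make independence assumptions that we know are not true?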
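The bigram approximation on this slide can be sketched with relative-frequency (ML) estimates from a toy corpus. Everything here is an illustrative assumption. The three-sentence corpus is made up. A `<s>` start symbol is used so that P(w1) is estimated as P(w1 | <s>). No end-of-sentence symbol or smoothing is used.

```python
from collections import Counter
from fractions import Fraction

# Hypothetical toy corpus (not from the slides)
corpus = ["the cat sat", "the dog sat", "the cat ran"]

bigrams, contexts = Counter(), Counter()
for sent in corpus:
    words = ["<s>"] + sent.split()  # <s> marks the sentence start
    for prev, cur in zip(words, words[1:]):
        bigrams[(prev, cur)] += 1
        contexts[prev] += 1         # how often prev is followed by some word

def bigram_prob(sentence):
    """P(w1..wn) ~ P(w1|<s>) * prod_i P(wi | wi-1), each factor an ML estimate."""
    words = ["<s>"] + sentence.split()
    p = Fraction(1)
    for prev, cur in zip(words, words[1:]):
        if contexts[prev] == 0:     # unseen context: ML estimate undefined, return 0
            return Fraction(0)
        p *= Fraction(bigrams[(prev, cur)], contexts[prev])
    return p

print(bigram_prob("the cat sat"))  # 1/3
print(bigram_prob("the dog ran"))  # 0
```

"the dog ran" gets probability zero because the bigram (dog, ran) never occurs in the corpus. This is one reason ML estimates are usually smoothed in practice.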

Summary of elementary probability theory • Basic concepts: sample space, event space, random variable, random vector • Joint / conditional / marginal probability • Independence and conditional independence • Five common tricks: • Max likelihood estimation • Chain rule • Calculating marginal probability from joint probability • Bayes' rule • Independence assumption
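Bayes' rule is listed among the tricks but not worked through in the transcript. In the two-coin example, it answers this question: given that a toss came up heads, which coin was probably used? The sketch uses only numbers from the earlier slides. By hand, P(C=1 | X=h) = (1/4 * 4/10) / (5/10) = 1/5, which matches the counts (1 of the 5 heads came from coin 1).

```python
from fractions import Fraction

p_c = {1: Fraction(4, 10), 2: Fraction(6, 10)}        # prior P(C=c)
p_h_given_c = {1: Fraction(1, 4), 2: Fraction(4, 6)}  # P(X=h | C=c)

def posterior(c):
    """Bayes' rule: P(C=c | X=h) = P(X=h | C=c) P(C=c) / P(X=h)."""
    p_h = sum(p_h_given_c[k] * p_c[k] for k in p_c)   # marginal P(X=h)
    return p_h_given_c[c] * p_c[c] / p_h

print(posterior(1), posterior(2))  # 1/5 4/5
```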

Next time • J&M Chapt 2 • Formal language and formal grammar • Regular expression • Hw1 is due at 3pm on Wed.